Basic usage of kafka_2.11

If you followed the previous post, you should have Kafka installed by now. This time we'll try out some basic usage.

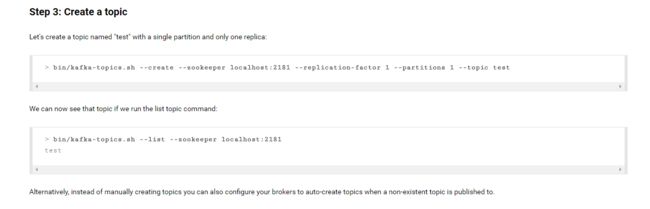

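1 Creating a topic

As the official site describes, we can use the kafka-topics.sh script to create a topic. The prerequisite is that the ZooKeeper and Kafka services are already running.

Let's start from the example on the official site: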

    [root@master config]# kafka-topics.sh --create --zookeeper localhost:2181 --topic first
    Missing required argument "[partitions]"
    Option  Description
    ------  -----------
    --alter
        Alter the number of partitions, replica assignment, and/or configuration for the topic.
    --config
        A topic configuration override for the topic being created or altered.The following is a list of valid configurations:
            cleanup.policy
            compression.type
            delete.retention.ms
            file.delete.delay.ms
            flush.messages
            flush.ms
            follower.replication.throttled.replicas
            index.interval.bytes
            leader.replication.throttled.replicas
            max.message.bytes
            message.format.version
            message.timestamp.difference.max.ms
            message.timestamp.type
            min.cleanable.dirty.ratio
            min.compaction.lag.ms
            min.insync.replicas
            preallocate
            retention.bytes
            retention.ms
            segment.bytes
            segment.index.bytes
            segment.jitter.ms
            segment.ms
            unclean.leader.election.enable
        See the Kafka documentation for full details on the topic configs.
    --create
        Create a new topic.
    --delete
        Delete a topic
    --delete-config
        A topic configuration override to be removed for an existing topic (see the list of configurations under the --config option).
    --describe
        List details for the given topics.
    --disable-rack-aware
        Disable rack aware replica assignment
    --force
        Suppress console prompts
    --help
        Print usage information.
    --if-exists
        if set when altering or deleting topics, the action will only execute if the topic exists
    --if-not-exists
        if set when creating topics, the action will only execute if the topic does not already exist
    --list
        List all available topics.
    --partitions <Integer: # of partitions>
        The number of partitions for the topic being created or altered (WARNING: If partitions are increased for a topic that has a key, the partition logic or ordering of the messages will be affected
    --replica-assignment <String: broker_id_for_part1_replica1 : broker_id_for_part1_replica2 , broker_id_for_part2_replica1 : broker_id_for_part2_replica2 , ...>
        A list of manual partition-to-broker assignments for the topic being created or altered.
    --replication-factor <Integer: replication factor>
        The replication factor for each partition in the topic being created.
    --topic
        The topic to be create, alter or describe. Can also accept a regular expression except for --create option
    --topics-with-overrides
        if set when describing topics, only show topics that have overridden configs
    --unavailable-partitions
        if set when describing topics, only show partitions whose leader is not available
    --under-replicated-partitions
        if set when describing topics, only show under replicated partitions
    --zookeeper
        REQUIRED: The connection string for the zookeeper connection in the form host:port. Multiple URLS can be given to allow fail-over.
    [root@master config]# kafka-topics.sh --create --zookeeper localhost:2181 --replication-factor 1 --partitions 1 --topic first
    Created topic "first".

Ha, get the command wrong once and it prints the usage, so now you know what each parameter means and I won't go over them here.
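One line of that usage text deserves a closer look: the warning that increasing --partitions on a topic with keyed messages affects the partition logic. The sketch below illustrates why, under a simplifying assumption: a record's partition is derived from a hash of its key modulo the partition count. Kafka's default partitioner, as far as I know, hashes the serialized key bytes with murmur2; this sketch substitutes `String.hashCode()` as a stand-in, so the concrete partition numbers it prints will not match a real cluster. `PartitionSketch` and `partitionFor` are hypothetical names used only for illustration.

```java
class PartitionSketch {

    // Stand-in for the real partitioner: hash of the key modulo the partition count.
    // Assumption: String.hashCode() replaces Kafka's murmur2 hash of the key bytes.
    static int partitionFor(String key, int numPartitions) {
        return Math.floorMod(key.hashCode(), numPartitions);
    }

    public static void main(String[] args) {
        for (int i = 0; i < 5; i++) {
            String key = "key-" + i;
            // The same key can land on a different partition once the count changes.
            System.out.println(key
                    + ": with 1 partition -> " + partitionFor(key, 1)
                    + ", with 3 partitions -> " + partitionFor(key, 3));
        }
    }
}
```

With a single partition every key maps to partition 0; after the count grows, the same key may map elsewhere, which is why ordering per key is only guaranteed while the partition count stays fixed.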

That creates a topic. We can also use the kafka-topics.sh script to view information about topics:

    [root@master config]# kafka-topics.sh --list --zookeeper localhost:2181
    first
    [root@master config]# kafka-topics.sh --describe --zookeeper localhost:2181 --topic first
    Topic:first PartitionCount:1 ReplicationFactor:1 Configs:
        Topic: first Partition: 0 Leader: 1 Replicas: 1 Isr: 1

In this output, Partition: 0 is the partition number, while the 1 shown for Leader, Replicas and Isr is the ID of a broker in our Kafka cluster.
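To make the fields of the partition line in the --describe output easier to tell apart, here is a small sketch that splits such a line into name/value pairs. It assumes the line has the `Name: value` shape shown above; `DescribeLine` and `parse` are hypothetical names, not part of Kafka.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

class DescribeLine {

    // Matches "Name: value" pairs such as "Leader: 1" or "Replicas: 1,2".
    private static final Pattern FIELD = Pattern.compile("(\\w+):\\s*(\\S+)");

    // Parses one partition line of `kafka-topics.sh --describe` into ordered fields.
    static Map<String, String> parse(String line) {
        Map<String, String> fields = new LinkedHashMap<>();
        Matcher m = FIELD.matcher(line);
        while (m.find()) {
            fields.put(m.group(1), m.group(2));
        }
        return fields;
    }

    public static void main(String[] args) {
        Map<String, String> f = parse("Topic: first Partition: 0 Leader: 1 Replicas: 1 Isr: 1");
        System.out.println("partition number:   " + f.get("Partition"));
        System.out.println("leader broker id:   " + f.get("Leader"));
        System.out.println("replica broker ids: " + f.get("Replicas"));
        System.out.println("in-sync broker ids: " + f.get("Isr"));
    }
}
```

Only Leader, Replicas and Isr carry broker IDs; Partition is just the index of the partition within the topic.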

2 Creating a producer

Same recipe as before:

    [root@master config]# kafka-console-producer.sh
    Read data from standard input and publish it to Kafka.
    Option  Description
    ------  -----------
    --batch-size <Integer: size>
        Number of messages to send in a single batch if they are not being sent synchronously. (default: 200)
    --broker-list
        REQUIRED: The broker list string in the form HOST1:PORT1,HOST2:PORT2.
    --compression-codec [compression-codec]
        The compression codec: either 'none', 'gzip', 'snappy', or 'lz4'.If specified without value, then it defaults to 'gzip'
    --key-serializer
        The class name of the message encoder implementation to use for serializing keys. (default: kafka.serializer.DefaultEncoder)
    --line-reader
        The class name of the class to use for reading lines from standard in. By default each line is read as a separate message. (default: kafka.tools.ConsoleProducer$LineMessageReader)
    --max-block-ms <Long: max block on send>
        The max time that the producer will block for during a send request (default: 60000)
    --max-memory-bytes <Long: total memory in bytes>
        The total memory used by the producer to buffer records waiting to be sent to the server. (default: 33554432)
    --max-partition-memory-bytes <Long: memory in bytes per partition>
        The buffer size allocated for a partition. When records are received which are smaller than this size the producer will attempt to optimistically group them together until this size is reached. (default: 16384)
    --message-send-max-retries <Integer>
        Brokers can fail receiving the message for multiple reasons, and being unavailable transiently is just one of them. This property specifies the number of retires before the producer give up and drop this message. (default: 3)
    --metadata-expiry-ms <Long: metadata expiration interval>
        The period of time in milliseconds after which we force a refresh of metadata even if we haven't seen any leadership changes. (default: 300000)
    --old-producer
        Use the old producer implementation.
    --producer-property
        A mechanism to pass user-defined properties in the form key=value to the producer.
    --producer.config
        Producer config properties file. Note that [producer-property] takes precedence over this config.
    --property
        A mechanism to pass user-defined properties in the form key=value to the message reader. This allows custom configuration for a user-defined message reader.
    --queue-enqueuetimeout-ms <Integer: queue enqueuetimeout ms>
        Timeout for event enqueue (default: 2147483647)
    --queue-size <Integer: queue_size>
        If set and the producer is running in asynchronous mode, this gives the maximum amount of messages will queue awaiting sufficient batch size. (default: 10000)
    --request-required-acks <String: request required acks>
        The required acks of the producer requests (default: 1)
    --request-timeout-ms <Integer: request timeout ms>
        The ack timeout of the producer requests. Value must be non-negative and non-zero (default: 1500)
    --retry-backoff-ms <Integer>
        Before each retry, the producer refreshes the metadata of relevant topics. Since leader election takes a bit of time, this property specifies the amount of time that the producer waits before refreshing the metadata. (default: 100)
    --socket-buffer-size <Integer: size>
        The size of the tcp RECV size. (default: 102400)
    --sync
        If set message send requests to the brokers are synchronously, one at a time as they arrive.
    --timeout <Integer: timeout_ms>
        If set and the producer is running in asynchronous mode, this gives the maximum amount of time a message will queue awaiting sufficient batch size. The value is given in ms. (default: 1000)
    --topic
        REQUIRED: The topic id to produce messages to.
    --value-serializer
        The class name of the message encoder implementation to use for serializing values. (default: kafka.serializer.DefaultEncoder)
    [root@master config]# kafka-console-producer.sh --broker-list localhost:9092 --topic first
    this is a test.

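Trigger the usage message first, then read what the parameters mean. As before, I won't go into detail.

Once the command runs with correct arguments, it prints no feedback. It simply waits for you to type "messages", publishes each one to Kafka, and Kafka stores them until a consumer reads them. Press Ctrl + C to stop.

Here I typed one sentence, "this is a test.", which is the message we want to publish.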
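The usage text says that, by default, each line read from standard input becomes a separate message. The following sketch mimics only that line-splitting behaviour with the Java standard library; it does not talk to Kafka, and `LineMessages` and `readMessages` are hypothetical names rather than the console producer's actual reader class.

```java
import java.io.BufferedReader;
import java.io.Reader;
import java.io.StringReader;
import java.util.List;
import java.util.stream.Collectors;

class LineMessages {

    // Treats every input line as one message, the way the console producer
    // is described to do by default.
    static List<String> readMessages(Reader in) {
        return new BufferedReader(in).lines().collect(Collectors.toList());
    }

    public static void main(String[] args) {
        // Simulated terminal input: two lines, hence two messages.
        Reader typed = new StringReader("this is a test.\nHello World !\n");
        for (String message : readMessages(typed)) {
            System.out.println("would publish: " + message);
        }
    }
}
```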

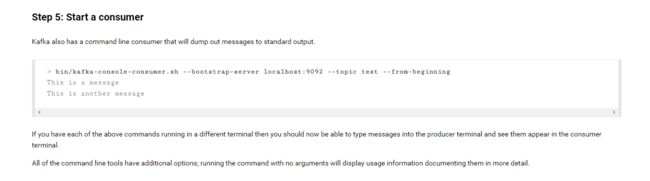

3 Creating a consumer

At this point we notice a sentence in the documentation: "If you have each of the above commands running in a different terminal then you should now be able to type messages into the producer terminal and see them appear in the consumer terminal." In other words, if we run the producer and the consumer in two separate terminals, the messages we type into the producer show up in the consumer. Let's try it: open a new terminal and create the consumer there.

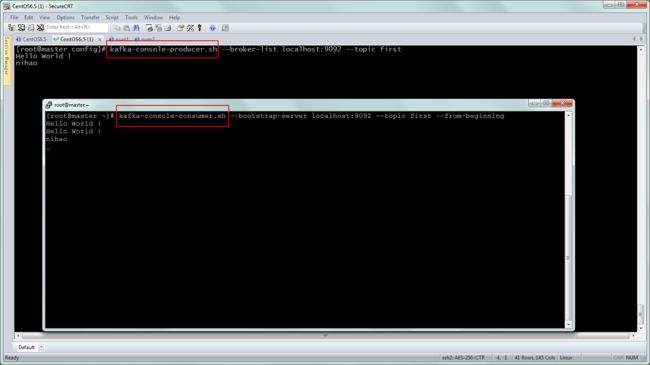

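As the screenshot shows, I started both. The producer produces on one side while the consumer consumes on the other...

You may wonder why "Hello World !" is shown twice. While trying to capture this screenshot I got it wrong once: the producer did send the message and my consumer was waiting to receive it, but the two were not using the same topic. After I stopped both the producer and the consumer and started them again, this is what I got.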

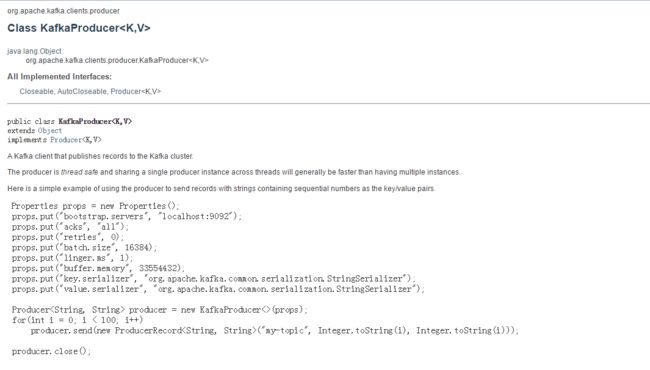
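The mix-up described above comes down to topics being independent logs: a message published to one topic is never visible to a consumer reading another. The toy model below illustrates that idea in memory only. It is not Kafka code, it ignores partitions and consumer groups, and `TopicLogSketch`, `publish`, `read` and the topic name "second" are all hypothetical.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

class TopicLogSketch {

    // One append-only list of messages per topic name.
    private final Map<String, List<String>> logs = new HashMap<>();

    // Appends a message to the topic's log and returns its offset (position in the log).
    int publish(String topic, String message) {
        List<String> log = logs.computeIfAbsent(topic, t -> new ArrayList<>());
        log.add(message);
        return log.size() - 1;
    }

    // Returns the messages of one topic starting at the given offset.
    List<String> read(String topic, int fromOffset) {
        List<String> log = logs.getOrDefault(topic, Collections.emptyList());
        int start = Math.max(0, fromOffset);
        if (start >= log.size()) {
            return Collections.emptyList();
        }
        return new ArrayList<>(log.subList(start, log.size()));
    }

    public static void main(String[] args) {
        TopicLogSketch broker = new TopicLogSketch();
        broker.publish("first", "Hello World !");
        // A reader on a different topic sees nothing.
        System.out.println("second: " + broker.read("second", 0));
        // A reader on the same topic sees the message.
        System.out.println("first:  " + broker.read("first", 0));
    }
}
```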

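Next, let's do the same thing through the API. The documentation bundled with the installation package is a mess once you open it, so it is better to read the online documentation instead.

4 Using the API

Before we begin: since I use Maven to build the project, pulling in the required JARs is easy.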

Create a new Maven project, then add the following to pom.xml:

    <dependency>
        <groupId>org.apache.kafka</groupId>
        <artifactId>kafka-clients</artifactId>
        <version>0.10.1.0</version>
    </dependency>

With that, the JARs needed for Kafka are pulled in.

We can start by creating a producer:

    package com.signal.kafkaTest;

    import java.util.Properties;

    import org.apache.kafka.clients.producer.KafkaProducer;
    import org.apache.kafka.clients.producer.Producer;
    import org.apache.kafka.clients.producer.ProducerRecord;

    public class ProducerTest {

        public static void main(String[] args) {
            Properties props = new Properties();
            props.put("bootstrap.servers", "master:9092,master:9093");
            props.put("acks", "all");
            props.put("retries", 0);
            props.put("batch.size", 16384);
            props.put("linger.ms", 1);
            props.put("key.serializer", "org.apache.kafka.common.serialization.StringSerializer");
            props.put("value.serializer", "org.apache.kafka.common.serialization.StringSerializer");

            // The type parameters match the String serializers configured above.
            Producer<String, String> producer = new KafkaProducer<>(props);
            for (int i = 0; i < 100; i++) {
                // Topic "message", key "key-i", value "value-i".
                ProducerRecord<String, String> r = new ProducerRecord<>("message", "key-" + i, "value-" + i);
                producer.send(r);
            }
            // close() waits for previously sent records to complete before returning.
            producer.close();
        }
    }

This is already pretty clear ^U^, so I'll cut corners and skip the explanation.
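For readers who would still like the settings spelled out, here is a sketch that builds the same configuration with a comment on each key. It uses only `java.util.Properties`, so it runs without the Kafka JARs; `ProducerSettings` and `producerProps` are hypothetical names, and the one-line descriptions are my summary of the producer configuration documentation rather than text from the original post.

```java
import java.util.Properties;
import java.util.TreeSet;

class ProducerSettings {

    // Builds the producer configuration used in ProducerTest, one commented key at a time.
    static Properties producerProps(String bootstrapServers) {
        Properties props = new Properties();
        // Initial broker addresses the client uses to discover the cluster.
        props.setProperty("bootstrap.servers", bootstrapServers);
        // "all": a send counts as complete only after all in-sync replicas acknowledge it.
        props.setProperty("acks", "all");
        // 0: a failed send is not retried by the client.
        props.setProperty("retries", "0");
        // Upper bound, in bytes, on one batch of records for a partition.
        props.setProperty("batch.size", "16384");
        // Wait up to 1 ms for more records so they can share a batch.
        props.setProperty("linger.ms", "1");
        // Keys and values are Strings, so both use the String serializer.
        props.setProperty("key.serializer", "org.apache.kafka.common.serialization.StringSerializer");
        props.setProperty("value.serializer", "org.apache.kafka.common.serialization.StringSerializer");
        return props;
    }

    public static void main(String[] args) {
        Properties props = producerProps("master:9092,master:9093");
        for (String name : new TreeSet<>(props.stringPropertyNames())) {
            System.out.println(name + " = " + props.getProperty(name));
        }
    }
}
```

The values are given as strings here; the original listing passes some of them as integers, and either form should be accepted by the client's configuration parsing.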

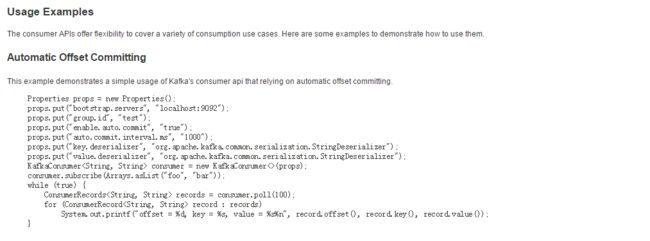

Next, the consumer:

    package com.signal.kafkaTest;

    import java.util.Arrays;
    import java.util.Properties;

    import org.apache.kafka.clients.consumer.Consumer;
    import org.apache.kafka.clients.consumer.ConsumerRecord;
    import org.apache.kafka.clients.consumer.ConsumerRecords;
    import org.apache.kafka.clients.consumer.KafkaConsumer;

    public class ConsumerTest {

        public static void main(String[] args) {
            Properties props = new Properties();
            props.put("bootstrap.servers", "master:9092,master:9093");
            props.put("group.id", "test");
            // Commit consumed offsets automatically, once per second.
            props.put("enable.auto.commit", "true");
            props.put("auto.commit.interval.ms", "1000");
            props.put("session.timeout.ms", "30000");
            props.put("key.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");
            props.put("value.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");

            // The type parameters match the String deserializers configured above.
            Consumer<String, String> consumer = new KafkaConsumer<>(props);
            consumer.subscribe(Arrays.asList("message"));
            while (true) {
                // Wait up to 10 ms for new records, then print whatever arrived.
                ConsumerRecords<String, String> records = consumer.poll(10);
                for (ConsumerRecord<String, String> record : records) {
                    System.out.println("offset=" + record.offset() + ",--key=" + record.key() + ",--value=" + record.value());
                }
            }
        }
    }

Run the producer first, then the consumer. The console output looks like this:

    offset=208,--key=key-0,--value=value-0
    offset=209,--key=key-1,--value=value-1
    offset=210,--key=key-2,--value=value-2
    offset=211,--key=key-3,--value=value-3
    offset=212,--key=key-4,--value=value-4
    offset=213,--key=key-5,--value=value-5
    offset=214,--key=key-6,--value=value-6
    offset=215,--key=key-7,--value=value-7
    offset=216,--key=key-8,--value=value-8
    offset=217,--key=key-9,--value=value-9
    offset=218,--key=key-10,--value=value-10
    (remaining output omitted)...

That's the basic usage of Kafka. I hope you'll read the documentation carefully; you'll be able to write this yourself in no time.