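1. What is a macro function? A function defined through a macro is a macro function. As shown below, during the preprocessing stage Add(x,y) is replaced with ((x)*(y)): #define Add(x,y) ((x)*(y)) int main(){ int a=10; int b=20; int d=10; int c=Add(a+d,b)*2; cout<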

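Street-promotion scripts: how to handle parents' rejections during on-the-ground promotion

校师学

School principals have probably run into this situation often when doing street promotion: marketing staff report that parents will not sign up, consultants report that it is hard to get these parents to visit, the campus's promotion efforts are weak, and enrollment is difficult. Why? Looking only at the promotion itself: on one hand, parents are bombarded with more and more information and are increasingly "immune" to it; on the other hand, the promoters' professional skills and sales scripts have not improved, so they cannot handle parents' rejections, do not know how to follow up with interested parents, and watch them walk away; when parents ask questions, they do not know how to answer skillfully, and opportunities are lost. Because the answers lack skill and professionalism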

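Thank you all, love you all!

鹿游儿

Yesterday the family went to the hot springs and took both children along. The evening before leaving, I hurried off work and rushed home to pack with the kids. After dinner I took out a pen and a notebook (the one used for journaling on our last trip to Macau), wrote down 1\2\3\4\5\6\7\8\9, and then asked Xiaoyi to think about what to bring on the trip. Next to each number she drew: swimsuit, swim ring, Shaun, underwear, tapuy, slippers... When she finished, I had her go through the notebook herself and pack each item. To my surprise, the book she brought this time was again "The Poop Factory" (in the evening her great-aunt sent a photo; little sister was so tired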

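How do you define a macro function in C?

小九格物

C language

In C, macro functions are defined through the preprocessor, which replaces macro invocations in the code before compilation. Macro functions can mimic the behavior of functions, but they are not real functions: no type checking is performed on them at compile time, and no storage is allocated for them. A macro function is usually defined with the #define directive, followed by the macro's name and parameter list and the code the macro expands to. Ways to define a macro function: 1. Basic macro function: the simplest form, which directly defines an expression. #define SQUARE(x) ((x)*(x)) 2. With param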
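The reason SQUARE wraps both its parameter and its whole body in parentheses can be shown with a small simulation. This is only a sketch in Python that mimics the preprocessor's plain text substitution; `expand_macro` and the unparenthesized body `x*x` are hypothetical names introduced for illustration, not part of any C toolchain.

```python
import re

def expand_macro(body: str, param: str, arg: str) -> str:
    """Textually substitute `arg` for every whole-word `param` in `body`,
    the way a preprocessor does: no evaluation, no type checking."""
    return re.sub(rf"\b{re.escape(param)}\b", lambda _m: arg, body)

# Hypothetical unparenthesized body vs. the parenthesized SQUARE body.
unsafe = expand_macro("x*x", "x", "a+1")        # text becomes a+1*a+1
safe = expand_macro("((x)*(x))", "x", "a+1")    # text becomes ((a+1)*(a+1))

a = 3
unsafe_value = eval(unsafe)   # parsed as a + (1*a) + 1
safe_value = eval(safe)       # parsed as (a+1) * (a+1)

print(unsafe, "=", unsafe_value)
print(safe, "=", safe_value)
```

Because the argument is pasted in as text, operator precedence in the surrounding expression decides the result unless the parentheses are there.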

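Designing and implementing function permissions and data permissions under microservices

nbsaas-boot

microservices java architecture

In a microservice architecture, controlling function permissions and data permissions is especially important. As the system grows and the number of microservices increases, ensuring accurate, fine-grained access control across users and services becomes key to designing a security policy. This article discusses how to design and implement function-permission and data-permission control in a microservice system. 1. Definitions of function permissions and data permissions. Function permission: a user's or system role's right to access a specific function, typically whether a given user role may perform an operation such as viewing orders, creating orders, or modifying user profiles. Data permission: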

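Understanding Gunicorn: The Cornerstone of Python WSGI Servers

范范0825

ipython linux operations

Understanding Gunicorn: The Cornerstone of Python WSGI Servers. Introduction: Gunicorn, in full Green Unicorn, is an efficient, lightweight HTTP server designed for Python WSGI (Web Server Gateway Interface) applications. As a common tool for deploying Python web applications, Gunicorn is known for its high performance and ease of use. This article introduces Gunicorn's basic concepts, installation, and configuration to help beginners get started quickly. 1. What is Gunico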
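To make "WSGI application" concrete, here is a minimal sketch of the kind of callable a WSGI server such as Gunicorn serves. The function name `app`, the response text, and the `call_locally` helper are assumptions made for illustration; the helper only exercises the callable in-process and is not part of Gunicorn.

```python
def app(environ, start_response):
    """A minimal WSGI application: takes the request environ and a
    start_response callback, returns an iterable of byte strings."""
    body = b"Hello from a WSGI app\n"
    start_response("200 OK", [
        ("Content-Type", "text/plain"),
        ("Content-Length", str(len(body))),
    ])
    return [body]

def call_locally(wsgi_app, path="/"):
    """Invoke the app once without any server; returns (status, body)."""
    captured = {}

    def start_response(status, headers):
        captured["status"] = status

    environ = {"REQUEST_METHOD": "GET", "PATH_INFO": path}
    body = b"".join(wsgi_app(environ, start_response))
    return captured["status"], body

if __name__ == "__main__":
    print(call_locally(app))
```

Assuming the file is saved as `hello.py`, running `gunicorn hello:app` would serve this callable; `hello` is just the hypothetical module name.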

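Xiaoli's Growth Story (43)

玲玲54321

Xiaoli found that even when she finally managed to settle her state of mind, the next moment some uncertain, troublesome thing would always appear, one problem after another; life never pauses, and small problems keep coming. But from the book she read today she accepted that life is uncertain, and that capable people are the ones who keep creating certainty: having more certainty than others in their own field lets them stand out, show their value, and so gain more than others do. For exactly this reason, because she had worked on herself too little in the past, she now finds fighting her way along life's road full of difficulty; she seems never able to shake off that sense of powerlessness, a kind of habi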

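Learn some psychology to protect children's health

静候花开_7090

Yesterday I attended a lecture by Zhang Ling, associate professor in the Department of Educational Management at Central China Normal University, titled "Where Is the Last Refuge for Students' Mental Health? Thinking Beyond Education and Technology". Today I went through it again and gained a great deal. Dr. Zhang has also noticed the serious incidents in today's society in which children, because of psychological problems, self-harm, commit suicide, or hurt others. She introduced an important point: some basic propositions of mental health differ from the propositions we usually hold in education. She gave several examples to show that what we assume to be healthy is not necessarily healthy in the psychological sense. For example, if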

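December 19, 2021: reflections on the Chunlei Education Group team-building event, by Huang Xiaodan

黄错错加油

Reflections: 1. A process of going from strangers to familiar faces. The games let us get some practice in a relaxed atmosphere and also taught us quite a lot. 2. During the games, what each of us contributed was individual effort, and what showed was the strength of the team. What gets worked out is often not just familiarity with the work but a closer fit in outlook. 3. The same holds for work. In our respective posts, only when everyone takes the right position, does their own job, brings their abilities fully into play, and pulls together in one direction can the greatest success be achieved. New insights: 1. Team spirit needs constant innovation. In the past, people saw innovation as taking risks; now people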

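Cell Insight | Another new finding from single-cell sequencing: it can be used to diagnose individuals co-infected with HIV-1 and Mtb

尐尐呅

Tuberculosis is the leading cause of death among AIDS patients with co-occurring diseases. Tuberculosis is caused by infection with Mycobacterium tuberculosis (Mtb), and acquired immunodeficiency syndrome (AIDS) is caused by infection with human immunodeficiency virus type 1 (HIV-1). Zhang Guoliang's team at the National Clinical Research Center for Infectious Diseases / Shenzhen Third People's Hospital, together with Wu Liang's team at the Shenzhen BGI Life Science Research Institute, jointly found that single-cell sequencing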

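Differences and connections between C++ iostream and C stdio

黄卷青灯77

c++ algorithms programming-language iostream stdio

In C++, iostream and the C language's stdio.h are both libraries for handling input and output, but they differ in many ways in design, usage, and features. The differences and connections are as follows. Differences: 1. Programming style. iostream (C++ style): the input/output stream class library in the C++ standard library, supporting object-oriented I/O. Typical usage is cin (input) and cout (output), with the << and >> operators handling the data. It is more type-safe and supports input and output of user-defined types. #include int main(){ in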

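The Jade Pool Defense Line

谜影梦蝶

Although Minghua escaped Yingmeng's army, he was a loyal minister, and he chose to report the state of the battle. Becoming a deserter after losing to Yingmeng, surviving while his superiors died, the fall of the Seventh Heaven... any one of these was enough to have Minghua executed. Minghua of course knew that, given the habits of the Immortal Realm's leadership, he was certain to die once the letter was sent, yet he still chose to report the truth, out of duty. Likewise, once the letter reached the Immortal Palace, most of those who learned of it concluded that Minghua was finished, so everyone from the leadership of the Immortal Realm down to the street sweepers, Minghua included, prepared for his death. If the Immortal Realm were still a contest between two sides, Minghua would certainly die. However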

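The aftereffects of hiking

璃绛

Hiking, climbing, walking step by step toward the highest point, is a challenge. Reaching the top is a joy beyond words; feeling the breeze and taking in the view at the summit is an experience like no other. However, the climb is fun for a moment, and then your legs shake on the way down: on the bumpy road, heading downhill all the way, without enough leg strength, you tremble so much you cannot stop! The next day your legs are sure to hurt, your whole body aches, and you can neither sit nor stand comfortably!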

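Combining enums and annotations in a project

飞翔的马甲

java enum annotation

Preface: version compatibility has always been a headache in iterative development. The latest release added support for a new question type. If a newly created questionnaire contains the new question type, old client versions cannot handle it; if it does not, both old and new clients can. We handle this by adding a custom annotation to the questionnaire-type enum. Along the way, this is a chance to review enums and annotations.

1. Enums

1. When an enum class is created, it already extends java.lang.Enum, so a custom enum class cannot extend any other class, but it can implement interfaces.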

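[Scala 17] Scala Core 11: uses of the underscore _

bit1129

scala

The underscore _ is used widely in Scala; its basic meaning is a placeholder. Using _ is also where a great many problems arise. This article will keep being extended with the contexts in which _ is used and what it means in each.

1. Use in higher-order functions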

scala> val list = List(-3,8,7,9)

list: List[Int] = List(-3, 8, 7, 9)

scala> list.filter(_ > 7)

r

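Web caching basics: terminology, HTTP headers, and caching strategies

dalan_123

Web

For many people visiting a site, if the site offers intelligent content caching to improve the user experience, visitors will keep coming. Caching, the temporary storage of earlier requests, is one of the core content-delivery strategies implemented in the HTTP protocol. Components along the delivery path can all cache content to speed up subsequent requests, governed by the caching policy declared for that content. What follows discusses the basic concepts of web content caching policy, including how to choose a caching policy so that caches across the Internet handle your content correctly, and talks about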

crontab problem

周凡杨

linux crontab unix

1: 0481-079 Reached a symbol that is not expected.

Background:

*/5 * * * * /usr/IBMIHS/rsync.sh

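Making tomcat share sessions across second-level domains

g21121

session

By default tomcat does not share sessions across second-level domains, so in some cases, after logging in, a jump from the main domain to a subdomain ends up with a different session. Changing a few configuration settings is enough to fix this.

Open the context.xml file under tomcat's conf directory

Find the Context tag and change it to the following

If your domain is www.test.com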

<Context sessionCookiePath="/path&q

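Summary of common functions in the web reporting tool FineReport (math and trigonometric functions)

老A不折腾

Web finereport summary

ABS

ABS(number): returns the absolute value of the given number. The absolute value is the number without its sign.

Number: any real number whose absolute value is needed.

Examples:

ABS(-1.5) equals 1.5.

ABS(0) equals 0.

ABS(2.5) equals 2.5.

ACOS

ACOS(number): returns the arccosine of the given value. The arccosine is an angle, and the returned angle is expressed in radians.

Number: the value whose angle is to be retur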
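For comparison, the same two operations can be tried in Python. This is only an illustrative sketch using Python's built-in `abs` and `math.acos`, not FineReport's own functions; the input `0.5` for the arccosine is an arbitrary value chosen for the example.

```python
import math

# Absolute value: the number without its sign (same inputs as the ABS examples).
abs_values = [abs(-1.5), abs(0), abs(2.5)]
print(abs_values)

# Arccosine: the result is an angle in radians; convert to degrees if needed.
angle = math.acos(0.5)
print(angle, math.degrees(angle))
```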

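sh file for starting java processes on linux

墙头上一根草

linux shell jar

#!/bin/bash

# Initialize the PID variables for the server processes

user_pid=0;

robot_pid=0;

loadlort_pid=0;

gateway_pid=0;

#########

# Check whether the related servers started successfully

# Notes:

# Combine the JPS command bundled with the JDK with grep to find the pid precisely

# jps with the l option shows the full java package path

# Use awk to split out the pid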

My spring study notes 5: how to replace BeanFactory with ApplicationContext

aijuans

Spring 3 series

How do you replace BeanFactory with ApplicationContext?

package onlyfun.caterpillar.device;

import org.springframework.beans.factory.BeanFactory;

import org.springframework.beans.factory.xml.XmlBeanFactory;

import

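A detailed look at how Linux uses memory

annan211

linux memory, Linux memory analysis

Source: http://blog.jobbole.com/45748/

I am a programmer, so here I explain Linux memory usage from a programmer's point of view.

When memory management comes up, two concepts come to mind: virtual memory and physical memory. Both come mainly from support in the linux kernel.

Linux manages memory at two levels. The first is the linear region, something like 00c73000-00c88000, which corresponds to virtual memory; it does not actually occupy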

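Common single-table query commands for databases and how to use them (1)

百合不是茶

oracle functions single-table queries

Create the database;

--create the table

create table bloguser(username varchar2(20),userage number(10),usersex char(2));

This creates the bloguser table, which has three columns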

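Multithreading basics

bijian1013

java multithreading thread

1. Processes and threads

A process is a program running independently in memory, with its own address space. A running Notepad program, for example, is a process.

"Multitasking": the operating system's ability to run several processes (programs) at the same time. WINDOWS, for example, can run Notepad, Paint, WORD, Eclipse, and so on simultaneously.

Thread: a single sequential flow of control inside a process.

Threads and processes

a. Every process has its own independent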

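A simple fastjson usage example

bijian1013

fastjson

1. Introduction

Alibaba's fastjson is a high-performance, full-featured JSON library written in Java. It uses an "assume ordered, match fast" algorithm to push JSON parsing performance to the limit, and is described as the fastest JSON library in Java at present. It covers both "serialization" and "deserialization", and has the following characteristics: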

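[RPC framework Burlap] Integrating Burlap with Spring

bit1129

spring

Burlap and Hessian are both RPC frameworks from Caucho, but Burlap has not been updated for several years, so Spring marked its Burlap support as Deprecated in 4.0. Burlap should therefore no longer be considered when choosing an RPC framework.

This article records how Burlap is used anyway, mostly copied and pasted from the article on integrating Hessian with Spring, [RPC framework Hessian 4] Integrating Hessian with Spring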

[Mahout 1] The meaning of Mahout command parameters

bit1129

Mahout

1. mahout seqdirectory

$ mahout seqdirectory

--input (-i) input Path to job input directory (the raw text files).

--output (-o) output The directory pathna

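Using flock file locks on linux to stop a script from running twice

ronin47

linux lock duplicate-execution

The linux crontab command runs operations on a schedule, with a minimum period of once per minute. For running something every second with crontab, see my earlier article "linux crontab 实现每秒执行" (running every second with linux crontab). Now there is a problem: if a task is set to run once a minute but may not finish within that minute, the system starts the task again, so two copies of the same task run at once.

For example:

<? // test.php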
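The idea of guarding a job with a file lock can be sketched in Python with the standard `fcntl.flock` call. This is an illustration under assumptions, not the article's PHP code: `try_lock` is a hypothetical helper, and the lock file is placed in a fresh temporary directory for the demo, whereas a real cron job would use one fixed, agreed-upon path.

```python
import fcntl
import os
import tempfile

def try_lock(path):
    """Try to take an exclusive, non-blocking lock on `path`.
    Returns the open file (keep it open to hold the lock) or None
    if some other holder already has the lock."""
    fp = open(path, "w")
    try:
        fcntl.flock(fp, fcntl.LOCK_EX | fcntl.LOCK_NB)
    except BlockingIOError:
        fp.close()
        return None
    return fp

# Demo path only; a scheduled job would use a fixed lock file path.
lock_path = os.path.join(tempfile.mkdtemp(), "job.lock")

first = try_lock(lock_path)    # acquires the lock
second = try_lock(lock_path)   # a second opener is refused while it is held

if second is None:
    print("job already running, skipping this run")

first.close()                  # closing the file releases the lock
third = try_lock(lock_path)    # now the lock can be taken again
```

A job written this way exits early when `try_lock` returns None, so an overlapping cron run does no work; the lock is also released automatically if the holding process exits.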

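java-74: one number occurs in an array more than half the array's length; find that number

bylijinnan

java

public class OcuppyMoreThanHalf {

/**

* Q74 One number occurs in the array more than half the array's length; find that number

* two solutions:

* 1.O(n)

* see <beauty of coding>--removing two different numbers each time does not change this property of the array

* 2.O(nlogn)

* Sort. The middle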
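The two approaches named in the comment can be sketched as follows. This is a Python illustration rather than the article's Java code; the function names are hypothetical, and both versions assume that a majority element really exists in the input. The O(n) version is the standard Boyer-Moore voting form of "cancel two different numbers at a time".

```python
def majority_by_voting(nums):
    """O(n): pair off different numbers; the majority element survives."""
    candidate, count = None, 0
    for n in nums:
        if count == 0:
            candidate, count = n, 1      # start a new candidate
        elif n == candidate:
            count += 1                   # same number: one more vote
        else:
            count -= 1                   # different number: cancel one pair
    return candidate

def majority_by_sorting(nums):
    """O(n log n): after sorting, the middle element is the majority."""
    return sorted(nums)[len(nums) // 2]

data = [1, 2, 3, 2, 2, 2, 5, 4, 2]       # 2 occurs 5 times out of 9
print(majority_by_voting(data), majority_by_sorting(data))
```

If the input might not contain a majority element, a second pass counting the candidate's occurrences is needed to confirm the result.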

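linux system-related commands

candiio

linux

System parameters

cat /proc/cpuinfo CPU-related parameters

cat /proc/meminfo memory-related parameters

cat /proc/loadavg load status

Performance parameters

1) top

M: sort by memory usage

P: sort by CPU usage

1: show usage for each CPU

k: kill a process

o: more sorting rules

Enter: refresh the data

2) ulimit

ulimit -a: show the current user's system limit param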

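[Business and assets] Why staying independent and stable matters for software development

comsci

software development

From birth to maturity, a software architecture goes through many rounds of revision and rework

If, during this process, capital from other, outside industries keeps getting involved in upgrading the architecture

then the software developers' original design ideas and development path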

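Compiling OpenJDK6 on CentOS5.5

Cwind

linux OpenJDK

After several setbacks I finally compiled OpenJDK6 successfully on my own CentOS5.5. Here is a brief record of the build process and the problems encountered, for future reference.

0. About OpenJDK

OpenJDK is the GPL-licensed implementation of the Java platform released by Sun (now Oracle). Its advantages:

1. Its core code is basically the same as that of the Sun (-> Oracle) product release of the same period, so its pedigree is sound; there is no need to worry about performance, and there are basically no compatibility problems either. (The main difference in the code is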

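java garbled-text (encoding) problems

dashuaifu

java encoding problems, garbled Chinese in js

When swfupload uploads a file and a parameter value is Chinese, the value received on the back end is garbled. In js use setPostParams({"tag" : encodeURI( document.getElementByIdx_x("filetag").value,"utf-8")});

Then in the servlet String t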

Fixing "command not found" for many commands in cygwin

dcj3sjt126com

cygwin

Fixing "command not found" for many commands in cygwin

Change the cygwin.BAT file as follows

@echo off

D:

set CYGWIN=tty notitle glob

set PATH=%PATH%;d:\cygwin\bin;d:\cygwin\sbin;d:\cygwin\usr\bin;d:\cygwin\usr\sbin;d:\cygwin\us

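[Introduction] Upgrading from Yii 1.1

dcj3sjt126com

PHP yii2

Version 2.0 of the framework is a complete rewrite, and there are many differences between versions 1.1 and 2.0. Upgrading from 1.1 is therefore not as simple as moving between minor versions; this guide shows the main differences between the two versions.

If you have not used Yii 1.1 before, you can skip this chapter and start reading directly from "Getting Started".

Note that Yii 2.0 introduces many new features that this chapter does not cover. Reading through the entire definitive guide to learn about all the new features is strongly recommended. That way you may dis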

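Summary of configuring password-free SSH login on Linux

eksliang

ssh-keygen, Linux SSH password-free authentication, Linux SSH mutual trust

Please cite the source when reposting: http://eksliang.iteye.com/blog/2187265 1. How it works

We use ssh-keygen on ServerA to generate a private key and a public key. After the generated public key is copied to the remote machine ServerB, the ssh command can log in to ServerB without a password.

There are two encryption methods for generating the public and private keys. The first is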

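Destroying an Activity with a swipe gesture

gundumw100

android

Always copying ios; doing android is truly miserable!

The requirement: destroy an Activity with a swipe gesture

What to do? Write it yourself?

No need; ask Baidu first.

Result:

http://blog.csdn.net/xiaanming/article/details/20934541

First make the Activity you need extend SwipeBackActivity; it adds a layer of SwipeBackLay at the root of your layout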

Changing table border colors with JavaScript

ini

JavaScript html Web html5 css

See the effect: http://hovertree.com/texiao/js/2.htm The code is below; you can also save it to an HTML file to see the effect:

<html>

<head>

<meta charset="utf-8">

<title>表格边框变换颜色代码-何问起</title>

</head>

<body&

Kafka Rest : Confluent

kane_xie

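kafka REST confluent

I recently got a requirement for kafka rest, but kafka does not yet provide a rest api (one is probably in development, since rest is so popular). Searching online, I found the Confluent Platform; this article briefly covers installing it.

As an aside, I recommend a search engine called 九尾搜索, formerly 谷粉SOSO; those who do not want to go over the firewall to reach Google can use it. I got used to Google while working at a foreign company, and after leaving, searching technical questions with Baidu gave painfully poor matches.

Environment: Ubu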

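Calendar is not a singleton

men4661273

singleton Calendar

When using Calendar, we call Calendar.getInstance() to obtain a date object. This looks just like the way a singleton is obtained, so is it actually a singleton? If it were, after one object changed its contents, wouldn't the data in another thread be thrown into disorder? Both experiments and the source code show that Calendar is not a singleton.

Test: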

Calendar c1 =

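The relationship between thread memory and main memory

qifeifei

java thread

1. When java threads share a variable in main memory, it goes through several stages:

lock: locks the variable in main memory so that one thread owns it exclusively.

unlock: releases the lock taken by lock, after which other threads have a chance to access the variable.

read: reads the variable's value from main memory into working memory.

load: stores the value obtained by read into the variable's copy in working memory.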

schedule和scheduleAtFixedRate

tangqi609567707

java timer schedule

Original article: http://blog.csdn.net/weidan1121/article/details/527307

import java.util.Timer;import java.util.TimerTask;import java.util.Date;

/** * @author vincent */public class TimerTest {

erlang deployment

wudixiaotie

erlang

1. If this error is reported when starting a node:

{"init terminating in do_boot",{'cannot load',elf_format,get_files}}

then add the following to reltool.config

{app, hipe, [{incl_cond, exclude}]},

2. When running generate, if you encounter:

ERROR

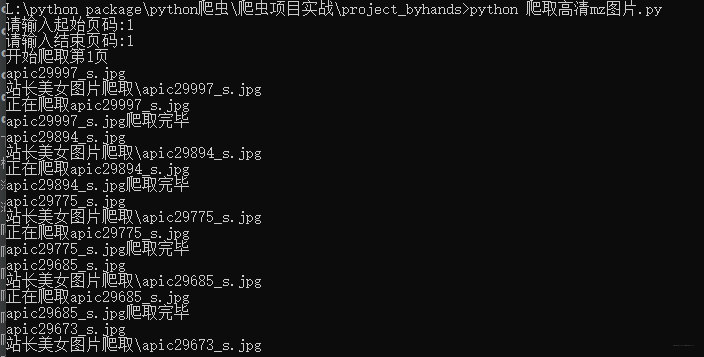


import os
import time
import urllib.request

# NOTE: this excerpt starts partway through the script. The loop below is the
# tail of a function whose beginning is cut off, and handle_request() and
# parse_content() are called in main() but are not shown in this excerpt.
    for image_src in image_list:
        download_image(image_src)


def download_image(image_src):
    dirpath = '站长美女图片爬取'
    # Create the output folder if it does not exist yet
    if not os.path.exists(dirpath):
        os.mkdir(dirpath)
    # Derive the file name from the image URL
    filename = os.path.basename(image_src)
    print(filename)
    # Build the local path for the image
    filepath = os.path.join(dirpath, filename)
    print(filepath)
    # Headers sent with the image request
    headers = {
        'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/80.0.3987.149 Safari/537.36',
        'Cookie': 'UM_distinctid=172bafc075930f-0b3214295ea2fd-f313f6d-1fa400-172bafc075a521; __gads=ID=7343205fec19267e:T=1592274993:S=ALNI_MZuCx78VBx2WBiIEBOXsKZoldvefg'
    }
    # https://sc.chinaz.com/tupian/201228241995.htm
    # The scraped src is protocol-relative, so prepend the scheme
    image_src = 'https:' + image_src
    # Send the request for the image
    request = urllib.request.Request(url=image_src, headers=headers)
    # Get the response
    response = urllib.request.urlopen(request)
    # Save the image in binary form
    with open(filepath, 'wb') as fp:
        print(f'Downloading {filename}')
        fp.write(response.read())
        print(f'Finished downloading {filename}')
    # Pause between downloads
    time.sleep(2)


def main():
    url = 'http://sc.chinaz.com/tupian/xingganmeinvtupian{}.html'
    start_page = int(input('Enter the first page number: '))
    end_page = int(input('Enter the last page number: '))
    for page in range(start_page, end_page + 1):
        request = handle_request(url, page)
        print('Start crawling page {}'.format(page))
        response = urllib.request.urlopen(request)
        content = response.read().decode()
        # print(content)
        parse_content(content)
        print('Finished crawling page {}'.format(page))


if __name__ == '__main__':
    start = time.time()
    main()
    print('Crawl finished, wrapping up')
    end = time.time()
    print(f'Total time to crawl all images: {end-start}s')
