OpenStack Nova: component workflow, mechanisms, and a detailed deployment walkthrough

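Contents

- I. What role does the Nova compute service play in OpenStack?

- II. Nova system architecture

  - 1. Roles of the Nova architecture components

  - 2. Basic workflow diagrams of the Nova architecture

- III. Nova architecture components in detail

  - 1. Nova component: API

  - 2. Nova component: Scheduler

    - 2.1 Types of Nova scheduler

    - 2.2 The filter scheduler's scheduling process

    - 2.3 Filters

    - 2.4 Filter types

    - 2.5 Weights

  - 3. Compute component

  - 4. Summary of the three components

    - 4.1 What the three components do

    - 4.2 Scheduler flow and sub-functions

  - 5. Conductor component

  - 6. Placement API component

  - 7. Console interfaces

  - 8. VM instantiation flow

  - 9. Nova deployment modes

- IV. Nova's Cell architecture

  - 1. What is a cell, and why do cells exist?

- V. Deploying the Nova components

  - 1. Deploying the openstack-placement component

  - 2. Deploying the nova component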


一 ,Nova计算服务在openstack中的作用是什么?

- 计算服务是openstack最核心的服务之一,负责维护和管理云环境的计算资源,它在openstack项目中代号是nova

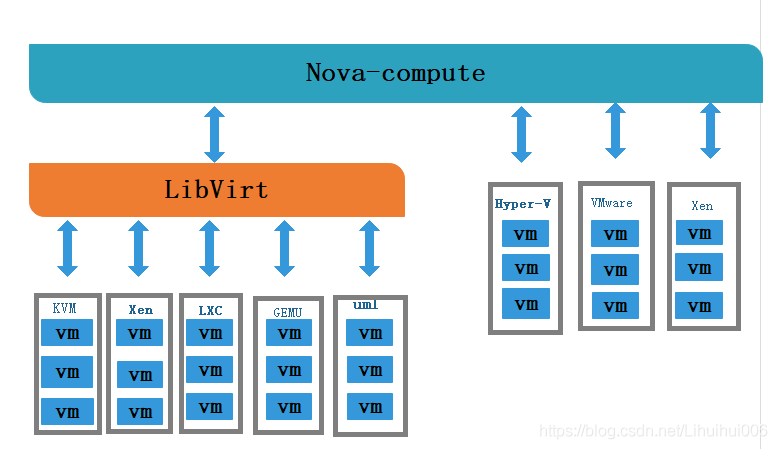

- nova自身并没有提供任何虚拟化能力,它提供计算服务,使用不同的虚拟化驱动来与底层支持的hypervisor(虚拟机管理器)进行交互。所有的计算实例(虚拟服务器)由nova进行生命周期的调度管理(启动,挂起,停止,删除等)

- nova需要keystone,glance,neutron,cinder和swift等其他服务的支持,能与这些服务集成,实现如加密磁盘,裸金属计算实例等

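II. Nova system architecture

1. Roles of the Nova architecture components

- Nova consists of several server processes, each performing a different function.

- DB: the SQL database used for data storage.

- API: the Nova component that receives HTTP requests, converts them into commands, and communicates with other components over the message queue or HTTP.

- scheduler: the Nova scheduler, which decides which compute node hosts a compute instance.

- network: the Nova component that manages IP forwarding, bridges, and VLANs.

- compute: the Nova component that manages communication between the hypervisor and the virtual machines.

- conductor: handles requests that need coordination (building or resizing a VM) and performs object conversion.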

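2. Basic workflow diagrams of the Nova architecture

Figure 1: Nova's internal working mechanism and flow.

Figure 2: How the Nova service coordinates with external components.

Explanation of the diagrams: when an instance is launched, the CPU, memory, disk, and other resources it needs are ultimately drawn from the hardware through the hypervisor. The request reaches the conductor, which preprocesses it into a build request sized for the VM, and the API is also used to request resources from storage. The scheduler then decides which compute node hosts the instance. As Figure 1 shows, there are two paths here: if a host is specified, the request goes straight to that compute node without filtering; if no host is specified, filtering and weighing select the most suitable compute node. Once this is done, Nova turns to external services: it first authenticates with Keystone, then obtains network, image, and storage resources to complete the flow (Figure 2).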

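III. Nova architecture components in detail

1. Nova component: API

- nova-api can respond to any operation related to the life cycle of a virtual machine.

- nova-api processes each HTTP API request it receives as follows:

  - 1. Check that the parameters passed by the client are valid.

  - 2. Call other Nova services to handle the client's HTTP request.

  - 3. Format the results returned by those Nova services and return them to the client.

- nova-api is the only way for external callers to reach the services Nova provides; it is the middle layer between clients and Nova.

- The API is the HTTP interface through which clients access Nova. It is implemented by the nova-api service, which receives and responds to compute API requests from end users. As the main external interface of OpenStack compute, nova-api provides a central endpoint through which all the APIs can be queried. In short, it is the entry point for interaction between the outside of the component and its inside (HTTP, RabbitMQ).

- Every request to Nova is first handled by nova-api. The API is exposed as standard REST calls, which makes integration with third-party systems (cloud products and platforms) easy.

- End users do not usually send RESTful API requests directly. They use these APIs through the OpenStack command line, the Dashboard (web UI), and other components that need to interact with Nova.

- From nova-api, callers can query and locate other APIs, and nova-api can call other core services to complete a request together.

Summary:

nova-api receives and responds to end users' compute API calls. It supports the OpenStack Compute API and can also be called by other cloud platforms.

The nova-api-metadata server accepts metadata requests from instances. It is generally used when running in multi-host mode with the nova-network service.

The nova-compute service is a daemon that manages VM instances through the API of the virtualization layer.

nova-conductor mediates the data exchanged between the nova-compute service and the database, which prevents privilege overreach and improves security.

The nova-network worker daemon is similar to nova-compute, but handles networking tasks.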

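2. Nova component: Scheduler

- The scheduler is implemented by the nova-scheduler service. Its job is to decide on which compute node an instance is started. It can apply a variety of rules, taking into account factors such as memory usage, CPU load, and CPU architecture (Intel/AMD), and uses an algorithm to determine which compute server a VM instance can run on. nova-scheduler receives a VM instance request from the queue, reads the database, and selects the most suitable compute node from the pool of available resources to create the new instance.

- When creating a VM instance, the user states resource requirements such as how much CPU, memory, and disk is needed. OpenStack defines these requirements in flavors (instance types), so the user only needs to specify which flavor to use.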

2.1nova调度器的类型:

- 随机调度器(chance scheduler):从所有正常运行nova-compute服务节点中随机选择

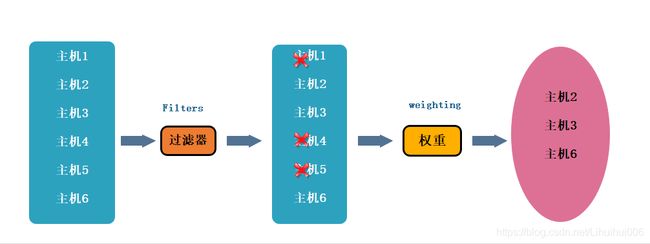

- 过滤器调度器(filter scheduler):根据指定的过滤条件以及权重来选择最佳的计算节点,filter又称为筛选器。

- 缓存调度器(caching scheduler):可看作随机调度器的一种特殊类型,在随机调度的基础上将主机资源信息缓存在本地内存中,然后通过后台的定时任务定时从数据库中获取最新的主机资源信息。

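2.2 The filter scheduler's scheduling process

Scheduling happens in two stages:

- Filtering: the configured filters select the compute nodes that meet the conditions, for example memory usage below 50%. Several filters can be applied one after another.

- Weighing: a weight is computed for each host that passed filtering, the hosts are sorted, and the best compute node is chosen to create the VM instance.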
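The two stages can be illustrated with a minimal sketch. This is not Nova's implementation: the `Host` class, its fields, and the `schedule` function are hypothetical names chosen for illustration, and the single filter mirrors the "memory usage below 50%" example from the text.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Host:
    """Hypothetical view of a compute node as the scheduler might see it."""
    name: str
    free_ram_mb: int
    ram_usage_pct: float


# A filter answers True (keep) or False (drop) for one host.
Filter = Callable[[Host], bool]


def schedule(hosts: List[Host], filters: List[Filter],
             weigh: Callable[[Host], float]) -> Optional[Host]:
    """Stage 1: apply the filters in order. Stage 2: pick the highest weight."""
    candidates = list(hosts)
    for passes in filters:
        candidates = [h for h in candidates if passes(h)]
    if not candidates:
        return None  # no host satisfies every filter
    return max(candidates, key=weigh)


hosts = [
    Host("c1", free_ram_mb=4096, ram_usage_pct=30.0),
    Host("c2", free_ram_mb=8192, ram_usage_pct=70.0),
    Host("c3", free_ram_mb=6144, ram_usage_pct=40.0),
]
chosen = schedule(
    hosts,
    filters=[lambda h: h.ram_usage_pct < 50.0],  # memory usage below 50%
    weigh=lambda h: h.free_ram_mb,               # more free RAM, higher weight
)
print(chosen.name)
```

Here c2 has the most free memory but is dropped in the filtering stage, so the weighing stage only compares c1 and c3.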

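2.3 Filters

- When the filter scheduler performs a scheduling operation, it asks each filter to evaluate a compute node, and the filter returns true or false.

Configuration file: /etc/nova/nova.conf

- The scheduler_available_filters option configures which filters are available. By default, all filters that ship with Nova can be used:

scheduler_available_filters = nova.scheduler.filters.all_filters

- A second option, scheduler_default_filters, specifies the filters that the nova-scheduler service actually uses. Its default value is:

scheduler_default_filters = RetryFilter,AvailabilityZoneFilter,RamFilter,ComputeFilter,ComputeCapabilitiesFilter,ImagePropertiesFilter,ServerGroupAntiAffinityFilter,ServerGroupAffinityFilter

In newer Nova releases these two options are named available_filters and enabled_filters and live in the [filter_scheduler] section of nova.conf.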

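2.4 Filter types

Filter order: the filter scheduler applies the filters in the order in which they appear in the list.

- RetryFilter (retry filter)

Filters out nodes that have already been tried. Suppose A, B, and C all pass filtering and A, with the highest weight, is chosen, but the operation fails on A for some reason. nova-scheduler then runs filtering again, and this time RetryFilter excludes A immediately so that the failure is not repeated.

- AvailabilityZoneFilter (availability zone filter)

To improve fault tolerance and provide isolation, compute nodes can be divided into availability zones. OpenStack has a default availability zone named nova, and all compute nodes start out in it. Users can create their own availability zones as needed. When an instance is created, the availability zone to deploy it in is specified, and during filtering nova-scheduler uses AvailabilityZoneFilter to filter out compute nodes that do not belong to that zone.

- RamFilter (memory filter)

Schedules VM creation by available memory: compute nodes that cannot satisfy the flavor's memory requirement are filtered out. To improve resource utilization, OpenStack allows the memory counted as available on a compute node to exceed the physical memory (overcommit). The degree of overcommit is controlled by the ram_allocation_ratio parameter in nova.conf; the default is 1.5.

vi /etc/nova/nova.conf

ram_allocation_ratio=1.5

- DiskFilter (disk filter)

Schedules VM creation by disk space: compute nodes that cannot satisfy the flavor's disk requirement are filtered out. Disk can also be overcommitted; the ratio is controlled by the disk_allocation_ratio parameter in nova.conf, and the default is 1.0.

vi /etc/nova/nova.conf

disk_allocation_ratio=1.0

- CoreFilter (core filter)

Schedules VM creation by available CPU cores: compute nodes that cannot satisfy the flavor's vCPU requirement are filtered out. vCPUs can also be overcommitted; the ratio is controlled by the cpu_allocation_ratio parameter in nova.conf, and the default is 16.0.

vi /etc/nova/nova.conf

cpu_allocation_ratio=16.0

- ComputeFilter (compute filter)

Ensures that only compute nodes whose nova-compute service is working normally can be scheduled by nova-scheduler. It is a mandatory filter.

- ComputeCapabilitiesFilter (compute capabilities filter)

Filters by the characteristics of the compute node, for example to place an instance on nodes of a particular architecture when x86_64 and ARM nodes coexist.

- ImagePropertiesFilter (image properties filter)

Selects matching compute nodes according to the properties of the chosen image, which are specified through metadata. For example, to make an image run only on the KVM hypervisor, specify this with the hypervisor type property.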
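The allocation ratios described for RamFilter, DiskFilter, and CoreFilter above come down to simple arithmetic. The following is a minimal sketch under that reading, not Nova's filter code: the function names are hypothetical, and the real filters also take reserved resources and other details into account.

```python
def schedulable_capacity(physical: float, allocation_ratio: float) -> float:
    """Capacity the scheduler may hand out once overcommit is applied."""
    return physical * allocation_ratio


def fits(requested: float, used: float, physical: float,
         allocation_ratio: float) -> bool:
    """True if the request still fits under the overcommitted capacity."""
    return used + requested <= schedulable_capacity(physical, allocation_ratio)


# A node with 64 GiB of RAM, 8 physical cores, and 500 GB of disk,
# using the default ratios quoted in the text.
ram_mb = schedulable_capacity(64 * 1024, 1.5)   # ram_allocation_ratio
vcpus = schedulable_capacity(8, 16.0)           # cpu_allocation_ratio
disk_gb = schedulable_capacity(500, 1.0)        # disk_allocation_ratio
print(ram_mb, vcpus, disk_gb)

# With 96000 MB already promised to instances, a 4096 MB flavor no longer fits.
print(fits(4096, 96000, 64 * 1024, 1.5))
```

A ratio of 1.0 means no overcommit at all, which is why disk behaves differently from memory and vCPUs with the default settings.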

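2.5 Weights

- nova-scheduler can apply several filters in sequence; the nodes that remain are then ranked by computed weight to select the node on which the instance is deployed.

Note: all weighers live in the nova/scheduler/weights directory. The default implementation is RAMWeigher, which computes the weight from a node's free memory: the more free memory, the higher the weight, so the instance is deployed to the compute node with the most free memory at that moment.

- The weigher source code is in:

/usr/lib/python2.7/site-packages/nova/scheduler/weights

- The OpenStack source code is in:

/usr/lib/python2.7/site-packages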
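The idea of weighing by free memory can be sketched as follows. This is an illustration rather than the RAMWeigher source: it assumes weights are min-max normalized to the range 0 to 1 and scaled by a multiplier, and the function name `ram_weights` and its parameters are hypothetical.

```python
from typing import Dict


def ram_weights(free_ram_mb: Dict[str, int],
                multiplier: float = 1.0) -> Dict[str, float]:
    """Normalize free RAM to 0..1 across hosts, then scale by the multiplier.

    Expects at least one host; an empty mapping raises ValueError from min().
    """
    lo = min(free_ram_mb.values())
    hi = max(free_ram_mb.values())
    span = hi - lo
    return {
        host: (multiplier * (ram - lo) / span if span else 0.0)
        for host, ram in free_ram_mb.items()
    }


weights = ram_weights({"c1": 2048, "c2": 8192, "c3": 5120})
best = max(weights, key=weights.get)
print(weights, best)
```

With a positive multiplier the node with the most free memory wins, which spreads instances across nodes. Under the same assumption, a negative multiplier would favor the fullest node and pack instances together instead.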

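3. Compute component

- nova-compute runs on compute nodes and manages the instances on its node. Usually one host runs one nova-compute service, and which available host an instance is deployed on depends on the scheduling algorithm. Every OpenStack operation on an instance is ultimately handed to nova-compute.

- nova-compute's functions fall into two categories: periodically reporting the compute node's status to OpenStack, and managing the instance life cycle.

- It supports multiple hypervisors through a driver architecture.

- Periodically reporting the compute node's status to OpenStack

  - At regular intervals, nova-compute reports the current resource usage of the compute node and the status of the nova-compute service.

  - nova-compute obtains this information through the hypervisor driver.

- Managing the life cycle of VM instances

  - The main OpenStack operations on VM instances are all carried out by nova-compute: create, shut down, reboot, suspend, resume, terminate, resize, migrate, and snapshot.

  - Taking instance creation as an example, nova-compute proceeds as follows:

(1) Prepare resources for the instance.

(2) Create the instance's image file.

(3) Create the instance's XML definition file.

(4) Create the virtual network and start the VM.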

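4. Summary of the three components

4.1 What the three components do

- nova-api: controls the instance life cycle

- nova-scheduler: decides which compute node an instance is created on

- nova-compute: runs on the compute node and carries out the actual creation of the instance

4.2 Scheduler flow and sub-functions

- Main function: decide which compute node an instance is created on.

- Scheduling flow: several schedulers/filters cooperate, filtering in sequence, and the placement of the instance is finally decided by scoring/weighting.

- Scheduler sub-functions:

  - The scheduler screens compute nodes with different rules.

  - Multiple filters, each responsible only for its own rule.

  - Coarse filtering (basic resources), such as CPU, memory, and disk.

  - Fine-grained filtering: image properties, service performance/fit, and so on.

  - Affinity/anti-affinity filtering (advanced filtering).

The point of this fine-grained filtering is to pick the compute node that is, in theory, the most suitable for creating the instance. After the filter rules above have been applied, random scheduling (or memory-based weighing) can choose among the remaining nodes. A further re-filtering step, comparable to a "taint" mechanism, is then applied to the compute nodes that remain and excludes nodes that have failed before.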

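5. Conductor component

- Implemented by the nova-conductor module, it is designed to add a layer of safety to database access. nova-conductor acts as the intermediary between the nova-compute service and the database, so that nova-compute does not access the database directly.

- All of nova-compute's database operations go through nova-conductor, which acts as a proxy for database operations. nova-conductor is deployed on the control node.

- nova-conductor helps improve database access performance; nova-compute can create multiple threads that call nova-conductor via remote procedure call (RPC).

- In a large OpenStack deployment, administrators can add nova-conductor instances to cope with the growing compute workload.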

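6. Placement API component

- Previously, resource management was handled entirely by compute nodes, and resource usage was tallied simply by adding up the resources of all compute nodes. But a system also contains external resources supplied by external systems, such as storage provided by Ceph or NFS. Faced with such a variety of resource providers, administrators need a single, simple management interface for tracking resource usage in the system: the Placement API.

- The Placement API is implemented by the nova-placement-api service and is designed to track the inventories and resource usage of resource providers.

- Consumed resource types are tracked by class, for example compute node, shared storage pool, and IP address classes.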
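A small data model makes the idea of resource providers, inventories, and usage by class more concrete. This is a sketch, not the Placement service's schema: the class and function names are hypothetical, the provider names are made up, and the capacity formula (total minus reserved, times the allocation ratio) is stated here as my understanding of how Placement computes capacity.

```python
from dataclasses import dataclass, field
from typing import Dict, Iterable


@dataclass
class Inventory:
    """Amount of one resource class offered by one provider."""
    total: int
    reserved: int = 0
    allocation_ratio: float = 1.0
    used: int = 0

    @property
    def capacity(self) -> float:
        return (self.total - self.reserved) * self.allocation_ratio


@dataclass
class ResourceProvider:
    name: str
    inventories: Dict[str, Inventory] = field(default_factory=dict)


def total_free(providers: Iterable[ResourceProvider], resource_class: str) -> float:
    """Sum the unused capacity of one resource class over all providers."""
    return sum(
        p.inventories[resource_class].capacity - p.inventories[resource_class].used
        for p in providers
        if resource_class in p.inventories
    )


providers = [
    ResourceProvider("c1", {
        "VCPU": Inventory(total=8, allocation_ratio=16.0, used=10),
        "MEMORY_MB": Inventory(total=16384, reserved=512, allocation_ratio=1.5),
    }),
    # An external provider such as a shared storage pool.
    ResourceProvider("ceph-pool", {"DISK_GB": Inventory(total=1000, used=200)}),
]
print(total_free(providers, "VCPU"), total_free(providers, "DISK_GB"))
```

Compute nodes and external storage are treated uniformly, which is the single management interface the text describes.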

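7. Console interfaces

Users can access a VM's console in several ways:

- nova-novncproxy daemon: provides a proxy for accessing running instances over a VNC connection; supports the browser-based noVNC client.

- nova-spicehtml5proxy daemon: provides a proxy for accessing running instances over a SPICE connection; supports HTML5 browser-based clients.

- nova-xvpvncproxy daemon: provides a proxy for accessing running instances over a VNC connection; supports the OpenStack-specific Java client.

- nova-consoleauth daemon: provides token authentication for users accessing VM consoles. This service must be used together with the console proxies.

In summary: the noVNC client reaches a running instance over VNC through the nova-novncproxy daemon; the HTML5 client uses SPICE through nova-spicehtml5proxy; and the Java client uses VNC through nova-xvpvncproxy.

8. VM instantiation flow

- A user (an OpenStack end user or another program) runs the command that Nova Client provides for creating a VM.

- The nova-api service receives the HTTP request from Nova Client, converts it into an AMQP message, and puts it on the message queue.

- The nova-conductor service is invoked through the message queue.

- After receiving the instantiation request from the message queue, nova-conductor does some preparatory work.

- nova-conductor tells nova-scheduler, via the message queue, to select a suitable compute node for the VM; nova-scheduler reads the database at this point.

- Once nova-conductor has obtained the information about the suitable compute node from nova-scheduler, it notifies the nova-compute service via the message queue to create the VM.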
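The message flow of VM instantiation (API, then conductor, then scheduler, back to conductor, then compute) can be mimicked with an in-process queue. This is a toy model only: a `queue.Queue` stands in for the AMQP message queue, the function name and message layout are hypothetical, and the scheduler step simply picks the host with the most free memory.

```python
import queue
from typing import Dict, List, Tuple


def run_boot_flow(request: Dict, free_ram_mb: Dict[str, int]) -> Tuple[str, List[str]]:
    """Walk one boot request through the services; return (host, trace)."""
    bus = queue.Queue()  # stands in for the message queue
    trace: List[str] = []

    # nova-api: accept the HTTP request and enqueue a message for the conductor.
    trace.append("api")
    bus.put(("conductor", dict(request)))

    chosen = ""
    while not bus.empty():
        target, msg = bus.get()
        trace.append(target)
        if target == "conductor" and "host" not in msg:
            # conductor: prepare the request, then ask the scheduler for a host.
            bus.put(("scheduler", msg))
        elif target == "scheduler":
            # scheduler: read host state and reply to the conductor.
            host = max(free_ram_mb, key=free_ram_mb.get)
            bus.put(("conductor", {**msg, "host": host}))
        elif target == "conductor":
            # conductor: the host is known, so notify nova-compute on it.
            bus.put(("compute", msg))
        elif target == "compute":
            # compute: this is where the VM would actually be created.
            chosen = msg["host"]
    return chosen, trace


host, trace = run_boot_flow({"name": "vm1", "ram_mb": 512},
                            {"c1": 2048, "c2": 4096})
print(host, trace)
```

The trace makes it visible that the conductor is visited twice: once before and once after the scheduler has chosen a node.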

9. Nova deployment modes

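IV. Nova's Cell architecture

1. What is a cell, and why do cells exist?

- As an OpenStack Nova cluster grows, all nova-compute nodes connect to the same MQ. With a large number of periodic tasks reporting to nova-conductor through the MQ, the database and message queue services become bottlenecks. To improve horizontal scalability and support distributed, large-scale deployment without adding complexity to the database and message middleware, Nova introduced the concept of cells.

This is an extension topic and is not covered in detail here.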

V. Deploying the Nova components

1. Deploying the openstack-placement component

(1) Create the placement database and grant privileges to its login user

[root@ct ~]# mysql -uroot -p

Enter password:

Welcome to the MariaDB monitor. Commands end with ; or \g.

Your MariaDB connection id is 8

Server version: 10.3.20-MariaDB MariaDB Server

Copyright (c) 2000, 2018, Oracle, MariaDB Corporation Ab and others.

Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

MariaDB [(none)]> CREATE DATABASE placement; -- create the placement database, which stores the data the service produces

Query OK, 1 row affected (0.001 sec)

MariaDB [(none)]> GRANT ALL PRIVILEGES ON placement.* TO 'placement'@'localhost' IDENTIFIED BY 'PLACEMENT_DBPASS'; -- allow the placement user to log in locally

Query OK, 0 rows affected (0.001 sec)

MariaDB [(none)]> GRANT ALL PRIVILEGES ON placement.* TO 'placement'@'%' IDENTIFIED BY 'PLACEMENT_DBPASS'; -- allow the placement user to log in from any host

Query OK, 0 rows affected (0.000 sec)

MariaDB [(none)]> flush privileges; -- reload the privilege tables

Query OK, 0 rows affected (0.001 sec)

MariaDB [(none)]> exit

Bye

(2) Create the placement service and its API endpoints

##### Create the placement user #####

[root@ct ~]# openstack user create --domain default --password PLACEMENT_PASS placement

+---------------------+----------------------------------+

| Field | Value |

+---------------------+----------------------------------+

| domain_id | default |

| enabled | True |

| id | b316e7bae25c4e69843a43b0ad984c9e |

| name | placement |

| options | {} |

| password_expires_at | None |

+---------------------+----------------------------------+

####### Give the placement user the admin role on the service project #######

[root@ct ~]# openstack role add --project service --user placement admin

#### Create a placement service with service type placement ####

[root@ct ~]# openstack service create --name placement --description "Placement API" placement

+-------------+----------------------------------+

| Field | Value |

+-------------+----------------------------------+

| description | Placement API |

| enabled | True |

| id | ca0ac386ed944093aa8a5b0030f8b709 |

| name | placement |

| type | placement |

+-------------+----------------------------------+

########## Register the API endpoints for the placement service; the registration is stored in MySQL ##########

[root@ct ~]# openstack endpoint create --region RegionOne placement public http://ct:8778

+--------------+----------------------------------+

| Field | Value |

+--------------+----------------------------------+

| enabled | True |

| id | 67144351a2964d019657215614d33902 |

| interface | public |

| region | RegionOne |

| region_id | RegionOne |

| service_id | ca0ac386ed944093aa8a5b0030f8b709 |

| service_name | placement |

| service_type | placement |

| url | http://ct:8778 |

+--------------+----------------------------------+

[root@ct ~]# openstack endpoint create --region RegionOne placement internal http://ct:8778

+--------------+----------------------------------+

| Field | Value |

+--------------+----------------------------------+

| enabled | True |

| id | e5952252ee5147f7aeceaaf53a3ec22f |

| interface | internal |

| region | RegionOne |

| region_id | RegionOne |

| service_id | ca0ac386ed944093aa8a5b0030f8b709 |

| service_name | placement |

| service_type | placement |

| url | http://ct:8778 |

+--------------+----------------------------------+

[root@ct ~]# openstack endpoint create --region RegionOne placement admin http://ct:8778

+--------------+----------------------------------+

| Field | Value |

+--------------+----------------------------------+

| enabled | True |

| id | affba36bd91e46d8a6695e631ca58078 |

| interface | admin |

| region | RegionOne |

| region_id | RegionOne |

| service_id | ca0ac386ed944093aa8a5b0030f8b709 |

| service_name | placement |

| service_type | placement |

| url | http://ct:8778 |

+--------------+----------------------------------+

###### Install the placement service ######

[root@ct ~]# yum -y install openstack-placement-api

######## Edit the placement configuration file ########

[root@ct placement]# cp -a /etc/placement/placement.conf{,.bak}

[root@ct placement]# grep -Ev '^$|#' /etc/placement/placement.conf.bak > /etc/placement/placement.conf

[root@ct placement]# openstack-config --set /etc/placement/placement.conf placement_database connection mysql+pymysql://placement:PLACEMENT_DBPASS@ct/placement


[root@ct placement]# openstack-config --set /etc/placement/placement.conf api auth_strategy keystone

[root@ct placement]# openstack-config --set /etc/placement/placement.conf keystone_authtoken auth_url http://ct:5000/v3

[root@ct placement]# openstack-config --set /etc/placement/placement.conf keystone_authtoken memcached_servers ct:11211

[root@ct placement]# openstack-config --set /etc/placement/placement.conf keystone_authtoken auth_type password

[root@ct placement]# openstack-config --set /etc/placement/placement.conf keystone_authtoken project_domain_name Default

[root@ct placement]# openstack-config --set /etc/placement/placement.conf keystone_authtoken user_domain_name Default

[root@ct placement]# openstack-config --set /etc/placement/placement.conf keystone_authtoken project_name service

[root@ct placement]# openstack-config --set /etc/placement/placement.conf keystone_authtoken username placement

[root@ct placement]# openstack-config --set /etc/placement/placement.conf keystone_authtoken password PLACEMENT_PASS

######### View the placement configuration file #########

[root@ct placement]# cat placement.conf

[placement_database]

connection = mysql+pymysql://placement:PLACEMENT_DBPASS@ct/placement

[api]

auth_strategy = keystone

[keystone_authtoken]

auth_url = http://ct:5000/v3 # Keystone address

memcached_servers = ct:11211 # session data is cached in memcached

auth_type = password

project_domain_name = Default

user_domain_name = Default

project_name = service

username = placement

password = PLACEMENT_PASS
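Each `openstack-config --set FILE SECTION OPTION VALUE` call above sets one option in one section of an INI file. For readers who want to see the effect without touching a real file, here is a rough Python equivalent built on the standard library's configparser. It is a sketch, not what the tool does internally: `set_options` is a hypothetical helper that works on text in memory, and the `noauth2` starting value is only there to show an option being overwritten.

```python
import configparser
import io
from typing import Iterable, Tuple


def set_options(conf_text: str, updates: Iterable[Tuple[str, str, str]]) -> str:
    """Apply (section, option, value) updates to INI text and return the result."""
    parser = configparser.ConfigParser(interpolation=None)
    parser.optionxform = str  # keep option names exactly as written
    parser.read_string(conf_text)
    for section, option, value in updates:
        # configparser treats DEFAULT specially: it always exists implicitly.
        if section != parser.default_section and not parser.has_section(section):
            parser.add_section(section)
        parser.set(section, option, value)
    out = io.StringIO()
    parser.write(out)
    return out.getvalue()


updated = set_options(
    "[api]\nauth_strategy = noauth2\n",
    [
        ("api", "auth_strategy", "keystone"),
        ("keystone_authtoken", "auth_url", "http://ct:5000/v3"),
        ("keystone_authtoken", "username", "placement"),
    ],
)
print(updated)
```

Interpolation is disabled so that values such as `$my_ip` or strings containing `%` are stored literally.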

###### Populate the database ######

[root@ct placement]# su -s /bin/sh -c "placement-manage db sync" placement

/usr/lib/python2.7/site-packages/pymysql/cursors.py:170: Warning: (1280, u"Name 'alembic_version_pkc' ignored for PRIMARY key.")

result = self._query(query)

###### Edit the Apache configuration file 00-placement-api.conf (a virtual host configuration created automatically when the placement service is installed) ######

[root@ct ~]# cd /etc/httpd

[root@ct httpd]# ls

conf conf.d conf.modules.d logs modules run

[root@ct httpd]# cd conf.d

[root@ct conf.d]# ls

00-placement-api.conf autoindex.conf README userdir.conf welcome.conf wsgi-keystone.conf

[root@ct conf.d]# vi 00-placement-api.conf

[root@ct conf.d]# cat 00-placement-api.conf

Listen 8778

<VirtualHost *:8778>

  WSGIProcessGroup placement-api

  WSGIApplicationGroup %{GLOBAL}

  WSGIPassAuthorization On

  WSGIDaemonProcess placement-api processes=3 threads=1 user=placement group=placement

  WSGIScriptAlias / /usr/bin/placement-api

  <IfVersion >= 2.4>

    ErrorLogFormat "%M"

  </IfVersion>

  ErrorLog /var/log/placement/placement-api.log

  #SSLEngine On

  #SSLCertificateFile ...

  #SSLCertificateKeyFile ...

</VirtualHost>

Alias /placement-api /usr/bin/placement-api

<Location /placement-api>

  SetHandler wsgi-script

  Options +ExecCGI

  WSGIProcessGroup placement-api

  WSGIApplicationGroup %{GLOBAL}

  WSGIPassAuthorization On

</Location>

# Workaround for a packaging bug: the block below must be added (at the end of the file)

# to allow access to the placement API; otherwise requests through Apache return 403.

<Directory /usr/bin>

  <IfVersion >= 2.4>

    Require all granted

  </IfVersion>

  # For older Apache versions: allow access to /usr/bin, otherwise /usr/bin/placement-api cannot be served.

  <IfVersion < 2.4>

    Order allow,deny

    Allow from all

  </IfVersion>

</Directory>

####### Restart Apache ######

[root@ct conf.d]# systemctl restart httpd

##### Test with curl #######

[root@ct conf.d]# curl ct:8778

{"versions": [{"status": "CURRENT", "min_version": "1.0", "max_version": "1.36", "id": "v1.0", "links": [{"href": "", "rel": "self"}]}]}

[root@ct conf.d]# netstat -natp | grep 8778

tcp 0 0 192.168.100.70:33898 192.168.100.70:8778 TIME_WAIT -

tcp6 0 0 :::8778 :::* LISTEN 18226/httpd
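The JSON returned by the curl test lists the API version range the service supports. The following sketch checks whether a given microversion falls inside that range; the function name is hypothetical and the sample document is the response shown above. Versions are compared as integer tuples, because a plain string or float comparison would wrongly treat 1.4 as greater than 1.36.

```python
import json


def supports_microversion(version_doc: str, wanted: str) -> bool:
    """True if `wanted` lies within any advertised min_version..max_version."""
    def as_tuple(version: str):
        return tuple(int(part) for part in version.split("."))

    for entry in json.loads(version_doc)["versions"]:
        low = as_tuple(entry["min_version"])
        high = as_tuple(entry["max_version"])
        if low <= as_tuple(wanted) <= high:
            return True
    return False


sample = (
    '{"versions": [{"status": "CURRENT", "min_version": "1.0", '
    '"max_version": "1.36", "id": "v1.0", '
    '"links": [{"href": "", "rel": "self"}]}]}'
)
print(supports_microversion(sample, "1.30"))
print(supports_microversion(sample, "1.40"))
```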

###### Finally, check the placement status #######

[root@ct conf.d]# placement-status upgrade check

+----------------------------------+

| Upgrade Check Results |

+----------------------------------+

| Check: Missing Root Provider IDs |

| Result: Success |

| Details: None |

+----------------------------------+

| Check: Incomplete Consumers |

| Result: Success |

| Details: None |

+----------------------------------+

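Summary:

Placement ships the placement-api WSGI script, which runs the service behind Apache, nginx, or any other WSGI-capable web server (nginx or Apache acts as the front end for the Python entry point).

Depending on the packaging solution used to deploy OpenStack, the WSGI script may be in /usr/bin or in /usr/local/bin.

Placement was split out of Nova as a separate component starting with the S (Stein) release. It collects the available resources of each node, records the per-node resource statistics in MySQL, and is called by the nova-scheduler service. It listens on port 8778.

Two configuration files need to be changed:

① placement.conf

Main changes: Keystone authentication (URL, HOST:PORT, domain, user name, password, and so on) and the database connection (location).

② 00-placement-api.conf

Main changes: Apache permissions and access control.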

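2. Deploying the nova component

Node layout:

The control node ct runs the following services:

nova-api (the main Nova service),

nova-scheduler (the Nova scheduling service),

nova-conductor (the Nova database service, which provides database access),

nova-novncproxy (Nova's VNC service, which provides instance consoles).

The compute node runs nova-compute (the Nova compute service).

(1) Configuration on the control node ct

###### Create the nova databases and grant privileges #######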

[root@ct ~]# mysql -uroot -p

Enter password:

Welcome to the MariaDB monitor. Commands end with ; or \g.

Your MariaDB connection id is 8

Server version: 10.3.20-MariaDB MariaDB Server

Copyright (c) 2000, 2018, Oracle, MariaDB Corporation Ab and others.

Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

MariaDB [(none)]> CREATE DATABASE nova_api;

Query OK, 1 row affected (0.000 sec)

MariaDB [(none)]> CREATE DATABASE nova;

Query OK, 1 row affected (0.001 sec)

MariaDB [(none)]> CREATE DATABASE nova_cell0;

Query OK, 1 row affected (0.000 sec)

MariaDB [(none)]> GRANT ALL PRIVILEGES ON nova_api.* TO 'nova'@'localhost' IDENTIFIED BY 'NOVA_DBPASS';

Query OK, 0 rows affected (0.001 sec)

MariaDB [(none)]> GRANT ALL PRIVILEGES ON nova_api.* TO 'nova'@'%' IDENTIFIED BY 'NOVA_DBPASS';

Query OK, 0 rows affected (0.002 sec)

MariaDB [(none)]> GRANT ALL PRIVILEGES ON nova.* TO 'nova'@'localhost' IDENTIFIED BY 'NOVA_DBPASS';

Query OK, 0 rows affected (0.000 sec)

MariaDB [(none)]> GRANT ALL PRIVILEGES ON nova.* TO 'nova'@'%' IDENTIFIED BY 'NOVA_DBPASS';

Query OK, 0 rows affected (0.001 sec)

MariaDB [(none)]> GRANT ALL PRIVILEGES ON nova_cell0.* TO 'nova'@'localhost' IDENTIFIED BY 'NOVA_DBPASS';

Query OK, 0 rows affected (0.000 sec)

MariaDB [(none)]> GRANT ALL PRIVILEGES ON nova_cell0.* TO 'nova'@'%' IDENTIFIED BY 'NOVA_DBPASS';

Query OK, 0 rows affected (0.000 sec)

MariaDB [(none)]> flush privileges;

Query OK, 0 rows affected (0.001 sec)

MariaDB [(none)]> exit

Bye
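The nine statements above follow one pattern: create each database, then grant the nova user access from localhost and from any host. A short sketch can generate them so the pattern is explicit. The helper name is hypothetical, the output is meant to be reviewed and pasted into the MariaDB client, and the function does no escaping, so it should only be fed trusted names and passwords.

```python
from typing import List, Sequence


def grant_statements(databases: Sequence[str], user: str, password: str,
                     hosts: Sequence[str] = ("localhost", "%")) -> List[str]:
    """Build CREATE DATABASE and GRANT statements for each database."""
    statements = [f"CREATE DATABASE {db};" for db in databases]
    for db in databases:
        for host in hosts:
            statements.append(
                f"GRANT ALL PRIVILEGES ON {db}.* TO '{user}'@'{host}' "
                f"IDENTIFIED BY '{password}';"
            )
    return statements


stmts = grant_statements(["nova_api", "nova", "nova_cell0"], "nova", "NOVA_DBPASS")
for line in stmts:
    print(line)
```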

########## Manage the nova user and services #######

[root@ct ~]# openstack user create --domain default --password NOVA_PASS nova # create the nova user

+---------------------+----------------------------------+

| Field | Value |

+---------------------+----------------------------------+

| domain_id | default |

| enabled | True |

| id | 2e44121d0d68429e91918d1dac6d1346 |

| name | nova |

| options | {} |

| password_expires_at | None |

+---------------------+----------------------------------+

Add the nova user to the service project with the admin role

[root@ct ~]# openstack role add --project service --user nova admin

Create the nova service

[root@ct ~]# openstack service create --name nova --description "OpenStack Compute" compute

+-------------+----------------------------------+

| Field | Value |

+-------------+----------------------------------+

| description | OpenStack Compute |

| enabled | True |

| id | 86a82c6058c44435b4a91705e9608f2b |

| name | nova |

| type | compute |

+-------------+----------------------------------+

Associate endpoints with the nova service

[root@ct ~]# openstack endpoint create --region RegionOne compute public http://ct:8774/v2.1

+--------------+----------------------------------+

| Field | Value |

+--------------+----------------------------------+

| enabled | True |

| id | 96ef2fb7639d4959be4a4920cc143b1d |

| interface | public |

| region | RegionOne |

| region_id | RegionOne |

| service_id | 86a82c6058c44435b4a91705e9608f2b |

| service_name | nova |

| service_type | compute |

| url | http://ct:8774/v2.1 |

+--------------+----------------------------------+

[root@ct ~]# openstack endpoint create --region RegionOne compute internal http://ct:8774/v2.1

+--------------+----------------------------------+

| Field | Value |

+--------------+----------------------------------+

| enabled | True |

| id | d5431cf4c23a41a9ad894aa93a809f0a |

| interface | internal |

| region | RegionOne |

| region_id | RegionOne |

| service_id | 86a82c6058c44435b4a91705e9608f2b |

| service_name | nova |

| service_type | compute |

| url | http://ct:8774/v2.1 |

+--------------+----------------------------------+

[root@ct ~]# openstack endpoint create --region RegionOne compute admin http://ct:8774/v2.1

+--------------+----------------------------------+

| Field | Value |

+--------------+----------------------------------+

| enabled | True |

| id | a7b7f30062b84f23af2b26caa870c3b4 |

| interface | admin |

| region | RegionOne |

| region_id | RegionOne |

| service_id | 86a82c6058c44435b4a91705e9608f2b |

| service_name | nova |

| service_type | compute |

| url | http://ct:8774/v2.1 |

+--------------+----------------------------------+
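Endpoint URLs are typed by hand three times per service, and a slip such as a missing slash between the port and the path (for example http://ct:8774v2.1) is accepted by the command without complaint. A small pre-check can catch that kind of mistake before registering an endpoint. This is a sketch with a hypothetical function name; it relies on the standard library raising ValueError for a non-numeric port, which is the behavior of current Python 3 versions.

```python
from typing import Optional
from urllib.parse import urlsplit


def endpoint_problem(url: str) -> Optional[str]:
    """Return a description of what is wrong with the URL, or None if it looks fine."""
    try:
        parts = urlsplit(url)
        port = parts.port  # raises ValueError if the port is not a number
    except ValueError as exc:
        return f"invalid URL: {exc}"
    if parts.scheme not in ("http", "https"):
        return "scheme must be http or https"
    if not parts.hostname:
        return "missing host"
    if port is None:
        return "missing port"
    return None


for candidate in ("http://ct:8774/v2.1", "http://ct:8774v2.1"):
    print(candidate, "->", endpoint_problem(candidate))
```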

Install the nova components (nova-api, nova-conductor, nova-novncproxy, nova-scheduler)

[root@ct ~]# yum -y install openstack-nova-api openstack-nova-conductor openstack-nova-novncproxy openstack-nova-scheduler

Edit the Nova configuration file (nova.conf)

[root@ct ~]# cp -a /etc/nova/nova.conf{,.bak}

[root@ct ~]# grep -Ev '^$|#' /etc/nova/nova.conf.bak > /etc/nova/nova.conf

# Edit nova.conf

[root@ct ~]# openstack-config --set /etc/nova/nova.conf DEFAULT enabled_apis osapi_compute,metadata

[root@ct ~]# openstack-config --set /etc/nova/nova.conf DEFAULT my_ip 192.168.100.70 #### set this to the internal IP of ct

[root@ct ~]# openstack-config --set /etc/nova/nova.conf DEFAULT use_neutron true

[root@ct ~]# openstack-config --set /etc/nova/nova.conf DEFAULT firewall_driver nova.virt.firewall.NoopFirewallDriver

[root@ct ~]# openstack-config --set /etc/nova/nova.conf DEFAULT transport_url rabbit://openstack:RABBIT_PASS@ct

[root@ct ~]# openstack-config --set /etc/nova/nova.conf api_database connection mysql+pymysql://nova:NOVA_DBPASS@ct/nova_api

[root@ct ~]# openstack-config --set /etc/nova/nova.conf database connection mysql+pymysql://nova:NOVA_DBPASS@ct/nova

[root@ct ~]# openstack-config --set /etc/nova/nova.conf placement_database connection mysql+pymysql://placement:PLACEMENT_DBPASS@ct/placement

[root@ct ~]# openstack-config --set /etc/nova/nova.conf api auth_strategy keystone

[root@ct ~]# openstack-config --set /etc/nova/nova.conf keystone_authtoken auth_url http://ct:5000/v3

[root@ct ~]# openstack-config --set /etc/nova/nova.conf keystone_authtoken memcached_servers ct:11211

[root@ct ~]# openstack-config --set /etc/nova/nova.conf keystone_authtoken auth_type password

[root@ct ~]# openstack-config --set /etc/nova/nova.conf keystone_authtoken project_domain_name Default

[root@ct ~]# openstack-config --set /etc/nova/nova.conf keystone_authtoken user_domain_name Default

[root@ct ~]# openstack-config --set /etc/nova/nova.conf keystone_authtoken project_name service

[root@ct ~]# openstack-config --set /etc/nova/nova.conf keystone_authtoken username nova

[root@ct ~]# openstack-config --set /etc/nova/nova.conf keystone_authtoken password NOVA_PASS

[root@ct ~]# openstack-config --set /etc/nova/nova.conf vnc enabled true

[root@ct ~]# openstack-config --set /etc/nova/nova.conf vnc server_listen '$my_ip'

[root@ct ~]# openstack-config --set /etc/nova/nova.conf vnc server_proxyclient_address '$my_ip'

[root@ct ~]# openstack-config --set /etc/nova/nova.conf glance api_servers http://ct:9292

[root@ct ~]# openstack-config --set /etc/nova/nova.conf oslo_concurrency lock_path /var/lib/nova/tmp

[root@ct ~]# openstack-config --set /etc/nova/nova.conf placement region_name RegionOne

[root@ct ~]# openstack-config --set /etc/nova/nova.conf placement project_domain_name Default

[root@ct ~]# openstack-config --set /etc/nova/nova.conf placement project_name service

[root@ct ~]# openstack-config --set /etc/nova/nova.conf placement auth_type password

[root@ct ~]# openstack-config --set /etc/nova/nova.conf placement user_domain_name Default

[root@ct ~]# openstack-config --set /etc/nova/nova.conf placement auth_url http://ct:5000/v3

[root@ct ~]# openstack-config --set /etc/nova/nova.conf placement username placement

[root@ct ~]# openstack-config --set /etc/nova/nova.conf placement password PLACEMENT_PASS

View nova.conf

[root@ct ~]# cat /etc/nova/nova.conf

[DEFAULT]

enabled_apis = osapi_compute,metadata # API types to enable

my_ip = 192.168.100.70 # local IP

use_neutron = true # obtain IP addresses through Neutron

firewall_driver = nova.virt.firewall.NoopFirewallDriver

transport_url = rabbit://openstack:RABBIT_PASS@ct # RabbitMQ connection

[api]

auth_strategy = keystone # use Keystone authentication

[api_database]

connection = mysql+pymysql://nova:NOVA_DBPASS@ct/nova_api

[barbican]

[cache]

[cinder]

[compute]

[conductor]

[console]

[consoleauth]

[cors]

[database]

connection = mysql+pymysql://nova:NOVA_DBPASS@ct/nova

[devices]

[ephemeral_storage_encryption]

[filter_scheduler]

[glance]

api_servers = http://ct:9292

[guestfs]

[healthcheck]

[hyperv]

[ironic]

[key_manager]

[keystone]

[keystone_authtoken] # Keystone authentication settings

auth_url = http://ct:5000/v3 # URL to authenticate against

memcached_servers = ct:11211 # memcached address:port

auth_type = password

project_domain_name = Default

user_domain_name = Default

project_name = service

username = nova

password = NOVA_PASS

[libvirt]

[metrics]

[mks]

[neutron]

[notifications]

[osapi_v21]

[oslo_concurrency] # lock path

lock_path = /var/lib/nova/tmp # the lock serializes steps during VM creation: an operation must finish before the next one starts, so steps run one at a time rather than in parallel

[oslo_messaging_amqp]

[oslo_messaging_kafka]

[oslo_messaging_notifications]

[oslo_messaging_rabbit]

[oslo_middleware]

[oslo_policy]

[pci]

[placement]

region_name = RegionOne

project_domain_name = Default

project_name = service

auth_type = password

user_domain_name = Default

auth_url = http://ct:5000/v3

username = placement

password = PLACEMENT_PASS

[powervm]

[privsep]

[profiler]

[quota]

[rdp]

[remote_debug]

[scheduler]

[serial_console]

[service_user]

[spice]

[upgrade_levels]

[vault]

[vendordata_dynamic_auth]

[vmware]

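[vnc] # if this section is misconfigured, VM consoles cannot be reached

enabled = true

server_listen = $my_ip # address VNC listens on

server_proxyclient_address = $my_ip # address the proxy client uses to reach the server; here the local address on the management network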

[workarounds]

[wsgi]

[xenserver]

[xvp]

[zvm]

[placement_database]

connection = mysql+pymysql://placement:PLACEMENT_DBPASS@ct/placement

Initialize the databases

[root@ct ~]# su -s /bin/sh -c "nova-manage api_db sync" nova # initialize the nova_api database

Register the cell0 database. Nova divides its resources, including compute nodes, into cells; OpenStack uses cells to group compute nodes logically.

[root@ct ~]# su -s /bin/sh -c "nova-manage cell_v2 map_cell0" nova

Create the cell1 cell

[root@ct ~]# su -s /bin/sh -c "nova-manage cell_v2 create_cell --name=cell1 --verbose" nova

824eb30e-c553-4f13-bf13-729527fb0256

Initialize the nova database; check /var/log/nova/nova-manage.log to confirm that it succeeded

[root@ct ~]# su -s /bin/sh -c "nova-manage db sync" nova

/usr/lib/python2.7/site-packages/pymysql/cursors.py:170: Warning: (1831, u'Duplicate index `block_device_mapping_instance_uuid_virtual_name_device_name_idx`. This is deprecated and will be disallowed in a future release')

result = self._query(query)

/usr/lib/python2.7/site-packages/pymysql/cursors.py:170: Warning: (1831, u'Duplicate index `uniq_instances0uuid`. This is deprecated and will be disallowed in a future release')

result = self._query(query)

Use the following command to verify that cell0 and cell1 were registered successfully

[root@ct ~]# su -s /bin/sh -c "nova-manage cell_v2 list_cells" nova

+-------+--------------------------------------+----------------------------+-----------------------------------------+----------+

| Name | UUID | Transport URL | Database Connection | Disabled |

+-------+--------------------------------------+----------------------------+-----------------------------------------+----------+

| cell0 | 00000000-0000-0000-0000-000000000000 | none:/ | mysql+pymysql://nova:****@ct/nova_cell0 | False |

| cell1 | 824eb30e-c553-4f13-bf13-729527fb0256 | rabbit://openstack:****@ct | mysql+pymysql://nova:****@ct/nova | False |

+-------+--------------------------------------+----------------------------+-----------------------------------------+----------+

Start the nova services

[root@ct ~]# systemctl enable openstack-nova-api.service openstack-nova-scheduler.service openstack-nova-conductor.service openstack-nova-novncproxy.service

Created symlink from /etc/systemd/system/multi-user.target.wants/openstack-nova-api.service to /usr/lib/systemd/system/openstack-nova-api.service.

Created symlink from /etc/systemd/system/multi-user.target.wants/openstack-nova-scheduler.service to /usr/lib/systemd/system/openstack-nova-scheduler.service.

Created symlink from /etc/systemd/system/multi-user.target.wants/openstack-nova-conductor.service to /usr/lib/systemd/system/openstack-nova-conductor.service.

Created symlink from /etc/systemd/system/multi-user.target.wants/openstack-nova-novncproxy.service to /usr/lib/systemd/system/openstack-nova-novncproxy.service.

[root@ct ~]# systemctl start openstack-nova-api.service openstack-nova-scheduler.service openstack-nova-conductor.service openstack-nova-novncproxy.service

Check the nova service ports

[root@ct ~]# netstat -tnlup|egrep '8774|8775'

tcp 0 0 0.0.0.0:8775 0.0.0.0:* LISTEN 18282/python2

tcp 0 0 0.0.0.0:8774 0.0.0.0:* LISTEN 18282/python2

[root@ct ~]# curl http://ct:8774

{"versions": [{"status": "SUPPORTED", "updated": "2011-01-21T11:33:21Z", "links": [{"href": "http://ct:8774/v2/", "rel": "self"}], "min_version": "", "version": "", "id": "v2.0"}, {"status": "CURRENT", "updated": "2013-07-23T11:33:21Z", "links": [{"href": "http://ct:8774/v2.1/", "rel": "self"}], "min_version": "2.1", "version": "2.79", "id": "v2.

(2) Configuring the Nova service on compute node c1

Install the nova-compute component

yum -y install openstack-nova-compute

Edit the configuration file

cp -a /etc/nova/nova.conf{,.bak}

grep -Ev '^$|#' /etc/nova/nova.conf.bak > /etc/nova/nova.conf

openstack-config --set /etc/nova/nova.conf DEFAULT enabled_apis osapi_compute,metadata

openstack-config --set /etc/nova/nova.conf DEFAULT transport_url rabbit://openstack:RABBIT_PASS@ct

openstack-config --set /etc/nova/nova.conf DEFAULT my_ip 192.168.100.80 # set this to the internal IP of the node being configured

openstack-config --set /etc/nova/nova.conf DEFAULT use_neutron true

openstack-config --set /etc/nova/nova.conf DEFAULT firewall_driver nova.virt.firewall.NoopFirewallDriver

openstack-config --set /etc/nova/nova.conf api auth_strategy keystone

openstack-config --set /etc/nova/nova.conf keystone_authtoken auth_url http://ct:5000/v3

openstack-config --set /etc/nova/nova.conf keystone_authtoken memcached_servers ct:11211

openstack-config --set /etc/nova/nova.conf keystone_authtoken auth_type password

openstack-config --set /etc/nova/nova.conf keystone_authtoken project_domain_name Default

openstack-config --set /etc/nova/nova.conf keystone_authtoken user_domain_name Default

openstack-config --set /etc/nova/nova.conf keystone_authtoken project_name service

openstack-config --set /etc/nova/nova.conf keystone_authtoken username nova

openstack-config --set /etc/nova/nova.conf keystone_authtoken password NOVA_PASS

openstack-config --set /etc/nova/nova.conf vnc enabled true

openstack-config --set /etc/nova/nova.conf vnc server_listen 0.0.0.0

openstack-config --set /etc/nova/nova.conf vnc server_proxyclient_address '$my_ip'

openstack-config --set /etc/nova/nova.conf vnc novncproxy_base_url http://192.168.100.70:6080/vnc_auto.html

openstack-config --set /etc/nova/nova.conf glance api_servers http://ct:9292

openstack-config --set /etc/nova/nova.conf oslo_concurrency lock_path /var/lib/nova/tmp

openstack-config --set /etc/nova/nova.conf placement region_name RegionOne

openstack-config --set /etc/nova/nova.conf placement project_domain_name Default

openstack-config --set /etc/nova/nova.conf placement project_name service

openstack-config --set /etc/nova/nova.conf placement auth_type password

openstack-config --set /etc/nova/nova.conf placement user_domain_name Default

openstack-config --set /etc/nova/nova.conf placement auth_url http://ct:5000/v3

openstack-config --set /etc/nova/nova.conf placement username placement

openstack-config --set /etc/nova/nova.conf placement password PLACEMENT_PASS

openstack-config --set /etc/nova/nova.conf libvirt virt_type qemu
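The commands above differ between compute nodes only in the value of my_ip, so they lend themselves to a small table-driven script. The following is a minimal sketch under assumptions: the function name apply_nova_settings and the CONF and CONFIG_TOOL variables are hypothetical and not part of the original steps, and only a few of the options are listed (the rest would follow the same pattern). Setting CONFIG_TOOL=echo prints the arguments instead of touching the file.

```shell
#!/bin/sh
# Hypothetical helper (not part of the original guide): apply a table of
# "section option value" triples to nova.conf with openstack-config.
CONF=${CONF:-/etc/nova/nova.conf}
CONFIG_TOOL=${CONFIG_TOOL:-openstack-config}   # set to "echo" for a dry run

apply_nova_settings() {
    my_ip=$1    # internal IP of the node being configured
    while read -r section option value; do
        # Skip blank lines in the table.
        [ -n "$section" ] || continue
        "$CONFIG_TOOL" --set "$CONF" "$section" "$option" "$value" || return 1
    done <<EOF
DEFAULT my_ip $my_ip
DEFAULT use_neutron true
vnc enabled true
vnc server_listen 0.0.0.0
vnc server_proxyclient_address \$my_ip
libvirt virt_type qemu
EOF
}
```

On c1 this would be called as `apply_nova_settings 192.168.100.80`. The escaped `\$my_ip` in the table keeps the literal string `$my_ip` in the file, so Nova itself substitutes the value at load time.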

View the configuration file after the changes

[root@c1 ~]# cat /etc/nova/nova.conf

[DEFAULT]

enabled_apis = osapi_compute,metadata

transport_url = rabbit://openstack:RABBIT_PASS@ct

my_ip = 192.168.100.80

use_neutron = true

firewall_driver = nova.virt.firewall.NoopFirewallDriver

[api]

auth_strategy = keystone

[api_database]

[barbican]

[cache]

[cinder]

[compute]

[conductor]

[console]

[consoleauth]

[cors]

[database]

[devices]

[ephemeral_storage_encryption]

[filter_scheduler]

[glance]

api_servers = http://ct:9292

[guestfs]

[healthcheck]

[hyperv]

[ironic]

[key_manager]

[keystone]

[keystone_authtoken]

auth_url = http://ct:5000/v3

memcached_servers = ct:11211

auth_type = password

project_domain_name = Default

user_domain_name = Default

project_name = service

username = nova

password = NOVA_PASS

[libvirt]

virt_type = qemu

[metrics]

[mks]

[neutron]

[notifications]

[osapi_v21]

[oslo_concurrency]

lock_path = /var/lib/nova/tmp

[oslo_messaging_amqp]

[oslo_messaging_kafka]

[oslo_messaging_notifications]

[oslo_messaging_rabbit]

[oslo_middleware]

[oslo_policy]

[pci]

[placement]

region_name = RegionOne

project_domain_name = Default

project_name = service

auth_type = password

user_domain_name = Default

auth_url = http://ct:5000/v3

username = placement

password = PLACEMENT_PASS

[powervm]

[privsep]

[profiler]

[quota]

[rdp]

[remote_debug]

[scheduler]

[serial_console]

[service_user]

[spice]

[upgrade_levels]

[vault]

[vendordata_dynamic_auth]

[vmware]

[vnc]

enabled = true

server_listen = 0.0.0.0

server_proxyclient_address = $my_ip

# Set the controller node's IP address here by hand, so that instance consoles can be reached from the dashboard once the deployment is complete

novncproxy_base_url = http://192.168.100.70:6080/vnc_auto.html

[workarounds]

[wsgi]

[xenserver]

[xvp]

[zvm]
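Most of the listing above is empty section headers, which makes the handful of options that were actually set hard to spot. A minimal sketch of a filter that prints only sections containing at least one option; the function name show_set_options is hypothetical and not part of the original steps.

```shell
#!/bin/sh
# Print only the sections of an INI-style file that contain at least one
# option, skipping blank lines, comment lines, and empty section headers.
show_set_options() {
    awk '
        /^[ \t]*$/    { next }                 # blank line
        /^[ \t]*[#;]/ { next }                 # comment line
        /^\[/         { header = $0; next }    # remember the section header
        {
            # First option of a section: emit the pending header once.
            if (header != "") { print header; header = "" }
            print
        }
    ' "$1"
}
```

It would be run as `show_set_options /etc/nova/nova.conf` in place of the plain `cat`.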

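Configure the Nova service on compute node c2

c2 is configured exactly like c1; the only difference is that the annotated option, my_ip, must hold the node's own address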

openstack-config --set /etc/nova/nova.conf DEFAULT my_ip 192.168.100.80 # replace with c2's own internal IP (192.168.100.80 is the address used for c1 above)
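Because my_ip is the one value that must differ per node, it can be worth reading it back after the change. A minimal sketch, assuming a hypothetical helper named ini_get (not part of the original steps) that prints the value of one option from one section:

```shell
#!/bin/sh
# Hypothetical helper: print the value of OPTION in [SECTION] of an INI file.
# Usage: ini_get FILE SECTION OPTION   (returns 1 if the option is not set)
ini_get() {
    awk -v section="$2" -v option="$3" '
        /^\[/ { current = substr($0, 2, index($0, "]") - 2); next }
        current == section {
            eq = index($0, "=")
            if (eq == 0) next
            key = substr($0, 1, eq - 1)
            val = substr($0, eq + 1)
            gsub(/^[ \t]+|[ \t]+$/, "", key)   # trim the option name
            gsub(/^[ \t]+|[ \t]+$/, "", val)   # trim the value
            if (key == option) { print val; found = 1; exit }
        }
        END { exit found ? 0 : 1 }
    ' "$1"
}
```

For example, `ini_get /etc/nova/nova.conf DEFAULT my_ip` prints the configured address, which can then be compared by eye with the addresses reported by `ip -4 -o addr show` on that node.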

Check the file once the configuration is done

[root@c2 ~]# cat /etc/nova/nova.conf

[DEFAULT]

enabled_apis = osapi_compute,metadata

transport_url = rabbit://openstack:RABBIT_PASS@ct

my_ip = 192.168.100.80

use_neutron = true

firewall_driver = nova.virt.firewall.NoopFirewallDriver

[api]

auth_strategy = keystone

[api_database]

[barbican]

[cache]

[cinder]

[compute]

[conductor]

[console]

[consoleauth]

[cors]

[database]

[devices]

[ephemeral_storage_encryption]

[filter_scheduler]

[glance]

api_servers = http://ct:9292

[guestfs]

[healthcheck]

[hyperv]

[ironic]

[key_manager]

[keystone]

[keystone_authtoken]

auth_url = http://ct:5000/v3

memcached_servers = ct:11211

auth_type = password

project_domain_name = Default

user_domain_name = Default

project_name = service

username = nova

password = NOVA_PASS

[libvirt]

virt_type = qemu

[metrics]

[mks]

[neutron]

[notifications]

[osapi_v21]

[oslo_concurrency]

lock_path = /var/lib/nova/tmp

[oslo_messaging_amqp]

[oslo_messaging_kafka]

[oslo_messaging_notifications]

[oslo_messaging_rabbit]

[oslo_middleware]

[oslo_policy]

[pci]

[placement]

region_name = RegionOne

project_domain_name = Default

project_name = service

auth_type = password

user_domain_name = Default

auth_url = http://ct:5000/v3

username = placement

password = PLACEMENT_PASS

[powervm]

[privsep]

[profiler]

[quota]

[rdp]

[remote_debug]

[scheduler]

[serial_console]

[service_user]

[spice]

[upgrade_levels]

[vault]

[vendordata_dynamic_auth]

[vmware]

[vnc]

enabled = true

server_listen = 0.0.0.0

server_proxyclient_address = $my_ip

# Set the controller node's IP address here by hand, so that instance consoles can be reached from the dashboard once the deployment is complete

novncproxy_base_url = http://192.168.100.70:6080/vnc_auto.html

[workarounds]

[wsgi]

[xenserver]

[xvp]

[zvm]

Once the configuration is in place, enable and start the services on both compute nodes, c1 and c2

[root@c1 ~]# systemctl enable libvirtd.service openstack-nova-compute.service

Created symlink from /etc/systemd/system/multi-user.target.wants/openstack-nova-compute.service to /usr/lib/systemd/system/openstack-nova-compute.service.

[root@c1 ~]# systemctl start libvirtd.service openstack-nova-compute.service

[root@c1 ~]#
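`systemctl start` returning to the prompt does not by itself show that the units stayed up. A minimal sketch of a check that could be run on each compute node afterwards; the function name check_units is hypothetical and not part of the original steps.

```shell
#!/bin/sh
# Report, for each systemd unit given, whether it is currently active.
# Returns 0 only if every unit is active.
check_units() {
    failed=0
    for unit in "$@"; do
        if systemctl is-active --quiet "$unit"; then
            echo "$unit: active"
        else
            echo "$unit: NOT active"
            failed=1
        fi
    done
    return "$failed"
}
```

On c1 and c2 it would be called as `check_units libvirtd.service openstack-nova-compute.service`.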

On the controller node:

Check whether the compute nodes have registered with the controller

[root@ct ~]# openstack compute service list --service nova-compute

+----+--------------+------+------+---------+-------+----------------------------+

| ID | Binary | Host | Zone | Status | State | Updated At |

+----+--------------+------+------+---------+-------+----------------------------+

| 8 | nova-compute | c1 | nova | enabled | up | 2020-12-25T06:11:22.000000 |

| 9 | nova-compute | c2 | nova | enabled | up | 2020-12-25T06:11:22.000000 |

+----+--------------+------+------+---------+-------+----------------------------+
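A freshly started compute node can take a short while to appear in this list. A minimal sketch of a polling loop, assuming admin credentials are already loaded in the shell; the function name wait_for_compute and its default retry count and delay are hypothetical choices, not part of the original steps.

```shell
#!/bin/sh
# Poll until HOST is listed as an "up" nova-compute service, or give up.
# Usage: wait_for_compute HOST [TRIES] [DELAY_SECONDS]
wait_for_compute() {
    host=$1
    tries=${2:-12}
    delay=${3:-5}
    while [ "$tries" -gt 0 ]; do
        # "-f value -c Host -c State" prints one "host state" pair per line.
        if openstack compute service list --service nova-compute \
               -f value -c Host -c State | grep -qxF "$host up"; then
            return 0
        fi
        tries=$((tries - 1))
        [ "$tries" -gt 0 ] && sleep "$delay"
    done
    return 1
}
```

For example, `wait_for_compute c2 || echo "c2 did not register"` waits up to roughly a minute with the defaults.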

Discover which compute nodes are currently available in OpenStack

[root@ct ~]# su -s /bin/sh -c "nova-manage cell_v2 discover_hosts --verbose" nova

Found 2 cell mappings.

Skipping cell0 since it does not contain hosts.

Getting computes from cell 'cell1': 824eb30e-c553-4f13-bf13-729527fb0256

Checking host mapping for compute host 'c1': a9537ded-8185-4849-bf47-961d1711947c

Creating host mapping for compute host 'c1': a9537ded-8185-4849-bf47-961d1711947c

Checking host mapping for compute host 'c2': d0cd021b-aaa7-4e5b-899b-ba140426e2eb

Creating host mapping for compute host 'c2': d0cd021b-aaa7-4e5b-899b-ba140426e2eb

Found 2 unmapped computes in cell: 824eb30e-c553-4f13-bf13-729527fb0256

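Explanation: once discovered, each compute node is mapped into a cell, and instances can then be created in that cell. In effect, OpenStack groups compute nodes internally by assigning them to different cells.

By default this discovery has to be run on the controller every time a compute node is added, which is tedious, so the main Nova configuration file on the controller can be changed to run it periodically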
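When the verbose output is long, the newly mapped hosts are the lines that start with "Creating host mapping". A minimal sketch that extracts just those host names from the command's output; the function name newly_mapped_hosts is hypothetical and not part of the original steps.

```shell
#!/bin/sh
# Read the output of "nova-manage cell_v2 discover_hosts --verbose" on stdin
# and print the name of each compute host that was newly mapped.
newly_mapped_hosts() {
    sed -n "s/^Creating host mapping for compute host '\([^']*\)'.*/\1/p"
}
```

It would be used as `su -s /bin/sh -c "nova-manage cell_v2 discover_hosts --verbose" nova | newly_mapped_hosts`. The mappings that already exist can be listed with `nova-manage cell_v2 list_hosts`.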

[root@ct ~]# vim /etc/nova/nova.conf

[scheduler]

# scan for new compute hosts every 300 seconds

discover_hosts_in_cells_interval = 300

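Restart the services so that the change takes effect (the periodic discovery task is run by nova-scheduler)

[root@ct ~]# systemctl restart openstack-nova-api.service openstack-nova-scheduler.service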
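The same option can be set without opening an editor, using the openstack-config command that the rest of this guide relies on. A minimal sketch, assuming a hypothetical wrapper named set_discovery_interval and hypothetical CONF and CONFIG_TOOL variables; the wrapper only accepts non-negative whole numbers of seconds, which is a restriction of this sketch rather than of Nova.

```shell
#!/bin/sh
# Hypothetical helper: set the periodic host-discovery interval (seconds).
CONF=${CONF:-/etc/nova/nova.conf}
CONFIG_TOOL=${CONFIG_TOOL:-openstack-config}   # set to "echo" for a dry run

set_discovery_interval() {
    case $1 in
        ''|*[!0-9]*)
            # Reject empty input and anything that is not all digits.
            echo "interval must be a non-negative integer: $1" >&2
            return 1 ;;
    esac
    "$CONFIG_TOOL" --set "$CONF" scheduler discover_hosts_in_cells_interval "$1"
}
```

`set_discovery_interval 300` writes the same value as the manual edit; the service restart is still required afterwards.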

Verify the compute node services

Check that every Nova service is running normally and that the compute services have registered successfully

[root@ct ~]# openstack compute service list

+----+----------------+------+----------+---------+-------+----------------------------+

| ID | Binary | Host | Zone | Status | State | Updated At |

+----+----------------+------+----------+---------+-------+----------------------------+

| 3 | nova-conductor | ct | internal | enabled | up | 2020-12-25T06:23:36.000000 |

| 5 | nova-scheduler | ct | internal | enabled | up | 2020-12-25T06:23:38.000000 |

| 8 | nova-compute | c1 | nova | enabled | up | 2020-12-25T06:23:42.000000 |

| 9 | nova-compute | c2 | nova | enabled | up | 2020-12-25T06:23:42.000000 |

+----+----------------+------+----------+---------+-------+----------------------------+
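With more than a few nodes, scanning this table by eye becomes error-prone. A minimal sketch of a filter that lists only the services that are not both enabled and up; the function name report_unhealthy is hypothetical, and it expects the four columns Binary, Host, Status and State in that order.

```shell
#!/bin/sh
# Read "Binary Host Status State" lines on stdin and list every service
# that is not both enabled and up. Returns 1 if any such service exists.
report_unhealthy() {
    awk '
        $3 != "enabled" || $4 != "up" {
            print $1 " on " $2 ": " $3 "/" $4
            bad = 1
        }
        END { exit bad ? 1 : 0 }
    '
}
```

It would be fed with `openstack compute service list -f value -c Binary -c Host -c Status -c State | report_unhealthy`, which prints nothing and returns 0 when every service is healthy.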

Check that the API endpoints of each component are correct

[root@ct ~]# openstack catalog list

+-----------+-----------+---------------------------------+

| Name | Type | Endpoints |

+-----------+-----------+---------------------------------+

| keystone | identity | RegionOne |

| | | admin: http://ct:5000/v3/ |

| | | RegionOne |

| | | internal: http://ct:5000/v3/ |

| | | RegionOne |

| | | public: http://ct:5000/v3/ |

| | | |

| glance | image | RegionOne |

| | | admin: http://ct:9292 |

| | | RegionOne |

| | | internal: http://ct:9292 |

| | | RegionOne |

| | | public: http://ct:9292 |

| | | |

| nova | compute | RegionOne |

| | | public: http://ct:8774/v2.1 |

| | | RegionOne |

| | | admin: http://ct:8774/v2.1 |

| | | RegionOne |

| | | internal: http://ct:8774/v2.1 |

| | | |

| placement | placement | RegionOne |

| | | public: http://ct:8778 |

| | | RegionOne |

| | | admin: http://ct:8778 |

| | | RegionOne |

| | | internal: http://ct:8778 |

| | | |

+-----------+-----------+---------------------------------+
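A malformed endpoint URL, for example one with the slash between port and path missing, is easy to overlook in a listing like this. A minimal sketch of a filter that flags such URLs; the function name malformed_endpoints is hypothetical, and the pattern only checks the general shape scheme://host[:port][/path], not whether the service actually answers.

```shell
#!/bin/sh
# Read "service interface url" lines on stdin and print those whose URL is
# not of the form http(s)://host[:port][/path].
malformed_endpoints() {
    while read -r service interface url; do
        if ! printf '%s\n' "$url" |
             grep -Eq '^https?://[^/:]+(:[0-9]+)?(/.*)?$'; then
            echo "$service $interface $url"
        fi
    done
}
```

It would be fed with `openstack endpoint list -f value -c "Service Name" -c Interface -c URL | malformed_endpoints`; empty output means every URL has the expected shape.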

Check that the image list can be retrieved

[root@ct ~]# openstack image list

+--------------------------------------+--------+--------+

| ID | Name | Status |

+--------------------------------------+--------+--------+

| 1059de74-161c-4aa3-b246-ae125283113a | cirros | active |

+--------------------------------------+--------+--------+

Check that the Cells v2 and Placement API checks pass; if either one fails, instances cannot be created later

[root@ct ~]# nova-status upgrade check

+--------------------------------+

| Upgrade Check Results |

+--------------------------------+

| Check: Cells v2 |

| Result: Success |

| Details: None |

+--------------------------------+

| Check: Placement API |

| Result: Success |

| Details: None |

+--------------------------------+

| Check: Ironic Flavor Migration |

| Result: Success |

| Details: None |

+--------------------------------+

| Check: Cinder API |

| Result: Success |

| Details: None |

+--------------------------------+
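Besides the table, `nova-status upgrade check` reports its overall result through its exit status, which is convenient in scripts. A minimal sketch that turns that status into a message; the function name explain_upgrade_check is hypothetical and not part of the original steps.

```shell
#!/bin/sh
# Translate the exit status of "nova-status upgrade check" into a message.
explain_upgrade_check() {
    case $1 in
        0) echo "all checks passed" ;;
        1) echo "at least one check returned a warning" ;;
        2) echo "at least one check failed" ;;
        *) echo "unexpected error (exit status $1)" ;;
    esac
}
```

It would be used as `nova-status upgrade check; explain_upgrade_check $?` on the controller.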

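Summary:

A Nova deployment is split between controller nodes and compute nodes

Nova's core function is scheduling resources. Its configuration file therefore has to state where the authentication node is (URL, endpoint), which services Nova calls, how it registers itself, and which supporting services it relies on. Almost every option set in the configuration file falls within this scope.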