吴恩达机器学习作业一python实现

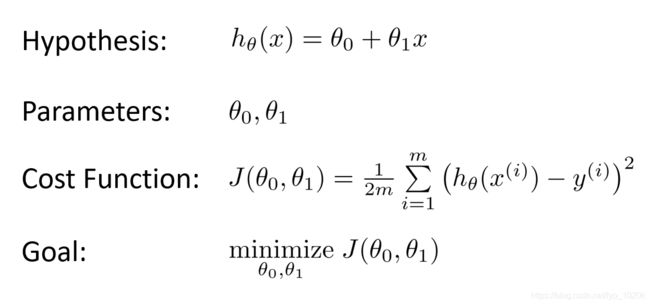

单变量线性回归

参考了黄海广的github:https://github.com/fengdu78/Coursera-ML-AndrewNg-Notes

数据处理

读入数据

path = 'ex1data1.txt'

data = pd.read_csv(path, names=['Population', 'Profit'])可查看数据的一些统计量

图:数据的一些统计量

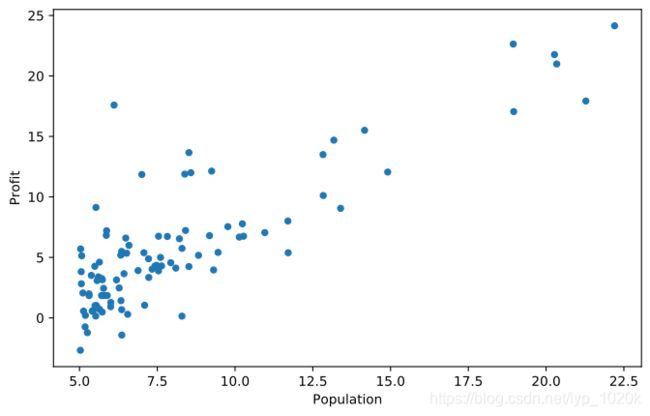

展示数据

data.plot(kind='scatter', x='Population', y='Profit', figsize=(12,8))

plt.show()图:原数据的散点图

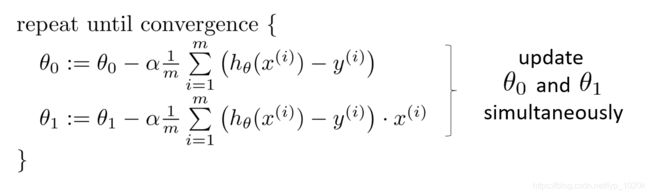

梯度下降

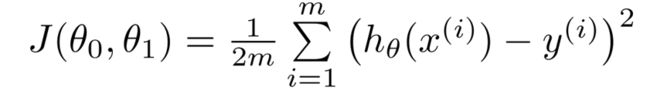

代价函数

# 计算代价函数J(θ)

def cost_function(X, y, theta):

diff = X.dot(theta.T) - y

return sum(np.power(diff, 2))/(2*m)梯度下降法

对θ0和θ1求偏导

# 求偏导

def gradient_function(X, y, theta):

diff = X.dot(theta.T) - y

return diff.dot(X)/m梯度下降

def gradient_descent(X, y, alpha):

theta = np.array((m,1))

gradient = gradient_function(X, y, theta)

while not all (abs(gradient) <= 1e-5):

theta = theta - alpha * gradient

gradient = gradient_function(X, y, theta)

return theta

找到的最佳的θ

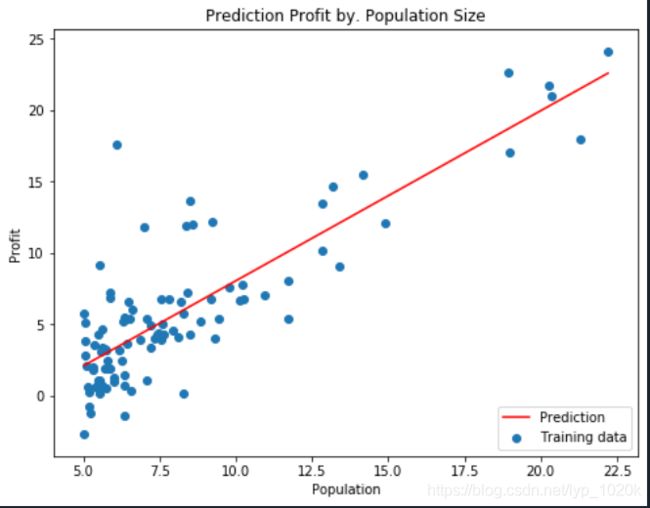

进行可视化

population = np.linspace(data.Population.min(), data.Population.max(), 100) # 横坐标

profit = optimal_theta[0] + (optimal_theta[1] * population) # 纵坐标

fig, ax = plt.subplots(figsize=(8, 6))

ax.plot(population, profit, 'r', label='Prediction')

ax.scatter(data['Population'], data['Profit'], label='Training data')

ax.legend(loc=4) # 4表示标签在右下角

ax.set_xlabel('Population')

ax.set_ylabel('Profit')

ax.set_title('Prediction Profit by. Population Size')

plt.show()源码:

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

path = 'ex1data1.txt'

data = pd.read_csv(path, names=['Population', 'Profit'])

m = len(data)

data.plot(kind='scatter', x='Population', y='Profit', figsize=(12,8))

# 计算代价函数J(θ)

def cost_function(X, y, theta):

diff = X.dot(theta.T) - y

return sum(np.power(diff, 2))/(2*m)

# 求偏导

def gradient_function(X, y, theta):

diff = X.dot(theta.T) - y

return diff.dot(X)/m

# 梯度下降

def gradient_descent(X, y, alpha):

theta = np.array((m,1))

gradient = gradient_function(X, y, theta)

while not all (abs(gradient) <= 1e-5):

theta = theta - alpha * gradient

gradient = gradient_function(X, y, theta)

return theta

X = data['Population']

y = data['Profit']

X = np.vstack((pd.Series(np.ones(m)), X)).T

alpha = 0.01

optimal_theta = gradient_descent(X, y, alpha)

print('optimal_theta:', optimal_theta)

population = np.linspace(data.Population.min(), data.Population.max(), 100) # 横坐标

profit = optimal_theta[0] + (optimal_theta[1] * population) # 纵坐标

fig, ax = plt.subplots(figsize=(8, 6))

ax.plot(population, profit, 'r', label='Prediction')

ax.scatter(data['Population'], data['Profit'], label='Training data')

ax.legend(loc=4) # 4表示标签在右下角

ax.set_xlabel('Population')

ax.set_ylabel('Profit')

ax.set_title('Prediction Profit by. Population Size')

plt.show()本人刚开始学,才疏学浅