Paddle Image Segmentation from Beginner to Practice (3): PSPNet and DeepLab

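Pyramid Scene Parsing Network (PSPNet)

U-Net enlarges the receptive field by concatenating feature maps that have different receptive fields. PSPNet's idea is instead to convolve with kernels of different sizes, so that each branch produces a different receptive field.

Receptive Field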
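As a rough illustration of how the receptive field grows, the sketch below applies the standard recurrence for a stack of convolution or pooling layers: each layer adds `dilation * (kernel_size - 1)` input steps, scaled by the product of the strides before it. The function name and the layer tuples are illustrative assumptions, not part of Paddle.

```python
def receptive_field(layers):
    """Receptive field (along one axis) of a stack of conv/pool layers.

    layers: list of (kernel_size, stride, dilation) tuples, ordered from
    the input towards the output.
    """
    rf = 1    # a single output pixel sees one input pixel before any layer
    jump = 1  # distance, in input pixels, between neighbouring features
    for kernel_size, stride, dilation in layers:
        rf += dilation * (kernel_size - 1) * jump
        jump *= stride
    return rf


# Three stacked 3x3 convolutions, stride 1, no dilation
print(receptive_field([(3, 1, 1)] * 3))
# The same stack with dilation 2 sees a wider window with the same weights
print(receptive_field([(3, 1, 2)] * 3))
```

Striding and dilation both widen the window without adding weights, which is why segmentation backbones lean on them.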

Pyramid Pooling Module

Dilated Conv

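Introducing larger kernels makes the amount of computation grow quadratically with the kernel size. To keep the cost under control, the large kernels are replaced with dilated (atrous) convolutions.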
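To make the trade-off concrete, the sketch below compares the weight count of a dilated 3x3 kernel with that of a dense kernel covering the same window. It assumes a bias-free 2D convolution and a channel width of 256 chosen only for illustration; the helper names are hypothetical.

```python
def effective_kernel_size(kernel_size, dilation):
    """Span, in input pixels along one axis, covered by a dilated kernel."""
    return kernel_size + (kernel_size - 1) * (dilation - 1)


def conv_weight_count(kernel_size, in_channels, out_channels):
    """Number of weights in a dense k x k convolution (bias ignored)."""
    return kernel_size * kernel_size * in_channels * out_channels


channels = 256  # assumed channel width, for illustration only
for dilation in (1, 2, 4):
    span = effective_kernel_size(3, dilation)
    dilated = conv_weight_count(3, channels, channels)
    dense = conv_weight_count(span, channels, channels)
    print(f"dilation={dilation}: covers {span}x{span} with {dilated} weights, "
          f"a dense {span}x{span} kernel would need {dense}")
```

The dilated kernel keeps its 3x3 weight count at every dilation, while the dense kernel's cost grows with the square of the window size.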

This may require heavy use of adaptive pooling and 1x1 convolutions to change the shape of the feature maps.

Adaptive pooling formula
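A minimal NumPy sketch of adaptive average pooling, assuming the commonly documented bin rule in which output index `i` covers the input range from `floor(i * in_size / out_size)` to `ceil((i + 1) * in_size / out_size)`. The helper names are illustrative and this is not Paddle's implementation.

```python
import numpy as np


def adaptive_bins(in_size, out_size):
    """Return the [start, end) input range of each output bin along one axis."""
    bins = []
    for i in range(out_size):
        start = (i * in_size) // out_size                       # floor
        end = ((i + 1) * in_size + out_size - 1) // out_size    # ceil
        bins.append((start, end))
    return bins


def adaptive_avg_pool2d(x, out_size):
    """Average-pool a 2D (H, W) array down to (out_size, out_size)."""
    h_bins = adaptive_bins(x.shape[0], out_size)
    w_bins = adaptive_bins(x.shape[1], out_size)
    out = np.empty((out_size, out_size), dtype=np.float64)
    for i, (h0, h1) in enumerate(h_bins):
        for j, (w0, w1) in enumerate(w_bins):
            out[i, j] = x[h0:h1, w0:w1].mean()
    return out


# Bins overlap when the input size is not a multiple of the output size
print(adaptive_bins(7, 3))

feature = np.arange(36, dtype=np.float64).reshape(6, 6)
print(adaptive_avg_pool2d(feature, 2))
```

Because the bin boundaries are derived from the requested output size, the same call works for any input resolution, which is what lets the pyramid branches produce fixed 1x1, 2x2, 3x3 and 6x6 grids.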

The final PSPNet architecture is shown in the figure below.

Code practice

PSPNet

import numpy as np
import paddle.fluid as fluid
from paddle.fluid.dygraph import to_variable
from paddle.fluid.dygraph import Layer
from paddle.fluid.dygraph import Conv2D
from paddle.fluid.dygraph import BatchNorm
from paddle.fluid.dygraph import Dropout
from resnet_dilated import ResNet50

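# Pyramid pooling module:
#   1. pool with different bin sizes
#   2. interpolate back to the input size
#   3. concat
class PSPModule(Layer):
    def __init__(self, num_channels, bin_size_list):
        super(PSPModule, self).__init__()
        self.bin_size_list = bin_size_list
        num_filters = num_channels // len(bin_size_list)
        # LayerList instead of a plain Python list, so the branches are
        # registered as sublayers and their parameters are tracked
        self.features = fluid.dygraph.LayerList()
        # fusion part: one 1x1 conv + BN branch per bin size
        for i in range(len(bin_size_list)):
            self.features.append(
                fluid.dygraph.Sequential(
                    Conv2D(num_channels, num_filters, 1),
                    BatchNorm(num_filters, act='relu')
                )
            )

    def forward(self, inputs):
        out = [inputs]
        for idx, f in enumerate(self.features):
            # adaptive_pool2d uses its default pool_type here; pass
            # pool_type='avg' explicitly if average pooling is wanted
            x = fluid.layers.adaptive_pool2d(inputs, self.bin_size_list[idx])
            x = f(x)
            x = fluid.layers.interpolate(x, inputs.shape[2:], align_corners=True)
            out.append(x)
        # out is a list of NCHW tensors; concatenate along the channel axis
        out = fluid.layers.concat(out, axis=1)
        return out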

class PSPNet(Layer):
    def __init__(self, num_classes=59, backbone='resnet50'):
        super(PSPNet, self).__init__()
        res = ResNet50(False)
        # stem: res.conv, res.pool2d_max
        self.layer0 = fluid.dygraph.Sequential(
            res.conv,
            res.pool2d_max
        )
        self.layer1 = res.layer1
        self.layer2 = res.layer2
        self.layer3 = res.layer3
        self.layer4 = res.layer4
        num_channels = 2048
        # psp: 2048 -> 2048*2
        self.pspmodule = PSPModule(num_channels, [1, 2, 3, 6])
        num_channels *= 2
        # cls: 2048*2 -> 512 -> num_classes
        self.classifier = fluid.dygraph.Sequential(
            Conv2D(num_channels=num_channels, num_filters=512, filter_size=3, padding=1),
            BatchNorm(512, act='relu'),
            Dropout(0.1),
            Conv2D(num_channels=512, num_filters=num_classes, filter_size=1),
        )
        # aux: 1024 -> 256 -> num_classes (auxiliary head, not implemented here)

    def forward(self, inputs):
        # backbone
        x = self.layer0(inputs)
        x = self.layer1(x)
        x = self.layer2(x)
        x = self.layer3(x)
        # aux: tmp_x = layer3 output (auxiliary branch, not implemented here)
        x = self.layer4(x)
        # pspmodule
        x = self.pspmodule(x)
        x = self.classifier(x)
        # upsample the logits back to the input resolution
        x = fluid.layers.interpolate(x, inputs.shape[2:], align_corners=True)
        return x

def main():
    with fluid.dygraph.guard(fluid.CPUPlace()):
        x_data = np.random.rand(2, 3, 473, 473).astype(np.float32)
        x = to_variable(x_data)
        model = PSPNet(num_classes=59)
        model.train()
        # forward returns only the main prediction; the auxiliary
        # branch is not implemented, so there is no second output
        pred = model(x)
        print(pred.shape)

if __name__ == "__main__":
    main()
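The `num_channels *= 2` step in `PSPNet` relies on the pyramid branches adding exactly as many channels as the input has. The small sketch below checks that arithmetic in plain Python, mirroring how `PSPModule` picks `num_filters`; the helper name `psp_output_channels` is hypothetical.

```python
def psp_output_channels(num_channels, bin_size_list):
    """Channels per branch and total channels after the final concat."""
    branch_channels = num_channels // len(bin_size_list)
    # the original input is concatenated together with every branch output
    total = num_channels + branch_channels * len(bin_size_list)
    return branch_channels, total


# Four bins on a 2048-channel feature map: 4 x 512 extra channels
print(psp_output_channels(2048, [1, 2, 3, 6]))
# Three bins do not divide 2048 evenly, so the total is no longer 2 * 2048
print(psp_output_channels(2048, [1, 2, 3]))
```

With a bin list whose length does not divide the channel count, the classifier's input width would need to be computed from the module rather than assumed to be doubled.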

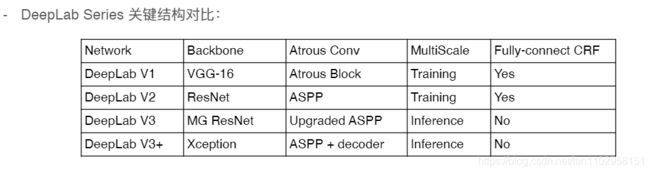

DeepLab

Code practice

import numpy as np
import paddle.fluid as fluid
from paddle.fluid.dygraph import to_variable
from paddle.fluid.dygraph import Layer
from paddle.fluid.dygraph import Conv2D
from paddle.fluid.dygraph import BatchNorm
from paddle.fluid.dygraph import Dropout
from resnet_multi_grid import ResNet50

class ASPPPooling(Layer):
    # image-level branch: global pool -> 1x1 conv + BN -> upsample
    def __init__(self, num_channels, num_filters):
        super(ASPPPooling, self).__init__()
        self.features = fluid.dygraph.Sequential(
            Conv2D(num_channels, num_filters, 1),
            BatchNorm(num_filters, act='relu')
        )

    def forward(self, inputs):
        n, c, h, w = inputs.shape
        x = fluid.layers.adaptive_pool2d(inputs, 1)
        x = self.features(x)
        x = fluid.layers.interpolate(x, (h, w), align_corners=False)
        return x

class ASPPConv(fluid.dygraph.Sequential):
    # 3x3 dilated conv + BN; padding=dilation keeps the spatial size unchanged
    def __init__(self, num_channels, num_filters, dilation):
        super(ASPPConv, self).__init__(
            Conv2D(num_channels, num_filters, filter_size=3, padding=dilation, dilation=dilation),
            BatchNorm(num_filters, act='relu')
        )

class ASPPModule(Layer):
    # parallel branches: 1x1 conv, image pooling, and one dilated conv per rate
    def __init__(self, num_channels, num_filters, rates):
        super(ASPPModule, self).__init__()
        # LayerList instead of a plain Python list, so the branches are
        # registered as sublayers and their parameters are tracked
        self.features = fluid.dygraph.LayerList()
        self.features.append(
            fluid.dygraph.Sequential(
                Conv2D(num_channels, num_filters, 1),
                BatchNorm(num_filters, act='relu')
            )
        )
        self.features.append(ASPPPooling(num_channels, num_filters))
        for r in rates:
            self.features.append(
                ASPPConv(num_channels, num_filters, r)
            )
        # fuse the (2 + len(rates)) concatenated branches back to num_filters
        self.project = fluid.dygraph.Sequential(
            Conv2D(num_filters * (2 + len(rates)), num_filters, 1),
            BatchNorm(num_filters, act='relu')
        )

    def forward(self, inputs):
        res = []
        for op in self.features:
            res.append(op(inputs))
        x = fluid.layers.concat(res, axis=1)
        x = self.project(x)
        return x

class DeepLabHead(fluid.dygraph.Sequential):
    def __init__(self, num_channels, num_classes):
        super(DeepLabHead, self).__init__(
            ASPPModule(num_channels, 256, [12, 24, 36]),
            Conv2D(256, 256, 3, padding=1),
            BatchNorm(256, act='relu'),
            Conv2D(256, num_classes, 1)
        )

class DeepLab(Layer):
    # ResNet50 backbone with duplicated (multi-grid) blocks + DeepLabHead
    def __init__(self, num_classes=59):
        super(DeepLab, self).__init__()
        resnet = ResNet50(pretrained=False, duplicate_blocks=True)
        self.layer0 = fluid.dygraph.Sequential(
            resnet.conv,
            resnet.pool2d_max
        )
        self.layer1 = resnet.layer1
        self.layer2 = resnet.layer2
        self.layer3 = resnet.layer3
        self.layer4 = resnet.layer4
        self.layer5 = resnet.layer5
        self.layer6 = resnet.layer6
        self.layer7 = resnet.layer7
        feature_dim = 2048
        self.classifier = DeepLabHead(feature_dim, num_classes)

    def forward(self, inputs):
        n, c, h, w = inputs.shape
        x = self.layer0(inputs)
        x = self.layer1(x)
        x = self.layer2(x)
        x = self.layer3(x)
        x = self.layer4(x)
        x = self.layer5(x)
        x = self.layer6(x)
        x = self.layer7(x)
        x = self.classifier(x)
        # upsample the logits back to the input resolution
        x = fluid.layers.interpolate(x, (h, w), align_corners=False)
        return x

def main():
    with fluid.dygraph.guard():
        x_data = np.random.rand(2, 3, 512, 512).astype(np.float32)
        x = to_variable(x_data)
        model = DeepLab(num_classes=59)
        model.eval()
        pred = model(x)
        print(pred.shape)

if __name__ == '__main__':
    main()
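Two details of the ASPP code above can be checked with plain arithmetic: setting `padding=dilation` on a 3x3 convolution keeps the spatial size unchanged, and the projection layer receives `num_filters * (2 + len(rates))` channels. The sketch below uses the standard convolution output-size formula; the helper names and the 64x64 feature size are assumptions for illustration only.

```python
def conv_out_size(in_size, kernel_size, stride=1, padding=0, dilation=1):
    """Output length along one axis for a (possibly dilated) convolution."""
    effective_kernel = dilation * (kernel_size - 1) + 1
    return (in_size + 2 * padding - effective_kernel) // stride + 1


def aspp_concat_channels(num_filters, rates):
    """Channels entering the projection: 1x1 branch + pooling branch + one per rate."""
    return num_filters * (2 + len(rates))


feature_size = 64  # assumed backbone output size, for illustration only
for rate in (12, 24, 36):
    out = conv_out_size(feature_size, 3, padding=rate, dilation=rate)
    print(f"rate={rate}: {feature_size} -> {out}")

print(aspp_concat_channels(256, [12, 24, 36]))
```

Because every branch preserves the spatial size, their outputs can be concatenated along the channel axis without any extra resizing, apart from the pooling branch, which is interpolated back explicitly.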