MNIST Handwritten Digit Recognition with a Single-Layer Neural Network

I. Introduction to the MNIST Dataset

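The MNIST dataset, as loaded by TensorFlow's input_data helper, is split into 55,000 training examples (mnist.train), 5,000 validation examples (mnist.validation), and 10,000 test examples (mnist.test). The original MNIST release has 60,000 training images; the helper holds out 5,000 of them for validation. Each example consists of an image of a handwritten digit and its label. The images are single-channel and 28*28 pixels; the labels are digits from 0 to 9, one-hot encoded when the data is loaded with one_hot=True.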
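As a minimal illustration of this layout, the pure-Python sketch below (no TensorFlow; the helper names flatten, one_hot and from_one_hot are hypothetical) flattens a 28*28 image into 784 features and converts a label to and from its one-hot form. The all-zero image is a dummy stand-in for a real MNIST sample.

```python
def flatten(image):
    """Flatten a 2-D image (list of rows) row by row into one feature list."""
    return [pixel for row in image for pixel in row]


def one_hot(label, num_classes=10):
    """Encode an integer label 0-9 as a one-hot vector of length num_classes."""
    vec = [0.0] * num_classes
    vec[label] = 1.0
    return vec


def from_one_hot(vec):
    """Recover the integer label as the index of the largest entry."""
    return max(range(len(vec)), key=lambda i: vec[i])


# Dummy all-black 28x28 image standing in for a real sample (hypothetical data)
image = [[0.0] * 28 for _ in range(28)]
features = flatten(image)
label = one_hot(3)

print(len(features))        # 784
print(label)                # [0.0, 0.0, 0.0, 1.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0]
print(from_one_hot(label))  # 3
```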

II. Building the Neural Network

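We use the simplest kind of neural network: a single fully connected layer that maps the input directly to the output. For MNIST, each training sample is a 28*28 single-channel image, and the goal is to decide which of the classes 0 1 2 3 4 5 6 7 8 9 it belongs to, so this is a 10-class classification problem. Flattening one 28*28 sample gives 784 features, so feeding N samples at once gives an input of shape [N, 784] and an output of shape [N, 10]. Because there is only one layer, the weights have shape [784, 10] and the bias has shape [10]. We then compute the mean softmax cross-entropy loss of the outputs, minimize it with gradient descent, and finally measure the accuracy.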
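The shape arithmetic above can be checked with a small pure-Python sketch of the forward pass y = x * W + b. The helper fully_connected and the random inputs are illustrative assumptions, not part of the original program; the weights are drawn from a normal distribution with mean 0 and standard deviation 1, mirroring the tf.random_normal initializer in the TensorFlow code.

```python
import random


def fully_connected(x, weight, bias):
    """Compute x @ weight + bias.

    x: N rows of d features, weight: d rows of c columns, bias: c values.
    Returns N rows of c logits; the same bias is added to every row.
    """
    out = []
    for row in x:
        logits = []
        for j in range(len(bias)):
            s = sum(row[k] * weight[k][j] for k in range(len(row)))
            logits.append(s + bias[j])
        out.append(logits)
    return out


random.seed(0)
N = 4
x = [[random.random() for _ in range(784)] for _ in range(N)]               # [N, 784]
weight = [[random.gauss(0.0, 1.0) for _ in range(10)] for _ in range(784)]  # [784, 10]
bias = [0.0] * 10                                                           # [10]

y_predict = fully_connected(x, weight, bias)
num_params = len(weight) * len(weight[0]) + len(bias)

print(len(y_predict), len(y_predict[0]))  # 4 10
print(num_params)                         # 7850
```

With only 784 * 10 + 10 = 7,850 trainable parameters, the model is small enough that plain gradient descent trains it quickly.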

1. Create the data placeholders

with tf.variable_scope("data"):
    x = tf.placeholder(tf.float32, [None, 784])  # sample features
    y_true = tf.placeholder(tf.float32, [None, 10])  # sample labels (one-hot)

2. Build a fully connected layer

with tf.variable_scope("fc_model"):
    # Initialize the weights randomly and the bias to zero
    weight = tf.Variable(tf.random_normal([784, 10], mean=0.0, stddev=1.0), name="weight")
    bias = tf.Variable(tf.constant(0.0, shape=[10]), name="bias")
    # Predicted output for None samples: matrix [None, 784] * [784, 10] + [10] = [None, 10]
    y_predict = tf.matmul(x, weight) + bias

3. Compute the mean cross-entropy loss
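Per sample, tf.nn.softmax_cross_entropy_with_logits computes -sum_j y_j * log(softmax(z)_j), and tf.reduce_mean then averages it over the batch. A pure-Python sketch of that formula, assuming one-hot labels, follows; the helper names are hypothetical, and the max-shift (log-sum-exp) is a standard numerical-stability trick rather than something the original code does explicitly.

```python
import math


def softmax(logits):
    """Turn logits into probabilities that sum to 1."""
    m = max(logits)  # subtract the max so exp() cannot overflow
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def softmax_cross_entropy(labels, logits):
    """-sum(labels * log(softmax(logits))) for a single sample."""
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    # log(softmax(z)_j) = z_j - log_sum_exp
    return -sum(t * (z - log_sum_exp) for t, z in zip(labels, logits))


def mean_loss(y_true, y_predict):
    """Average the per-sample cross-entropy over the batch (like tf.reduce_mean)."""
    losses = [softmax_cross_entropy(t, z) for t, z in zip(y_true, y_predict)]
    return sum(losses) / len(losses)


# Made-up batch of two samples with three classes
y_true = [[0.0, 0.0, 1.0], [1.0, 0.0, 0.0]]
y_predict = [[0.5, 0.2, 2.0], [0.1, 1.0, 0.3]]
print(mean_loss(y_true, y_predict))
```

With all-zero logits over 10 classes, the loss is log(10), about 2.30; it approaches 0 as the logit of the true class grows relative to the others.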

with tf.variable_scope("soft_cross"):
    # Mean softmax cross-entropy loss over the batch (labels: true values, logits: predictions)
    loss = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(labels=y_true, logits=y_predict))

4. Minimize the loss with gradient descent
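GradientDescentOptimizer(0.1).minimize(loss) repeatedly moves every variable against its gradient, W <- W - 0.1 * dLoss/dW. For the mean softmax cross-entropy, the gradient with respect to the logits has the closed form (softmax(z) - y_true) / N, so one step can be sketched by hand. The pure-Python version below runs on a hypothetical two-sample toy dataset; TensorFlow computes the same gradients by automatic differentiation instead of this hand-written formula.

```python
import math


def softmax(z):
    m = max(z)
    e = [math.exp(v - m) for v in z]
    s = sum(e)
    return [v / s for v in e]


def forward(x, w, b):
    """Logits x @ w + b for every sample."""
    return [[sum(xi[k] * w[k][j] for k in range(len(xi))) + b[j]
             for j in range(len(b))] for xi in x]


def mean_loss(x, y, w, b):
    total = 0.0
    for z, t in zip(forward(x, w, b), y):
        p = softmax(z)
        total -= sum(tj * math.log(pj) for tj, pj in zip(t, p))
    return total / len(x)


def gd_step(x, y, w, b, lr=0.1):
    """One full-batch gradient-descent step on the mean softmax cross-entropy."""
    n = len(x)
    grad_w = [[0.0] * len(b) for _ in w]
    grad_b = [0.0] * len(b)
    for xi, z, t in zip(x, forward(x, w, b), y):
        # d(mean loss)/d(logits) for this sample
        delta = [(pj - tj) / n for pj, tj in zip(softmax(z), t)]
        for j, dj in enumerate(delta):
            grad_b[j] += dj
            for k, xk in enumerate(xi):
                grad_w[k][j] += xk * dj
    new_w = [[w[k][j] - lr * grad_w[k][j] for j in range(len(b))] for k in range(len(w))]
    new_b = [b[j] - lr * grad_b[j] for j in range(len(b))]
    return new_w, new_b


# Hypothetical toy data: 2 samples, 2 features, 2 classes
x = [[1.0, 0.0], [0.0, 1.0]]
y = [[1.0, 0.0], [0.0, 1.0]]
w = [[0.0, 0.0], [0.0, 0.0]]
b = [0.0, 0.0]

loss_before = mean_loss(x, y, w, b)  # log(2) with all-zero parameters
for _ in range(100):
    w, b = gd_step(x, y, w, b, lr=0.1)
loss_after = mean_loss(x, y, w, b)
print(loss_before, loss_after)
```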

with tf.variable_scope("optimizer"):
    train_op = tf.train.GradientDescentOptimizer(0.1).minimize(loss)  # learning rate 0.1

5. Compute the accuracy
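The accuracy is the fraction of samples whose predicted class (index of the largest logit) equals the true class (index of the 1 in the one-hot label). A pure-Python sketch with made-up values for four samples and three classes, where the helper names are hypothetical:

```python
def argmax(row):
    """Index of the largest value in a list (first one on ties)."""
    return max(range(len(row)), key=lambda i: row[i])


def accuracy(y_true, y_predict):
    # 1.0 where the predicted class matches the true class, else 0.0,
    # mirroring tf.cast(tf.equal(...), tf.float32)
    equal_list = [1.0 if argmax(t) == argmax(p) else 0.0
                  for t, p in zip(y_true, y_predict)]
    return sum(equal_list) / len(equal_list)  # like tf.reduce_mean


y_true = [[0, 1, 0], [1, 0, 0], [0, 0, 1], [0, 1, 0]]
y_predict = [[0.1, 2.0, 0.3],   # predicted 1, true 1 -> correct
             [1.5, 0.2, 0.1],   # predicted 0, true 0 -> correct
             [0.9, 0.1, 0.2],   # predicted 0, true 2 -> wrong
             [0.0, 0.5, 3.0]]   # predicted 2, true 1 -> wrong
print(accuracy(y_true, y_predict))  # 0.5
```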

with tf.variable_scope("acc"):

# equal_list: None个样本 [1,0,1,0,0,1.......],相同为1不相同为0

equal_list = tf.equal(tf.argmax(y_true, 1), tf.argmax(y_predict, 1))

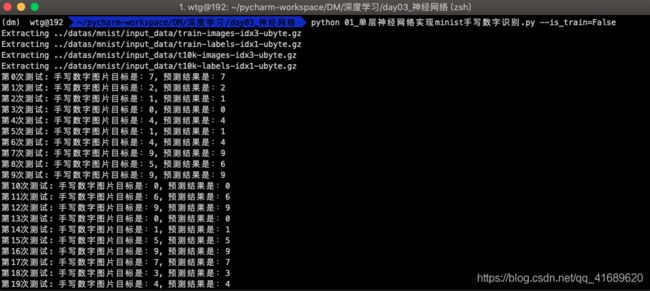

accuracy = tf.reduce_mean(tf.cast(equal_list, tf.float32))三 结果展示

1. Training

python 01_单层神经网络实现minist手写数字识别.py --is_train=True

2. Testing

python 01_单层神经网络实现minist手写数字识别.py --is_train=False

IV. Code Implementation

# -*- coding: utf-8 -*-
"""
--------------------------------------------------------
# @Version : python3.7
# @Author  : wangTongGen
# @File    : 01_单层神经网络实现minist手写数字识别.py
# @Software: PyCharm
# @Time    : 2019/3/27 15:40
--------------------------------------------------------
"""

import os

import tensorflow as tf  # written for TensorFlow 1.x (tf.placeholder, tf.Session, tf.app.flags)
from tensorflow.examples.tutorials.mnist import input_data

os.environ["TF_CPP_MIN_LOG_LEVEL"] = "2"  # hide TensorFlow INFO and WARNING logs

tf.app.flags.DEFINE_boolean("is_train", True, "Whether to train the model (True) or run predictions (False)")
FLAGS = tf.app.flags.FLAGS

def full_connected():
    # Load the real MNIST data with one-hot labels
    mnist = input_data.read_data_sets("../datas/mnist/input_data", one_hot=True)

    # 1. Data placeholders: x [None, 784], y_true [None, 10]
    with tf.variable_scope("data"):
        x = tf.placeholder(tf.float32, [None, 784])
        y_true = tf.placeholder(tf.float32, [None, 10])

    # 2. A single fully connected layer: w [784, 10], b [10]
    with tf.variable_scope("fc_model"):
        # Initialize the weights randomly and the bias to zero
        weight = tf.Variable(tf.random_normal([784, 10], mean=0.0, stddev=1.0), name="weight")
        bias = tf.Variable(tf.constant(0.0, shape=[10]), name="bias")
        # Predicted output for None samples: matrix [None, 784] * [784, 10] + [10] = [None, 10]
        y_predict = tf.matmul(x, weight) + bias

    # 3. Compute the loss of every sample, then take the mean
    with tf.variable_scope("soft_cross"):
        # labels: true values, logits: predictions
        loss = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(labels=y_true, logits=y_predict))

    # 4. Minimize the loss with gradient descent
    with tf.variable_scope("optimizer"):
        train_op = tf.train.GradientDescentOptimizer(0.1).minimize(loss)

    # 5. Compute the accuracy
    with tf.variable_scope("acc"):
        # True where the predicted class matches the label, False otherwise
        equal_list = tf.equal(tf.argmax(y_true, 1), tf.argmax(y_predict, 1))
        accuracy = tf.reduce_mean(tf.cast(equal_list, tf.float32))

    # Op that initializes all variables
    init_op = tf.global_variables_initializer()

    # Collect scalar summaries
    tf.summary.scalar("losses", loss)
    tf.summary.scalar("accuracy", accuracy)
    # Collect histograms of the higher-dimensional variables
    tf.summary.histogram("weights", weight)
    tf.summary.histogram("biases", bias)
    # Op that merges all summaries
    merged = tf.summary.merge_all()

    # Saver for writing and restoring checkpoints
    saver = tf.train.Saver()

    # Start a session
    with tf.Session() as sess:
        sess.run(init_op)
        # Create the event file for TensorBoard and write the graph to it
        fileWriter = tf.summary.FileWriter("./tmp/summary/test", graph=sess.graph)

        if FLAGS.is_train:
            # Train for 2000 steps, updating the parameters at each step
            for i in range(2000):
                # Fetch a batch of 50 real feature/label pairs
                mnist_x, mnist_y = mnist.train.next_batch(50)
                # Run one training step
                sess.run(train_op, feed_dict={x: mnist_x, y_true: mnist_y})
                # Record the summaries and the batch accuracy after this step
                summary, acc = sess.run([merged, accuracy], feed_dict={x: mnist_x, y_true: mnist_y})
                fileWriter.add_summary(summary, i)
                print("Step %d, accuracy: %f" % (i, acc))
            # Save the model
            saver.save(sess, "./tmp/ckpt/fc_model")
        else:
            # Load the saved model
            saver.restore(sess, "./tmp/ckpt/fc_model")
            for i in range(100):
                # Test one image at a time
                x_test, y_test = mnist.test.next_batch(1)
                # Take argmax on the fetched NumPy arrays instead of adding new tf.argmax ops to the graph on every iteration
                predicted = sess.run(y_predict, feed_dict={x: x_test}).argmax(axis=1)[0]
                print("Test %d: true digit %d, predicted %d" % (i, y_test.argmax(axis=1)[0], predicted))

        fileWriter.close()


if __name__ == '__main__':
    full_connected()
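The training loop draws 50 examples per step, so 2000 steps see 100,000 examples, roughly 1.8 passes over the 55,000 training images. A next_batch-style helper can be pictured like the hypothetical SimpleBatcher below: it walks through a shuffled order and reshuffles when an epoch is used up. This is an illustration under those assumptions, not the source of TensorFlow's DataSet.next_batch, whose details may differ.

```python
import random


class SimpleBatcher:
    """Hypothetical mini-batch helper (not TensorFlow's implementation).

    Walks through the examples in a shuffled order, reshuffling at the start
    of every epoch. If an epoch runs out mid-batch, the batch is topped up
    from the next epoch so every batch has exactly batch_size items.
    """

    def __init__(self, features, labels, seed=0):
        if not features or len(features) != len(labels):
            raise ValueError("need a non-empty dataset with one label per example")
        self.features = features
        self.labels = labels
        self.rng = random.Random(seed)
        self.order = []  # shuffled indices of the current epoch
        self.pos = 0     # next position in self.order
        self.epochs_completed = 0

    def _start_epoch(self):
        self.order = list(range(len(self.features)))
        self.rng.shuffle(self.order)
        self.pos = 0

    def next_batch(self, batch_size):
        idx = []
        while len(idx) < batch_size:
            if self.pos >= len(self.order):
                if self.order:  # a previous epoch was fully consumed
                    self.epochs_completed += 1
                self._start_epoch()
            take = min(batch_size - len(idx), len(self.order) - self.pos)
            idx.extend(self.order[self.pos:self.pos + take])
            self.pos += take
        return [self.features[i] for i in idx], [self.labels[i] for i in idx]


# Passes over the training set made by 2000 steps of 50 examples
passes = 2000 * 50 / 55000
print(round(passes, 2))  # 1.82

# Toy usage with 10 dummy examples
batcher = SimpleBatcher(list(range(10)), list(range(10)))
xs, ys = batcher.next_batch(4)
print(len(xs), batcher.epochs_completed)  # 4 0
```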