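Hung-yi Lee Machine Learning Homework 4: Recurrent Neural Network

This assignment comes from Hung-yi Lee's machine learning course; for details, see Homework 4 - Recurrent Neural Network (friendly reminder: you may need it).

Reference: 李宏毅2020机器学习作业4-RNN:句子情感分类

Assignment requirements

Use a recurrent neural network (RNN) to classify the sentiment of a sentence: given a sentence, decide whether it is positive (label 1) or negative (label 0).

Data: https://pan.baidu.com/s/1zfigNt2f1n4oTDRyeAZktQ (extraction code: 1234). Make sure the download contains three files: testing_data.txt, training_label.txt, and training_nolabel.txt. The text consists of English tweets collected from Twitter; each labeled tweet is marked as positive or negative.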

Let's look at the data:

- training_label.txt

Format: label +++$+++ text (+++$+++ is only a separator).

1 +++$+++ are wtf ... awww thanks !

1 +++$+++ leavingg to wait for kaysie to arrive myspacin itt for now ilmmthek .!

0 +++$+++ i wish i could go and see duffy when she comes to mamaia romania .

1 +++$+++ i know eep ! i can ' t wait for one more day ....

Taking the first line as an example: 1 means the sentence “are wtf ... awww thanks !” is positive.

- training_nolabel.txt

Training data with sentences only and no labels, used for semi-supervised learning; about 1.2 million sentences.

mkhang mlbo . dami niang followers ee . di q rin naman sia masisisi . desperate n kng desperate , pero dpt tlga replyn nia q = d

don ' t you hate it when you hang on to a seemingly interesting movie to see the ending only to find out that the ending sucks ?

ok so never went to the movies because friend wasn ' t feeling well but next weekend .

back to work today , wasn ' t too bad .

can ' t wait to see diversity ' s performance !

- testing_data.txt

Format: the first line is the header and the data starts on the second line; the first column is the id and the second column is the text.

id,text

0,my dog ate our dinner . no , seriously ... he ate it .

1,omg last day sooon n of primary noooooo x im gona be swimming out of school wif the amount of tears am gona cry

2,stupid boys .. they ' re so .. stupid !

The goal is to label every sentence in the testing data as 0 or 1; there are about 200,000 sentences.

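Concepts

This part draws on the following article, which explains things well: https://blog.csdn.net/iteapoy/article/details/105931612

Representing words

People understand text, but machines handle numbers better because numbers can be computed on. So we need to turn text into numbers.

- Chinese sentences are processed character by character. A Chinese sentence is made up of characters; each character is turned into a vector that represents its meaning.

- English sentences are processed word by word. An English sentence is made up of words; each word is turned into a vector that represents its meaning.

Representing sentences

To process a sentence, first build a dictionary that maps every word to an index. For example: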

- “I have a pen.” -> [1, 2, 3, 4]

- “I have an apple.” -> [1, 2, 5, 6]
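The dictionary step above can be sketched in a few lines. This is a minimal illustration, not the code used later in this post; `build_vocab` and `encode` are hypothetical helper names, and indices start at 1 only to match the example.

```python
def build_vocab(sentences):
    """Give every previously unseen token the next free index, starting at 1."""
    word2idx = {}
    for sentence in sentences:
        for token in sentence:
            if token not in word2idx:
                word2idx[token] = len(word2idx) + 1
    return word2idx


def encode(sentence, word2idx):
    """Replace each token by its dictionary index."""
    return [word2idx[token] for token in sentence]


sentences = [["I", "have", "a", "pen"], ["I", "have", "an", "apple"]]
word2idx = build_vocab(sentences)
encoded = [encode(s, word2idx) for s in sentences]
print(encoded)  # [[1, 2, 3, 4], [1, 2, 5, 6]]
```

Shared words ("I", "have") keep the same index in both sentences, which is what lets a model treat them as the same input.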

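There are two ways to obtain a vector for a sentence:

- Use bag of words (BOW) directly to get one vector that represents the sentence.

- Represent every word as a vector first, then use an RNN model to produce a vector that represents the whole sentence.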

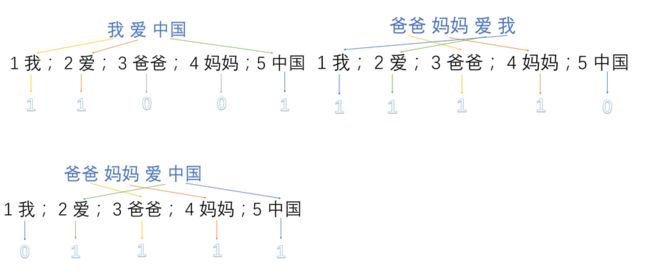

1-of-N encoding

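A vector of length N in which exactly one entry is 1 and the other N − 1 entries are 0. It is also called one-hot encoding.

For feature extraction, one-hot encoding belongs to the bag-of-words family: a sentence vector marks which vocabulary words occur in the sentence. Suppose the corpus contains three sentences: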

- 我爱中国

- 爸爸妈妈爱我

- 爸爸妈妈爱中国

First, split every sentence in the corpus into words and number them:

- 1:我 2:爱 3:爸爸 4:妈妈 5:中国

Then use one-hot encoding to extract a feature vector for each sentence. The resulting feature vectors are:

- 我爱中国 -> 1,1,0,0,1

- 爸爸妈妈爱我 -> 1,1,1,1,0

- 爸爸妈妈爱中国 -> 0,1,1,1,1
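The three vectors above can be reproduced with a short sketch. The word segmentation is written out by hand here (an assumption; a real pipeline would use a tokenizer), and `presence_vector` is a hypothetical helper name.

```python
# Vocabulary in the numbering used above: 1:我 2:爱 3:爸爸 4:妈妈 5:中国
vocab = ["我", "爱", "爸爸", "妈妈", "中国"]


def presence_vector(tokens, vocab):
    """Return 1 for every vocabulary word that occurs in tokens, else 0."""
    present = set(tokens)
    return [1 if word in present else 0 for word in vocab]


# Hand-segmented corpus (assumed segmentation).
corpus = {
    "我爱中国": ["我", "爱", "中国"],
    "爸爸妈妈爱我": ["爸爸", "妈妈", "爱", "我"],
    "爸爸妈妈爱中国": ["爸爸", "妈妈", "爱", "中国"],
}

vectors = {sentence: presence_vector(tokens, vocab) for sentence, tokens in corpus.items()}
for sentence, vector in vectors.items():
    print(sentence, vector)
```

Note that the word order inside each sentence plays no role in the result, which is exactly the weakness discussed next.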

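What are the advantages and disadvantages of this approach?

Advantages:

- It lets classifiers work with discrete data, which they otherwise handle poorly.

- To some extent it expands the feature space, because every vocabulary word becomes its own dimension.

Disadvantages:

- High memory use: the vector has as many dimensions as there are words, yet all entries but one are 0. For example:

$$200000\ (\text{data}) \times 30\ (\text{length}) \times 20000\ (\text{vocab size}) \times 4\ (\text{bytes}) = 4.8 \times 10^{11}\ \text{bytes} = 480\ \text{GB}$$

- One-hot is a bag-of-words model and ignores word order, although word order matters a great deal in text.

- One-hot assumes that words are independent of each other, whereas in most cases words influence one another.

- The features produced by one-hot are discrete and sparse.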
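The memory estimate can be checked with simple arithmetic. The sketch below also compares it with storing one integer index per word, which is what the embedding approach later in this post does; the 8-byte index size is an assumption (a 64-bit integer), and both function names are hypothetical.

```python
def one_hot_bytes(num_sentences, sentence_len, vocab_size, bytes_per_value=4):
    """Bytes needed to store every word as a dense one-hot float vector."""
    return num_sentences * sentence_len * vocab_size * bytes_per_value


def index_bytes(num_sentences, sentence_len, bytes_per_index=8):
    """Bytes needed to store every word as a single integer index."""
    return num_sentences * sentence_len * bytes_per_index


dense = one_hot_bytes(200_000, 30, 20_000)
compact = index_bytes(200_000, 30)
print(dense / 1e9, "GB")    # 480.0 GB
print(compact / 1e6, "MB")  # 48.0 MB
```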

Bag of Words (BOW)

The idea of BOW is to throw the words of a sentence into a bag. BOW ignores grammar and word order.

For example, take two sentences:

1. John likes to watch movies. Mary likes movies too.

2. John also likes to watch football games.

and a dictionary: [ “John”, “likes”, “to”, “watch”, “movies”, “also”, “football”, “games”, “Mary”, “too” ]

Under the BOW representation, the words of the dictionary occur in the first sentence, “John likes to watch movies. Mary likes movies too.”, the following number of times:

John: 1

likes: 2

to: 1

watch: 1

movies: 2

also: 0

football: 0

games: 0

Mary: 1

too: 1

So the vector for “John likes to watch movies. Mary likes movies too.” is [1, 2, 1, 1, 2, 0, 0, 0, 1, 1] (1 means the word occurs once in the sentence, 2 means twice, 0 means not at all). The second sentence works the same way, and the two sentence vectors are:

1. John likes to watch movies. Mary likes movies too. -> [1, 2, 1, 1, 2, 0, 0, 0, 1, 1]

2. John also likes to watch football games. -> [1, 1, 1, 1, 0, 1, 1, 1, 0, 0]

The BOW vector of a sentence is then fed into a DNN to get a prediction, which is compared with the label.
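The counting described above can be sketched with `collections.Counter`. The tokenizer here is a deliberately naive assumption (drop periods, split on whitespace), and `bow_vector` is a hypothetical helper name.

```python
from collections import Counter

vocab = ["John", "likes", "to", "watch", "movies",
         "also", "football", "games", "Mary", "too"]


def tokenize(sentence):
    """Naive tokenizer for this example: drop periods, split on whitespace."""
    return sentence.replace(".", " ").split()


def bow_vector(sentence, vocab):
    """Count how often each vocabulary word occurs in the sentence."""
    counts = Counter(tokenize(sentence))
    return [counts[word] for word in vocab]


s1 = "John likes to watch movies. Mary likes movies too."
s2 = "John also likes to watch football games."
v1 = bow_vector(s1, vocab)
v2 = bow_vector(s2, vocab)
print(v1)  # [1, 2, 1, 1, 2, 0, 0, 0, 1, 1]
print(v2)  # [1, 1, 1, 1, 0, 1, 1, 1, 0, 0]
```

A `Counter` returns 0 for missing keys, so words that do not occur need no special handling.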

For details, see the article BoW(词袋)模型详细介绍.

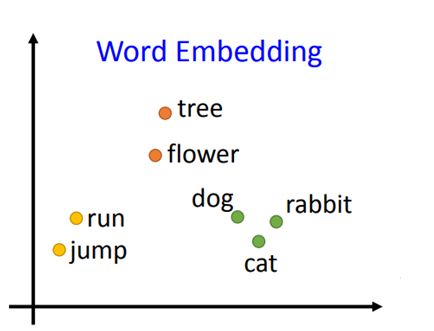

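word embedding

Word embedding, also called word vectorization (word to vector), turns words into vectors. There are two ways to train a word embedding:

- Pretrain it with a method such as skip-gram or CBOW. In this assignment, pretraining may only use the data in the provided .txt files.

- Make it part of the model (an embedding layer) and train it together with the rest of the model.

For details, see the article Unsupervised Learning: Word Embedding.

Semi-supervised Learning

In machine learning, labeled data is probably the most valuable resource. Unlabeled data is easy to get, for example by crawling text from the web, but labeled data has to be annotated by hand, which is expensive.

Put simply, semi-supervised learning trains on a portion of labeled data (usually small) together with a portion of unlabeled data (usually large).

There are many semi-supervised methods. The easiest to understand and to implement is Self-Training: use the trained model to predict on the unlabeled data, treat the predictions as labels, and add the newly labeled data to the training set. More trustworthy data can be obtained by tuning a threshold or by sampling several times.

For example, at test time data with prediction > 0.5 is labeled 1 and data with prediction < 0.5 is labeled 0 (for prediction = 0.5, decide in advance whether it is 0 or 1 and stay consistent). In Self-Training you can set pos_threshold = 0.8, meaning that only data with prediction > 0.8 is labeled 1 and added to the training set, while data with 0.5 < prediction < 0.8 remains unlabeled.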
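The thresholding rule can be sketched as below. Only `pos_threshold` comes from the text; the symmetric negative cut-off `1 - pos_threshold` and the function name `pseudo_label` are assumptions made for this illustration.

```python
def pseudo_label(predictions, pos_threshold=0.8):
    """Split predictions into confident pseudo-labels and still-unlabeled indices.

    Assumption: the negative cut-off mirrors the positive one (1 - pos_threshold).
    """
    neg_threshold = 1 - pos_threshold
    labeled, unlabeled = [], []
    for i, p in enumerate(predictions):
        if p > pos_threshold:
            labeled.append((i, 1))   # confident positive
        elif p < neg_threshold:
            labeled.append((i, 0))   # confident negative
        else:
            unlabeled.append(i)      # not confident enough, stays unlabeled
    return labeled, unlabeled


preds = [0.95, 0.65, 0.10, 0.50, 0.81]
labeled, unlabeled = pseudo_label(preds)
print(labeled)    # [(0, 1), (2, 0), (4, 1)]
print(unlabeled)  # [1, 3]
```

Raising the threshold trades quantity for reliability: fewer sentences get pseudo-labels, but the labels that are added are less likely to be wrong.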

Recurrent Neural Network

Imports

Depending on the versions of the Python libraries, some warnings may appear while the program runs. They do not affect the program, so we silence them for tidiness.

# this is for filtering the warnings

import warnings

warnings.filterwarnings('ignore')

Then import the libraries we need:

import torch

import numpy as np

import pandas as pd

import torch.optim as optim

import torch.nn.functional as F

import argparse

from gensim.models import Word2Vec

from torch.utils.data import DataLoader, Dataset

from torch import nn

from sklearn.model_selection import train_test_split

Loading data and the accuracy function

Create two functions for reading the data.

def load_training_data(path='training_label.txt'):
    # Read the training data.
    # 'training_label.txt' has labels to read; 'training_nolabel.txt' does not.
    with open(path, 'r', encoding='utf-8') as f:
        lines = f.readlines()
    # lines becomes a 2-D list: one entry per line, each split on spaces into tokens
    lines = [line.strip('\n').split(' ') for line in lines]
    if 'training_label' in path:
        # Token 0 is the label, token 1 is the separator +++$+++,
        # and the words of the sentence start at token 2.
        x = [line[2:] for line in lines]
        y = [line[0] for line in lines]
        return x, y
    return lines

def load_testing_data(path='testing_data.txt'):
    # Read the testing data.
    with open(path, 'r', encoding='utf-8') as f:
        lines = f.readlines()
    # Line 0 is the header; the data starts at line 1.
    # Column 0 is the id and column 1 is the text: split on commas and keep
    # everything after the first comma (commas inside the text are dropped by the join).
    X = ["".join(line.strip('\n').split(",")[1:]).strip() for line in lines[1:]]
    X = [sen.split(' ') for sen in X]
    return X

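On a system whose default encoding is GBK, opening the files without an explicit encoding raised this error for me:

UnicodeDecodeError: 'gbk' codec can't decode byte 0xab in position 1236: illegal multibyte sequence

The fix is to pass the encoding explicitly:

with open(path, 'r', encoding='utf-8') as f: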

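Next, write a function that compares the predictions with the labels to measure accuracy.

def evaluation(outputs, labels):
    # outputs: predicted probabilities (float)
    # labels: ground truth (0 or 1)
    # Returns the number of correct predictions in the batch, not a ratio.
    preds = (outputs >= 0.5).float()  # >= 0.5 is positive, < 0.5 is negative
    accuracy = torch.sum(torch.eq(preds, labels)).item()
    return accuracy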

Word embedding with word2vec

Turn every word in the training and testing data into a word vector, using word embedding.

Training word2vec in this code runs on the CPU and may take more than 10 minutes.

def train_word2vec(x):
    # Train the word2vec word embedding.
    # window: size of the sliding window; min_count: drop words that occur fewer than min_count times.
    # The keyword names follow the gensim 3.x API used in this post;
    # gensim 4 renamed size to vector_size and iter to epochs.
    model = Word2Vec(x, size=250, window=5, min_count=5, workers=12, iter=10, sg=1)
    return model

# Read the training data
print("loading training data ...")
train_x, y = load_training_data('training_label.txt')
train_x_no_label = load_training_data('training_nolabel.txt')

# Read the testing data
print("loading testing data ...")
test_x = load_testing_data('testing_data.txt')

# Turn the words into vectors
# model = train_word2vec(train_x + train_x_no_label + test_x) # w2v_all
model = train_word2vec(train_x + test_x) # w2v

# Save the vectors
print("saving model ...")
# model.save('w2v_all.model')
model.save('w2v.model')

This uses Gensim for word2vec. If you do not have gensim, install it with conda install gensim or pip install gensim.

Gensim is an open-source third-party Python toolkit for learning, without supervision, the latent topic-vector representation of raw unstructured text.

It supports several topic-model algorithms, including TF-IDF, LSA, LDA, and word2vec.

For details, see the official Gensim documentation (in English).

The API of the Word2Vec class (gensim 3.x signature) is:

class gensim.models.word2vec.Word2Vec(

sentences=None,

size=100,

alpha=0.025,

window=5,

min_count=5,

max_vocab_size=None,

sample=0.001,

seed=1,

workers=3,

min_alpha=0.0001,

sg=0,

hs=0,

negative=5,

cbow_mean=1,

hashfxn=<built-in function hash>,

iter=5,

null_word=0,

trim_rule=None,

sorted_vocab=1,

batch_words=10000,

compute_loss=False)

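Meaning of the parameters:

- size: dimensionality of the word vectors.

- alpha: initial learning rate of the model.

- window: maximum distance between the current word and the predicted word within a sentence.

- min_count: filtering threshold; words that occur fewer than min_count times are dropped. The default is 5.

- max_vocab_size: RAM limit while building the vocabulary. If the number of unique words exceeds this limit, the least frequent ones are pruned. Roughly 1 GB of RAM is needed for every ten million unique words. None means no limit.

- sample: threshold for randomly downsampling high-frequency words. The default is 1e-3 and the useful range is (0, 1e-5).

- seed: seed for the random number generator; it affects the initialization of the word vectors.

- workers: number of worker threads used for training.

- min_alpha: as training proceeds, alpha drops linearly to min_alpha.

- sg: selects the training algorithm. sg=0 trains with CBOW; sg=1 trains with skip-gram.

- hs: if 1, hierarchical softmax is used. If 0 (the default), negative sampling is used.

- negative: if greater than 0, negative sampling is used, and the value is the number of “noise words” to draw, usually 5 to 20 (default 5). If 0, negative sampling is not used.

- cbow_mean: if 0, the sum of the context word vectors is used; if 1 (the default), their mean is used. It only has an effect when CBOW is used.

- hashfxn: hash function used to initialize the weights; by default Python's built-in hash function.

- iter: number of training iterations; the default is 5.

- trim_rule: vocabulary trimming rule that decides which words are discarded and which are kept. If set to None (the default), min_count is used: a word is discarded when word count < min_count.

- sorted_vocab: if 1 (the default), words are sorted by descending frequency before word indices are assigned.

- batch_words: number of words passed to the worker threads per batch; the default is 10000.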
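The names `size` and `iter` above belong to gensim 3.x; gensim 4 renamed them to `vector_size` and `epochs`. If you are unsure which version is installed, one option is to pick the keyword names at run time. The sketch below is an illustration under that assumption; `word2vec_kwargs` is a hypothetical helper, and the values are the ones used in this post.

```python
def word2vec_kwargs(gensim_major_version, dim=250, n_iter=10):
    """Build Word2Vec keyword arguments with names that fit the gensim version."""
    kwargs = dict(window=5, min_count=5, workers=12, sg=1)
    if gensim_major_version >= 4:
        # gensim 4.x names
        kwargs.update(vector_size=dim, epochs=n_iter)
    else:
        # gensim 3.x names, as used in this post
        kwargs.update(size=dim, iter=n_iter)
    return kwargs


old_style = word2vec_kwargs(3)
new_style = word2vec_kwargs(4)
print(sorted(old_style))
print(sorted(new_style))

# Possible usage (requires gensim to be installed):
#   import gensim
#   major = int(gensim.__version__.split(".")[0])
#   model = gensim.models.Word2Vec(sentences, **word2vec_kwargs(major))
```

In gensim 4 the vocabulary lookup also changed: `model.wv.vocab` became `model.wv.key_to_index`, and vectors are read with `model.wv[word]` rather than `model[word]`.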

For usage, see Gensim 中 word2vec 函数的使用.

For a short introduction to the principles, see Word2vec原理浅析及gensim中word2vec使用 (luoxuexiong's blog on CSDN).

Data preprocessing

Preprocess the data.

class Preprocess():
    def __init__(self, sentences, sen_len, w2v_path):
        self.w2v_path = w2v_path    # path of the saved word2vec model
        self.sentences = sentences  # the sentences
        self.sen_len = sen_len      # fixed sentence length
        self.idx2word = []
        self.word2idx = {}
        self.embedding_matrix = []

    def get_w2v_model(self):
        # Load the word2vec model trained earlier
        self.embedding = Word2Vec.load(self.w2v_path)
        self.embedding_dim = self.embedding.vector_size

    def add_embedding(self, word):
        # word is only ever "<PAD>" or "<UNK>".
        # Use a randomly initialized vector as the embedding of "<PAD>" or "<UNK>".
        vector = torch.empty(1, self.embedding_dim)
        torch.nn.init.uniform_(vector)
        # Its index is the current size of word2idx, i.e. it goes last
        self.word2idx[word] = len(self.word2idx)
        self.idx2word.append(word)
        self.embedding_matrix = torch.cat([self.embedding_matrix, vector], 0)

    def make_embedding(self, load=True):
        print("Get embedding ...")
        # Get the trained word2vec word embedding
        if load:
            print("loading word to vec model ...")
            self.get_w2v_model()
        else:
            raise NotImplementedError
        # Walk over every word in the word2vec vocabulary
        # (wv.vocab is the gensim 3.x name; gensim 4 uses wv.key_to_index)
        for i, word in enumerate(self.embedding.wv.vocab):
            print('get words #{}'.format(i+1), end='\r')
            # A newly added word gets the current size of word2idx as its index
            self.word2idx[word] = len(self.word2idx)
            self.idx2word.append(word)
            self.embedding_matrix.append(self.embedding.wv[word])
        print('')
        # Turn embedding_matrix into a tensor
        self.embedding_matrix = torch.tensor(self.embedding_matrix)
        # Add "<PAD>" and "<UNK>" to the embedding
        self.add_embedding("<PAD>")
        self.add_embedding("<UNK>")
        print("total words: {}".format(len(self.embedding_matrix)))
        return self.embedding_matrix

    def pad_sequence(self, sentence):
        # Give every sentence the same length, sen_len
        if len(sentence) > self.sen_len:
            # Longer than sen_len: truncate
            sentence = sentence[:self.sen_len]
        else:
            # Shorter than sen_len: append "<PAD>" once for every missing word
            pad_len = self.sen_len - len(sentence)
            for _ in range(pad_len):
                sentence.append(self.word2idx["<PAD>"])
        assert len(sentence) == self.sen_len
        return sentence

    def sentence_word2idx(self):
        # Turn the words of every sentence into their indices
        sentence_list = []
        for i, sen in enumerate(self.sentences):
            print('sentence count #{}'.format(i+1), end='\r')
            sentence_idx = []
            for word in sen:
                if word in self.word2idx:
                    sentence_idx.append(self.word2idx[word])
                else:
                    # Words that are not in the dictionary are represented by "<UNK>"
                    sentence_idx.append(self.word2idx["<UNK>"])
            # Give every sentence the same length
            sentence_idx = self.pad_sequence(sentence_idx)
            sentence_list.append(sentence_idx)
        return torch.LongTensor(sentence_list)

    def labels_to_tensor(self, y):
        # Turn the labels into a tensor
        y = [int(label) for label in y]
        return torch.LongTensor(y)

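This defines a preprocessing class, Preprocess():

- w2v_path: path of the saved word2vec model

- sentences: the sentences

- sen_len: fixed sentence length

- idx2word is a list, for example: self.idx2word[1] = ‘he’

- word2idx is a dictionary that records each word's position in idx2word, for example: self.word2idx[‘he’] = 1

- embedding_matrix is a list that records the embedding vectors, for example: self.embedding_matrix[1] = the vector of ‘he’

For a sentence, embedding_matrix[word2idx[‘he’]] therefore gives the embedding vector of ‘he’.

Preprocess() is called as follows:

- Training:

preprocess = Preprocess(train_x, sen_len, w2v_path=w2v_path)

- Testing:

preprocess = Preprocess(test_x, sen_len, w2v_path=w2v_path)

Besides the words that appear in train_x and test_x, two more words (special tokens) are needed:

- "<PAD>": short for padding. When all sentences are brought to the same length, "<PAD>" fills the empty positions.

- "<UNK>": short for unknown. Any word that is not in the dictionary built from train_x and test_x (including words dropped by min_count) is represented by "<UNK>".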
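How the two special tokens are used can be shown on a toy dictionary. This is a simplified sketch of the same idea as `sentence_word2idx` and `pad_sequence`, not a replacement for them; the tiny `word2idx` and the function name `sentence_to_indices` are made up for the example.

```python
def sentence_to_indices(tokens, word2idx, sen_len):
    """Map tokens to indices, use <UNK> for unknown words, then truncate or pad."""
    unk, pad = word2idx["<UNK>"], word2idx["<PAD>"]
    idx = [word2idx.get(tok, unk) for tok in tokens][:sen_len]  # truncate if too long
    idx += [pad] * (sen_len - len(idx))                         # pad if too short
    return idx


# Toy dictionary; the special tokens come last, as in Preprocess.make_embedding.
word2idx = {"i": 0, "love": 1, "dogs": 2, "<PAD>": 3, "<UNK>": 4}

short = sentence_to_indices(["i", "love", "cats"], word2idx, sen_len=5)
long_ = sentence_to_indices(["i", "love", "dogs", "i", "love", "dogs"], word2idx, sen_len=5)
print(short)  # [0, 1, 4, 3, 3]
print(long_)  # [0, 1, 2, 0, 1]
```

"cats" is not in the dictionary, so it becomes the `<UNK>` index 4, and the two missing positions are filled with the `<PAD>` index 3.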

Defining the Dataset

Since we are using PyTorch, we can wrap the data with Dataset and DataLoader from torch.utils.data, which makes the later training and testing more convenient.

class TwitterDataset(Dataset):
    """
    Expected data shape like:(data_num, data_len)
    Data can be a list of numpy array or a list of lists
    input data shape : (data_num, seq_len, feature_dim)
    __len__ will return the number of data
    """
    def __init__(self, X, y):
        self.data = X
        self.label = y

    def __getitem__(self, idx):
        if self.label is None:
            return self.data[idx]
        return self.data[idx], self.label[idx]

    def __len__(self):
        return len(self.data)

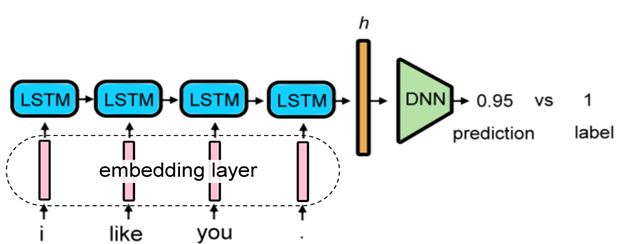

Defining the LSTM

Define an LSTM model (Long Short-Term Memory). LSTM works better than a plain RNN because it mitigates the vanishing-gradient problem, so nowadays "RNN" usually means LSTM.

For details, see the article Recurrent Neural Network part2.

A sentence is fed into the LSTM and turned into an output vector, and that output is fed into a classifier for binary classification.

class LSTM_Net(nn.Module):
    def __init__(self, embedding, embedding_dim, hidden_dim, num_layers, dropout=0.5, fix_embedding=True):
        super(LSTM_Net, self).__init__()
        # embedding layer
        self.embedding = torch.nn.Embedding(embedding.size(0), embedding.size(1))
        self.embedding.weight = torch.nn.Parameter(embedding)
        # Whether to freeze the embedding. If fix_embedding is False, the embedding is trained along with the model
        self.embedding.weight.requires_grad = not fix_embedding
        self.embedding_dim = embedding.size(1)
        self.hidden_dim = hidden_dim
        self.num_layers = num_layers
        self.dropout = dropout
        self.lstm = nn.LSTM(embedding_dim, hidden_dim, num_layers=num_layers, batch_first=True)
        self.classifier = nn.Sequential(
            nn.Dropout(dropout),
            nn.Linear(hidden_dim, 1),
            nn.Sigmoid()
        )

    def forward(self, inputs):
        inputs = self.embedding(inputs)
        x, _ = self.lstm(inputs, None)
        # x has shape (batch, seq_len, hidden_size)
        # Feed the top layer's output at the last time step into the classifier
        x = x[:, -1, :]
        x = self.classifier(x)
        return x

training

Wrap training and validation in one function.

def training(batch_size, n_epoch, lr, train, valid, model, device):
    # Print the total and the trainable number of parameters
    total = sum(p.numel() for p in model.parameters())
    trainable = sum(p.numel() for p in model.parameters() if p.requires_grad)
    print('\nstart training, parameter total:{}, trainable:{}\n'.format(total, trainable))
    loss = nn.BCELoss()   # binary cross entropy loss
    t_batch = len(train)  # number of batches in the training loader
    v_batch = len(valid)  # number of batches in the validation loader
    optimizer = optim.Adam(model.parameters(), lr=lr)  # Adam optimizer with a suitable learning rate
    total_loss, total_acc, best_acc = 0, 0, 0
    for epoch in range(n_epoch):
        total_loss, total_acc = 0, 0
        # training
        model.train()  # train mode: dropout is active
        for i, (inputs, labels) in enumerate(train):
            inputs = inputs.to(device, dtype=torch.long)   # move to the device as a LongTensor
            labels = labels.to(device, dtype=torch.float)  # move to the device as a FloatTensor; loss() needs float
            optimizer.zero_grad()  # gradients accumulate across backward() calls, so reset them for every batch
            outputs = model(inputs)
            outputs = outputs.squeeze(-1)  # (batch, 1) -> (batch,), so outputs matches labels in loss()
            batch_loss = loss(outputs, labels)  # training loss of this batch
            batch_loss.backward()  # compute the gradients of the loss
            optimizer.step()       # update the model parameters
            accuracy = evaluation(outputs, labels)  # number of correct predictions in this batch
            total_acc += (accuracy / batch_size)
            total_loss += batch_loss.item()
        print('Epoch | {}/{}'.format(epoch+1, n_epoch))
        print('Train | Loss:{:.5f} Acc: {:.3f}'.format(total_loss/t_batch, total_acc/t_batch*100))

        # validation
        model.eval()  # eval mode: dropout is disabled
        with torch.no_grad():  # no gradients are computed, so no parameters change
            total_loss, total_acc = 0, 0
            for i, (inputs, labels) in enumerate(valid):
                inputs = inputs.to(device, dtype=torch.long)
                labels = labels.to(device, dtype=torch.float)
                outputs = model(inputs)
                outputs = outputs.squeeze(-1)
                batch_loss = loss(outputs, labels)  # validation loss of this batch
                accuracy = evaluation(outputs, labels)  # number of correct validation predictions in this batch
                total_acc += (accuracy / batch_size)
                total_loss += batch_loss.item()
            print("Valid | Loss:{:.5f} Acc: {:.3f} ".format(total_loss/v_batch, total_acc/v_batch*100))
            if total_acc > best_acc:
                # If the validation result beats all previous ones, save the current model for testing later
                best_acc = total_acc
                torch.save(model, "ckpt.model")
        print('***************************************')
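One detail of the accuracy bookkeeping is worth seeing in numbers: the loop divides every batch's correct count by `batch_size` and then averages over batches, which slightly under-reports accuracy when the last batch is smaller than `batch_size`. The sketch below compares that with a sample-weighted accuracy; both function names and the batch sizes are made up for the illustration.

```python
def mean_of_batch_ratios(correct_per_batch, batch_size):
    """Mirrors the loop above: sum(correct / batch_size) / number_of_batches."""
    total = sum(c / batch_size for c in correct_per_batch)
    return total / len(correct_per_batch)


def sample_weighted_accuracy(correct_per_batch, sizes):
    """Total correct predictions divided by total number of samples."""
    return sum(correct_per_batch) / sum(sizes)


# 300 samples with batch_size 128: the last batch holds only 44 samples.
sizes = [128, 128, 44]
correct = [128, 128, 44]  # every prediction is right

loop_style = mean_of_batch_ratios(correct, batch_size=128)
exact = sample_weighted_accuracy(correct, sizes)
print(loop_style)  # 0.78125
print(exact)       # 1.0
```

With many batches the gap is small, so the printed numbers in this post are still a reasonable guide; for exact figures, accumulate the correct counts and divide by the dataset size.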

The difference between nn.BCELoss and nn.CrossEntropyLoss:

When I first saw them I assumed they were about the same, but on reflection there had to be some difference, so I looked it up:

- nn.BCELoss needs a Sigmoid in front of it. The formula is:

$$loss(X_i, y_i) = -w_i\left[y_i \log x_i + (1 - y_i)\log(1 - x_i)\right]$$

- nn.CrossEntropyLoss applies a Softmax layer automatically. The formula is:

$$loss(X_i, y_i) = -w_{label}\log\frac{e^{x_{label}}}{\sum_{j=1}^{N} e^{x_j}}$$

As the formulas show, the two losses use different activation functions, which is why their final expressions differ.
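The two formulas are closely related in the binary case: a sigmoid over one score z gives the same loss as a softmax over the two scores [0, z]. The sketch below checks this numerically with plain Python, with all weights set to 1; it illustrates the formulas and is not PyTorch's implementation.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def bce(p, y):
    """Binary cross entropy for one probability p and one label y (weight 1)."""
    return -(y * math.log(p) + (1 - y) * math.log(1 - p))


def softmax_ce(scores, label):
    """Cross entropy of softmax(scores) against the class index label (weight 1)."""
    log_sum = math.log(sum(math.exp(s) for s in scores))
    return -(scores[label] - log_sum)


z = 1.3  # an arbitrary raw score for the positive class
results = []
for y in (0, 1):
    a = bce(sigmoid(z), y)
    b = softmax_ce([0.0, z], y)
    results.append((a, b))
    print(y, round(a, 6), round(b, 6))
```

So the model in this post could also end in two outputs without a Sigmoid and be trained with nn.CrossEntropyLoss; the single-output Sigmoid plus nn.BCELoss design is simply the more compact choice for two classes.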

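Call the Preprocess() and training() defined above to train the model.

Notes on train_test_split():

- test_size: the proportion of the samples held out for the second split (here, the validation set).

- random_state: the random seed. If it is left out (None), the split differs on every run. With any fixed integer, including 0, the split is the same on every run.

- stratify: keeps the class distribution of the data in both splits. It is mostly used when the classes are imbalanced.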

# Use torch.cuda.is_available() to check for a GPU: device is "cuda" if one is available, otherwise "cpu"
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

# Sentence length, whether to freeze the embedding, batch size, number of epochs, learning rate, path of the w2v model
sen_len = 20
fix_embedding = True # fix embedding during training
batch_size = 128
epoch = 10
lr = 0.001
w2v_path = 'w2v.model'  # the model saved by the word2vec step above

print("loading data ...") # read 'training_label.txt' and 'training_nolabel.txt'
train_x, y = load_training_data('training_label.txt')
train_x_no_label = load_training_data('training_nolabel.txt')

# Preprocess the inputs and the labels
preprocess = Preprocess(train_x, sen_len, w2v_path=w2v_path)
embedding = preprocess.make_embedding(load=True)
train_x = preprocess.sentence_word2idx()
y = preprocess.labels_to_tensor(y)

# Define the model
model = LSTM_Net(embedding, embedding_dim=250, hidden_dim=150, num_layers=1, dropout=0.5, fix_embedding=fix_embedding)
model = model.to(device) # with device "cuda" the model trains on the GPU (the inputs must be cuda tensors too)

# Split the data into training data and validation data (part of the training data serves as validation data)
X_train, X_val, y_train, y_val = train_test_split(train_x, y, test_size = 0.1, random_state = 1, stratify = y)
print('Train | Len:{} \nValid | Len:{}'.format(len(y_train), len(y_val)))

# Wrap the data in datasets for the dataloaders
train_dataset = TwitterDataset(X=X_train, y=y_train)
val_dataset = TwitterDataset(X=X_val, y=y_val)

# Turn the data into batches of tensors
train_loader = DataLoader(train_dataset, batch_size = batch_size, shuffle = True, num_workers = 0)
val_loader = DataLoader(val_dataset, batch_size = batch_size, shuffle = False, num_workers = 0)

# Start training
training(batch_size, epoch, lr, train_loader, val_loader, model, device)

testing

Wrap testing in a function.

def testing(batch_size, test_loader, model, device):
    model.eval()     # eval mode: dropout is disabled
    ret_output = []  # predictions to return
    with torch.no_grad():
        for i, inputs in enumerate(test_loader):
            inputs = inputs.to(device, dtype=torch.long)
            outputs = model(inputs)
            outputs = outputs.squeeze(-1)  # (batch, 1) -> (batch,)
            outputs[outputs>=0.5] = 1 # >= 0.5 is positive
            outputs[outputs<0.5] = 0 # < 0.5 is negative
            ret_output += outputs.int().tolist()
    return ret_output

Call testing() to predict. The predictions are saved as predict.csv, about 1.6M.

# Test the model and make predictions
# Read the testing data test_x
print("loading testing data ...")
test_x = load_testing_data('testing_data.txt')

# Preprocess test_x
preprocess = Preprocess(test_x, sen_len, w2v_path=w2v_path)
embedding = preprocess.make_embedding(load=True)
test_x = preprocess.sentence_word2idx()
test_dataset = TwitterDataset(X=test_x, y=None)
test_loader = DataLoader(test_dataset, batch_size = batch_size, shuffle = False, num_workers = 0)

# Load the model
print('\nload model ...')
model = torch.load('ckpt.model')

# Test the model
outputs = testing(batch_size, test_loader, model, device)

# Save as csv
tmp = pd.DataFrame({"id": [str(i) for i in range(len(test_x))], "label": outputs})
print("save csv ...")
tmp.to_csv('predict.csv', index=False)
print("Finish Predicting")

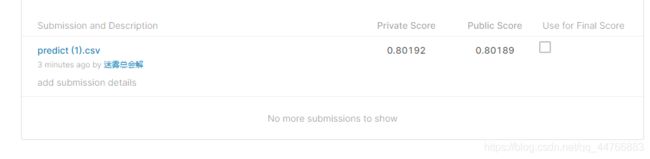

Results

Upload the predictions to Kaggle to see the score.

Modifying the code

Adding the unlabeled data

The code above trains word2vec only on the train_x + test_x corpus. Below, the dictionary is built from the train_x + train_x_no_label + test_x corpus instead, which gives new word embedding vectors.

Change the original code

# Turn the words into vectors
# model = train_word2vec(train_x + train_x_no_label + test_x) # w2v_all
model = train_word2vec(train_x + test_x) # w2v

# Save the vectors
print("saving model ...")
# model.save('w2v_all.model')
model.save('w2v.model')

to:

# Turn the words into vectors
model = train_word2vec(train_x + train_x_no_label + test_x) # w2v_all
# model = train_word2vec(train_x + test_x) # w2v

# Save the vectors
print("saving model ...")
model.save('w2v_all.model')
# model.save('w2v.model')

Also change w2v_path = 'w2v.model' to w2v_path = 'w2v_all.model'.

Increase the number of training epochs.

The complete code is as follows:

# utils.py

# 用来定义一些之后常用到的函数

import torch

import numpy as np

import pandas as pd

import torch.optim as optim

import torch.nn.functional as F

def load_training_data(path='training_label.txt'):

# 读取 training 需要的数据

# 如果是 'training_label.txt',需要读取 label,如果是 'training_nolabel.txt',不需要读取 label

if 'training_label' in path:

with open(path, 'r') as f:

lines = f.readlines()

# lines是二维数组,第一维是行line(按回车分割),第二维是每行的单词(按空格分割)

lines = [line.strip('\n').split(' ') for line in lines]

# 每行按空格分割后,第2个符号之后都是句子的单词

x = [line[2:] for line in lines]

# 每行按空格分割后,第0个符号是label

y = [line[0] for line in lines]

return x, y

else:

with open(path, 'r') as f:

lines = f.readlines()

# lines是二维数组,第一维是行line(按回车分割),第二维是每行的单词(按空格分割)

x = [line.strip('\n').split(' ') for line in lines]

return x

def load_testing_data(path='testing_data.txt'):

# 读取 testing 需要的数据

with open(path, 'r') as f:

lines = f.readlines()

# 第0行是表头,从第1行开始是数据

# 第0列是id,第1列是文本,按逗号分割,需要逗号之后的文本

X = ["".join(line.strip('\n').split(",")[1:]).strip() for line in lines[1:]]

X = [sen.split(' ') for sen in X]

return X

def evaluation(outputs, labels):

# outputs => 预测值,概率(float)

# labels => 真实值,标签(0或1)

outputs[outputs>=0.5] = 1 # 大于等于 0.5 为正面

outputs[outputs<0.5] = 0 # 小于 0.5 为负面

accuracy = torch.sum(torch.eq(outputs, labels)).item()

return accuracy

from gensim.models import Word2Vec

def train_word2vec(x):

# 训练 word to vector 的 word embedding

# window:滑动窗口的大小,min_count:过滤掉语料中出现频率小于min_count的词

model = Word2Vec(x, size=250, window=5, min_count=5, workers=12, iter=10, sg=1)

return model

# 读取 training 数据

print("loading training data ...")

train_x, y = load_training_data('training_label.txt')

train_x_no_label = load_training_data('training_nolabel.txt')

# 读取 testing 数据

print("loading testing data ...")

test_x = load_testing_data('testing_data.txt')

# 把 training 中的 word 变成 vector

model = train_word2vec(train_x + train_x_no_label + test_x) # w2v_all

# model = train_word2vec(train_x + test_x) # w2v

# 保存 vector

print("saving model ...")

model.save('w2v_all.model')

# model.save('w2v.model')

# 数据预处理

class Preprocess():

def __init__(self, sentences, sen_len, w2v_path):

self.w2v_path = w2v_path # word2vec的存储路径

self.sentences = sentences # 句子

self.sen_len = sen_len # 句子的固定长度

self.idx2word = []

self.word2idx = {

}

self.embedding_matrix = []

def get_w2v_model(self):

# 读取之前训练好的 word2vec

self.embedding = Word2Vec.load(self.w2v_path)

self.embedding_dim = self.embedding.vector_size

def add_embedding(self, word):

# word is only ever "<PAD>" or "<UNK>"

# Use a randomly initialized vector as the embedding of "<PAD>" or "<UNK>"

vector = torch.empty(1, self.embedding_dim)

torch.nn.init.uniform_(vector)

# 它的 index 是 word2idx 这个词典的长度,即最后一个

self.word2idx[word] = len(self.word2idx)

self.idx2word.append(word)

self.embedding_matrix = torch.cat([self.embedding_matrix, vector], 0)

def make_embedding(self, load=True):

print("Get embedding ...")

# 获取训练好的 Word2vec word embedding

if load:

print("loading word to vec model ...")

self.get_w2v_model()

else:

raise NotImplementedError

# 遍历嵌入后的单词

for i, word in enumerate(self.embedding.wv.vocab):

print('get words #{}'.format(i+1), end='\r')

# 新加入的 word 的 index 是 word2idx 这个词典的长度,即最后一个

self.word2idx[word] = len(self.word2idx)

self.idx2word.append(word)

self.embedding_matrix.append(self.embedding[word])

print('')

# 把 embedding_matrix 变成 tensor

self.embedding_matrix = torch.tensor(self.embedding_matrix)

# Add "<PAD>" and "<UNK>" to the embedding

self.add_embedding("<PAD>")

self.add_embedding("<UNK>")

print("total words: {}".format(len(self.embedding_matrix)))

return self.embedding_matrix

def pad_sequence(self, sentence):

# 将每个句子变成一样的长度,即 sen_len 的长度

if len(sentence) > self.sen_len:

# 如果句子长度大于 sen_len 的长度,就截断

sentence = sentence[:self.sen_len]

else:

# If the sentence is shorter than sen_len, append "<PAD>" once for every missing word

pad_len = self.sen_len - len(sentence)

for _ in range(pad_len):

sentence.append(self.word2idx["<PAD>"])

assert len(sentence) == self.sen_len

return sentence

def sentence_word2idx(self):

# 把句子里面的字变成相对应的 index

sentence_list = []

for i, sen in enumerate(self.sentences):

print('sentence count #{}'.format(i+1), end='\r')

sentence_idx = []

for word in sen:

if (word in self.word2idx.keys()):

sentence_idx.append(self.word2idx[word])

else:

# Words that are not in the dictionary are represented by "<UNK>"

sentence_idx.append(self.word2idx["<UNK>"])

# 将每个句子变成一样的长度

sentence_idx = self.pad_sequence(sentence_idx)

sentence_list.append(sentence_idx)

return torch.LongTensor(sentence_list)

def labels_to_tensor(self, y):

# 把 labels 转成 tensor

y = [int(label) for label in y]

return torch.LongTensor(y)

from torch.utils.data import DataLoader, Dataset

class TwitterDataset(Dataset):

"""

Expected data shape like:(data_num, data_len)

Data can be a list of numpy array or a list of lists

input data shape : (data_num, seq_len, feature_dim)

__len__ will return the number of data

"""

def __init__(self, X, y):

self.data = X

self.label = y

def __getitem__(self, idx):

if self.label is None: return self.data[idx]

return self.data[idx], self.label[idx]

def __len__(self):

return len(self.data)

from torch import nn

class LSTM_Net(nn.Module):

def __init__(self, embedding, embedding_dim, hidden_dim, num_layers, dropout=0.5, fix_embedding=True):

super(LSTM_Net, self).__init__()

# embedding layer

self.embedding = torch.nn.Embedding(embedding.size(0),embedding.size(1))

self.embedding.weight = torch.nn.Parameter(embedding)

# 是否将 embedding 固定住,如果 fix_embedding 为 False,在训练过程中,embedding 也会跟着被训练

self.embedding.weight.requires_grad = False if fix_embedding else True

self.embedding_dim = embedding.size(1)

self.hidden_dim = hidden_dim

self.num_layers = num_layers

self.dropout = dropout

self.lstm = nn.LSTM(embedding_dim, hidden_dim, num_layers=num_layers, batch_first=True)

self.classifier = nn.Sequential( nn.Dropout(dropout),

nn.Linear(hidden_dim, 1),

nn.Sigmoid() )

def forward(self, inputs):

inputs = self.embedding(inputs)

x, _ = self.lstm(inputs, None)

# x 的 dimension (batch, seq_len, hidden_size)

# 取用 LSTM 最后一层的 hidden state 丢到分类器中

x = x[:, -1, :]

x = self.classifier(x)

return x

def training(batch_size, n_epoch, lr, train, valid, model, device):

# 输出模型总的参数数量、可训练的参数数量

total = sum(p.numel() for p in model.parameters())

trainable = sum(p.numel() for p in model.parameters() if p.requires_grad)

print('\nstart training, parameter total:{}, trainable:{}\n'.format(total, trainable))

loss = nn.BCELoss() # 定义损失函数为二元交叉熵损失 binary cross entropy loss

t_batch = len(train) # training 数据的batch size大小

v_batch = len(valid) # validation 数据的batch size大小

optimizer = optim.Adam(model.parameters(), lr=lr) # optimizer用Adam,设置适当的学习率lr

total_loss, total_acc, best_acc = 0, 0, 0

for epoch in range(n_epoch):

total_loss, total_acc = 0, 0

# training

model.train() # 将 model 的模式设为 train,这样 optimizer 就可以更新 model 的参数

for i, (inputs, labels) in enumerate(train):

inputs = inputs.to(device, dtype=torch.long) # 因为 device 为 "cuda",将 inputs 转成 torch.cuda.LongTensor

labels = labels.to(device, dtype=torch.float) # 因为 device 为 "cuda",将 labels 转成 torch.cuda.FloatTensor,loss()需要float

optimizer.zero_grad() # 由于 loss.backward() 的 gradient 会累加,所以每一个 batch 后需要归零

outputs = model(inputs) # 模型输入Input,输出output

outputs = outputs.squeeze() # 去掉最外面的 dimension,好让 outputs 可以丢进 loss()

batch_loss = loss(outputs, labels) # 计算模型此时的 training loss

batch_loss.backward() # 计算 loss 的 gradient

optimizer.step() # 更新模型参数

accuracy = evaluation(outputs, labels) # 计算模型此时的 training accuracy

total_acc += (accuracy / batch_size)

total_loss += batch_loss.item()

print('Epoch | {}/{}'.format(epoch+1,n_epoch))

print('Train | Loss:{:.5f} Acc: {:.3f}'.format(total_loss/t_batch, total_acc/t_batch*100))

# validation

model.eval() # 将 model 的模式设为 eval,这样 model 的参数就会被固定住

with torch.no_grad():

total_loss, total_acc = 0, 0

for i, (inputs, labels) in enumerate(valid):

inputs = inputs.to(device, dtype=torch.long) # 因为 device 为 "cuda",将 inputs 转成 torch.cuda.LongTensor

labels = labels.to(device, dtype=torch.float) # 因为 device 为 "cuda",将 labels 转成 torch.cuda.FloatTensor,loss()需要float

outputs = model(inputs) # 模型输入Input,输出output

outputs = outputs.squeeze() # 去掉最外面的 dimension,好让 outputs 可以丢进 loss()

batch_loss = loss(outputs, labels) # 计算模型此时的 training loss

accuracy = evaluation(outputs, labels) # 计算模型此时的 training accuracy

total_acc += (accuracy / batch_size)

total_loss += batch_loss.item()

print("Valid | Loss:{:.5f} Acc: {:.3f} ".format(total_loss/v_batch, total_acc/v_batch*100))

if total_acc > best_acc:

# 如果 validation 的结果优于之前所有的結果,就把当下的模型保存下来,用于之后的testing

best_acc = total_acc

torch.save(model, "ckpt.model")

print('-----------------------------------------------')

def testing(batch_size, test_loader, model, device):

model.eval() # 将 model 的模式设为 eval,这样 model 的参数就会被固定住

ret_output = [] # 返回的output

with torch.no_grad():

for i, inputs in enumerate(test_loader):

inputs = inputs.to(device, dtype=torch.long)

outputs = model(inputs)

outputs = outputs.squeeze()

outputs[outputs>=0.5] = 1 # 大于等于0.5为正面

outputs[outputs<0.5] = 0 # 小于0.5为负面

ret_output += outputs.int().tolist()

return ret_output

from sklearn.model_selection import train_test_split

# 通过 torch.cuda.is_available() 的值判断是否可以使用 GPU ,如果可以的话 device 就设为 "cuda",没有的话就设为 "cpu"

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

# 定义句子长度、要不要固定 embedding、batch 大小、要训练几个 epoch、 学习率的值、 w2v的路径

sen_len = 20

fix_embedding = True # fix embedding during training

batch_size = 128

epoch = 10

lr = 0.001

w2v_path = 'w2v_all.model'

print("loading data ...") # 读取 'training_label.txt' 'training_nolabel.txt'

train_x, y = load_training_data('training_label.txt')

train_x_no_label = load_training_data('training_nolabel.txt')

# 对 input 跟 labels 做预处理

preprocess = Preprocess(train_x, sen_len, w2v_path=w2v_path)

embedding = preprocess.make_embedding(load=True)

train_x = preprocess.sentence_word2idx()

y = preprocess.labels_to_tensor(y)

# 定义模型

model = LSTM_Net(embedding, embedding_dim=250, hidden_dim=150, num_layers=1, dropout=0.5, fix_embedding=fix_embedding)

model = model.to(device) # device为 "cuda",model 使用 GPU 来训练(inputs 也需要是 cuda tensor)

# 把 data 分为 training data 和 validation data(将一部分 training data 作为 validation data)

X_train, X_val, y_train, y_val = train_test_split(train_x, y, test_size = 0.1, random_state = 1, stratify = y)

print('Train | Len:{} \nValid | Len:{}'.format(len(y_train), len(y_val)))

# 把 data 做成 dataset 供 dataloader 取用

train_dataset = TwitterDataset(X=X_train, y=y_train)

val_dataset = TwitterDataset(X=X_val, y=y_val)

# 把 data 转成 batch of tensors

train_loader = DataLoader(train_dataset, batch_size = batch_size, shuffle = True, num_workers = 0) # shuffle the training data every epoch; set shuffle to False only when you need identical batches to compare models

val_loader = DataLoader(val_dataset, batch_size = batch_size, shuffle = False, num_workers = 0)

# 开始训练

training(batch_size, epoch, lr, train_loader, val_loader, model, device)

# 测试模型并作预测

# 读取测试数据test_x

print("loading testing data ...")

test_x = load_testing_data('testing_data.txt')

# 对test_x作预处理

preprocess = Preprocess(test_x, sen_len, w2v_path=w2v_path)

embedding = preprocess.make_embedding(load=True)

test_x = preprocess.sentence_word2idx()

test_dataset = TwitterDataset(X=test_x, y=None)

test_loader = DataLoader(test_dataset, batch_size = batch_size, shuffle = False, num_workers = 0)

# 读取模型

print('\nload model ...')

model = torch.load('ckpt.model')

# 测试模型

outputs = testing(batch_size, test_loader, model, device)

# 保存为 csv

tmp = pd.DataFrame({

"id":[str(i) for i in range(len(test_x))],"label":outputs})

print("save csv ...")

tmp.to_csv('predict.csv', index=False)

print("Finish Predicting")

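Self-training

The main addition is the function add_label(), which assigns pseudo-labels to the unlabeled samples that the model predicts with high confidence:

def add_label(outputs, threshold=0.9):
    # boolean mask of the samples that are confident enough to receive a pseudo-label
    id = (outputs>=threshold) | (outputs<1-threshold)
    outputs[outputs>=threshold] = 1 # at or above threshold: positive
    outputs[outputs<1-threshold] = 0 # below 1 - threshold: negative
    return outputs.long(), id

A self-training step is also added to the training() function.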
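To make the thresholding rule concrete, here is a minimal pure-Python sketch of the same selection logic. The helper name select_pseudo_labels and the sample probabilities are made up for illustration; the post itself works on PyTorch tensors through add_label().

```python
def select_pseudo_labels(probs, threshold=0.9):
    """Split predicted probabilities into confident pseudo-labels and the rest.

    probs: list of floats in [0, 1] (hypothetical model outputs).
    Returns (confident, remaining): confident is a list of (index, label)
    pairs, remaining is the list of indices that stay unlabeled.
    """
    confident, remaining = [], []
    for i, p in enumerate(probs):
        if p >= threshold:
            confident.append((i, 1))   # confidently positive
        elif p < 1 - threshold:
            confident.append((i, 0))   # confidently negative
        else:
            remaining.append(i)        # too uncertain, keep for a later epoch
    return confident, remaining


probs = [0.97, 0.55, 0.03, 0.40, 0.90]
confident, remaining = select_pseudo_labels(probs)
print(confident)   # samples that would be moved into the training set
print(remaining)   # samples that stay in the unlabeled pool
```

A higher threshold adds fewer but cleaner pseudo-labels; a lower one adds more samples at the risk of reinforcing the model's own mistakes.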

In addition, the classifier part of the model is changed to two fully connected layers:

self.classifier = nn.Sequential(nn.Dropout(dropout),
                                nn.Linear(hidden_dim, 64),
                                nn.Dropout(dropout),
                                nn.Linear(64, 1),
                                nn.Sigmoid())

The complete code is as follows:

# 设置后可以过滤一些无用的warning

import warnings

warnings.filterwarnings('ignore')

# utils.py

# 用来定义一些之后常用到的函数

import torch

import numpy as np

import pandas as pd

import torch.optim as optim

import torch.nn.functional as F

def load_training_data(path='training_label.txt'):

# 读取 training 需要的数据

# 如果是 'training_label.txt',需要读取 label,如果是 'training_nolabel.txt',不需要读取 label

if 'training_label' in path:

with open(path, 'r') as f:

lines = f.readlines()

# lines是二维数组,第一维是行line(按回车分割),第二维是每行的单词(按空格分割)

lines = [line.strip('\n').split(' ') for line in lines]

# 每行按空格分割后,第2个符号之后都是句子的单词

x = [line[2:] for line in lines]

# 每行按空格分割后,第0个符号是label

y = [line[0] for line in lines]

return x, y

else:

with open(path, 'r') as f:

lines = f.readlines()

# lines是二维数组,第一维是行line(按回车分割),第二维是每行的单词(按空格分割)

x = [line.strip('\n').split(' ') for line in lines]

return x

def load_testing_data(path='testing_data.txt'):
    # read the data needed for testing
    with open(path, 'r') as f:
        lines = f.readlines()
    # line 0 is the header; the data starts at line 1
    # column 0 is the id and column 1 is the text; cut at the first comma only, so that commas inside the tweet are kept as tokens
    X = [line.strip('\n').partition(",")[2].strip() for line in lines[1:]]
    X = [sen.split(' ') for sen in X]
    return X

def evaluation(outputs, labels):

# outputs => 预测值,概率(float)

# labels => 真实值,标签(0或1)

outputs[outputs>=0.5] = 1 # 大于等于 0.5 为正面

outputs[outputs<0.5] = 0 # 小于 0.5 为负面

accuracy = torch.sum(torch.eq(outputs, labels)).item()

return accuracy

from gensim.models import Word2Vec

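def train_word2vec(x):
    # train the word2vec word embedding
    # window: size of the sliding window; min_count: drop words that occur fewer than min_count times in the corpus
    # note: size and iter are the gensim 3.x argument names; gensim 4.x renamed them to vector_size and epochs
    model = Word2Vec(x, size=256, window=5, min_count=5, workers=12, iter=10, sg=1)
    return model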

# 读取 training 数据

print("loading training data ...")

train_x, y = load_training_data('training_label.txt')

train_x_no_label = load_training_data('training_nolabel.txt')

# 读取 testing 数据

print("loading testing data ...")

test_x = load_testing_data('testing_data.txt')

# 把 training 中的 word 变成 vector

model = train_word2vec(train_x + train_x_no_label + test_x) # w2v_all

# model = train_word2vec(train_x + test_x) # w2v

# 保存 vector

print("saving model ...")

model.save('w2v_all.model')

# model.save('w2v.model')

# 数据预处理

class Preprocess():

def __init__(self, sen_len, w2v_path):

self.w2v_path = w2v_path # word2vec的存储路径

self.sen_len = sen_len # 句子的固定长度

self.idx2word = []

self.word2idx = {

}

self.embedding_matrix = []

def get_w2v_model(self):

# 读取之前训练好的 word2vec

self.embedding = Word2Vec.load(self.w2v_path)

self.embedding_dim = self.embedding.vector_size

def add_embedding(self, word):

# word is always "<PAD>" or "<UNK>"

# use a randomly initialised vector as the embedding of "<PAD>" or "<UNK>"

vector = torch.empty(1, self.embedding_dim)

torch.nn.init.uniform_(vector)

# 它的 index 是 word2idx 这个词典的长度,即最后一个

self.word2idx[word] = len(self.word2idx)

self.idx2word.append(word)

self.embedding_matrix = torch.cat([self.embedding_matrix, vector], 0)

def make_embedding(self, load=True):

print("Get embedding ...")

# 获取训练好的 Word2vec word embedding

if load:

print("loading word to vec model ...")

self.get_w2v_model()

else:

raise NotImplementedError

# 遍历嵌入后的单词

for i, word in enumerate(self.embedding.wv.vocab):

print('get words #{}'.format(i+1), end='\r')

# 新加入的 word 的 index 是 word2idx 这个词典的长度,即最后一个

self.word2idx[word] = len(self.word2idx)

self.idx2word.append(word)

self.embedding_matrix.append(self.embedding.wv[word])

print('')

# 把 embedding_matrix 变成 tensor

self.embedding_matrix = torch.tensor(self.embedding_matrix)

# add "<PAD>" and "<UNK>" to the embedding

self.add_embedding("<PAD>")

self.add_embedding("<UNK>")

print("total words: {}".format(len(self.embedding_matrix)))

return self.embedding_matrix

def pad_sequence(self, sentence):

# 将每个句子变成一样的长度,即 sen_len 的长度

if len(sentence) > self.sen_len:

# 如果句子长度大于 sen_len 的长度,就截断

sentence = sentence[:self.sen_len]

else:

# if the sentence is shorter than sen_len, append "<PAD>" until it reaches sen_len

pad_len = self.sen_len - len(sentence)

for _ in range(pad_len):

sentence.append(self.word2idx["<PAD>"])

assert len(sentence) == self.sen_len

return sentence

def sentence_word2idx(self, sentences):

# 把句子里面的字变成相对应的 index

sentence_list = []

for i, sen in enumerate(sentences):

print('sentence count #{}'.format(i+1), end='\r')

sentence_idx = []

for word in sen:

if (word in self.word2idx.keys()):

sentence_idx.append(self.word2idx[word])

else:

# words that are not in the vocabulary are mapped to "<UNK>"

sentence_idx.append(self.word2idx["<UNK>"])

# 将每个句子变成一样的长度

sentence_idx = self.pad_sequence(sentence_idx)

sentence_list.append(sentence_idx)

return torch.LongTensor(sentence_list)

def labels_to_tensor(self, y):

# 把 labels 转成 tensor

y = [int(label) for label in y]

return torch.LongTensor(y)

def get_pad(self):

return self.word2idx["<PAD>"]

from torch.utils.data import DataLoader, Dataset

class TwitterDataset(Dataset):

"""

Expected data shape like:(data_num, data_len)

Data can be a list of numpy array or a list of lists

input data shape : (data_num, seq_len, feature_dim)

__len__ will return the number of data

"""

def __init__(self, X, y):

self.data = X

self.label = y

def __getitem__(self, idx):

if self.label is None: return self.data[idx]

return self.data[idx], self.label[idx]

def __len__(self):

return len(self.data)

from torch import nn

class LSTM_Net(nn.Module):

def __init__(self, embedding, embedding_dim, hidden_dim, num_layers, dropout=0.5, fix_embedding=True):

super(LSTM_Net, self).__init__()

# embedding layer

self.embedding = torch.nn.Embedding(embedding.size(0),embedding.size(1))

self.embedding.weight = torch.nn.Parameter(embedding)

# 是否将 embedding 固定住,如果 fix_embedding 为 False,在训练过程中,embedding 也会跟着被训练

self.embedding.weight.requires_grad = False if fix_embedding else True

self.embedding_dim = embedding.size(1)

self.hidden_dim = hidden_dim

self.num_layers = num_layers

self.dropout = dropout

self.lstm = nn.LSTM(embedding_dim, hidden_dim, num_layers=num_layers, batch_first=True)

self.classifier = nn.Sequential( nn.Dropout(dropout),

nn.Linear(hidden_dim, 64),

nn.Dropout(dropout),

nn.Linear(64, 1),

nn.Sigmoid() )

def forward(self, inputs):

inputs = self.embedding(inputs)

x, _ = self.lstm(inputs, None)

# x 的 dimension (batch, seq_len, hidden_size)

# 取用 LSTM 最后一层的 hidden state 丢到分类器中

x = x[:, -1, :]

x = self.classifier(x)

return x

def add_label(outputs, threshold=0.9):

id = (outputs>=threshold) | (outputs<1-threshold)

outputs[outputs>=threshold] = 1 # 大于等于 threshold 为正面

outputs[outputs<1-threshold] = 0 # below 1 - threshold: negative

return outputs.long(), id

def training(batch_size, n_epoch, lr, X_train, y_train, val_loader, train_x_no_label, model, device):

# 输出模型总的参数数量、可训练的参数数量

total = sum(p.numel() for p in model.parameters())

trainable = sum(p.numel() for p in model.parameters() if p.requires_grad)

print('\nstart training, parameter total:{}, trainable:{}\n'.format(total, trainable))

loss = nn.BCELoss() # 定义损失函数为二元交叉熵损失 binary cross entropy loss

optimizer = optim.Adam(model.parameters(), lr=lr) # optimizer用Adam,设置适当的学习率lr

total_loss, total_acc, best_acc = 0, 0, 0

for epoch in range(n_epoch):

print(X_train.shape)

train_dataset = TwitterDataset(X=X_train, y=y_train)

train_loader = DataLoader(train_dataset, batch_size = batch_size, shuffle = True, num_workers = 0)

total_loss, total_acc = 0, 0

# training

model.train() # switch the model to training mode so that layers such as dropout are active

for i, (inputs, labels) in enumerate(train_loader):

inputs = inputs.to(device, dtype=torch.long) # 因为 device 为 "cuda",将 inputs 转成 torch.cuda.LongTensor

labels = labels.to(device, dtype=torch.float) # 因为 device 为 "cuda",将 labels 转成 torch.cuda.FloatTensor,loss()需要float

optimizer.zero_grad() # 由于 loss.backward() 的 gradient 会累加,所以每一个 batch 后需要归零

outputs = model(inputs) # 模型输入Input,输出output

outputs = outputs.squeeze() # 去掉最外面的 dimension,好让 outputs 可以丢进 loss()

batch_loss = loss(outputs, labels) # 计算模型此时的 training loss

batch_loss.backward() # 计算 loss 的 gradient

optimizer.step() # 更新模型参数

accuracy = evaluation(outputs, labels) # 计算模型此时的 training accuracy

total_acc += (accuracy / batch_size)

total_loss += batch_loss.item()

print('Epoch | {}/{}'.format(epoch+1,n_epoch))

t_batch = len(train_loader)

print('Train | Loss:{:.5f} Acc: {:.3f}'.format(total_loss/t_batch, total_acc/t_batch*100))

model.eval() # switch the model to evaluation mode so that dropout is disabled (this call does not freeze the parameters)

# self-training

if epoch >= 4 :

train_no_label_dataset = TwitterDataset(X=train_x_no_label, y=None)

train_no_label_loader = DataLoader(train_no_label_dataset, batch_size = batch_size, shuffle = False, num_workers = 0)

train_x_no_label_tmp = torch.Tensor([[]])

with torch.no_grad():

for i, (inputs) in enumerate(train_no_label_loader):

inputs = inputs.to(device, dtype=torch.long) # 因为 device 为 "cuda",将 inputs 转成 torch.cuda.LongTensor

outputs = model(inputs) # 模型输入Input,输出output

outputs = outputs.squeeze() # 去掉最外面的 dimension,好让 outputs 可以丢进 loss()

labels, id = add_label(outputs)

# 加入新标注的数据

X_train = torch.cat((X_train.to(device), inputs[id]), dim=0)

y_train = torch.cat((y_train.to(device), labels[id]), dim=0)

if i == 0:

train_x_no_label = inputs[~id]

else:

train_x_no_label = torch.cat((train_x_no_label.to(device), inputs[~id]), dim=0)

# validation

if val_loader is None:

torch.save(model, "ckpt.model")

else:

with torch.no_grad():

total_loss, total_acc = 0, 0

for i, (inputs, labels) in enumerate(val_loader):

inputs = inputs.to(device, dtype=torch.long) # 因为 device 为 "cuda",将 inputs 转成 torch.cuda.LongTensor

labels = labels.to(device, dtype=torch.float) # 因为 device 为 "cuda",将 labels 转成 torch.cuda.FloatTensor,loss()需要float

outputs = model(inputs) # 模型输入Input,输出output

outputs = outputs.squeeze() # 去掉最外面的 dimension,好让 outputs 可以丢进 loss()

batch_loss = loss(outputs, labels) # compute the validation loss of the current model

accuracy = evaluation(outputs, labels) # compute the validation accuracy of the current model

total_acc += (accuracy / batch_size)

total_loss += batch_loss.item()

v_batch = len(val_loader)

print("Valid | Loss:{:.5f} Acc: {:.3f} ".format(total_loss/v_batch, total_acc/v_batch*100))

if total_acc > best_acc:

# 如果 validation 的结果优于之前所有的結果,就把当下的模型保存下来,用于之后的testing

best_acc = total_acc

torch.save(model, "ckpt.model")

print('-----------------------------------------------')

from sklearn.model_selection import train_test_split

# 通过 torch.cuda.is_available() 的值判断是否可以使用 GPU ,如果可以的话 device 就设为 "cuda",没有的话就设为 "cpu"

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

# 定义句子长度、要不要固定 embedding、batch 大小、要训练几个 epoch、 学习率的值、 w2v的路径

sen_len = 20

fix_embedding = True # fix embedding during training

batch_size = 128

epoch = 11

lr = 8e-4

w2v_path = 'w2v_all.model'

print("loading data ...") # 读取 'training_label.txt' 'training_nolabel.txt'

train_x, y = load_training_data('training_label.txt')

train_x_no_label = load_training_data('training_nolabel.txt')

# 对 input 跟 labels 做预处理

preprocess = Preprocess(sen_len, w2v_path=w2v_path)

embedding = preprocess.make_embedding(load=True)

train_x = preprocess.sentence_word2idx(train_x)

y = preprocess.labels_to_tensor(y)

train_x_no_label = preprocess.sentence_word2idx(train_x_no_label)

# 把 data 分为 training data 和 validation data(将一部分 training data 作为 validation data)

X_train, X_val, y_train, y_val = train_test_split(train_x, y, test_size = 0.1, random_state = 1, stratify = y)

print('Train | Len:{} \nValid | Len:{}'.format(len(y_train), len(y_val)))

val_dataset = TwitterDataset(X=X_val, y=y_val)

val_loader = DataLoader(val_dataset, batch_size = batch_size, shuffle = False, num_workers = 0)

# 定义模型

model = LSTM_Net(embedding, embedding_dim=256, hidden_dim=128, num_layers=1, dropout=0.5, fix_embedding=fix_embedding)

model = model.to(device) # device为 "cuda",model 使用 GPU 来训练(inputs 也需要是 cuda tensor)

# 开始训练

# training(batch_size, epoch, lr, X_train, y_train, val_loader, train_x_no_label, model, device)

training(batch_size, epoch, lr, train_x, y, None, train_x_no_label, model, device)

def testing(batch_size, test_loader, model, device):

model.eval() # switch the model to evaluation mode so that dropout is disabled (this call does not freeze the parameters)

ret_output = [] # 返回的output

with torch.no_grad():

for i, inputs in enumerate(test_loader):

inputs = inputs.to(device, dtype=torch.long)

outputs = model(inputs)

outputs = outputs.squeeze()

outputs[outputs>=0.5] = 1 # 大于等于0.5为正面

outputs[outputs<0.5] = 0 # 小于0.5为负面

ret_output += outputs.int().tolist()

return ret_output

# 测试模型并作预测

# 读取测试数据test_x

print("loading testing data ...")

test_x = load_testing_data('testing_data.txt')

# 对test_x作预处理

test_x = preprocess.sentence_word2idx(test_x)

test_dataset = TwitterDataset(X=test_x, y=None)

test_loader = DataLoader(test_dataset, batch_size = batch_size, shuffle = False, num_workers = 0)

# 读取模型

print('\nload model ...')

model = torch.load('ckpt.model')

# 测试模型

outputs = testing(batch_size, test_loader, model, device)

# 保存为 csv

tmp = pd.DataFrame({

"id":[str(i) for i in range(len(test_x))],"label":outputs})

print("save csv ...")

tmp.to_csv('predict.csv', index=False)

print("Finish Predicting")
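One detail of the training loop above is worth a second look: it adds accuracy / batch_size for every batch and then divides by the number of batches. When the last batch is smaller than batch_size, this slightly underestimates the accuracy. The sketch below compares that scheme with counting correct predictions and seen samples directly. The function names and the batch numbers are hypothetical and serve only as an illustration.

```python
def exact_accuracy(batch_results):
    """batch_results: list of (num_correct, num_samples) pairs, one per batch."""
    correct = sum(c for c, _ in batch_results)
    seen = sum(n for _, n in batch_results)
    return correct / seen


def loop_style_accuracy(batch_results, batch_size):
    """Mimics the loop above: every batch is assumed to hold batch_size samples."""
    return sum(c / batch_size for c, _ in batch_results) / len(batch_results)


# two full batches of 128 samples and a short final batch of 16 samples
batches = [(100, 128), (96, 128), (10, 16)]
exact_acc = exact_accuracy(batches)
loop_acc = loop_style_accuracy(batches, batch_size=128)
print(exact_acc, loop_acc)
```

With large datasets the gap is small, because only the final batch is affected, but dividing by the true sample count keeps the reported numbers comparable across different batch sizes.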

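Removing punctuation + Bi-LSTM + Attention

Use the re library to remove punctuation marks such as . , ? ! ' (the regular expression used below does not strip the digits 0-9; add 0-9 to its character class if digits should be removed as well).

Original code: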

def load_training_data(path='training_label.txt'):

# 读取 training 需要的数据

# 如果是 'training_label.txt',需要读取 label,如果是 'training_nolabel.txt',不需要读取 label

if 'training_label' in path:

with open(path, 'r') as f:

lines = f.readlines()

# lines是二维数组,第一维是行line(按回车分割),第二维是每行的单词(按空格分割)

lines = [line.strip('\n').split(' ') for line in lines]

# 每行按空格分割后,第2个符号之后都是句子的单词

x = [line[2:] for line in lines]

# 每行按空格分割后,第0个符号是label

y = [line[0] for line in lines]

return x, y

else:

with open(path, 'r') as f:

lines = f.readlines()

# lines是二维数组,第一维是行line(按回车分割),第二维是每行的单词(按空格分割)

x = [line.strip('\n').split(' ') for line in lines]

return x

def load_testing_data(path='testing_data'):

# 读取 testing 需要的数据

with open(path, 'r') as f:

lines = f.readlines()

# 第0行是表头,从第1行开始是数据

# 第0列是id,第1列是文本,按逗号分割,需要逗号之后的文本

X = ["".join(line.strip('\n').split(",")[1:]).strip() for line in lines[1:]]

X = [sen.split(' ') for sen in X]

return X

Modified to:

import re

def load_training_data(path='training_label.txt'):
    # read the data needed for training
    # 'training_label.txt' contains labels, 'training_nolabel.txt' does not
    if 'training_label' in path:
        with open(path, 'r', encoding='UTF-8') as f:
            lines = f.readlines()
        lines = [line.strip('\n') for line in lines]
        # every line starts with the label followed by ' +++$+++ ' (10 characters in total), so the sentence starts at index 10
        x = [line[10:] for line in lines]
        # remove the punctuation marks . ! ? , '
        x = [re.sub(r"([.!?,'])", r"", s) for s in x]
        # split on whitespace; split() without arguments also collapses the repeated spaces left by the removal
        x = [s.split() for s in x]
        # the first character of every line is the label
        y = [line[0] for line in lines]
        return x, y
    else:
        with open(path, 'r', encoding='UTF-8') as f:
            lines = f.readlines()
        x = [line.strip('\n') for line in lines]
        # remove the punctuation marks . ! ? , '
        x = [re.sub(r"([.!?,'])", r"", s) for s in x]
        # split every sentence into words on whitespace
        x = [s.split() for s in x]
        return x

def load_testing_data(path='testing_data.txt'):
    # read the data needed for testing
    with open(path, 'r', encoding='UTF-8') as f:
        lines = f.readlines()
    # line 0 is the header; the data starts at line 1
    # column 0 is the id, everything after the first comma is the text
    X = ["".join(line.strip('\n').split(",")[1:]).strip() for line in lines[1:]]
    # remove the punctuation marks . ! ? , '
    X = [re.sub(r"([.!?,'])", r"", s) for s in X]
    # split every sentence into words on whitespace
    X = [s.split() for s in X]
    return X
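The effect of the regular expression is easiest to see on a single line. The following sketch applies the same character class as the loaders above to a made-up labeled line, and also shows a hypothetical variant (PUNCT_AND_DIGITS) that additionally drops digits. The names tokenize, PUNCT and PUNCT_AND_DIGITS are introduced here for illustration only.

```python
import re

PUNCT = re.compile(r"[.!?,']")                 # same characters as in the loaders above
PUNCT_AND_DIGITS = re.compile(r"[.!?,'0-9]")   # hypothetical variant that also drops digits


def tokenize(sentence, pattern=PUNCT):
    # delete the matched characters, then split on any run of whitespace
    return pattern.sub("", sentence).split()


# a made-up line in the 'label +++$+++ text' format of training_label.txt
line = "1 +++$+++ i can ' t wait for 2 more days ...."
label, text = line.split(" +++$+++ ", 1)

print(label, tokenize(text))
print(tokenize(text, PUNCT_AND_DIGITS))
```

Splitting on the separator string instead of slicing at a fixed index is a small design choice: it keeps working if a label ever has more than one character.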

The model uses a bidirectional LSTM together with an attention mechanism. It is defined as follows:

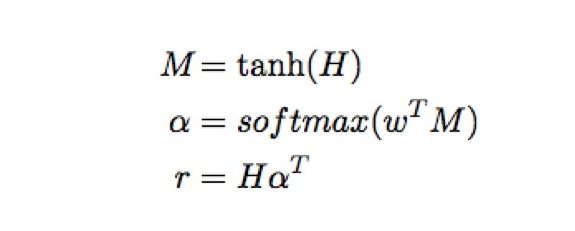

Formula:

Computation (see attention in the code below):

- Split the output of the BiLSTM network (shape: [batch_size, time_step, hidden_dims * num_directions(=2)]) into two tensors of shape [batch_size, time_step, hidden_dims];

- Add the two tensors from the first step to obtain h (shape: [batch_size, time_step, hidden_dims]);

- Apply the tanh activation to h to obtain m (shape: [batch_size, time_step, hidden_dims]);

- Sum the final hidden state of the BiLSTM network (shape: [batch_size, num_layers * num_directions, hidden_dims]) over the second dimension to obtain lstm_hidden (shape: [batch_size, hidden_dims]);

- Expand lstm_hidden from [batch_size, hidden_dims] to [batch_size, 1, hidden_dims];

- Use self.attention_layer(lstm_hidden) to obtain atten_w, the vector used to compute the weights (shape: [batch_size, 1, hidden_dims]);

- Use torch.bmm(atten_w, m.transpose(1, 2)) to obtain atten_context (shape: [batch_size, 1, time_step]);

- Normalise atten_context with F.softmax(atten_context, dim=-1) to obtain the context-based weights softmax_w (shape: [batch_size, 1, time_step]);

- Use torch.bmm(softmax_w, h) to obtain the weighted BiLSTM output context (shape: [batch_size, 1, hidden_dims]);

- Remove the second dimension of context to obtain result (shape: [batch_size, hidden_dims]);

- Return result.
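Written out as equations for a single sample, the steps above read roughly as follows. This is a reconstruction from the attention code in this post, not necessarily the formula from the original figure. The symbols O, H, M, a, W, b, s, alpha and r are notation introduced here; T is the number of time steps and d is hidden_dims.

```latex
\begin{aligned}
O &= [\,\overrightarrow{O},\ \overleftarrow{O}\,] \in \mathbb{R}^{T \times 2d}
  && \text{BiLSTM output} \\
H &= \overrightarrow{O} + \overleftarrow{O} \in \mathbb{R}^{T \times d}
  && \text{sum of both directions (h)} \\
M &= \tanh(H)
  && \text{(m)} \\
\bar{h} &= \sum_{k} h^{\text{final}}_{k} \in \mathbb{R}^{d}
  && \text{sum over layers and directions (lstm\_hidden)} \\
a &= \operatorname{ReLU}(W \bar{h} + b) \in \mathbb{R}^{d}
  && \text{(atten\_w)} \\
s &= M a \in \mathbb{R}^{T}
  && \text{(atten\_context)} \\
\alpha &= \operatorname{softmax}(s)
  && \text{(softmax\_w)} \\
r &= H^{\top} \alpha = \sum_{t=1}^{T} \alpha_t\, h_t \in \mathbb{R}^{d}
  && \text{(result)}
\end{aligned}
```

The result r is a weighted average of the per-step states h_t, with weights that depend on how well each tanh-activated state matches the query built from the final hidden state.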

class Atten_BiLSTM(nn.Module):

def __init__(self, embedding, embedding_dim, hidden_dim, num_layers, dropout=0.5, fix_embedding=True):

super(Atten_BiLSTM, self).__init__()

# embedding layer

self.embedding = torch.nn.Embedding(embedding.size(0), embedding.size(1))

self.embedding.weight = torch.nn.Parameter(embedding)

# 是否将 embedding 固定住,如果 fix_embedding 为 False,在训练过程中,embedding 也会跟着被训练

self.embedding.weight.requires_grad = False if fix_embedding else True

self.embedding_dim = embedding.size(1)

self.hidden_dim = hidden_dim

self.num_layers = num_layers

self.dropout = nn.Dropout(dropout)

self.lstm = nn.LSTM(embedding_dim, hidden_dim, num_layers=num_layers, batch_first=True, bidirectional=True)

self.classifier = nn.Sequential(nn.Dropout(dropout),

nn.Linear(hidden_dim, 64),

nn.Dropout(dropout),

nn.Linear(64, 32),

nn.Dropout(dropout),

nn.Linear(32, 16),

nn.Dropout(dropout),

nn.Linear(16, 1),

nn.Sigmoid())

self.attention_layer = nn.Sequential(

nn.Linear(hidden_dim, hidden_dim),

nn.ReLU()

)

def attention(self, output, hidden):

# output (batch_size, seq_len, hidden_dims * num_direction)

# hidden (batch_size, num_layers * num_direction, hidden_dims)

output = output[:, :, :self.hidden_dim] + output[:, :, self.hidden_dim:] # (batch_size, seq_len, hidden_dims)

m = nn.Tanh()(output) # (batch_size, seq_len, hidden_size)

# [batch_size, hidden_dims]

hidden = torch.sum(hidden, dim=1)

hidden = hidden.unsqueeze(1) # (batch_size, 1, hidden_size)

atten_w = self.attention_layer(hidden) # (batch_size, 1, hidden_size)

atten_context = torch.bmm(atten_w, m.transpose(1, 2)) # (batch_size, 1, seq_len)

softmax_w = F.softmax(atten_context, dim=-1) # (batch_size, 1, seq_len)

context = torch.bmm(softmax_w, output) # (batch_size, 1, hidden_dims)

return context.squeeze(1)

def forward(self, inputs):

inputs = self.embedding(inputs)

# hidden is the LSTM output at the last time step, and cell_state is the value held in the memory cell

# x holds the hidden states of all time steps

# with a single time step: x = hidden

# with several time steps: x can be seen as the hidden outputs of every time step

# x (batch, seq_len, hidden_dims * num_direction)

# hidden (num_layers * num_direction, batch_size, hidden_dims)

x, (hidden, cell_state) = self.lstm(inputs, None)

hidden = hidden.permute(1, 0, 2) # (batch_size, num_layers * num_direction, hidden_dims)

# atten_out (batch_size, hidden_dims)

atten_out = self.attention(x, hidden)

return self.classifier(atten_out)

A note on unsqueeze, which can be confusing at first:

data.shape

torch.Size([5, 3])

data.unsqueeze(0).shape

torch.Size([1, 5, 3])

data.unsqueeze(1).shape

torch.Size([5, 1, 3])

data.unsqueeze(2).shape

torch.Size([5, 3, 1])

data.unsqueeze(-2).shape

torch.Size([5, 1, 3])

data.unsqueeze(-1).shape

torch.Size([5, 3, 1])

data.unsqueeze(-3).shape

torch.Size([1, 5, 3])
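The outputs above all follow one rule: unsqueeze(dim) inserts an axis of size 1 at position dim, and a negative dim is first converted to dim + ndim + 1. The pure-Python helper below (unsqueeze_shape, a hypothetical name) only mimics this shape arithmetic on tuples and does not call PyTorch.

```python
def unsqueeze_shape(shape, dim):
    """Return the shape obtained by inserting a size-1 axis at position dim."""
    ndim = len(shape)
    # valid positions run from -(ndim + 1) to ndim inclusive
    if not -(ndim + 1) <= dim <= ndim:
        raise IndexError("dim out of range for shape {}".format(shape))
    if dim < 0:
        dim += ndim + 1   # negative positions count from the end of the new shape
    return shape[:dim] + (1,) + shape[dim:]


for d in (0, 1, 2, -1, -2, -3):
    print(d, unsqueeze_shape((5, 3), d))
```

This is why unsqueeze(-1) and unsqueeze(2) give the same result for a two-dimensional tensor, as do unsqueeze(-3) and unsqueeze(0).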

If the Bi-LSTM + Attention model above is unclear, the following articles may help:

- 【NLP实践】使用Pytorch进行文本分类——BILSTM+ATTENTION

- 易于理解的一些时序相关的操作(LSTM)和注意力机制(Attention Model)

- pytorch 中LSTM的输出值

- 双向LSTM+Attention文本分类模型(附pytorch代码)

Complete code:

# 设置后可以过滤一些无用的warning

import warnings

warnings.filterwarnings('ignore')

# utils.py

# 用来定义一些之后常用到的函数

import torch

import numpy as np

import pandas as pd

import torch.optim as optim

import torch.nn.functional as F

from gensim.models import Word2Vec

from torch.autograd import Variable

from torch import nn

import re

def load_training_data(path='training_label.txt'):

# 读取 training 需要的数据

# 如果是 'training_label.txt',需要读取 label,如果是 'training_nolabel.txt',不需要读取 label

if 'training_label' in path:

with open(path, 'r', encoding='UTF-8') as f:

lines = f.readlines()

# lines是二维数组,第一维是行line(按回车分割),第二维是每行的单词(按空格分割)

lines = [line.strip('\n') for line in lines]

# every line starts with the label followed by ' +++$+++ ' (10 characters in total), so the sentence starts at index 10

x = [line[10:] for line in lines]

x = [re.sub(r"([.!?,'])", r"", s) for s in x]

x = [' '.join(s.split()) for s in x]

x = [s.split() for s in x]

# the first character of every line is the label

y = [line[0] for line in lines]

return x, y

else:

with open(path, 'r', encoding='UTF-8') as f:

lines = f.readlines()

# lines是二维数组,第一维是行line(按回车分割),第二维是每行的单词(按空格分割)

x = [line.strip('\n') for line in lines]

x = [re.sub(r"([.!?,'])", r"", s) for s in x]

x = [' '.join(s.split()) for s in x]

x = [s.split() for s in x]

return x

def load_testing_data(path='testing_data'):

# 读取 testing 需要的数据

with open(path, 'r', encoding='UTF-8') as f:

lines = f.readlines()

# 第0行是表头,从第1行开始是数据

# 第0列是id,第1列是文本,按逗号分割,需要逗号之后的文本

X = ["".join(line.strip('\n').split(",")[1:]).strip() for line in lines[1:]]

X = [re.sub(r"([.!?,'])", r"", s) for s in X]

X = [' '.join(s.split()) for s in X]

X = [s.split() for s in X]

return X

def evaluation(outputs, labels):

# outputs => 预测值,概率(float)

# labels => 真实值,标签(0或1)

outputs[outputs>=0.5] = 1 # 大于等于 0.5 为正面

outputs[outputs<0.5] = 0 # 小于 0.5 为负面

accuracy = torch.sum(torch.eq(outputs, labels)).item()

return accuracy

def train_word2vec(x):

# 训练 word to vector 的 word embedding

# window:滑动窗口的大小,min_count:过滤掉语料中出现频率小于min_count的词

model = Word2Vec(x, size=256, window=5, min_count=5, workers=12, iter=10, sg=1)

return model

# 读取 training 数据

print("loading training data ...")

train_x, y = load_training_data('training_label.txt')

train_x_no_label = load_training_data('training_nolabel.txt')

# 读取 testing 数据

print("loading testing data ...")

test_x = load_testing_data('testing_data.txt')

# 把 training 中的 word 变成 vector

model = train_word2vec(train_x + train_x_no_label + test_x) # w2v_all

# model = train_word2vec(train_x + test_x) # w2v

# 保存 vector

print("saving model ...")

model.save('w2v_all.model')

# model.save('w2v.model')

# 数据预处理

class Preprocess():

def __init__(self, sen_len, w2v_path):

self.w2v_path = w2v_path # word2vec的存储路径

self.sen_len = sen_len # 句子的固定长度

self.idx2word = []

self.word2idx = {

}

self.embedding_matrix = []

def get_w2v_model(self):

# 读取之前训练好的 word2vec

self.embedding = Word2Vec.load(self.w2v_path)

self.embedding_dim = self.embedding.vector_size

def add_embedding(self, word):

# word is always "<PAD>" or "<UNK>"

# use a randomly initialised vector as the embedding of "<PAD>" or "<UNK>"

vector = torch.empty(1, self.embedding_dim)

torch.nn.init.uniform_(vector)

# 它的 index 是 word2idx 这个词典的长度,即最后一个

self.word2idx[word] = len(self.word2idx)

self.idx2word.append(word)

self.embedding_matrix = torch.cat([self.embedding_matrix, vector], 0)

def make_embedding(self, load=True):

print("Get embedding ...")

# 获取训练好的 Word2vec word embedding

if load:

print("loading word to vec model ...")

self.get_w2v_model()

else:

raise NotImplementedError

# 遍历嵌入后的单词

for i, word in enumerate(self.embedding.wv.vocab):

print('get words #{}'.format(i+1), end='\r')

# 新加入的 word 的 index 是 word2idx 这个词典的长度,即最后一个

self.word2idx[word] = len(self.word2idx)

self.idx2word.append(word)

self.embedding_matrix.append(self.embedding.wv[word])

print('')

# 把 embedding_matrix 变成 tensor

self.embedding_matrix = torch.tensor(self.embedding_matrix)

# add "<PAD>" and "<UNK>" to the embedding

self.add_embedding("<PAD>")

self.add_embedding("<UNK>")

print("total words: {}".format(len(self.embedding_matrix)))

return self.embedding_matrix

def pad_sequence(self, sentence):

# 将每个句子变成一样的长度,即 sen_len 的长度

if len(sentence) > self.sen_len:

# 如果句子长度大于 sen_len 的长度,就截断

sentence = sentence[:self.sen_len]

else:

# if the sentence is shorter than sen_len, append "<PAD>" until it reaches sen_len

pad_len = self.sen_len - len(sentence)

for _ in range(pad_len):

sentence.append(self.word2idx["<PAD>"])

assert len(sentence) == self.sen_len

return sentence

def sentence_word2idx(self, sentences):

# 把句子里面的字变成相对应的 index

sentence_list = []

for i, sen in enumerate(sentences):

print('sentence count #{}'.format(i+1), end='\r')

sentence_idx = []

for word in sen:

if (word in self.word2idx.keys()):

sentence_idx.append(self.word2idx[word])

else:

# words that are not in the vocabulary are mapped to "<UNK>"

sentence_idx.append(self.word2idx["<UNK>"])

# 将每个句子变成一样的长度

sentence_idx = self.pad_sequence(sentence_idx)

sentence_list.append(sentence_idx)

return torch.LongTensor(sentence_list)

def labels_to_tensor(self, y):

# 把 labels 转成 tensor

y = [int(label) for label in y]

return torch.LongTensor(y)

from torch.utils.data import DataLoader, Dataset

class TwitterDataset(Dataset):

"""

Expected data shape like:(data_num, data_len)

Data can be a list of numpy array or a list of lists

input data shape : (data_num, seq_len, feature_dim)

__len__ will return the number of data

"""

def __init__(self, X, y):

self.data = X

self.label = y

def __getitem__(self, idx):

if self.label is None: return self.data[idx]

return self.data[idx], self.label[idx]

def __len__(self):

return len(self.data)

class Atten_BiLSTM(nn.Module):

def __init__(self, embedding, embedding_dim, hidden_dim, num_layers, dropout=0.5, fix_embedding=True):

super(Atten_BiLSTM, self).__init__()

# embedding layer

self.embedding = torch.nn.Embedding(embedding.size(0), embedding.size(1))

self.embedding.weight = torch.nn.Parameter(embedding)

# 是否将 embedding 固定住,如果 fix_embedding 为 False,在训练过程中,embedding 也会跟着被训练

self.embedding.weight.requires_grad = False if fix_embedding else True

self.embedding_dim = embedding.size(1)

self.hidden_dim = hidden_dim

self.num_layers = num_layers

self.dropout = nn.Dropout(dropout)

self.lstm = nn.LSTM(embedding_dim, hidden_dim, num_layers=num_layers, batch_first=True, bidirectional=True)

self.classifier = nn.Sequential(nn.Dropout(dropout),

nn.Linear(hidden_dim, 64),

nn.Dropout(dropout),

nn.Linear(64, 32),

nn.Dropout(dropout),

nn.Linear(32, 16),

nn.Dropout(dropout),

nn.Linear(16, 1),

nn.Sigmoid())

self.attention_layer = nn.Sequential(

nn.Linear(hidden_dim, hidden_dim),

nn.ReLU()

)

def attention(self, output, hidden):

# output (batch_size, seq_len, hidden_size * num_direction)

# hidden (batch_size, num_layers * num_direction, hidden_size)

output = output[:,:,:self.hidden_dim] + output[:,:,self.hidden_dim:] # (batch_size, seq_len, hidden_size)

hidden = torch.sum(hidden, dim=1)

hidden = hidden.unsqueeze(1) # (batch_size, 1, hidden_size)

atten_w = self.attention_layer(hidden) # (batch_size, 1, hidden_size)

m = nn.Tanh()(output) # (batch_size, seq_len, hidden_size)

atten_context = torch.bmm(atten_w, m.transpose(1, 2))

softmax_w = F.softmax(atten_context, dim=-1)

context = torch.bmm(softmax_w, output)

return context.squeeze(1)

def forward(self, inputs):

inputs = self.embedding(inputs)

# x (batch, seq_len, hidden_size * num_direction)

# hidden (num_layers *num_direction, batch_size, hidden_size)

x, (hidden, _) = self.lstm(inputs, None)

hidden = hidden.permute(1, 0, 2) # (batch_size, num_layers *num_direction, hidden_size)

# atten_out (batch_size, hidden_dim)

atten_out = self.attention(x, hidden)

return self.classifier(atten_out)

def add_label(outputs, threshold=0.9):

id = (outputs>=threshold) | (outputs<1-threshold)

outputs[outputs>=threshold] = 1 # 大于等于 threshold 为正面

outputs[outputs<1-threshold] = 0 # below 1 - threshold: negative

return outputs.long(), id

def training(batch_size, n_epoch, lr, X_train, y_train, val_loader, train_x_no_label, model, device):

# 输出模型总的参数数量、可训练的参数数量

total = sum(p.numel() for p in model.parameters())

trainable = sum(p.numel() for p in model.parameters() if p.requires_grad)

print('\nstart training, parameter total:{}, trainable:{}\n'.format(total, trainable))

loss = nn.BCELoss() # 定义损失函数为二元交叉熵损失 binary cross entropy loss

optimizer = optim.Adam(model.parameters(), lr=lr) # optimizer用Adam,设置适当的学习率lr

total_loss, total_acc, best_acc = 0, 0, 0

start_epoch = 5

for epoch in range(n_epoch):

print(X_train.shape)

train_dataset = TwitterDataset(X=X_train, y=y_train)

train_loader = DataLoader(train_dataset, batch_size=batch_size, shuffle=True, num_workers=0)

total_loss, total_acc = 0, 0

# training

model.train() # switch the model to training mode so that layers such as dropout are active

for i, (inputs, labels) in enumerate(train_loader):

inputs = inputs.to(device, dtype=torch.long) # 因为 device 为 "cuda",将 inputs 转成 torch.cuda.LongTensor

labels = labels.to(device,

dtype=torch.float) # 因为 device 为 "cuda",将 labels 转成 torch.cuda.FloatTensor,loss()需要float

optimizer.zero_grad() # 由于 loss.backward() 的 gradient 会累加,所以每一个 batch 后需要归零

outputs = model(inputs) # 模型输入Input,输出output

outputs = outputs.squeeze() # 去掉最外面的 dimension,好让 outputs 可以丢进 loss()

batch_loss = loss(outputs, labels) # 计算模型此时的 training loss

batch_loss.backward() # 计算 loss 的 gradient

optimizer.step() # 更新模型参数

accuracy = evaluation(outputs, labels) # 计算模型此时的 training accuracy

total_acc += (accuracy / batch_size)

total_loss += batch_loss.item()

print('Epoch | {}/{}'.format(epoch + 1, n_epoch))

t_batch = len(train_loader)

print('Train | Loss:{:.5f} Acc: {:.3f}'.format(total_loss / t_batch, total_acc / t_batch * 100))

model.eval() # switch the model to evaluation mode so that dropout is disabled (this call does not freeze the parameters)

# self-training

if epoch >= start_epoch:

train_no_label_dataset = TwitterDataset(X=train_x_no_label, y=None)

train_no_label_loader = DataLoader(train_no_label_dataset, batch_size=batch_size, shuffle=False,

num_workers=0)

with torch.no_grad():

for i, (inputs) in enumerate(train_no_label_loader):

inputs = inputs.to(device, dtype=torch.long) # 因为 device 为 "cuda",将 inputs 转成 torch.cuda.LongTensor

outputs = model(inputs) # 模型输入Input,输出output

outputs = outputs.squeeze() # 去掉最外面的 dimension,好让 outputs 可以丢进 loss()

labels, id = add_label(outputs)

# 加入新标注的数据

X_train = torch.cat((X_train.to(device), inputs[id]), dim=0)

y_train = torch.cat((y_train.to(device), labels[id]), dim=0)

if i == 0:

train_x_no_label = inputs[~id]

else:

train_x_no_label = torch.cat((train_x_no_label.to(device), inputs[~id]), dim=0)

# validation

if val_loader is None:

torch.save(model, "ckpt.model")

else:

with torch.no_grad():

total_loss, total_acc = 0, 0

for i, (inputs, labels) in enumerate(val_loader):

inputs = inputs.to(device, dtype=torch.long) # 因为 device 为 "cuda",将 inputs 转成 torch.cuda.LongTensor

labels = labels.to(device,

dtype=torch.float) # 因为 device 为 "cuda",将 labels 转成 torch.cuda.FloatTensor,loss()需要float

outputs = model(inputs) # 模型输入Input,输出output

outputs = outputs.squeeze() # 去掉最外面的 dimension,好让 outputs 可以丢进 loss()

batch_loss = loss(outputs, labels) # compute the validation loss of the current model

accuracy = evaluation(outputs, labels) # compute the validation accuracy of the current model

total_acc += (accuracy / batch_size)

total_loss += batch_loss.item()

v_batch = len(val_loader)

print("Valid | Loss:{:.5f} Acc: {:.3f} ".format(total_loss / v_batch, total_acc / v_batch * 100))

if total_acc > best_acc:

# 如果 validation 的结果优于之前所有的結果,就把当下的模型保存下来,用于之后的testing

best_acc = total_acc

torch.save(model, "ckpt.model")

print('-----------------------------------------------')

from sklearn.model_selection import train_test_split

# Use torch.cuda.is_available() to check for a GPU: device is "cuda" if one is available, otherwise "cpu"
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

# Sentence length, whether to freeze the embedding, batch size, number of epochs, learning rate, and word2vec model path
sen_len = 40
fix_embedding = True  # fix embedding during training
batch_size = 128
epoch = 20
lr = 2e-3
w2v_path = 'w2v_all.model'

print("loading data ...")  # read 'training_label.txt' and 'training_nolabel.txt'
train_x, y = load_training_data('training_label.txt')
train_x_no_label = load_training_data('training_nolabel.txt')

# Preprocess the inputs and the labels
preprocess = Preprocess(sen_len, w2v_path=w2v_path)
embedding = preprocess.make_embedding(load=True)
train_x = preprocess.sentence_word2idx(train_x)
y = preprocess.labels_to_tensor(y)
train_x_no_label = preprocess.sentence_word2idx(train_x_no_label)

# Split the labeled data into training data and validation data (10% is held out for validation)
X_train, X_val, y_train, y_val = train_test_split(train_x, y, test_size=0.1, random_state=1, stratify=y)
print('Train | Len:{} \nValid | Len:{}'.format(len(y_train), len(y_val)))

val_dataset = TwitterDataset(X=X_val, y=y_val)
val_loader = DataLoader(val_dataset, batch_size=batch_size, shuffle=False, num_workers=0)

# Define the model
model = Atten_BiLSTM(embedding, embedding_dim=256, hidden_dim=128, num_layers=1, dropout=0.5, fix_embedding=fix_embedding)
model = model.to(device)  # when device is "cuda" the model trains on the GPU (inputs must then be CUDA tensors too)

# Start training
training(batch_size, epoch, lr, X_train, y_train, val_loader, train_x_no_label, model, device)
# Alternative: train on all labeled data without a validation set
# training(batch_size, epoch, lr, train_x, y, None, train_x_no_label, model, device)
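The `stratify=y` argument in the split above asks for a validation set whose positive/negative ratio matches the full labeled set. To make that idea concrete, here is a small hand-rolled sketch in pure Python. It is an illustration of stratified splitting in general, not scikit-learn's implementation, and `stratified_split` together with the toy data is hypothetical.

```python
import random
from collections import defaultdict


def stratified_split(samples, labels, test_size=0.1, seed=1):
    """Split samples so each class keeps roughly the same share in both parts."""
    # Group sample indices by class label.
    by_class = defaultdict(list)
    for i, y in enumerate(labels):
        by_class[y].append(i)

    rng = random.Random(seed)
    train_idx, val_idx = [], []
    for idxs in by_class.values():
        rng.shuffle(idxs)
        n_val = round(len(idxs) * test_size)  # per-class validation size
        val_idx += idxs[:n_val]
        train_idx += idxs[n_val:]

    def pick(idxs, seq):
        return [seq[i] for i in idxs]

    return (pick(train_idx, samples), pick(val_idx, samples),
            pick(train_idx, labels), pick(val_idx, labels))


# Toy data: 60 positive and 40 negative "tweets".
labels = [1] * 60 + [0] * 40
samples = ["tweet {}".format(i) for i in range(100)]
X_tr, X_va, y_tr, y_va = stratified_split(samples, labels)
print(len(y_tr), len(y_va))
print(sum(y_tr) / len(y_tr), sum(y_va) / len(y_va))
```

Because the split is done per class, both parts keep the 60/40 balance of the toy data, so the validation accuracy is not skewed by an unlucky draw of labels.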

def testing(batch_size, test_loader, model, device):
    model.eval()  # evaluation mode: disables dropout (it does not freeze the parameters; torch.no_grad() below skips gradient tracking)
    ret_output = []  # predictions to return
    with torch.no_grad():
        for i, inputs in enumerate(test_loader):
            inputs = inputs.to(device, dtype=torch.long)
            outputs = model(inputs)
            outputs = outputs.reshape(-1)  # flatten to 1-D; unlike squeeze(), this stays 1-D for a batch of one
            outputs[outputs >= 0.5] = 1  # 0.5 or above: positive
            outputs[outputs < 0.5] = 0  # below 0.5: negative
            ret_output += outputs.int().tolist()
    return ret_output

# Test the model and make predictions

# Read the test data test_x
print("loading testing data ...")
test_x = load_testing_data('testing_data.txt')

# Preprocess test_x
test_x = preprocess.sentence_word2idx(test_x)
test_dataset = TwitterDataset(X=test_x, y=None)
test_loader = DataLoader(test_dataset, batch_size=batch_size, shuffle=False, num_workers=0)

# Load the model saved during training
# Note: on recent PyTorch releases where torch.load defaults to weights_only=True,
# loading a whole pickled model like this requires passing weights_only=False.
print('\nload model ...')
model = torch.load('ckpt.model')

# Run the model on the test data
outputs = testing(batch_size, test_loader, model, device)

# Save the predictions as csv
tmp = pd.DataFrame({"id": [str(i) for i in range(len(test_x))], "label": outputs})
print("save csv ...")
tmp.to_csv('predict.csv', index=False)
print("Finish Predicting")
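Before uploading `predict.csv`, it can be worth checking that the file has the expected shape. The sketch below uses only the standard library; `check_submission` is a hypothetical helper, and the checks (an `id,label` header, consecutive ids starting at 0, labels restricted to 0 and 1) are inferred from the code above rather than from an official submission specification. The demo writes a tiny temporary file so the example runs on its own.

```python
import csv
import os
import tempfile
from collections import Counter


def check_submission(path, expected_rows):
    """Validate a prediction file and return the label counts."""
    with open(path, newline="") as f:
        reader = csv.DictReader(f)
        if reader.fieldnames != ["id", "label"]:
            raise ValueError("unexpected header: {}".format(reader.fieldnames))
        rows = list(reader)

    if len(rows) != expected_rows:
        raise ValueError("expected {} rows, found {}".format(expected_rows, len(rows)))
    if [int(r["id"]) for r in rows] != list(range(expected_rows)):
        raise ValueError("ids are not 0..n-1 in order")

    counts = Counter(r["label"] for r in rows)
    unexpected = set(counts) - {"0", "1"}
    if unexpected:
        raise ValueError("unexpected labels: {}".format(sorted(unexpected)))
    return counts


# Demo on a tiny made-up file with the same layout as predict.csv.
with tempfile.TemporaryDirectory() as tmp_dir:
    demo_path = os.path.join(tmp_dir, "predict.csv")
    with open(demo_path, "w", newline="") as f:
        writer = csv.writer(f)
        writer.writerow(["id", "label"])
        writer.writerows([[0, 1], [1, 0], [2, 0]])
    counts = check_submission(demo_path, expected_rows=3)
    print(dict(counts))
```

For the real file, the call would be `check_submission('predict.csv', expected_rows=len(test_x))`. A heavily lopsided label count is a hint that the model or the 0.5 threshold deserves a second look.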