GlusterFS Distributed File System: Theory and a Hands-On Example

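Contents

- 1. GlusterFS Overview

- 2. How GlusterFS Works

- 3. GlusterFS Volume Types

- 3.1 Distributed Volume

- 3.2 Striped Volume

- 3.3 Replicated Volume

- 3.4 Distributed Striped Volume

- 3.5 Distributed Replicated Volume

- 4. GlusterFS Deployment Example

- 5. Test Results (Explained)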

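1. GlusterFS Overview

■ Introduction to GlusterFS

■ GlusterFS terminology

- Brick: a directory exported by a storage server; the basic unit of storage

- Volume: a logical collection of bricks

- FUSE: Filesystem in Userspace, the kernel module that lets a user-space program implement a file system

- VFS: Virtual File System, the kernel's common interface to file systems

- Glusterd: the GlusterFS management daemon that runs on every storage server

■ Modular, stackable architecture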

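2. How GlusterFS Works

■ GlusterFS workflow

- A client or application accesses data through the GlusterFS mount point

- The Linux kernel receives the request through the VFS API and processes it

- VFS hands the data to the FUSE kernel file system, which passes it to the GlusterFS client through the /dev/fuse device file

- The GlusterFS client processes the data according to its configuration file

- The data is sent over the network to the remote GlusterFS server, which writes it to its storage device

■ Elastic hash algorithm

- A hash algorithm produces a 32-bit integer (computed from the file name)

- The 32-bit range is divided into N contiguous subranges, each of which maps to one Brick (storage unit)

- Advantages of the elastic hash algorithm

◆ Data is spread roughly evenly across all Bricks

◆ No metadata server is needed, which removes that single point of failure and access bottleneck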
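To make the range-partitioning idea concrete, here is a minimal shell sketch. It assumes a stand-in hash (cksum, a CRC-32) and equal-sized subranges; GlusterFS itself uses a different hash function and records each brick's range in extended attributes, so this only illustrates the principle. The function names hash32 and brick_for_hash are made up for this example.

```shell
#!/bin/bash
# Illustrative only: cksum (CRC-32) stands in for GlusterFS's real hash.

# hash32 NAME -> prints a 32-bit unsigned integer derived from NAME
hash32() {
    printf '%s' "$1" | cksum | cut -d' ' -f1
}

# brick_for_hash HASH N -> prints the 0-based index of the brick whose
# subrange contains HASH when 0..2^32-1 is split into N equal subranges
brick_for_hash() {
    local hash=$1 n=$2
    local span=$(( (4294967296 + n - 1) / n ))   # subrange width, rounded up
    echo $(( hash / span ))
}

for f in test1.log test2.log test3.log; do
    h=$(hash32 "$f")
    echo "$f hash=$h brick=$(brick_for_hash "$h" 2)"
done
```

Because the brick is computed from the name alone, any client can locate a file without asking a central metadata server, which is the point made in the list above.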

三、GlusterFS的卷类型

■ 分布式卷

■ 条带卷

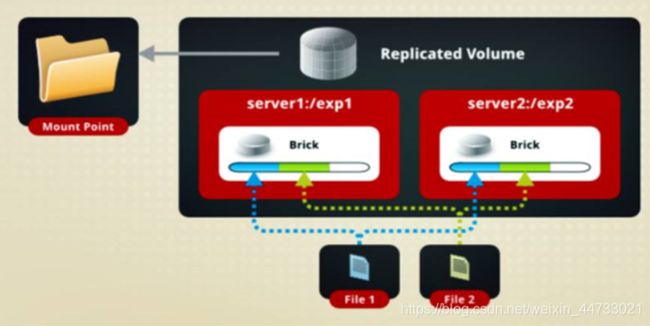

■ 复制卷

■ 分布式条带卷

■ 分布式复制卷

■ 条带复制卷

■ 分布式条带复制卷

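3.1 Distributed Volume

■ Distributed volume

- Files are not split into chunks

- The hash value is stored in extended file attributes

- Supported underlying file systems include EXT3, EXT4, ZFS, and XFS

As the figure shows, files are not split into chunks, so each file lives on exactly one server and single-file throughput does not improve.

A distributed volume does not place files round-robin; placement is decided by the hash algorithm, which looks random but is deterministic.

■ Characteristics of a distributed volume

- Files are spread across different servers with no redundancy

- The volume can be expanded easily and cheaply

- A single point of failure causes data loss

- Data protection depends on the underlying layer

■ Creating a distributed volume

- Create a distributed volume named dis-volume; files are distributed by hash across server1:/dir1, server2:/dir2, and server3:/dir3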

gluster volume create dis-volume server1:/dir1 server2:/dir2 server3:/dir3

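3.2 Striped Volume

■ A file is split by offset into N chunks (N being the number of stripe nodes), which are stored round-robin on the Brick servers

■ Performance is especially good when storing large files

■ No redundancy, similar to RAID 0

As the figure shows, a file is read from several servers at once, so throughput improves.

■ Characteristics

- Data is split into smaller chunks that are spread over different stripes in the brick server group

- Distribution reduces the load on each server, and the smaller chunks speed up access

- No data redundancy

■ Creating a striped volume

- Create a striped volume named stripe-volume; files are split into chunks and stored round-robin on the two Bricks server1:/dir1 and server2:/dir2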

gluster volume create stripe-volume stripe 2 transport tcp server1:/dir1 server2:/dir2

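3.3 Replicated Volume

■ One or more copies of each file are kept

■ Because copies are stored, disk utilization is lower

If the bricks have different capacities, the smallest brick determines the total capacity of the volume (the barrel effect).

■ Creating a replicated volume

- Create a replicated volume named rep-volume; each file is stored as two copies, one on each of the Bricks server1:/dir1 and server2:/dir2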

gluster volume create rep-volume replica 2 transport tcp server1:/dir1 server2:/dir2
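The capacity rule mentioned above for replicated volumes amounts to taking the minimum over the bricks of a replica set. A small sketch, with a hypothetical helper name replica_capacity and brick sizes given as plain integers (for example in GB):

```shell
#!/bin/bash
# replica_capacity SIZE... -> prints the usable capacity of one replica set,
# which is limited by its smallest brick
replica_capacity() {
    local min=$1 size
    for size in "$@"; do
        if (( size < min )); then
            min=$size
        fi
    done
    echo "$min"
}

replica_capacity 20 50    # prints 20
```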

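3.4 Distributed Striped Volume

■ Distributed striped volume

- Combines the features of distributed and striped volumes

- Mainly used for large-file access

- Requires at least 4 servers

■ Creating a distributed striped volume

- Create a distributed striped volume named dis-stripe. The number of Bricks in the volume must be a multiple of the stripe count (at least 2x)

gluster volume create dis-stripe stripe 2 transport tcp server1:/dir1 server2:/dir2 server3:/dir3 server4:/dir4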
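The brick-count rule can be checked before running the create command. The sketch below encodes the rule exactly as stated here (a multiple of the count, and at least twice the count); check_brick_count is a hypothetical helper, not part of the gluster CLI.

```shell
#!/bin/bash
# check_brick_count COUNT BRICKS -> succeeds when BRICKS is a multiple of
# COUNT and at least 2 x COUNT, as required for a distributed striped volume
check_brick_count() {
    local count=$1 bricks=$2
    (( count > 0 && bricks >= 2 * count && bricks % count == 0 ))
}

if check_brick_count 2 4; then
    echo "4 bricks with stripe 2: ok (2 x 2)"
fi
if ! check_brick_count 2 3; then
    echo "3 bricks with stripe 2: rejected"
fi
```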

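3.5 Distributed Replicated Volume

■ Distributed replicated volume

■ Creating a distributed replicated volume

- Create a distributed replicated volume named dis-rep. The number of Bricks in the volume must be a multiple of the replica count (at least 2x)

gluster volume create dis-rep replica 2 transport tcp server1:/dir1 server2:/dir2 server3:/dir3 server4:/dir4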
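In a distributed replicated volume the order of the bricks on the command line matters: consecutive bricks are grouped into one replica set, and files are then distributed across the sets. The sketch below prints that grouping; replica_sets is a hypothetical helper written for illustration, not a gluster command.

```shell
#!/bin/bash
# replica_sets REPLICA BRICK... -> prints one line per replica set, grouping
# bricks in the order given (every REPLICA consecutive bricks form a set)
replica_sets() {
    local replica=$1; shift
    local brick i=0 set_no=1 line=""
    for brick in "$@"; do
        line+="${line:+ }$brick"
        i=$(( i + 1 ))
        if (( i % replica == 0 )); then
            echo "set $set_no: $line"
            set_no=$(( set_no + 1 ))
            line=""
        fi
    done
}

replica_sets 2 server1:/dir1 server2:/dir2 server3:/dir3 server4:/dir4
# set 1: server1:/dir1 server2:/dir2
# set 2: server3:/dir3 server4:/dir4
```

With four bricks and replica 2, server1 and server2 hold identical copies, as do server3 and server4.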

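4. GlusterFS Deployment Example

Prepare five virtual machines: four form the distributed storage cluster and one acts as the client. Each storage node has four extra data disks attached (sdb through sde in this example).

Apply the basic settings on every machine first:

[root@localhost ~]# setenforce 0 # switch SELinux to permissive mode

[root@localhost ~]# systemctl stop firewalld # stop the firewall

[root@localhost ~]# systemctl disable firewalld # keep the firewall off after reboot

[root@localhost ~]# systemctl stop NetworkManager # stop NetworkManager

[root@localhost ~]# systemctl disable NetworkManager

After rebooting, check that the added disks are visible:

[root@localhost ~]# fdisk -l

Partition, format, and mount the disks with the following shell script:

[root@localhost ~]# vim disk.sh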

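#!/bin/bash

# Partition, format (XFS), and mount one data disk chosen by the user

echo "Create partition, format, and mount a disk"

fdisk -l | grep 'sd[b-z]'

echo "------------------------------------"

read -r -p "Which of the disks above do you want to mount: " ci

case $ci in

sda)

echo "Please choose a data disk."

exit

;;

sd[b-z])

# n = new partition, p = primary; the three empty answers accept the default

# partition number, first sector, and last sector; w = write the table

printf 'n\np\n\n\n\nw\n' | fdisk "/dev/$ci"

# Create the mount point

ta=/data/${ci}1

mkdir -p "$ta"

# Format the new partition

mkfs.xfs "/dev/${ci}1" &>/dev/null

# Register the mount in /etc/fstab and mount it

echo "/dev/${ci}1 $ta xfs defaults 0 0" >>/etc/fstab

mount -a

;;

quit)

exit

;;

*)

echo "Not a valid disk name, please try again."

;;

esac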

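[root@localhost ~]# chmod +x disk.sh # make the script executable

[root@localhost ~]# ./disk.sh # run it

Create partition, format, and mount a disk

Disk /dev/sdc: 21.5 GB, 21474836480 bytes, 41943040 sectors

Disk /dev/sde: 21.5 GB, 21474836480 bytes, 41943040 sectors

Disk /dev/sdb: 21.5 GB, 21474836480 bytes, 41943040 sectors

Disk /dev/sdd: 21.5 GB, 21474836480 bytes, 41943040 sectors

------------------------------------

Which of the disks above do you want to mount: sdb # enter the disk to mount here

Run the script once for each of the four disks on all four machines.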
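Since every node needs the same steps for sdb through sde, the per-disk steps can also be generated in a loop. The sketch below only prints the steps (a dry run) so they can be reviewed first; plan_disk is a hypothetical helper, and the device names are the ones from this example.

```shell
#!/bin/bash
# plan_disk DISK -> prints the steps disk.sh performs for DISK (dry run only)
plan_disk() {
    local disk=$1
    case $disk in
        sd[b-z]) ;;                                   # data disks only
        *) echo "refusing to touch '$disk'" >&2; return 1 ;;
    esac
    echo "fdisk /dev/$disk   # answers: n, p, defaults, w"
    echo "mkdir -p /data/${disk}1"
    echo "mkfs.xfs /dev/${disk}1"
    echo "echo '/dev/${disk}1 /data/${disk}1 xfs defaults 0 0' >> /etc/fstab"
}

for d in sdb sdc sdd sde; do
    plan_disk "$d"
done
```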

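After mounting, verify that all four partitions are mounted (for example with df -h).

The following steps are performed on every node; node1 is used as the example.

[root@localhost ~]# hostnamectl set-hostname node1 # change the host name

[root@localhost ~]# su

[root@node1 ~]# vim /etc/hosts # add the host name mappings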

20.0.0.22 node1

20.0.0.23 node2

20.0.0.24 node3

20.0.0.25 node4

[root@node1 ~]# ping node1 # a successful ping by host name confirms the mapping works

PING node1 (20.0.0.22) 56(84) bytes of data.

64 bytes from node1 (20.0.0.22): icmp_seq=1 ttl=64 time=0.043 ms

64 bytes from node1 (20.0.0.22): icmp_seq=2 ttl=64 time=0.070 ms

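The GlusterFS package repository, downloaded from the internet, is stored on the Windows 10 host. Share it from Windows 10 so that the virtual machines can mount it.

On Windows 10:

1. On the folder to share: right-click > Give access to > Specific people > add Everyone with Read permission

2. Configure the local security policy:

Local Policies > User Rights Assignment > "Deny access to this computer from the network": clear the list

Local Policies > Security Options > "Network access: Sharing and security model for local accounts": Guest only

3. Network and Sharing Center > Advanced sharing settings: allow every option and turn off password-protected sharing

If smbclient reports "host is down", make sure the SMB service is enabled.

Set up the glfs repository

[root@node1 ~]# smbclient -L //20.0.0.1/ # list the shared directories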

Enter SAMBA\root's password:

Sharename Type Comment

--------- ---- -------

ADMIN$ Disk Remote Admin

C$ Disk Default share

D$ Disk Default share

E$ Disk Default share

F$ Disk Default share

G$ Disk Default share

gfsrepo Disk

H$ Disk Default share

IPC$ IPC Remote IPC

Users Disk

Connection to 20.0.0.1 failed (Error NT_STATUS_RESOURCE_NAME_NOT_FOUND)

NetBIOS over TCP disabled -- no workgroup available

[root@node1 ~]# mkdir /abc # create the mount point

[root@node1 ~]# mount.cifs //20.0.0.1/gfsrepo /abc # mount the share on /abc

Password for root@//20.0.0.1/gfsrepo: # no password protection, just press Enter

[root@node1 ~]# cd /etc/yum.repos.d

[root@node1 yum.repos.d]# vim glfs.repo # create the glfs yum repository, pointing at the mount point /abc

[GLFS]

name=glfs

baseurl=file:///abc

gpgcheck=0

enabled=1

[root@node1 yum.repos.d]# yum clean all

[root@node1 yum.repos.d]# yum list # list the available packages

[root@node1 yum.repos.d]# ntpdate ntp1.aliyun.com # synchronize the clock (requires internet access)

30 Oct 01:19:22 ntpdate[41521]: adjust time server 120.25.115.20 offset -0.010928 sec

Install with yum and start the gluster service

[root@node1 yum.repos.d]# yum -y install glusterfs glusterfs-server glusterfs-fuse glusterfs-rdma

[root@node1 yum.repos.d]# systemctl start glusterd.service

[root@node1 yum.repos.d]# systemctl enable glusterd.service

Created symlink from /etc/systemd/system/multi-user.target.wants/glusterd.service to /usr/lib/systemd/system/glusterd.service.

[root@node1 yum.repos.d]# systemctl status glusterd.service

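Create the trusted storage pool. Probing the other nodes from any one node is enough; once that is done the pool is complete.

[root@node1 yum.repos.d]# gluster peer probe node2 # add a node to the pool: gluster peer probe <hostname>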

peer probe: success.

[root@node1 yum.repos.d]# gluster peer probe node3

peer probe: success.

[root@node1 yum.repos.d]# gluster peer probe node4

peer probe: success.

[root@node1 yum.repos.d]# gluster peer status # list the peers; each node sees every node except itself

Number of Peers: 3

Hostname: node2

Uuid: 2e130ae0-5865-42bb-b0ac-ac73d9499fba

State: Peer in Cluster (Connected)

Hostname: node3

Uuid: f096134f-02ec-4234-a153-47ae0e93995b

State: Peer in Cluster (Connected)

Hostname: node4

Uuid: 425e09af-2643-4585-b037-2c1cc1bc2bfa

State: Peer in Cluster (Connected)
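With more nodes, the probe commands are easier to run in a loop. A minimal sketch follows; probe_peers and the DRY_RUN switch are assumptions made for this example (with DRY_RUN=1, the default here, the commands are only printed).

```shell
#!/bin/bash
# probe_peers NODE... -> runs "gluster peer probe" for each node.
# With DRY_RUN=1 the commands are printed instead of executed.
DRY_RUN=${DRY_RUN:-1}

probe_peers() {
    local node
    for node in "$@"; do
        if [ "$DRY_RUN" = 1 ]; then
            echo "gluster peer probe $node"
        else
            gluster peer probe "$node" || return 1
        fi
    done
}

probe_peers node2 node3 node4
```

Running it with DRY_RUN=0 on node1 would issue the same three probes shown above.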

Create the distributed volume

[root@node1 ~]# gluster volume create dis-vol node1:/data/sdb1 node2:/data/sdb1 force

volume create: dis-vol: success: please start the volume to access data

[root@node1 ~]# gluster volume info dis-vol # show the volume information

Volume Name: dis-vol

Type: Distribute

Volume ID: 3c138947-448f-4821-b049-7ec67655f17d

Status: Created # the volume starts as Created and must be started before it can be used

Snapshot Count: 0

Number of Bricks: 2

Transport-type: tcp

Bricks: # the bricks that make up the volume

Brick1: node1:/data/sdb1

Brick2: node2:/data/sdb1

Options Reconfigured:

transport.address-family: inet

nfs.disable: on

[root@node1 ~]# gluster volume start dis-vol # start the volume

volume start: dis-vol: success

[root@node1 ~]# gluster volume info dis-vol # check again

Volume Name: dis-vol

Type: Distribute

Volume ID: 3c138947-448f-4821-b049-7ec67655f17d

Status: Started # now Started and ready to use

Snapshot Count: 0

Number of Bricks: 2

Transport-type: tcp

Bricks:

Brick1: node1:/data/sdb1

Brick2: node2:/data/sdb1

Options Reconfigured:

transport.address-family: inet

nfs.disable: on

Create the striped volume

[root@node1 ~]# gluster volume create stripe-vol stripe 2 node1:/data/sdc1 node2:/data/sdc1 force

volume create: stripe-vol: success: please start the volume to access data

[root@node1 ~]# gluster volume start stripe-vol

volume start: stripe-vol: success

[root@node1 ~]# gluster volume info stripe-vol

Volume Name: stripe-vol

Type: Stripe

Volume ID: e0fd6f99-2fc7-4320-83bd-cc1fa6cfa1dc

Status: Started

Snapshot Count: 0

Number of Bricks: 1 x 2 = 2

Transport-type: tcp

Bricks:

Brick1: node1:/data/sdc1

Brick2: node2:/data/sdc1

Options Reconfigured:

transport.address-family: inet

nfs.disable: on

Create the replicated volume

[root@node1 ~]# gluster volume create rep-vol replica 2 node3:/data/sdb1 node4:/data/sdb1 force

volume create: rep-vol: success: please start the volume to access data

[root@node1 ~]# gluster volume start rep-vol

volume start: rep-vol: success

[root@node1 ~]# gluster volume info rep-vol

Volume Name: rep-vol

Type: Replicate

Volume ID: 715f66b9-233f-42c7-9bba-3cfa995cd79a

Status: Started

Snapshot Count: 0

Number of Bricks: 1 x 2 = 2

Transport-type: tcp

Bricks:

Brick1: node3:/data/sdb1

Brick2: node4:/data/sdb1

Options Reconfigured:

transport.address-family: inet

nfs.disable: on

Create the distributed striped volume

[root@node1 ~]# gluster volume create dis-stripe stripe 2 node1:/data/sdd1 node2:/data/sdd1 node3:/data/sdd1 node4:/data/sdd1 force

volume create: dis-stripe: success: please start the volume to access data

[root@node1 ~]# gluster volume start dis-stripe

volume start: dis-stripe: success

[root@node1 ~]# gluster volume info dis-stripe

Volume Name: dis-stripe

Type: Distributed-Stripe

Volume ID: f08670fb-ca8c-48a7-a10e-adc0eddbd2e4

Status: Started

Snapshot Count: 0

Number of Bricks: 2 x 2 = 4

Transport-type: tcp

Bricks:

Brick1: node1:/data/sdd1

Brick2: node2:/data/sdd1

Brick3: node3:/data/sdd1

Brick4: node4:/data/sdd1

Options Reconfigured:

transport.address-family: inet

nfs.disable: on

Create the distributed replicated volume

[root@node1 ~]# gluster volume create dis-rep replica 2 node1:/data/sde1 node2:/data/sde1 node3:/data/sde1 node4:/data/sde1 force

volume create: dis-rep: success: please start the volume to access data

[root@node1 ~]# gluster volume start dis-rep

volume start: dis-rep: success

[root@node1 ~]# gluster volume info dis-rep

Volume Name: dis-rep

Type: Distributed-Replicate

Volume ID: f4c8a913-bf64-44aa-b8b7-4017da8a5709

Status: Started

Snapshot Count: 0

Number of Bricks: 2 x 2 = 4

Transport-type: tcp

Bricks:

Brick1: node1:/data/sde1

Brick2: node2:/data/sde1

Brick3: node3:/data/sde1

Brick4: node4:/data/sde1

Options Reconfigured:

transport.address-family: inet

nfs.disable: on

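On the client

Set up the glfs yum repository the same way as on the nodes.

[root@localhost ~]# yum -y install glusterfs glusterfs-fuse # install the client tools

[root@localhost ~]# vim /etc/hosts # add the host name mappings so the nodes can be resolved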

20.0.0.22 node1

20.0.0.23 node2

20.0.0.24 node3

20.0.0.25 node4

[root@localhost ~]# mkdir /gfs # create the mount points

[root@localhost ~]# mkdir /gfs/stripe

[root@localhost ~]# mkdir /gfs/rep

[root@localhost ~]# mkdir /gfs/dis_stripe

[root@localhost ~]# mkdir /gfs/dis_rep

[root@localhost ~]# mkdir /gfs/dis

[root@localhost ~]# mount.glusterfs node1:dis-vol /gfs/dis # mount each volume on its matching directory

[root@localhost ~]# mount.glusterfs node1:stripe-vol /gfs/stripe

[root@localhost ~]# mount.glusterfs node1:rep-vol /gfs/rep

[root@localhost ~]# mount.glusterfs node1:dis-stripe /gfs/dis_stripe

[root@localhost ~]# mount.glusterfs node1:dis-rep /gfs/dis_rep
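Mounts made with mount.glusterfs do not survive a reboot. One common approach is an /etc/fstab entry per volume, using the glusterfs file system type with the _netdev option. The sketch below only prints such lines for the five volumes in this example so they can be reviewed before being appended; the helper name fstab_line is an assumption for illustration.

```shell
#!/bin/bash
# fstab_line SERVER VOLUME MOUNTPOINT -> prints one /etc/fstab entry
fstab_line() {
    printf '%s:/%s %s glusterfs defaults,_netdev 0 0\n' "$1" "$2" "$3"
}

# volume name -> mount directory under /gfs, as used in this walkthrough
while read -r vol dir; do
    fstab_line node1 "$vol" "/gfs/$dir"
done <<'EOF'
dis-vol dis
stripe-vol stripe
rep-vol rep
dis-stripe dis_stripe
dis-rep dis_rep
EOF
```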

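5. Test Results (Explained)

Create five test files, each written with a 1 MB block size and a count of 40 (40 MB per file), then copy them into each mounted volume.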

dd if=/dev/zero of=/test1.log bs=1M count=40

dd if=/dev/zero of=/test2.log bs=1M count=40

dd if=/dev/zero of=/test3.log bs=1M count=40

dd if=/dev/zero of=/test4.log bs=1M count=40

dd if=/dev/zero of=/test5.log bs=1M count=40
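The five dd commands above differ only in the file number, so they can be written as a loop. A minimal sketch, where make_test_files and its parameters are names chosen for this example:

```shell
#!/bin/bash
# make_test_files DIR N COUNT BS -> creates DIR/test1.log .. DIR/testN.log,
# each consisting of COUNT zero-filled blocks of size BS
make_test_files() {
    local dir=$1 n=$2 count=$3 bs=$4 i
    for (( i = 1; i <= n; i++ )); do
        dd if=/dev/zero of="${dir%/}/test$i.log" bs="$bs" count="$count" \
            2>/dev/null || return 1
    done
}

# Equivalent to the five dd commands above (not executed here):
#   make_test_files / 5 40 1M
```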

[root@localhost ~]# cd /gfs

[root@localhost gfs]# cp /test* dis

[root@localhost gfs]# cp /test* dis_rep

[root@localhost gfs]# cp /test* dis_stripe

[root@localhost gfs]# cp /test* rep

[root@localhost gfs]# cp /test* stripe

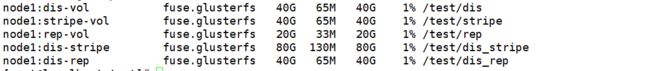

查看存储情况

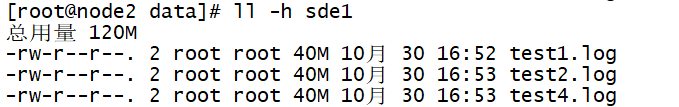

dis-vol为分布式卷,由node1和node2的sdb1组成,分布如下,通过hash算法分开存储了

stripe-vol为条带卷,由node1的sdc1和node2的sdc1组成,可以看到,它每一份文件都分开两块存储

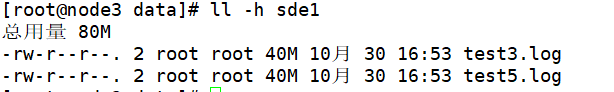

rep-vol复制卷,由node3的sdb1和node4的sdb1组成,可以看到两个部分一样,复制存储

dis-stripe为分布式条带卷,可以看到先分布式把124放到node1和2,把3和5f放到node3和4,然后在内部进行条带存储

dis-rep分布式复制卷,可以看到分布式和上面那种一样,但是是复制存储,每个文件都是复制存放在两个节点内