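- PHP Environment Setup: A Detailed Tutorial

好看资源平台

frontend, php

PHP is a popular server-side scripting language widely used in web development. To run PHP locally or on a server, we need to set up a suitable PHP environment. Drawing on up-to-date material, this tutorial covers several ways to set up a PHP development environment on different operating systems, including installation steps for Windows, macOS, and Linux, as well as local and Docker-based configuration. 1. Overview of PHP environment setup. PHP environments fall mainly into the following categories: integrated development environments such as XAMPP, WAMP, and MAMP, these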

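- High-End Code Academy Notes 285

柚子_b4b4

High-End Happiness Code Academy (advanced class). Happiness ambassador: 李华. Session 598 of "Happiness", Returning to the Deep Inner Life Force, Foundations: Revealing the Joy of Growth Through "Motivation", a psychological case analysis. Lecturer: 刘莉. I. Knowledge expansion: success = hard work + the right method + less empty talk. A boatman who craves ease will always end up downstream. A wise man's dream, however beautiful, is worth less than a fool's footprints of real work. Happiness morning class, Friday 2020.10.16. Notes: 1. The precondition for valuing and cherishing something is knowing how important its value is; once you cherish it, you truly settle down and truly absorb what you learn. 2. Everyone needs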

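- [Huawei OD Technical Interview Questions: Technical Round] Python Stock-Question Bank (1)

算法大师

Huawei OD, interview, python

Huawei OD Interview Questions Selection column: Huawei OD Interview Questions Selection. Contents: 2024 Huawei OD interview live-coding question index and stock-question index. Article contents: Huawei OD Interview Questions Selection. 1. Data preprocessing workflow: main steps of data preprocessing; tools and libraries. 2. Introduce the linear regression and logistic regression models. Linear Regression: model form; key points. Logistic Regression: model form; key points; parameter estimation and evaluation. 3. Shallow copy and deep copy in Python: shallow copy (Shal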
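The excerpt breaks off just as it reaches shallow versus deep copy. As a minimal sketch of that distinction (the variable names are illustrative and not taken from the article), the standard `copy` module can be used like this:

```python
import copy

original = [[1, 2], [3, 4]]

# Shallow copy: a new outer list whose items are the same inner lists.
shallow = copy.copy(original)

# Deep copy: a new outer list with recursively copied inner lists.
deep = copy.deepcopy(original)

# Mutating an inner list is visible through the shallow copy only.
original[0].append(99)

print(shallow[0])  # shares the inner list with `original`
print(deep[0])     # unaffected by the mutation
```

Appending to the outer list of `original` instead would affect neither copy, since both copies own their outer list.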

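- [Part 6] 阿伟 Starts Setting Up a Kafka Learning Environment

能源恒观

middleware learning, kafka, spring

阿伟 starts setting up a Kafka learning environment. Overview: In the previous article, 阿伟 studied Kafka's core concepts and compared the features of the popular message middleware products so that readers can learn by comparison, then summarized some problems frequently encountered when using Kafka and ways to solve them. After the previous article, readers should have a first impression of Kafka; this article continues from there. I. Installation and configuration: learning a technology starts with setting up a service, and running Kafka mainly requires deploying the jdk, zook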

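- Implementing Simple Machine Learning Algorithms in Python

master_chenchengg

python, python office productivity, python development, IT

Implementing simple machine learning algorithms in Python. Opening: a first look at the wonderful journey of machine learning. Setting up the environment: everything starts with installation. The essential toolbox. Step one: install Anaconda and Jupyter Notebook. Tip: how to configure the Python environment variables. First taste of algorithms: Python machine learning from scratch. Linear regression: let the data speak. Data preparation: where to find data. Hands-on coding: implementing linear regression in Python. Model evaluation: how to judge a model. Logistic regression: starting with classification. Theory primer: what logistic regression is. Code implementation: using skl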

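- springboot+vue Hands-On Project, Part 1: Creating a Simple SpringBoot Project

苹果酱0567

interview questions and analysis, springboot, backend, java, middleware, programming languages

Lately I have been using my spare time to build a personal blog for my girlfriend, and I wanted to record the process as notes. Copying zjblog would get a site up in an hour, and a CMS would give a site with both a front end and an admin back end in three or four hours, in whatever shape I like. But I want to build it from scratch: making it myself means something different, and besides, this is what I do for a living. To build a site from scratch, the first step is choosing the technology stack. After some thought I decided on SpringBoot for the back end, with Vue on the front end to make a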

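- Vue (Getting Started with ElementUI, Installing vue-cli)

m0_l5z

elementui, vue.js

I. Getting started with ElementUI. Contents: 1. Getting started with ElementUI 1.1 Introduction to ElementUI 1.2 Installing Vue + ElementUI 1.3 Development example 2. Setting up the nodejs environment 2.1 Introduction to nodejs 2.2 What npm is 2.3 Setting up the nodejs environment 2.3.1 Download 2.3.2 Extract 2.3.3 Configure environment variables 2.3.4 Configure the npm global module path and default cache location 2.3.5 Change the npm registry mirror to speed up downloads 2.3.6 Verify the installation 3. Running n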

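- [Automotive Test Automation with Python] Implementing Automotive Ethernet DoIP Flashing in Python (with Python Source Code)

疯狂的机器人

Automotive Test Automation with Python, python, DoIP, UDS, ISO 14229, ISO 13400, Bootloader, tcp/ip

Series index: [Automotive Test Automation with Python] series index. Article contents: Series index. Preface. I. Environment setup: 1. Software environment 2. Hardware environment. II. Directory structure. III. Source code: 1. Basic DoIP diagnostic functions 2. DoIP diagnostic business functions 3. Service 27 security unlock 4. Automated DoIP flashing. IV. Test logs: 1. Test logs. V. Link to the complete source code. Preface: With the growth of the smart electric vehicle industry, a car = a smart terminal + four wheels, and every automaker has launched its own OTA upgrade solution. This chapter mainly describes how to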

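- Purchase-Sales-Inventory Mini Program Source Code: A Web-Based PHP ERP Inventory Management System, Fully Open Source and Customizable

摸鱼小号

php

Can be deployed directly from source and used once published. I. Functional modules: the system consists of three main modules, purchasing, sales, and inventory; the functions of each are described below. Purchasing: products are purchased and received into stock, which automatically generates a purchase detail ledger and a linked payment bill in finance. Sales: records customer sales orders and aggregates them into product sales details and a payment collection plan. Inventory: routine stocktaking and statistics, low-stock alerts, inbound and outbound ledgers, storage locations, and so on. 1. Purchase management. Purchase order: place the order for approval → once a supervisor approves it, receive the purchase into stock; Purchase receipt: goods arrive>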

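- The Simplest Way to Host a Static Web Page on a Server (Without nginx)

全能全知者

server, nginx, operations, frontend, html, notes

The simplest way to host a static web page on a server (without nginx). Reaching for nginx every time you want to put up a simple static page is too much trouble, so using Apache directly is much more convenient for simple static front-end pages. Check the web server status: sudo systemctl status httpd # Apache web server. If no web server is installed, install Apache: sudo yum install httpd. Start Apache: sudo systemctl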

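- How Do You Download the AI Art Tool Midjourney? With a Guide to Managing Your Works

设计师早上好

Midjourney is a powerful AI art tool that uses machine learning techniques and algorithms such as deep neural networks to generate artwork in a variety of artistic styles. It has broad application prospects in creative design, advertising, and other areas. So how do you download the AI art tool Midjourney? This article covers downloading Midjourney and managing your works. I. Downloading Midjourney: downloading Midjourney is very simple; just open the Midjourney website (click "GetMidjour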

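- Setting Up a Private NPM Registry with verdaccio (Linux)

Beam007

npm, linux, frontend

1. Install node. Installing node on a Linux server: a) Download the required node version from the official site: https://nodejs.org/dist/v14.21.0/ b) Extract the package. If the download is an xxx.tar.xz file, the extraction command is tar -xvf xxx.tar.xz c) Edit the environment variables. Edit the /etc/profile file: #SET PATH FOR NODEJS export NODE_HOME=(path where NODEJS was extracted) export PAT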

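- [Practice] Performance Evaluation Metrics for Deep Learning Models

YuanDaima2048

deep learning tools, deep learning, artificial intelligence, loss functions, performance evaluation, pytorch, python, machine learning

Article index: YuanDaiMa2048 blog article index. Performance evaluation metrics for deep learning models: classification tasks, regression tasks, ranking tasks, clustering tasks, generation tasks, other. Introduction: In machine learning and deep learning, evaluating model performance is a crucial task. Different learning tasks need different metrics to measure how effective a model is. The following explains and summarizes some common tasks and their corresponding metrics. Classification tasks: a classification task requires the model to assign input data to predefined categories or labels. Commonly used metrics for classification are: accuracy (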

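- [Practice] Optimizers in Deep Learning

YuanDaima2048

deep learning tools, pytorch, deep learning, artificial intelligence, machine learning, python, optimizers

Article index: YuanDaiMa2048 blog article index. Optimizers in deep learning: 1. Stochastic gradient descent (SGD) 2. Momentum 3. Adaptive gradient (Adagrad) 4. Adaptive moment estimation (Adam) 5. RMSprop. Summary. Other. Introduction: In deep learning, an optimizer updates a model's parameters to minimize the loss function. There are many optimizers; below are several mainstream ones with their characteristics, principles, and PyTorch implementations. 1. Stochastic gradient descent (SGD). Principle: stochastic gradient descent works by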

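- [2023] Cloud Computing BRICS Warm-Up 6

geekgold

cloud computing, servers, networking, kubernetes, containers

Set 1. [Task 1] Private cloud service setup [10 points]. [Question 1] Basic environment configuration [0.5 points]. Using the provided username and password, log in to the provided OpenStack private cloud platform. Under the current tenant, use the CentOS 7.9 image to create two cloud hosts with the 4vCPU/12G/100G_50G flavor. The current tenant has one network interface by default; create a second one yourself and attach it to the controller and compute nodes (the second interface's subnet is 10.10.X.0/24, where X is the workstation number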

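- Always Take Things Seriously

地上的垚

My brain was jolted awake and I sat bolt upright, then stared blankly for a moment. Once I came to my senses, I realized: I am going to be late for work today! I pressed my phone and found it was off; a quick look showed that the charger had not been plugged in last night. Such a trivial thing reflects my carelessness and my indifferent, perfunctory attitude toward things. A great many things can only be done well when taken seriously, and being serious is an expression of one's own effort. At work I keep making mistakes. I scold myself: you get even something this small wrong, what use are you? At the same time I comfort myself: it is only a small mistake,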

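- Andrew Ng Deep Learning Notes (30): Regularization Explained

极客Array

Regularization. Deep learning may suffer from overfitting, that is, high variance. There are two remedies: one is regularization, and the other is to get more data. More data is a very reliable fix, but you may not always be able to get enough training data, or getting more may be costly, whereas regularization usually helps to avoid overfitting or reduce your network's error. If you suspect your neural network is overfitting the data, that is, that it has a high-variance problem, the first thing to try is probably regularization. The other way to address high variance is to get more data, which is also very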

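- Personal Study Notes 7-6: Dive into Deep Learning, PyTorch Edition (李沐)

浪子L

deep learning, deep learning notes, computer vision, python, artificial intelligence, neural networks, pytorch

#artificial intelligence# #deep learning# #semantic segmentation# #computer vision# #neural networks# Computer vision. 13.11 Fully convolutional networks. A fully convolutional network (FCN) uses a convolutional neural network to transform image pixels into pixel classes. It does this by introducing transposed convolution, so that the output class predictions correspond one-to-one with the input image at the pixel level: the output along the channel dimension is the class prediction for the pixel at that position. 13.11.1 Constructing the model. Below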

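- Handling Expired Certificates on a k8s Cluster Built with kubeadm

我滴鬼鬼呀wks

k8s, 1024 Programmer's Day

Certificates expired on a k8s cluster deployed with kubeadm. 1. Check the certificate expiration dates: kubeadm alpha certs check-expiration. If the certificates have already expired and kubectl cannot be used, the suggestion is to set the server time back to a period before expiry. 2. Configure the kube-controller-manager.yaml file: cat /etc/kubernetes/manifests/kube-controller-manager.yaml apiVersion: v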

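- The Role of Pooling in Computer Vision

Wils0nEdwards

computer vision, artificial intelligence

In computer vision, pooling is a common operation used mainly in convolutional neural networks (CNNs). It downsamples feature maps, reducing the spatial dimensions of the data while retaining important feature information. The role of pooling can be summarized as follows: 1. Reducing computational complexity and memory requirements. Pooling downsamples the feature map, reducing its spatial resolution (for example, height and width). This means the network has less data to process, which lowers computation and memory requirements. For large neural networks this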
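The downsampling described above can be sketched in plain Python. This is an illustrative example only: the 2x2 window, stride of 2, max reduction, and the `max_pool_2x2` name are assumptions, since the excerpt does not fix a pooling variant.

```python
def max_pool_2x2(feature_map):
    """Downsample a 2-D feature map using a 2x2 window and stride 2 (assumed settings)."""
    rows, cols = len(feature_map), len(feature_map[0])
    pooled = []
    for r in range(0, rows - 1, 2):
        pooled_row = []
        for c in range(0, cols - 1, 2):
            # Keep only the strongest activation in each 2x2 window.
            window = [
                feature_map[r][c], feature_map[r][c + 1],
                feature_map[r + 1][c], feature_map[r + 1][c + 1],
            ]
            pooled_row.append(max(window))
        pooled.append(pooled_row)
    return pooled


feature_map = [
    [1, 3, 2, 0],
    [4, 6, 1, 1],
    [0, 2, 9, 8],
    [1, 1, 7, 5],
]
pooled = max_pool_2x2(feature_map)
print(pooled)  # 4x4 input becomes a 2x2 output
```

The 16 input values shrink to 4, which is the reduction in data volume the excerpt refers to.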

- 神经网络-损失函数

红米煮粥

神经网络人工智能深度学习

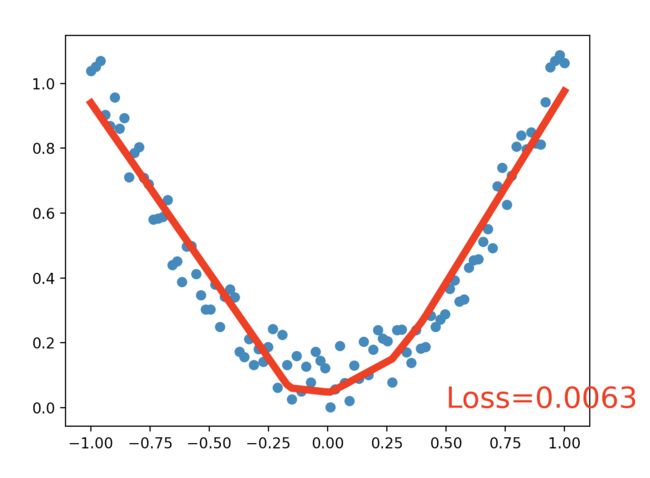

文章目录一、回归问题的损失函数1.均方误差(MeanSquaredError,MSE)2.平均绝对误差(MeanAbsoluteError,MAE)二、分类问题的损失函数1.0-1损失函数(Zero-OneLossFunction)2.交叉熵损失(Cross-EntropyLoss)3.合页损失(HingeLoss)三、总结在神经网络中,损失函数(LossFunction)扮演着至关重要的角色,它

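- Playwright Tutorial: Automating CAPTCHAs

吉小雨

python libraries, automation, databases, operations, python

Playwright tutorial: automating CAPTCHA clicks. In automated testing, Playwright is a popular browser automation tool that supports efficient operation of multiple browsers. CAPTCHAs (image CAPTCHAs, slider CAPTCHAs, and so on) are a common anti-automation mechanism on web pages and often need special handling. We will show how to use Playwright to automate CAPTCHA clicks and provide approaches for several common CAPTCHA types. Official documentation link: Playwright official documentation. I. Setting up the Playwright environment. 1.1 Installing Playwri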

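- [HarmonyOS Apps] A Summary of ArkUI

读心悦

HarmonyOS basics, HarmonyOS apps

ArkUI is the UI development framework for HarmonyOS application interfaces. It provides concise UI syntax, UI components, animation mechanisms, event interaction, and other UI development basics to meet application developers' needs for building interfaces. A component is the smallest unit for building an interface; developers combine components to form a complete interface. A page is ArkUI's smallest unit of scheduling and separation; developers can design an application as multiple functional pages, manage each page in its own file, and use the page routing API to handle scheduling between pages, so as to achieve the application's functional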

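- Unattended Mode: Is Starting a Self-Study Room Business Really That Profitable?

森屿旅人

"Starting a business is a road of no return; do not gamble with money you cannot afford to lose!" Before sharing my experience of starting an unattended self-study room, I want to stress the sentence above; anyone who has started a business will know it well. We need to understand how markets work: if an industry is highly profitable, people will inevitably rush into it, so once the market has fully played out, it returns to underlying value. This is an objective law of the market. So do not chase trends; chasing trends is, frankly, no different from gambling, and the people hyping a trend to you will always treat you as a leek to be harvested. Admittedly, truly profitable projects, not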

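- Loss Functions and Backpropagation

Star_.

PyTorch, pytorch, deep learning, python

Loss functions: definition and role. In deep learning, a loss function computes the error between the output predicted by the model you have built and the true value. 1. The smaller the loss, the better. 2. It measures the gap between the actual output and the target. 3. It provides the basis for updating the output (backpropagation). Common loss functions: common regression losses include mean squared error (MSE), mean absolute error (MAE), and Huber loss, which is one that takes MSE and MAE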
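The three regression losses named above can be sketched in plain Python. This is a minimal illustration, not the article's PyTorch code; the function names, the sample values, and the Huber threshold `delta=1.0` are assumptions.

```python
def mse(y_true, y_pred):
    """Mean squared error: average of squared differences."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def mae(y_true, y_pred):
    """Mean absolute error: average of absolute differences."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def huber(y_true, y_pred, delta=1.0):
    """Huber loss: quadratic for small errors, linear for large ones."""
    total = 0.0
    for t, p in zip(y_true, y_pred):
        err = abs(t - p)
        if err <= delta:
            total += 0.5 * err ** 2             # MSE-like region
        else:
            total += delta * (err - 0.5 * delta)  # MAE-like region
    return total / len(y_true)


y_true = [1.0, 2.0, 3.0]
y_pred = [1.5, 2.0, 6.0]  # the last prediction is an outlier

print(mse(y_true, y_pred), mae(y_true, y_pred), huber(y_true, y_pred))
```

With the outlier in the last position, MSE is dominated by the squared error of 9, while the Huber value stays closer to MAE.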

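- Plotting the Chemical Space Distribution of Molecules in Python (UMAP)

Sakaiay

python

Using umap to plot the chemical space distribution of molecules. Installation: pip install umap-learn. Below is an example using a dataset: import torch import umap import pandas as pd import numpy as np import matplotlib.pyplot as plt import seaborn as sns from sklearn.manifold import TSNE from rdkit.Chem import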

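- 7. Regularization

愿风去了

Andrew Ng's Machine Learning: Regularization http://www.cnblogs.com/jianxinzhou/p/4083921.html Understanding L1 and L2 from the mathematical formulas https://blog.csdn.net/b876144622/article/details/81276818 Although adding basis functions to linear regression makes the model more flexible, it easily leads to overfitting. For example, if the data are projected onto 30-dimensional basis functions, the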

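- Transfer Functions for BP Neural Networks

大胜归来19

MATLAB

BP networks generally use three layers; four or more layers are rarely used. As for choosing the transfer function: if the result you want to predict takes a few fixed values such as 1 and 0, outputting 1 when some condition is met and 0 otherwise, the first choice that comes to mind is hardlim, the threshold-type function, though others can be considered too. If the network is meant to express a linear relationship, use purelin, the linear transfer function. For a nonlinear relationship, use one of the other nonlinear transfer functions. In a multilayer network, the layers need not all use the same transfer function; the three types can be combined, and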
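To make the choice concrete, here is a minimal Python sketch of the two transfer functions named above. The names mirror the MATLAB functions `hardlim` and `purelin`; `logsig` is added only as one assumed example of a nonlinear choice, since the excerpt does not say which nonlinear function it has in mind.

```python
import math


def hardlim(n):
    """Threshold transfer function: 1 when n >= 0, otherwise 0."""
    return 1 if n >= 0 else 0


def purelin(n):
    """Linear transfer function: passes the net input through unchanged."""
    return n


def logsig(n):
    """Log-sigmoid, an assumed example of a nonlinear transfer function."""
    return 1.0 / (1.0 + math.exp(-n))


for net_input in (-2.0, 0.0, 2.0):
    print(net_input, hardlim(net_input), purelin(net_input), logsig(net_input))
```

`hardlim` suits yes/no outputs, `purelin` leaves the value unbounded for linear targets, and `logsig` squashes it into the open interval (0, 1).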

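- The Sigmoid Transfer Function in Neural Networks and What Transfer Functions Do

快乐的小荣荣

neural networks, machine learning, deep learning, artificial intelligence

Does the choice of transfer function in a neural network make a very big difference? If only the last layer is linear while the preceding layers are nonlinear, the difference may be small. A linear function satisfies f(a*input) = a*f(input). In general the input is a vector; in the simplest case you can assume the same factor for every dimension of the input, a1 = a2 = a3 ..., which means the linear layer merely scales the input. A neural network works because sufficiently complex nonlinear functions let it model any distribution. So a neural network must use nonlinear functions.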
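The argument can be checked numerically: two stacked linear layers compute exactly what one merged linear layer computes, while a nonlinearity between them breaks that equivalence. The sketch below uses plain Python lists; the weights, the input, and the choice of ReLU as the nonlinearity are arbitrary assumptions for illustration.

```python
def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    cols = list(zip(*b))
    return [[sum(x * y for x, y in zip(row, col)) for col in cols] for row in a]


def relu(vector):
    """Element-wise ReLU, used here as an example nonlinearity."""
    return [max(0, v) for v in vector]


W1 = [[1, 2], [3, 4]]  # first layer weights (arbitrary)
W2 = [[0, 1], [1, 1]]  # second layer weights (arbitrary)
x = [1, -1]

two_linear_layers = matvec(W2, matvec(W1, x))
one_merged_layer = matvec(matmul(W2, W1), x)
with_nonlinearity = matvec(W2, relu(matvec(W1, x)))

print(two_linear_layers, one_merged_layer, with_nonlinearity)
```

The first two results are identical, so depth adds nothing without a nonlinearity; the third differs because ReLU zeroes the negative activations before the second layer.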

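- A Star in the Palm

江封时

chapter.1 易言 believed that 林半星 would be the most beautiful accident of his life. Yes, an accident. An accident of youth. The first time he met 林半星 was at the bicycle shed around the corner from the school gate. Since hardly anyone wanted to squeeze onto the bus or walk to school, many students had been cycling in since last semester, hence this crude temporary shed the school had put up. 易言's family's small shop and small house were across the street from the school. It was not far, but he hated walking, so for convenience he rode his father's rather old black bicycle. The black bicycle did not even match the street-side shared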

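- MD5 Encryption in the 旗正 Rule Engine

何必如此

jsp, MD5, rules, encryption

Generally, to prevent leaks of personal data, we encrypt user login passwords so that the corresponding database field stores the encrypted string rather than the original password.

In the 旗正 rule engine, MD5 encryption can be done through an external call. The steps are as follows:

1. In the object library, choose external call, select "com.flagleader.util.MD5", and in its sub-options select "com.flagleader.util.MD5.getMD5ofStr({arg1})";

2. In the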

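- [Spark101] Scala Promise/Future in Spark

bit1129

Promise

Promise and Future are Scala concurrency primitives for making asynchronous calls and collecting their results. Scala's Future has the same meaning as the Future interface in JUC, while Promise is somewhat harder to grasp. When I have time I will look more closely at the semantics and use cases of Promise and Future; for details see the Scala online documentation: http://docs.scala-lang.org/sips/completed/futures-promises.html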

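- Configuring spark sql to Access hive Data in Detail

daizj

spark sql, hive, thriftserver

spark sql can access hive data through thriftserver. The default spark build does not support hive access: because hive has many dependencies, the packaged distribution includes neither hive nor thriftserver. You therefore need to download the source and build it yourself with hive and thriftserver packaged in. The detailed steps are as follows:

1. Download the source

2. Download Maven and configure it

This configuration is simple, so it is skipped here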

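- HTTP Protocol Communication

周凡杨

java, httpclient, http communication

I. Introduction

HTTPCLIENT: point-to-point communication from JAVA over the HTTP protocol.

II. Code example

Test class: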

import java

- Converting Unix Timestamps in java

g21121

java

Convert a java timestamp to a unix timestamp:

Timestamp appointTime=Timestamp.valueOf(new SimpleDateFormat("yyyy-MM-dd HH:mm:ss").format(new Date()))

SimpleDateFormat df = new SimpleDateFormat("yyyy-MM-dd hh:m

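- Usage Summary of Common Functions in the Web Reporting Tool FineReport (Report Functions)

老A不折腾

web reports, finereport, summary

Note: in this summary, any function that takes tableName or viewName as a parameter looks it up first in the private data sources and then in the public data sources when called.

CLASS

CLASS(object): returns the class that object belongs to.

CNMONEY

CNMONEY(number,unit): returns the amount in renminbi capital numerals.

number: the numeric value to convert.

unit: the unit,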

- Error When Calling c++ Code from java via jni

墙头上一根草

java, C++, jni

#

# A fatal error has been detected by the Java Runtime Environment:

#

# EXCEPTION_ACCESS_VIOLATION (0xc0000005) at pc=0x00000000777c3290, pid=5632, tid=6656

#

# JRE version: Java(TM) SE Ru

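- Event-Handling Tips in Spring

aijuans

spring, Spring tutorial, Spring examples, Spring basics, Spring3

Spring provides a number of Aware interfaces, such as BeanFactoryAware, ApplicationContextAware, ResourceLoaderAware, and ServletContextAware; the most commonly used is ApplicationContextAware. A Bean that implements ApplicationContextAware will, after the Bean is initialized, be injected with Applicati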

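- A Sample linux shell ls Script

annan211

linux, linux ls source, linux source

#! /bin/sh -

# Find the path of input files

# Search a search path for one or more original files or file patterns

# The search path is defined by a specified environment variable

# Standard output is normally the full path of the first instance of each file found on the search path,

# or "filename: not found" on standard error.

# The exit code is 0 if all files are found;

# otherwise it is the number of files not found

# Usage: pathfind [--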

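- Ways to Iterate over List, Set, and Map (Collected Resources, Worth a Look)

百合不是茶

list, set, Map, iteration

List: elements keep their insertion order and may repeat

Map: elements are stored as key-value pairs, with no insertion order

Set: elements have no insertion order and may not repeat (note: although elements have no insertion order, an element's position in the set is determined by its HashCode, so its position is in fact fixed)

The List interface has three implementing classes: LinkedList, ArrayList, Vector

LinkedList: backed by a linked list, whose memory is scattered; each element stores itself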

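- Ways to Fix the Thread-Safety Problem of SimpleDateFormat

bijian1013

java, thread, thread safety

In Java projects we usually write our own DateUtil class to convert between dates and strings, as shown below: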

public class DateUtil01 {

private SimpleDateFormat dateformat = new SimpleDateFormat("yyyy-MM-dd HH:mm:ss");

public void format(Date d

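- An http Request Test Example (Parsing with fastjson)

bijian1013

http, testing

In real development we often write code that makes http requests. Below is a small unit-test example of how to make a request, for reference only.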

import java.util.HashMap;

import java.util.Map;

import org.apache.commons.httpclient.HttpClient;

import

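- [RPC Framework Hessian, Part 3] Exception Handling in Hessian

bit1129

hessian

RPC exception handling overview

RPC exception handling concerns this question: when a client calls a remote service and an exception occurs while the service executes, can that exception be serialized to the client?

If a service may throw an exception during execution, the service interface declaration should declare the exceptions it may throw.

In Hessian, when an exception occurs on the server, the exception information can be serialized from the server to the client, because Exception itself implements Serializable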

- [Log Analysis] Log Analysis Tools

bit1129

log analysis

1. GoAccess, a real-time website log analyzer

http://www.vpsee.com/2014/02/a-real-time-web-log-analyzer-goaccess/

2. Monitoring and collecting Java application performance data through logs (Perf4J)

http://www.ibm.com/developerworks/cn/java/j-lo-logforperf/

3. log.io

and

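- Tuning nginx for More Power, and Fixing the Pitfalls Encountered

ronin47

nginx, optimization

First, the pitfalls. The first was a load-balancing problem related to the architecture: my design had two extra layers, so session load was directed to only one node. There are two ways to solve this: change the load-balancing strategy, or change the architecture.

Because the deployment separates dynamic and static content, and nginx was also set up to serve static content, clients read static content from nginx, and as traffic grew pages loaded slowly. Solution: keep only one of the two, preferably the apache server.

Now for the following optimizations: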

- java-50: Given Two Binary Trees A and B, Determine Whether B Is a Substructure of A

bylijinnan

java

The idea comes from:

http://zhedahht.blog.163.com/blog/static/25411174201011445550396/

import ljn.help.*;

public class HasSubtree {

/**Q50.

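* Given two binary trees A and B, determine whether B is a substructure of A.

For example, for the two trees A and B in the figure below, because the structure of part of a subtree in A and B is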

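- mongoDB Backup and Restore

开窍的石头

mongoDB, backup and restore

Mongodb export and import

1: Import/export can operate on a local mongodb server or a remote one.

So both share the following common options:

-h host: host

--port port: port

-u username: user name

-p passwd: password

2: mongoexport exports files in json format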

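- [Networks and Communication] Some Issues in Elliptical Orbit Calculation

comsci

networks

According to the ancient Chinese lunar calendar, it should now be the start of a certain season. But the lunar calendar is based on astronomical observations from 3,000 years ago, and if it were corrected using modern astronomical records, that season would already have passed some time ago...

In other words, we would have to wait another 3,000 years for the chance. The elliptical orbits of the solar system's planets are perturbed by external celestial bodies, and the order of the orbits has chang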

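- How to Apply for a Software Patent

cuiyadll

software patent application

Software can be protected by a software copyright, which covers the source code, or by an invention patent, which covers how the steps in the software's process are carried out. A patent protects the idea by which the software solves a problem, whereas a software copyright protects the code (that is, the form in which the idea is expressed). Take offline file transfer as an example: an invention patent protects how offline file transfer is achieved. Starting from the same idea, there are countless ways to write the code for offline transfer, and each piece of code can enjoy its own software copyright. The agency fee for one software invention patent application is roughly 5000-8000. Applying for an invention patent can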

- Android Study Notes

darrenzhu

android

1. Start an AVD

2. Run adb shell on the command line to connect to the AVD; this is the command-line client

3. How to start a program

am start -n package name/.activityName

am start -n com.example.helloworld/.MainActivity

The command to start the Android Settings tool is as follows:

# am start -

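- apache Virtual Host Configuration: Accessing Local Sites via Multiple Local Domains

dcj3sjt126com

apache

Suppose you have two directories, one at /htdocs/a and the other at /htdocs/b.

Now, when testing locally, you want www.freeman.com to map to the directory /xampp/htdocs/freeman and www.duchengjiu.com to map to the directory /htdocs/duchengjiu.

1. First, in the WINDOWS\system32\drivers\etc directory on drive C, edit the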

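- yii2 restful web Services [Rate Limiting]

dcj3sjt126com

PHP, yii2

Rate limiting

To prevent abuse, you should consider adding rate limiting to your API. For example, you can limit each user to at most 100 API calls within 10 minutes. If too many requests are received from a user within that period, a response with status code 429 (meaning too many requests) is returned.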
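The policy in that example (at most 100 calls per user per 10 minutes, otherwise 429) can be sketched independently of any framework. The following is a simple sliding-window illustration in Python, not Yii's own mechanism; the `RateLimiter` class, its method names, and the explicit `now` argument are all assumptions made for the sketch.

```python
from collections import defaultdict, deque


class RateLimiter:
    """Sliding-window limiter: at most max_calls per user in window_seconds."""

    def __init__(self, max_calls=100, window_seconds=600):
        self.max_calls = max_calls
        self.window_seconds = window_seconds
        self._calls = defaultdict(deque)  # user id -> timestamps of accepted calls

    def check(self, user_id, now):
        """Return 200 if the call is allowed, 429 if the user is over the limit."""
        calls = self._calls[user_id]
        # Forget calls that have fallen out of the window.
        while calls and now - calls[0] >= self.window_seconds:
            calls.popleft()
        if len(calls) >= self.max_calls:
            return 429
        calls.append(now)
        return 200


limiter = RateLimiter(max_calls=100, window_seconds=600)
# One call per second from the same user: the 101st falls inside the window.
statuses = [limiter.check("alice", now=second) for second in range(101)]
print(statuses[99], statuses[100])
```

Passing `now` explicitly keeps the sketch deterministic; a real service would read the clock and keep the counters in shared storage rather than in process memory.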

To enable rate limiting, the [[yii\web\User::identityClass|user identity class]] should implement [[yii\filter

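- Installing Hadoop 2.5.2: Standalone Mode

eksliang

hadoop, hadoop standalone deployment

When reposting, please cite the source: http://eksliang.iteye.com/blog/2185414 I. Overview

Hadoop has three modes: standalone, pseudo-distributed, and fully distributed. This article first gives a brief introduction to standalone mode. By default, Hadoop is configured in non-distributed mode and runs as a single JAVA process, which is suitable for initial debugging.

II. Download location

Hadoop site http: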

- LoadMoreListView+SwipeRefreshLayout (Paged Pull-to-Refresh): Basic Structure

gundumw100

android

Everything for the sake of fast iteration

import java.util.ArrayList;

import org.json.JSONObject;

import android.animation.ObjectAnimator;

import android.os.Bundle;

import android.support.v4.widget.SwipeRefreshLayo

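- Three Simple Front-End HTML/CSS Questions

ini

html, Web, frontend, css, questions

When using CSS to give multiple web pages the same layout and appearance, it is best to use ( ) so that the pages are easy to modify. http://hovertree.com/shortanswer/bjae/7bd72acca3206862.htm

Adding <table style="color:red; font-size:10pt"> in HTML is ( ). http://hovertree.com/s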

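- Compile Error on an Overridden Method

kane_xie

override

Problem description:

One or more Override methods in an implementation class fail to compile with the following error: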

Name clash: The method put(String) of type XXXServiceImpl has the same erasure as put(String) of type XXXService but does not override it

After removing @Over

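- Fetching URL Content Through a Proxy IP in Java (Avoiding IP Bans, for Web Crawlers)

mcj8089

free proxy IP, proxy IP, data crawler, setting a proxy IP in JAVA, crawler IP bans

Two recommended proxy IP sites:

1. 全网代理IP: http://proxy.goubanjia.com/

2. 敲代码免费IP: http://ip.qiaodm.com/

In Java there are two ways to access a URL through a proxy IP and fetch its content.

Method 1: set System properties

// Set the proxy IP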

System.getProper

- Nodejs Express Error: listen EADDRINUSE

qiaolevip

a little progress every day, learning never ends, nodejs, 纵观千象

When you start a nodejs service and get this error:

>node app

Express server listening on port 80

events.js:85

throw er; // Unhandled 'error' event

^

Error: listen EADDRINUSE

at exports._errnoException (

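- Three Uses of new in C++

_荆棘鸟_

C++, new

Reposted from: http://news.ccidnet.com/art/32855/20100713/2114025_1.html

Author: mt

The first is the new operator, also called the new expression (new表达式); the second is operator new, also called the new operator function (new操作符). The two English names are so alike that they are easy to mix up, so it is easier to remember the Chinese names. The new expression is the more familiar and the most commonly used, for example: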

string* ps = new string("

- In-Depth Ruby Study Notes 1

wudixiaotie

Ruby

A module can define private methods

module MTest

def aaa

puts "aaa"

private_method

end

private

def private_method

puts "this is private_method"

end

end