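Kubernetes Concepts and (Offline) Deployment, Part 1

Introduction to Kubernetes:

Kubernetes is a container cluster management system open-sourced by Google. It is the open-source descendant of Borg, the technology Google has used for years to manage containers at large scale. Its main features include:

- Container-based application deployment, maintenance, and rolling upgrades

- Load balancing and service discovery

- Cluster scheduling across machines and regions

- Autoscaling

- Stateless and stateful services

- Broad Volume support

- A plugin mechanism that keeps the system extensible

Kubernetes has developed very quickly and has become the leader in container orchestration.

Basic Kubernetes concepts (source: 《kubernetes指南》, the Kubernetes Handbook):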

Container

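A Container is a portable, lightweight, operating-system-level virtualization technology. It uses namespaces to isolate software runtime environments from one another and packages the software's runtime environment in an image, so a container can easily run almost anywhere.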

Pod

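Kubernetes uses Pods to manage containers. A Pod is a group of one or more tightly coupled containers and is the basic unit of Kubernetes scheduling. The containers in a Pod share the IPC, Network, and UTS namespaces (and the PID namespace when process namespace sharing is enabled). Because they share the network and can share files through volumes, they can cooperate through inter-process communication and file sharing, a simple and efficient way to compose a service.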

Node

A Node is the host on which Pods actually run; it can be a physical machine or a virtual machine. To manage Pods, every Node must run at least a container runtime (such as docker or rkt), kubelet, and kube-proxy.

Namespace

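A Namespace is an abstraction over a set of resources and objects; for example, it can divide the objects in a system into project groups or user groups. Common objects such as pods, services, replication controllers, and deployments belong to a namespace (default, unless specified), while nodes, persistentVolumes, and similar objects do not belong to any namespace.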

Service

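A Service is an abstraction of an application service that provides load balancing and service discovery through labels. The IPs and ports of the Pods that match the labels form the endpoints, and kube-proxy load-balances the service IP across these endpoints.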

Label

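A Label is a tag that identifies a Kubernetes object and is attached to the object as a key/value pair. The name part of a key is at most 63 characters and may be preceded by an optional DNS-subdomain prefix of up to 253 characters; a value may be empty or a string of at most 63 characters.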
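As a rough illustration of the limits on label values, the following shell sketch checks a candidate value: it must be empty, or at most 63 characters long, begin and end with an alphanumeric character, and contain only alphanumerics, '-', '_' or '.' in between. The function name `valid_label_value` is hypothetical and is not part of kubectl or any Kubernetes tool.

```shell
#!/bin/sh
# valid_label_value is a hypothetical helper, not a kubectl feature.
# It returns 0 when its argument is an acceptable label value.
valid_label_value() {
    value=$1
    # An empty value is allowed.
    [ -z "$value" ] && return 0
    # Anything longer than 63 characters is rejected.
    [ "${#value}" -le 63 ] || return 1
    # Must begin and end with an alphanumeric character;
    # '-', '_' and '.' may appear only in between.
    printf '%s\n' "$value" | grep -Eq '^[A-Za-z0-9]([A-Za-z0-9_.-]*[A-Za-z0-9])?$'
}

# Example: valid_label_value "frontend-v1" && echo "ok"
```

The API server performs the same kind of check itself, so a helper like this is only useful for catching mistakes before a manifest is submitted.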

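Core components:

Kubernetes consists mainly of the following core components:

- etcd stores the state of the entire cluster;

- apiserver is the single entry point for operating on resources and provides authentication, authorization, access control, and API registration and discovery;

- controller manager maintains the state of the cluster, for example fault detection, automatic scaling, and rolling updates;

- scheduler handles resource scheduling, placing Pods on suitable machines according to the configured scheduling policy;

- kubelet maintains the container lifecycle and also manages Volumes (CSI) and networking (CNI);

- Container runtime manages images and actually runs Pods and containers (CRI);

- kube-proxy provides in-cluster service discovery and load balancing for Services;

Besides the core components, there are some recommended add-ons:

- kube-dns provides DNS for the whole cluster

- Ingress Controller provides an external entry point for services

- Heapster provides resource monitoring

- Dashboard provides a GUI

- Federation provides clusters that span availability zones

- Fluentd-elasticsearch provides cluster log collection, storage, and querying

Kubernetes 1.9.0 (offline) deployment

A cluster consists of one or more masters and a number of Nodes. All cluster control commands are run on the master, which is made up of four main modules: etcd, API server, controller manager, and scheduler.

Master node:

- API Server: the single external interface of K8S. It serves an HTTP/HTTPS RESTful API, the Kubernetes API, and every request goes through it. It handles REST operations, updates the corresponding objects in etcd, and is the only entry point for creating, reading, updating, and deleting resources.

- Scheduler: handles resource scheduling and decides which Node a Pod runs on. When scheduling, it takes into account the cluster topology, the current load on each node, and the application's requirements for high availability, performance, and so on.

- Controller Manager: manages the cluster's resources and keeps them in their desired state. It is made up of several controllers, including the replication controller, endpoints controller, namespace controller, and serviceaccounts controller.

- ETCD: stores the configuration of the k8s cluster and the state of its resources. When the data changes, etcd quickly notifies the relevant k8s components.

- Pod network: Pods must be able to communicate with each other, so a K8S cluster needs a Pod network; flannel is one of the available options.

- kube-dns: provides DNS for the whole cluster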

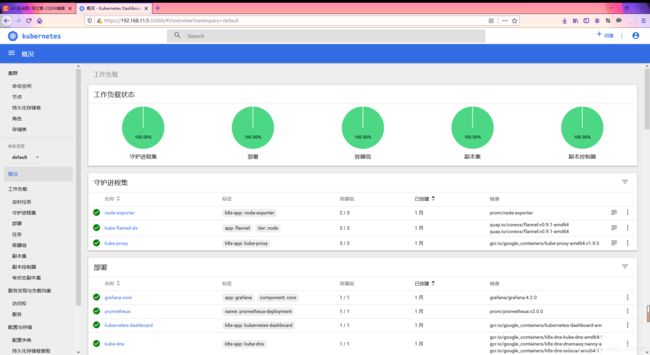

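- kubernetes-dashboard: the web UI

Node:

- Kubelet: kubelet is the agent on each node. Once the Scheduler has decided that a Pod should run on a given Node, the Pod's configuration (image, volume, and so on) is delivered to that node's kubelet, which creates and runs the containers accordingly and reports their status to the master.

- Kube-proxy: a service logically represents a set of backend Pods, and clients reach the Pods through the service. kube-proxy forwards the requests a service receives to the Pods. Every Node runs kube-proxy, which forwards TCP/UDP traffic addressed to a service to the backend containers and load-balances across replicas when there is more than one. It has two implementations: IPVS (LVS) or iptables;

- Docker Engine: manages the containers on the node;

Basic environment preparation:

Synchronize time: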

yum install ntp

ntpdate cn.pool.ntp.org

timedatectl set-timezone Asia/Shanghai

timedatectl set-local-rtc 1

timedatectl set-ntp 1

Disable SELinux and the firewall

setenforce 0 # switches SELinux to permissive mode until the next reboot; for a permanent change, edit /etc/selinux/config and set SELINUX to disabled

systemctl stop firewalld.service

systemctl disable firewalld.service
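The permanent SELinux change described in the comment on the setenforce command can be scripted. This is a minimal sketch, assuming the file contains the standard `SELINUX=` line; the helper name `set_selinux_disabled` is hypothetical, and it takes the file path as an argument so that it can be tried on a copy first.

```shell
#!/bin/sh
# Hypothetical helper: rewrite the SELINUX= line of the given config file
# so that SELinux stays disabled after a reboot.
set_selinux_disabled() {
    config_file=$1
    # Only the uncommented SELINUX= line is changed;
    # SELINUXTYPE= does not match this pattern and is left alone.
    sed -i 's/^SELINUX=.*/SELINUX=disabled/' "$config_file"
}

# Example (as root): set_selinux_disabled /etc/selinux/config
```

The new value only takes effect after a reboot, which is why the guide also runs setenforce 0 for the current session.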

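Upgrade the system kernel:

The simplest way is sudo yum -y update, which downloads about 1 GB of updates.

Alternatively, upgrade only the kernel with sudo yum update -y kernel, which downloads only about 100 MB.

After the kernel upgrade finishes, reboot, then start the Docker service again.

kubelet fails to start when a swap partition is enabled (setting --fail-swap-on to false makes it ignore swap), so turn swap off on every machine: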

sudo swapoff -a

To keep the swap partition from being mounted automatically at boot, comment out the corresponding entry in /etc/fstab:

sudo sed -i '/ swap / s/^\(.*\)$/#\1/g' /etc/fstab
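The sed command above prefixes every line containing " swap " with '#', including lines that are already commented out. A slightly more defensive sketch, which skips existing comments and can be pointed at a copy of the file for a dry run, might look like this; the function name `comment_out_swap` is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: comment out active swap entries in an fstab-style file.
comment_out_swap() {
    fstab_file=$1
    # Skip lines that already start with '#'; on the rest, prefix '#'
    # when "swap" appears as a whitespace-delimited field.
    sed -i -E '/^[[:space:]]*#/!s/^(.*[[:space:]]swap[[:space:]].*)$/#\1/' "$fstab_file"
}

# Example (as root): comment_out_swap /etc/fstab
```

Running it on a copy such as /tmp/fstab.test first makes it easy to confirm that only the swap line changes.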

Enable the bridge-related netfilter support for iptables. It is usually already on by default; if it is not, enable it yourself.
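The two cat checks that follow print 1 when the feature is on. If either prints 0, or the files do not exist, one common approach is to load the br_netfilter module (on kernels where it is a separate module) and persist the settings through sysctl. The sketch below only writes the settings file; the helper name `write_bridge_sysctl` and the file name k8s.conf are assumptions, and the steps that need root are left as comments.

```shell
#!/bin/sh
# Hypothetical helper: write the bridge netfilter settings to a sysctl file.
write_bridge_sysctl() {
    sysctl_file=$1
    cat > "$sysctl_file" <<'EOF'
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
EOF
}

# Example (as root):
#   modprobe br_netfilter
#   write_bridge_sysctl /etc/sysctl.d/k8s.conf
#   sysctl --system
```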

[root@node1 ~]# cat /proc/sys/net/bridge/bridge-nf-call-ip6tables

1

[root@node1 ~]# cat /proc/sys/net/bridge/bridge-nf-call-iptables

1

Deploy Docker:

# Remove old Docker versions

sudo yum remove docker \
    docker-client \
    docker-client-latest \
    docker-common \
    docker-latest \
    docker-latest-logrotate \
    docker-logrotate \
    docker-engine

yum install -y yum-utils # provides yum-config-manager, so install it first

yum-config-manager --add-repo https://download.docker.com/linux/centos/docker-ce.repo # add the Docker repository

yum list docker-ce --showduplicates | sort -r # list the available Docker versions

yum install --setopt=obsoletes=0 docker-ce-selinux-17.03.2.ce-1.el7.centos.noarch docker-ce-17.03.2.ce-1.el7.centos

3. Offline Kubernetes deployment

1. Hosts

| hostname | IP | Node services | Version |
| --- | --- | --- | --- |
| k8s-master | 192.168.11.5 | etcd, kube-apiserver, kube-controller-manager, kube-scheduler | CentOS 7.3 64-bit |
| k8s-node2 | 192.168.11.4 | kubelet, kube-proxy, docker | CentOS 7.3 64-bit |
| k8s-node3 | 192.168.11.23 | kubelet, kube-proxy, docker | CentOS 7.3 64-bit |

2. Packages and versions

Prepare the k8s_images.tar.bz2 package. Download it from Baidu Cloud: https://pan.baidu.com/s/1c2O1gIW password: 9s92

[root@master tools]# bunzip2 k8s_images.tar.bz2

[root@master tools]# tar -xvf k8s_images.tar

[root@node1 k8s_images]# ll

total 194492

-rw-r--r-- 1 502 games 31324 Dec 12 2017 container-selinux-2.33-1.git86f33cd.el7.noarch.rpm

-rw-r--r-- 1 502 games 286680 Aug 10 2017 device-mapper-1.02.140-8.el7.x86_64.rpm

-rw-r--r-- 1 502 games 183872 Aug 10 2017 device-mapper-event-1.02.140-8.el7.x86_64.rpm

-rw-r--r-- 1 502 games 183428 Aug 10 2017 device-mapper-event-libs-1.02.140-8.el7.x86_64.rpm

-rw-r--r-- 1 502 games 319392 Aug 10 2017 device-mapper-libs-1.02.140-8.el7.x86_64.rpm

-rw-r--r-- 1 502 games 409432 Aug 10 2017 device-mapper-persistent-data-0.7.0-0.1.rc6.el7.x86_64.rpm

-rw-r--r-- 1 502 games 19529520 Jun 28 2017 docker-ce-17.03.2.ce-1.el7.centos.x86_64.rpm

-rw-r--r-- 1 502 games 29108 Jun 28 2017 docker-ce-selinux-17.03.2.ce-1.el7.centos.noarch.rpm

drwxr-xr-x 2 502 games 4096 Apr 22 15:46 docker_images

-rw-r--r-- 1 502 games 17241234 Dec 26 2017 kubeadm-1.9.0-0.x86_64.rpm

-rw-r--r-- 1 502 games 9310446 Dec 26 2017 kubectl-1.9.0-0.x86_64.rpm

-rw-r--r-- 1 502 games 2802 Jan 1 2018 kube-flannel.yml

-rw-r--r-- 1 502 games 17593026 Dec 26 2017 kubelet-1.9.9-9.x86_64.rpm

-rw-r--r-- 1 502 games 9008838 Dec 26 2017 kubernetes-cni-0.6.0-0.x86_64.rpm

-rw-r--r-- 1 502 games 121195008 Jan 1 2018 kubernetes-dashboard_v1.8.1.tar

-rw-r--r-- 1 502 games 4821 Jan 1 2018 kubernetes-dashboard.yaml

-rw-r--r-- 1 502 games 56988 Aug 11 2017 libseccomp-2.3.1-3.el7.x86_64.rpm

-rw-r--r-- 1 502 games 50076 Apr 13 2017 libtool-ltdl-2.4.2-22.el7_3.x86_64.rpm

-rw-r--r-- 1 502 games 252528 Jun 24 2016 libxml2-python-2.9.1-6.el7_2.3.x86_64.rpm

-rw-r--r-- 1 502 games 338448 Jul 4 2014 lsof-4.87-4.el7.x86_64.rpm

-rw-r--r-- 1 502 games 1322492 Aug 11 2017 lvm2-2.02.171-8.el7.x86_64.rpm

-rw-r--r-- 1 502 games 1077080 Aug 11 2017 lvm2-libs-2.02.171-8.el7.x86_64.rpm

-rw-r--r-- 1 502 games 273012 Jul 4 2014 python-kitchen-1.1.1-5.el7.noarch.rpm

-rw-r--r-- 1 502 games 296632 Aug 11 2017 socat-1.7.3.2-2.el7.x86_64.rpm

-rw-r--r-- 1 502 games 120184 Aug 11 2017 yum-utils-1.1.31-42.el7.noarch.rpm

Load the images into Docker:
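Since every image archive except the dashboard one sits in docker_images/, the per-file commands in this step can also be written as a loop. This is a minimal sketch; the function name `load_images` and the `LOAD_CMD` override (there only so the loop can be dry-run with echo) are hypothetical and not part of Docker.

```shell
#!/bin/sh
# Command used to load one archive; overridable so the loop can be dry-run.
LOAD_CMD=${LOAD_CMD:-docker load -i}

# Load every .tar archive found directly inside the given directory.
load_images() {
    image_dir=$1
    for archive in "$image_dir"/*.tar; do
        # Skip the unexpanded pattern when the directory has no .tar files.
        [ -e "$archive" ] || continue
        # $LOAD_CMD is intentionally unquoted so "docker load -i" splits into words.
        $LOAD_CMD "$archive"
    done
}

# Example: load_images docker_images
```

The dashboard archive lives next to the directory rather than inside it, so it still needs its own docker load command.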

docker load -i docker_images/etcd-amd64_v3.1.10.tar

docker load -i docker_images/flannel:v0.9.1-amd64.tar

docker load -i docker_images/k8s-dns-dnsmasq-nanny-amd64_v1.14.7.tar

docker load -i docker_images/k8s-dns-kube-dns-amd64_1.14.7.tar

docker load -i docker_images/k8s-dns-sidecar-amd64_1.14.7.tar

docker load -i docker_images/kube-apiserver-amd64_v1.9.0.tar

docker load -i docker_images/kube-controller-manager-amd64_v1.9.0.tar

docker load -i docker_images/kube-proxy-amd64_v1.9.0.tar

docker load -i docker_images/kube-scheduler-amd64_v1.9.0.tar

docker load -i docker_images/pause-amd64_3.0.tar

docker load -i kubernetes-dashboard_v1.8.1.tar

Deploy K8S:

rpm -ivh socat-1.7.3.2-2.el7.x86_64.rpm

rpm -ivh kubernetes-cni-0.6.0-0.x86_64.rpm kubelet-1.9.9-9.x86_64.rpm kubectl-1.9.0-0.x86_64.rpm

rpm -ivh kubeadm-1.9.0-0.x86_64.rpm

Error reported when installing the rpm packages:

[root@k8s-2 k8s_images]# rpm -ivh kubernetes-cni-0.6.0-0.x86_64.rpm kubelet-1.9.9-9.x86_64.rpm kubectl-1.9.0-0.x86_64.rpm

warning: kubernetes-cni-0.6.0-0.x86_64.rpm: Header V4 RSA/SHA1 Signature, key ID 3e1ba8d5: NOKEY

error: Failed dependencies:

ebtables is needed by kubelet-1.9.0-0.x86_64

Solution: the error is caused by the missing ebtables dependency. Install ebtables, then run the rpm command again.
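Before running the rpm commands it can help to see which dependencies are missing. The sketch below prints the packages that `rpm -q` does not find; the function name `missing_packages` and the `RPM_QUERY` override (used only so the logic can be exercised without rpm) are hypothetical. On an offline host the missing packages, ebtables in this case, still have to come from the installation media or a local repository.

```shell
#!/bin/sh
# Command used to query one installed package; overridable for dry runs.
RPM_QUERY=${RPM_QUERY:-rpm -q}

# Print the names of the given packages that are not installed.
missing_packages() {
    for pkg in "$@"; do
        # $RPM_QUERY is intentionally unquoted so "rpm -q" splits into words.
        $RPM_QUERY "$pkg" >/dev/null 2>&1 || printf '%s\n' "$pkg"
    done
}

# Example: missing_packages ebtables socat
```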

The kubelet cgroup-driver must match Docker's. Docker's Cgroup Driver value can be read with docker info.

In this setup Docker's Cgroup Driver is cgroupfs (see the docker info output after the configuration file), so the kubelet cgroup-driver must also be changed to cgroupfs.

[root@node1 k8s_images]# vim /etc/systemd/system/kubelet.service.d/10-kubeadm.conf # edit 10-kubeadm.conf

[Service]

Environment="KUBELET_KUBECONFIG_ARGS=--bootstrap-kubeconfig=/etc/kubernetes/bootstrap-kubelet.conf --kubeconfig=/etc/kubernetes/kubelet.conf"

Environment="KUBELET_SYSTEM_PODS_ARGS=--pod-manifest-path=/etc/kubernetes/manifests --allow-privileged=true"

Environment="KUBELET_NETWORK_ARGS=--network-plugin=cni --cni-conf-dir=/etc/cni/net.d --cni-bin-dir=/opt/cni/bin"

Environment="KUBELET_DNS_ARGS=--cluster-dns=10.96.0.10 --cluster-domain=cluster.local"

Environment="KUBELET_AUTHZ_ARGS=--authorization-mode=Webhook --client-ca-file=/etc/kubernetes/pki/ca.crt"

Environment="KUBELET_CADVISOR_ARGS=--cadvisor-port=0"

Environment="KUBELET_CGROUP_ARGS=--cgroup-driver=cgroupfs" ### change this value (annotation only, not part of the file)

Environment="KUBELET_CERTIFICATE_ARGS=--rotate-certificates=true --cert-dir=/var/lib/kubelet/pki"

ExecStart=

ExecStart=/usr/bin/kubelet $KUBELET_KUBECONFIG_ARGS $KUBELET_SYSTEM_PODS_ARGS $KUBELET_NETWORK_ARGS $KUBELET_DNS_ARGS $KUBELET_AUTHZ_ARGS $KUBELET_CADVISOR_ARGS $KUBELET_CGROUP_ARGS $KUBELET_CERTIFICATE_ARGS $KUBELET_EXTRA_ARGS

[root@master ~]# docker info|grep Cgroup

Cgroup Driver: cgroupfs
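Matching the two settings can also be scripted instead of edited by hand. This is a minimal sketch, assuming the docker info output format shown above and the --cgroup-driver= flag in 10-kubeadm.conf; both function names are hypothetical.

```shell
#!/bin/sh
# Read `docker info` output on stdin and print the cgroup driver name.
docker_cgroup_driver() {
    sed -n 's/^[[:space:]]*Cgroup Driver:[[:space:]]*//p'
}

# Replace the value of --cgroup-driver= in the given kubelet drop-in file.
set_kubelet_cgroup_driver() {
    driver=$1
    conf_file=$2
    sed -i "s/--cgroup-driver=[A-Za-z]*/--cgroup-driver=${driver}/" "$conf_file"
}

# Example (as root):
#   driver=$(docker info 2>/dev/null | docker_cgroup_driver)
#   set_kubelet_cgroup_driver "$driver" /etc/systemd/system/kubelet.service.d/10-kubeadm.conf
```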

After editing, reload the systemd configuration and enable kubelet at boot:

systemctl daemon-reload && systemctl enable kubelet

Configure the master node

2.1 Initialize Kubernetes

kubeadm init --kubernetes-version=v1.9.0 --pod-network-cidr=10.244.0.0/16

Kubernetes supports several network plugins, such as flannel, weave, and calico. This guide uses flannel, which requires the --pod-network-cidr option. 10.244.0.0/16 is the default network configured in kube-flannel.yml, and the value of --pod-network-cidr must match the Network field in kube-flannel.yml.
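The match between --pod-network-cidr and kube-flannel.yml can be checked before running kubeadm init. This is a minimal sketch, assuming the manifest contains a line of the form "Network": "10.244.0.0/16" in its net-conf.json section; the function names `flannel_network` and `cidr_matches_flannel` are hypothetical.

```shell
#!/bin/sh
# Print the first "Network" value found in the given flannel manifest.
flannel_network() {
    sed -n 's/.*"Network"[[:space:]]*:[[:space:]]*"\([^"]*\)".*/\1/p' "$1" | head -n 1
}

# Succeed when the given CIDR equals the manifest's Network value.
cidr_matches_flannel() {
    cidr=$1
    manifest=$2
    [ "$(flannel_network "$manifest")" = "$cidr" ]
}

# Example:
#   cidr_matches_flannel 10.244.0.0/16 /usr/local/k8s_images/kube-flannel.yml \
#       || echo "pod CIDR differs from kube-flannel.yml"
```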

The initialization output is as follows:

[root@k8smaster ~]# kubeadm init --kubernetes-version=v1.9.0 --pod-network-cidr=10.244.0.0/16

[init] Using Kubernetes version: v1.9.0

[init] Using Authorization modes: [Node RBAC]

[preflight] Running pre-flight checks.

[WARNING FileExisting-crictl]: crictl not found in system path

[preflight] Starting the kubelet service

[certificates] Generated ca certificate and key.

[certificates] Generated apiserver certificate and key.

[certificates] apiserver serving cert is signed for DNS names [k8smaster kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local] and IPs [10.96.0.1 192.168.242.136]

[certificates] Generated apiserver-kubelet-client certificate and key.

[certificates] Generated sa key and public key.

[certificates] Generated front-proxy-ca certificate and key.

[certificates] Generated front-proxy-client certificate and key.

[certificates] Valid certificates and keys now exist in "/etc/kubernetes/pki"

[kubeconfig] Wrote KubeConfig file to disk: "admin.conf"

[kubeconfig] Wrote KubeConfig file to disk: "kubelet.conf"

[kubeconfig] Wrote KubeConfig file to disk: "controller-manager.conf"

[kubeconfig] Wrote KubeConfig file to disk: "scheduler.conf"

[controlplane] Wrote Static Pod manifest for component kube-apiserver to "/etc/kubernetes/manifests/kube-apiserver.yaml"

[controlplane] Wrote Static Pod manifest for component kube-controller-manager to "/etc/kubernetes/manifests/kube-controller-manager.yaml"

[controlplane] Wrote Static Pod manifest for component kube-scheduler to "/etc/kubernetes/manifests/kube-scheduler.yaml"

[etcd] Wrote Static Pod manifest for a local etcd instance to "/etc/kubernetes/manifests/etcd.yaml"

[init] Waiting for the kubelet to boot up the control plane as Static Pods from directory "/etc/kubernetes/manifests".

[init] This might take a minute or longer if the control plane images have to be pulled.

[apiclient] All control plane components are healthy after 44.002305 seconds

[uploadconfig] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace

[markmaster] Will mark node k8smaster as master by adding a label and a taint

[markmaster] Master k8smaster tainted and labelled with key/value: node-role.kubernetes.io/master=""

[bootstraptoken] Using token: abb43a.62186b817d71bcd2

[bootstraptoken] Configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials

[bootstraptoken] Configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token

[bootstraptoken] Configured RBAC rules to allow certificate rotation for all node client certificates in the cluster

[bootstraptoken] Creating the "cluster-info" ConfigMap in the "kube-public" namespace

[addons] Applied essential addon: kube-dns

[addons] Applied essential addon: kube-proxy

Your Kubernetes master has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

You can now join any number of machines by running the following on each node

as root:

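kubeadm join --token abb43a.62186b817d71bcd2 192.168.242.136:6443 --discovery-token-ca-cert-hash sha256:6a7625aa2928085fde84cfd918398408771dfe6af5c88c73b2d47527a00a8dad

Make a note of the kubeadm join --token xxxx command; its token is needed when adding nodes. If you lose it, list the tokens with:

kubeadm token list # list tokens

A token is valid for 24 hours. If it has expired, create a new one with:

kubeadm token create

Configure environment variables

At this point the root user cannot yet use kubectl to control the cluster. Configure the environment variable as follows.

Write the setting to the bash_profile file:

echo "export KUBECONFIG=/etc/kubernetes/admin.conf" >> ~/.bash_profile

Apply it immediately: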

source ~/.bash_profile
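Running the echo command above more than once appends the same export line each time. A small sketch of an idempotent variant follows; the helper name `append_once` is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: add a line to a file only when it is not already there.
append_once() {
    line=$1
    target=$2
    # Make sure the file exists so grep does not fail on a missing path.
    touch "$target"
    # -x matches whole lines, -F treats the text literally.
    grep -qxF -- "$line" "$target" || printf '%s\n' "$line" >> "$target"
}

# Example: append_once 'export KUBECONFIG=/etc/kubernetes/admin.conf' ~/.bash_profile
```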

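Check the version as a quick test:

kubectl version

Install flannel

Use the kube-flannel.yml from the offline package directly:

kubectl create -f /usr/local/k8s_images/kube-flannel.yml

Configure the nodes

On each node, join the cluster with the token generated when Kubernetes was initialized on the master (use the token, address, and hash printed by your own kubeadm init):

kubeadm join --token abb43a.62186b817d71bcd2 192.168.6.31:6443 --discovery-token-ca-cert-hash sha256:6a7625aa2928085fde84cfd918398408771dfe6af5c88c73b2d47527a00a8dad

View the cluster node information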

[root@master ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

master Ready master 34d v1.9.0

node2 Ready <none> 34d v1.9.0

node3 Ready <none> 34d v1.9.0
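While several machines are joining, it can be handy to list only the nodes that are not Ready yet. The following small sketch filters the kubectl get nodes output shown above; the function name `not_ready_nodes` is hypothetical, and it assumes the default column layout with NAME first and STATUS second.

```shell
#!/bin/sh
# Read `kubectl get nodes` output on stdin and print the names of nodes
# whose STATUS column is anything other than exactly "Ready"
# (so "NotReady" and "Ready,SchedulingDisabled" are both reported).
not_ready_nodes() {
    awk 'NR > 1 && $2 != "Ready" { print $1 }'
}

# Example: kubectl get nodes | not_ready_nodes
```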

Kubernetes creates flannel and kube-proxy pods on every node. List the pods with:

[root@master tools]# kubectl get pods --all-namespaces

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system etcd-master 1/1 Running 0 39m

kube-system kube-apiserver 0/1 CrashLoopBackOff 8 16m

kube-system kube-apiserver-master 1/1 Running 0 16m

kube-system kube-controller-manager-master 1/1 Running 1 39m

kube-system kube-dns-6f4fd4bdf-j5qc4 3/3 Running 0 40m

kube-system kube-flannel-ds-7ph9c 1/1 Running 0 37m

kube-system kube-flannel-ds-h64p7 1/1 Running 0 21m

kube-system kube-flannel-ds-sms26 1/1 Running 0 39m

kube-system kube-proxy-kn9nc 1/1 Running 0 21m

kube-system kube-proxy-nvr7c 1/1 Running 0 40m

kube-system kube-proxy-ql99p 1/1 Running 0 37m

kube-system kube-scheduler-master 1/1 Running 1 39m

kube-system kubernetes-dashboard-58f5cb49c-qv84d 1/1 Running 0 18m

View the cluster status

[root@master tools]# kubectl cluster-info

Kubernetes master is running at https://192.168.6.31:6443

KubeDNS is running at https://192.168.6.31:6443/api/v1/namespaces/kube-system/services/kube-dns:dns/proxy

To further debug and diagnose cluster problems, use 'kubectl cluster-info dump'.

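Set up the dashboard

On the master, create it directly from the kubernetes-dashboard.yaml in the offline package:

kubectl create -f /usr/local/k8s_images/kubernetes-dashboard.yaml

Next, choose the authentication method. The defaults are kubeconfig and token; this guide instead uses basic auth against the apiserver.

Create /etc/kubernetes/pki/basic_auth_file to hold the password, user name, and user ID, in that order:

admin,admin,2

Edit /etc/kubernetes/manifests/kube-apiserver.yaml to enable basic auth:

vim /etc/kubernetes/manifests/kube-apiserver.yaml

Add one line:

- --basic_auth_file=/etc/kubernetes/pki/basic_auth_file

systemctl restart kubelet # restart kubelet

Next, grant permissions to the admin user. Kubernetes versions after 1.6 use the RBAC authorization model, and the built-in cluster-admin role has full permissions, so binding admin to cluster-admin gives admin those permissions. View cluster-admin:

kubectl get clusterrole/cluster-admin -o yaml # show it as YAML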

apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  annotations:
    rbac.authorization.kubernetes.io/autoupdate: "true"
  creationTimestamp: 2021-04-09T02:32:04Z
  labels:
    kubernetes.io/bootstrapping: rbac-defaults
  name: cluster-admin
  resourceVersion: "12"
  selfLink: /apis/rbac.authorization.k8s.io/v1/clusterroles/cluster-admin
  uid: c5788b02-98db-11eb-8e3e-fa163ec3b4df
rules:
- apiGroups:
  - '*'
  resources:
  - '*'
  verbs:
  - '*'
- nonResourceURLs:
  - '*'
  verbs:
  - '*'

Bind admin to cluster-admin:

kubectl create clusterrolebinding login-on-dashboard-with-cluster-admin --clusterrole=cluster-admin --user=admin

Then check the binding:

kubectl get clusterrolebinding/login-on-dashboard-with-cluster-admin -o yaml

Now try logging in: open https://192.168.6.31:32666 in a browser. Use Firefox here; Chrome blocks the page because of its security mechanisms.

Choose the Basic authentication method and log in with the user name and password configured in /etc/kubernetes/pki/basic_auth_file.

Installation reference: https://segmentfault.com/a/1190000012755243

kubeadm token create --print-join-command # generate a new token and print the join command

kubectl delete node node3 # remove a node

# Uninstall the services

kubeadm reset

# Remove the rpm packages

rpm -qa | grep kube | xargs rpm --nodeps -e

# Remove the Docker images

docker images -qa | xargs docker rmi -f