HBase Overview, Deployment, Internals, and Usage, with Phoenix

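HBase Overview

HBase Definition

HBase is a distributed, scalable NoSQL database designed to store massive amounts of data. Just as Bigtable builds on the distributed data storage provided by the Google File System, HBase provides Bigtable-like capabilities on top of Hadoop. HBase started as a subproject of Apache Hadoop and is now a top-level Apache project. Unlike a typical relational database, it is well suited to storing unstructured data, and its storage model is column-oriented (by column family) rather than row-oriented.

· A non-relational database that stores data by column family.

· Stores both structured and unstructured data. It suits single-table, non-relational data and is not suited to relational queries such as JOIN.

· Built on HDFS: data is persisted as HFiles on DataNodes and managed by RegionServers in units of regions.

· Low latency, so it can serve online workloads. HBase can hold very large single tables while still providing fast data access.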

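HBase Data Model

Logically, the HBase data model resembles that of a relational database: data lives in tables with rows and columns. Judging by its underlying physical storage structure (key-value), however, HBase is better described as a multi-dimensional map.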
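To make the "multi-dimensional map" idea concrete, here is a minimal sketch in Python. It is only a toy model, not HBase's storage code: the class name `TinyTable` and its methods are hypothetical, and timestamps are small integers chosen for readability.

```python
from collections import defaultdict


class TinyTable:
    """Toy model of an HBase table as a nested map (not real HBase code)."""

    def __init__(self):
        # row key -> "family:qualifier" -> timestamp -> value (bytes)
        self.rows = defaultdict(lambda: defaultdict(dict))

    def put(self, row, column, value, ts):
        """Store one version of a cell."""
        self.rows[row][column][ts] = value

    def get(self, row, column):
        """Return the value with the newest timestamp, or None if absent."""
        versions = self.rows.get(row, {}).get(column, {})
        if not versions:
            return None
        return versions[max(versions)]

    def scan(self):
        """Yield (row, column, value) in row-key order, newest version only."""
        for row in sorted(self.rows):
            for column in sorted(self.rows[row]):
                yield row, column, self.get(row, column)


table = TinyTable()
table.put(b"1001", b"info:company", b"caitong", ts=1)
table.put(b"1001", b"info:company", b"caitongnew", ts=2)
table.put(b"1002", b"info:name", b"dongbei", ts=1)
```

Reading `table.get(b"1001", b"info:company")` returns the newer value, which mirrors how a later put on the same cell behaves like an update.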

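1. Name Space

A namespace is similar to the Database concept in a relational database; each namespace contains multiple tables. HBase ships with two namespaces, hbase and default: hbase holds HBase's built-in tables, and default is the namespace users work in by default.

2. Table (Region)

A table is similar to a table in a relational database. The difference is that an HBase table definition only declares column families, not individual columns. Fields can therefore be specified dynamically, on demand, at write time, so HBase handles schema changes far more easily than a relational database. A table is split horizontally by RowKey range into Regions, which are the units that Region Servers manage.

3. Row

Each row in an HBase table consists of one RowKey and multiple Columns. Data is stored in lexicographic order of RowKey, and lookups can only be done by RowKey, so RowKey design is critical.

Row Key

The RowKey is similar to a primary key in MySQL: HBase uses it to uniquely identify a row. HBase supports only three kinds of queries: (1) single-row lookup by RowKey, (2) range scan by RowKey, (3) full table scan.

The RowKey therefore has a large impact on HBase performance. Design it to serve both single-row lookups and range scans by RowKey.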
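Because rows are ordered byte by byte, the way a RowKey is encoded decides what a range scan returns. The sketch below is a plain-Python illustration, not HBase client code; `range_scan` is a hypothetical helper that treats the start as inclusive and the stop as exclusive.

```python
import bisect


def range_scan(sorted_keys, start_row, stop_row):
    """Return the keys in [start_row, stop_row), like a RowKey range scan."""
    lo = bisect.bisect_left(sorted_keys, start_row)
    hi = bisect.bisect_left(sorted_keys, stop_row)
    return sorted_keys[lo:hi]


# Keys compare byte by byte, so unpadded numbers do not sort numerically.
unpadded = sorted([b"1", b"2", b"10", b"100"])

# Zero-padding to a fixed width makes byte order match numeric order.
padded = sorted(str(n).zfill(4).encode() for n in (1, 2, 10, 100))

hits = range_scan(padded, b"0002", b"0100")
```

With unpadded keys, `b"10"` and `b"100"` sort before `b"2"`, so a scan from 2 to 100 would miss them. Fixed-width keys avoid that surprise.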

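4. Column

Every column in HBase is identified by a Column Family and a Column Qualifier, for example info:name and info:age. Only the column family is specified at table creation; column qualifiers need not be defined in advance.

Column Family

HBase partitions stored data by column family. A column family can contain any number of columns, which makes data access flexible. A column with no data takes up no storage space.

A column family must be specified when creating an HBase table, just as concrete columns must be specified when creating a relational table.

More column families is not better: the official recommendation is three or fewer, and most tables use a single column family.

New members (columns) can be added to a column family dynamically later. Since a Family can hold multiple Qualifiers, HBase columns can be thought of as two-level: the Family is the first level and the Qualifier is the second.

5. Time Stamp

Identifies the different versions of a piece of data. If no timestamp is specified on write, the system adds one automatically, set to the time the data was written to HBase.

6. Cell

The unit uniquely identified by {rowkey, column Family:column Qualifier, time Stamp}. Data in a cell has no type; everything is stored as byte arrays.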
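Since cells are untyped byte arrays, the client decides how values are encoded and decoded. The following is a small sketch of one common convention, assuming fixed-width big-endian integers and UTF-8 text; the helper names are hypothetical and nothing here calls HBase itself.

```python
import struct


def encode_long(n):
    """Encode a non-negative integer as 8 big-endian bytes."""
    return struct.pack(">Q", n)


def decode_long(raw):
    """Decode 8 big-endian bytes back into an integer."""
    return struct.unpack(">Q", raw)[0]


def encode_text(s):
    """Encode text as UTF-8 bytes."""
    return s.encode("utf-8")


stored = encode_long(1620636573247)
restored = decode_long(stored)
```

Big-endian, fixed-width encoding has a useful side effect: the byte order of the encoded values matches their numeric order, which matters whenever such values are used inside a RowKey.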

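HBase Logical Storage

HBase Physical Storage

HBase Architecture Basics

[Note]:

A rough understanding is enough at this point. After the deployment and some hands-on operations, come back to the architecture diagram and work through the principles again; the architecture will make much more sense then. For now, just note which components exist.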

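1. Region Server

The Region Server manages Regions; its implementation class is HRegionServer. Its main responsibilities:

Data operations: get, put, delete;

Region operations: splitRegion, compactRegion.

2. Master

The Master manages all Region Servers; its implementation class is HMaster. Its main responsibilities:

Table operations: create, delete, alter;

RegionServer operations: assigning regions to each RegionServer, monitoring each RegionServer's status, load balancing, and failover.

3. Zookeeper

HBase relies on Zookeeper for Master high availability, RegionServer monitoring, the entry point to metadata, and maintenance of cluster configuration.

4. HDFS

HDFS provides the underlying data storage for HBase and also provides high-availability support for it.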

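HBase Installation and Deployment

HBase Installation Prerequisites

The following environment must be in place before installation:

- Java environment # required

- /etc/hosts host resolution # map the hostnames and IPs of the servers involved

11.8.37.50 ops01

11.8.36.63 ops02

11.8.36.76 ops03

11.8.38.86 ops04

- Zookeeper # entry point to metadata and maintenance of cluster configuration

Zookeeper introduction and deployment guide: https://blog.csdn.net/wt334502157/article/details/115213645

- Hadoop # data is stored on HDFS

Hadoop introduction and deployment guide: https://blog.csdn.net/wt334502157/article/details/114916871

- Upload the installation packages

HBase website: http://hbase.apache.org/

All HBase releases: http://archive.apache.org/dist/hbase/

Version used in this deployment, 2.0.5: http://archive.apache.org/dist/hbase/2.0.5/hbase-2.0.5-bin.tar.gz

Phoenix downloads: http://archive.apache.org/dist/phoenix/

(Download Phoenix in advance; it is needed later. Phoenix is an open-source SQL layer for HBase.)

Phoenix version used in this deployment, 5.0.0: http://archive.apache.org/dist/phoenix/apache-phoenix-5.0.0-HBase-2.0/bin/apache-phoenix-5.0.0-HBase-2.0-bin.tar.gz

Environment check: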

# Java environment on the servers

wangting@ops01:/home/wangting >java -version

openjdk version "1.8.0_232"

OpenJDK Runtime Environment (build 1.8.0_232-b09)

OpenJDK 64-Bit Server VM (build 25.232-b09, mixed mode)

# hosts resolution is configured on all servers

wangting@ops01:/home/wangting >cat /etc/hosts

127.0.0.1 ydt-cisp-ops01

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

11.16.0.176 rancher.mydomain.com

11.8.38.123 www.tongtongcf.com

# ops cluster

11.8.37.50 ops01

11.8.36.63 ops02

11.8.36.76 ops03

11.8.38.86 ops04

# Check the Zookeeper status of the cluster

wangting@ops01:/home/wangting >for i in ops01 ops02 ops03 ; do echo ===== $i ===== && ssh $i netstat -tnlpu | grep -E "2181|2888|3888";done

===== ops01 ===== # follower

tcp6 0 0 11.8.37.50:3888 :::* LISTEN 41773/java

tcp6 0 0 :::2181 :::* LISTEN 41773/java

===== ops02 ===== # leader

tcp6 0 0 11.8.36.63:3888 :::* LISTEN 33012/java

tcp6 0 0 :::2181 :::* LISTEN 33012/java

tcp6 0 0 11.8.36.63:2888 :::* LISTEN 33012/java

===== ops03 ===== # follower

tcp6 0 0 11.8.36.76:3888 :::* LISTEN 102422/java

tcp6 0 0 :::2181 :::* LISTEN 102422/java

wangting@ops01:/home/wangting >

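# List the Java processes running across the cluster; without the jpsall.sh script, simply run jps on each server in turn [wangting@ops01:/home/wangting >jps ]

wangting@ops01:/home/wangting >jpsall.sh # all Hadoop services are running normally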

============= ops01 =============

42305 Kafka

70611 DataNode

70437 NameNode

110869 JobHistoryServer

72582 RunJar

117864 Jps

71290 NodeManager

76269 RunJar

41773 QuorumPeerMain

72319 RunJar

============= ops02 =============

33012 QuorumPeerMain

66996 Jps

40603 NodeManager

40476 ResourceManager

33518 Kafka

85150 DataNode

============= ops03 =============

52849 DataNode

121382 NodeManager

102886 Kafka

102422 QuorumPeerMain

52969 SecondaryNameNode

7486 Jps

============= ops04 =============

103152 Jps

11914 DataNode

12171 NodeManager

=====================================

Expected layout:

ops01 : NameNode | DataNode / NodeManager

ops02 : DataNode / ResourceManager | NodeManager

ops03 : SecondaryNameNode | DataNode / NodeManager

ops04 : NodeManager / DataNode

# Check the HDFS cluster status; there are currently 4 servers, ops01|ops02|ops03|ops04 (3 machines are enough for this exercise)

wangting@ops01:/home/wangting >hadoop dfsadmin -report

WARNING: Use of this script to execute dfsadmin is deprecated.

WARNING: Attempting to execute replacement "hdfs dfsadmin" instead.

2021-05-09 16:09:15,947 INFO [main] Configuration.deprecation (Configuration.java:logDeprecation(1395)) - No unit for dfs.client.datanode-restart.timeout(30) assuming SECONDS

Configured Capacity: 348732002304 (324.78 GB)

Present Capacity: 260076236800 (242.21 GB)

DFS Remaining: 258633764864 (240.87 GB)

DFS Used: 1442471936 (1.34 GB)

DFS Used%: 0.55%

Replicated Blocks:

Under replicated blocks: 0

Blocks with corrupt replicas: 0

Missing blocks: 0

Missing blocks (with replication factor 1): 0

Low redundancy blocks with highest priority to recover: 0

Pending deletion blocks: 0

Erasure Coded Block Groups:

Low redundancy block groups: 0

Block groups with corrupt internal blocks: 0

Missing block groups: 0

Low redundancy blocks with highest priority to recover: 0

Pending deletion blocks: 0

-------------------------------------------------

Live datanodes (4):

Name: 11.8.36.63:9866 (ops02)

Hostname: ops02

Decommission Status : Normal

Configured Capacity: 47549591552 (44.28 GB)

DFS Used: 462950400 (441.50 MB)

Non DFS Used: 10877906944 (10.13 GB)

DFS Remaining: 33769746432 (31.45 GB)

DFS Used%: 0.97%

DFS Remaining%: 71.02%

Configured Cache Capacity: 0 (0 B)

Cache Used: 0 (0 B)

Cache Remaining: 0 (0 B)

Cache Used%: 100.00%

Cache Remaining%: 0.00%

Xceivers: 1

Last contact: Sun May 09 16:09:15 CST 2021

Last Block Report: Sun May 09 12:30:23 CST 2021

Num of Blocks: 113

Name: 11.8.36.76:9866 (ops03)

Hostname: ops03

Decommission Status : Normal

Configured Capacity: 47549591552 (44.28 GB)

DFS Used: 465338368 (443.78 MB)

Non DFS Used: 7783776256 (7.25 GB)

DFS Remaining: 36861489152 (34.33 GB)

DFS Used%: 0.98%

DFS Remaining%: 77.52%

Configured Cache Capacity: 0 (0 B)

Cache Used: 0 (0 B)

Cache Remaining: 0 (0 B)

Cache Used%: 100.00%

Cache Remaining%: 0.00%

Xceivers: 1

Last contact: Sun May 09 16:09:14 CST 2021

Last Block Report: Sun May 09 11:46:50 CST 2021

Num of Blocks: 123

Name: 11.8.37.50:9866 (ops01)

Hostname: ops01

Decommission Status : Normal

Configured Capacity: 206083227648 (191.93 GB)

DFS Used: 480792576 (458.52 MB)

Non DFS Used: 49684766720 (46.27 GB)

DFS Remaining: 147036725248 (136.94 GB)

DFS Used%: 0.23%

DFS Remaining%: 71.35%

Configured Cache Capacity: 0 (0 B)

Cache Used: 0 (0 B)

Cache Remaining: 0 (0 B)

Cache Used%: 100.00%

Cache Remaining%: 0.00%

Xceivers: 1

Last contact: Sun May 09 16:09:14 CST 2021

Last Block Report: Sun May 09 16:00:38 CST 2021

Num of Blocks: 175

Name: 11.8.38.86:9866 (ops04)

Hostname: ops04

Decommission Status : Normal

Configured Capacity: 47549591552 (44.28 GB)

DFS Used: 33390592 (31.84 MB)

Non DFS Used: 4111409152 (3.83 GB)

DFS Remaining: 40965804032 (38.15 GB)

DFS Used%: 0.07%

DFS Remaining%: 86.15%

Configured Cache Capacity: 0 (0 B)

Cache Used: 0 (0 B)

Cache Remaining: 0 (0 B)

Cache Used%: 100.00%

Cache Remaining%: 0.00%

Xceivers: 1

Last contact: Sun May 09 16:09:14 CST 2021

Last Block Report: Sun May 09 14:57:26 CST 2021

Num of Blocks: 117

HBase Installation

# Installation packages to use

wangting@ops01:/opt/software >ll | grep -E "hbase|phoenix"

-rw-r--r-- 1 wangting wangting 436868323 May 9 17:11 apache-phoenix-5.0.0-HBase-2.0-bin.tar.gz

-rw-r--r-- 1 wangting wangting 132569269 May 9 16:27 hbase-2.0.5-bin.tar.gz

wangting@ops01:/opt/software >

# Extract into /opt/module/

wangting@ops01:/opt/software >tar -zxvf hbase-2.0.5-bin.tar.gz -C /opt/module/

wangting@ops01:/opt/software >

wangting@ops01:/opt/software >cd /opt/module/hbase-2.0.5/conf/

# Edit hbase-env.sh: uncomment HBASE_MANAGES_ZK and set it to false

wangting@ops01:/opt/module/hbase-2.0.5/conf >vim hbase-env.sh

export HBASE_MANAGES_ZK=false

# [Note] HBASE_MANAGES_ZK=false disables the Zookeeper bundled with HBase so that the self-managed zk cluster is used

# Edit hbase-site.xml to add the required settings

wangting@ops01:/opt/module/hbase-2.0.5/conf >vim hbase-site.xml

<?xml version="1.0"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<configuration>

<property>

<name>hbase.rootdir</name>

<value>hdfs://ops01:8020/hbase</value>

</property>

<property>

<name>hbase.cluster.distributed</name>

<value>true</value>

</property>

<property>

<name>hbase.zookeeper.quorum</name>

<value>ops01,ops02,ops03</value>

</property>

<property>

<name>hbase.unsafe.stream.capability.enforce</name>

<value>false</value>

</property>

<property>

<name>hbase.wal.provider</name>

<value>filesystem</value>

</property>

<property>

<name>hbase.master.maxclockskew</name>

<value>180000</value>

</property>

</configuration>

wangting@ops01:/opt/module/hbase-2.0.5/conf >

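# Edit regionservers to list the cluster's region server hosts. Make sure these names resolve via the hosts file, otherwise neither the services nor zk will be able to connect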

wangting@ops01:/opt/module/hbase-2.0.5/conf >vim regionservers

ops01

ops02

ops03

wangting@ops01:/opt/module/hbase-2.0.5/conf >

# Rename the HBase installation directory for easier navigation

wangting@ops01:/opt/module/hbase-2.0.5/conf >cd /opt/module/

wangting@ops01:/opt/module >mv hbase-2.0.5/ hbase

# Symlink the Hadoop configuration files into the HBase configuration directory

wangting@ops01:/opt/module >ls /opt/module/hadoop-3.1.3/etc/hadoop/core-site.xml

/opt/module/hadoop-3.1.3/etc/hadoop/core-site.xml

wangting@ops01:/opt/module >ln -s /opt/module/hadoop-3.1.3/etc/hadoop/core-site.xml /opt/module/hbase/conf/core-site.xml

wangting@ops01:/opt/module >ls /opt/module/hadoop-3.1.3/etc/hadoop/hdfs-site.xml

/opt/module/hadoop-3.1.3/etc/hadoop/hdfs-site.xml

wangting@ops01:/opt/module >ln -s /opt/module/hadoop-3.1.3/etc/hadoop/hdfs-site.xml /opt/module/hbase/conf/hdfs-site.xml

wangting@ops01:/opt/module >cd /opt/module/hbase/conf/

wangting@ops01:/opt/module/hbase/conf >ll

total 40

lrwxrwxrwx 1 wangting wangting 49 May 10 11:43 core-site.xml -> /opt/module/hadoop-3.1.3/etc/hadoop/core-site.xml

-rw-r--r-- 1 wangting wangting 1811 Mar 18 2019 hadoop-metrics2-hbase.properties

-rw-r--r-- 1 wangting wangting 4271 Mar 18 2019 hbase-env.cmd

-rw-r--r-- 1 wangting wangting 7236 May 10 11:36 hbase-env.sh

-rw-r--r-- 1 wangting wangting 2257 Mar 18 2019 hbase-policy.xml

-rw-r--r-- 1 wangting wangting 1434 May 10 11:39 hbase-site.xml

lrwxrwxrwx 1 wangting wangting 49 May 10 11:44 hdfs-site.xml -> /opt/module/hadoop-3.1.3/etc/hadoop/hdfs-site.xml

-rw-r--r-- 1 wangting wangting 4977 Mar 18 2019 log4j.properties

-rw-r--r-- 1 wangting wangting 19 May 10 11:40 regionservers

wangting@ops01:/opt/module/hbase/conf >

# Edit /etc/profile to add the HBase environment variables

wangting@ops01:/opt/module/hbase/conf >sudo vim /etc/profile

#hbase

export HBASE_HOME=/opt/module/hbase

export PATH=$PATH:$HBASE_HOME/bin

wangting@ops01:/opt/module/hbase/conf >source /etc/profile

wangting@ops01:/opt/module/hbase/conf >

# Distribute the configured HBase installation directory to the other servers

wangting@ops01:/opt/module/hbase/conf >scp -r /opt/module/hbase ops02:/opt/module/

wangting@ops01:/opt/module/hbase/conf >scp -r /opt/module/hbase ops03:/opt/module/

# Configure the environment variables on ops02

wangting@ops02:/opt/module >sudo vim /etc/profile

[sudo] password for wangting:

wangting@ops02:/opt/module >source /etc/profile

wangting@ops02:/opt/module >

# Configure the environment variables on ops03

wangting@ops03:/opt/module >sudo vim /etc/profile

[sudo] password for wangting:

wangting@ops03:/opt/module >source /etc/profile

wangting@ops03:/opt/module >

# Before starting the services, check that the server clocks agree; synchronize them if they do not

wangting@ops01:/opt/module/hbase >for i in ops01 ops02 ops03; do echo $i && ssh $i date|awk -F" " '{print $4}' ;done

ops01

11:53:25

ops02

11:53:25

ops03

11:53:25

wangting@ops01:/opt/module/hbase >

# Start the services

wangting@ops01:/opt/module/hbase >start-hbase.sh

SLF4J: Class path contains multiple SLF4J bindings.

SLF4J: Found binding in [jar:file:/opt/module/hbase/lib/slf4j-log4j12-1.7.25.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/opt/module/hadoop-3.1.3/share/hadoop/common/lib/slf4j-log4j12-1.7.30.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.

SLF4J: Actual binding is of type [org.slf4j.impl.Log4jLoggerFactory]

running master, logging to /opt/module/hbase/logs/hbase-wangting-master-ops01.out

SLF4J: Class path contains multiple SLF4J bindings.

SLF4J: Found binding in [jar:file:/opt/module/hbase/lib/slf4j-log4j12-1.7.25.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/opt/module/hadoop-3.1.3/share/hadoop/common/lib/slf4j-log4j12-1.7.30.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.

ops03: +======================================================================+

ops03: | Error: JAVA_HOME is not set |

ops03: +----------------------------------------------------------------------+

ops03: | Please download the latest Sun JDK from the Sun Java web site |

ops03: | > http://www.oracle.com/technetwork/java/javase/downloads |

ops03: | |

ops03: | HBase requires Java 1.8 or later. |

ops03: +======================================================================+

ops01: +======================================================================+

ops01: | Error: JAVA_HOME is not set |

ops01: +----------------------------------------------------------------------+

ops01: | Please download the latest Sun JDK from the Sun Java web site |

ops01: | > http://www.oracle.com/technetwork/java/javase/downloads |

ops01: | |

ops01: | HBase requires Java 1.8 or later. |

ops01: +======================================================================+

ops02: +======================================================================+

ops02: | Error: JAVA_HOME is not set |

ops02: +----------------------------------------------------------------------+

ops02: | Please download the latest Sun JDK from the Sun Java web site |

ops02: | > http://www.oracle.com/technetwork/java/javase/downloads |

ops02: | |

ops02: | HBase requires Java 1.8 or later. |

ops02: +======================================================================+

# On startup, JAVA_HOME is not recognized

wangting@ops01:/opt/module/hbase >

# Stop HBase and fix the problem

wangting@ops01:/opt/module/hbase >stop-hbase.sh

stopping hbase...

SLF4J: Class path contains multiple SLF4J bindings.

SLF4J: Found binding in [jar:file:/opt/module/hbase/lib/slf4j-log4j12-1.7.25.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/opt/module/hadoop-3.1.3/share/hadoop/common/lib/slf4j-log4j12-1.7.30.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.

SLF4J: Actual binding is of type [org.slf4j.impl.Log4jLoggerFactory]

wangting@ops01:/opt/module/hbase >

# JAVA_HOME cannot be found, so hbase-env.sh must be edited on all 3 servers

# Add the real JAVA_HOME path and save

wangting@ops01:/opt/module/hbase/conf >tail -4 hbase-env.sh

# java

export JAVA_HOME=/usr/jdk1.8.0_131/

# Start the services again

wangting@ops01:/opt/module/hbase/conf >start-hbase.sh

SLF4J: Class path contains multiple SLF4J bindings.

SLF4J: Found binding in [jar:file:/opt/module/hbase/lib/slf4j-log4j12-1.7.25.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/opt/module/hadoop-3.1.3/share/hadoop/common/lib/slf4j-log4j12-1.7.30.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.

SLF4J: Actual binding is of type [org.slf4j.impl.Log4jLoggerFactory]

running master, logging to /opt/module/hbase/logs/hbase-wangting-master-ops01.out

SLF4J: Class path contains multiple SLF4J bindings.

SLF4J: Found binding in [jar:file:/opt/module/hbase/lib/slf4j-log4j12-1.7.25.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/opt/module/hadoop-3.1.3/share/hadoop/common/lib/slf4j-log4j12-1.7.30.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.

ops01: running regionserver, logging to /opt/module/hbase/bin/../logs/hbase-wangting-regionserver-ops01.out

ops03: running regionserver, logging to /opt/module/hbase/bin/../logs/hbase-wangting-regionserver-ops03.out

ops02: running regionserver, logging to /opt/module/hbase/bin/../logs/hbase-wangting-regionserver-ops02.out

After startup, take a quick look at the running environment.

Official reference guide: http://hbase.apache.org/book.html

Default ports listed in the official guide:

- hbase.master.port 16000

- hbase.master.info.port 16010

- hbase.regionserver.port 16020

- hbase.regionserver.info.port 16030

# Check that the relevant ports are all open

wangting@ops01:/opt/module/hbase/conf >for i in ops01 ops02 ops03; do echo === $i === && ssh $i netstat -tnlpu|grep -E "16000|16010|16020|16030" ;done

=== ops01 ===

tcp6 0 0 11.8.37.50:16020 :::* LISTEN 66421/java

tcp6 0 0 :::16030 :::* LISTEN 66421/java

tcp6 0 0 11.8.37.50:16000 :::* LISTEN 66222/java

tcp6 0 0 :::16010 :::* LISTEN 66222/java

=== ops02 ===

tcp6 0 0 11.8.37.50:16020 :::* LISTEN 66421/java

tcp6 0 0 :::16030 :::* LISTEN 66421/java

tcp6 0 0 11.8.37.50:16000 :::* LISTEN 66222/java

tcp6 0 0 :::16010 :::* LISTEN 66222/java

=== ops03 ===

tcp6 0 0 11.8.37.50:16020 :::* LISTEN 66421/java

tcp6 0 0 :::16030 :::* LISTEN 66421/java

tcp6 0 0 11.8.37.50:16000 :::* LISTEN 66222/java

tcp6 0 0 :::16010 :::* LISTEN 66222/java

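Open a browser to verify (the Windows client has no hostname resolution configured, so enter the IP address and port directly):

At this point, installation and deployment are complete.

HBase Basic Operations

# Enter the HBase shell. The command-line operations here are only for getting familiar with the common functionality; in real scenarios most work is done through tools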

wangting@ops01:/opt/module/hbase/conf >hbase shell

SLF4J: Class path contains multiple SLF4J bindings.

SLF4J: Found binding in [jar:file:/opt/module/hbase/lib/slf4j-log4j12-1.7.25.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/opt/module/hadoop-3.1.3/share/hadoop/common/lib/slf4j-log4j12-1.7.30.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.

SLF4J: Actual binding is of type [org.slf4j.impl.Log4jLoggerFactory]

HBase Shell

Use "help" to get list of supported commands.

Use "exit" to quit this interactive shell.

For Reference, please visit: http://hbase.apache.org/2.0/book.html#shell

Version 2.0.5, r76458dd074df17520ad451ded198cd832138e929, Mon Mar 18 00:41:49 UTC 2019

Took 0.0036 seconds

hbase(main):001:0>

# View the shell help to get a rough idea of the kinds of commands available

hbase(main):002:0* help

# List the tables in the current database, equivalent to SQL's show tables

hbase(main):004:0* list

TABLE

0 row(s)

Took 0.4673 seconds

=> []

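# Create a table. Only the table name and column family are needed; columns can be added freely later, so they do not need to be considered at creation time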

hbase(main):006:0* create 'ZTbigdata','info'

Created table ZTbigdata

Took 1.3820 seconds

=> Hbase::Table - ZTbigdata

# Insert data into the table, similar to SQL's insert

hbase(main):007:0>

hbase(main):008:0* put 'ZTbigdata','1001','info:company','caitong'

Took 0.1842 seconds

hbase(main):009:0> put 'ZTbigdata','1001','info:usercode','00100000001'

Took 0.0075 seconds

hbase(main):010:0> put 'ZTbigdata','1002','info:name','dongbei'

Took 0.0063 seconds

hbase(main):011:0> put 'ZTbigdata','1002','info:type','quanshang'

Took 0.0061 seconds

hbase(main):012:0> put 'ZTbigdata','1002','info:usercode','002002002'

Took 0.0069 seconds

hbase(main):013:0>

# View the data in the table. ROW works like a primary key: looking up a ROW returns that row's data

hbase(main):030:0> scan 'ZTbigdata'

ROW COLUMN+CELL

1001 column=info:company, timestamp=1620636573247, value=caitong

1001 column=info:usercode, timestamp=1620636580325, value=00100000001

1002 column=info:name, timestamp=1620636587121, value=dongbei

1002 column=info:type, timestamp=1620636593149, value=quanshang

1002 column=info:usercode, timestamp=1620636600145, value=002002002

2 row(s)

Took 0.9372 seconds

# Conditional query (scan by RowKey range)

hbase(main):031:0> scan 'ZTbigdata',{STARTROW => '1001', STOPROW => '1001'}

ROW COLUMN+CELL

1001 column=info:company, timestamp=1620636573247, value=caitong

1001 column=info:usercode, timestamp=1620636580325, value=00100000001

1 row(s)

Took 0.0213 seconds

hbase(main):032:0> scan 'ZTbigdata',{STARTROW => '1001'}

ROW COLUMN+CELL

1001 column=info:company, timestamp=1620636573247, value=caitong

1001 column=info:usercode, timestamp=1620636580325, value=00100000001

1002 column=info:name, timestamp=1620636587121, value=dongbei

1002 column=info:type, timestamp=1620636593149, value=quanshang

1002 column=info:usercode, timestamp=1620636600145, value=002002002

2 row(s)

Took 0.0129 seconds

# View the table structure

hbase(main):034:0> describe 'ZTbigdata'

Table ZTbigdata is ENABLED

ZTbigdata

COLUMN FAMILIES DESCRIPTION

{NAME => 'info', VERSIONS => '1', EVICT_BLOCKS_ON_CLOSE => 'false', NEW_VERSION_BEHAVIOR => 'false', KEEP_DELETED_CELLS => 'FALSE', CACHE_DATA_ON_WRITE => 'false', DATA_BLOCK_ENCODING => 'NONE', TTL => 'FOREVER', MIN_VERSIONS => '0', REPLICATION_SCOPE => '0', BLOOMFILTER => 'ROW', CACHE_INDEX_ON_WRITE => 'false', IN_MEMORY => 'false', CACHE_BLOOMS_ON_WRITE => 'false', PREFETCH_BLOCKS_ON_OPEN => 'false', COMPRESSION => 'NONE', BLOCKCACHE => 'true', BLOCKSIZE => '65536'}

1 row(s)

Took 0.0584 seconds

# Update the data of specific fields

hbase(main):035:0> put 'ZTbigdata','1001','info:company','caitongnew'

Took 0.0816 seconds

hbase(main):036:0> put 'ZTbigdata','1001','info:usercode','00100000002'

Took 0.0071 seconds

hbase(main):037:0> scan 'ZTbigdata'

ROW COLUMN+CELL

1001 column=info:company, timestamp=1620637070160, value=caitongnew

1001 column=info:usercode, timestamp=1620637077504, value=00100000002

1002 column=info:name, timestamp=1620636587121, value=dongbei

1002 column=info:type, timestamp=1620636593149, value=quanshang

1002 column=info:usercode, timestamp=1620636600145, value=002002002

2 row(s)

Took 0.0219 seconds

# View the data of a given row, or of a given column family:column

hbase(main):038:0> get 'ZTbigdata','1001'

COLUMN CELL

info:company timestamp=1620637070160, value=caitongnew

info:usercode timestamp=1620637077504, value=00100000002

1 row(s)

Took 0.0309 seconds

hbase(main):039:0> get 'ZTbigdata','1001','info:company'

COLUMN CELL

info:company timestamp=1620637070160, value=caitongnew

1 row(s)

Took 0.0145 seconds

# Count the rows in the table

hbase(main):040:0> count 'ZTbigdata'

2 row(s)

Took 0.0449 seconds

=> 2

# Delete data [ delete all data for a rowkey ]

hbase(main):041:0> deleteall 'ZTbigdata','1001'

Took 0.0091 seconds

# Delete data [ delete one column of a rowkey ]

hbase(main):042:0> delete 'ZTbigdata','1002','info:usercode'

Took 0.0081 seconds

# Empty the table

hbase(main):043:0> truncate 'ZTbigdata'

Truncating 'ZTbigdata' table (it may take a while):

Disabling table...

Truncating table...

Took 2.0349 seconds

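# [Note] Emptying a table happens in the order disable, then truncate; as the output above shows, the truncate command performs the disable step itself

# Drop a table - step 1: put the table into the disabled state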

hbase(main):044:0> disable 'ZTbigdata'

Took 0.7933 seconds

# Drop a table - step 2: drop the table

hbase(main):045:0> drop 'ZTbigdata'

Took 0.2539 seconds

# List the tables again; the ZTbigdata table is now gone

hbase(main):046:0> list

TABLE

0 row(s)

Took 0.0064 seconds

=> []

# Create the table again and try to drop it directly to see the result

hbase(main):047:0> create 'ZTbigdata','info'

Created table ZTbigdata

Took 0.7455 seconds

=> Hbase::Table - ZTbigdata

hbase(main):048:0> list

TABLE

ZTbigdata

1 row(s)

Took 0.0085 seconds

=> ["ZTbigdata"]

# Dropping a table without disabling it first raises an ERROR

hbase(main):049:0> drop 'ZTbigdata'

ERROR: Table ZTbigdata is enabled. Disable it first.

Drop the named table. Table must first be disabled:

hbase> drop 't1'

hbase> drop 'ns1:t1'

Took 0.0203 seconds

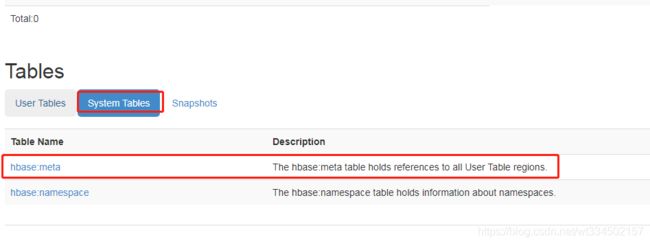

Use the information in the web UI to verify the operations performed

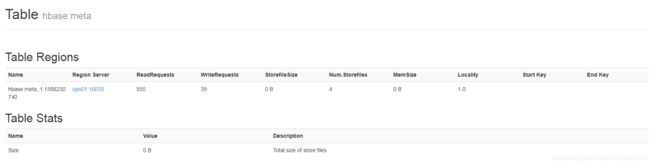

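System Tables shows the meta table, where the metadata of all tables is stored

[Note 1]:

In the hbase shell, if the prompt ends with * instead of 0>, the shell is still waiting for more input. Entering two single quotes ('') returns it to normal:

hbase(main):044:0*

hbase(main):045:0* ''

=> ""

hbase(main):046:0> # back in this state, commands can be entered normally

[Note 2]:

start-hbase.sh can be run on any machine in the cluster, but whichever machine it runs on becomes the master. In the browser, access the master on port 16010.

[Note 3]:

start-hbase.sh starts the whole cluster. Alternatively, run

hbase-daemon.sh start master

hbase-daemon.sh start regionserver

on individual servers to start the master and the regionservers one by one.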

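Advanced HBase and Internals

Architecture

- StoreFile

The physical file that holds the actual data; StoreFiles are stored on HDFS as HFiles. Each Store has one or more StoreFiles (HFiles), and the data within each StoreFile is sorted.

- MemStore

The write cache. Because data in an HFile must be sorted, data is first stored in the MemStore, sorted there, and flushed to an HFile once a flush is triggered. Every flush creates a new HFile.

- WAL

Data has to pass through the MemStore before it is flushed to an HFile, but data held only in memory is at real risk of being lost on failure. To solve this, data is first written to a file called the Write-Ahead Log and only then to the MemStore, so after a system failure the data can be rebuilt from this log.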

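Data Write Flow

Write flow:

- The client checks whether it has the Meta information cached. If not, it first contacts Zookeeper to find out which Region Server hosts the hbase:meta table. Zookeeper returns the meta table's metadata, including its location, for example that hbase:meta is hosted on Region Server ops01.

- The client contacts that Region Server (ops01) and reads hbase:meta. Using the namespace:table/rowkey of the write request, it determines which Region on which Region Server holds the target data. It caches the table's region information and the meta table's location in the client-side meta cache for later requests.

- The client communicates with the target Region Server;

- The data is written sequentially (appended) to the WAL;

- The data is written to the corresponding MemStore, where it is sorted;

- An ack is sent to the client;

- Once a MemStore flush is triggered, the data is flushed to an HFile.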
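The server-side part of the steps above (WAL, MemStore, ack, flush) can be sketched as a toy model. This is not HBase code: `TinyStore` is a hypothetical class, the flush threshold counts entries rather than bytes, and the flush runs inline instead of in the background.

```python
class TinyStore:
    """Toy write path: WAL append, MemStore buffer, flush to sorted files."""

    def __init__(self, flush_threshold=3):
        self.wal = []        # append-only log of (key, value) edits
        self.memstore = {}   # in-memory write buffer
        self.hfiles = []     # each flush adds one sorted, immutable list
        # Hypothetical threshold: number of entries, not bytes.
        self.flush_threshold = flush_threshold

    def put(self, key, value):
        self.wal.append((key, value))   # 1. log the edit first
        self.memstore[key] = value      # 2. then buffer it in memory
        if len(self.memstore) >= self.flush_threshold:
            self.flush()
        return "ack"                    # 3. acknowledge the client

    def flush(self):
        """Write the MemStore out as one new sorted file."""
        if self.memstore:
            self.hfiles.append(sorted(self.memstore.items()))
            self.memstore = {}
            self.wal = []               # flushed edits need no replay

    @classmethod
    def recover(cls, wal, flush_threshold=3):
        """Rebuild the MemStore after a crash by replaying WAL entries."""
        store = cls(flush_threshold)
        for key, value in wal:
            store.memstore[key] = value
        store.wal = list(wal)
        return store


store = TinyStore(flush_threshold=2)
store.put(b"1002", b"dongbei")
store.put(b"1001", b"caitong")      # reaches the threshold, triggers a flush
store.put(b"1003", b"quanshang")    # stays in the MemStore and the WAL
recovered = TinyStore.recover(store.wal, flush_threshold=2)
```

The point of the sketch is the ordering: an edit that has been acknowledged is always in the WAL or in a flushed file, so losing the in-memory buffer does not lose data.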

MemStore Flush

MemStore flush triggers:

- When a single memstore reaches hbase.hregion.memstore.flush.size (default 128M), all memstores in its region are flushed.

When a memstore reaches

hbase.hregion.memstore.flush.size (default 128M) * hbase.hregion.memstore.block.multiplier (default 4)

further writes to that memstore are blocked.

- When the total size of the memstores in a region server reaches

java_heapsize * hbase.regionserver.global.memstore.size (default 0.4) * hbase.regionserver.global.memstore.size.lower.limit (default 0.95),

regions are flushed in order of their total memstore size (largest first) until the total memstore size in the region server drops back below that threshold.

When the total size of the memstores in a region server reaches

java_heapsize * hbase.regionserver.global.memstore.size (default 0.4)

writes to all memstores are blocked.

- When the automatic flush interval elapses. The interval is configured by hbase.regionserver.optionalcacheflushinterval (default 1 hour).

- When the number of WAL files exceeds hbase.regionserver.maxlogs, regions are flushed in chronological order until the number of WAL files drops below hbase.regionserver.maxlogs.
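The size-based thresholds above are simple products, so it can help to compute them for a concrete heap size. The function below is a sketch that only restates the arithmetic from this section; its name and parameter names are hypothetical, and the defaults are the values quoted above.

```python
MB = 1024 * 1024


def memstore_thresholds(heap_bytes,
                        flush_size=128 * MB,
                        block_multiplier=4,
                        global_size=0.4,
                        lower_limit=0.95):
    """Return the byte thresholds described above for one region server."""
    return {
        # one memstore reaching this size flushes its whole region
        "region_flush": flush_size,
        # one memstore reaching this size blocks writes to it
        "region_block_writes": flush_size * block_multiplier,
        # total memstore size that starts forced flushes, largest first
        "server_flush": int(heap_bytes * global_size * lower_limit),
        # total memstore size that blocks writes to all memstores
        "server_block_writes": int(heap_bytes * global_size),
    }


# Example: a region server with an 8 GB heap (an assumed value).
limits = memstore_thresholds(heap_bytes=8 * 1024 * MB)
```

With the default values, a single memstore blocks writes at 512 MB, and server-wide forced flushing starts slightly before the server-wide write block.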

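Data Read Flow

Read flow:

- The client first contacts Zookeeper to find out which Region Server hosts the hbase:meta table.

- The client contacts that Region Server and reads hbase:meta. Using the namespace:table/rowkey of the read request, it determines which Region on which Region Server holds the target data. It caches the table's region information and the meta table's location in the client-side meta cache for later requests.

- The client communicates with the target Region Server;

- The target data is looked up in the Block Cache (read cache), the MemStore, and the Store Files (HFiles), and everything found is merged. "Everything" here means the different versions (time stamp) or different types (Put/Delete) of the same piece of data.

- The data blocks read from files (Block: the HFile storage unit, 64KB by default) are cached in the Block Cache.

- The merged final result is returned to the client.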
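The merge step in the read flow can be illustrated with a short sketch. It is a simplification under stated assumptions: `read_cell` is a hypothetical function, each source is a plain list of (timestamp, kind, value) tuples for a single cell, only the newest entry decides the result, and a Delete wins a timestamp tie.

```python
PUT, DELETE = "Put", "Delete"


def read_cell(sources):
    """Merge the versions of one cell and return the visible value.

    Each source is a list of (timestamp, kind, value) tuples, for example
    one list for the MemStore and one per HFile. Returns None when nothing
    is stored or when the newest entry is a Delete marker.
    """
    versions = [entry for source in sources for entry in source]
    if not versions:
        return None
    # Newest timestamp wins; on a tie, a Delete outranks a Put.
    ts, kind, value = max(versions, key=lambda e: (e[0], e[1] == DELETE))
    return value if kind == PUT else None


memstore = [(3, PUT, b"caitongnew")]
hfile_a = [(1, PUT, b"caitong")]
hfile_b = [(4, DELETE, None)]

latest = read_cell([memstore, hfile_a])
after_delete = read_cell([memstore, hfile_a, hfile_b])
```

This also shows why a read cannot stop at the first source that contains the cell: a newer Put or Delete may sit in a different file.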

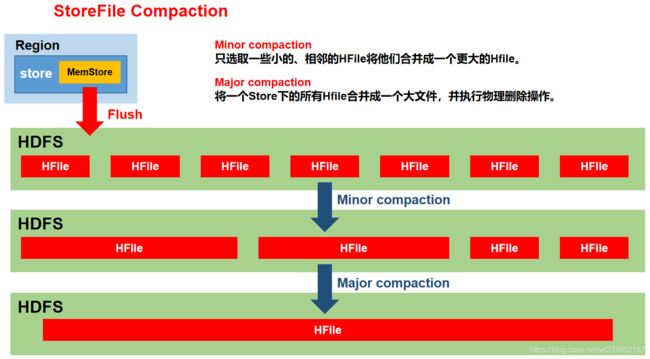

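StoreFile Compaction

Every memstore flush produces a new HFile, and different versions (timestamp) and types (Put/Delete) of the same field may end up in different HFiles, so a query has to go through all of them. StoreFile Compaction reduces the number of HFiles and cleans up expired and deleted data.

There are two kinds of Compaction: Minor Compaction and Major Compaction. Minor Compaction merges several adjacent, smaller HFiles into one larger HFile but does not clean up expired or deleted data. Major Compaction merges all HFiles under a Store into one large HFile and does clean up expired and deleted data.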
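The difference between the two kinds of compaction can be sketched as follows. This is a toy model, assuming one retained version per row key and ignoring TTL; `compact` is a hypothetical function, and each input file must already be sorted by row key ascending and timestamp descending.

```python
import heapq


def compact(hfiles, major=False):
    """Merge sorted HFiles into one sorted file.

    Each HFile is a list of (row_key, timestamp, kind, value) tuples with
    kind "Put" or "Delete". A minor compaction keeps every entry. A major
    compaction keeps only the newest Put per row key and drops Delete
    markers together with the entries they hide.
    """
    merged = list(heapq.merge(*hfiles, key=lambda e: (e[0], -e[1])))
    if not major:
        return merged
    result, seen = [], set()
    for row_key, ts, kind, value in merged:
        if row_key in seen:
            continue              # an older version of a decided key
        seen.add(row_key)
        if kind == "Put":
            result.append((row_key, ts, kind, value))
    return result


file_1 = [(b"1001", 1, "Put", b"caitong"), (b"1002", 1, "Put", b"dongbei")]
file_2 = [(b"1001", 2, "Put", b"caitongnew"), (b"1002", 3, "Delete", None)]

minor = compact([file_1, file_2])
major = compact([file_1, file_2], major=True)
```

After the minor compaction all four entries still exist, just in one file. After the major compaction only the newest value of row 1001 remains, because row 1002 was deleted.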

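Region Split

By default every Table starts with a single Region, and as data keeps being written the Region splits automatically. Right after a split both child Regions are on the current Region Server, but for load-balancing reasons the HMaster may move a Region to another Region Server.

Region Split triggers:

1) When the total size of all StoreFiles under one Store in a region exceeds hbase.hregion.max.filesize, the Region splits (before version 0.94).

2) When the total size of all StoreFiles under one Store in a region exceeds Min(R^2 * hbase.hregion.memstore.flush.size, hbase.hregion.max.filesize), the Region splits, where R is the number of Regions of that Table on the current Region Server (version 0.94 and later).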
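To see how the second rule behaves as a table grows, the formula can be evaluated for increasing values of R. This sketch only restates the formula as written above; the function name is hypothetical, and the 128 MB flush size and 10 GB maximum file size are assumed default values.

```python
MB = 1024 * 1024
GB = 1024 * MB


def split_threshold(regions_on_server,
                    flush_size=128 * MB,
                    max_filesize=10 * GB):
    """Return Min(R^2 * flush_size, max_filesize) in bytes."""
    return min(regions_on_server ** 2 * flush_size, max_filesize)


# Threshold in MB for R = 1..10 regions of the table on one server.
for r in range(1, 11):
    print(r, split_threshold(r) // MB, "MB")
```

Under these assumptions the first split happens at 128 MB, the threshold then grows quadratically, and from R = 9 onward it is capped at the maximum file size.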

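Using HBase with Phoenix

Phoenix Overview

Phoenix Definition

Phoenix is an open-source SQL layer for HBase. It lets you use the standard JDBC API instead of the HBase client API to create tables, insert data, and query HBase data.

Phoenix Features

1) Easy integration: with Spark, Hive, Pig, Flume, and Map Reduce;

2) Simple to use: DML commands, plus DDL commands for creating and altering tables with versioned incremental changes;

3) Supports creating secondary indexes on HBase.

Phoenix Architecture

Phoenix Installation and Deployment

# Installation package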

wangting@ops01:/home/wangting >cd /opt/software/

wangting@ops01:/opt/software >ll | grep phoenix

-rw-r--r-- 1 wangting wangting 436868323 May 9 17:11 apache-phoenix-5.0.0-HBase-2.0-bin.tar.gz

# Extract

wangting@ops01:/opt/software >tar -zxvf apache-phoenix-5.0.0-HBase-2.0-bin.tar.gz -C /opt/module/

wangting@ops01:/opt/software >cd /opt/module/

wangting@ops01:/opt/module >ll

total 44

drwxr-xr-x 5 wangting wangting 4096 Jun 27 2018 apache-phoenix-5.0.0-HBase-2.0-bin

drwxrwxr-x 2 wangting wangting 4096 Apr 4 11:01 datas

drwxr-xr-x 12 wangting wangting 4096 Apr 24 16:37 flume

-rw-rw-r-- 1 wangting wangting 30 Apr 25 11:33 group.log

drwxr-xr-x 12 wangting wangting 4096 Mar 12 11:38 hadoop-3.1.3

drwxrwxr-x 8 wangting wangting 4096 May 10 11:55 hbase

drwxrwxr-x 11 wangting wangting 4096 Apr 2 15:14 hive

drwxr-xr-x 7 wangting wangting 4096 Apr 29 11:07 kafka

drwxrwxr-x 3 wangting wangting 4096 Apr 10 16:25 tez

drwxrwxr-x 5 wangting wangting 4096 Apr 2 15:03 tez-0.9.2_bak0410

drwxr-xr-x 8 wangting wangting 4096 Mar 25 11:02 zookeeper-3.5.7

# Rename

wangting@ops01:/opt/module >mv apache-phoenix-5.0.0-HBase-2.0-bin phoenix

wangting@ops01:/opt/module >cd phoenix/

wangting@ops01:/opt/module/phoenix >

# Copy the server and client jars into the lib directory of every node in the HBase cluster

wangting@ops01:/opt/module/phoenix >cp phoenix-5.0.0-HBase-2.0-server.jar /opt/module/hbase/lib/

wangting@ops01:/opt/module/phoenix >scp phoenix-5.0.0-HBase-2.0-server.jar ops02:/opt/module/hbase/lib/

phoenix-5.0.0-HBase-2.0-server.jar 100% 40MB 112.8MB/s 00:00

wangting@ops01:/opt/module/phoenix >scp phoenix-5.0.0-HBase-2.0-server.jar ops03:/opt/module/hbase/lib/

phoenix-5.0.0-HBase-2.0-server.jar 100% 40MB 117.3MB/s 00:00

wangting@ops01:/opt/module/phoenix >cp phoenix-5.0.0-HBase-2.0-client.jar /opt/module/hbase/lib/

wangting@ops01:/opt/module/phoenix >scp phoenix-5.0.0-HBase-2.0-client.jar ops02:/opt/module/hbase/lib/

phoenix-5.0.0-HBase-2.0-client.jar 100% 129MB 115.3MB/s 00:01

wangting@ops01:/opt/module/phoenix >scp phoenix-5.0.0-HBase-2.0-client.jar ops03:/opt/module/hbase/lib/

phoenix-5.0.0-HBase-2.0-client.jar 100% 129MB 117.0MB/s 00:01

wangting@ops01:/opt/module/phoenix >

# Add environment variables

wangting@ops01:/opt/module/phoenix >sudo vim /etc/profile

#phoenix

export PHOENIX_HOME=/opt/module/phoenix

export PATH=$PATH:$PHOENIX_HOME/bin

wangting@ops01:/opt/module/phoenix >source /etc/profile

# Check that Zookeeper is listening on port 2181

wangting@ops01:/opt/module/phoenix >for i in ops01 ops02 ops03 ;do ssh $i netstat -tnlpu|grep 2181;done

tcp6 0 0 :::2181 :::* LISTEN 41773/java

tcp6 0 0 :::2181 :::* LISTEN 41914/java

tcp6 0 0 :::2181 :::* LISTEN 52733/java

# Restart the HBase cluster

wangting@ops01:/opt/module/phoenix >stop-hbase.sh

stopping hbase.........

wangting@ops01:/opt/module/phoenix >start-hbase.sh

# Start Phoenix, pointing it at the Zookeeper cluster

wangting@ops01:/opt/module/phoenix >sqlline.py ops01,ops02,ops03:2181

Setting property: [incremental, false]

Setting property: [isolation, TRANSACTION_READ_COMMITTED]

issuing: !connect jdbc:phoenix:ops01,ops02,ops03:2181 none none org.apache.phoenix.jdbc.PhoenixDriver

Connecting to jdbc:phoenix:ops01,ops02,ops03:2181

SLF4J: Class path contains multiple SLF4J bindings.

SLF4J: Found binding in [jar:file:/opt/module/phoenix/phoenix-5.0.0-HBase-2.0-client.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/opt/module/hadoop-3.1.3/share/hadoop/common/lib/slf4j-log4j12-1.7.30.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.

21/05/13 15:32:18 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

Connected to: Phoenix (version 5.0)

Driver: PhoenixEmbeddedDriver (version 5.0)

Autocommit status: true

Transaction isolation: TRANSACTION_READ_COMMITTED

Building list of tables and columns for tab-completion (set fastconnect to true to skip)...

133/133 (100%) Done

Done

sqlline version 1.2.0

0: jdbc:phoenix:ops01,ops02,ops03:2181>

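# Phoenix is used the same way as Hive: it only needs to be deployed on the node you use it from, and one node is enough for this experiment. To use it from several servers, distribute the directory to them.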

wangting@ops01:/opt/module/phoenix >scp -r /opt/module/phoenix ops02:/opt/module/

wangting@ops01:/opt/module/phoenix >scp -r /opt/module/phoenix ops03:/opt/module/

wangting@ops01:/opt/module/phoenix >scp -r /opt/module/phoenix ops04:/opt/module/

##############

wangting@ops02:/opt/module/phoenix >sudo vim /etc/profile

wangting@ops02:/opt/module/phoenix >source /etc/profile

wangting@ops02:/opt/module/phoenix >

##############

wangting@ops03:/opt/module/phoenix >sudo vim /etc/profile

wangting@ops03:/opt/module/phoenix >source /etc/profile

wangting@ops03:/opt/module/phoenix >

##############

wangting@ops04:/opt/module/phoenix >sudo vim /etc/profile

wangting@ops04:/opt/module/phoenix >source /etc/profile

wangting@ops04:/opt/module/phoenix >
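The three `scp` commands above differ only in the host name, so they can be written as a loop. The sketch below prints the commands by default and only copies when asked to. The function name `distribute_phoenix` and the `DRY_RUN` switch are hypothetical names introduced for this example.

```shell
# Print (or run) the scp commands that copy the Phoenix directory to each host.
# distribute_phoenix and DRY_RUN are hypothetical names for this sketch.
# DRY_RUN defaults to 1, so nothing is copied unless DRY_RUN=0 is set.
distribute_phoenix() {
    local src="/opt/module/phoenix"
    local dest="/opt/module/"
    local host
    for host in "$@"; do
        if [ "${DRY_RUN:-1}" = "1" ]; then
            echo "scp -r ${src} ${host}:${dest}"
        else
            scp -r "$src" "${host}:${dest}"
        fi
    done
}

# Dry run: shows what would be executed for the three remaining nodes.
distribute_phoenix ops02 ops03 ops04
```

After checking the printed commands, `DRY_RUN=0 distribute_phoenix ops02 ops03 ops04` would perform the copy. The environment variables still have to be added on each target node, as in the steps above.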

Phoenix Shell operations

-- List tables

0: jdbc:phoenix:ops01,ops02,ops03:2181> !table

+------------+--------------+-------------+---------------+----------+------------+----------------------------+-----------------+--------------+-----------------+---------------+---------------+-----------------+------------+---------+

| TABLE_CAT | TABLE_SCHEM | TABLE_NAME | TABLE_TYPE | REMARKS | TYPE_NAME | SELF_REFERENCING_COL_NAME | REF_GENERATION | INDEX_STATE | IMMUTABLE_ROWS | SALT_BUCKETS | MULTI_TENANT | VIEW_STATEMENT | VIEW_TYPE | INDEX_T |

+------------+--------------+-------------+---------------+----------+------------+----------------------------+-----------------+--------------+-----------------+---------------+---------------+-----------------+------------+---------+

| | SYSTEM | CATALOG | SYSTEM TABLE | | | | | | false | null | false | | | |

| | SYSTEM | FUNCTION | SYSTEM TABLE | | | | | | false | null | false | | | |

| | SYSTEM | LOG | SYSTEM TABLE | | | | | | true | 32 | false | | | |

| | SYSTEM | SEQUENCE | SYSTEM TABLE | | | | | | false | null | false | | | |

| | SYSTEM | STATS | SYSTEM TABLE | | | | | | false | null | false | | | |

+------------+--------------+-------------+---------------+----------+------------+----------------------------+-----------------+--------------+-----------------+---------------+---------------+-----------------+------------+---------+

0: jdbc:phoenix:ops01,ops02,ops03:2181>

-- Create tables

0: jdbc:phoenix:ops01,ops02,ops03:2181> CREATE TABLE IF NOT EXISTS ztdata(id VARCHAR primary key,name VARCHAR);

No rows affected (1.324 seconds)

0: jdbc:phoenix:ops01,ops02,ops03:2181> CREATE TABLE IF NOT EXISTS us_population (

. . . . . . . . . . . . . . . . . . . > State CHAR(2) NOT NULL,

. . . . . . . . . . . . . . . . . . . > City VARCHAR NOT NULL,

. . . . . . . . . . . . . . . . . . . > Population BIGINT

. . . . . . . . . . . . . . . . . . . > CONSTRAINT my_pk PRIMARY KEY (state, city));

No rows affected (1.3 seconds)

0: jdbc:phoenix:ops01,ops02,ops03:2181>

-- Insert data into the table (Phoenix uses UPSERT instead of INSERT)

0: jdbc:phoenix:ops01,ops02,ops03:2181> upsert into ztdata values('1001','caitong');

1 row affected (0.107 seconds)

0: jdbc:phoenix:ops01,ops02,ops03:2181> upsert into ztdata values('1002','guangfa');

1 row affected (0.012 seconds)

0: jdbc:phoenix:ops01,ops02,ops03:2181> upsert into ztdata values('1003','fangzheng');

1 row affected (0.009 seconds)

-- Query the data in the table

0: jdbc:phoenix:ops01,ops02,ops03:2181> select * from ztdata;

+-------+------------+

| ID | NAME |

+-------+------------+

| 1001 | caitong |

| 1002 | guangfa |

| 1003 | fangzheng |

+-------+------------+

3 rows selected (0.051 seconds)
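The statements above were typed interactively, but `sqlline.py` also accepts a SQL file as a second argument and runs it against the cluster. The sketch below collects the statements from this session into a script file. The file name and location are assumptions made for the example, and the final command is only printed because it needs a live cluster.

```shell
# Write the statements from the interactive session into a script file.
# The file name and location are assumptions made for this sketch.
sql_file="${TMPDIR:-/tmp}/ztdata_demo.sql"

# The quoted 'EOF' stops the shell from expanding anything inside the SQL text.
cat > "$sql_file" <<'EOF'
CREATE TABLE IF NOT EXISTS ztdata(id VARCHAR primary key,name VARCHAR);
UPSERT INTO ztdata VALUES('1001','caitong');
UPSERT INTO ztdata VALUES('1002','guangfa');
UPSERT INTO ztdata VALUES('1003','fangzheng');
SELECT * FROM ztdata;
EOF

# Print the command instead of running it, since it requires the cluster.
echo "sqlline.py ops01,ops02,ops03:2181 $sql_file"
```

Because the table is created with `IF NOT EXISTS` and rows are written with `UPSERT`, running the script a second time should leave the table with the same three rows.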

-- Query with a condition

0: jdbc:phoenix:ops01,ops02,ops03:2181> select * from ztdata where id='1001';

+-------+----------+

| ID | NAME |

+-------+----------+

| 1001 | caitong |

+-------+----------+

1 row selected (0.021 seconds)

0: jdbc:phoenix:ops01,ops02,ops03:2181> select * from ztdata where id='1002';

+-------+----------+

| ID | NAME |

+-------+----------+

| 1002 | guangfa |

+-------+----------+

1 row selected (0.02 seconds)

-- Delete a row

0: jdbc:phoenix:ops01,ops02,ops03:2181> delete from ztdata where id='1001';

1 row affected (0.016 seconds)

0: jdbc:phoenix:ops01,ops02,ops03:2181> select * from ztdata;

+-------+------------+

| ID | NAME |

+-------+------------+

| 1002 | guangfa |

| 1003 | fangzheng |

+-------+------------+

2 rows selected (0.023 seconds)

0: jdbc:phoenix:ops01,ops02,ops03:2181>

-- Drop a table

0: jdbc:phoenix:ops01,ops02,ops03:2181> !table

+------------+--------------+----------------+---------------+----------+------------+----------------------------+-----------------+--------------+-----------------+---------------+---------------+-----------------+------------+------+

| TABLE_CAT | TABLE_SCHEM | TABLE_NAME | TABLE_TYPE | REMARKS | TYPE_NAME | SELF_REFERENCING_COL_NAME | REF_GENERATION | INDEX_STATE | IMMUTABLE_ROWS | SALT_BUCKETS | MULTI_TENANT | VIEW_STATEMENT | VIEW_TYPE | INDE |

+------------+--------------+----------------+---------------+----------+------------+----------------------------+-----------------+--------------+-----------------+---------------+---------------+-----------------+------------+------+

| | SYSTEM | CATALOG | SYSTEM TABLE | | | | | | false | null | false | | | |

| | SYSTEM | FUNCTION | SYSTEM TABLE | | | | | | false | null | false | | | |

| | SYSTEM | LOG | SYSTEM TABLE | | | | | | true | 32 | false | | | |

| | SYSTEM | SEQUENCE | SYSTEM TABLE | | | | | | false | null | false | | | |

| | SYSTEM | STATS | SYSTEM TABLE | | | | | | false | null | false | | | |

| | | US_POPULATION | TABLE | | | | | | false | null | false | | | |

| | | ZTDATA | TABLE | | | | | | false | null | false | | | |

+------------+--------------+----------------+---------------+----------+------------+----------------------------+-----------------+--------------+-----------------+---------------+---------------+-----------------+------------+------+

0: jdbc:phoenix:ops01,ops02,ops03:2181> drop table ztdata;

No rows affected (1.415 seconds)

0: jdbc:phoenix:ops01,ops02,ops03:2181> !table

+------------+--------------+----------------+---------------+----------+------------+----------------------------+-----------------+--------------+-----------------+---------------+---------------+-----------------+------------+------+

| TABLE_CAT | TABLE_SCHEM | TABLE_NAME | TABLE_TYPE | REMARKS | TYPE_NAME | SELF_REFERENCING_COL_NAME | REF_GENERATION | INDEX_STATE | IMMUTABLE_ROWS | SALT_BUCKETS | MULTI_TENANT | VIEW_STATEMENT | VIEW_TYPE | INDE |

+------------+--------------+----------------+---------------+----------+------------+----------------------------+-----------------+--------------+-----------------+---------------+---------------+-----------------+------------+------+

| | SYSTEM | CATALOG | SYSTEM TABLE | | | | | | false | null | false | | | |

| | SYSTEM | FUNCTION | SYSTEM TABLE | | | | | | false | null | false | | | |

| | SYSTEM | LOG | SYSTEM TABLE | | | | | | true | 32 | false | | | |

| | SYSTEM | SEQUENCE | SYSTEM TABLE | | | | | | false | null | false | | | |

| | SYSTEM | STATS | SYSTEM TABLE | | | | | | false | null | false | | | |

| | | US_POPULATION | TABLE | | | | | | false | null | false | | | |

+------------+--------------+----------------+---------------+----------+------------+----------------------------+-----------------+--------------+-----------------+---------------+---------------+-----------------+------------+------+

0: jdbc:phoenix:ops01,ops02,ops03:2181>
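Note that the tables created as `ztdata` and `us_population` appear as `ZTDATA` and `US_POPULATION` in the `!table` listings above. Phoenix folds unquoted identifiers to upper case and keeps the case of identifiers written in double quotes. The sketch below only imitates that rule for a single plain name, to make it easier to predict what a table will be called; the function name `phoenix_normalize_identifier` is hypothetical, and it does not handle schema-qualified names or other cases that Phoenix itself handles.

```shell
# Imitate how Phoenix treats a single identifier:
#   unquoted      -> folded to upper case
#   double-quoted -> quotes removed, case preserved
# phoenix_normalize_identifier is a hypothetical helper for illustration only.
phoenix_normalize_identifier() {
    case "$1" in
        \"*\")
            # Strip the surrounding double quotes and keep the text as written.
            printf '%s\n' "$1" | sed 's/^"//; s/"$//'
            ;;
        *)
            printf '%s\n' "$1" | tr '[:lower:]' '[:upper:]'
            ;;
    esac
}

phoenix_normalize_identifier ztdata
phoenix_normalize_identifier '"ztdata"'
```

The first call prints `ZTDATA` and the second prints `ztdata`, which is why a table created with a quoted lower-case name has to be quoted again in every later statement.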

-- Exit the command line

0: jdbc:phoenix:ops01,ops02,ops03:2181> !quit

Closing: org.apache.phoenix.jdbc.PhoenixConnection

wangting@ops01:/opt/module/phoenix >