Kubernetes, Part 2: Cluster Deployment (Etcd + Flannel)

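- I. Three Officially Supported Deployment Methods
  - 1. minikube
  - 2. kubeadm
  - 3. Binary packages
- II. Kubernetes Platform Environment Planning
  - 1. Server configuration
  - 2. Server roles
  - 3. Single-master cluster architecture diagram
  - 4. Multi-master cluster architecture diagram
- III. Self-Signed SSL Certificates
- IV. Environment Deployment
- V. Etcd Database Cluster Deployment
- VI. Installing Docker on the Nodes (node1 first, then node2)
- VII. Flannel Container Network Deployment
  - 1. VXLAN network topology
  - 2. Communication flow between containers on different nodes
- VIII. Flannel Network Configuration
  - 1. Write the allocated subnet range into etcd for flannel to use
  - 2. Configure Docker to connect to flannel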

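I. Three Officially Supported Deployment Methods

1. minikube

minikube is a tool that quickly runs a single-node Kubernetes cluster locally. It is intended only for trying out Kubernetes or for day-to-day development and testing.

Deployment guide: https://kubernetes.io/docs/setup/minikube/

2. kubeadm

kubeadm is also a tool. It provides kubeadm init and kubeadm join for quickly deploying a Kubernetes cluster.

Deployment guide: https://kubernetes.io/docs/reference/setup-tools/kubeadm/kubeadm/

3. Binary packages

Recommended. Download the official release binaries and deploy each component by hand to assemble the Kubernetes cluster.

Download: https://github.com/kubernetes/kubernetes/releases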

II. Kubernetes Platform Environment Planning

1. Server configuration

| Software | Version |
|---|---|
| Linux operating system | CentOS7.5_x64 |
| Kubernetes | 1.12 |
| Docker | 18.xx-ce |
| Etcd | 3.x |
| Flannel | 0.10 |

2. Server roles

| Role | IP | Components | Recommended configuration |
|---|---|---|---|
| master01 | 192.168.221.10 | kube-apiserver, kube-controller-manager, kube-scheduler, etcd | 2+4 |
| master02 | 192.168.221.20 | kube-apiserver, kube-controller-manager, kube-scheduler, etcd | 2+4 |
| node01 | 192.168.221.30 | kubelet, kube-proxy, docker, flannel, etcd | 2+4 |
| node02 | 192.168.221.40 | kubelet, kube-proxy, docker, flannel | 2+4 |
| Load Balancer | 192.168.221.50 | Nginx L4 | 2+4 |
| Load Balancer | 192.168.221.60 | Nginx L4 | 2+4 |
| Registry | 192.168.221.70 | Harbor | 2+4 |

3、单master集群架构图

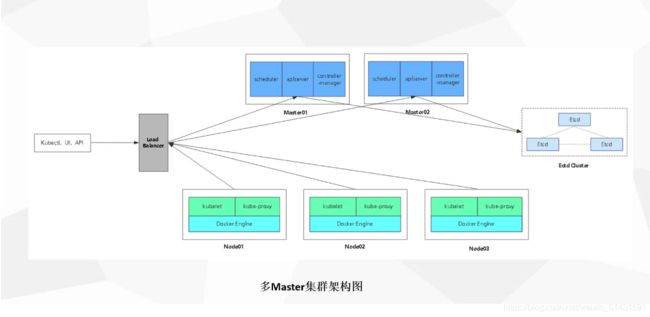

4、多master集群架构图

III. Self-Signed SSL Certificates

| Component | Certificates used |
|---|---|
| etcd | ca.pem, server.pem, server-key.pem |
| flannel | ca.pem, server.pem, server-key.pem |
| kube-apiserver | ca.pem, server.pem, server-key.pem |
| kubelet | ca.pem, server.pem |
| kube-proxy | ca.pem, kube-proxy.pem, kube-proxy-key.pem |
| kubectl | ca.pem, admin.pem, admin-key.pem |

IV. Environment Deployment

Official releases: https://github.com/kubernetes/kubernetes/releases?after=v1.13.1

V. Etcd Database Cluster Deployment

The lab environment is as follows:

| Host | Components |
|---|---|
| Master1: 192.168.221.70/24 | kube-apiserver, kube-controller-manager, kube-scheduler, etcd |
| Node01: 192.168.221.90/24 | kubelet, kube-proxy, docker, flannel, etcd |
| Node02: 192.168.221.100/24 | kubelet, kube-proxy, docker, flannel, etcd |

#On the master

[root@master1 ~]# mkdir k8s

[root@master1 ~]# cd k8s/

[root@master1 k8s]# ls #upload the following two scripts to this directory

etcd-cert.sh etcd.sh #etcd-cert.sh generates the etcd certificates; etcd.sh sets up the etcd service

[root@master1 k8s]# mkdir etcd-cert

[root@master1 k8s]# ls

etcd-cert etcd-cert.sh etcd.sh

[root@master1 k8s]# mv etcd-cert.sh etcd-cert

#Write a script that downloads the certificate tooling

[root@master1 k8s]# vim cfssl.sh

curl -L https://pkg.cfssl.org/R1.2/cfssl_linux-amd64 -o /usr/local/bin/cfssl

curl -L https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64 -o /usr/local/bin/cfssljson

curl -L https://pkg.cfssl.org/R1.2/cfssl-certinfo_linux-amd64 -o /usr/local/bin/cfssl-certinfo

chmod +x /usr/local/bin/cfssl /usr/local/bin/cfssljson /usr/local/bin/cfssl-certinfo

#Run the script to download the official cfssl binaries

[root@master1 k8s]# bash cfssl.sh

[root@master1 k8s]# ls /usr/local/bin/

cfssl cfssl-certinfo cfssljson

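#Start creating the certificates

//cfssl generates certificates, cfssljson writes certificate files from the JSON that cfssl emits, and cfssl-certinfo displays certificate information

#Define the CA signing configuration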

[root@master1 ~]# cd /root/k8s/etcd-cert

cat > ca-config.json <<EOF

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"www": {

"expiry": "87600h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

}

}

}

}

EOF
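The expiry values in ca-config.json are given in hours, which makes the validity period hard to read at a glance. As a quick sanity check, the sketch below converts such a value to whole years. The helper name hours_to_years is made up for this illustration and is not part of cfssl.

```shell
#!/usr/bin/env bash
# Hypothetical helper: convert a cfssl-style expiry such as "87600h" to whole years.
hours_to_years() {
  local hours="${1%h}"          # strip the trailing "h"
  echo $(( hours / 24 / 365 ))  # integer division, ignoring leap days
}

hours_to_years 87600h
```

With the value used here, the certificates are valid for roughly ten years.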

#Define the CA certificate signing request

[root@master1 ~]# cd /root/k8s/etcd-cert

cat > ca-csr.json <<EOF

{

"CN": "etcd CA",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Beijing",

"ST": "Beijing"

}

]

}

EOF

#Generate the CA certificate; this produces ca-key.pem and ca.pem

[root@master1 etcd-cert]# cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

2021/04/10 22:53:30 [INFO] generating a new CA key and certificate from CSR

2021/04/10 22:53:30 [INFO] generate received request

2021/04/10 22:53:30 [INFO] received CSR

2021/04/10 22:53:30 [INFO] generating key: rsa-2048

2021/04/10 22:53:30 [INFO] encoded CSR

2021/04/10 22:53:30 [INFO] signed certificate with serial number 397125830926114737701706075410049927608256756699

#Define the server certificate request used to authenticate traffic among the three etcd nodes

[root@master1 ~]# cd /root/k8s/etcd-cert

cat > server-csr.json <<EOF

{

"CN": "etcd",

"hosts": [

"192.168.221.70",

"192.168.221.90",

"192.168.221.100"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing"

}

]

}

EOF
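The hosts list must contain the address of every etcd member, and a mistyped address only shows up later as a TLS failure. The following sketch checks that each address is at least a well-formed IPv4 dotted quad before the certificate is generated. The function is_ipv4 is a hypothetical helper written for this article; it does not verify that the host is reachable.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeed only if $1 is a well-formed dotted-quad IPv4 address.
is_ipv4() {
  local ip="$1" octet
  local re='^[0-9]{1,3}\.[0-9]{1,3}\.[0-9]{1,3}\.[0-9]{1,3}$'
  [[ "$ip" =~ $re ]] || return 1
  local IFS=.
  for octet in $ip; do
    # 10# forces base 10 so that octets with leading zeros are not read as octal.
    (( 10#$octet <= 255 )) || return 1
  done
}

# The three etcd node addresses used in this article.
for host in 192.168.221.70 192.168.221.90 192.168.221.100; do
  if is_ipv4 "$host"; then echo "$host ok"; else echo "$host INVALID"; fi
done
```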

#Generate the etcd certificate; this produces server-key.pem and server.pem

[root@master1 ~]# cd /root/k8s/etcd-cert

[root@master1 etcd-cert]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=www server-csr.json | cfssljson -bare server

2021/04/10 22:57:45 [INFO] generate received request

2021/04/10 22:57:45 [INFO] received CSR

2021/04/10 22:57:45 [INFO] generating key: rsa-2048

2021/04/10 22:57:45 [INFO] encoded CSR

2021/04/10 22:57:45 [INFO] signed certificate with serial number 219342381748991728851284184667512408378336584859

2021/04/10 22:57:45 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for

websites. For more information see the Baseline Requirements for the Issuance and Management

of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);

specifically, section 10.2.3 ("Information Requirements").

[root@master1 etcd-cert]# ls

ca-config.json ca-key.pem server.csr server.pem

ca.csr ca.pem server-csr.json

ca-csr.json etcd-cert.sh server-key.pem

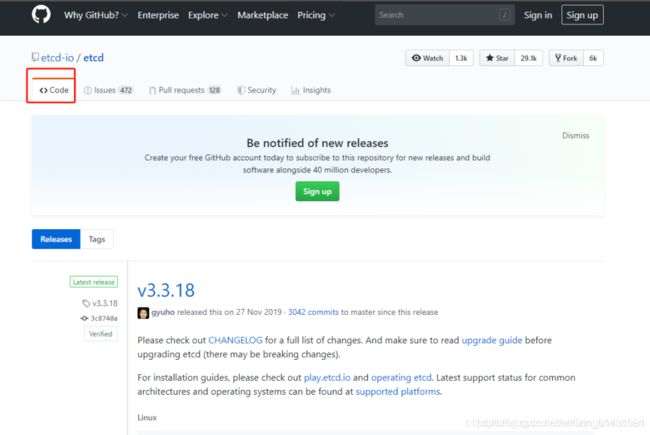

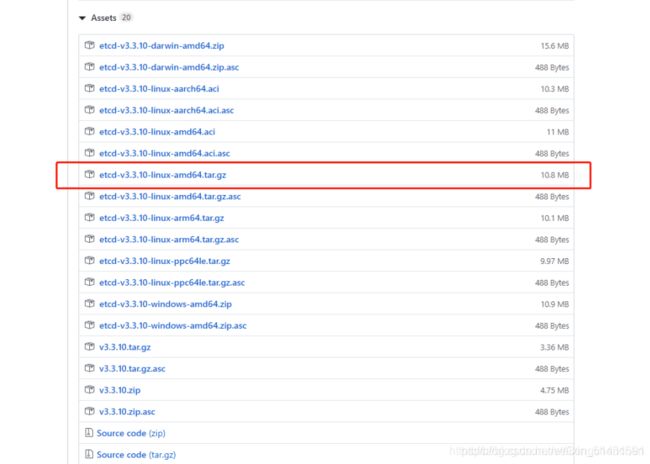

#etcd binary releases

https://github.com/etcd-io/etcd/releases

#Upload the three archives (etcd, flannel, and kubernetes-server) to /root/k8s

[root@master1 etcd-cert]# cd ~/k8s/

[root@master1 k8s]# ls

cfssl.sh etcd.sh flannel-v0.10.0-linux-amd64.tar.gz

etcd-cert etcd-v3.3.10-linux-amd64.tar.gz kubernetes-server-linux-amd64.tar.gz

[root@master1 k8s]# tar zxvf etcd-v3.3.10-linux-amd64.tar.gz

[root@master1 k8s]# ls etcd-v3.3.10-linux-amd64

Documentation etcdctl README.md

etcd README-etcdctl.md READMEv2-etcdctl.md

[root@master1 k8s]# mkdir -p /opt/etcd/{cfg,bin,ssl} #cfg: configuration files, bin: binaries, ssl: certificates

[root@master1 k8s]# mv etcd-v3.3.10-linux-amd64/etcd etcd-v3.3.10-linux-amd64/etcdctl /opt/etcd/bin/

[root@master1 k8s]# ls /opt/etcd/bin/

etcd etcdctl

#Copy the certificates

[root@master1 k8s]# cp etcd-cert/*.pem /opt/etcd/ssl/

[root@master1 k8s]# ls /opt/etcd/ssl/

ca-key.pem ca.pem server-key.pem server.pem

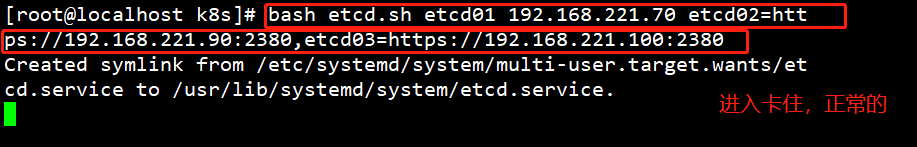

#The script blocks at this point, waiting for the other nodes to join

[root@master1 k8s]# bash etcd.sh etcd01 192.168.221.70 etcd02=https://192.168.221.90:2380,etcd03=https://192.168.221.100:2380

#Open a second session on master1; the etcd process is already running

[root@localhost ~]# ps -ef | grep etcd

#Copy the certificates (the whole /opt/etcd directory) to the other nodes

[root@master1 k8s]# scp -r /opt/etcd/ root@192.168.221.90:/opt/

[root@master1 k8s]# scp -r /opt/etcd/ root@192.168.221.100:/opt/

#Copy the systemd unit file to the other nodes

[root@master1 ~]# scp /usr/lib/systemd/system/etcd.service root@192.168.221.90:/usr/lib/systemd/system/

[root@master1 ~]# scp /usr/lib/systemd/system/etcd.service root@192.168.221.100:/usr/lib/systemd/system/

#The configuration must be edited on both node1 and node2

###Edit on node1

[root@node1 system]# cd /opt/etcd/cfg/

[root@node1 cfg]# vim etcd

#[Member]

ETCD_NAME="etcd02"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://192.168.221.90:2380"

ETCD_LISTEN_CLIENT_URLS="https://192.168.221.90:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.221.90:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://192.168.221.90:2379"

ETCD_INITIAL_CLUSTER="etcd01=https://192.168.221.70:2380,etcd02=https://192.168.221.90:2380,etcd03=https://192.168.221.100:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

###Edit on node2

[root@node2 system]# cd /opt/etcd/cfg/

[root@node2 cfg]# vim etcd

#[Member]

ETCD_NAME="etcd03"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://192.168.221.100:2380"

ETCD_LISTEN_CLIENT_URLS="https://192.168.221.100:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.221.100:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://192.168.221.100:2379"

ETCD_INITIAL_CLUSTER="etcd01=https://192.168.221.70:2380,etcd02=https://192.168.221.90:2380,etcd03=https://192.168.221.100:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"
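Only the member name and the member's own IP differ between the two node files, so hand-editing them invites typos. As an illustration, the sketch below renders the file from three arguments. The function render_etcd_cfg is a hypothetical helper, not part of etcd or of the etcd.sh script used above, and it only prints to standard output.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the /opt/etcd/cfg/etcd contents for one member.
#   $1 = member name, $2 = member IP, $3 = full ETCD_INITIAL_CLUSTER string
render_etcd_cfg() {
  local name="$1" ip="$2" cluster="$3"
  cat <<EOF
#[Member]
ETCD_NAME="${name}"
ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
ETCD_LISTEN_PEER_URLS="https://${ip}:2380"
ETCD_LISTEN_CLIENT_URLS="https://${ip}:2379"
#[Clustering]
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://${ip}:2380"
ETCD_ADVERTISE_CLIENT_URLS="https://${ip}:2379"
ETCD_INITIAL_CLUSTER="${cluster}"
ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
ETCD_INITIAL_CLUSTER_STATE="new"
EOF
}

# The cluster string is identical on every member.
CLUSTER="etcd01=https://192.168.221.70:2380,etcd02=https://192.168.221.90:2380,etcd03=https://192.168.221.100:2380"
render_etcd_cfg etcd03 192.168.221.100 "$CLUSTER"
```

Redirecting the output to /opt/etcd/cfg/etcd on the matching node would replace the manual edit.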

#Start the cluster

#First, start etcd on master1

[root@master1 ~]# cd /root/k8s/

[root@master1 k8s]# bash etcd.sh etcd01 192.168.221.70 etcd02=https://192.168.221.90:2380,etcd03=https://192.168.221.100:2380

#Then start etcd on node1 and node2

[root@node1 cfg]# systemctl start etcd.service

[root@node2 cfg]# systemctl start etcd.service

#Check the cluster health from master1

[root@master1 ~]# cd /root/k8s/etcd-cert/

[root@master1 etcd-cert]# /opt/etcd/bin/etcdctl --ca-file=ca.pem --cert-file=server.pem --key-file=server-key.pem --endpoints="https://192.168.221.70:2379,https://192.168.221.90:2379,https://192.168.221.100:2379" cluster-health

member 995575c0c650e89b is healthy: got healthy result from https://192.168.221.70:2379

member 9abd0b70bb7a8d9a is healthy: got healthy result from https://192.168.221.90:2379

member acca2e49c9c76117 is healthy: got healthy result from https://192.168.221.100:2379

cluster is healthy
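When this check is scripted rather than read by eye, it helps to confirm that the expected number of members report healthy. The sketch below parses text in the format shown above from standard input. The function all_members_healthy is a hypothetical helper, and the sample input is copied from this article rather than produced by a live cluster.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read cluster-health output on stdin and succeed only
# if the expected number of members report healthy.
#   $1 = expected member count
all_members_healthy() {
  local expected="$1" healthy
  # grep -c exits non-zero when nothing matches, hence the "|| true".
  healthy=$(grep -c '^member .* is healthy' || true)
  [ "$healthy" -eq "$expected" ]
}

# Sample input copied from the output above.
sample='member 995575c0c650e89b is healthy: got healthy result from https://192.168.221.70:2379
member 9abd0b70bb7a8d9a is healthy: got healthy result from https://192.168.221.90:2379
member acca2e49c9c76117 is healthy: got healthy result from https://192.168.221.100:2379
cluster is healthy'

if printf '%s\n' "$sample" | all_members_healthy 3; then
  echo "all 3 members healthy"
else
  echo "cluster degraded"
fi
```

In practice the etcdctl command above would be piped into the function in place of the sample text.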

VI. Installing Docker on the Nodes (node1 first, then node2)

Deploy the Docker engine on every node.

See the Docker installation script for details.

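VII. Flannel Container Network Deployment

Overlay network: a virtual network layered on top of the underlying physical network. Hosts in the overlay are connected through virtual links (tunnels).

VXLAN: encapsulates the original frame in a UDP packet, using the IP and MAC addresses of the underlying network as the outer header, and transmits it over the Ethernet layer-2 link. At the destination, the tunnel endpoint decapsulates the packet and delivers the data to the target address.

Flannel: one implementation of an overlay network. It likewise wraps the source packet inside another network packet for routing, forwarding, and communication. It currently supports several forwarding backends, including UDP, VXLAN, AWS VPC, and GCE routes.

1. VXLAN network topology

2. Communication flow between containers on different nodes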

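The physical interface MTU is 1500 bytes. The outer IP header takes 20 bytes, leaving at most 1480 bytes for the layers below it, and the remaining encapsulation headers that carry the pod traffic (UDP 8, VXLAN 8, inner Ethernet 14) take another 30 bytes, so the inner MTU is set to 1450.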
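The 1450 figure follows from the standard VXLAN encapsulation overhead on an IPv4 network. The sketch below spells out the arithmetic. The header sizes are the usual values for VXLAN over IPv4, and vxlan_inner_mtu is a name chosen for this illustration.

```shell
#!/usr/bin/env bash
# VXLAN encapsulation overhead in bytes (IPv4 underlay).
OUTER_IP=20      # outer IPv4 header
OUTER_UDP=8      # outer UDP header
VXLAN_HDR=8      # VXLAN header
INNER_ETH=14     # inner Ethernet header carried inside the tunnel

# Hypothetical helper: inner MTU for a given physical MTU.
vxlan_inner_mtu() {
  echo $(( $1 - OUTER_IP - OUTER_UDP - VXLAN_HDR - INNER_ETH ))
}

vxlan_inner_mtu 1500
```

The result matches the mtu 1450 that flannel assigns to the flannel.1 and container interfaces later in this article.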

VIII. Flannel Network Configuration

1. Write the allocated subnet range into etcd for flannel to use
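The key written in this step holds a small JSON document that tells flannel which address range to carve per-node subnets from and which backend to use. The sketch below builds that value and rejects a malformed network before it reaches etcd. The function flannel_config is a hypothetical helper, and its CIDR check only validates the shape of the string, not the numeric ranges.

```shell
#!/usr/bin/env bash
# Hypothetical helper: build the value stored at /coreos.com/network/config.
#   $1 = pod network in CIDR form, $2 = backend type
flannel_config() {
  local network="$1" backend="$2"
  local re='^([0-9]{1,3}\.){3}[0-9]{1,3}/[0-9]{1,2}$'
  # Reject anything that does not look like a.b.c.d/nn.
  if ! [[ "$network" =~ $re ]]; then
    echo "invalid CIDR: $network" >&2
    return 1
  fi
  printf '{ "Network": "%s", "Backend": {"Type": "%s"}}\n' "$network" "$backend"
}

flannel_config 172.17.0.0/16 vxlan
```

The printed string is the same value that the etcdctl set command in this section stores.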

#On the master

[root@localhost etcd-cert]# /opt/etcd/bin/etcdctl --ca-file=ca.pem --cert-file=server.pem --key-file=server-key.pem --endpoints="https://192.168.221.70:2379,https://192.168.221.90:2379,https://192.168.221.100:2379" set /coreos.com/network/config '{ "Network": "172.17.0.0/16", "Backend": {"Type": "vxlan"}}'

{ "Network": "172.17.0.0/16", "Backend": {"Type": "vxlan"}}

#Read back the value that was written

[root@localhost etcd-cert]# /opt/etcd/bin/etcdctl --ca-file=ca.pem --cert-file=server.pem --key-file=server-key.pem --endpoints="https://192.168.221.70:2379,https://192.168.221.90:2379,https://192.168.221.100:2379" get /coreos.com/network/config

{ "Network": "172.17.0.0/16", "Backend": {"Type": "vxlan"}}

#Copy the archive to every node (flannel only needs to be deployed on the nodes)

[root@localhost k8s]# scp flannel-v0.10.0-linux-amd64.tar.gz root@192.168.221.90:/root

[root@localhost k8s]# scp flannel-v0.10.0-linux-amd64.tar.gz root@192.168.221.100:/root

#On every node: extract the archive

[root@localhost ~]# tar zxvf flannel-v0.10.0-linux-amd64.tar.gz

flanneld

mk-docker-opts.sh

README.md

#Create the Kubernetes working directories

[root@localhost ~]# mkdir -p /opt/kubernetes/{cfg,bin,ssl}

[root@localhost ~]# mv mk-docker-opts.sh flanneld /opt/kubernetes/bin/

systemctl daemon-reload

systemctl enable flanneld

systemctl restart flanneld

#Enable the flannel network

[root@localhost ~]# bash flannel.sh https://192.168.221.70:2379,https://192.168.221.90:2379,https://192.168.221.100:2379

Created symlink from /etc/systemd/system/multi-user.target.wants/flanneld.service to /usr/lib/systemd/system/flanneld.service.

2. Configure Docker to connect to flannel

[root@localhost ~]# vim /usr/lib/systemd/system/docker.service

[Service]

Type=notify

# the default is not to use systemd for cgroups because the delegate issues still

# exists and systemd currently does not support the cgroup feature set required

# for containers run by docker

EnvironmentFile=/run/flannel/subnet.env

ExecStart=/usr/bin/dockerd $DOCKER_NETWORK_OPTIONS -H fd:// --containerd=/run/containerd/containerd.sock

ExecReload=/bin/kill -s HUP $MAINPID

TimeoutSec=0

RestartSec=2

Restart=always

[root@localhost ~]# cat /run/flannel/subnet.env

DOCKER_OPT_BIP="--bip=172.17.42.1/24"

DOCKER_OPT_IPMASQ="--ip-masq=false"

DOCKER_OPT_MTU="--mtu=1450"

//Note: bip sets the subnet that Docker uses at startup

DOCKER_NETWORK_OPTIONS=" --bip=172.17.42.1/24 --ip-masq=false --mtu=1450"
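To see what the modified ExecStart line ends up running, the sketch below loads the same variables and prints the expanded dockerd command line. This is only an illustration: systemd reads the EnvironmentFile itself, whereas here a temporary copy with the values shown above is sourced in a shell, which works because the file uses shell-compatible KEY="value" lines.

```shell
#!/usr/bin/env bash
# Write a sample subnet.env to a temporary file (values copied from above).
env_file=$(mktemp)
cat > "$env_file" <<'EOF'
DOCKER_OPT_BIP="--bip=172.17.42.1/24"
DOCKER_OPT_IPMASQ="--ip-masq=false"
DOCKER_OPT_MTU="--mtu=1450"
DOCKER_NETWORK_OPTIONS=" --bip=172.17.42.1/24 --ip-masq=false --mtu=1450"
EOF

# Load the variables into the current shell, then clean up the temporary file.
. "$env_file"
rm -f "$env_file"

# Print the command line that the ExecStart line would expand to.
# $DOCKER_NETWORK_OPTIONS is left unquoted on purpose so it splits into flags.
echo /usr/bin/dockerd $DOCKER_NETWORK_OPTIONS -H fd:// --containerd=/run/containerd/containerd.sock
```

If $DOCKER_NETWORK_OPTIONS is missing from ExecStart, Docker keeps its default bridge subnet and the containers do not join the flannel network.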

#Restart the Docker service

[root@localhost ~]# systemctl daemon-reload

[root@localhost ~]# systemctl restart docker

#Inspect the flannel network interface

[root@localhost ~]# ifconfig

flannel.1: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1450

inet 172.17.84.0 netmask 255.255.255.255 broadcast 0.0.0.0

inet6 fe80::fc7c:e1ff:fe1d:224 prefixlen 64 scopeid 0x20<link>

ether fe:7c:e1:1d:02:24 txqueuelen 0 (Ethernet)

RX packets 0 bytes 0 (0.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 0 bytes 0 (0.0 B)

TX errors 0 dropped 26 overruns 0 carrier 0 collisions 0

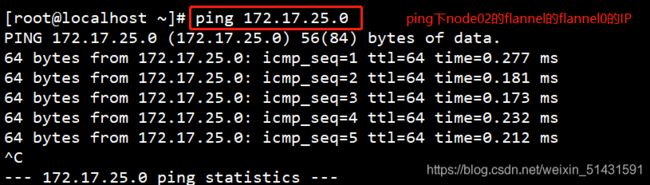

//Test: a successful ping to the docker0 interface on the other node shows that flannel is routing traffic

[root@localhost ~]# docker run -it centos:7 /bin/bash

[root@5f9a65565b53 /]# yum install net-tools -y

[root@5f9a65565b53 /]# ifconfig

eth0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1450

inet 172.17.84.2 netmask 255.255.255.0 broadcast 172.17.84.255

ether 02:42:ac:11:54:02 txqueuelen 0 (Ethernet)

RX packets 18192 bytes 13930229 (13.2 MiB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 6179 bytes 337037 (329.1 KiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

lo: flags=73<UP,LOOPBACK,RUNNING> mtu 65536

inet 127.0.0.1 netmask 255.0.0.0

loop txqueuelen 1 (Local Loopback)

RX packets 0 bytes 0 (0.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 0 bytes 0 (0.0 B)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

//Test again: ping between the centos:7 containers running on the two nodes