[MEC Notes: Overview] MEC

Reference source: https://blog.csdn.net/weixin_43502661/article/details/89228324

Paper: A Survey on Mobile Edge Computing: The Communication Perspective

- 1 Platform
  - MEC vs. MCC
- 2 Models
  - Computation task models
    - 1 Task Model for Binary Offloading
    - 2 Task Models for Partial Offloading
  - Communication models
  - Computation models for mobile-device energy consumption
  - Computation models for MEC-server energy consumption
    - Application latency
    - Energy consumption
  - Summary and insights
- 3 Resource management in MEC systems
  - Single-User MEC Systems
    - Deterministic Task Model With Partial Offloading
    - Stochastic Task Model
    - Summary and Insight
  - Multiuser MEC Systems
    - Joint Radio-and-Computational Resource Allocation
    - MEC Server Scheduling
    - Multiuser Cooperative Edge Computing
    - Summary and Insight
  - MEC Systems With Heterogeneous Servers
    - Server Selection
    - Server Cooperation
    - Computation Migration
    - Summary and Insight
  - Challenges
- 4 Technical issues, challenges, and future research directions
  - MEC system deployment
    - 1 Site Selection for MEC Servers
    - 2 MEC Network Architecture
    - 3 Server Density Planning
  - Cache-Enabled MEC
    - 1 Service Caching for MEC Resource Allocation
    - 2 Data Caching for MEC Data Analytics
  - Mobility Management (users') for MEC
    - 1) Mobility-Aware Online Prefetching
    - 2) Mobility-Aware Offloading Using D2D
    - 3) Mobility-Aware Fault-Tolerant MEC
    - 4) Mobility-Aware Server Scheduling
  - Green MEC
    - 1) Dynamic Right-Sizing for Energy-Proportional MEC
    - 2) Geographical Load Balancing (GLB) for MEC
    - 3) Renewable Energy-Powered MEC Systems
  - Security and Privacy Issues in MEC
    - 1) Trust and Authentication Mechanisms
    - 2) Networking Security
    - 3) Secure and Private Computation
- 5 Standardization Efforts and Use Scenarios of MEC
  - Referenced MEC Server Framework
  - Technical Challenges and Requirements
  - Use Scenarios
  - MEC in 5G Standardizations
    - 1) Functionality Supports Offered by 5G Networks
    - 2) Innovative Features in 5G to Facilitate MEC

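1 Platform

MEC is implemented on a virtualized platform that builds on recent advances in NFV, ICN, and SDN.

| Abbreviation | Full name | Explanation |
|---|---|---|
| NFV | Network functions virtualization | Creates virtual machines on edge devices to provide computing services to mobile devices, so that an edge device can run different tasks at the same time |
| ICN | Information-centric networks | Provides an end-to-end service recognition paradigm for MEC, shifting from a host-centric to an information-centric model to enable context-aware computing |
| SDN | Software-defined networks | Lets MEC administrators manage services through function abstraction, enabling scalable and dynamic computing |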

MEC vs. MCC

Advantages

MEC has the advantages of

- achieving lower latency (it is closer to users)

- saving energy for mobile devices (through computation offloading)

- supporting context-aware computing (providing related services based on the information created by end users)

- enhancing privacy and security for mobile applications (it avoids uploading restricted data and material to remote data centers)

2 Models

Computation task models

1 Task Model for Binary Offloading

Definition: a task that is highly integrated or relatively simple and cannot be partitioned must be executed as a whole, either locally on the mobile device or offloaded to the MEC server. This is called binary offloading.

Details:

- Model 1

Notation:

$A(L, \tau_d, X)$

$L$ (in bits): input-data size

$\tau_d$ (in seconds): the completion deadline (hard deadline)

$X$ (in CPU cycles per bit): computation workload/intensity

These parameters are related to the nature of the applications and can be estimated through task profilers.

In particular, this model can also be generalized to handle the soft deadline requirement, which allows a small portion of tasks to be completed after $\tau_d$.

- Model 2

$A(L, \tau_d, X)$, where $\tau_d$ is the completion deadline (soft deadline)

$X$ is a random variable; define $x_0$ as a positive integer such that

$$\Pr(X > x_0) \le \rho, \quad 0 < \rho \ll 1$$

$$\Pr(LX > W_\rho) \le \rho, \quad W_\rho = L x_0$$

Then, given the $L$-bit task-input data, $W_\rho$ upper bounds the number of required CPU cycles almost surely.
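The soft-deadline bound can be illustrated numerically. The sketch below is only an illustration under stated assumptions: it estimates $x_0$ as an empirical quantile of hypothetical workload samples and returns $W_\rho = L x_0$. The function name `workload_bound` and the sample values are made up for this example and do not come from the paper.

```python
import math

def workload_bound(samples, L, rho):
    """Return (x0, W_rho) such that the empirical Pr(X > x0) <= rho.

    samples: observed values of X in CPU cycles per bit (hypothetical data)
    L:       task input size in bits
    rho:     allowed violation probability, 0 < rho < 1
    """
    if not samples or not (0 < rho < 1):
        raise ValueError("need at least one sample and 0 < rho < 1")
    ordered = sorted(samples)
    n = len(ordered)
    # Pick the order statistic that leaves at most rho * n samples strictly above it.
    k = max(0, math.ceil(n * (1 - rho)) - 1)
    x0 = ordered[k]
    return x0, L * x0

# With four samples and rho = 0.25, at most one sample may exceed x0.
x0, w_rho = workload_bound([4, 1, 3, 2], L=1000, rho=0.25)
print(x0, w_rho)
```

A task profiler would supply the samples in practice; a smaller $\rho$ pushes $x_0$, and therefore the cycle budget $W_\rho$, upward.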

2 Task Models for Partial Offloading

- Model 1: data-partition model

Applicable scenario: the task-input bits are bit-wise independent and can be arbitrarily divided into different groups and executed by different entities in MEC systems.

- Model 2: task-call graph

Dependencies must be considered: the dependencies among procedures or components cannot be ignored, so the model has to capture the dependencies among different functions so that they execute correctly.

Notation:

$G(V, E)$

the set of vertices $V$: different procedures in the application

the set of edges $E$: call dependencies

Three typical dependency models

(Figure omitted: Fig. 4(a)–4(c))

Node 1 and node N in Fig. 4(a)–4(c) are components that must be executed locally, because they represent the steps of collecting the I/O data and displaying the computation results, respectively.

$c$: the required computation workloads and resources of each procedure can also be specified in the vertices

$w$: the amount of input/output data of each procedure can be characterized by imposing weights on the edges
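A task-call graph with vertex weights $c$ and edge weights $w$ maps naturally onto two dictionaries. The following is a minimal sketch with a hypothetical four-procedure application (all numbers are invented). It computes the workload moved to the server and the data that must cross the device/server boundary for a given partition.

```python
# Hypothetical application: procedures 1 and 4 are the I/O and display steps
# that must stay on the device, so their offloadable workload is set to 0.
workload = {1: 0, 2: 40, 3: 60, 4: 0}                  # c: CPU cycles per vertex
edges = {(1, 2): 8, (1, 3): 5, (2, 4): 2, (3, 4): 3}   # w: data exchanged per edge

def cut_cost(edges, offloaded):
    """Total edge weight crossing the boundary between device and server."""
    return sum(w for (u, v), w in edges.items()
               if (u in offloaded) != (v in offloaded))

def offloaded_workload(workload, offloaded):
    """Total CPU cycles executed at the server for the given partition."""
    return sum(workload[v] for v in offloaded)

for partition in ({2, 3}, {3}):
    print(sorted(partition),
          offloaded_workload(workload, partition),
          cut_cost(edges, partition))
```

Offloading more procedures shifts more cycles to the server but can also increase the data transferred, which is the tradeoff that partitioning algorithms try to balance.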

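Communication models

Design focus: reducing communication latency by designing a highly efficient air interface is the main design focus.

Communicating parties: in MEC systems, communications are typically between APs and mobile devices, with the possibility of direct D2D communications.

In an MEC system, a terminal device that cannot communicate with the MEC server directly (for lack of a wireless interface) can still reach it through D2D communication and the APs (including BSs and public WiFi routers). The wireless APs not only provide the wireless interface for the MEC servers, but also give access to remote data centers through backhaul links, which further helps an MEC server offload computation tasks to other MEC servers and to large-scale cloud data centers. In addition, D2D communication enables peer-to-peer resource sharing and computation-load balancing within a cluster of mobile devices.

Key wireless communication technologies that may be used in MEC systems:

WiFi and LTE (or 5G) are two primary technologies enabling the access to MEC systems.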

Computation models for mobile-device energy consumption

- (Execution latency) The execution latency for task $A(L, \tau, X)$ can be calculated as:

$$t_m = \frac{LX}{f_m} \tag{1}$$

$f_m$ is bounded by a maximum value, $f_{CPU}^{max}$.

- (Energy consumption) The energy consumption can be derived as:

$$E_m = \kappa L X f_m^2 \tag{2}$$

The energy consumption of a CPU cycle is given by $\kappa f_m^2$, where $\kappa$ is a constant related to the hardware architecture.

- (Other energy consumption) other hardware components
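Equations (1) and (2) can be evaluated directly. The sketch below is a minimal illustration; the function name `local_execution` and the numeric values (including the value of $\kappa$) are assumptions chosen only to make the arithmetic easy to follow.

```python
def local_execution(L, X, f_m, kappa, f_max):
    """Return (t_m, E_m) for local execution, following equations (1) and (2).

    L:     input size in bits
    X:     workload in CPU cycles per bit
    f_m:   chosen CPU-cycle frequency in Hz, must satisfy 0 < f_m <= f_max
    kappa: hardware-dependent constant (illustrative value below)
    """
    if not 0 < f_m <= f_max:
        raise ValueError("f_m must lie in (0, f_max]")
    t_m = L * X / f_m                  # equation (1)
    E_m = kappa * L * X * f_m ** 2     # equation (2)
    return t_m, E_m

# Halving the frequency doubles the latency and cuts the energy to a quarter.
fast = local_execution(L=1000, X=100, f_m=1e6, kappa=1e-12, f_max=2e6)
slow = local_execution(L=1000, X=100, f_m=5e5, kappa=1e-12, f_max=2e6)
print(fast, slow)
```

This quadratic dependence of energy on frequency is what makes dynamic frequency scaling attractive: running as slowly as the deadline allows minimizes $E_m$.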

Computation models for MEC-server energy consumption

Service latency must be considered: because edge servers have relatively limited computation resources, the design of an MEC system has to account for service latency.

Application latency

Latency of latency-sensitive applications

Consider the exact server-computation latency for latency-sensitive applications.

Specifically, assume the MEC server allocates different VMs for different mobile devices, allowing independent computation.

Server execution time:

The server execution time is denoted by $t_{s,k}$:

$$t_{s,k} = \frac{w_k}{f_{s,k}}$$

$w_k$: the number of required CPU cycles for processing the offloaded computation workload

$f_{s,k}$: the server CPU-cycle frequency allocated to mobile device $k$

Note

The server scheduling queuing delay should be accounted for.

Total server-computation latency (including queuing delay)

Without loss of generality, denote $k$ as the processing order for a mobile device and name it mobile $k$.

The total server-computation latency including the queuing delay for device $k$ is denoted by $T_{s,k}$:

$$T_{s,k} = \sum_{i \le k} t_{s,i} \tag{3}$$

Latency-insensitive applications

For latency-insensitive applications, the average server-computation time is derived from a stochastic model. For example, the task arrivals and service time are modeled by the Poisson and exponential processes, respectively.
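As one concrete instance of such a stochastic model, the sketch below assumes a single server with a first-come-first-served queue (an M/M/1 queue), which the notes do not specify. For that case the mean time a task spends in the system, waiting plus service, is $1/(\mu - \lambda)$ for arrival rate $\lambda$ and service rate $\mu$.

```python
def mm1_mean_latency(arrival_rate, service_rate):
    """Mean time in system for an M/M/1 queue (assumed model, not from the paper).

    arrival_rate: Poisson task arrival rate (tasks per second)
    service_rate: exponential service rate (tasks per second)
    """
    if arrival_rate < 0 or service_rate <= arrival_rate:
        raise ValueError("queue is unstable unless 0 <= arrival_rate < service_rate")
    return 1.0 / (service_rate - arrival_rate)

# 8 tasks/s arriving at a server that completes 10 tasks/s on average.
print(mm1_mean_latency(8, 10))
```

As the arrival rate approaches the service rate, the mean latency grows without bound, which is why a lightly provisioned edge server is sensitive to load.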

Computation latency caused by multiple VMs

Multiple VMs sharing the same physical machine introduce I/O interference among the VMs. The resulting latency is denoted by $T'_{s,k}$:

$$T'_{s,k} = T_{s,k}(1+\epsilon)^n$$

$\epsilon$: the performance degradation factor, expressed as the percentage increase of the latency; $n$ is the number of VMs sharing the machine
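The server-side latency expressions can be combined in a few lines. This is a sketch under the assumptions of the formulas above: tasks are processed in index order, and `eps` and `n` play the roles of $\epsilon$ and $n$. The function name and the numbers are illustrative only.

```python
from itertools import accumulate

def server_latencies(w, f, eps=0.0, n=0):
    """Per-device server latency including queuing delay and VM interference.

    w:   required CPU cycles per device, in processing order
    f:   CPU-cycle frequency allocated to each device
    eps: performance degradation factor per VM
    n:   number of VMs sharing the physical machine
    """
    t = [wk / fk for wk, fk in zip(w, f)]   # t_{s,k} = w_k / f_{s,k}
    T = list(accumulate(t))                 # equation (3): sum over i <= k
    return [Tk * (1 + eps) ** n for Tk in T]

print(server_latencies([2, 4, 6], [2, 2, 2]))
print(server_latencies([2, 4, 6], [2, 2, 2], eps=0.5, n=2))
```

The last device in the processing order absorbs the execution time of every device ahead of it, so the ordering itself becomes a scheduling decision.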

Energy consumption

- Model 1

Based on the DVFS (dynamic frequency and voltage scaling) technique.

Consider an MEC server that handles $K$ computation tasks, where the $k$-th task is allocated $w_k$ CPU cycles with CPU-cycle frequency $f_{s,k}$.

Hence, the total energy consumed by the CPU at the MEC server, denoted by $E_s$, is

$$E_s = \sum_{k=1}^{K} \kappa w_k f_{s,k}^2 \tag{4}$$

- Model 2

Based on the CPU utilization ratio.

[89] X. Fan, W.-D. Weber, and L. A. Barroso, “Power provisioning for a warehouse-sized computer,” in Proc. 34th ACM Annu. Int. Symp. Comput. Archit. (ISCA), San Diego, CA, USA, Jun. 2007, pp. 13–23.

[90] C.-C. Lin, P. Liu, and J.-J. Wu, “Energy-efficient virtual machine provision algorithms for cloud systems,” in Proc. IEEE Utility Cloud Comput. (UCC), Melbourne, VIC, Australia, Dec. 2011, pp. 81–88.

$$E_s = \alpha E_{max} + (1-\alpha) E_{max} u \tag{5}$$

$E_{max}$ is the energy consumption for a fully-utilized server

$\alpha$ is the fraction of the idle energy consumption

$u$ denotes the CPU utilization ratio

Implication of the model

This model suggests that energy-efficient MEC should allow servers to be switched into the sleep mode under light load, and should consolidate computation loads into fewer active servers.
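Both server energy models are simple enough to compare side by side. The sketch below implements equations (4) and (5) as written. The function names and sample values are assumptions for illustration.

```python
def dvfs_energy(kappa, w, f):
    """Equation (4): CPU energy for K tasks under DVFS."""
    return sum(kappa * wk * fk ** 2 for wk, fk in zip(w, f))

def utilization_energy(e_max, alpha, u):
    """Equation (5): server energy as a function of CPU utilization u."""
    if not (0 <= alpha <= 1 and 0 <= u <= 1):
        raise ValueError("alpha and u must lie in [0, 1]")
    return alpha * e_max + (1 - alpha) * e_max * u

# An idle server (u = 0) still consumes alpha * E_max.
print(utilization_energy(100.0, 0.5, 0.0))
# Two servers at 50% load versus one server at 100% load and one asleep.
print(2 * utilization_energy(100.0, 0.5, 0.5), utilization_energy(100.0, 0.5, 1.0))
```

In this toy setting two half-loaded servers consume 150 units while one fully loaded server consumes 100, which is the consolidation argument in numbers, assuming a sleeping server draws negligible energy.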

Summary and insights

- Leverage and integrate techniques from both areas of wireless communications and mobile computing.

- Different computation task models suit different MEC applications. Moreover, for a specific application, the task model also depends on the offloading scenario, e.g.:

  the data-partition model --> offloading input data

  the task-call graph --> each task component can be offloaded as a whole

- MEC has the potential to reduce the transmission energy consumption due to shorter distances and advanced wireless communication techniques.

- Dynamic CPU-cycle frequency control is a key technique for both mobile devices and MEC servers, and should approach the optimal tradeoff between computation latency and energy consumption.

- Load-balancing and intelligent scheduling policies can be designed to reduce the total computation latency of MEC servers with small computation capacity or heavy computation loads.

3 Resource management in MEC systems

Classification of resource management techniques for MEC

Single-User MEC Systems

Decision model 1: Deterministic Task Model With Binary Offloading

Consider the mentioned single-user MEC system, where the binary offloading decision is whether a particular task should be offloaded for edge execution or computed locally.

From the energy-consumption perspective

Research on this problem dates back to studies of conventional cloud computing systems, where the communication links were typically assumed to have a fixed rate $B$.

When offloading to the cloud improves latency

Offloading the computation to the cloud server can improve the latency performance only when

$$\frac{w}{f_m} > \frac{d}{B} + \frac{w}{f_s} \tag{6}$$

where:

$w$: the amount of computation (in CPU cycles) for a task

$f_m$: the CPU speed of the mobile device

$f_s$: the CPU speed at the cloud server

$d$: the input data size

- Applicable applications

This holds for applications that require heavy computation and have a small amount of input data, or when the cloud server is fast and the transmission rate is sufficiently high.

Extension to offloading to an edge server

When offloading to an edge server saves energy

Offloading the task could help save mobile energy when

$$p_m \times \frac{w}{f_m} > p_t \times \frac{d}{B} + p_i \times \frac{w}{f_s} \tag{7}$$

where:

$p_m$: the CPU power consumption at the mobile device

$p_t$: the transmission power

$p_i$: the power consumption at the device when the task is running at the server

- Applicable applications

This holds for applications with heavy computation and light communication, which should therefore be offloaded.
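Conditions (6) and (7) translate into two one-line predicates. The sketch below uses the same symbols as the formulas. The function names and the numeric scenario are illustrative assumptions.

```python
def offloading_reduces_latency(w, d, f_m, f_s, B):
    """Condition (6): local execution time exceeds transfer plus server time."""
    return w / f_m > d / B + w / f_s

def offloading_saves_energy(w, d, f_m, f_s, B, p_m, p_t, p_i):
    """Condition (7): local CPU energy exceeds transmit plus waiting energy."""
    return p_m * w / f_m > p_t * d / B + p_i * w / f_s

# Heavy computation (1e9 cycles) with 1e6 bits of input over a 1e7 bit/s link.
print(offloading_reduces_latency(w=1e9, d=1e6, f_m=1e9, f_s=1e10, B=1e7))
# The same task with 100x more input data is better computed locally.
print(offloading_reduces_latency(w=1e9, d=1e8, f_m=1e9, f_s=1e10, B=1e7))
```

The two calls differ only in the input size `d`, which shows how the ratio of computation to communication drives the binary decision.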

From the wireless-communication perspective

Energy consumption determines the offloading decision

The offloading decision was determined by the computation mode (either offloading or local computing) that incurs less energy consumption.

CPU-cycle frequencies should adapt to the transferred power

The optimal CPU-cycle frequencies for local computing and time division for offloading should be adaptive to the transferred power.

- How is wireless transmission related to power?

In wireless communications, the data rate is time-varying.

The data rates for wireless communications are not constant; they change with the time-varying channel gains and also depend on the transmission power. This calls for the design of control policies for power adaptation and data scheduling to streamline the offloading process.

Shannon formula

It reveals the relationship between energy and rate. Task offloading is desirable when the channel power gain is greater than a threshold and the server CPU is fast enough.
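The energy-rate relationship can be made concrete with the Shannon capacity formula $r = B \log_2(1 + p h / N)$. The sketch below assumes a single link with constant channel gain `channel_gain` and noise power `noise_power`. These parameter names and the normalized values are assumptions for illustration, not the notation of the paper.

```python
import math

def shannon_rate(bandwidth_hz, tx_power, channel_gain, noise_power):
    """Achievable rate in bit/s: r = B * log2(1 + p * h / N)."""
    return bandwidth_hz * math.log2(1.0 + tx_power * channel_gain / noise_power)

def tx_energy(bits, duration_s, bandwidth_hz, channel_gain, noise_power):
    """Energy needed to send `bits` within `duration_s` at a constant rate.

    Inverts the rate formula: p = N * (2^(r/B) - 1) / h with r = bits / duration.
    """
    rate = bits / duration_s
    power = noise_power * (2.0 ** (rate / bandwidth_hz) - 1.0) / channel_gain
    return power * duration_s

# Normalized units: sending 2 bits in 1 s versus 2 s over the same channel.
print(tx_energy(2.0, 1.0, 1.0, 1.0, 1.0), tx_energy(2.0, 2.0, 1.0, 1.0, 1.0))
```

Stretching the transmission time lowers the required energy, and a larger channel gain lowers it further, which is consistent with offloading being desirable only above a channel-gain threshold.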

Energy optimization for systems that use wireless communication has been studied in more depth in:

W. Zhang et al., “Energy-optimal mobile cloud computing under stochastic wireless channel,” IEEE Trans. Wireless Commun., vol. 12, no. 9, pp. 4569–4581, Sep. 2013.

Power optimization for local execution

DVFS technique

A convex optimization problem

The optimal CPU-cycle frequencies over the computation duration were derived in closed form by solving the Karush-Kuhn-Tucker (KKT) conditions, suggesting that the processor should speed up as the number of completed CPU cycles increases.

Data scheduling

Under the Gilbert-Elliott channel model, the optimal data transmission scheduling was obtained through dynamic programming (DP) techniques, and the scaling law of the minimum expected energy consumption with respect to the execution deadline was also derived.

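Deterministic Task Model With Partial Offloading

A relatively complex mobile application can be divided into a set of sub-tasks.

The following reference shows how partial offloading further optimizes MEC performance. (This covers the papers from here to the last one in this part.)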

Y. Wang, M. Sheng, X. Wang, L. Wang, and J. Li, “Mobile-edge computing: Partial computation offloading using dynamic voltage scaling,”IEEE Trans. Commun., vol. 64, no. 10, pp. 4268–4282, Oct. 2016.

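- Full granularity in program partitioning is assumed, i.e., the task-input data can be arbitrarily split between local and remote processing.

- Minimizing the mobile energy consumption under a latency constraint requires jointly optimizing the offloading ratio, transmission power, and CPU-cycle frequency.

- Non-convexity: both the energy and the latency minimization problems are non-convex.

- A sub-optimal algorithm is proposed.

The following references use task-call graphs to specify the dependencies among sub-tasks, and code-partitioning schemes to dynamically generate the optimal set of tasks to offload. (This covers the papers from here to the last one in this part.)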

M. Jia, J. Cao, and L. Yang, “Heuristic offloading of concurrent tasks for computation-intensive applications in mobile cloud computing,” in Proc. IEEE Int. Conf. Comput. Commun. (INFOCOM WKSHPS), Toronto, ON, Canada, Apr./May 2014, pp. 352–357.

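- Exploiting load balancing between the mobile device and the MEC server, a heuristic program-partitioning algorithm is proposed to minimize the execution latency.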

Y.-H. Kao, B. Krishnamachari, M.-R. Ra, and F. Bai, “Hermes: Latency optimal task assignment for resource-constrained mobile computing,” in Proc. IEEE Int. Conf. Comput. Commun. (INFOCOM), Hong Kong, Apr./May 2015, pp. 1894–1902.

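- For the latency-minimization problem under a prescribed resource-utilization constraint, a polynomial-time approximate solution with guaranteed performance is proposed.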

S. E. Mahmoodi, R. N. Uma, and K. P. Subbalakshmi, “Optimal joint scheduling and cloud offloading for mobile applications,” IEEE Trans. Cloud Comput., to be published.

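- To maximize the energy savings achieved by computation offloading, the scheduling and cloud-offloading decisions are jointly optimized using integer programming (i.e., programming in which all or some of the variables are restricted to integers).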

W. Zhang, Y. Wen, and D. O. Wu, “Collaborative task execution in mobile cloud computing under a stochastic wireless channel,” IEEE Trans. Wireless Commun., vol. 14, no. 1, pp. 81–93, Jan. 2015.

- For several channel models (the block fading channel, the independent and identically distributed stochastic channel, and the Markovian stochastic channel), the energy minimization problem under a time constraint is formulated as a stochastic shortest-path problem, and the one-climb policy is shown to be optimal.

S. Khalili and O. Simeone, “Inter-layer per-mobile optimization of cloud mobile computing: A message-passing approach,” Trans. Emerg. Telecommun. Technol., vol. 27, no. 6, pp. 814–827, Jun. 2016.

P. D. Lorenzo, S. Barbarossa, and S. Sardellitti, “Joint optimization of radio resources and code partitioning in mobile edge computing.”[Online]. Available: http://arxiv.org/abs/1307.3835v3

S. E. Mahmoodi, K. P. Subbalakshmi, and V. Sagar, “Cloud offloading for multi-radio enabled mobile devices,” in Proc. IEEE Int. Conf.Commun. (ICC), London, U.K., Jun. 2015, pp. 5473–5478.

- Physical-layer parameters (such as the transmission and reception power, constellation size, and the data allocation for different radio interfaces) can be optimized jointly with the program-partitioning scheme.

Stochastic Task Model

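Characteristics: tasks arrive randomly, and tasks that have arrived but have not yet been processed join a task buffer queue.

For such systems, long-term performance is more relevant than the performance of a single deterministic task, and the temporal correlation of the optimal system operations makes the design very challenging.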

D. Huang, P. Wang, and D. Niyato, “A dynamic offloading algorithm for mobile computing,” IEEE Trans. Wireless Commun., vol. 11, no. 6, pp. 1991–1995, Jun. 2012.

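- To minimize the mobile energy consumption while keeping the proportion of executions that miss their deadline below a threshold, this paper proposes a dynamic offloading algorithm based on Lyapunov optimization techniques. The algorithm determines which software components of an application to offload, for a mobile user whose wireless communication rate varies from location to location.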

J. Liu, Y. Mao, J. Zhang, and K. B. Letaief, “Delay-optimal computation task scheduling for mobile-edge computing systems,” in Proc.IEEE Int. Symp. Inf. Theory (ISIT), Barcelona, Spain, Jul. 2016,pp. 1451–1455.

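- Designs a delay-optimal task-scheduling policy based on a Markov decision process (MDP). The policy controls the states of the local processing and transmission units, and the task buffer queue length, according to the channel state.

- Note: the optimal task-scheduling policy significantly outperforms the greedy scheduling policy (in which tasks are scheduled whenever the local CPU or the transmission unit is idle).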

S. E. Mahmoodi, K. P. Subbalakshmi, and V. Sagar, “Cloud offloading for multi-radio enabled mobile devices,” in Proc. IEEE Int. Conf.Commun. (ICC), London, U.K., Jun. 2015, pp. 5473–5478.

D. Huang, P. Wang, and D. Niyato, “A dynamic offloading algorithm for mobile computing,” IEEE Trans. Wireless Commun., vol. 11, no. 6, pp. 1991–1995, Jun. 2012.

J. Liu, Y. Mao, J. Zhang, and K. B. Letaief, “Delay-optimal computation task scheduling for mobile-edge computing systems,” in Proc.IEEE Int. Symp. Inf. Theory (ISIT), Barcelona, Spain, Jul. 2016,pp. 1451–1455.

S. Chen, Y. Wang, and M. Pedram, “A semi-Markovian decision process based control method for offloading tasks from mobile devices to the cloud,” in Proc. IEEE Glob. Commun. Conf. (GLOBECOM),Atlanta, GA, USA, Dec. 2013, pp. 2885–2890.

S.-T. Hong and H. Kim, “QoE-aware computation offloading scheduling to capture energy-latency tradeoff in mobile clouds,” in Proc. IEEE Int. Conf. Sens. Commun. Netw. (SECON), London, U.K., Jun. 2016, pp. 1–9.

- Under a semi-MDP framework, the local CPU frequency, modulation scheme, and data rates are jointly controlled to reduce the average long-term execution cost.

J. Kwak, Y. Kim, J. Lee, and S. Chong, “DREAM: Dynamic resource and task allocation for energy minimization in mobile cloud systems,” IEEE J. Sel. Areas Commun., vol. 33, no. 12, pp. 2510–2523, Dec. 2015.

- Studies the energy-delay tradeoff of MEC systems running heterogeneous types of applications, including the non-offloadable workload, cloud-offloadable workload, and network traffic.

- Proposes a Lyapunov optimization-based algorithm that jointly decides the offloading policy, task allocation, CPU clock speed, and selected network interface.

Z. Jiang and S. Mao, “Energy delay tradeoff in cloud offloading for multi-core mobile devices,” IEEE Access, vol. 3, pp. 2306–2316, 2015.

- Related research on multi-core mobile devices.

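Summary and Insight

Comparison of the research focuses of papers on single-user MEC systems

Binary offloading

- For energy savings, offloading outperforms local computing when the user has a favorable channel or the mobile device has very small computation capacity; beamforming and MIMO techniques also reduce the energy consumed by offloading.

For latency reduction, offloading outperforms local computing when the user has a large bandwidth and the MEC server has large computation capacity.

Partial offloading

- Partial offloading allows flexible partitioning of components/data. By offloading time- and energy-consuming sub-tasks to the MEC server, it can save much more energy and reduce computation latency compared with binary offloading. Graph theory is a very useful tool for scheduling tasks according to the task-dependency graph.

Stochastic task models

- The temporal correlation of task arrivals and channels can be exploited to design suitable dynamic computation offloading policies. Maintaining the stability of the task buffers at the user and the server through offloading-rate control is essential.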

Multiuser MEC Systems

Multiple mobile devices share the same MEC server.

Joint Radio-and-Computational Resource Allocation

The following studies address centralized and distributed resource allocation for different MEC systems:

C. You, K. Huang, H. Chae, and B.-H. Kim, “Energy-efficient resource allocation for mobile-edge computation offloading,” IEEE Trans. Wireless Commun., vol. 16, no. 33, pp. 1397–1411, Mar. 2016.

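- Multiple mobile users time-share a single MEC server and differ in workload and local computation capacity. A convex optimization problem is formulated to minimize the total energy consumption. The key finding is that the optimal policy for controlling the offloaded data size and time allocation has a simple threshold-based structure.

- In addition, an offloading priority function is first derived from each user's channel condition and local computing energy consumption. Users with priority above or below a given threshold then perform full or minimum offloading, respectively (minimum offloading being just enough to meet the given deadline).

- The result can be extended to OFDMA-based MEC systems to design a close-to-optimal computation offloading policy.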

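Joint allocation of computation and communication resources in multiuser mobile cloud computing

- To reduce the total mobile energy consumption, the MEC server determines the mobile transmission power and the assigned server CPU cycles for different users. The optimal solution shows that, for each mobile device, there is an optimal one-to-one mapping between the transmission power and the number of allocated CPU cycles.

Joint optimization of radio resources and code partitioning in mobile edge computing

- Extends the work of the previous paper to the optimization of binary offloading of sub-tasks in task-call graph models.

Latency optimization for resource allocation in mobile-edge computation offloading

- Considers video-compression offloading in MEC systems and minimizes the latency in the local-compression, edge-cloud-compression, and partial-compression-offloading scenarios.

Joint offloading decision and resource allocation for multi-user multi-task mobile cloud

- To minimize both energy consumption and latency, the offloading decisions and the allocation of communication resources are jointly optimized via a separable semidefinite relaxation approach.

Joint offloading and resource allocation for computation and communication in mobile cloud with computing access point

- An extension of the previous paper that also takes computation resources and processing cost into account.

Power-delay tradeoff in multi-user mobile-edge computing systems

- Studies the power-delay tradeoff in multiuser MEC systems under a stochastic task-arrival model. A Lyapunov optimization-based online algorithm jointly manages the available radio and computational resources.

Joint energy minimization and resource allocation in C-RAN with mobile cloud

- Studies centralized resource management for multiuser MEC systems based on cloud radio access networks (C-RAN).

Resource allocation based on game theory and decomposition techniques motivates research on distributed resource allocation.

Computation tasks are assumed to be either executed locally or fully offloaded, over single or multiple interference channels.

With fixed mobile transmission power, an integer program is formulated to minimize the total energy consumption and offloading latency. The problem is NP-hard (i.e., every problem in NP can be reduced to it in polynomial time).

Game-theoretic techniques are applied to develop a distributed algorithm that reaches a Nash equilibrium. (A Nash equilibrium, also called a non-cooperative game equilibrium, is a strategy profile in which no player can gain by unilaterally changing its own strategy.)

Furthermore, for each user, offloading is beneficial only when the received interference is below a threshold.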

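Multi-user mobile cloud offloading game with computing access point

Game-theoretic analysis of computation offloading for cloudlet-based mobile cloud computing

- Going further, distributed offloading is realized for the scenario in which each user has multiple tasks and can offload them to multiple APs (e.g., BSs) connected to the same edge server.

- Besides the transmission power, the energy for scanning APs and the fixed circuit power are also considered.

- A mobile device offloads its task to an AP only when a new user choosing the same AP obtains a larger gain.

Efficient multi-user computation offloading for mobile-edge cloud computing

(the system model on which the following work is based)

Multiuser joint task offloading and resource optimization in proximate clouds

- Studies the joint optimization of the mobile-transmission power and the CPU-cycle allocation of the edge server.

- To solve the formulated mixed-integer problem, a decomposition technique is used to optimize the resource allocation and the offloading decisions sequentially.

- The offloading-decision problem is transformed into a submodular maximization problem, which can be solved with a greedy algorithm.

Energy-efficient dynamic offloading and resource scheduling in mobile cloud computing

Joint optimization of radio and computational resources for multicell mobile-edge computing

- These two works use a similar decomposition technique and successive convex approximation, respectively, to design distributed resource allocation algorithms.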

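MEC Server Scheduling

Assumptions in prior work

User synchronization and the feasibility of parallel local-and-edge computation.

However, in the following studies of practical MEC systems, these assumptions need to be relaxed.

First, asynchronous task arrivals cause queuing delay, because the server buffers and computes tasks sequentially.

Joint scheduling of communication and computation resources in multiuser wireless application offloading

- To cope with bursty task arrivals, server scheduling is integrated with uplink-downlink transmission scheduling, and queuing theory is used to minimize the average latency.

Joint subcarrier and CPU time allocation for mobile edge computing

Second, even for synchronized task arrivals, latency requirements can differ greatly among users running different types of applications (from latency-sensitive to latency-tolerant ones), which calls for server scheduling that assigns priority levels based on latency requirements.

Multi-user computation partitioning for latency sensitive mobile cloud applications

- Finally, each computation task may consist of several inter-dependent sub-tasks, so scheduling for this model must satisfy the task-dependency requirements.

- An algorithm is proposed to solve the formulated mixed-integer problem: first optimize each user's computation partition, then find the partitions whose execution time violates the constraint and adjust them.

Energy-efficient dynamic offloading and resource scheduling in mobile cloud computing

- Because the task-call graph model considerably complicates the characterization of timing, the paper proposes: (1) defining the ready time of each sub-task as the earliest time at which all preceding tasks have been completed; (2) jointly optimizing the mobile CPU-cycle frequency and mobile-transmission power with a distributed algorithm to reduce the total mobile energy consumption and computation latency.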

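Multiuser Cooperative Edge Computing

Two advantages make it a promising technique:

- When an MEC server serves a large number of offloading mobile users, its limited computation resources may become overloaded. Peer-to-peer cooperative communication among mobile devices can then relieve the burden on the MEC server.

- Sharing resources among users balances the uneven distribution of computation workloads and computation capabilities across users.

Exploring device-to-device communication for mobile cloud computing

- Studies how to detect and use the computation resources of other users, based on D2D.

Device-to-device-based heterogeneous radio access network architecture for mobile cloud computing

- Proposes a D2D-based heterogeneous MCC network; this novel framework enhances network capacity and offloading capability.

Energy efficient cooperative computing in mobile wireless sensor networks

- For wireless sensor networks, a cooperative computing network is proposed to enhance their computation capacity.

- Derives the optimal computation partition for minimum energy consumption. The result is used to design fairness-aware, energy-efficient selection of cooperating nodes.

Energy-traffic trade-off cooperative offloading for mobile cloud computing

- The results show that sharing computation resources among users can reduce communication traffic.

Joint computation and communication cooperation for mobile edge computing

- By deploying a helper, a four-slot joint computation-and-communication cooperation protocol is proposed.

- The helper not only computes tasks offloaded by the user but also acts as a relay node that forwards tasks to the MEC server.

Exploiting non-causal CPU-state information for energy-efficient mobile cooperative computing

- Studies the optimal offloading policy in a peer-to-peer cooperative computing system in which the computing helper has time-varying computation resources.

- Based on the helper's CPU control profile and buffer size, an offloading feasibility tunnel is constructed.

- The optimal offloading is then obtained with the "string-pulling" strategy.

Computation peer offloading for energy-constrained mobile edge computing in small-cell networks

- Proposes an online peer-offloading framework based on Lyapunov optimization and game theory, which enables cooperation among small-cell base stations to handle spatially uneven workloads in the network.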

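Summary and Insight

- Considering that MEC systems have limited radio and computational resources, to achieve a system-level objective (e.g., the minimum total mobile energy consumption), users with larger channel gains and lower local-computing energy efficiency should be given higher offloading priority, because they yield larger energy savings. Too many offloading users, however, cause inter-user communication and computation interference, which in turn reduces the system revenue.

- To effectively reduce the total computation latency of multiple users, MEC server scheduling should assign higher priority to users with stricter latency requirements and heavier computation loads. Furthermore, parallel computing can further increase the server's computation speed.

- Scavenging the large amount of distributed computation resources can not only relieve network congestion but also improve resource utilization and enable ubiquitous computing. This vision may be realized through peer-to-peer mobile cooperative edge computing, whose main advantages include short-range communication via D2D techniques and the sharing of computation resources and results.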

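MEC Systems With Heterogeneous Servers

- heterogeneous MEC (Het-MEC)

A heterogeneous MEC system consists of one central cloud and multiple edge servers. The coordination and interaction between the central cloud and edge clouds at different levels raise many research challenges; server selection, cooperation, and computation migration have attracted much recent attention.

Server Selection

The key design issue is the offloading destination, i.e., the edge server or the central cloud.

A cooperative scheduling scheme of local cloud and Internet cloud for delay-aware mobile cloud computing

- Proposes an algorithm that exploits both the proximity and low latency of edge servers and the abundant computation resources of the central cloud to maximize the probability of successful offloading.

- Specifically, when the computation load offloaded to the MEC server exceeds a given threshold, latency-tolerant tasks are offloaded to the central cloud, freeing enough edge-server resources for latency-sensitive tasks.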
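The threshold rule described above can be sketched in a few lines. This is only an illustration of the idea, not the algorithm of the cited paper: the function name `select_server`, the load metric, and the threshold value are all hypothetical.

```python
def select_server(edge_load, threshold, delay_tolerant):
    """Pick an offloading destination with a simple load threshold.

    edge_load:      current offloaded computation load at the edge server
    threshold:      load above which the edge server is considered congested
    delay_tolerant: True if the task can tolerate the longer path to the cloud
    """
    if delay_tolerant and edge_load > threshold:
        return "cloud"
    return "edge"

# A congested edge server keeps only the latency-sensitive task.
print(select_server(edge_load=0.9, threshold=0.8, delay_tolerant=True))
print(select_server(edge_load=0.9, threshold=0.8, delay_tolerant=False))
```

Latency-sensitive tasks always stay at the edge in this sketch; a fuller design would also have to handle the case where the edge server cannot accept them.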

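A game theoretic resource allocation for overall energy minimization in mobile cloud computing system

- The main challenge of server selection arises from the correlation between the offloaded computation load and the selected edge servers. To address it, a congestion game is formulated to minimize the total energy consumption.

Offloading in mobile edge computing: Task allocation and computational frequency scaling

- Proposes a framework that allows a mobile device to offload tasks to multiple MEC servers.

- Proposes semidefinite relaxation-based algorithms to determine the task allocation decisions and CPU frequency scaling.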

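Server Cooperation

Resource sharing through server cooperation not only improves resource utilization and increases operator revenue, but also improves the user experience.

A framework for cooperative resource management in mobile cloud computing

- First proposes a framework whose components are resource allocation, revenue management, and service-provider cooperation.

- First, resource allocation is optimized for both deterministic and random user information to maximize the total revenue;

- Second, considering self-interested cloud service providers, a game-theoretic distributed algorithm is proposed to maximize service-provider profits and reach a Nash equilibrium;

Decentralized and optimal resource cooperation in geo-distributed mobile cloud computing

- Resource sharing among service providers in different cloud data centers.

- A coalition game is formulated and solved by a game-theoretic algorithm with stability and convergence guarantees.

Proactive edge computing in latency-constrained fog networks

- Proposes a new cooperation approach in which edge servers use both computation and storage resources, proactively caching computation results to minimize computation latency.

- The corresponding task-distribution problem is formulated as a matching game and solved by an efficient algorithm based on the deferred-acceptance algorithm.

The deferred-acceptance algorithm comes from the stable-matching (dating/marriage pairing) problem and is very interesting.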

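Computation Migration

Computation migration mainly arises from the mobility of offloading users. When a user moves close to a new MEC server, the network controller can either migrate the computation to the new server, or keep computing at the original server and forward the results to the user through the new server.

Mobility-induced service migration in mobile microclouds

- Formulated as an MDP problem based on a random-walk mobility model.

- Shows that the optimal policy has a threshold-based structure, i.e., migration is performed only when the distance between the two servers is bounded by two given thresholds.

Dynamic service migration and workload scheduling in edge-clouds

- Extends the above problem: workload scheduling at the edge servers is integrated with service migration, using Lyapunov optimization techniques to minimize the average overall transmission and reconfiguration costs.

Joint offloading decision and resource allocation for mobile cloud with computing access point

- Framework

- The MEC server can process the offloaded computation tasks locally or migrate them to the central cloud server.

- An optimization problem is formulated to minimize the sum of mobile energy consumption and computation latency. It is solved by a two-stage algorithm: first determine each user's offloading decision via semidefinite relaxation and randomization techniques, then perform resource-allocation optimization for all users.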

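Summary and Insight

1. To reduce the total computation latency, latency-tolerant and computation-intensive tasks should be offloaded to the remote central cloud server, while latency-sensitive tasks are computed at the edge servers;

2. Server cooperation improves the computation efficiency and resource utilization of MEC servers. More importantly, it balances the distribution of computation load across the network, reducing the total computation latency while making better use of resources. Server cooperation design should account for temporal and spatial computation-task arrivals, server computation capacities, time-varying channels, and the individual revenue of each server.

3. Computation migration is an effective approach to mobility management in MEC. Whether to migrate depends on the migration overhead, the distance between the user and the servers, channel conditions, and server computation capacities. Specifically, when a user moves far away from its original server, it is better to migrate the computation to a server near the user.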

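Challenges

Three challenges in MEC resource management research remain unsolved.

- Two-Timescale Resource Management: for simplicity, the wireless channel is assumed to remain static throughout the execution of a task. This assumption is unreasonable, however, when the channel coherence time is much shorter than the latency requirement.

- Online Task Partitioning: for ease of optimization, the existing literature ignores wireless channel fluctuations when solving the task-partitioning problem and obtains the partitioning decision before execution begins. With such offline partitioning decisions, changes in channel conditions may lead to inefficient or even infeasible offloading, severely degrading computation performance. To develop online task-partitioning policies, channel statistics should be incorporated into the formulated task-partitioning problem, which is NP-hard even under a static channel.

Collaborative task execution in mobile cloud computing under a stochastic wireless channel

Online placement of multi-component applications in edge computing environments

- Approximate online task-partitioning algorithms were derived for applications with serial and tree-topology task-call graphs, respectively, but solutions for general task models remain open.

- Large-Scale Optimization: when serving a large number of mobile devices simultaneously, cooperation among multiple MEC servers allows their resources to be managed jointly. The growing network size turns resource management into a large-scale optimization problem with a huge number of offloading decisions and radio- and computational-resource allocation variables. Conventional centralized joint radio-and-computational resource management algorithms require a large amount of resources when applied to large-scale MEC systems. This inevitably causes significant execution delay and may erode the potential performance gains of the MEC paradigm, such as latency reduction. Effective resource management therefore calls for low-complexity optimization algorithms with little signaling and computation overhead.

Large-scale convex optimization for dense wireless cooperative networks

- Although this paper provides a powerful tool for radio resource management, it cannot be applied directly to optimizing computation offloading because of the problem's combinatorial and non-convex nature, so new algorithms are needed.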

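4 Technical issues, challenges, and future research directions

MEC system deployment

1 Site Selection for MEC Servers

This differs from the base-station site-selection problem because the optimal placement of edge servers is coupled with the computational resource provisioning.

The system planners and administrators should account for two important factors:

Cost (site rentals) and computation demands

Problems and possible solutions

Areas with high computation demand should have more MEC servers installed, but site rentals there are expensive. Deploying MEC servers at the sites of existing infrastructure (such as macro BSs) is a promising proposal.

However, this does not fully solve the problem: in macro cells, poor signal quality makes it hard to guarantee the user experience.

Femtocells: Past, present, and future

Modeling and analysis of K-tier downlink heterogeneous cellular networks

- Some applications (e.g., smart homes) prefer to move computation resources closer to the end users, which can be achieved by injecting computation resources into small-cell BSs. There are several obstacles, however:

- Physical limitations: MEC servers in this setting have much lower computation capability than those at macro BSs, which makes computation-intensive tasks challenging. A feasible approach is a hierarchical MEC architecture composed of MEC servers with different communication and computation capabilities;

- Small-cell BSs deployed arbitrarily by home users usually have no incentive to cooperate with MEC providers, so incentive mechanisms are needed to encourage their owners to rent out the sites;

- Security: small-cell BSs may incur security problems, as they are easy to reach and vulnerable to external attacks.

In addition, not every computation hot spot has communication infrastructure, so we need to deploy edge servers with wireless transceivers by properly choosing new locations.

Moreover, at sites with high rentals it is preferable to provision large computation resources to serve more users and earn higher revenue.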

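2 MEC Network Architecture

The efficiency of an MEC system depends heavily on its architecture, which should account for aspects such as workload intensity and communication rate statistics.

Future mobile computing networks are envisioned to consist of three layers: cloud, edge (a.k.a. fog layer), and the service subscriber layer, as shown in the figure.

By analogy with the tiered structure of heterogeneous cellular networks, it is intuitive for a heterogeneous MEC system to consist of multiple tiers. A hierarchical MEC structure not only retains the efficient transmission offered by heterogeneous networks, but can also handle peak computation workloads by distributing them across tiers. However, the computation-capacity provisioning problem remains unsolved, because other factors must be considered (workload intensity, communication cost between different tiers, workload distribution strategies, etc.).

Non-dedicated computation resources (e.g., laptops and mobile phones) can be used for ad hoc computing, which improves the utilization of computation resources and reduces deployment cost. However, because of their ad hoc and self-organized nature, this raises resource-management and security issues.

An approach to ad hoc cloud computing

A stochastic workload distribution approach for an ad hoc mobile cloud

AMCloud: Toward a secure autonomic mobile ad hoc cloud computing system

Vehicular fog computing: A viewpoint of vehicles as the infrastructures

- This paradigm is termed the ad-hoc mobile cloud.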

3 Server Density Planning 服务器密度规划

服务器密度要迎合使用者的需求(与the infrastructure deployment costs and marketing strategies有关)

用几何理论对MEC系统进行性能分析是非常可行的,但需要一下几点问题:

Energy-optimal mobile cloud computing under stochastic wireless channel

Delay-optimal computation task scheduling for mobile-edge computing systems

- 计算的时间标量和无线信道的相干时间可能是不同的,这使得在无线网络中存在的结果并不能快速应用到MEC系统中,可行的解决方法是使用马尔可夫链结合几何理论去得到稳定的计算行为。

- 计算卸载策略会影响无线资源管理策略,这也需要被考虑;

An enhanced community-based mobility model for distributed mobile social networks

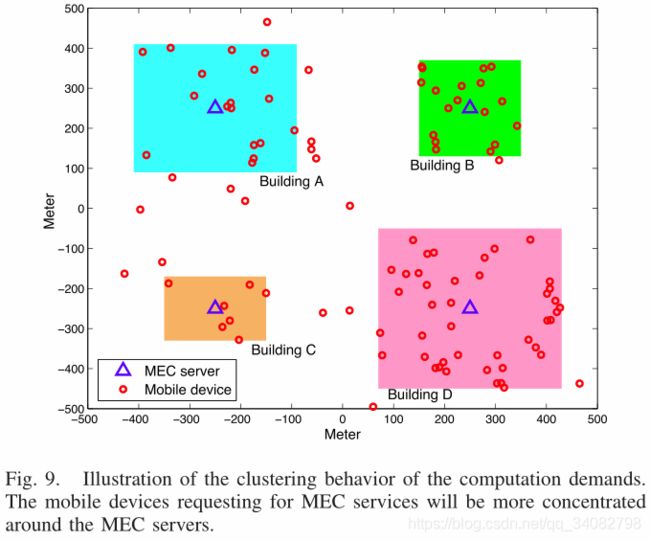

- 计算需求通常是不均匀地成群分布,如图所以不能用均匀泊松点过程处理,要求更先进的点处理方法, e.g., the Ginibre α-determinantal point process (DPP),获取边缘节点的集群行为

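Cache-Enabled MEC

Caching brings content closer to end users, while MEC deploys edge servers to handle computation-intensive tasks at the edge and improve the user experience. Cache-enabled MEC combines the two.

1 Service Caching for MEC Resource Allocation

Two feasible approaches:

- Spatial popularity-driven service caching:

Depending on the users' locations and the common interests of nearby users, different combinations and numbers of services are cached at different servers.

Example: visitors in a museum want to use AR glasses for a better experience, so the MEC servers in that area should cache multiple AR services to provide real-time service.

To achieve optimal spatial service caching, a spatial application popularity distribution model is needed to characterize the popularity of each application at different locations. (Based on this, different resource-allocation optimization algorithms can be adopted, e.g., game theory or convex optimization.)

- Temporal popularity-driven service caching:

Unlike the first approach, this one exploits popularity information only within a time period. For example, users tend to play mobile cloud games after dinner, which suggests that the MEC operator should cache gaming service applications during that period.

Drawback: because the popularity information is time-varying and MEC servers have limited resources, the frequent cache-and-release operations increase the server overhead.

2 Data Caching for MEC Data Analytics

Many applications today involve intensive computation based on data analytics. Intelligent data caching, i.e., storing frequently used databases, can address this. Furthermore, caching computation results that may be reused by other users further improves the computation performance of the whole MEC system.

Modeling and characterizing user experience in a cloud server based mobile gaming approach

- It (Data Caching for MEC Data Analytics) emerges as a leading technique for next generation mobile computing infrastructures.

Collaborative multi-bitrate video caching and processing in mobile-edge computing networks

- Studies collaborative multi-bitrate video caching and processing in MEC.

For data caching at a single MEC server, a key issue is the tradeoff between the massive databases and the limited storage resources. Data caching in MEC systems has manifold effects on computation accuracy, latency, and edge-server energy consumption, which have not yet been characterized in the existing literature.

Furthermore, a database popularity distribution model should be established to statistically characterize how different MEC applications use different databases.

On the modeling and analysis of heterogeneous radio access networks using a Poisson cluster process

- The performance of large-scale cache-enabled MEC networks can be analyzed with stochastic geometry by modeling nearby users as clusters.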

Mobility Management(user‘s) for MEC

在一些应用中,用户的信息(位置与个人喜好)提高了边缘计算器处理用户计算请求的效率。但用户的移动性为实现随时的和可靠的计算带来困难,原因如下:

1. 异构。频繁在多种边缘服务器(多种系统配置和用户服务器协作政策)中的切换是复杂的。

2. 干扰。用户在不同小区之间移动将会引发干扰,会使传输性能下降;

3. 时延。频繁的切换会提高时延并恶化用户体验。

以下文献致力于设计移动感知的MEC系统:

Mobility-assisted opportunistic computation offloading

- 定义间接触时间和联系率( inter-contact

time and contact rate) - 设计一个机会型卸载策略,以最大化任务卸载成功的可能性

Offloading in mobile cloudlet systems with intermittent connectivity

- 用户可连接到的边缘服务器的数量通过HPPP建立模型

- 通过解决设定的MDP问题最小化代价以优化卸载决策

User mobility model based computation offloading decision for mobile cloud

MuSIC: Mobility-aware optimal service allocation in mobile cloud computing

- 提出移动性的模型

- 分别通过用户可以连接的的一系列网络和一个二维的位置-时间工作流程来表征移动性

Efficient mobility and traffic management for delay tolerant cloud data in 5G networks

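- Mobility management is combined with traffic control, through an intelligent cell association mechanism, to give a better experience to users with delay-tolerant tasks.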

Edge caching with mobility prediction in virtualized LTE mobile networks

- In Follow-Me Cloud, edge caching is combined with mobility prediction to improve the migration of content cached at the edge

Mobility-aware caching for content-centric wireless networks: Modeling and methodology

- Proposes mobility-aware wireless caching

Next, a set of interesting research directions is introduced:

1) Mobility-Aware Online Prefetching

Drawbacks of conventional mobile computation offloading designs:

- long fetching latency (handover requires excessive fetching of a large volume of data)

- heavy loads

Solution:

Live prefetching for mobile computation offloading

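- During the server's computation time, statistical information about the user trajectory is used to prefetch part of the future computation data to the predicted next server. This is called online (live) prefetching.

Although this reduces handover latency and makes offloading more efficient, it raises new issues:

1. The trajectory prediction model must trade off complexity against accuracy;

2. Selecting which data to prefetch: with adaptive transmit power control, the computation-intensive components should be prefetched first.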
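The trajectory-prediction step can be sketched with a first-order Markov model, which sits at the simple end of the complexity/accuracy tradeoff. This is an illustrative assumption, not the predictor used in the cited work; the server labels and function names are hypothetical.

```python
from collections import Counter, defaultdict

def fit_transitions(trajectories):
    """Count observed server-to-server handovers in past trajectories."""
    counts = defaultdict(Counter)
    for path in trajectories:
        for here, nxt in zip(path, path[1:]):
            counts[here][nxt] += 1
    return counts

def predict_next(counts, current):
    """Return the most frequently observed next server, or None if unseen."""
    if current not in counts:
        return None
    options = counts[current]
    # Highest count wins; ties are broken by name for determinism.
    return min(options, key=lambda s: (-options[s], s))

# Made-up handover histories of one user.
history = [["A", "B", "C"], ["A", "B", "D"], ["A", "B", "C"]]
model = fit_transitions(history)
print(predict_next(model, "B"))  # candidate server to prefetch data to
```

Data would then be prefetched to the predicted server while the current server is still computing; which parts to prefetch is the second issue listed above and is not modeled here.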

2) Mobility-Aware Offloading Using D2D

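Advantages of D2D communication: it increases network capacity, relieves the data traffic burden on cellular systems, and reduces transmission power (because of the short transmission distance).

Issues for D2D under mobility:

1. How to combine the advantages of D2D communication and cellular systems.

One possible approach: offload computation-intensive tasks to the edge server at the base station (which has large computation capacity) to reduce server computing time, while components with large data sizes and strict computation requirements are fetched by nearby users via D2D communication for higher energy efficiency.

2. The selection of nearby users for offloading should be optimized, taking into account users' mobility information, dynamic channels, and heterogeneous users' computation capabilities.

3. Large-scale D2D links introduce interference, so advanced interference cancellation and cognitive radio techniques can be applied.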

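3) Mobility-Aware Fault-Tolerant MEC

Scenario: for latency-sensitive and resource-demanding applications, any computation fault can have serious consequences.

Fault tolerance consists of three main parts: fault prevention, fault detection, and fault recovery.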

Fault prevention:

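MEC faults are avoided by using additional reliable offloading links as backup. Both the macro base station and the central cloud can be chosen as protecting clouds, because their wide network coverage allows continuous MEC service. The key design challenges are how to balance QoS (e.g., the failure probability) against the energy consumed by the extra offloading links of a single user, and how to allocate protecting clouds in multiuser MEC applications.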
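The QoS/energy tradeoff can be illustrated with a toy calculation. It assumes, purely for illustration, that offloading links fail independently with the same probability and that each extra link costs a fixed amount of energy; the function name `links_needed` and all numbers are hypothetical.

```python
def links_needed(link_failure_prob, target_failure_prob, max_links=10):
    """Smallest number of independent links whose joint failure
    probability does not exceed the target, or None if unreachable."""
    failure = 1.0
    for n in range(1, max_links + 1):
        failure *= link_failure_prob  # all n links must fail together
        if failure <= target_failure_prob:
            return n
    return None

energy_per_link_j = 0.3  # made-up energy cost of keeping one link active
n = links_needed(link_failure_prob=0.5, target_failure_prob=0.1)
print(n, n * energy_per_link_j)
```

Tightening the failure target raises the number of links, and with it the energy bill, which is the tradeoff described above.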

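Fault detection:

Fault information is collected, which can be done by setting intelligent timing checks or by receiving feedback for MEC services. Channel and mobility estimation techniques can also be used to estimate faults, which shortens the detection time.

Fault recovery:

For detected faults, recovery techniques are applied to continue and speed up the MEC service. A service suspended by a fault can be switched to a more reliable backup wireless link with adaptive power control for higher-speed offloading. Another option is migrating the workloads to neighboring MEC systems directly or through ad-hoc relay nodes, as in:

Recovery for overloaded mobile edge computing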

4) Mobility-Aware Server Scheduling

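Multiuser MEC systems with mobile users in dynamic environments need adaptive server scheduling. Such a scheduler can incorporate real-time user information and regenerate the scheduling order from time to time.

Solution:

Under a dynamic scheduling mechanism, users in poor conditions are assigned a higher offloading priority so that they can meet their deadlines.

Designing a mobility-aware offloading priority function takes two steps. The first step is to accurately predict users' mobility profiles and channel conditions; the main challenge here is mapping mobility effects to the offloading priority function.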

Optimization of resource provisioning cost in cloud computing

Reservation-based resource scheduling and code partition in mobile cloud computing

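- The second step is resource reservation, which can enhance server scheduling performance.

- To guarantee QoS for latency-sensitive, high-mobility users, the MEC server reserves dedicated computation resources to provide them with reliable computing service. For other, latency-tolerant users, the MEC server can provision resources on demand. For this hybrid scheme, the scheduling policy is optimized by maximizing the number of users served with QoS guarantees.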
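One way to picture an offloading priority function is to rank users by their remaining slack: the deadline minus the predicted upload time. This is only a sketch under that assumption; the field names (`deadline_s`, `data_bits`, `rate_bps`) and the user values are hypothetical, and the rate stands in for the output of the prediction step.

```python
def offloading_order(users):
    """Order users so that the one with the least slack offloads first."""
    def slack(user):
        upload_time = user["data_bits"] / user["rate_bps"]
        return user["deadline_s"] - upload_time
    return [user["name"] for user in sorted(users, key=slack)]

# Made-up users: "b" has a poor channel, "c" has a tight deadline.
users = [
    {"name": "a", "deadline_s": 1.0, "data_bits": 1e6, "rate_bps": 5e6},
    {"name": "b", "deadline_s": 1.0, "data_bits": 1e6, "rate_bps": 1.25e6},
    {"name": "c", "deadline_s": 0.5, "data_bits": 1e6, "rate_bps": 4e6},
]
print(offloading_order(users))
```

Re-running the ordering whenever the predicted rates change gives the periodically regenerated schedule described above.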

Green MEC

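The main design approaches for energy saving are: dynamic right-sizing for energy-proportional MEC, geographical load balancing for MEC, and the use of renewable energy in MEC systems.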

1) Dynamic Right-Sizing for Energy-Proportional MEC

energy-proportional (or power-proportional) servers:

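A server's energy consumption should be proportional to its computation load.

Caveat: one way to achieve energy proportionality is to switch off or slow down lightly loaded MEC servers. This saves energy, but toggling servers between the on and off states brings problems: extra switching cost and latency, degraded user experience, and increased wear-and-tear risk. In short, this is not a good approach as it stands.

**Solution:** To achieve effective dynamic right-sizing, the computation workload profile of each edge server must be forecast accurately. In MEC systems many factors make the workload pattern highly variable, which calls for more accurate prediction techniques. In addition, online dynamic right-sizing algorithms that need less prediction information should be developed.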

2) Geographical Load Balancing(GLB) for MEC

Online algorithms for geographical load balancing

Temperature aware workload management in geo-distributed data centers

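GLB exploits the spatial diversity of workload patterns, temperatures, and electricity prices to make workload routing decisions among different data centers.

Example: a cluster of MEC servers jointly serves a mobile user. On the one hand this improves the energy efficiency of lightly loaded servers and the user experience; on the other hand it prolongs the battery life of mobile devices.

In addition, implementing GLB requires efficient resource management techniques.

Factors to consider when applying GLB:

- Because task migration goes through the cellular core network, network congestion must be monitored and taken into account when making GLB decisions;

- For seamless task migration, a virtual machine has to be migrated or set up in advance at another edge server, which may cause additional energy consumption;

- Operators need to weigh the tradeoff between energy saving and low latency, to account for the interests of both the operator and the service subscribers;

- The existing conventional cloud computing infrastructure gives edge servers an extra option, namely offloading latency-tolerant and computation-intensive tasks to a remote cloud data center, which further complicates the optimization.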

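3) Renewable Energy-Powered MEC Systems

Why energy harvesting (EH) is feasible in MEC:

1. the MEC servers are expected to be densely deployed and have low power consumption;

2. EH is able to prolong their battery lives;

3. EH eliminates the need for human intervention.

The main issue for renewable energy-powered MEC systems is green energy-aware resource allocation and computation offloading.

Instead of minimizing energy consumption subject to a satisfactory user experience, the design principle for renewable energy-powered MEC systems should change to optimizing system performance subject to the renewable energy constraints.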

energy side information (ESI)

Online learning for offloading and autoscaling in renewable-powered mobile edge computing

- For EH-powered MEC servers, the system operator should decide the amount of workload required to be offloaded from the edge server to the central cloud, as well as the processing speed of the edge server, according to the information of the core network congestion state, computation workload, and ESI. This problem was solved by a learning-based online algorithm

Dynamic computation offloading for mobile-edge computing with energy harvesting devices

- for EH-powered mobile devices, a dynamic computation offloading policy has been proposed in this paper using Lyapunov optimization techniques based on both the CSI and ESI.

- BUT they cannot provide a comprehensive solution for large-scale MEC systems.

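The randomness of renewable energy makes the system unstable. Some remedies follow:

Transmit power minimization for wireless networks with energy harvesting relays

- EH-powered servers are low-cost and can be densely deployed. The resulting overlapping service areas provide offloading diversity in available energy, which avoids performance degradation.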

Online algorithms for geographical load balancing

- Choose the renewable energy source to fit the workload: solar energy is more suitable for workloads with a high peak-to-mean ratio (PMR), while wind energy fits better for workloads with a small PMR.
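The PMR of a workload trace is simply its peak divided by its mean. The sketch below computes it and applies the rule of thumb above; the cut-off value `threshold=2.0` is a made-up illustration, not a figure from the cited paper.

```python
def peak_to_mean_ratio(workload):
    """Peak-to-mean ratio (PMR) of a non-empty workload trace."""
    mean = sum(workload) / len(workload)
    return max(workload) / mean

def suggest_source(workload, threshold=2.0):
    """Rule of thumb: high PMR -> solar, low PMR -> wind.
    The threshold is a hypothetical cut-off chosen for illustration."""
    return "solar" if peak_to_mean_ratio(workload) >= threshold else "wind"

bursty = [2, 2, 2, 10]   # made-up trace with a pronounced peak
flat = [5, 5, 5, 5]      # made-up steady trace
print(peak_to_mean_ratio(bursty), suggest_source(bursty))
print(peak_to_mean_ratio(flat), suggest_source(flat))
```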

Optimal power allocation for energy harvesting and power grid coexisting wireless communication systems

On optimizing green energy utilization for cellular networks with hybrid energy supplies

Grid energy consumption and QoS tradeoff in hybrid energy supply wireless networks

- A hybrid energy supply improves system stability: servers are powered by both the electric grid and the harvested energy, and equipped with uninterrupted power supply (UPS) units.

Enabling wireless power transfer in cellular networks: Architecture, modeling and deployment

Energy efficient mobile cloud computing powered by wireless energy transfer

Application: computation offloading for mobile devices in MEC systems

Energy efficient resource allocation for wireless power transfer enabled collaborative mobile clouds

Application: data offloading for collaborative mobile clouds

- Wireless power transfer (WPT): the edge servers can be powered by WPT when the renewable energy is insufficient for reliable operation. Limitations: novel energy beamforming techniques are needed to increase the charging efficiency, and, because of the double near-far problem in wireless powered systems, more careful scheduling is needed to guarantee fairness among multiple mobile users.

Security and Privacy Issues in MEC

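Security and privacy issues raised by the characteristics of MEC:

- The inherently heterogeneous networks of MEC make conventional authentication mechanisms inapplicable;

- The diversity of communication technologies that support MEC and the software nature of the network management mechanisms bring new security threats;

- Edge servers themselves may be eavesdroppers or attackers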

1) Trust and Authentication Mechanisms

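Problem: edge servers of different types come from different vendors, which makes conventional trust and authentication mechanisms inapplicable. Because many edge servers serve a massive number of mobile devices, the trust and authentication mechanisms also become far more complex than in conventional cloud computing systems. Minimizing the overhead of authentication mechanisms while designing distributed policies is very difficult.

2) Networking Security:

Problem: in MEC systems, different networks (e.g., WiFi, LTE, and 5G) have different trust domains. In existing solutions, a certification authority can only distribute credentials to the elements located in its own trust domain, which makes it hard to guarantee privacy and data integrity for communication across trust domains.

Solution: cryptographic attributes can be used as credentials in order to exchange session keys. Alternatively, the concept of federated content networks can be used, which defines how multiple trust domains negotiate and maintain inter-domain credentials.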

Providing security in NFV: Challenges and opportunities

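- Introducing techniques such as SDN and NFV simplifies MEC networking, but these software techniques are themselves vulnerable. Novel and robust security mechanisms are therefore needed, such as hypervisor introspection, run-time memory analysis, and centralized security management.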

3) Secure and Private Computation

Non-interactive verifiable computing: Outsourcing computation to untrusted workers

To achieve secure and private computation, the edge platform should be able to perform computation tasks without knowing the original user data, and the computation results should be verifiable. This can be achieved with encryption algorithms and verifiable computing techniques.

Example:

Secure optimization computation outsourcing in cloud computing: A case study of linear programming

- secure computation mechanisms for LP problems were developed

- Result: this method of result validation achieves a big improvement in computation efficiency via high-level LP computation compared to the generic circuit representation, and it incurs close-to-zero additional overhead on both the client and cloud server.
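As a generic illustration of cheap result verification (not the LP-specific method of the paper above), Freivalds' algorithm lets a client check a matrix product returned by an untrusted server without recomputing it. Each round multiplies by a random 0/1 vector, and a wrong product escapes a single round with probability at most 1/2. The function names and the example matrices are chosen here for illustration.

```python
import random

def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(x * y for x, y in zip(row, vector)) for row in matrix]

def freivalds(a, b, c, rounds=30, rng=None):
    """Probabilistically check that a @ b == c using only matrix-vector products."""
    rng = rng or random.Random()
    columns = len(b[0])
    for _ in range(rounds):
        r = [rng.randint(0, 1) for _ in range(columns)]
        # Compare A(Br) with Cr; each side costs O(n^2) instead of O(n^3).
        if matvec(a, matvec(b, r)) != matvec(c, r):
            return False  # the claimed product is definitely wrong
    return True  # correct with probability at least 1 - 2**(-rounds)

a = [[1, 2], [3, 4]]
b = [[5, 6], [7, 8]]
claimed_ok = [[19, 22], [43, 50]]    # the true product
claimed_bad = [[19, 22], [43, 51]]   # one tampered entry
print(freivalds(a, b, claimed_ok), freivalds(a, b, claimed_bad))
```

The check never rejects a correct result; a tampered one slips through only if every random vector happens to miss the altered column, which becomes vanishingly unlikely as `rounds` grows.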

5 STANDARDIZATION EFFORTS AND USE SCENARIOS OF MEC

Referenced MEC Server Framework

- the IaaS (Infrastructure as a Service) controller provides a security and resource sandbox

Technical Challenges and Requirements

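- Network Integration: MEC is a new type of service deployed on top of the communication network, so the MEC platform should be transparent to the 3GPP network architecture; that is, introducing MEC should not affect existing 3GPP specifications.

- Application Portability: MEC applications must be seamlessly loaded and executed by MEC servers from different vendors. This requires consistency in how platforms package, deploy, and manage applications.

- Security: because they integrate computing and IT services, MEC systems face more security challenges;

- Performance: sufficient capacity should be provisioned to handle user traffic at the deployment stage. In addition, because of the highly virtualized nature, the delivered performance may be impaired, especially for applications that make heavy use of hardware resources or have low-latency requirements. Improving the efficiency of virtualized environments is therefore a challenge;

- Resilience: MEC systems should offer a certain level of resilience and meet the high-availability requirements demanded by their network operators. MEC platforms and applications should be fault-tolerant so that they do not adversely affect other normal network operations;

- Operation: virtualization and cloud technologies allow various parties to take part in managing MEC systems. The implementation of the management framework should therefore also consider the diversity of potential deployments;

- Regulatory and Legal Considerations: the deployment of MEC systems must meet regulatory and legal requirements, such as privacy and charging.

Beyond the above, there are also requirements concerning user mobility, application and traffic migration, and connectivity and storage.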

Use Scenarios

- Video Stream Analysis Service:

Wide applications include vehicle identification, face recognition, and home security monitoring

MEC for video stream analysis

the edge server should have the ability to conduct video management and analysis, and only the valuable video clips (screenshots) will be backed up to the cloud data centers

- Augmented Reality Service:

Applications: museum video guides, online games

MEC for AR services

Example:

Intel mobile edge computing technology improves the augmented reality experience

- Intel implemented a demo and showcased it at Mobile World Congress 2016

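- IoT Applications:

IoT devices need to offload computation-intensive tasks to a remote side for processing (and receive the results back) in order to prolong battery life. IoT devices have difficulty acquiring distributed information on their own, whereas MEC servers have high-performance computing capability and can collect distributed information, so deploying MEC servers effectively simplifies IoT devices. Another important feature of IoT is the heterogeneity of devices running different forms of protocols, so their management should be handled by a low-latency gateway, which could be the MEC server.

- Connected Vehicles:

Applications: autonomous vehicles, unmanned aerial vehicles (UAVs)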

MEC for connected vehicles

UTM infrastructure and connected society

- UAV proposal: Nokia proposed the UAV traffic management (UTM) based MEC architecture for connected UAVs, where the UTM unit provides functions of fleet management, automated UAV missions, 3D navigation, and collision avoidance.

- Open issue: existing mobile networks are designed mainly for ground users, so UAVs will have very limited connectivity and bandwidth. Reconfiguring the mobile network to guarantee connectivity and low latency between UAVs and the infrastructure is therefore a critical task in designing MEC systems for UAVs.

Others: active device tracking, RAN-aware content optimization, distributed content and Domain Name System (DNS) caching, enterprise networks, as well as safe-and-smart cities

MEC in 5G Standardizations

1) Functionality Supports Offered by 5G Networks:

To integrate MEC in 5G systems, the recent 5G technical specifications have explicitly pointed out necessary functionality supports that should be offered by 5G networks for edge computing, as listed below:

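- The 5G core network should select the traffic to be routed to the applications in the local data network;

- The 5G core network selects a user plane function (UPF) close to the UE to route and execute the traffic steering from the local data network via the interface, which should be based on the UE's subscription data, the UE's location, and information from the application function (AF);

- The 5G network should guarantee session and service continuity to enable UE and application mobility;

- The 5G core network and the AF should provide information to each other via the network exposure function (NEF);

- The policy control function (PCF) provides rules for QoS control and charging for the traffic routed to the local data network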

2) Innovative Features in 5G to Facilitate MEC:

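Support Service Requirement: the QoS characteristics (in terms of resource type, priority level, packet delay budget, and packet error rate) describe the packet forwarding treatment that a QoS flow receives edge-to-edge between the UE and the UPF. They are associated with the 5G QoS Indicator (5QI)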

Technical specification group services and system aspects; System architecture for the 5G systems; Stage 2 (Release 15)

- a standardized 5QI to QoS mapping table

- showing a broad range of services that can be supported in 5G systems

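Advanced Mobility Management Strategy: mobility patterns are introduced to design mobility management strategies for 5G systems. Mobility patterns play an important role in designing advanced transmission schemes and MEC applications in wireless communication systems.

Capability of Network Slicing: network slicing is an agile, virtual network architecture that allows multiple network instances to be created on top of a common shared physical infrastructure. With network slicing in 5G systems, MEC applications can be provisioned with optimized, dedicated network resources, which helps greatly reduce access network latency and supports dense access by MEC service users.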