Data Warehouse User Authentication: Starting a Hadoop Cluster in Secure Mode

Contents

- Change Permissions on Specific Local Paths

- Start HDFS

- Change Access Permissions on Specific HDFS Paths

- Start Yarn

- Start HistoryServer

Change Permissions on Specific Local Paths

| Filesystem | Path | User:Group | Permissions |
|---|---|---|---|
| local | $HADOOP_LOG_DIR | hdfs:hadoop | drwxrwxr-x |
| local | dfs.namenode.name.dir | hdfs:hadoop | drwx------ |
| local | dfs.datanode.data.dir | hdfs:hadoop | drwx------ |
| local | dfs.namenode.checkpoint.dir | hdfs:hadoop | drwx------ |
| local | yarn.nodemanager.local-dirs | yarn:hadoop | drwxrwxr-x |
| local | yarn.nodemanager.log-dirs | yarn:hadoop | drwxrwxr-x |

1) $HADOOP_LOG_DIR (all nodes)

This variable is set in hadoop-env.sh; its default value is ${HADOOP_HOME}/logs.

[root@hadoop102 ~]# chown hdfs:hadoop /opt/module/hadoop-3.1.3/logs/

[root@hadoop102 ~]# chmod 775 /opt/module/hadoop-3.1.3/logs/

[root@hadoop103 ~]# chown hdfs:hadoop /opt/module/hadoop-3.1.3/logs/

[root@hadoop103 ~]# chmod 775 /opt/module/hadoop-3.1.3/logs/

[root@hadoop104 ~]# chown hdfs:hadoop /opt/module/hadoop-3.1.3/logs/

[root@hadoop104 ~]# chmod 775 /opt/module/hadoop-3.1.3/logs/

2) dfs.namenode.name.dir (NameNode host)

This parameter is set in hdfs-site.xml; its default value is file://${hadoop.tmp.dir}/dfs/name.

[root@hadoop102 ~]# chown -R hdfs:hadoop /opt/module/hadoop-3.1.3/data/dfs/name/

[root@hadoop102 ~]# chmod 700 /opt/module/hadoop-3.1.3/data/dfs/name/

3) dfs.datanode.data.dir (DataNode hosts)

This parameter is set in hdfs-site.xml; its default value is file://${hadoop.tmp.dir}/dfs/data.

[root@hadoop102 ~]# chown -R hdfs:hadoop /opt/module/hadoop-3.1.3/data/dfs/data/

[root@hadoop102 ~]# chmod 700 /opt/module/hadoop-3.1.3/data/dfs/data/

[root@hadoop103 ~]# chown -R hdfs:hadoop /opt/module/hadoop-3.1.3/data/dfs/data/

[root@hadoop103 ~]# chmod 700 /opt/module/hadoop-3.1.3/data/dfs/data/

[root@hadoop104 ~]# chown -R hdfs:hadoop /opt/module/hadoop-3.1.3/data/dfs/data/

[root@hadoop104 ~]# chmod 700 /opt/module/hadoop-3.1.3/data/dfs/data/

4) dfs.namenode.checkpoint.dir (SecondaryNameNode host)

This parameter is set in hdfs-site.xml; its default value is file://${hadoop.tmp.dir}/dfs/namesecondary.

[root@hadoop104 ~]# chown -R hdfs:hadoop /opt/module/hadoop-3.1.3/data/dfs/namesecondary/

[root@hadoop104 ~]# chmod 700 /opt/module/hadoop-3.1.3/data/dfs/namesecondary/

5) yarn.nodemanager.local-dirs (NodeManager hosts)

This parameter is set in yarn-site.xml; its default value is ${hadoop.tmp.dir}/nm-local-dir.

[root@hadoop102 ~]# chown -R yarn:hadoop /opt/module/hadoop-3.1.3/data/nm-local-dir/

[root@hadoop102 ~]# chmod -R 775 /opt/module/hadoop-3.1.3/data/nm-local-dir/

[root@hadoop103 ~]# chown -R yarn:hadoop /opt/module/hadoop-3.1.3/data/nm-local-dir/

[root@hadoop103 ~]# chmod -R 775 /opt/module/hadoop-3.1.3/data/nm-local-dir/

[root@hadoop104 ~]# chown -R yarn:hadoop /opt/module/hadoop-3.1.3/data/nm-local-dir/

[root@hadoop104 ~]# chmod -R 775 /opt/module/hadoop-3.1.3/data/nm-local-dir/

6) yarn.nodemanager.log-dirs (NodeManager hosts)

This parameter is set in yarn-site.xml; its default value is ${yarn.log.dir}/userlogs, which normally resolves to $HADOOP_LOG_DIR/userlogs.

[root@hadoop102 ~]# chown yarn:hadoop /opt/module/hadoop-3.1.3/logs/userlogs/

[root@hadoop102 ~]# chmod 775 /opt/module/hadoop-3.1.3/logs/userlogs/

[root@hadoop103 ~]# chown yarn:hadoop /opt/module/hadoop-3.1.3/logs/userlogs/

[root@hadoop103 ~]# chmod 775 /opt/module/hadoop-3.1.3/logs/userlogs/

[root@hadoop104 ~]# chown yarn:hadoop /opt/module/hadoop-3.1.3/logs/userlogs/

[root@hadoop104 ~]# chmod 775 /opt/module/hadoop-3.1.3/logs/userlogs/
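After running the commands above, it can be worth confirming that each directory really has the owner and mode listed in the table. The following is a minimal sketch, assuming GNU `stat` (as shipped with CentOS); the function name `check_perm` is hypothetical and not part of Hadoop.

```shell
# check_perm PATH OWNER:GROUP MODE
# Returns 0 when PATH has the given owner and octal mode; otherwise prints
# the mismatch to stderr and returns 1. Relies on GNU stat's -c option.
check_perm() {
  path="$1"; want_owner="$2"; want_mode="$3"
  actual="$(stat -c '%U:%G %a' "$path")" || return 1
  if [ "$actual" = "$want_owner $want_mode" ]; then
    return 0
  fi
  echo "$path: expected $want_owner $want_mode, found $actual" >&2
  return 1
}

# Example (run on the NameNode host):
# check_perm /opt/module/hadoop-3.1.3/data/dfs/name hdfs:hadoop 700
```

The check only inspects the directory itself, not its contents, which matches how the table states the requirement.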

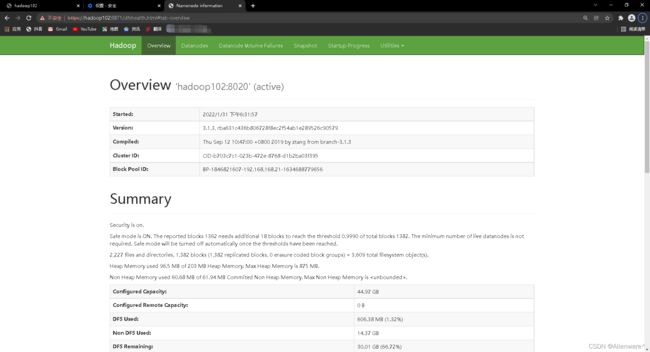

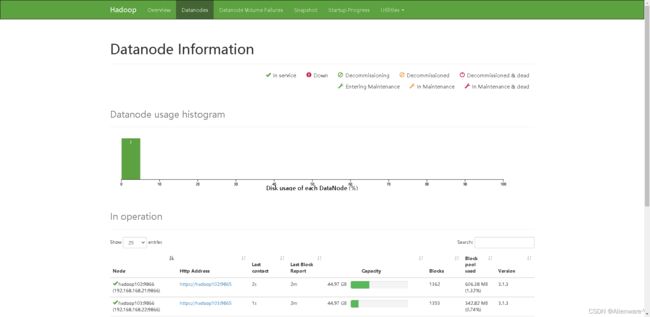

Start HDFS

Note that each service must be started as its corresponding user.

1. Starting daemons individually

(1) Start the NameNode

[root@hadoop102 ~]# sudo -i -u hdfs hdfs --daemon start namenode

(2) Start the DataNodes

[root@hadoop102 ~]# sudo -i -u hdfs hdfs --daemon start datanode

[root@hadoop103 ~]# sudo -i -u hdfs hdfs --daemon start datanode

[root@hadoop104 ~]# sudo -i -u hdfs hdfs --daemon start datanode

(3) Start the SecondaryNameNode

[root@hadoop104 ~]# sudo -i -u hdfs hdfs --daemon start secondarynamenode

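Notes:

-i: run the command through a login shell of the target user, so that user's environment variables are loaded

-u: run the command that follows as the specified user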
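The pairing of daemon and service user used throughout this article can be written down as a small lookup. This is only an illustrative sketch: `start_cmd` is a hypothetical helper that prints the command rather than running it, and the user names are the ones this article assumes (hdfs, yarn, mapred).

```shell
# start_cmd DAEMON: print the command that starts DAEMON as its service user.
# Nothing is executed; the output can be reviewed and then run by hand.
start_cmd() {
  case "$1" in
    namenode|datanode|secondarynamenode) user=hdfs;   cli=hdfs ;;
    resourcemanager|nodemanager)         user=yarn;   cli=yarn ;;
    historyserver)                       user=mapred; cli=mapred ;;
    *) echo "unknown daemon: $1" >&2; return 1 ;;
  esac
  echo "sudo -i -u $user $cli --daemon start $1"
}

start_cmd namenode
start_cmd nodemanager
```

Printing instead of executing keeps the sketch safe to try on a machine without Hadoop installed.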

2. Starting the whole cluster

1) On the master node (hadoop102), set up passwordless SSH login from the hdfs user to all nodes.

[root@hadoop102 ~]# su hdfs

[hdfs@hadoop102 root]$ ssh-keygen

[hdfs@hadoop102 root]$ ssh-copy-id hadoop102

[hdfs@hadoop102 root]$ ssh-copy-id hadoop103

[hdfs@hadoop102 root]$ ssh-copy-id hadoop104

The SSH password for the hdfs user is hdfs.
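With more than a few nodes, the repeated ssh-copy-id calls are easier to manage as a loop. A minimal sketch follows, assuming the three host names used in this article; `copy_keys` and the `RUN` variable are hypothetical names, and the function only prints the commands unless `RUN` is set to an empty value.

```shell
# Hosts from this article; adjust for your own cluster.
HOSTS="hadoop102 hadoop103 hadoop104"

# copy_keys: push the current user's public key to every host.
# When RUN is unset the commands are only echoed (dry run);
# call it as `RUN= copy_keys` to actually execute ssh-copy-id.
copy_keys() {
  for host in $HOSTS; do
    ${RUN-echo} ssh-copy-id "$host"
  done
}

copy_keys
```

Run it as the service user (hdfs here) after ssh-keygen, so that the key being copied belongs to that user.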

2) On the master node (hadoop102), edit the $HADOOP_HOME/sbin/start-dfs.sh script and add the following environment variables at the top.

[root@hadoop102 ~]# vim $HADOOP_HOME/sbin/start-dfs.sh

Add the following lines at the top:

HDFS_DATANODE_USER=hdfs

HDFS_NAMENODE_USER=hdfs

HDFS_SECONDARYNAMENODE_USER=hdfs

Note: $HADOOP_HOME/sbin/stop-dfs.sh needs the same environment variables at the top before it can be used.
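Because the same three lines go into both start-dfs.sh and stop-dfs.sh, the edit can also be scripted. The sketch below is one possible approach, not the article's own method: `add_dfs_users` is a hypothetical helper that inserts the variables directly after the first line (assumed to be the shebang) and does nothing if they are already present.

```shell
# add_dfs_users SCRIPT: insert the HDFS_*_USER variables after line 1 of SCRIPT.
add_dfs_users() {
  script="$1"
  # Skip if the variables are already present, so re-running is harmless.
  grep -q '^HDFS_NAMENODE_USER=' "$script" && return 0
  tmp="$(mktemp)"
  {
    head -n 1 "$script"                      # keep the first line (shebang) on top
    echo 'HDFS_DATANODE_USER=hdfs'
    echo 'HDFS_NAMENODE_USER=hdfs'
    echo 'HDFS_SECONDARYNAMENODE_USER=hdfs'
    tail -n +2 "$script"                     # rest of the original script
  } > "$tmp"
  cat "$tmp" > "$script"                     # overwrite in place; keeps owner and mode
  rm -f "$tmp"
}

# Example:
# add_dfs_users "$HADOOP_HOME/sbin/start-dfs.sh"
# add_dfs_users "$HADOOP_HOME/sbin/stop-dfs.sh"
```

Writing back with `cat` rather than `mv` leaves the script's executable bit and ownership untouched.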

3) Run the cluster start script as root to start the HDFS cluster.

[root@hadoop102 ~]# start-dfs.sh

Toward the end of startup, a permission error may be reported for /etc/security/keytab/keystore.

To resolve it, run the following command on hadoop103 and hadoop104:

chown -R root:hadoop /etc/security/keytab/keystore

3. View the HDFS web UI

The address is https://hadoop102:9871

Change Access Permissions on Specific HDFS Paths

| Filesystem | Path | User:Group | Permissions |
|---|---|---|---|
| hdfs | / | hdfs:hadoop | drwxr-xr-x |
| hdfs | /tmp | hdfs:hadoop | drwxrwxrwxt |
| hdfs | /user | hdfs:hadoop | drwxrwxr-x |
| hdfs | yarn.nodemanager.remote-app-log-dir | yarn:hadoop | drwxrwxrwxt |
| hdfs | mapreduce.jobhistory.intermediate-done-dir | mapred:hadoop | drwxrwxrwxt |
| hdfs | mapreduce.jobhistory.done-dir | mapred:hadoop | drwxr-x--- |

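Note:

If any of the paths above does not exist, create it manually.

1) Create the hdfs/hadoop principal. Run the following command and enter the password (123456) when prompted.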

[root@hadoop102 ~]# kadmin.local -q "addprinc hdfs/hadoop"

2) Authenticate as the hdfs/hadoop principal. Run the following command and enter the password (123456) when prompted.

[root@hadoop102 ~]# kinit hdfs/hadoop

3) Change the owner and permissions of the listed paths as required above.

(1) Change the /, /tmp, and /user paths

[root@hadoop102 ~]# hadoop fs -chown hdfs:hadoop / /tmp /user

[root@hadoop102 ~]# hadoop fs -chmod 755 /

[root@hadoop102 ~]# hadoop fs -chmod 1777 /tmp

[root@hadoop102 ~]# hadoop fs -chmod 775 /user
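The table gives permissions in symbolic form while the commands use octal modes, so a quick way to translate between them can help when checking the result with `hadoop fs -ls`. The sketch below uses a hypothetical helper, `mode_to_symbolic`, and handles only plain modes and the sticky bit (leading digit 1). Note that a listing shows the sticky bit as a trailing `t` in place of the final `x`, which is what the table's `drwxrwxrwxt` notation refers to.

```shell
# mode_to_symbolic MODE: print the directory permission string for an octal mode.
# Supports three-digit modes and four-digit modes whose first digit is 1 (sticky).
mode_to_symbolic() {
  mode="$1"
  special=0
  # A four-digit mode carries the special bits in its first digit.
  if [ "${#mode}" -eq 4 ]; then
    special="${mode%???}"
    mode="${mode#?}"
  fi
  out=""
  for digit in $(echo "$mode" | sed 's/./& /g'); do
    case "$digit" in
      0) out="${out}---" ;; 1) out="${out}--x" ;; 2) out="${out}-w-" ;;
      3) out="${out}-wx" ;; 4) out="${out}r--" ;; 5) out="${out}r-x" ;;
      6) out="${out}rw-" ;; 7) out="${out}rwx" ;;
    esac
  done
  # Sticky bit: the last character becomes t (or T when others lack execute).
  if [ "$special" = 1 ]; then
    case "$out" in
      *x) out="${out%x}t" ;;
      *)  out="${out%-}T" ;;
    esac
  fi
  echo "d$out"
}

mode_to_symbolic 755    # /
mode_to_symbolic 1777   # /tmp
mode_to_symbolic 775    # /user
```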

(2) The yarn.nodemanager.remote-app-log-dir parameter is set in yarn-site.xml; its default value is /tmp/logs.

[root@hadoop102 ~]# hadoop fs -chown yarn:hadoop /tmp/logs

[root@hadoop102 ~]# hadoop fs -chmod 1777 /tmp/logs

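(3) The mapreduce.jobhistory.intermediate-done-dir parameter is set in mapred-site.xml; its default value is /tmp/hadoop-yarn/staging/history/done_intermediate. Every parent directory of this path (except /tmp) must be owned by mapred, belong to the hadoop group, and have mode 770.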

[root@hadoop102 ~]# hadoop fs -chown -R mapred:hadoop /tmp/hadoop-yarn/staging/history/done_intermediate

[root@hadoop102 ~]# hadoop fs -chmod -R 1777 /tmp/hadoop-yarn/staging/history/done_intermediate

[root@hadoop102 ~]# hadoop fs -chown mapred:hadoop /tmp/hadoop-yarn/staging/history/

[root@hadoop102 ~]# hadoop fs -chown mapred:hadoop /tmp/hadoop-yarn/staging/

[root@hadoop102 ~]# hadoop fs -chown mapred:hadoop /tmp/hadoop-yarn/

[root@hadoop102 ~]# hadoop fs -chmod 770 /tmp/hadoop-yarn/staging/history/

[root@hadoop102 ~]# hadoop fs -chmod 770 /tmp/hadoop-yarn/staging/

[root@hadoop102 ~]# hadoop fs -chmod 770 /tmp/hadoop-yarn/

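(4) The mapreduce.jobhistory.done-dir parameter is set in mapred-site.xml; its default value is /tmp/hadoop-yarn/staging/history/done. Every parent directory of this path (except /tmp) must be owned by mapred, belong to the hadoop group, and have mode 770.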

[root@hadoop102 ~]# hadoop fs -chown -R mapred:hadoop /tmp/hadoop-yarn/staging/history/done

[root@hadoop102 ~]# hadoop fs -chmod -R 750 /tmp/hadoop-yarn/staging/history/done

[root@hadoop102 ~]# hadoop fs -chown mapred:hadoop /tmp/hadoop-yarn/staging/history/

[root@hadoop102 ~]# hadoop fs -chown mapred:hadoop /tmp/hadoop-yarn/staging/

[root@hadoop102 ~]# hadoop fs -chown mapred:hadoop /tmp/hadoop-yarn/

[root@hadoop102 ~]# hadoop fs -chmod 770 /tmp/hadoop-yarn/staging/history/

[root@hadoop102 ~]# hadoop fs -chmod 770 /tmp/hadoop-yarn/staging/

[root@hadoop102 ~]# hadoop fs -chmod 770 /tmp/hadoop-yarn/
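Steps (3) and (4) apply the same owner and mode to every parent directory between the target and /tmp. If the history directories are configured somewhere other than the defaults, that list of parents can be derived rather than typed by hand. A small sketch, in which `parent_dirs` is a hypothetical helper and the loop only prints the commands:

```shell
# parent_dirs PATH STOP: print each ancestor of PATH, nearest first,
# stopping before STOP (STOP itself is not printed).
parent_dirs() {
  dir="$(dirname "$1")"
  while [ "$dir" != "$2" ] && [ "$dir" != "/" ]; do
    echo "$dir"
    dir="$(dirname "$dir")"
  done
}

# Print (not run) the commands for the ancestors of the done directory.
for d in $(parent_dirs /tmp/hadoop-yarn/staging/history/done /tmp); do
  echo "hadoop fs -chown mapred:hadoop $d"
  echo "hadoop fs -chmod 770 $d"
done
```

For the default paths this yields the same three directories as the commands above: history, staging, and hadoop-yarn.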

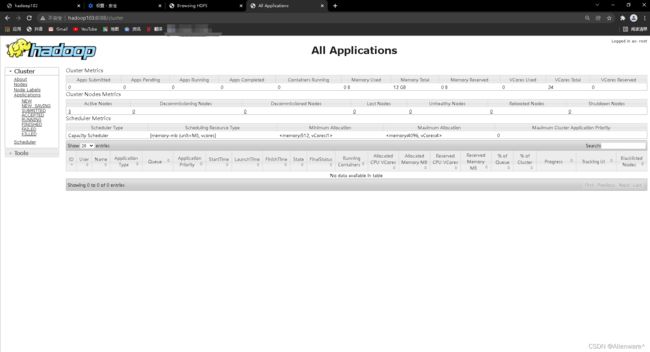

Start Yarn

1. Starting daemons individually

Start the ResourceManager

[root@hadoop103 ~]# sudo -i -u yarn yarn --daemon start resourcemanager

Start the NodeManagers

[root@hadoop102 ~]# sudo -i -u yarn yarn --daemon start nodemanager

[root@hadoop103 ~]# sudo -i -u yarn yarn --daemon start nodemanager

[root@hadoop104 ~]# sudo -i -u yarn yarn --daemon start nodemanager

2. Starting the whole cluster

1) On the Yarn master node (hadoop103), set up passwordless SSH login from the yarn user to all nodes.

[root@hadoop103 ~]# su yarn

[yarn@hadoop103 root]$ ssh-keygen

[yarn@hadoop103 root]$ ssh-copy-id hadoop102

[yarn@hadoop103 root]$ ssh-copy-id hadoop103

[yarn@hadoop103 root]$ ssh-copy-id hadoop104

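The SSH password for the yarn user is yarn.

2) On the master node (hadoop103), edit $HADOOP_HOME/sbin/start-yarn.sh and add the following environment variables at the top.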

[root@hadoop103 ~]# vim $HADOOP_HOME/sbin/start-yarn.sh

Add the following lines at the top:

YARN_RESOURCEMANAGER_USER=yarn

YARN_NODEMANAGER_USER=yarn

Note: stop-yarn.sh needs the same environment variables at the top before it can be used.

3) Run the $HADOOP_HOME/sbin/start-yarn.sh script as root to start the Yarn cluster.

[root@hadoop103 ~]# start-yarn.sh

3. Access the Yarn web UI

The address is http://hadoop103:8088

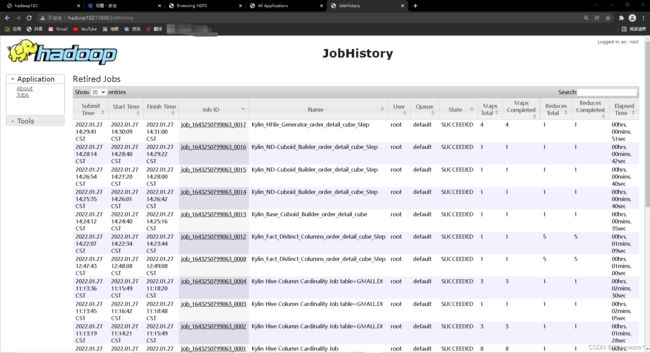

Start HistoryServer

1. Start the history server

[root@hadoop102 ~]# sudo -i -u mapred mapred --daemon start historyserver

2. Stop the history server

[root@hadoop102 ~]# sudo -i -u mapred mapred --daemon stop historyserver