Deploying Kubernetes 1.20.6 from Binaries

Cluster role planning

| Role | IP | Hostname | Components |
|---|---|---|---|
| Master | 10.4.7.30 | bst-30 | apiserver, controller-manager, scheduler, etcd, docker, keepalived, nginx |
| Master | 10.4.7.31 | bst-31 | apiserver, controller-manager, scheduler, etcd, docker, keepalived, nginx |
| Master | 10.4.7.32 | bst-32 | apiserver, controller-manager, scheduler, etcd, docker |
| Node | 10.4.7.40 | bst-40 | kubelet, kube-proxy, docker, calico, coredns |
| VIP | 10.4.7.100 | | |

Cluster network planning:

Node network: 10.4.7.0/24

Service network: 192.168.0.0/16

Pod network: 172.7.0.0/16

Initialization

1. Set the hostname

Run on every machine

[root@localhost ~]# hostnamectl set-hostname bst-30 && bash

[root@bst-30 ~]#

2. Edit the hosts file and set up passwordless SSH between the machines

Run on every machine

[root@bst-40 ~]# cat /etc/hosts

10.4.7.30 bst-30

10.4.7.31 bst-31

10.4.7.32 bst-32

10.4.7.40 bst-40

# Set up passwordless login

[root@bst-30 ~]# ssh-keygen -t rsa

Generating public/private rsa key pair.

Enter file in which to save the key (/root/.ssh/id_rsa):

Created directory '/root/.ssh'.

Enter passphrase (empty for no passphrase):

Enter same passphrase again:

Your identification has been saved in /root/.ssh/id_rsa.

Your public key has been saved in /root/.ssh/id_rsa.pub.

The key fingerprint is:

SHA256:YioehXkzyrH4k6XKMJoWc+kZxeL1cGf4zHyWVOvBEfo root@bst-30

The key's randomart image is:

+---[RSA 2048]----+

| .. |

| .o |

| . . .o o |

| + = o o ..+ |

| = X * S . oE. |

| = @.= o = + . |

|+ @++ o |

|+*+= |

|=oo. |

+----[SHA256]-----+

[root@bst-30 ~]# ssh-copy-id -i .ssh/id_rsa.pub bst-31

[root@bst-30 ~]# ssh-copy-id -i .ssh/id_rsa.pub bst-32

[root@bst-30 ~]# ssh-copy-id -i .ssh/id_rsa.pub bst-30

[root@bst-30 ~]# ssh-copy-id -i .ssh/id_rsa.pub bst-40

/usr/bin/ssh-copy-id: INFO: Source of key(s) to be installed: ".ssh/id_rsa.pub"

The authenticity of host 'bst-40 (10.4.7.40)' can't be established.

ECDSA key fingerprint is SHA256:mpOVdMGjUG1FAg202dMqBsEDkI9UUDQ5hGpsyR5ohG8.

ECDSA key fingerprint is MD5:a9:c4:d1:16:8a:56:52:63:19:1e:22:5d:45:34:10:e1.

Are you sure you want to continue connecting (yes/no)? yes

/usr/bin/ssh-copy-id: INFO: attempting to log in with the new key(s), to filter out any that are already installed

/usr/bin/ssh-copy-id: INFO: 1 key(s) remain to be installed -- if you are prompted now it is to install the new keys

root@bst-40's password:

Number of key(s) added: 1

Now try logging into the machine, with: "ssh 'bst-40'"

and check to make sure that only the key(s) you wanted were added.

3. Disable the firewall and SELinux

systemctl stop firewalld && systemctl disable firewalld

[root@bst-40 ~]# getenforce

Disabled
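The output above only shows the end state. As a minimal sketch of how SELinux could be switched off persistently, assuming the usual CentOS config path `/etc/selinux/config` (the helper name `disable_selinux_config` is hypothetical and not part of the original guide):

```shell
# Hypothetical helper: set SELINUX=disabled in an SELinux config file.
# Defaults to the usual CentOS path; pass another path to try it on a copy.
disable_selinux_config() (
  conf=${1:-/etc/selinux/config}
  # Rewrite only the active SELINUX= line; comment lines are left alone.
  sed -i -E 's/^SELINUX=(enforcing|permissive)$/SELINUX=disabled/' "$conf"
)

# On a real host (the config change takes full effect after a reboot):
#   setenforce 0
#   disable_selinux_config
```

`setenforce 0` only switches to permissive mode for the running system, which is why the config file is edited as well.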

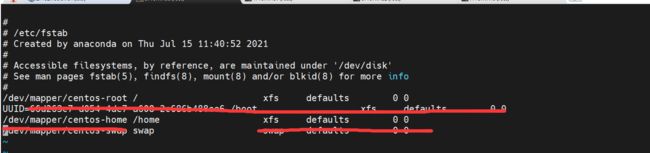

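4. Disable swap

On cloned virtual machines, remove the UUID entry

# Disable temporarily

swapoff -a

# Disable permanently: delete the two swap-related lines from /etc/fstab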
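The two lines to delete are not shown in the text. A cautious alternative, sketched here under the assumption that the swap entries live in `/etc/fstab` with `swap` as their filesystem type, is to comment them out instead of deleting them (the helper name is hypothetical):

```shell
# Hypothetical helper: comment out every active swap entry in an fstab file.
disable_swap_in_fstab() (
  fstab=${1:-/etc/fstab}
  # Match uncommented lines containing a whitespace-delimited "swap" field
  # and prefix them with '#', leaving all other entries untouched.
  sed -i -E '/^[^#].*[[:space:]]swap[[:space:]]/ s/^/#/' "$fstab"
)

# On a real host:
#   swapoff -a && disable_swap_in_fstab
```

Commenting keeps the original entry around in case swap has to be restored later.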

5. Adjust kernel parameters

# Load the module

modprobe br_netfilter

# Check the module

lsmod |grep br_netfilter
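The contents of the author's `/etc/sysctl.d/k8s.conf` are not recoverable from the text. As an assumption, the sketch below writes the three settings that Kubernetes setups on a bridged network commonly use (bridge traffic visible to iptables, plus IP forwarding); the helper name and the exact values are illustrative, not the author's:

```shell
# Hypothetical helper: write commonly used Kubernetes sysctl settings.
# ASSUMPTION: these three keys are typical defaults, not taken from the source.
write_k8s_sysctl() (
  conf=${1:-/etc/sysctl.d/k8s.conf}
  cat > "$conf" <<EOF
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
net.ipv4.ip_forward = 1
EOF
)

# On a real host, after br_netfilter is loaded:
#   write_k8s_sysctl && sysctl --system
```

The `net.bridge.*` keys only exist once `br_netfilter` is loaded, which is why the module is loaded first.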

# Set kernel parameters

cat > /etc/sysctl.d/k8s.conf <<EOF

...

EOF

6. Configure time synchronization

yum install ntpdate -y

systemctl start ntpdate.service && systemctl enable ntpdate.service

# Sync against a network time source

ntpdate cn.pool.ntp.org

# Make the time sync a scheduled job (hourly, on the hour)

crontab -e

0 */1 * * * /usr/sbin/ntpdate cn.pool.ntp.org

# Restart the crond service

service crond restart

7. Install iptables

yum install iptables-services -y

# Stop it for now; enable it again once the cluster is up

service iptables stop && systemctl disable iptables

# Flush the firewall rules

iptables -F

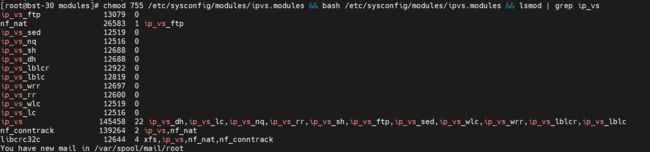

8. Enable IPVS

#/etc/sysconfig/modules/ipvs.modules

#!/bin/bash

ipvs_modules="ip_vs ip_vs_lc ip_vs_wlc ip_vs_rr ip_vs_wrr ip_vs_lblc ip_vs_lblcr ip_vs_dh ip_vs_sh ip_vs_nq ip_vs_sed ip_vs_ftp nf_conntrack"

for kernel_module in ${ipvs_modules}; do

  # Load a module only if modinfo can find it on this kernel

  /sbin/modinfo -F filename ${kernel_module} > /dev/null 2>&1

  if [ $? -eq 0 ]; then

    /sbin/modprobe ${kernel_module}

  fi

done

chmod 755 /etc/sysconfig/modules/ipvs.modules && bash /etc/sysconfig/modules/ipvs.modules && lsmod | grep ip_vs

# scp the script to the other machines and run it there (strictly speaking, only the node needs IPVS loaded)

[root@bst-30 modules]# scp ipvs.modules bst-31:/etc/sysconfig/modules/

ipvs.modules 100% 320 314.9KB/s 00:00

[root@bst-30 modules]# scp ipvs.modules bst-32:/etc/sysconfig/modules/

ipvs.modules 100% 320 138.5KB/s 00:00

[root@bst-30 modules]# scp ipvs.modules bst-40:/etc/sysconfig/modules/

ipvs.modules 100% 320 121.9KB/s 00:00

9. Install base packages

yum install -y yum-utils device-mapper-persistent-data lvm2 wget net-tools nfs-utils lrzsz gcc gcc-c++ make cmake libxml2-devel openssl-devel curl curl-devel unzip sudo ntp libaio-devel wget vim ncurses-devel autoconf automake zlib-devel python-devel epel-release openssh-server socat ipvsadm conntrack ntpdate telnet rsync

10. Install docker-ce (node)

yum -y install yum-utils

yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

yum -y install docker-ce

systemctl start docker && systemctl enable docker

vi /etc/docker/daemon.json

{

"registry-mirrors":["https://rsbud4vc.mirror.aliyuncs.com","https://registry.docker-cn.com","https://docker.mirrors.ustc.edu.cn","https://dockerhub.azk8s.cn","http://hub-mirror.c.163.com","http://qtid6917.mirror.aliyuncs.com", "https://rncxm540.mirror.aliyuncs.com"],

"exec-opts": ["native.cgroupdriver=systemd"],

"storage-driver": "overlay2",

"insecure-registries": ["registry.access.redhat.com","quay.io","harbor.zq.com"],

"bip": "172.7.40.1/24",

"live-restore": true

}

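# Restart docker

systemctl daemon-reload && systemctl restart docker

This sets docker's cgroup driver to systemd (the default is cgroupfs). The kubelet in this guide is configured with cgroupDriver systemd, and the two must match.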

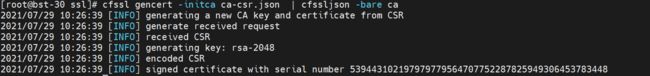

Install the certificate signing tools

cfssl:https://github.com/cloudflare/cfssl/releases

[root@bst-30 cfssl]# cp cfssl_linux-amd64 /usr/local/bin/cfssl

[root@bst-30 cfssl]# cp cfssl-certinfo_1.5.0_linux_amd64 /usr/local/bin/cfssl-certinfo

[root@bst-30 cfssl]# cp cfssljson_1.5.0_linux_amd64 /usr/local/bin/cfssljson

# Make them executable

[root@bst-30 cfssl]# chmod +x /usr/local/bin/cfssl*

[root@bst-30 cfssl]# ll /usr/local/bin/cfssl*

-rwxr-xr-x 1 root root 10376657 Jul 29 10:18 /usr/local/bin/cfssl

-rwxr-xr-x 1 root root 12021008 Jul 29 10:19 /usr/local/bin/cfssl-certinfo

-rwxr-xr-x 1 root root 9663504 Jul 29 10:19 /usr/local/bin/cfssljson

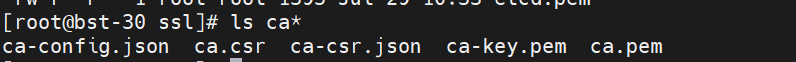

Configure the CA certificate

(bst-30:/opt/ssl)

Create the CA certificate request file ca-csr.json

#ca-csr.json

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Beijing",

"O": "k8s",

"OU": "system"

}

],

"ca": {

"expiry": "87600h"

}

}

[root@bst-30 ssl]# cfssl gencert -initca ca-csr.json | cfssljson -bare ca

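Notes:

CN: Common Name. kube-apiserver extracts this field from a certificate and uses it as the user name of the request. Browsers use it to check that a site is legitimate. For an SSL certificate it is usually the site's domain name, for a code-signing certificate the name of the applying organization, and for a client certificate the applicant's name.

O: Organization. kube-apiserver extracts this field and uses it as the group the requesting user belongs to. For SSL and code-signing certificates it is the name of the applying organization; for an organizational client certificate it is the name of the applicant's organization.

L: city

ST: state or province

C: country, given only as the two-letter country code, e.g. CN for China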
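To see how these fields end up in an issued certificate, the subject can be printed with `openssl`. This is a small sketch assuming `openssl` is installed; the wrapper name `cert_subject` is hypothetical:

```shell
# Hypothetical helper: print the subject line (C, L, O, OU, CN ...) of a PEM certificate.
cert_subject() {
  openssl x509 -in "$1" -noout -subject
}

# Example, once ca.pem exists in the current directory:
#   cert_subject ca.pem
```

The exact layout of the subject line differs between OpenSSL versions, so treat the output as something to read rather than to parse.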

Create the CA signing configuration ca-config.json

#ca-config.json

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"kubernetes": {

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

],

"expiry": "87600h"

}

}

}

}

Deploy the etcd cluster

(bst-30, bst-31, bst-32)

etcd:https://github.com/etcd-io/etcd/releases/tag/v3.4.13

Create the working directory

mkdir -p /etc/etcd/ssl

Generate the etcd certificate

Create the etcd certificate request etcd-csr.json

#etcd-csr.json

{

"CN": "etcd",

"hosts": [

"127.0.0.1",

"10.4.7.30",

"10.4.7.31",

"10.4.7.32",

"10.4.7.100"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [{

"C": "CN",

"L": "Beijing",

"O": "k8s",

"OU": "system"

}]

}

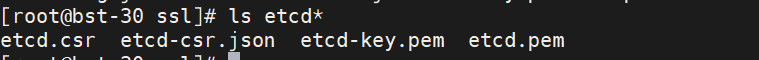

[root@bst-30 ssl]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes etcd-csr.json | cfssljson -bare etcd

Copy the certificate files

[root@bst-30 ssl]# scp ca.pem etcd-key.pem etcd.pem bst-30:/etc/etcd/ssl/

ca.pem 100% 1310 1.3MB/s 00:00

etcd-key.pem 100% 1675 1.9MB/s 00:00

etcd.pem 100% 1395 1.9MB/s 00:00

[root@bst-30 ssl]# scp ca.pem etcd-key.pem etcd.pem bst-31:/etc/etcd/ssl/

[root@bst-30 ssl]# scp ca.pem etcd-key.pem etcd.pem bst-32:/etc/etcd/ssl/

Copy the binaries

[root@bst-30 etcd-v3.4.13-linux-amd64]# cp etcd* /usr/local/bin/

[root@bst-30 etcd-v3.4.13-linux-amd64]# scp etcd* bst-31:/usr/local/bin/

[root@bst-30 etcd-v3.4.13-linux-amd64]# scp etcd* bst-32:/usr/local/bin/

Create the configuration file

#/etc/etcd/etcd.conf

#[Member]

ETCD_NAME="etcd1"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://10.4.7.30:2380"

ETCD_LISTEN_CLIENT_URLS="https://10.4.7.30:2379,http://127.0.0.1:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://10.4.7.30:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://10.4.7.30:2379"

ETCD_INITIAL_CLUSTER="etcd1=https://10.4.7.30:2380,etcd2=https://10.4.7.31:2380,etcd3=https://10.4.7.32:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

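ETCD_NAME: node name, unique within the cluster

ETCD_DATA_DIR: data directory

ETCD_LISTEN_PEER_URLS: listen address for cluster (peer) traffic

ETCD_LISTEN_CLIENT_URLS: listen address for client access

ETCD_INITIAL_ADVERTISE_PEER_URLS: peer address advertised to the cluster

ETCD_ADVERTISE_CLIENT_URLS: client address advertised to clients

ETCD_INITIAL_CLUSTER: addresses of the cluster members

ETCD_INITIAL_CLUSTER_TOKEN: cluster token

ETCD_INITIAL_CLUSTER_STATE: state when joining; new means a new cluster, existing means joining an existing one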
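The file shown is the one for bst-30; on the other members only ETCD_NAME and the node's own IP change. A minimal sketch that renders the file per node (the function name `render_etcd_conf` is hypothetical; the names etcd1 to etcd3 and the IPs come from ETCD_INITIAL_CLUSTER above):

```shell
# Hypothetical helper: print /etc/etcd/etcd.conf for one member.
# $1 = member name (etcd1|etcd2|etcd3), $2 = that member's IP.
render_etcd_conf() (
  name=$1
  ip=$2
  cat <<EOF
#[Member]
ETCD_NAME="$name"
ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
ETCD_LISTEN_PEER_URLS="https://$ip:2380"
ETCD_LISTEN_CLIENT_URLS="https://$ip:2379,http://127.0.0.1:2379"
#[Clustering]
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://$ip:2380"
ETCD_ADVERTISE_CLIENT_URLS="https://$ip:2379"
ETCD_INITIAL_CLUSTER="etcd1=https://10.4.7.30:2380,etcd2=https://10.4.7.31:2380,etcd3=https://10.4.7.32:2380"
ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
ETCD_INITIAL_CLUSTER_STATE="new"
EOF
)

# Example, run on bst-31:
#   render_etcd_conf etcd2 10.4.7.31 > /etc/etcd/etcd.conf
```

The member name must match the key used for that IP in ETCD_INITIAL_CLUSTER, otherwise the member will not join.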

mkdir -p /var/lib/etcd/default.etcd

Create the systemd unit file

#/usr/lib/systemd/system/etcd.service

[Unit]

Description=Etcd Server

After=network.target

After=network-online.target

Wants=network-online.target

[Service]

Type=notify

EnvironmentFile=-/etc/etcd/etcd.conf

WorkingDirectory=/var/lib/etcd/

ExecStart=/usr/local/bin/etcd \

--cert-file=/etc/etcd/ssl/etcd.pem \

--key-file=/etc/etcd/ssl/etcd-key.pem \

--trusted-ca-file=/etc/etcd/ssl/ca.pem \

--peer-cert-file=/etc/etcd/ssl/etcd.pem \

--peer-key-file=/etc/etcd/ssl/etcd-key.pem \

--peer-trusted-ca-file=/etc/etcd/ssl/ca.pem \

--peer-client-cert-auth \

--client-cert-auth

Restart=on-failure

RestartSec=5

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

Start the etcd cluster and check it

systemctl start etcd && systemctl enable etcd

/usr/local/bin/etcdctl --write-out=table --cacert=/etc/etcd/ssl/ca.pem --cert=/etc/etcd/ssl/etcd.pem --key=/etc/etcd/ssl/etcd-key.pem --endpoints=https://10.4.7.30:2379,https://10.4.7.31:2379,https://10.4.7.32:2379 endpoint health

Install the Kubernetes components

1.20.6:https://dl.k8s.io/v1.20.6/kubernetes-server-linux-amd64.tar.gz

Generate the cluster certificates

Create the apiserver certificate request kube-apiserver-csr.json

#kube-apiserver-csr.json

{

"CN": "kubernetes",

"hosts": [

"127.0.0.1",

"10.4.7.30",

"10.4.7.31",

"10.4.7.32",

"10.4.7.33",

"10.4.7.100",

"192.168.0.1",

"kubernetes",

"kubernetes.default",

"kubernetes.default.svc",

"kubernetes.default.svc.cluster",

"kubernetes.default.svc.cluster.local"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Beijing",

"O": "k8s",

"OU": "system"

}

]

}

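If the hosts field is not empty, it must list every IP or domain name that is allowed to use the certificate. Because this certificate is used by the Kubernetes master cluster, it has to include the IPs of all master nodes as well as the first IP of the service network (normally the first address of the range given to kube-apiserver via service-cluster-ip-range, here 192.168.0.1).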
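The "first IP of the service network" is the network address plus one. A small sketch of that arithmetic in plain shell (the function name `first_service_ip` is hypothetical; IPv4 only):

```shell
# Hypothetical helper: print the first usable address of an IPv4 CIDR,
# i.e. the address the built-in "kubernetes" Service receives.
first_service_ip() (
  cidr=$1
  prefix=${cidr#*/}
  IFS=.
  set -- ${cidr%/*}                      # split the dotted quad into $1..$4
  ip=$(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
  mask=$(( (4294967295 << (32 - prefix)) & 4294967295 ))
  n=$(( (ip & mask) + 1 ))               # network address + 1
  echo "$(( (n >> 24) & 255 )).$(( (n >> 16) & 255 )).$(( (n >> 8) & 255 )).$(( n & 255 ))"
)

# first_service_ip 192.168.0.0/16   # prints 192.168.0.1
```

If service-cluster-ip-range is changed, the matching entry in the hosts list has to change with it, or in-cluster clients will reject the apiserver certificate.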

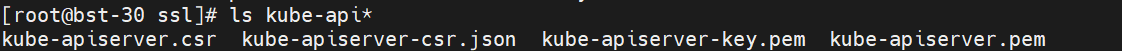

Generate the apiserver certificate

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-apiserver-csr.json | cfssljson -bare kube-apiserver

Copy the certificate files

mkdir -p /etc/kubernetes/ssl

[root@bst-30 ssl]# scp ca*.pem kube-apiserver*.pem bst-30:/etc/kubernetes/ssl/

ca-key.pem 100% 1675 1.1MB/s 00:00

ca.pem 100% 1310 1.1MB/s 00:00

kube-apiserver-key.pem 100% 1675 1.5MB/s 00:00

kube-apiserver.pem 100% 1586 1.7MB/s 00:00

[root@bst-30 ssl]# scp ca*.pem kube-apiserver*.pem bst-31:/etc/kubernetes/ssl/

[root@bst-30 ssl]# scp ca*.pem kube-apiserver*.pem bst-32:/etc/kubernetes/ssl/

Copy the binaries

[root@bst-30 bin]# ls

apiextensions-apiserver kube-apiserver kube-controller-manager kubectl kube-proxy.docker_tag kube-scheduler.docker_tag

kubeadm kube-apiserver.docker_tag kube-controller-manager.docker_tag kubelet kube-proxy.tar kube-scheduler.tar

kube-aggregator kube-apiserver.tar kube-controller-manager.tar kube-proxy kube-scheduler mounter

[root@bst-30 bin]# rsync -vaz kube-apiserver kube-scheduler kube-controller-manager kubectl bst-30:/usr/local/bin/

sending incremental file list

kube-apiserver

kube-controller-manager

kube-scheduler

kubectl

sent 81,384,468 bytes received 92 bytes 6,028,485.93 bytes/sec

total size is 314,871,808 speedup is 3.87

[root@bst-30 bin]# rsync -vaz kube-apiserver kube-scheduler kube-controller-manager kubectl bst-31:/usr/local/bin/

[root@bst-30 bin]# rsync -vaz kube-apiserver kube-scheduler kube-controller-manager kubectl bst-32:/usr/local/bin/

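Enable the TLS bootstrapping mechanism

Once the master's apiserver has TLS authentication enabled, the kubelet on every node must present a valid certificate signed by the CA that the apiserver uses before it can talk to the apiserver. With many nodes, issuing these client certificates by hand is a lot of work and makes scaling the cluster more complicated.

To simplify this, Kubernetes introduced TLS bootstrapping to issue client certificates automatically: the kubelet requests a certificate from the apiserver as a low-privilege user, and the kubelet's certificate is signed dynamically by the cluster.

Bootstrap programs exist in many systems, Linux's bootstrap for example. A bootstrap is usually configuration prepared in advance and loaded at power-on or system start to bring up a specific environment. The kubelet can likewise load such a configuration file at startup, with content similar to the following: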

apiVersion: v1

clusters: null

contexts:

- context:

    cluster: kubernetes

    user: kubelet-bootstrap

  name: default

current-context: default

kind: Config

preferences: {}

users:

- name: kubelet-bootstrap

  user: {}

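How TLS bootstrapping works

1. The role of TLS

TLS encrypts the traffic and prevents eavesdropping by a man in the middle. If the certificate is not trusted, no connection to the apiserver can be established at all, let alone permission to request anything from it.

2. The role of RBAC

Once TLS has solved the communication problem, authorization is handled by RBAC (other models such as ABAC can be used). RBAC defines which APIs a user or group (the subject) may call. Combined with TLS, the apiserver reads the CN field of the client certificate as the user name and the O field as the group.

In short: first, talking to the apiserver requires a certificate signed by the apiserver's CA, which establishes trust and allows the TLS connection; second, the certificate's CN and O fields supply the user and group that RBAC needs.

First start of the kubelet

TLS bootstrapping lets the kubelet request a certificate from the apiserver and then use it to connect. So how does the kubelet connect on its first start, when it has no certificate yet?

The apiserver configuration points to a token.csv file that defines a preset user. That user's token and the apiserver's CA certificate are written into the bootstrap.kubeconfig file the kubelet uses. On its first request, the kubelet uses the CA in bootstrap.kubeconfig to establish a trusted TLS connection to the apiserver, and uses the user token in bootstrap.kubeconfig to declare its identity for RBAC authorization.

token.csv format:

3940fd7fbb391d1b4d861ad17a1f0613,kubelet-bootstrap,10001,"system:kubelet-bootstrap"

On first start the kubelet may report a 401 error saying it is not allowed to access the apiserver. By default the kubelet declares its identity with the preset user token in bootstrap.kubeconfig and then creates a CSR, but unless we act, that user has no permissions at all, not even to create a CSR. So a ClusterRoleBinding is needed that binds the preset user kubelet-bootstrap to the built-in ClusterRole system:node-bootstrapper, allowing it to submit CSRs.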

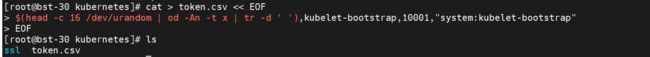

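Create the token.csv file

(needed on every master node; generate it once and copy it, so that all masters share the same token)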

#/etc/kubernetes/token.csv

cat > token.csv << EOF

$(head -c 16 /dev/urandom | od -An -t x | tr -d ' '),kubelet-bootstrap,10001,"system:kubelet-bootstrap"

EOF

# Format: token,user name,UID,group
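A malformed token.csv (for example one with typographic quotes pasted from a web page) is easy to miss. The sketch below checks a file against the shape this guide generates: a 32-character hex token, a user name, a numeric UID and a double-quoted group. The function name is hypothetical, and the check is stricter than what kube-apiserver itself requires:

```shell
# Hypothetical helper: succeed only if every line of the file looks like
#   <32 hex chars>,<user>,<numeric uid>,"<group>"
valid_token_csv() {
  # grep -v prints the lines that do NOT match; the check passes if there are none.
  ! grep -Ev '^[0-9a-f]{32},[^,]+,[0-9]+,"[^"]+"$' "$1" | grep -q .
}

# Example:
#   valid_token_csv /etc/kubernetes/token.csv && echo "token.csv looks fine"
```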

Deploy the kube-apiserver component

Create the kube-apiserver configuration file

#/etc/kubernetes/kube-apiserver.conf

KUBE_APISERVER_OPTS="--enable-admission-plugins=NamespaceLifecycle,NodeRestriction,LimitRanger,ServiceAccount,DefaultStorageClass,ResourceQuota \

--anonymous-auth=false \

--bind-address=10.4.7.30 \

--secure-port=6443 \

--advertise-address=10.4.7.30 \

--insecure-port=0 \

--authorization-mode=Node,RBAC \

--runtime-config=api/all=true \

--enable-bootstrap-token-auth \

--service-cluster-ip-range=192.168.0.0/16 \

--token-auth-file=/etc/kubernetes/token.csv \

--service-node-port-range=30000-50000 \

--tls-cert-file=/etc/kubernetes/ssl/kube-apiserver.pem \

--tls-private-key-file=/etc/kubernetes/ssl/kube-apiserver-key.pem \

--client-ca-file=/etc/kubernetes/ssl/ca.pem \

--kubelet-client-certificate=/etc/kubernetes/ssl/kube-apiserver.pem \

--kubelet-client-key=/etc/kubernetes/ssl/kube-apiserver-key.pem \

--service-account-key-file=/etc/kubernetes/ssl/ca-key.pem \

--service-account-signing-key-file=/etc/kubernetes/ssl/ca-key.pem \

--service-account-issuer=https://kubernetes.default.svc.cluster.local \

--etcd-cafile=/etc/etcd/ssl/ca.pem \

--etcd-certfile=/etc/etcd/ssl/etcd.pem \

--etcd-keyfile=/etc/etcd/ssl/etcd-key.pem \

--etcd-servers=https://10.4.7.30:2379,https://10.4.7.31:2379,https://10.4.7.32:2379 \

--enable-swagger-ui=true \

--allow-privileged=true \

--apiserver-count=3 \

--audit-log-maxage=30 \

--audit-log-maxbackup=3 \

--audit-log-maxsize=100 \

--audit-log-path=/var/log/kube-apiserver-audit.log \

--event-ttl=1h \

--alsologtostderr=true \

--logtostderr=false \

--log-dir=/var/log/kubernetes \

--v=4"

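--logtostderr: log to stderr instead of files (false here, so logs go to --log-dir)

--v: log verbosity

--log-dir: log directory

--etcd-servers: etcd cluster addresses

--bind-address: listen address

--secure-port: HTTPS port

--advertise-address: address advertised to the cluster

--allow-privileged: allow privileged containers

--service-cluster-ip-range: virtual IP range for Services

--enable-admission-plugins: admission control plugins

--authorization-mode: authorization modes; enables RBAC and the Node authorizer

--enable-bootstrap-token-auth: enable bootstrap token authentication for TLS bootstrapping

--token-auth-file: bootstrap token file

--service-node-port-range: port range allocated to NodePort Services

--kubelet-client-xxx: client certificate the apiserver uses to access the kubelet

--tls-xxx-file: apiserver HTTPS certificate

--etcd-xxxfile: certificates for connecting to the etcd cluster

--audit-log-xxx: audit logging

Create the service unit file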

#/usr/lib/systemd/system/kube-apiserver.service

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

After=etcd.service

Wants=etcd.service

[Service]

EnvironmentFile=-/etc/kubernetes/kube-apiserver.conf

ExecStart=/usr/local/bin/kube-apiserver $KUBE_APISERVER_OPTS

Restart=on-failure

RestartSec=5

Type=notify

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

Copy the configuration and unit files to the other masters, then adjust them

[root@bst-30 kubernetes]# rsync -vaz /etc/kubernetes/kube-apiserver.conf bst-31:/etc/kubernetes/

sending incremental file list

kube-apiserver.conf

sent 712 bytes received 35 bytes 498.00 bytes/sec

total size is 1,592 speedup is 2.13

[root@bst-30 kubernetes]# rsync -vaz /etc/kubernetes/kube-apiserver.conf bst-32:/etc/kubernetes/

[root@bst-30 kubernetes]# rsync -vaz /usr/lib/systemd/system/kube-apiserver.service bst-31:/usr/lib/systemd/system/

sending incremental file list

kube-apiserver.service

sent 345 bytes received 35 bytes 760.00 bytes/sec

total size is 361 speedup is 0.95

[root@bst-30 kubernetes]# rsync -vaz /usr/lib/systemd/system/kube-apiserver.service bst-32:/usr/lib/systemd/system/

# On each master, change --bind-address=10.4.7.30 and --advertise-address=10.4.7.30 to that machine's own IP
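The per-node edit can be scripted. A minimal sketch using GNU `sed` (the function name `set_apiserver_ip` is hypothetical); it rewrites only the two address flags and leaves the etcd endpoints alone:

```shell
# Hypothetical helper: point --bind-address and --advertise-address at one IP.
# $1 = path to kube-apiserver.conf, $2 = this master's IP.
set_apiserver_ip() (
  conf=$1
  ip=$2
  sed -i -E "s/--(bind|advertise)-address=[0-9.]+/--\1-address=$ip/" "$conf"
)

# Example, run on bst-31:
#   set_apiserver_ip /etc/kubernetes/kube-apiserver.conf 10.4.7.31
```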

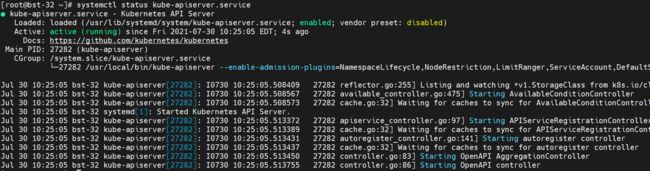

Start kube-apiserver and verify

systemctl start kube-apiserver && systemctl enable kube-apiserver

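Deploy the kubectl component

kubectl is the client tool for operating on Kubernetes resources: creating, deleting, updating, querying and so on.

To know which cluster to connect to, kubectl needs a configuration file such as /etc/kubernetes/admin.conf and accesses Kubernetes resources according to it. /etc/kubernetes/admin.conf records the cluster to access and the certificates to use.

You can set the KUBECONFIG environment variable:

export KUBECONFIG=/etc/kubernetes/admin.conf

kubectl then loads the file named by KUBECONFIG to decide which cluster's resources to manage.

Alternatively, use the method that kubeadm prints when it initializes a cluster:

cp /etc/kubernetes/admin.conf /root/.kube/config

kubectl then loads /root/.kube/config to operate on Kubernetes resources.

If KUBECONFIG is set, kubectl uses it first; if it is not set, /root/.kube/config decides which cluster is managed.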
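The lookup order just described can be written down as a few lines of shell. This is only an illustration of the rule (the function name is hypothetical); it ignores the `--kubeconfig` command-line flag, which takes precedence over both, and the colon-separated list form of KUBECONFIG:

```shell
# Hypothetical helper: print the kubeconfig path kubectl would fall back to
# when no --kubeconfig flag is given.
effective_kubeconfig() {
  if [ -n "${KUBECONFIG:-}" ]; then
    echo "$KUBECONFIG"            # the environment variable wins
  else
    echo "$HOME/.kube/config"     # otherwise the per-user default
  fi
}

# Example:
#   effective_kubeconfig
```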

Create the certificate request file

#admin-csr.json

{

"CN": "admin",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Beijing",

"O": "system:masters",

"OU": "system"

}

]

}

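Explanation: kube-apiserver later uses RBAC to authorize requests from clients (kubelet, kube-proxy, Pods and so on). It predefines some RBAC bindings; for example, the ClusterRoleBinding cluster-admin binds the group system:masters to the ClusterRole cluster-admin, which grants permission to call every kube-apiserver API. The O field sets this certificate's group to system:masters. When kubectl uses the certificate to access kube-apiserver, authentication succeeds because the certificate is signed by the CA, and because the group is the pre-authorized system:masters, access to all APIs is granted.

Note: this admin certificate is used later to generate the administrator's kubeconfig file. RBAC is the generally recommended way to control roles and permissions in Kubernetes, which treats the certificate's CN field as the User and the O field as the Group. "O": "system:masters" must be exactly system:masters, otherwise the later kubectl create clusterrolebinding fails.

With O set to system:masters, the cluster's built-in cluster-admin ClusterRoleBinding ties the system:masters group to the cluster-admin ClusterRole.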

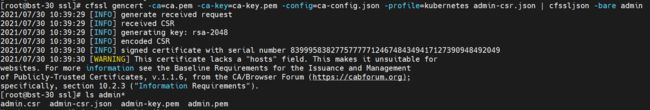

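Generate the certificate

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes admin-csr.json | cfssljson -bare admin

Copy the certificate

cp admin*.pem /etc/kubernetes/ssl/

Create the kubeconfig file

kubeconfig is kubectl's configuration file and contains everything needed to access the apiserver: the apiserver address, the CA certificate and the client's own certificate (if you get an error that the kubeconfig path cannot be found, copy the file to that path by hand; otherwise ignore this)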

kubectl config set-cluster kubernetes --certificate-authority=ca.pem --embed-certs=true --server=https://10.4.7.30:6443 --kubeconfig=kube.config

Set the client credentials

kubectl config set-credentials admin --client-certificate=admin.pem --client-key=admin-key.pem --embed-certs=true --kubeconfig=kube.config

Set the context

kubectl config set-context kubernetes --cluster=kubernetes --user=admin --kubeconfig=kube.config

Set the current context

kubectl config use-context kubernetes --kubeconfig=kube.config

mkdir ~/.kube -p

cp kube.config ~/.kube/config

授权kubernetes证书访问kubelet api权限

kubectl create clusterrolebinding kube-apiserver:kubelet-apis --clusterrole=system:kubelet-api-admin --user kubernetes

![]()

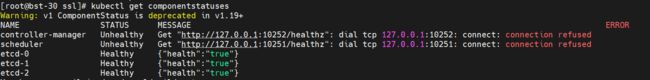

查看集群组件状态

kubectl cluster-info

[外链图片转存失败,源站可能有防盗链机制,建议将图片保存下来直接上传(img-cYyChyVM-1627742056840)(C:/Users/zhangbo/AppData/Roaming/Typora/typora-user-images/image-20210730225631154.png)]

kubectl get componentstatuses

kubectl get all --all-namespaces

同步kubectl文件到其它节点

[root@bst-31 ~]# mkdir /root/.kube/

[root@bst-32 ~]# mkdir /root/.kube/

[root@bst-30 ssl]# rsync -vaz /root/.kube/config bst-31:/root/.kube/

[root@bst-30 ssl]# rsync -vaz /root/.kube/config bst-32:/root/.kube/

# Remember to verify after copying

Configure kubectl subcommand completion

yum install -y bash-completion

[root@bst-30 ssl]# source /usr/share/bash-completion/bash_completion

[root@bst-30 ssl]# source <(kubectl completion bash)

[root@bst-30 ssl]# kubectl completion bash > ~/.kube/completion.bash.inc

[root@bst-30 ssl]# source '/root/.kube/completion.bash.inc'

[root@bst-30 ssl]# source $HOME/.bash_profile

kubectl cheat sheet: https://kubernetes.io/zh/docs/reference/kubectl/cheatsheet/

Deploy the kube-controller-manager component

Create the certificate request file

#kube-controller-manager-csr.json

{

"CN": "system:kube-controller-manager",

"key": {

"algo": "rsa",

"size": 2048

},

"hosts": [

"127.0.0.1",

"10.4.7.30",

"10.4.7.31",

"10.4.7.32",

"10.4.7.100"

],

"names": [

{

"C": "CN",

"L": "Beijing",

"O": "system:kube-controller-manager",

"OU": "system"

}

]

}

Note: the hosts list contains the IPs of all kube-controller-manager nodes. CN is system:kube-controller-manager and O is system:kube-controller-manager; the built-in ClusterRoleBinding system:kube-controller-manager grants kube-controller-manager the permissions it needs to work.

Generate the certificate

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-controller-manager-csr.json | cfssljson -bare kube-controller-manager

Copy the certificates

[root@bst-30 ssl]# scp kube-controller-manager*.pem bst-30:/etc/kubernetes/ssl/

kube-controller-manager-key.pem 100% 1675 638.8KB/s 00:00

kube-controller-manager.pem 100% 1468 750.9KB/s 00:00

[root@bst-30 ssl]# scp kube-controller-manager*.pem bst-31:/etc/kubernetes/ssl/

[root@bst-30 ssl]# scp kube-controller-manager*.pem bst-32:/etc/kubernetes/ssl/

Create the kubeconfig for kube-controller-manager

1. Set the cluster parameters

kubectl config set-cluster kubernetes --certificate-authority=ca.pem --embed-certs=true --server=https://10.4.7.30:6443 --kubeconfig=kube-controller-manager.kubeconfig

2. Set the client credentials

kubectl config set-credentials system:kube-controller-manager --client-certificate=kube-controller-manager.pem --client-key=kube-controller-manager-key.pem --embed-certs=true --kubeconfig=kube-controller-manager.kubeconfig

3. Set the context

kubectl config set-context system:kube-controller-manager --cluster=kubernetes --user=system:kube-controller-manager --kubeconfig=kube-controller-manager.kubeconfig

4. Set the current context

kubectl config use-context system:kube-controller-manager --kubeconfig=kube-controller-manager.kubeconfig
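The same four `kubectl config` calls recur for each component's kubeconfig, so they could be wrapped in one function. A sketch under the assumptions that `ca.pem` sits in the current directory and that the context is named after the user, as it is for kube-controller-manager here (the function name `make_kubeconfig` is hypothetical):

```shell
# Hypothetical helper: build a client-certificate kubeconfig in four steps.
# $1 = user, $2 = client cert, $3 = client key, $4 = output file,
# $5 = apiserver URL (defaults to the address used in this guide).
make_kubeconfig() (
  user=$1
  cert=$2
  key=$3
  out=$4
  server=${5:-https://10.4.7.30:6443}
  kubectl config set-cluster kubernetes --certificate-authority=ca.pem \
    --embed-certs=true --server="$server" --kubeconfig="$out" &&
  kubectl config set-credentials "$user" --client-certificate="$cert" \
    --client-key="$key" --embed-certs=true --kubeconfig="$out" &&
  kubectl config set-context "$user" --cluster=kubernetes --user="$user" \
    --kubeconfig="$out" &&
  kubectl config use-context "$user" --kubeconfig="$out"
)

# Example:
#   make_kubeconfig system:kube-controller-manager \
#     kube-controller-manager.pem kube-controller-manager-key.pem \
#     kube-controller-manager.kubeconfig
```

Passing the server URL as a parameter makes it easy to point the file at the VIP later instead of a single master.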

Copy it to the other nodes

[root@bst-30 ssl]# scp kube-controller-manager.kubeconfig bst-30:/etc/kubernetes/

kube-controller-manager.kubeconfig 100% 6375 3.0MB/s 00:00

[root@bst-30 ssl]# scp kube-controller-manager.kubeconfig bst-31:/etc/kubernetes/

kube-controller-manager.kubeconfig 100% 6375 3.2MB/s 00:00

[root@bst-30 ssl]# scp kube-controller-manager.kubeconfig bst-32:/etc/kubernetes/

Create the configuration file

#/etc/kubernetes/kube-controller-manager.conf

KUBE_CONTROLLER_MANAGER_OPTS=" \

--secure-port=10257 \

--bind-address=127.0.0.1 \

--kubeconfig=/etc/kubernetes/kube-controller-manager.kubeconfig \

--service-cluster-ip-range=192.168.0.0/16 \

--cluster-name=kubernetes \

--cluster-signing-cert-file=/etc/kubernetes/ssl/ca.pem \

--cluster-signing-key-file=/etc/kubernetes/ssl/ca-key.pem \

--allocate-node-cidrs=true \

--cluster-cidr=172.7.0.0/16 \

--experimental-cluster-signing-duration=87600h \

--root-ca-file=/etc/kubernetes/ssl/ca.pem \

--service-account-private-key-file=/etc/kubernetes/ssl/ca-key.pem \

--leader-elect=true \

--feature-gates=RotateKubeletServerCertificate=true \

--controllers=*,bootstrapsigner,tokencleaner \

--horizontal-pod-autoscaler-use-rest-clients=true \

--horizontal-pod-autoscaler-sync-period=10s \

--tls-cert-file=/etc/kubernetes/ssl/kube-controller-manager.pem \

--tls-private-key-file=/etc/kubernetes/ssl/kube-controller-manager-key.pem \

--use-service-account-credentials=true \

--alsologtostderr=true \

--logtostderr=false \

--log-dir=/var/log/kubernetes \

--v=2"

# --cluster-cidr=172.7.0.0/16: the pod IP range should be in the same range as docker's bip
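Whether docker's `bip` (172.7.40.1/24 in daemon.json above) really falls inside the cluster CIDR can be checked with integer arithmetic. A minimal IPv4-only sketch; both function names are hypothetical. Note that with Calico as the CNI, pods normally receive addresses from the Calico pool and not from docker0, so this checks the guide's address plan, not something Kubernetes enforces:

```shell
# Hypothetical helper: convert a dotted-quad IPv4 address to an integer.
ip_to_int() (
  IFS=.
  set -- $1
  echo "$(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))"
)

# Hypothetical helper: succeed if address $1 lies inside CIDR $2.
ip_in_cidr() (
  ip=$(ip_to_int "$1")
  net=$(ip_to_int "${2%/*}")
  mask=$(( (4294967295 << (32 - ${2#*/})) & 4294967295 ))
  [ $(( ip & mask )) -eq $(( net & mask )) ]
)

# Example:
#   ip_in_cidr 172.7.40.1 172.7.0.0/16 && echo "bip is inside the pod network"
```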

Create the unit file

#/usr/lib/systemd/system/kube-controller-manager.service

[Unit]

Description=Kubernetes Controller Manager

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=-/etc/kubernetes/kube-controller-manager.conf

ExecStart=/usr/local/bin/kube-controller-manager $KUBE_CONTROLLER_MANAGER_OPTS

Restart=on-failure

RestartSec=5

[Install]

WantedBy=multi-user.target

Copy the configuration and unit files to the nodes

[root@bst-31 kubernetes]# scp /etc/kubernetes/kube-controller-manager.conf bst-30:/etc/kubernetes/

[root@bst-31 kubernetes]# scp /etc/kubernetes/kube-controller-manager.conf bst-32:/etc/kubernetes/

[root@bst-31 kubernetes]# scp /etc/kubernetes/kube-controller-manager.conf bst-31:/etc/kubernetes/

[root@bst-31 kubernetes]# scp /usr/lib/systemd/system/kube-controller-manager.service bst-30:/usr/lib/systemd/system/

[root@bst-31 kubernetes]# scp /usr/lib/systemd/system/kube-controller-manager.service bst-31:/usr/lib/systemd/system/

[root@bst-31 kubernetes]# scp /usr/lib/systemd/system/kube-controller-manager.service bst-32:/usr/lib/systemd/system/

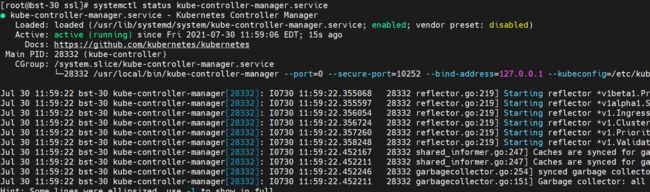

Start and check

systemctl start kube-controller-manager && systemctl enable kube-controller-manager

Deploy the kube-scheduler component

Create the certificate request file

#kube-scheduler-csr.json

{

"CN": "system:kube-scheduler",

"hosts": [

"127.0.0.1",

"10.4.7.30",

"10.4.7.31",

"10.4.7.32",

"10.4.7.100"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Beijing",

"O": "system:kube-scheduler",

"OU": "system"

}

]

}

The hosts list contains the IPs of all kube-scheduler nodes. CN is system:kube-scheduler and O is system:kube-scheduler; the built-in ClusterRoleBinding system:kube-scheduler grants kube-scheduler the permissions it needs to work.

Generate the certificate

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-scheduler-csr.json | cfssljson -bare kube-scheduler

Copy the certificates to the nodes

[root@bst-30 ssl]# scp kube-scheduler*.pem bst-30:/etc/kubernetes/ssl/

kube-scheduler-key.pem 100% 1675 1.6MB/s 00:00

kube-scheduler.pem 100% 1440 1.8MB/s 00:00

[root@bst-30 ssl]# scp kube-scheduler*.pem bst-31:/etc/kubernetes/ssl/

[root@bst-30 ssl]# scp kube-scheduler*.pem bst-32:/etc/kubernetes/ssl/

Create the kubeconfig for kube-scheduler

1. Set the cluster parameters

kubectl config set-cluster kubernetes --certificate-authority=ca.pem --embed-certs=true --server=https://10.4.7.30:6443 --kubeconfig=kube-scheduler.kubeconfig

2. Set the client credentials

kubectl config set-credentials system:kube-scheduler --client-certificate=kube-scheduler.pem --client-key=kube-scheduler-key.pem --embed-certs=true --kubeconfig=kube-scheduler.kubeconfig

3. Set the context

kubectl config set-context system:kube-scheduler --cluster=kubernetes --user=system:kube-scheduler --kubeconfig=kube-scheduler.kubeconfig

4. Set the current context

kubectl config use-context system:kube-scheduler --kubeconfig=kube-scheduler.kubeconfig

Copy kube-scheduler.kubeconfig

[root@bst-30 ssl]# rsync -avz kube-scheduler.kubeconfig bst-30:/etc/kubernetes/

sending incremental file list

kube-scheduler.kubeconfig

sent 4,228 bytes received 35 bytes 8,526.00 bytes/sec

total size is 6,299 speedup is 1.48

[root@bst-30 ssl]# rsync -avz kube-scheduler.kubeconfig bst-31:/etc/kubernetes/

[root@bst-30 ssl]# rsync -avz kube-scheduler.kubeconfig bst-32:/etc/kubernetes/

Create the configuration file

#/etc/kubernetes/kube-scheduler.conf

KUBE_SCHEDULER_OPTS="--address=127.0.0.1 \

--kubeconfig=/etc/kubernetes/kube-scheduler.kubeconfig \

--leader-elect=true \

--alsologtostderr=true \

--logtostderr=false \

--log-dir=/var/log/kubernetes \

--v=2"

Create the unit file

#/usr/lib/systemd/system/kube-scheduler.service

[Unit]

Description=Kubernetes Scheduler

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=-/etc/kubernetes/kube-scheduler.conf

ExecStart=/usr/local/bin/kube-scheduler $KUBE_SCHEDULER_OPTS

Restart=on-failure

RestartSec=5

[Install]

WantedBy=multi-user.target

Copy the configuration and unit files to the other nodes

[root@bst-30 ssl]# rsync -avz /etc/kubernetes/kube-scheduler.conf bst-31:/etc/kubernetes/

[root@bst-30 ssl]# rsync -avz /etc/kubernetes/kube-scheduler.conf bst-32:/etc/kubernetes/

[root@bst-30 ssl]# rsync -avz /usr/lib/systemd/system/kube-scheduler.service bst-31:/usr/lib/systemd/system/

[root@bst-30 ssl]# rsync -avz /usr/lib/systemd/system/kube-scheduler.service bst-32:/usr/lib/systemd/system/

Start and check

systemctl start kube-scheduler && systemctl enable kube-scheduler

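Deploy the kubelet component

kubelet: the kubelet on each node periodically calls the API server's REST interface to report its status, and the API server writes that node status to etcd. The kubelet also watches Pod information through the API server and manages the Pods on its node accordingly: creating, deleting and updating them.

Create kubelet-bootstrap.kubeconfig

Generate it under /opt/ssl on the master node

# Store the token in the variable BOOTSTRAP_TOKEN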

BOOTSTRAP_TOKEN=$(awk -F "," '{print $1}' /etc/kubernetes/token.csv)

kubectl config set-cluster kubernetes --certificate-authority=ca.pem --embed-certs=true --server=https://10.4.7.30:6443 --kubeconfig=kubelet-bootstrap.kubeconfig

kubectl config set-credentials kubelet-bootstrap --token=${BOOTSTRAP_TOKEN} --kubeconfig=kubelet-bootstrap.kubeconfig

kubectl config set-context default --cluster=kubernetes --user=kubelet-bootstrap --kubeconfig=kubelet-bootstrap.kubeconfig

kubectl config use-context default --kubeconfig=kubelet-bootstrap.kubeconfig

kubectl create clusterrolebinding kubelet-bootstrap --clusterrole=system:node-bootstrapper --user=kubelet-bootstrap

Copy the kubelet-bootstrap.kubeconfig file

[root@bst-40 ~]# mkdir /etc/kubernetes/ssl -p

[root@bst-30 ssl]# scp kubelet-bootstrap.kubeconfig bst-40:/etc/kubernetes/

Copy ca.pem to the node

[root@bst-30 ssl]# scp ca.pem bst-40:/etc/kubernetes/ssl/

Copy the binaries to /usr/local/bin

[root@bst-30 bin]# rsync -avz kubelet kube-proxy bst-40:/usr/local/bin/

Create the configuration file kubelet.json

On the node

#/etc/kubernetes/kubelet.json

{

"kind": "KubeletConfiguration",

"apiVersion": "kubelet.config.k8s.io/v1beta1",

"authentication": {

"x509": {

"clientCAFile": "/etc/kubernetes/ssl/ca.pem"

},

"webhook": {

"enabled": true,

"cacheTTL": "2m0s"

},

"anonymous": {

"enabled": false

}

},

"authorization": {

"mode": "Webhook",

"webhook": {

"cacheAuthorizedTTL": "5m0s",

"cacheUnauthorizedTTL": "30s"

}

},

"address": "10.4.7.40",

"port": 10250,

"readOnlyPort": 10255,

"cgroupDriver": "systemd",

"hairpinMode": "promiscuous-bridge",

"serializeImagePulls": false,

"featureGates": {

"RotateKubeletClientCertificate": true,

"RotateKubeletServerCertificate": true

},

"clusterDomain": "cluster.local.",

"clusterDNS": ["192.168.0.2"]

}

Create the unit file kubelet.service

pause image: k8s.gcr.io/pause:3.2

#/usr/lib/systemd/system/kubelet.service

[Unit]

Description=Kubernetes Kubelet

Documentation=https://github.com/kubernetes/kubernetes

After=docker.service

Requires=docker.service

[Service]

WorkingDirectory=/var/lib/kubelet

ExecStart=/usr/local/bin/kubelet \

--bootstrap-kubeconfig=/etc/kubernetes/kubelet-bootstrap.kubeconfig \

--cert-dir=/etc/kubernetes/ssl \

--kubeconfig=/etc/kubernetes/kubelet.kubeconfig \

--config=/etc/kubernetes/kubelet.json \

--network-plugin=cni \

--pod-infra-container-image=docker.io/dockub0314/pause:3.2 \

--alsologtostderr=true \

--logtostderr=false \

--log-dir=/var/log/kubernetes \

--v=2

Restart=on-failure

RestartSec=5

[Install]

WantedBy=multi-user.target

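--hostname-override: display name, unique within the cluster

--network-plugin: enable CNI

--kubeconfig: a path that does not exist yet; the file is generated automatically and later used to connect to the apiserver

--bootstrap-kubeconfig: used on first start to request a certificate from the apiserver

--config: configuration parameter file

--cert-dir: directory where the kubelet certificates are generated

--pod-infra-container-image: image of the container that manages the Pod network

Note: in kubelet.json, change address to each node's own IP, then start the service on every worker node

mkdir /var/lib/kubelet

mkdir /var/log/kubernetes

Start and verify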

systemctl start kubelet && systemctl enable kubelet

Approve on the master

Once the kubelet service has started successfully, approve the bootstrap request on bst-30.

[root@bst-30 bin]# kubectl get csr

approve

kubectl certificate approve node-csr-7b9mnzxDTOhwaNzEsBTpjfeSRi3mHkQLJrUFbM0axdY
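With more than one node, copying each CSR name by hand gets tedious. The sketch below approves every request whose condition is still Pending; it assumes CONDITION is the last column of `kubectl get csr`, as in the 1.20 table output, and the function name is hypothetical. Approving everything blindly is only reasonable in a lab where you know which nodes are joining:

```shell
# Hypothetical helper: approve all CSRs that are still Pending.
approve_pending_csrs() {
  # Column 1 is NAME; keep only rows whose last column is exactly "Pending".
  for csr in $(kubectl get csr --no-headers | awk '$NF == "Pending" {print $1}'); do
    kubectl certificate approve "$csr"
  done
}

# Example, run on bst-30:
#   approve_pending_csrs
```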

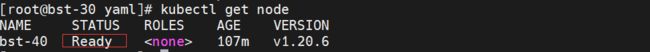

Check the node

Note: swap must be disabled on the node.

kubectl get nodes

Deploy the kube-proxy component

Create the certificate request file

#kube-proxy-csr.json

{

"CN": "system:kube-proxy",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Beijing",

"O": "k8s",

"OU": "system"

}

]

}

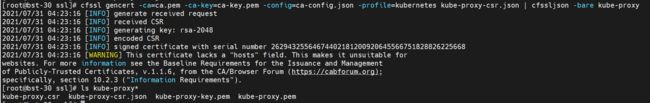

# Generate the certificate

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy

Create the kubeconfig file

kubectl config set-cluster kubernetes --certificate-authority=ca.pem --embed-certs=true --server=https://10.4.7.30:6443 --kubeconfig=kube-proxy.kubeconfig

kubectl config set-credentials kube-proxy --client-certificate=kube-proxy.pem --client-key=kube-proxy-key.pem --embed-certs=true --kubeconfig=kube-proxy.kubeconfig

kubectl config set-context default --cluster=kubernetes --user=kube-proxy --kubeconfig=kube-proxy.kubeconfig

kubectl config use-context default --kubeconfig=kube-proxy.kubeconfig

Copy kube-proxy.kubeconfig to the node

[root@bst-30 ssl]# scp kube-proxy.kubeconfig bst-40:/etc/kubernetes/

Create the configuration file

#/etc/kubernetes/kube-proxy.yaml

apiVersion: kubeproxy.config.k8s.io/v1alpha1

bindAddress: 10.4.7.40

clientConnection:

  kubeconfig: /etc/kubernetes/kube-proxy.kubeconfig

clusterCIDR: 172.7.0.0/16

healthzBindAddress: 10.4.7.40:10256

kind: KubeProxyConfiguration

metricsBindAddress: 10.4.7.40:10249

mode: "ipvs"

Create the unit file

#/usr/lib/systemd/system/kube-proxy.service

[Unit]

Description=Kubernetes Kube-Proxy Server

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]

WorkingDirectory=/var/lib/kube-proxy

ExecStart=/usr/local/bin/kube-proxy \

--config=/etc/kubernetes/kube-proxy.yaml \

--alsologtostderr=true \

--logtostderr=false \

--log-dir=/var/log/kubernetes \

--v=2

Restart=on-failure

RestartSec=5

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

mkdir -p /var/lib/kube-proxy

Start and check

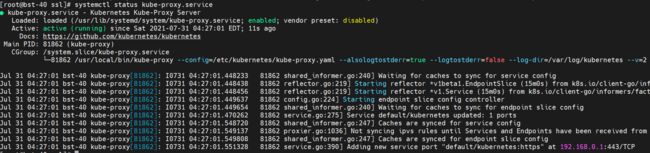

systemctl start kube-proxy && systemctl enable kube-proxy

Deploy the calico component

calico.yaml:https://gitee.com/zhang-bo-ops/fighter3-picgo/blob/master/yaml/calico.yaml

Change the following entry in it to your own pod network

[root@bst-30 yaml]# cat calico.yaml | tail -n +180 | head -n 2

- name: CALICO_IPV4POOL_CIDR

value: "172.7.0.0/16"

[root@bst-30 yaml]# kubectl apply -f calico.yaml

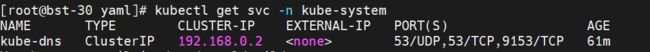

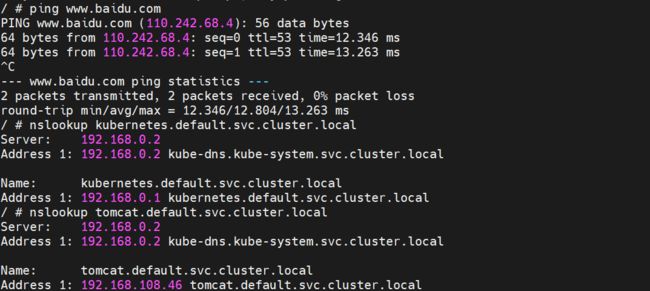

Deploy coredns

coredns.yaml:https://gitee.com/zhang-bo-ops/fighter3-picgo/blob/master/yaml/coredns.yaml

Change the following entry in it to your own value (the "clusterDNS": ["192.168.0.2"] set for the kubelet)

[root@bst-30 yaml]# cat coredns.yaml | grep clusterIP

clusterIP: 192.168.0.2

[root@bst-30 yaml]# kubectl apply -f coredns.yaml

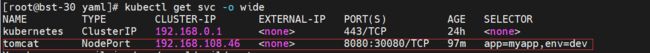

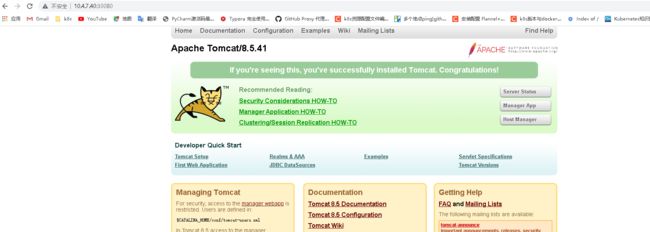

Test the whole cluster

tomcat and busybox

apiVersion: v1 # Pod belongs to the core API group, v1

kind: Pod # the resource being created is a Pod

metadata: # metadata

  name: demo-pod # Pod name

  namespace: default # namespace the Pod belongs to

  labels:

    app: myapp # label on the Pod

    env: dev # label on the Pod

spec:

  containers: # containers is a list of objects; it can hold several named entries

  - name: tomcat-pod-java # container name

    ports:

    - containerPort: 8080

    image: docker.io/tomcat:8.5-jre8-alpine # image used by the container

    imagePullPolicy: IfNotPresent

  - name: busybox

    image: docker.io/busybox:latest

    command: # command is a list; each argument below starts with a dash

    - "/bin/sh"

    - "-c"

    - "sleep 3600"

Service

apiVersion: v1

kind: Service

metadata:

  name: tomcat

spec:

  type: NodePort

  ports:

  - port: 8080

    nodePort: 30080

  selector:

    app: myapp

    env: dev

Verify coredns with busybox
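The text ends at this heading without showing the check itself. One plausible way to do it, sketched here as an assumption, is to resolve the built-in `kubernetes` Service name from the busybox container of the demo-pod defined above (the function name is hypothetical; `nslookup` in recent busybox images is known to behave inconsistently, so a failure is not always a CoreDNS problem):

```shell
# Hypothetical helper: resolve the API server's Service name from inside a Pod.
# $1 = Pod name (default demo-pod), $2 = container name (default busybox).
check_cluster_dns() (
  pod=${1:-demo-pod}
  container=${2:-busybox}
  kubectl exec "$pod" -c "$container" -- nslookup kubernetes.default.svc.cluster.local
)

# Example, run on bst-30 once demo-pod is Running:
#   check_cluster_dns
```

If resolution works, the reply should come from the DNS Service address configured as clusterDNS (192.168.0.2 in this guide).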