MapReduce 05: Framework Principles, OutputFormat Data Output

Contents

- 4. OutputFormat Data Output
  - OutputFormat implementation classes
  - Custom OutputFormat
    - Steps for a custom OutputFormat
    - Custom OutputFormat example
      - Requirement
      - Requirement analysis
      - Implementation
      - Output

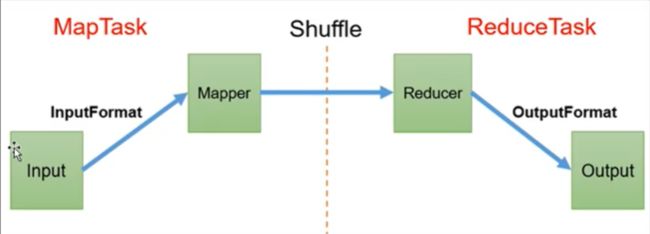

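MapReduce framework principles

1. InputFormat controls the input fed to the Mapper.

2. In the Reduce stage, the Reducer actively pulls the data that the Map stage has finished processing.

3. Shuffle sorts, partitions, compresses, and combines the data. It is the core of the framework.

4. OutputFormat controls the output of the Reducer.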

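4. OutputFormat Data Output

OutputFormat implementation classes

OutputFormat is the base of all MapReduce output, and every output format derives from it. In the new API (org.apache.hadoop.mapreduce) it is an abstract class; in the old mapred API it is an interface.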

- OutputFormat
  - FileOutputFormat
    - TextOutputFormat (the default)
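The default TextOutputFormat writes each record in a fixed layout: the key, a tab separator, the value, then a newline. A null or NullWritable key or value is skipped, and the separator only appears when both parts are present. Below is a plain-Java sketch of that layout with no Hadoop dependency. The class and method names are hypothetical, and `null` stands in for NullWritable.

```java
// Plain-Java sketch of the record layout used by TextOutputFormat (no Hadoop classes).
// Names are hypothetical; null plays the role of a null or NullWritable key/value.
class TextLineFormatSketch {
    // Hadoop's default separator is a tab (configurable through
    // mapreduce.output.textoutputformat.separator)
    static final String DEFAULT_SEPARATOR = "\t";

    static String formatRecord(Object key, Object value, String separator) {
        boolean hasKey = key != null;
        boolean hasValue = value != null;
        if (!hasKey && !hasValue) {
            return "";  // nothing is written at all
        }
        StringBuilder line = new StringBuilder();
        if (hasKey) line.append(key);
        if (hasKey && hasValue) line.append(separator);  // separator only between two parts
        if (hasValue) line.append(value);
        return line.append('\n').toString();
    }

    public static void main(String[] args) {
        System.out.print(formatRecord("http://www.baidu.com", 1, DEFAULT_SEPARATOR));
        // Value omitted, as with NullWritable: only the key and a newline
        System.out.print(formatRecord("http://www.ranan.com", null, DEFAULT_SEPARATOR));
    }
}
```

This layout is why a job that emits Text keys with NullWritable values produces plain one-URL-per-line files when it uses the default format.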

Custom OutputFormat

Use cases

Writing output to MySQL, HBase, and similar stores.

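Steps for a custom OutputFormat

1. Create a class that extends FileOutputFormat (for file-based output, as in the example below).

2. Override its getRecordWriter() method.

3. Create the RecordWriter class that getRecordWriter() returns. Give it the same key/value types as in step 1, implement write() to output the data, and implement close() to release resources.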
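The three steps can be sketched in plain Java to show how the pieces fit together. The format is only a factory, and the writer does the actual work. The framework calls write() once per record and close() once at the end. SketchOutputFormat, SketchRecordWriter and the other names are hypothetical stand-ins, not Hadoop classes. The real Hadoop signatures also take a TaskAttemptContext and may throw InterruptedException.

```java
import java.io.IOException;
import java.util.Arrays;
import java.util.List;

// Hypothetical stand-ins for Hadoop's RecordWriter / OutputFormat contract (simplified).
abstract class SketchRecordWriter<K, V> {
    abstract void write(K key, V value) throws IOException;  // once per output record
    abstract void close() throws IOException;                // once at the end of the task
}

abstract class SketchOutputFormat<K, V> {
    // Step 2: the format only hands out a writer
    abstract SketchRecordWriter<K, V> getRecordWriter() throws IOException;
}

// Step 3: the writer holds the real output logic (here: collect keys in memory)
class CollectingWriter extends SketchRecordWriter<String, Void> {
    final StringBuilder sink = new StringBuilder();
    boolean closed = false;

    @Override void write(String key, Void value) { sink.append(key).append('\n'); }
    @Override void close() { closed = true; }
}

class CollectingFormat extends SketchOutputFormat<String, Void> {
    CollectingWriter last;  // kept only so the example can inspect the result

    @Override SketchRecordWriter<String, Void> getRecordWriter() {
        last = new CollectingWriter();
        return last;
    }
}

class OutputLifecycleSketch {
    // Roughly what the framework does for one task: get a writer, feed it, always close it
    static <K, V> void runTask(SketchOutputFormat<K, V> format, List<K> keys) throws IOException {
        SketchRecordWriter<K, V> writer = format.getRecordWriter();
        try {
            for (K key : keys) {
                writer.write(key, null);
            }
        } finally {
            writer.close();
        }
    }

    public static void main(String[] args) throws IOException {
        CollectingFormat format = new CollectingFormat();
        runTask(format, Arrays.asList("http://www.baidu.com", "http://www.ranan.com"));
        System.out.print(format.last.sink);
    }
}
```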

Custom OutputFormat example

Requirement

Filter the input log. URLs that contain ranan go to D:\hadoop_data\output\ranan.log, and all other URLs go to D:\hadoop_data\output\other.log.

Input data: D:\hadoop_data\input\inputoutputformat\log.txt

http://www.baidu.com

http://www.google.com

http://cn.bing.com

http://www.ranan.com

http://www.sohu.com

http://www.sina.com

http://www.sin2a.com

http://www.sin2desa.com

http://www.sindsafa.com

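Requirement analysis

With plain partitioning, the output file names cannot be chosen freely (they follow the part-r-00000 pattern). This example therefore uses a custom OutputFormat instead.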
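Partitioning alone cannot produce files named ranan.log and other.log. The sketch below reproduces the part-file naming pattern used by FileOutputFormat to show why. The name is built from a base name (by default part), the task type (m or r), and a zero-padded partition number. It never comes from the data itself. The helper is hypothetical and only mirrors the pattern.

```java
// Hypothetical helper mirroring FileOutputFormat's "<base>-<m|r>-<5-digit partition>" names.
class PartFileNameSketch {
    static String partFileName(String baseName, boolean isMapTask, int partition) {
        return String.format("%s-%s-%05d", baseName, isMapTask ? "m" : "r", partition);
    }

    public static void main(String[] args) {
        // Three reduce partitions produce three fixed, data-independent names
        for (int p = 0; p < 3; p++) {
            System.out.println(partFileName("part", false, p));
        }
    }
}
```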

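1. Create a class LogRecordWriter that extends RecordWriter.

1.1 Open two output streams, rananOut and otherOut, one per file.

1.2 If a line contains ranan, write it to rananOut; otherwise write it to otherOut.

2. Create a class LogOutputFormat that extends FileOutputFormat and returns a LogRecordWriter from getRecordWriter().

3. Register the custom format in the job driver: job.setOutputFormatClass(LogOutputFormat.class).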
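The routing rule in steps 1.1 and 1.2 can be tried without Hadoop. In this sketch, two StringBuilders stand in for the rananOut and otherOut streams; they are hypothetical substitutes, not the real output files. Each line is written with a trailing newline.

```java
import java.util.Arrays;
import java.util.List;

// Plain-Java sketch of the routing rule (no Hadoop): "ranan" lines go to one sink, the rest to the other.
class LogRoutingSketch {
    final StringBuilder rananOut = new StringBuilder();  // stands in for ranan.log
    final StringBuilder otherOut = new StringBuilder();  // stands in for other.log

    void write(String log) {
        StringBuilder target = log.contains("ranan") ? rananOut : otherOut;
        target.append(log).append('\n');  // one URL per line
    }

    public static void main(String[] args) {
        List<String> input = Arrays.asList(
            "http://www.baidu.com", "http://www.google.com", "http://cn.bing.com",
            "http://www.ranan.com", "http://www.sohu.com", "http://www.sina.com",
            "http://www.sin2a.com", "http://www.sin2desa.com", "http://www.sindsafa.com");
        LogRoutingSketch router = new LogRoutingSketch();
        for (String line : input) {
            router.write(line);
        }
        System.out.print("ranan.log:\n" + router.rananOut);
        System.out.print("other.log:\n" + router.otherOut);
    }
}
```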

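Implementation

LogMapper class

The input key is the byte offset (LongWritable), and the input value is one line (Text). Each output record only needs the URL line, so the output key is the line itself and the output value is NullWritable.

Why not leave the key empty and carry the line in the value instead? The key is what the shuffle sorts and groups on, and it must be a WritableComparable. Using the line as the key gives output sorted by URL and lets the reducer work directly on each URL.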
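Here is a plain-Java approximation of the sort the shuffle applies to these keys before they reach the reducer. It assumes that String.compareTo gives the same order as Hadoop's Text comparator, which compares raw UTF-8 bytes. That holds for ASCII-only URLs like these, but it is not guaranteed for arbitrary non-ASCII text.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Collections;
import java.util.List;

// Approximates the shuffle's sort step on map output keys (no Hadoop involved).
class ShuffleSortSketch {
    static List<String> sortedKeys(List<String> mapOutputKeys) {
        List<String> keys = new ArrayList<>(mapOutputKeys);  // leave the input untouched
        Collections.sort(keys);                               // ascending, like the shuffle
        return keys;
    }

    public static void main(String[] args) {
        List<String> lines = Arrays.asList(
            "http://www.baidu.com", "http://www.google.com", "http://cn.bing.com", "http://www.ranan.com");
        sortedKeys(lines).forEach(System.out::println);
    }
}
```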

```java
package ranan.mapreduce.outputformat;

import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Mapper;

import java.io.IOException;

public class LogMapper extends Mapper<LongWritable, Text, Text, NullWritable> {

    @Override
    protected void map(LongWritable key, Text value, Context context)
            throws IOException, InterruptedException {
        // Emit the whole line (the URL) as the key; no value is needed
        context.write(value, NullWritable.get());
    }
}
```

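LogReducer

The reducer only passes the data through.

Watch out for duplicates. Identical lines arrive grouped under a single key, so writing the key once per reduce() call would turn two identical input lines into one output line. Loop over the values and write once per value instead.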
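The duplicate problem can be reproduced in plain Java. In this sketch, a TreeMap stands in for the shuffle's sort-and-group step, and each key's list of NullWritable values is modeled as an occurrence count. Both of those choices are simplifications made for illustration only.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Why LogReducer loops over its values: equal keys are grouped, one value per occurrence.
class DuplicateKeySketch {
    static Map<String, Integer> group(List<String> mapOutputKeys) {
        Map<String, Integer> grouped = new TreeMap<>();  // sorted, like the shuffle
        for (String key : mapOutputKeys) {
            grouped.merge(key, 1, Integer::sum);
        }
        return grouped;
    }

    // Writing the key once per reduce() call collapses duplicates
    static List<String> reduceOncePerKey(Map<String, Integer> grouped) {
        return new ArrayList<>(grouped.keySet());
    }

    // Writing once per value (what LogReducer does) keeps every occurrence
    static List<String> reduceOncePerValue(Map<String, Integer> grouped) {
        List<String> out = new ArrayList<>();
        for (Map.Entry<String, Integer> e : grouped.entrySet()) {
            for (int i = 0; i < e.getValue(); i++) {
                out.add(e.getKey());
            }
        }
        return out;
    }

    public static void main(String[] args) {
        Map<String, Integer> g = group(Arrays.asList("http://www.sohu.com", "http://www.ranan.com", "http://www.sohu.com"));
        System.out.println(reduceOncePerKey(g));    // duplicates collapsed
        System.out.println(reduceOncePerValue(g));  // duplicates kept
    }
}
```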

```java
package ranan.mapreduce.outputformat;

import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;

public class LogReducer extends Reducer<Text, NullWritable, Text, NullWritable> {

    @Override
    protected void reduce(Text key, Iterable<NullWritable> values, Context context)
            throws IOException, InterruptedException {
        // One write per value, so identical lines are all kept
        for (NullWritable value : values) {
            context.write(key, NullWritable.get());
        }

        // Writing only once here would collapse two identical lines into one:
        // context.write(key, NullWritable.get());
    }
}
```

LogOutputFormat class

```java
package ranan.mapreduce.outputformat;

import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.RecordWriter;
import org.apache.hadoop.mapreduce.TaskAttemptContext;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;

public class LogOutputFormat extends FileOutputFormat<Text, NullWritable> {

    @Override
    public RecordWriter<Text, NullWritable> getRecordWriter(TaskAttemptContext job)
            throws IOException, InterruptedException {
        // Must return a RecordWriter: create our own and pass it the task context
        // so it can read the job configuration
        return new LogRecordWriter(job);
    }
}
```

LogRecordWriter class

This is the object that getRecordWriter() returns, and the main logic lives here.

```java
package ranan.mapreduce.outputformat;

import org.apache.hadoop.fs.FSDataOutputStream;
import org.apache.hadoop.fs.FileSystem;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.IOUtils;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.RecordWriter;
import org.apache.hadoop.mapreduce.TaskAttemptContext;

import java.io.IOException;

public class LogRecordWriter extends RecordWriter<Text, NullWritable> {

    private FSDataOutputStream rananOut;
    private FSDataOutputStream otherOut;

    // Called from LogOutputFormat.getRecordWriter() with the task context
    public LogRecordWriter(TaskAttemptContext job) throws IOException {
        // Open the two output streams using the job's configuration.
        // Errors are propagated rather than printed and swallowed; otherwise
        // write() would later fail with a NullPointerException.
        FileSystem fs = FileSystem.get(job.getConfiguration());
        rananOut = fs.create(new Path("D:\\hadoop_data\\output\\ranan.log"));
        otherOut = fs.create(new Path("D:\\hadoop_data\\output\\other.log"));
    }

    @Override
    public void write(Text key, NullWritable value) throws IOException, InterruptedException {
        // The key is one input line (a URL), of type Text
        String log = key.toString();
        if (log.contains("ranan")) {
            rananOut.writeBytes(log);
        } else {
            // writeBytes takes a String
            otherOut.writeBytes(log);
        }
        // Note: no line separator is written here yet; see the Output section
    }

    // Release resources
    @Override
    public void close(TaskAttemptContext context) throws IOException, InterruptedException {
        // Hadoop's IOUtils utility class closes each stream quietly
        IOUtils.closeStream(rananOut);
        IOUtils.closeStream(otherOut);
    }
}
```
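For comparison, here is the same open/route/close lifecycle in plain Java. It uses java.nio.file instead of Hadoop's FileSystem, so it can run without a cluster. The class name LocalLogWriterSketch and its outputDir parameter are hypothetical; the original hard-codes D:\ paths. This sketch also writes UTF-8 text with a line separator, which the Hadoop version above does not do yet.

```java
import java.io.BufferedWriter;
import java.io.Closeable;
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;

// Plain-Java analog of LogRecordWriter: open both files, route each line, close both.
class LocalLogWriterSketch implements Closeable {
    private final BufferedWriter rananOut;
    private final BufferedWriter otherOut;

    LocalLogWriterSketch(Path outputDir) throws IOException {
        Files.createDirectories(outputDir);
        BufferedWriter first = Files.newBufferedWriter(outputDir.resolve("ranan.log"), StandardCharsets.UTF_8);
        try {
            otherOut = Files.newBufferedWriter(outputDir.resolve("other.log"), StandardCharsets.UTF_8);
        } catch (IOException e) {
            first.close();  // don't leak the first file if the second cannot be opened
            throw e;
        }
        rananOut = first;
    }

    void write(String log) throws IOException {
        BufferedWriter target = log.contains("ranan") ? rananOut : otherOut;
        target.write(log);
        target.newLine();  // platform line separator
    }

    @Override
    public void close() throws IOException {
        try {
            rananOut.close();
        } finally {
            otherOut.close();  // still runs if closing the first stream fails
        }
    }

    public static void main(String[] args) throws IOException {
        Path dir = Files.createTempDirectory("log-output-sketch");
        try (LocalLogWriterSketch writer = new LocalLogWriterSketch(dir)) {
            writer.write("http://www.ranan.com");
            writer.write("http://www.baidu.com");
        }
        System.out.println(Files.readAllLines(dir.resolve("ranan.log")));
        System.out.println(Files.readAllLines(dir.resolve("other.log")));
    }
}
```

Closing both streams in close(), with a finally so the second is closed even if the first fails, plays the same role as the two IOUtils.closeStream() calls above.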

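LogDriver class

Our custom OutputFormat extends FileOutputFormat and picks its own output paths. Even so, FileOutputFormat still writes a _SUCCESS marker file when the job succeeds. It also checks at submission time that an output directory is set and does not already exist. The driver therefore still has to set an output path for the _SUCCESS file.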

```java
package ranan.mapreduce.outputformat;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;

public class LogDriver {

    public static void main(String[] args) throws IOException, InterruptedException, ClassNotFoundException {
        // 1 Get the job
        Configuration conf = new Configuration();
        Job job = Job.getInstance(conf);

        // 2 Set the jar
        job.setJarByClass(LogDriver.class);

        // 3 Wire up the Mapper and Reducer
        job.setMapperClass(LogMapper.class);
        job.setReducerClass(LogReducer.class);

        // 4 Set the Mapper output key/value types
        job.setMapOutputKeyClass(Text.class);
        job.setMapOutputValueClass(NullWritable.class);

        // 5 Set the final output key/value types
        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(NullWritable.class);

        // Use the custom OutputFormat
        job.setOutputFormatClass(LogOutputFormat.class);

        // 6 Set the input and output paths
        FileInputFormat.setInputPaths(job, new Path("D:\\hadoop_data\\input\\inputoutputformat\\log.txt"));
        // LogOutputFormat extends FileOutputFormat, which still needs an output directory
        // (for the _SUCCESS file) even though the data goes to ranan.log and other.log
        FileOutputFormat.setOutputPath(job, new Path("D:\\hadoop_data\\output\\sucess"));

        // 7 Submit the job and wait for it to finish
        boolean result = job.waitForCompletion(true);
        System.exit(result ? 0 : 1);
    }
}
```

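Output

All the URLs in other.log run together on one line, because write() never outputs a line break.

Fix: change write() in LogRecordWriter to append "\n" to each line: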

```java
if (log.contains("ranan")) {
    rananOut.writeBytes(log + "\n");
} else {
    // writeBytes takes a String
    otherOut.writeBytes(log + "\n");
}
```
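FSDataOutputStream extends java.io.DataOutputStream, so a DataOutputStream over an in-memory buffer reproduces the bug and the fix without Hadoop. The class and method names below are hypothetical.

```java
import java.io.ByteArrayOutputStream;
import java.io.DataOutputStream;
import java.io.IOException;
import java.nio.charset.StandardCharsets;

// Reproduces the missing-newline bug with the JDK class that FSDataOutputStream extends.
class WriteBytesNewlineSketch {
    static String writeAll(String[] logs, boolean appendNewline) throws IOException {
        ByteArrayOutputStream buffer = new ByteArrayOutputStream();
        try (DataOutputStream out = new DataOutputStream(buffer)) {
            for (String log : logs) {
                out.writeBytes(appendNewline ? log + "\n" : log);
            }
        }
        // writeBytes emits one byte per char, so ISO-8859-1 decodes it back 1:1
        return new String(buffer.toByteArray(), StandardCharsets.ISO_8859_1);
    }

    public static void main(String[] args) throws IOException {
        String[] logs = {"http://www.baidu.com", "http://www.google.com"};
        System.out.println(writeAll(logs, false));  // URLs run together
        System.out.print(writeAll(logs, true));     // one URL per line
    }
}
```

writeBytes() keeps only the low 8 bits of each char. That is fine for these ASCII URLs, but it would corrupt non-ASCII text. Writing log.getBytes(StandardCharsets.UTF_8) through write(byte[]) avoids that.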