Convolutional Neural Networks (CNN): Principles and Implementation

CNN Concepts

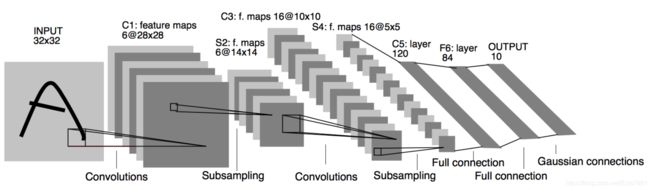

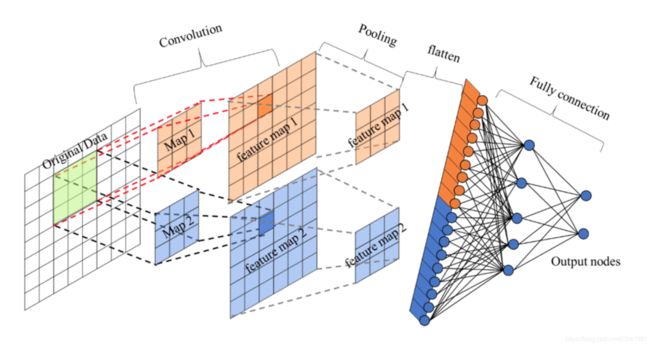

The key building blocks of a CNN are convolutional layers, pooling layers, and fully connected layers.

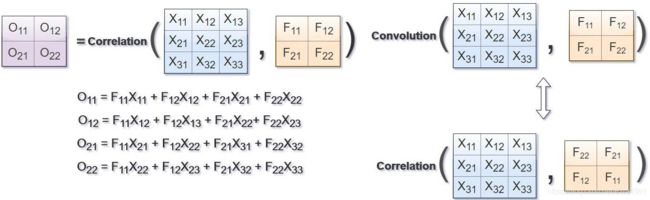

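Convolutional Layer (Convolutions)

(1) Concept: the convolution operation extracts different features from the input. Early convolutional layers may only capture low-level features such as edges, lines, and corners; deeper networks iteratively build more complex features from these low-level ones.

(2) Operation: the filter slides over the input, and at each position the overlapping values are multiplied element-wise and summed to give one output value. See https://mlnotebook.github.io/post/CNN1/ for an illustrated walkthrough.

(3) Padding (zero padding): add one or more rings of zero-valued pixels around the outer border of the image. One ring is written p = 1.

There are two forms of zero padding:

- Valid: no padding, so the output shrinks. For an N x N input and an F x F filter with stride 1, the output size is (N - F + 1) x (N - F + 1)

- Same: the output has the same size as the input image (at stride 1)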

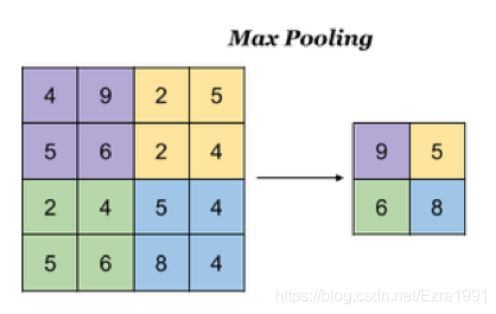

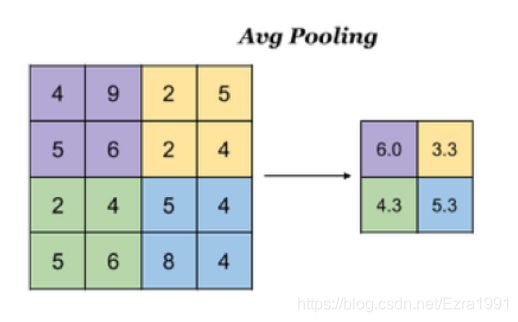
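The sliding-window rule and the two padding modes can be sketched in a few lines of pure Python. This is only an illustrative sketch, not how Keras implements it: the function name `conv2d`, the sample `image`, and the `kernel` are made up for this example, and it assumes a single channel, a square kernel of odd size, and plain nested lists in place of tensors.

```python
def conv2d(image, kernel, padding="valid", stride=1):
    """Slide `kernel` over a 2-D `image` (list of lists) and return the feature map.

    Like Keras' Conv2D, this computes cross-correlation (the kernel is not flipped).
    """
    k = len(kernel)  # assumption: square k x k kernel
    if padding == "same":
        # Zero-pad so that, at stride 1, the output keeps the input's size.
        # Assumes an odd kernel size, so the padding is symmetric: p = (k - 1) / 2.
        p = (k - 1) // 2
        width = len(image[0]) + 2 * p
        image = (
            [[0] * width for _ in range(p)]
            + [[0] * p + list(row) + [0] * p for row in image]
            + [[0] * width for _ in range(p)]
        )
    rows, cols = len(image), len(image[0])
    out = []
    for i in range(0, rows - k + 1, stride):
        out_row = []
        for j in range(0, cols - k + 1, stride):
            # Element-wise product of the kernel and the current window, then sum.
            out_row.append(sum(
                image[i + a][j + b] * kernel[a][b]
                for a in range(k) for b in range(k)
            ))
        out.append(out_row)
    return out


image = [
    [1, 2, 3, 0],
    [0, 1, 2, 3],
    [3, 0, 1, 2],
    [2, 3, 0, 1],
]
# A simple vertical-edge detector (hypothetical values for illustration).
kernel = [
    [1, 0, -1],
    [1, 0, -1],
    [1, 0, -1],
]

valid = conv2d(image, kernel, padding="valid")  # 2 x 2 output: 4 - 3 + 1 = 2
same = conv2d(image, kernel, padding="same")    # 4 x 4 output, same size as the input
print(valid)
print(len(same), "x", len(same[0]))
```

With "valid" the 4 x 4 input shrinks to 2 x 2, while "same" keeps it at 4 x 4; the interior values of the "same" output match the "valid" output.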

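Pooling Layer (Pooling)

(1) Purpose: reduce the input dimensions of the subsequent layers, which shrinks the model and speeds up computation.

(2) Computation rules:

- Max pooling (Max Pooling): output the maximum value inside the window

- Average pooling (Avg Pooling): output the mean of all values inside the window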
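Both pooling rules fit in one small function. The sketch below is pure Python with a hypothetical helper name `pool2d` and made-up sample values; it assumes no padding and a single channel.

```python
def pool2d(feature_map, size=2, stride=2, mode="max"):
    """Pool a 2-D feature map (list of lists) with a size x size window."""
    rows, cols = len(feature_map), len(feature_map[0])
    out = []
    for i in range(0, rows - size + 1, stride):
        out_row = []
        for j in range(0, cols - size + 1, stride):
            # Collect every value covered by the current window.
            window = [
                feature_map[i + a][j + b]
                for a in range(size) for b in range(size)
            ]
            if mode == "max":
                out_row.append(max(window))
            else:  # "avg"
                out_row.append(sum(window) / len(window))
        out.append(out_row)
    return out


feature_map = [
    [1, 3, 2, 4],
    [5, 6, 1, 2],
    [7, 2, 9, 0],
    [1, 8, 3, 4],
]

max_pooled = pool2d(feature_map, mode="max")  # [[6, 4], [8, 9]]
avg_pooled = pool2d(feature_map, mode="avg")  # [[3.75, 2.25], [4.5, 4.0]]
print(max_pooled)
print(avg_pooled)
```

A 2 x 2 window with stride 2 halves each spatial dimension, which is where the reduction in downstream input size comes from.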

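Fully Connected Layer

The convolution + activation + pooling layers can be viewed as the feature learning / feature extraction part of a CNN. The learned features (Feature Maps) are then used for the model's task (classification or regression):

- First flatten all Feature Maps (flatten, i.e. reshape them into a 1 x N vector)

- Then attach one or more fully connected layers to learn the task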
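The two steps above can be sketched as follows. The helper names `flatten` and `dense` and all numeric values are hypothetical, chosen only for illustration; a real layer would also apply an activation function and learn its weights during training.

```python
def flatten(feature_maps):
    """Reshape a stack of 2-D feature maps into one flat list of length N."""
    return [value for fmap in feature_maps for row in fmap for value in row]


def dense(inputs, weights, biases):
    """Fully connected layer: each output unit is a weighted sum of all inputs plus a bias."""
    return [
        sum(w * x for w, x in zip(unit_weights, inputs)) + b
        for unit_weights, b in zip(weights, biases)
    ]


# Two 2 x 2 feature maps, as they might come out of the last pooling layer.
feature_maps = [
    [[1, 2], [3, 4]],
    [[0, 1], [1, 0]],
]

flat = flatten(feature_maps)  # length N = 2 * 2 * 2 = 8

# Two output units, each with one weight per flattened input.
weights = [
    [1, 0, 0, 0, 0, 0, 0, 0],
    [0.5] * 8,
]
biases = [0.0, 1.0]

scores = dense(flat, weights, biases)  # [1.0, 7.0]
print(flat)
print(scores)
```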

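Implementing a CNN with Keras

The input images have shape 32*32*3, and the goal is to classify them into 100 classes.

Building the Model

Two convolutional layers + two fully connected layers

Network design:

Layer 1

- Convolution: 32 filters, size 5*5, strides=1, padding="SAME"

- Activation: ReLU

- Pooling: size 2x2, strides=2

Layer 2

- Convolution: 64 filters, size 5*5, strides=1, padding="SAME"

- Activation: ReLU

- Pooling: size 2x2, strides=2

Fully connected layers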
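To check the design before writing any Keras code, the tensor shapes and convolution parameter counts can be traced by hand. The sketch below does that arithmetic in pure Python. The helper names are hypothetical, and it assumes every layer uses "same" padding, for which the spatial output size is ceil(size / stride); for the even sizes here, unpadded 2x2 pooling with stride 2 gives the same result.

```python
import math


def same_output_size(size, stride):
    """Spatial size after a layer with padding="same": ceil(size / stride)."""
    return math.ceil(size / stride)


def conv_params(kernel_size, in_channels, filters):
    """Trainable parameters of a conv layer: kernel weights plus one bias per filter."""
    return kernel_size * kernel_size * in_channels * filters + filters


size, channels = 32, 3  # input image: 32 x 32 x 3

# (name, kind, filters, stride) for each layer in the design above
layers = [
    ("conv1", "conv", 32, 1),
    ("pool1", "pool", None, 2),
    ("conv2", "conv", 64, 1),
    ("pool2", "pool", None, 2),
]

shapes = []
params = {}
for name, kind, filters, stride in layers:
    if kind == "conv":
        params[name] = conv_params(5, channels, filters)
        channels = filters  # each filter produces one output channel
    size = same_output_size(size, stride)
    shapes.append((name, (size, size, channels)))

flattened = size * size * channels  # input length of the first fully connected layer

for name, shape in shapes:
    print(name, shape)
print("flattened length:", flattened)
print("conv parameters:", params)
```

The trace ends at 8 x 8 x 64, so the first fully connected layer receives a vector of length 4096.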

Keras API Implementation

```python
import tensorflow as tf
from tensorflow import keras


class CNNMist(object):
    # Two convolutional layers followed by two fully connected layers
    model = keras.models.Sequential([
        # Conv layer 1: 32 filters of 5*5*3, strides=1, padding="same"
        keras.layers.Conv2D(32, kernel_size=5, strides=1, padding="same",
                            data_format="channels_last", activation=tf.nn.relu,
                            # kernel_regularizer=keras.regularizers.L2(0.01),
                            ),
        # Dropout regularization with a (neuron) drop rate of 0.2
        keras.layers.Dropout(0.2),
        # Pooling layer 1: 2*2 window, strides=2 -> [None, 16, 16, 32]
        keras.layers.MaxPool2D(pool_size=2, strides=2, padding="same"),
        # Conv layer 2: 64 filters of 5*5*32, strides=1, padding="same"
        keras.layers.Conv2D(64, kernel_size=5, strides=1, padding="same",
                            data_format="channels_last", activation=tf.nn.relu),
        # Pooling layer 2: 2*2 window, strides=2 -> [None, 8, 8, 64]
        keras.layers.MaxPool2D(pool_size=2, strides=2, padding="same"),
        # Flatten: [None, 8, 8, 64] -> [None, 8 * 8 * 64]
        keras.layers.Flatten(),
        # Fully connected layer with 1024 units
        keras.layers.Dense(1024, activation=tf.nn.relu),
        # Output layer with 100 units and softmax
        keras.layers.Dense(100, activation=tf.nn.softmax)
    ])
```

Regularization

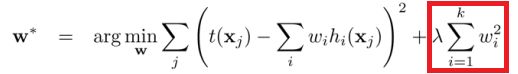

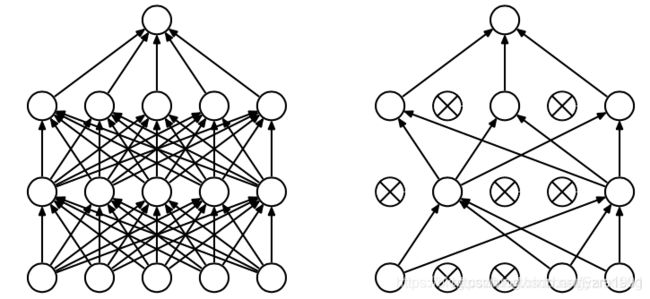

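Regularization limits model complexity to keep the network from overfitting, for example by adding a regularization (penalty) term to the cost function. Two commonly used techniques are L2 regularization and Dropout.

- L2 regularization: adds a penalty proportional to the sum of the squared weights to the cost function, which pushes the weights toward smaller values.

- Dropout: each application of dropout randomly deactivates some neurons, which amounts to picking a smaller network out of the original one.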
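Both techniques can be illustrated with a short pure Python sketch. The function names `l2_penalty` and `dropout` and the sample values are made up for this example. The penalty follows the form used by Keras' `L2` regularizer (factor times the sum of squared weights), and the dropout variant is "inverted" dropout, which rescales the surviving activations by 1 / (1 - rate) during training, as the Keras `Dropout` layer does.

```python
import random


def l2_penalty(weights, lam):
    """L2 term added to the cost: lam * sum of squared weights."""
    return lam * sum(w * w for w in weights)


def dropout(activations, rate, rng):
    """Inverted dropout: zero each activation with probability `rate`,
    and scale the survivors by 1 / (1 - rate) so the expected sum is unchanged."""
    keep = 1.0 - rate
    return [a / keep if rng.random() >= rate else 0.0 for a in activations]


weights = [1.0, -2.0, 3.0]
penalty = l2_penalty(weights, lam=0.01)  # 0.01 * (1 + 4 + 9)
print("L2 penalty:", penalty)

rng = random.Random(0)  # fixed seed so the run is repeatable
activations = [1.0] * 10
dropped = dropout(activations, rate=0.2, rng=rng)
print("after dropout:", dropped)
```

Each call to `dropout` zeroes a different random subset, so every training step effectively trains a different, smaller sub-network.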

Full Code and Demo

```python
import os

# Select the GPU before TensorFlow is imported
os.environ['CUDA_VISIBLE_DEVICES'] = '0'

import tensorflow as tf
from tensorflow import keras
from tensorflow.keras.datasets import cifar10


class CNNMist(object):
    # Two convolutional layers followed by two fully connected layers
    model = keras.models.Sequential([
        # Conv layer 1: 32 filters of 5*5*3, strides=1, padding="same"
        keras.layers.Conv2D(32, kernel_size=5, strides=1, padding="same",
                            data_format="channels_last", activation=tf.nn.relu,
                            # kernel_regularizer=keras.regularizers.L2(0.01),
                            ),
        # Dropout regularization with a (neuron) drop rate of 0.2
        keras.layers.Dropout(0.2),
        # Pooling layer 1: 2*2 window, strides=2 -> [None, 16, 16, 32]
        keras.layers.MaxPool2D(pool_size=2, strides=2, padding="same"),
        # Conv layer 2: 64 filters of 5*5*32, strides=1, padding="same"
        keras.layers.Conv2D(64, kernel_size=5, strides=1, padding="same",
                            data_format="channels_last", activation=tf.nn.relu),
        # Pooling layer 2: 2*2 window, strides=2 -> [None, 8, 8, 64]
        keras.layers.MaxPool2D(pool_size=2, strides=2, padding="same"),
        # Flatten: [None, 8, 8, 64] -> [None, 8 * 8 * 64]
        keras.layers.Flatten(),
        # Fully connected layer with 1024 units
        keras.layers.Dense(1024, activation=tf.nn.relu),
        # Output layer with 100 units and softmax
        # (note: cifar10 itself contains only 10 classes)
        keras.layers.Dense(100, activation=tf.nn.softmax)
    ])

    def __init__(self):
        """Load the training and test data.

        Shapes: x_train (50000, 32, 32, 3), x_test (10000, 32, 32, 3)
        """
        (self.x_train, self.y_train), (self.x_test, self.y_test) = cifar10.load_data()
        # Normalize pixel values to [0, 1]
        self.x_train = self.x_train / 255.0
        self.x_test = self.x_test / 255.0

    def compile(self):
        """Compile the model."""
        CNNMist.model.compile(optimizer=keras.optimizers.Adam(),
                              loss=keras.losses.sparse_categorical_crossentropy,
                              metrics=["accuracy"])

    def fit(self):
        """Train the model."""
        CNNMist.model.fit(self.x_train, self.y_train, epochs=10, batch_size=16)

    def evaluate(self):
        """Evaluate the model on the test set."""
        test_loss, test_acc = CNNMist.model.evaluate(self.x_test, self.y_test)
        print(f'test loss is {test_loss}, and test_accuracy is {test_acc}!')


if __name__ == '__main__':
    # List the GPUs that are available
    print(tf.config.list_physical_devices('GPU'))
    cnn = CNNMist()
    cnn.compile()
    cnn.fit()
    cnn.evaluate()
    # Print the model summary (summary() prints by itself and returns None)
    cnn.model.summary()
```

(1) Runtime environment:

Python 3.6 + tensorflow-gpu==2.4.0

(2) Sample run:

```
Epoch 1/10
3125/3125 [==============================] - 15s 4ms/step - loss: 1.6314 - accuracy: 0.4154
Epoch 2/10
3125/3125 [==============================] - 13s 4ms/step - loss: 1.0455 - accuracy: 0.6294
Epoch 3/10
3125/3125 [==============================] - 13s 4ms/step - loss: 0.7893 - accuracy: 0.7251
Epoch 4/10
3125/3125 [==============================] - 14s 4ms/step - loss: 0.5664 - accuracy: 0.8016
Epoch 5/10
3125/3125 [==============================] - 13s 4ms/step - loss: 0.3819 - accuracy: 0.8693
Epoch 6/10
3125/3125 [==============================] - 13s 4ms/step - loss: 0.2405 - accuracy: 0.9184
Epoch 7/10
3125/3125 [==============================] - 13s 4ms/step - loss: 0.1785 - accuracy: 0.9409
Epoch 8/10
3125/3125 [==============================] - 14s 4ms/step - loss: 0.1409 - accuracy: 0.9532
Epoch 9/10
3125/3125 [==============================] - 13s 4ms/step - loss: 0.1364 - accuracy: 0.9564
Epoch 10/10
3125/3125 [==============================] - 13s 4ms/step - loss: 0.1101 - accuracy: 0.9635
313/313 [==============================] - 1s 2ms/step - loss: 1.8996 - accuracy: 0.6726
test loss is 1.899588704109192, and test_accuracy is 0.6725999712944031!
```