分布式搜索elasticsearch搜索功能【深入】

elasticsearch搜索功能【深入】

- 分布式搜索elasticsearch搜索功能【深入】

-

- 1.数据聚合

-

- 1.1 聚合的种类

- 1.2 DSL实现聚合

-

- 1.2.1 Bucket聚合

- 1.2.2 Metrics聚合

- 1.3 RestAPI实现聚合

- 2.自动补全

-

- 2.1 拼音分词器

- 2.2 自定义分词器

- 2.3 自动补全查询

- 2.4实现酒店搜索框自动补全

- 3.数据同步

-

- 3.1 数据同步思路分析

- 3.2 实现elasticsearch与数据库数据同步

- 4.集群

-

- 4.1 搭建ES集群

-

- 4.1.1 部署es集群

- 4.1.2 集群状态监控

- 4.1.3 创建索引库

-

- 1)利用kibana的DevTools创建索引库

- 2)利用cerebro创建索引库

- 4.1.4 查看分片效果

- 4.2集群的节点角色

- 4.3集群脑裂问题

- 4.4集群分布式存储

- 4.5集群分布式查询

- 4.6集群故障转移

学习地址

分布式搜索elasticsearch搜索功能【深入】

1.数据聚合

1.1 聚合的种类

聚合(aggregations)可以实现对文档数据的统计、分析、运算。聚合常见的有三类:

桶(Bucket)聚合:用来对文档做分组,并统计每组数量

- Term Aggregation:按照文档字段值分组

- Date Histogram:按照日期阶梯分组,例如一周为一组,或者一月为一组度量(Metric)聚合:用以计算一些值,比如:最大值、最小值、平均值等

- Avg:求平均值

- Max:求最大值

- Min:求最小值

- Stats:同时求max、min、avg、sum等、管道(pipeline)聚合:基于其他聚合结果再做聚合

参与聚合的字段类型必须是:

- keyword

- 数值

- 日期

- 布尔

1.2 DSL实现聚合

现在,要统计所有数据中的酒店品牌有多少种,此时可以根据酒店品牌的名称做聚合。

类型为term类型,DSL示例:

GET /hotel/_search

{

"size": 0, // 设置size为0,结果中不包含文档,只包含聚合结果

"aggs": { // 定义聚合

"brandAgg": { // 给聚合起个名字

"terms": { // 聚合的类型,按照品牌值聚合,所以选择term

"field": "brand", //参与聚合的字段

"size": 20 // 希望获取的聚合结果数量

}

}

}

}

示例:

GET /hotel/_search

{

"size": 0,

"aggs": {

"brandAgg": {

"terms": {

"field": "brand",

"size": 20

}

}

}

}

1.2.1 Bucket聚合

Bucket聚合-聚合结果排序

默认情况下,Bucket聚合会统计Bucket内的文档数量,记为_count,并且按照_count降序排序。

可以修改结果排序方式:

# 聚合结果排序

GET /hotel/_search

{

"size": 0,

"aggs": {

"brandAgg": {

"terms": {

"field": "brand",

"order": {

"_count" : "asc" //按照_count升序排序

},

"size": 20

}

}

}

}

Bucket聚合-限定聚合范围

默认情况下,Bucket聚合是对索引库的所有文档做聚合,我们可以限定要聚合的文档范围,只要添加query条件即可:

# 限定聚合范围

GET /hotel/_search

{

"query": {

"range": {

"price": {

"lte": 200 //只对200元以下的文档聚合

}

}

},

"size": 0,

"aggs": {

"brandAgg": {

"terms": {

"field": "brand",

"size": 10

}

}

}

}

总结:

- aggs代表聚合,与query同级,此时query的作用是?

- 限定聚合的文档范围

- 聚合必须的三要素:

- 聚合名称

- 聚合类型

- 聚合字段

- 聚合可以配置的属性有:

- size:指定聚合结果的数量

- order:指定聚合结果的排序方式

- field:指定聚合字段

1.2.2 Metrics聚合

例如,要求获取每个品牌的用户评分的min、max、avg等值

可以利用stats聚合:

# 嵌套聚合metrics

GET /hotel/_search

{

"size": 0,

"aggs": {

"brandAgg": {

"terms": {

"field": "brand",

"size": 20

"order": {

"score_stats.avg": "desc"

},

"aggs": { // 是brands聚合的子聚合,也就是分组后对每组分别计算

"score_stats": { //聚合名称

"stats": { //集合类型,这里stats可以计算min、max、avg等

"field": "score" //聚合字段,这里是score

}

}

}

}

}

}

1.3 RestAPI实现聚合

以品牌聚合为例,示例下Java的RestClient使用,看请求组装:

聚合结果解析:

@Test

void testAggregation() throws IOException {

//1.准备Request

SearchRequest request = new SearchRequest("hotel");

//2.准备DSL

//2.1.设置size

request.source().size(0);

//2.2.聚合

request.source().aggregation(AggregationBuilders.terms("brandAgg")

.size(10)

.field("brand")

);

//3.发出请求

SearchResponse response = client.search(request, RequestOptions.DEFAULT);

//4.解析结果

//4.1解析聚合结果

Aggregations aggregations = response.getAggregations();

//4.2根据名称获取聚合结果

Terms brandTerms = aggregations.get("brandAgg");

//4.3获取桶

List<? extends Terms.Bucket> buckets = brandTerms.getBuckets();

//4.4遍历

for (Terms.Bucket bucket : buckets) {

//获取key也就是品牌信息

String brandName = bucket.getKeyAsString();

System.out.println(brandName);

}

}

案例:在IUserService中定义方法,实现对品牌、城市、星级的聚合

需求:搜索页面的品牌、城市等信息不应该是在页面写死的,而是通过聚合索引库中的酒店数据得来的:

在IHotelService中定义一个方法,实现对品牌、城市、星级的聚合,方法声明如下:

@Override

public Map<String, List<String>> filters() {

try {

//1.准备request

SearchRequest request = new SearchRequest("hotel");

//2.准备DSL

//2.1设置size

request.source().size(0);

//2.2设置聚合

request.source().aggregation(AggregationBuilders

.terms("brandAgg")

.field("brand")

.size(100));

request.source().aggregation(AggregationBuilders

.terms("cityAgg")

.field("city")

.size(100));

request.source().aggregation(AggregationBuilders

.terms("starAgg")

.field("star")

.size(100));

//3.发出请求

SearchResponse response = client.search(request, RequestOptions.DEFAULT);

//4.解析结果

Map<String, List<String>> result = new HashMap<>();

Aggregations aggregations = response.getAggregations();

//4.1根据品牌名称获取品牌的结果

List<String> brandList = getAggByName(aggregations, "brandAgg");

List<String> cityList = getAggByName(aggregations, "cityAgg");

List<String> startList = getAggByName(aggregations, "starAgg");

//4.4放入map

result.put("品牌", brandList);

result.put("城市", cityList);

result.put("星级", startList);

return result;

} catch (IOException e) {

throw new RuntimeException(e);

}

}

private List<String> getAggByName(Aggregations aggregations, String aggName) {

//4.1根据聚合名称获取聚合结果

Terms brandTerms = aggregations.get(aggName);

//4.2获取buckets

List<? extends Terms.Bucket> buckets = brandTerms.getBuckets();

//4.3遍历

List<String> brandList = new ArrayList<>();

for (Terms.Bucket bucket : buckets) {

String key = bucket.getKeyAsString();

brandList.add(key);

}

return brandList;

}

对接前端接口

前端页面会向服务发起请求,查询品牌、城市、星级等字段的聚合结果:

可以看到请求参数与之前search时的RequestParam完全一致,这是在限定聚合时的文档范围。

例如: 用户搜索“沙滩”,价格在300~600,那聚合必须是在这个搜索条件基础上完成。

因此需要:

- 编写controller接口,接收该请求

@RequestMapping("filters")

public Map<String, List<String>> getFilters(@RequestBody RequestParams params) {

return hotelService.filters(params);

}

- 修改IHotelService#getFilters()方法,添加RequestParam参数

Map<String, List<String>> filters(RequestParams params);

- 修改getFilters方法的业务,聚合时添加query条件

buildBasicQuery(param, request);

2.自动补全

2.1 拼音分词器

当用户在搜索框输入字符时,应该提示出与该字符有关的搜索项,如图:

要实现根据字母做补全,就必须对文档按照拼音分词。在GitHub上恰好有elasticsearch的拼音分词插件,地址:

官方链接

安装方式与IK分词器一样,分三步

①解压

②上传到服务器/虚拟机,elasticsearch的plugin目录

③重启elasticsearch

docker restart es

④测试

2.2 自定义分词器

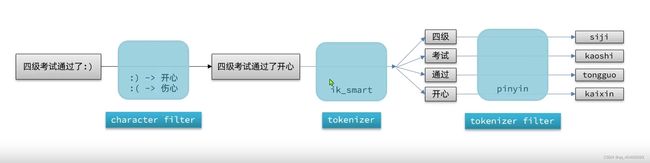

elasticsearch分词器(analyzer)的组成包含三部分:

- character filters:在tokenizer之前对文本进行处理。例如删除字符、替换字符

- tokenizer:将文本按照一定的规则切割成词条(term)。例如keyword,就是不分词;还有ik_smart

- tokenizer filter:将tokenizer输出的词条做进一步处理。例如大小写转换、同义词处理、拼音处理等

可以在创建索引库时,通过setting来配置自定义的analyzer(分词器):

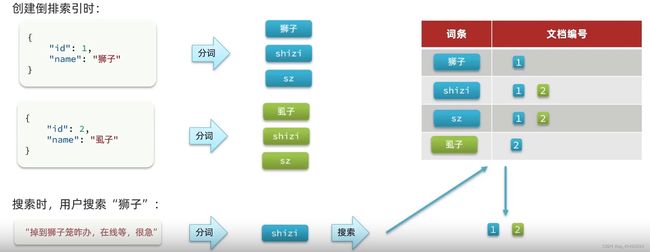

拼音分词器适合在创建倒排索引的时候使用,但不能在搜索索引的时候使用。

拼音分词器适合在创建倒排索引的时候使用,但不能在搜索索引的时候使用。

创建倒排索引时:

因此字段在创建倒排索引时应该用my_analyzer分词器;字段在搜索时应该使用ik_smart分词器

PUT /test

{

"settings": {

"analysis": {

"analyzer": {

"my_analyzer": {

"tokenizer": "ik_max_word",

"filter": "py"

}

},

"filter": {

"py": {

"type": "pinyin",

"keep_full_pinyin": false,

"keep_joined_full_pinyin": true,

"keep_original": true,

"limit_first_letter_length": 16,

"remove_duplicated_term": true,

"none_chinese_pinyin_tokenize": false

}

}

}

},

"mappings": {

"properties": {

"name": {

"type": "text",

"analyzer": "my_analyzer",

"search_analyzer": "ik_smart"

}

}

}

}

总结:

- 如何使用拼音分词器?

①下载pinyin分词器

②解压后放到elasticserach的plugin目录

③重启elasticsearch - 如何自定义拼音分词器?

①创建索引库时,在settings中配置,可以包含三部分

②character filter

③tokenizer

④filter - 拼音分词器注意事项?

- 为了避免搜索到同音字,搜索时不要使用拼音分词器

2.3 自动补全查询

elasticsearch提供了Completion Suggester查询来实现自动补全功能。这个查询会匹配以用户输入内容开头的词条并返回。为了提高补全查询的效率,对于文档中字段的类型有一些约束:

- 参与补全查询的字段必须是completion类型。

//创建索引库

PUT /test

{

"mappings": {

"properties": {

"title":{

"type": "completion"

}

}

}

}

- 字段的内容一般是用来补全的多个词条形成的数组。

//示例

POST /test/_doc

{

"title": ["Sony","WH-1000XM3"]

}

POST /test/_doc

{

"title": ["SK-Ⅱ","PITERA"]

}

POST /test/_doc

{

"title": ["Nintendo","switch"]

}

查询语法如下:

//自动补全查询

GET /test/_search

{

"suggest": {

"title_suggest": {

"text": "s", //关键字

"completion": {

"field": "title", //补全查询的字段

"skip_duplicates": true, //跳过重复的

"size": 10 //获取前10条结果

}

}

}

}

总结:自动补全对字段的要求:

- 类型是completion

- 字段值是多词条的数组

2.4实现酒店搜索框自动补全

案例: 实现hotel索引库的自动补全、拼音搜索功能

思路如下:

- 修改hotel索引库结构,设置自定义拼音分词器

- 修改索引库的name、all字段,使用自定义分词器

- 索引库添加一个新字段suggestion,类型为completion,使用自定义的分词器

// 酒店数据索引库

PUT /hotel

{

"settings": {

"analysis": {

"analyzer": {

"text_anlyzer": {

"tokenizer": "ik_max_word",

"filter": "py"

},

"completion_analyzer": {

"tokenizer": "keyword",

"filter": "py"

}

},

"filter": {

"py": {

"type": "pinyin",

"keep_full_pinyin": false,

"keep_joined_full_pinyin": true,

"keep_original": true,

"limit_first_letter_length": 16,

"remove_duplicated_term": true,

"none_chinese_pinyin_tokenize": false

}

}

}

},

"mappings": {

"properties": {

"id":{

"type": "keyword"

},

"name":{

"type": "text",

"analyzer": "text_anlyzer",

"search_analyzer": "ik_smart",

"copy_to": "all"

},

"address":{

"type": "keyword",

"index": false

},

"price":{

"type": "integer"

},

"score":{

"type": "integer"

},

"brand":{

"type": "keyword",

"copy_to": "all"

},

"city":{

"type": "keyword"

},

"starName":{

"type": "keyword"

},

"business":{

"type": "keyword",

"copy_to": "all"

},

"location":{

"type": "geo_point"

},

"pic":{

"type": "keyword",

"index": false

},

"all":{

"type": "text",

"analyzer": "text_anlyzer",

"search_analyzer": "ik_smart"

},

"suggestion":{

"type": "completion",

"analyzer": "completion_analyzer"

}

}

}

}

- 给HotelDoc类添加suggestion字段,内容包含brand、business

@Data

@NoArgsConstructor

public class HotelDoc {

private Long id;

private String name;

private String address;

private Integer price;

private Integer score;

private String brand;

private String city;

private String starName;

private String business;

private String location;

private String pic;

private Object distance;

private boolean isAD;

private List<String> suggestion;

public HotelDoc(Hotel hotel) {

this.id = hotel.getId();

this.name = hotel.getName();

this.address = hotel.getAddress();

this.price = hotel.getPrice();

this.score = hotel.getScore();

this.brand = hotel.getBrand();

this.city = hotel.getCity();

this.starName = hotel.getStarName();

this.business = hotel.getBusiness();

this.location = hotel.getLatitude() + ", " + hotel.getLongitude();

this.pic = hotel.getPic();

if (this.business.contains("/")){

//business有多个值,需要切割

String[] arr = this.business.split("/");

//添加元素

this.suggestion = new ArrayList<>();

this.suggestion.add(this.brand);

Collections.addAll(this.suggestion,arr);

}else {

this.suggestion = Arrays.asList(this.brand,this.business);}

}

}

- 重新导入数据到hotel库

@Test

void testBulk() throws IOException {

//批量查询酒店数据

List<Hotel> hotels = hotelService.list();

//1.创建Bulk请求

BulkRequest request = new BulkRequest();

//2.添加要批量提交的请求:这里添加了两个新增文档的请求

for (Hotel hotel : hotels) {

//转换为文档类型HotelDoc

HotelDoc hotelDoc = new HotelDoc(hotel);

//创建新增文档的Request对象

request.add(new IndexRequest("hotel").id(hotel.getId().toString())

.source(JSON.toJSONString(hotelDoc), XContentType.JSON));

}

//3.发起bulk请求

client.bulk(request, RequestOptions.DEFAULT);

}

- 测试

# 以拼音实现自动补全

GET /hotel/_search

{

"suggest": {

"suggestions": {

"text": "s",

"completion": {

"field": "suggestion",

"skip_duplicates": true,

"size": 10

}

}

}

}

RestAPI实现自动补全

@Test

void testSuggest() throws IOException {

//1.先准备request

SearchRequest request = new SearchRequest("hotel");

//2.准备DSL

request.source().suggest(new SuggestBuilder().addSuggestion(

"suggestions",

SuggestBuilders.completionSuggestion("suggestion")

.prefix("h")

.skipDuplicates(true)

.size(10)

));

//3.发请请求

SearchResponse response = client.search(request, RequestOptions.DEFAULT);

//4.解析结果

//4.1 处理结果

Suggest suggest = response.getSuggest();

//4.2根据名称获取补全结果

CompletionSuggestion suggestion = suggest.getSuggestion("suggestions");

//4.3获取option并遍历

List<CompletionSuggestion.Entry.Option> options = suggestion.getOptions();

for (CompletionSuggestion.Entry.Option option : options) {

String text = option.getText().toString();

System.out.println(text);

}

}

查看前端页面,发现当我们在输入键入时,前端会发起ajax请求:

在服务端编写接口,接收该请求,返回补全结果的集合,类型为List< String >

@Override

public List<String> getSuggestions(String prefix) {

try {

//1.发起请求

SearchRequest request = new SearchRequest("hotel");

//2.准备DSL

request.source().suggest(new SuggestBuilder().addSuggestion("suggestions",

SuggestBuilders.completionSuggestion("suggestion")

.prefix(prefix)

.skipDuplicates(true)

.size(10)

));

//3.发起请求

SearchResponse response = client.search(request, RequestOptions.DEFAULT);

//4.解析结果

Suggest suggest = response.getSuggest();

//4.1根据补全查询名称,获取补全结果

CompletionSuggestion suggestions = suggest.getSuggestion("suggestions");

//4.2获取options

List<CompletionSuggestion.Entry.Option> options = suggestions.getOptions();

//4.3遍历

List<String> list = new ArrayList<>(options.size());

for (CompletionSuggestion.Entry.Option option : options) {

String text = option.getText().toString();

list.add(text);

}

return list;

} catch (IOException e) {

throw new RuntimeException(e);

}

}

3.数据同步

3.1 数据同步思路分析

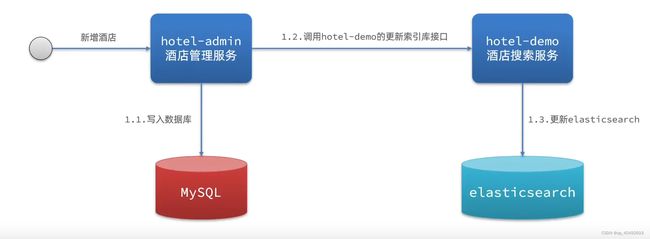

elasticsearch中的酒店数据来自于mysql数据库,因此mysql数据发生改变时elasticsearch也必须跟着改变,这个就是elasticsearch与mysql之间的数据同步

方案一:同步调用

方案二:异步通知

方案三:监听binlog

总结:三种方式对比

方式一:同步调用

- 优点:实现简单,粗暴

- 缺点:业务耦合度高

方式二:异步通知

- 优点:低耦合,实现难度一般

- 缺点:依赖于MQ的可靠性

方式三:监听binlog

- 优点:完全解除服务间耦合

- 缺点:开启binlog增加数据库负担,实现复杂度高

3.2 实现elasticsearch与数据库数据同步

案例:利用MQ实现mysql与elasticsearch数据同步

利用hotel-admin项目作为酒店管理的微服务,当酒店 数据发生增、删、改时,要求对elasticsearch中数据也要完成相同的操作。

步骤:

- 导入hotel-admin项目,启动测试就带你数据的CRUD

- 声明exchange、queue、RoutingKey

public class MqConstands {

/**

* 交换机

*/

public final static String HOTEL_EXCHANGE = "hotel.topic";

/**

* 监听新增和修改的队列

*/

public final static String HOTEL_INSERT_QUEUE = "hotel.insert.queue";

/**

* 监听删除的队列

*/

public final static String HOTEL_DELETE_QUEUE = "hotel.delete.queue";

/**

* 新增或修改的RoutingKey

*/

public final static String HOTEL_INSERT_KEY = "hotel.insert";

/**

* 删除的RoutingKey

*/

public final static String HOTEL_DELETE_KEY = "hotel.delete";

}

- 在hotel-admin中的增、删、改业务中完成消息发送

//增

rabbitTemplate.convertAndSend(MqConstands.HOTEL_EXCHANGE, MqConstands.HOTEL_INSERT_KEY, hotel.getId());

//删

rabbitTemplate.convertAndSend(MqConstands.HOTEL_EXCHANGE, MqConstands.HOTEL_DELETE_KEY, id);

//改

rabbitTemplate.convertAndSend(MqConstands.HOTEL_EXCHANGE, MqConstands.HOTEL_INSERT_KEY, hotel.getId());

- 在hotel-demo中完成消息的监听,并更新elasticsearch中数据

@Component

public class HotelListener {

@Autowired

private IHotelService hotelService;

/**

* 监听新增或修改酒店的业务

* @param id 酒店的id

*/

@RabbitListener(queues = MqConstands.HOTEL_INSERT_QUEUE)

public void listenHotelInsertOrUpdate(Long id) {

hotelService.inserById(id);

}

/**

* 监听酒店的删除业务

* @param id 酒店的id

*/

@RabbitListener(queues = MqConstands.HOTEL_DELETE_QUEUE)

public void listenHotelDelete(Long id){

hotelService.deleteById(id);

}

}

@Override

public void deleteById(Long id) {

try {

//1.准备request

DeleteRequest request = new DeleteRequest("hotel", id.toString());

//2.准备发送请求

client.delete(request, RequestOptions.DEFAULT);

} catch (IOException e) {

throw new RuntimeException(e);

}

}

private List<String> getAggByName(Aggregations aggregations, String aggName) {

//4.1根据聚合名称获取聚合结果

Terms brandTerms = aggregations.get(aggName);

//4.2获取buckets

List<? extends Terms.Bucket> buckets = brandTerms.getBuckets();

//4.3遍历

List<String> brandList = new ArrayList<>();

for (Terms.Bucket bucket : buckets) {

String key = bucket.getKeyAsString();

brandList.add(key);

}

return brandList;

}

4.集群

4.1 搭建ES集群

单机的elasticsearch做数据存储,必然要面临两个问题:海量的数据问题、单点故障问题。

-

海量数据存储问题:将索引库从逻辑上拆分为N个分片(shard),存储到多个节点

-

单点故障问题:将分片数据在不同节点备份(replica)

4.1.1 部署es集群

部署es集群可以直接使用docker-compose来完成,不过要求你的Linux虚拟机至少有4G的内存空间

首先编写一个docker-compose文件,内容如下:

version: '2.2'

services:

es01:

image: elasticsearch:7.12.1

container_name: es01

environment:

- node.name=es01

- cluster.name=es-docker-cluster

- discovery.seed_hosts=es02,es03

- cluster.initial_master_nodes=es01,es02,es03

- "ES_JAVA_OPTS=-Xms512m -Xmx512m"

volumes:

- data01:/usr/share/elasticsearch/data

ports:

- 9200:9200

networks:

- elastic

es02:

image: elasticsearch:7.12.1

container_name: es02

environment:

- node.name=es02

- cluster.name=es-docker-cluster

- discovery.seed_hosts=es01,es03

- cluster.initial_master_nodes=es01,es02,es03

- "ES_JAVA_OPTS=-Xms512m -Xmx512m"

volumes:

- data02:/usr/share/elasticsearch/data

ports:

- 9201:9200

networks:

- elastic

es03:

image: elasticsearch:7.12.1

container_name: es03

environment:

- node.name=es03

- cluster.name=es-docker-cluster

- discovery.seed_hosts=es01,es02

- cluster.initial_master_nodes=es01,es02,es03

- "ES_JAVA_OPTS=-Xms512m -Xmx512m"

volumes:

- data03:/usr/share/elasticsearch/data

networks:

- elastic

ports:

- 9202:9200

volumes:

data01:

driver: local

data02:

driver: local

data03:

driver: local

networks:

elastic:

driver: bridge

es运行需要修改一些linux系统权限,修改/etc/sysctl.conf文件

vi /etc/sysctl.conf

添加下面的内容:

vm.max_map_count=262144

然后执行命令,让配置生效:

sysctl -p

通过docker-compose启动集群:

docker-compose up -d

4.1.2 集群状态监控

kibana可以监控es集群,不过新版本需要依赖es的x-pack 功能,配置比较复杂。

这里推荐使用cerebro来监控es集群状态,官方网址:官网

进入对应的bin目录,双击其中的cerebro.bat文件即可启动服务。

访问http://localhost:9000 即可进入管理界面:

输入你的elasticsearch的任意节点的地址和端口,点击connect即可:

4.1.3 创建索引库

1)利用kibana的DevTools创建索引库

在DevTools中输入指令:

PUT /itcast

{

"settings": {

"number_of_shards": 3, // 分片数量

"number_of_replicas": 1 // 副本数量

},

"mappings": {

"properties": {

// mapping映射定义 ...

}

}

}

2)利用cerebro创建索引库

利用cerebro还可以创建索引库:

填写索引库信息,点击右下角的create按钮:

4.1.4 查看分片效果

回到首页,即可查看索引库分片效果:

绿色的条,代表集群处于绿色(健康状态)。

4.2集群的节点角色

elasticsearch中集群节点有不同的职责划分:

| 节点类型 | 配置参数 | 默认值 | 节点职责 |

|---|---|---|---|

| master eligible | node.master | true | <备选系节点:主节点可以管理和记录集群状态、决定分片在哪个节点、处理创建和删除索引库的请求/td> |

| data | node.data | true | 数据节点:存储数据、搜索、聚合、CURD |

| ingest | node.ingest | true | 数据存储之前的预处理 |

| coordinating | 上面3个参数都为false则为coordinating节点 | 无 | 路由请求到其他节点合并其他节点处理的结果,返回给用户 |

elasticsearch中的每个节点角色都有自己不同的职责,因此建议集群部署时,每个节点都有独立的角色。

4.3集群脑裂问题

默认情况下,每个节点都是master eligible节点,因此一旦master节点宕机,其它候选节点会选举一个称为主节点。当主节点与其他节点网络故障时,可能发现脑裂问题。

为了避免脑裂,需要要求选票超过(eligible节点数量+1)/2才能当选为主,因此eligible节点数量最好是奇数。对应配置项是discovery.zen.minimum_master_nodes,在es7.0后,已经成为默认配置,一次一般不会发送脑裂问题。

总结:

master eligible节点的作用是什么?

- 参与集群选主

- 主节点可以管理集群状态、管理分片信息、处理创建和删除索引库的请求

data节点的作用是什么?

- 数据的CRUD

coordinator节点的作用是什么?

- 路由请求到其他节点

- 合并查询到的结果,返回给用户

4.4集群分布式存储

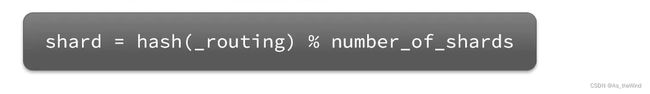

当新增文档时,应该保存到不同分片,保证数据均衡,那么coordinating node如何确定数据该存储到哪个分片呢?

elasticsearch会通过hash算法来计算文档应该存储到哪个分配:

说明:

- _routing默认是文档的id

- 算法与分片数量有关,因此索引库一旦创建,分片数量不能修改!

新增文档流程:

4.5集群分布式查询

elasticsearch的查询分成两个阶段:

- scatter phase:分散阶段,coordinate node会把请求分发到每一个分片

- gather phase:聚集阶段,coordinate node汇总data node的搜索结果,并处理为最终结果集返回给用户

总结:

分布式新增如何确定分片?

- coordinating node根据id做hash运算,得到结果对shard数量取余,余数就是对应的分片

分布式查询:

- 分散阶段:coordinating node将查询请求分发给不同分片

- 收集阶段:将查询结果汇总到coordinating node,整理并返回给用户

4.6集群故障转移

集群的master节点会监控集群中的节点状态,如果发现有节点宕机,会立即将宕机节点的分片数据迁移到其他节点,确保数据安全,这个叫做故障转移。

总结:

- master宕机后,EligibleMaster选举为新的主节点。

- master节点监控分片,节点状态,将故障节点上的分片转移到正常节点,确保数据安全