Apache+Hudi入门指南: Spark+Hudi+Hive+Presto

一、整合

hive集成hudi方法:将hudi jar复制到hive lib下

cp ./packaging/hudi-hadoop-mr-bundle/target/hudi-hadoop-mr-bundle-0.5.2-SNAPSHOT.jar $HIVE_HOME/lib

4.1 hive

hive 查询hudi 数据主要是在hive中建立外部表数据路径指向hdfs 路径,同时hudi 重写了inputformat 和outpurtformat。因为hudi 在读的数据的时候会读元数据来决定我要加载那些parquet文件,而在写的时候会写入新的元数据信息到hdfs路径下。所以hive 要集成hudi 查询要把编译的jar 包放到HIVE-HOME/lib 下面。否则查询时找不到inputformat和outputformat的类。

hive 外表数据结构如下:

CREATE EXTERNAL TABLE `test_partition`(

`_hoodie_commit_time` string,

`_hoodie_commit_seqno` string,

`_hoodie_record_key` string,

`_hoodie_file_name` string,

`id` string,

`oid` string,

`name` string,

`dt` string,

`isdeleted` string,

`lastupdatedttm` string,

`rowkey` string)

PARTITIONED BY (

`_hoodie_partition_path` string)

ROW FORMAT SERDE

'org.apache.hadoop.hive.ql.io.parquet.serde.ParquetHiveSerDe'

STORED AS INPUTFORMAT

'org.apache.hudi.hadoop.HoodieParquetInputFormat'

OUTPUTFORMAT

'org.apache.hadoop.hive.ql.io.parquet.MapredParquetOutputFormat'

LOCATION

'hdfs://hj:9000/tmp/hudi'

TBLPROPERTIES (

'transient_lastDdlTime'='1582111004')

presto 集成 hudi

presto 集成hudi 是基于hive catalog 同样是访问hive 外表进行查询,如果要集成需要把hudi 包copy 到presto hive-hadoop2插件下面。

presto集成hudi方法: 将hudi jar复制到 presto hive-hadoop2下

cp ./packaging/hudi-hadoop-mr-bundle/target/hudi-hadoop-mr-bundle-0.5.2-SNAPSHOT.jar $PRESTO_HOME/plugin/hive-hadoop2/

5. Hudi代码实战

5.1 Copy_on_Write 模式操作(默认模式)

5.1.1 insert操作(初始化插入数据)

// 不带分区写入

@Test

def insert(): Unit = {

val spark = SparkSession.builder.appName("hudi insert").config("spark.serializer", "org.apache.spark.serializer.KryoSerializer").master("local[3]").getOrCreate()

val insertData = spark.read.parquet("/tmp/1563959377698.parquet")

insertData.write.format("org.apache.hudi")

// 设置主键列名

.option(DataSourceWriteOptions.RECORDKEY_FIELD_OPT_KEY, "rowkey")

// 设置数据更新时间的列名

.option(DataSourceWriteOptions.PRECOMBINE_FIELD_OPT_KEY, "lastupdatedttm")

// 并行度参数设置

.option("hoodie.insert.shuffle.parallelism", "2")

.option("hoodie.upsert.shuffle.parallelism", "2")

// table name 设置

.option(HoodieWriteConfig.TABLE_NAME, "test")

.mode(SaveMode.Overwrite)

// 写入路径设置

.save("/tmp/hudi")

}

// 带分区写入

@Test

def insertPartition(): Unit = {

val spark = SparkSession.builder.appName("hudi insert").config("spark.serializer", "org.apache.spark.serializer.KryoSerializer").master("local[3]").getOrCreate()

// 读取文本文件转换为df

val insertData = Util.readFromTxtByLineToDf(spark, "/home/huangjing/soft/git/experiment/hudi-test/src/main/resources/test_insert_data.txt")

insertData.write.format("org.apache.hudi")

// 设置主键列名

.option(DataSourceWriteOptions.RECORDKEY_FIELD_OPT_KEY, "rowkey")

// 设置数据更新时间的列名

.option(DataSourceWriteOptions.PRECOMBINE_FIELD_OPT_KEY, "lastupdatedttm")

// 设置分区列

.option(DataSourceWriteOptions.PARTITIONPATH_FIELD_OPT_KEY, "dt")

// 设置索引类型目前有HBASE,INMEMORY,BLOOM,GLOBAL_BLOOM 四种索引 为了保证分区变更后能找到必须设置全局GLOBAL_BLOOM

.option(HoodieIndexConfig.BLOOM_INDEX_UPDATE_PARTITION_PATH, "true")

// 设置索引类型目前有HBASE,INMEMORY,BLOOM,GLOBAL_BLOOM 四种索引

.option(HoodieIndexConfig.INDEX_TYPE_PROP, HoodieIndex.IndexType.GLOBAL_BLOOM.name())

// 并行度参数设置

.option("hoodie.insert.shuffle.parallelism", "2")

.option("hoodie.upsert.shuffle.parallelism", "2")

.option(HoodieWriteConfig.TABLE_NAME, "test_partition")

.mode(SaveMode.Overwrite)

.save("/tmp/hudi")

}

5.1.2 upsert操作(数据存在时修改,不存在时新增)

// 不带分区upsert

@Test

def upsert(): Unit = {

val spark = SparkSession.builder.appName("hudi upsert").config("spark.serializer", "org.apache.spark.serializer.KryoSerializer").master("local[3]").getOrCreate()

val insertData = spark.read.parquet("/tmp/1563959377699.parquet")

insertData.write.format("org.apache.hudi")

// 设置主键列名

.option(DataSourceWriteOptions.RECORDKEY_FIELD_OPT_KEY, "rowkey")

// 设置数据更新时间的列名

.option(DataSourceWriteOptions.PRECOMBINE_FIELD_OPT_KEY, "lastupdatedttm")

// 表名称设置

.option(HoodieWriteConfig.TABLE_NAME, "test")

// 并行度参数设置

.option("hoodie.insert.shuffle.parallelism", "2")

.option("hoodie.upsert.shuffle.parallelism", "2")

.mode(SaveMode.Append)

// 写入路径设置

.save("/tmp/hudi");

}

// 带分区upsert

@Test

def upsertPartition(): Unit = {

val spark = SparkSession.builder.appName("upsert partition").config("spark.serializer", "org.apache.spark.serializer.KryoSerializer").master("local[3]").getOrCreate()

val upsertData = Util.readFromTxtByLineToDf(spark, "/home/huangjing/soft/git/experiment/hudi-test/src/main/resources/test_update_data.txt")

upsertData.write.format("org.apache.hudi").option(DataSourceWriteOptions.RECORDKEY_FIELD_OPT_KEY, "rowkey")

.option(DataSourceWriteOptions.PRECOMBINE_FIELD_OPT_KEY, "lastupdatedttm")

// 分区列设置

.option(DataSourceWriteOptions.PARTITIONPATH_FIELD_OPT_KEY, "dt")

.option(HoodieWriteConfig.TABLE_NAME, "test_partition")

.option(HoodieIndexConfig.INDEX_TYPE_PROP, HoodieIndex.IndexType.GLOBAL_BLOOM.name())

.option("hoodie.insert.shuffle.parallelism", "2")

.option("hoodie.upsert.shuffle.parallelism", "2")

.mode(SaveMode.Append)

.save("/tmp/hudi");

}

5.1.3 delete操作(删除数据)

@Test

def delete(): Unit = {

val spark = SparkSession.builder.appName("delta insert").config("spark.serializer", "org.apache.spark.serializer.KryoSerializer").master("local[3]").getOrCreate()

val deleteData = spark.read.parquet("/tmp/1563959377698.parquet")

deleteData.write.format("com.uber.hoodie")

// 设置主键列名

.option(DataSourceWriteOptions.RECORDKEY_FIELD_OPT_KEY, "rowkey")

// 设置数据更新时间的列名

.option(DataSourceWriteOptions.PRECOMBINE_FIELD_OPT_KEY, "lastupdatedttm")

// 表名称设置

.option(HoodieWriteConfig.TABLE_NAME, "test")

// 硬删除配置

.option(DataSourceWriteOptions.PAYLOAD_CLASS_OPT_KEY, "org.apache.hudi.EmptyHoodieRecordPayload")

}

删除操作分为软删除和硬删除配置在这里查看:http://hudi.apache.org/cn/docs/0.5.0-writing_data.html#%E5%88%A0%E9%99%A4%E6%95%B0%E6%8D%AE

5.1.4 query操作(查询数据)

@Test

def query(): Unit = {

val basePath = "/tmp/hudi"

val spark = SparkSession.builder.appName("query insert").config("spark.serializer", "org.apache.spark.serializer.KryoSerializer").master("local[3]").getOrCreate()

val tripsSnapshotDF = spark.

read.

format("org.apache.hudi").

load(basePath + "/*/*")

tripsSnapshotDF.show()

}

5.1.5 同步至Hive

@Test

def hiveSync(): Unit = {

val spark = SparkSession.builder.appName("delta hiveSync").config("spark.serializer", "org.apache.spark.serializer.KryoSerializer").master("local[3]").getOrCreate()

val upsertData = Util.readFromTxtByLineToDf(spark, "/home/huangjing/soft/git/experiment/hudi-test/src/main/resources/hive_sync.txt")

upsertData.write.format("org.apache.hudi")

// 设置主键列名

.option(DataSourceWriteOptions.RECORDKEY_FIELD_OPT_KEY, "rowkey")

// 设置数据更新时间的列名

.option(DataSourceWriteOptions.PRECOMBINE_FIELD_OPT_KEY, "lastupdatedttm")

// 分区列设置

.option(DataSourceWriteOptions.PARTITIONPATH_FIELD_OPT_KEY, "dt")

// 设置要同步的hive库名

.option(DataSourceWriteOptions.HIVE_DATABASE_OPT_KEY, "hj_repl")

// 设置要同步的hive表名

.option(DataSourceWriteOptions.HIVE_TABLE_OPT_KEY, "test_partition")

// 设置数据集注册并同步到hive

.option(DataSourceWriteOptions.HIVE_SYNC_ENABLED_OPT_KEY, "true")

// 设置当分区变更时,当前数据的分区目录是否变更

.option(HoodieIndexConfig.BLOOM_INDEX_UPDATE_PARTITION_PATH, "true")

// 设置要同步的分区列名

.option(DataSourceWriteOptions.HIVE_PARTITION_FIELDS_OPT_KEY, "dt")

// 设置jdbc 连接同步

.option(DataSourceWriteOptions.HIVE_URL_OPT_KEY, "jdbc:hive2://localhost:10000")

// hudi表名称设置

.option(HoodieWriteConfig.TABLE_NAME, "test_partition")

// 用于将分区字段值提取到Hive分区列中的类,这里我选择使用当前分区的值同步

.option(DataSourceWriteOptions.HIVE_PARTITION_EXTRACTOR_CLASS_OPT_KEY, "org.apache.hudi.hive.MultiPartKeysValueExtractor")

// 设置索引类型目前有HBASE,INMEMORY,BLOOM,GLOBAL_BLOOM 四种索引 为了保证分区变更后能找到必须设置全局GLOBAL_BLOOM

.option(HoodieIndexConfig.INDEX_TYPE_PROP, HoodieIndex.IndexType.GLOBAL_BLOOM.name())

// 并行度参数设置

.option("hoodie.insert.shuffle.parallelism", "2")

.option("hoodie.upsert.shuffle.parallelism", "2")

.mode(SaveMode.Append)

.save("/tmp/hudi");

}

@Test

def hiveSyncMergeOnReadByUtil(): Unit = {

val args: Array[String] = Array("--jdbc-url",

"jdbc:hive2://hj:10000",

"--partition-value-extractor",

"org.apache.hudi.hive.MultiPartKeysValueExtractor",

"--user", "hive", "--pass", "hive",

"--partitioned-by", "dt", "--base-path",

"/tmp/hudi_merge_on_read", "--database", "hj_repl",

"--table", "test_partition_merge_on_read")

HiveSyncTool.main(args)

}

这里可以选择使用spark 或者hudi-hive包中的hiveSynTool进行同步,hiveSynTool类其实就是run_sync_tool.sh运行时调用的。hudi 和hive同步时保证hive目标表不存在,同步其实就是建立外表的过程。

5.1.6 Hive查询读优化视图和增量视图

@Test

def hiveViewRead(): Unit = {

// 目标表

val sourceTable = "test_partition"

// 增量视图开始时间点

val fromCommitTime = "20200220094506"

// 获取当前增量视图后几个提交批次

val maxCommits = "2"

Class.forName("org.apache.hive.jdbc.HiveDriver")

val prop = new Properties()

prop.put("user", "hive")

prop.put("password", "hive")

val conn = DriverManager.getConnection("jdbc:hive2://localhost:10000/hj_repl", prop)

val stmt = conn.createStatement

// 这里设置增量视图参数

stmt.execute("set hive.input.format=org.apache.hudi.hadoop.hive.HoodieCombineHiveInputFormat")

// Allow queries without partition predicate

stmt.execute("set hive.strict.checks.large.query=false")

// Dont gather stats for the table created

stmt.execute("set hive.stats.autogather=false")

// Set the hoodie modie

stmt.execute("set hoodie." + sourceTable + ".consume.mode=INCREMENTAL")

// Set the from commit time

stmt.execute("set hoodie." + sourceTable + ".consume.start.timestamp=" + fromCommitTime)

// Set number of commits to pull

stmt.execute("set hoodie." + sourceTable + ".consume.max.commits=" + maxCommits)

val rs = stmt.executeQuery("select * from " + sourceTable)

val metaData = rs.getMetaData

val count = metaData.getColumnCount

while (rs.next()) {

for (i <- 1 to count) {

println(metaData.getColumnName(i) + ":" + rs.getObject(i).toString)

}

println("-----------------------------------------------------------")

}

rs.close()

stmt.close()

conn.close()

}

5.1.7 Presto查询读优化视图(暂不支持增量视图)

@Test

def prestoViewRead(): Unit = {

// 目标表

val sourceTable = "test_partition"

Class.forName("com.facebook.presto.jdbc.PrestoDriver")

val conn = DriverManager.getConnection("jdbc:presto://hj:7670/hive/hj_repl", "hive", null)

val stmt = conn.createStatement

val rs = stmt.executeQuery("select * from " + sourceTable)

val metaData = rs.getMetaData

val count = metaData.getColumnCount

while (rs.next()) {

for (i <- 1 to count) {

println(metaData.getColumnName(i) + ":" + rs.getObject(i).toString)

}

println("-----------------------------------------------------------")

}

rs.close()

stmt.close()

conn.close()

}

6. 问题整理

1. merg on read 问题

merge on read 要配置option(DataSourceWriteOptions.TABLE_TYPE_OPT_KEY, DataSourceWriteOptions.MOR_TABLE_TYPE_OPT_VAL)才会生效,配置为option(HoodieTableConfig.HOODIE_TABLE_TYPE_PROP_NAME, HoodieTableType.MERGE_ON_READ.name())将不会生效。

2. spark pom 依赖问题

不要引入spark-hive 的依赖里面包含了hive 1.2.1的相关jar包,而hudi 要求的版本是2.x版本。如果一定要使用请排除相关依赖。

3. hive视图同步问题

代码与hive视图同步时resources要加入hive-site.xml 配置文件,不然同步hive metastore 会报错。

————————————————

原文链接:https://blog.csdn.net/h335146502/article/details/104485494/

Apache+Hudi入门指南(含代码示例)_h335146502的专栏-CSDN博客_hudi部署

Apache Hudi集成Spark SQL抢先体验

1. 摘要

社区小伙伴一直期待的Hudi整合Spark SQL的PR正在积极Review中并已经快接近尾声,Hudi集成Spark SQL预计会在下个版本正式发布,在集成Spark SQL后,会极大方便用户对Hudi表的DDL/DML操作,下面就来看看如何使用Spark SQL操作Hudi表。

2. 环境准备

首先需要将PR拉取到本地打包,生成SPARK_BUNDLE_JAR(hudi-spark-bundle_2.11-0.9.0-SNAPSHOT.jar)包

2.1 启动spark-sql

在配置完spark环境后可通过如下命令启动spark-sql

spark-sql --jars $PATH_TO_SPARK_BUNDLE_JAR

--conf 'spark.serializer=org.apache.spark.serializer.KryoSerializer'

--conf 'spark.sql.extensions=org.apache.spark.sql.hudi.HoodieSparkSessionExtension'

2.2 设置并发度

由于Hudi默认upsert/insert/delete的并发度是1500,对于演示的小规模数据集可设置更小的并发度。

set hoodie.upsert.shuffle.parallelism = 1;

set hoodie.insert.shuffle.parallelism = 1;

set hoodie.delete.shuffle.parallelism = 1;

同时设置不同步Hudi表元数据

set hoodie.datasource.meta.sync.enable=false;

3. Create Table

使用如下SQL创建表

create table test_hudi_table (

id int,

name string,

price double,

ts long,

dt string

) using hudi

partitioned by (dt)

options (

primaryKey = 'id',

type = 'mor'

)

location 'file:///tmp/test_hudi_table'

说明:表类型为MOR,主键为id,分区字段为dt,合并字段默认为ts。

创建Hudi表后查看创建的Hudi表

show create table test_hudi_table4. Insert Into

4.1 Insert

使用如下SQL插入一条记录

INSERT INTO test_hudi_table

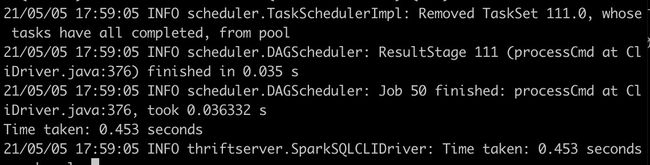

SELECT 1 AS id, 'hudi' AS name, 10 AS price, 1000 AS ts, '2021-05-05' AS dtinsert完成后查看Hudi表本地目录结构,生成的元数据、分区和数据与Spark Datasource写入均相同。

4.2 Select

使用如下SQL查询Hudi表数据

select * from test_hudi_table

查询结果如下

5. Update

5.1 Update

使用如下SQL将id为1的price字段值变更为20

update test_hudi_table set price = 20.0 where id = 1

5.2 Select

再次查询Hudi表数据

select * from test_hudi_table

查询结果如下,可以看到price已经变成了20.0

查看Hudi表的本地目录结构如下,可以看到在update之后又生成了一个deltacommit,同时生成了一个增量log文件。

6. Delete

6.1 Delete

使用如下SQL将id=1的记录删除

delete from test_hudi_table where id = 1

查看Hudi表的本地目录结构如下,可以看到delete之后又生成了一个deltacommit,同时生成了一个增量log文件。

6.2 Select

再次查询Hudi表

select * from test_hudi_table;

查询结果如下,可以看到已经查询不到任何数据了,表明Hudi表中已经不存在任何记录了。

7. Merge Into

7.1 Merge Into Insert

使用如下SQL向test_hudi_table插入数据

merge into test_hudi_table as t0

using (

select 1 as id, 'a1' as name, 10 as price, 1000 as ts, '2021-03-21' as dt

) as s0

on t0.id = s0.id

when not matched and s0.id % 2 = 1 then insert *

7.2 Select

查询Hudi表数据

select * from test_hudi_table

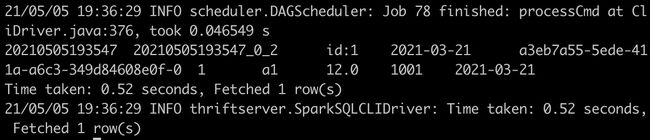

查询结果如下,可以看到Hudi表中存在一条记录

7.4 Merge Into Update

使用如下SQL更新数据

merge into test_hudi_table as t0

using (

select 1 as id, 'a1' as name, 12 as price, 1001 as ts, '2021-03-21' as dt

) as s0

on t0.id = s0.id

when matched and s0.id % 2 = 1 then update set *

7.5 Select

查询Hudi表

select * from test_hudi_table

查询结果如下,可以看到Hudi表中的分区已经更新了

7.6 Merge Into Delete

使用如下SQL删除数据

merge into test_hudi_table t0

using (

select 1 as s_id, 'a2' as s_name, 15 as s_price, 1001 as s_ts, '2021-03-21' as dt

) s0

on t0.id = s0.s_id

when matched and s_ts = 1001 then delete

查询结果如下,可以看到Hudi表中已经没有数据了

8. 删除表

使用如下命令删除Hudi表

drop table test_hudi_table;

使用show tables查看表是否存在

show tables;

可以看到已经没有表了

9. 总结

通过上面示例简单展示了通过Spark SQL Insert/Update/Delete Hudi表数据,通过SQL方式可以非常方便地操作Hudi表,降低了使用Hudi的门槛。另外Hudi集成Spark SQL工作将继续完善语法,尽量对标Snowflake和BigQuery的语法,如插入多张表(INSERT ALL WHEN condition1 INTO t1 WHEN condition2 into t2),变更Schema以及CALL Cleaner、CALL Clustering等Hudi表服务。