Spark SQL: Using RDD, DataFrame, and DataSet in Detail

Preface

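In Spark Core, running an application first requires building the context object SparkContext. Spark SQL can be understood as a wrapper around Spark Core: it wraps not only the data model but also the context object.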

In older versions, Spark SQL provided two entry points for SQL queries: SQLContext, for Spark's own SQL queries, and HiveContext, for queries against Hive.

SparkSession is Spark's newest entry point for SQL queries. It is essentially a combination of SQLContext and HiveContext, so the APIs available on SQLContext and HiveContext can also be used on SparkSession.

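SparkSession wraps a SparkContext internally, so the actual computation is still carried out by the SparkContext. When you use spark-shell, the framework automatically creates a SparkSession object named spark, just as it automatically provides sc for the SparkContext.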
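No spark object exists outside spark-shell, so a standalone program has to create one itself. The following is a minimal sketch that assumes a local master. The object name SessionDemo and its method names are hypothetical. The sketch builds a session with SparkSession.builder() and then runs an RDD job through spark.sparkContext, which is the SparkContext the session wraps.

```scala
import org.apache.spark.sql.SparkSession

// SessionDemo and its methods are hypothetical names used only for illustration
object SessionDemo {
  // Build a local session, or return the one that is already active
  def buildSession(): SparkSession =
    SparkSession.builder()
      .master("local[*]")
      .appName("SessionDemo")
      .getOrCreate()

  // RDD work goes through the SparkContext wrapped by the session
  def sumWithSparkContext(spark: SparkSession): Long = {
    val sc = spark.sparkContext
    sc.parallelize(1 to 10).map(_.toLong).reduce(_ + _)
  }

  def main(args: Array[String]): Unit = {
    val spark = buildSession()
    println(sumWithSparkContext(spark))
    spark.stop()
  }
}
```

getOrCreate() returns the already-active session when one exists. As a result, calling buildSession() twice in the same JVM gives back the same underlying SparkContext.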

I. DataFrame

Spark SQL's DataFrame API lets us work with DataFrames without having to register temporary tables or write SQL expressions. The DataFrame API offers both transformation and action operations.

Creating a DataFrame

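In Spark SQL, SparkSession is the entry point for creating DataFrames and executing SQL. A DataFrame can be created in three ways: from a Spark data source, by converting an existing RDD, or by querying a Hive table.

- Create from a Spark data source;

- View the file-based data source formats Spark supports;

- Read a JSON file to create a DataFrame;

- Create a user.json file in Spark's bin/data directory;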
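In spark-shell, typing spark.read. and pressing Tab lists the reader methods, among them csv, format, jdbc, json, load, orc, parquet, table, text, and textFile. The sketch below writes a small JSON Lines file, meaning one JSON object per line, which is the layout spark.read.json expects by default. Its three records are an assumption chosen to match the outputs shown in this article. The sketch then reads the file twice: once with the shorthand json method and once with the generic format(...).load(...) form. The temporary path and the name ReadJsonDemo are illustrative.

```scala
import java.nio.charset.StandardCharsets
import java.nio.file.{Files, Path}
import org.apache.spark.sql.{DataFrame, SparkSession}

// ReadJsonDemo is a hypothetical name used only for illustration
object ReadJsonDemo {
  // Assumed contents of user.json: one JSON object per line
  val lines: String =
    """{"username":"zhangsan","age":20}
      |{"username":"lisi","age":30}
      |{"username":"wangwu","age":40}""".stripMargin

  // Write the sample into a fresh temporary directory and return the file path
  def writeSample(): Path = {
    val file = Files.createTempDirectory("spark-json").resolve("user.json")
    Files.write(file, lines.getBytes(StandardCharsets.UTF_8))
    file
  }

  // The shorthand reader and the generic reader load the same data
  def readBoth(spark: SparkSession, path: String): (DataFrame, DataFrame) =
    (spark.read.json(path), spark.read.format("json").load(path))

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder().master("local[*]").appName("ReadJsonDemo").getOrCreate()
    val (viaJson, viaFormat) = readBoth(spark, writeSample().toString)
    viaJson.printSchema() // age is inferred as long (bigint)
    viaFormat.show()
    spark.stop()
  }
}
```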

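Note: when data comes from memory, Spark knows its concrete type, and a number is treated as Int by default. A number read from a file has no declared type, so Spark infers it as bigint. A bigint column maps to Scala's Long. It cannot be narrowed to Int implicitly, for example when converting to a Dataset of a case class with an Int field; that requires an explicit cast.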
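A sketch of the note above. It uses two hypothetical case classes, UserInt and UserLong, and parses JSON text held in memory, which Spark still infers as bigint. Converting with as[UserInt] is rejected by Spark's up-cast check. Because the exact point of failure (at as[...] or at the first action) may vary by version, the whole expression is wrapped in Try. as[UserLong] works, and so does an explicit cast to int.

```scala
import org.apache.spark.sql.{DataFrame, SparkSession}
import scala.util.Try

// BigintDemo, UserInt and UserLong are hypothetical names used only for illustration
object BigintDemo {
  case class UserInt(username: String, age: Int)
  case class UserLong(username: String, age: Long)

  // JSON parsed from an in-memory string: numbers are still inferred as bigint
  def fromJson(spark: SparkSession): DataFrame = {
    import spark.implicits._
    spark.read.json(Seq("""{"username":"zhangsan","age":20}""").toDS())
  }

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder().master("local[*]").appName("BigintDemo").getOrCreate()
    import spark.implicits._
    val df = fromJson(spark)

    // bigint -> Int would narrow the value, so Spark refuses the conversion
    println(Try(df.as[UserInt].collect()).isFailure)
    // bigint -> Long matches
    println(df.as[UserLong].collect().toList)
    // An explicit cast makes the Int version work
    println(df.withColumn("age", $"age".cast("int")).as[UserInt].collect().toList)

    // Numbers from an in-memory Seq keep their Scala type (Int)
    Seq(("lisi", 30)).toDF("username", "age").printSchema()
    spark.stop()
  }
}
```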

SQL syntax

The SQL style means querying data with SQL statements. This style of query needs a temporary view or a global view to query against.
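The difference in scope between the two kinds of view can be checked in a few lines. This sketch uses an in-memory copy of the three sample users in place of data/user.json, and the object name ViewScopeDemo is hypothetical. It calls createOrReplaceGlobalTempView, a variant of createGlobalTempView that does not fail when the view already exists. A second session obtained with spark.newSession() cannot see the session-scoped view. It can, however, read the global one through the global_temp database.

```scala
import org.apache.spark.sql.SparkSession
import scala.util.Try

// ViewScopeDemo is a hypothetical name used only for illustration
object ViewScopeDemo {
  // Returns (is the temp view visible from a new session?, rows read there via the global view)
  def check(spark: SparkSession): (Boolean, Long) = {
    import spark.implicits._
    val df = Seq((20L, "zhangsan"), (30L, "lisi"), (40L, "wangwu")).toDF("age", "username")

    df.createOrReplaceTempView("people")       // visible only in `spark`
    df.createOrReplaceGlobalTempView("people") // registered in the global_temp database

    val other = spark.newSession()
    val tempVisible = Try(other.sql("SELECT * FROM people").count()).isSuccess
    val globalRows = other.sql("SELECT * FROM global_temp.people").count()
    (tempVisible, globalRows)
  }

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder().master("local[*]").appName("ViewScopeDemo").getOrCreate()
    println(check(spark))
    spark.sql("SELECT * FROM people").show() // the creating session still sees its temp view
    spark.stop()
  }
}
```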

1. Read a JSON file to create a DataFrame

scala> val df = spark.read.json("data/user.json")
df: org.apache.spark.sql.DataFrame = [age: bigint, username: string]

2. Create a temporary view from the DataFrame

scala> df.createOrReplaceTempView("people")

3. Query the whole table with SQL

scala> val sqlDF = spark.sql("SELECT * FROM people")
sqlDF: org.apache.spark.sql.DataFrame = [age: bigint, username: string]

Note: an ordinary temporary view is scoped to the session that created it. To make a view visible to the whole application, use a global temporary view. A global view must be accessed by its fully qualified name, for example global_temp.people.

4. Create a global temporary view from the DataFrame

scala> df.createGlobalTempView("people")

5. Query the whole table with SQL, from the current session and from a new session

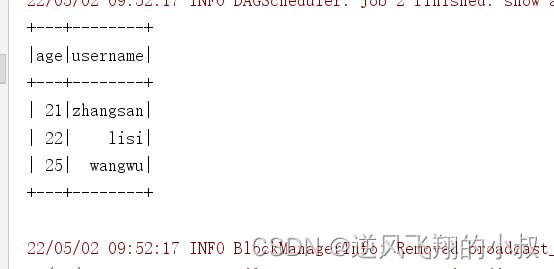

scala> spark.sql("SELECT * FROM global_temp.people").show()
+---+--------+
|age|username|
+---+--------+
| 20|zhangsan|
| 30|    lisi|
| 40|  wangwu|
+---+--------+

scala> spark.newSession().sql("SELECT * FROM global_temp.people").show()
+---+--------+
|age|username|
+---+--------+
| 20|zhangsan|
| 30|    lisi|
| 40|  wangwu|
+---+--------+

DSL syntax

DataFrame provides a domain-specific language (DSL) for managing structured data. The DSL is available in Scala, Java, Python, and R, and the DSL style does not require creating temporary views.
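To show that the two styles express the same query, the sketch below runs one query both ways. The query selects users older than 25, sorted by name. It assumes an in-memory stand-in for data/user.json and the hypothetical object name SqlVsDslDemo. Only the SQL version has to register a view first.

```scala
import org.apache.spark.sql.{DataFrame, SparkSession}

// SqlVsDslDemo is a hypothetical name used only for illustration
object SqlVsDslDemo {
  // In-memory stand-in for data/user.json
  def sample(spark: SparkSession): DataFrame = {
    import spark.implicits._
    Seq((20L, "zhangsan"), (30L, "lisi"), (40L, "wangwu")).toDF("age", "username")
  }

  // SQL style: a view has to be registered first
  def viaSql(spark: SparkSession, df: DataFrame): List[String] = {
    df.createOrReplaceTempView("people")
    spark.sql("SELECT username FROM people WHERE age > 25 ORDER BY username")
      .collect().map(_.getString(0)).toList
  }

  // DSL style: the same query as method calls, no view needed
  def viaDsl(spark: SparkSession, df: DataFrame): List[String] = {
    import spark.implicits._
    df.filter($"age" > 25).select("username").orderBy("username")
      .collect().map(_.getString(0)).toList
  }

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder().master("local[*]").appName("SqlVsDslDemo").getOrCreate()
    val df = sample(spark)
    println(viaSql(spark, df))
    println(viaDsl(spark, df))
    spark.stop()
  }
}
```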

1. Create a DataFrame

scala> val df = spark.read.json("data/user.json")
df: org.apache.spark.sql.DataFrame = [age: bigint, username: string]

2. View the DataFrame's schema

scala> df.printSchema
root
 |-- age: long (nullable = true)
 |-- username: string (nullable = true)

3. View only the "username" column

scala> df.select("username").show()
+--------+
|username|
+--------+
|zhangsan|
|    lisi|
|  wangwu|
+--------+

4. View the "username" column together with "age + 1"

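scala> df.select($"username",$"age" + 1).show
scala> df.select('username, 'age + 1).show()
scala> df.select('username, 'age + 1 as "newage").show()
+--------+---------+
|username|(age + 1)|
+--------+---------+
|zhangsan|       21|
|    lisi|       31|
|  wangwu|       41|
+--------+---------+

The first two commands print the table above. The third prints the same values, with the computed column named newage.

Note: when a computation is involved, every column in the select must be written as a column expression. Use either $ ($"age") or a symbol, which is a single quote followed by the field name ('age). The reason is that select accepts either all plain string column names or all Column expressions, not a mix of the two.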
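Beyond $ and the single-quote symbol, the Column API offers several other ways to refer to columns. The sketch below assumes an in-memory copy of the sample data and the hypothetical object name ColumnFormsDemo. It computes username and age + 1, aliased as newage, in five equivalent ways: with $, with functions.col, with df("age") (Dataset.apply), with functions.expr, and with selectExpr. The symbol form is left out because symbol literals are deprecated in Scala 2.13.

```scala
import org.apache.spark.sql.{DataFrame, SparkSession}
import org.apache.spark.sql.functions.{col, expr}

// ColumnFormsDemo is a hypothetical name used only for illustration
object ColumnFormsDemo {
  // Five equivalent projections of username and age + 1 (aliased as newage)
  def variants(spark: SparkSession): Seq[DataFrame] = {
    import spark.implicits._
    val df = Seq(("zhangsan", 20L), ("lisi", 30L), ("wangwu", 40L)).toDF("username", "age")
    Seq(
      df.select($"username", ($"age" + 1).as("newage")),         // $ string interpolator
      df.select(col("username"), (col("age") + 1).as("newage")), // functions.col
      df.select(df("username"), (df("age") + 1).as("newage")),   // column taken from this DataFrame
      df.select(col("username"), expr("age + 1").as("newage")),  // SQL expression turned into a Column
      df.selectExpr("username", "age + 1 AS newage")             // every column as a SQL string
    )
  }

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder().master("local[*]").appName("ColumnFormsDemo").getOrCreate()
    variants(spark).foreach(_.show())
    spark.stop()
  }
}
```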

5. View the rows where "age" is greater than 30

scala> df.filter($"age">30).show
+---+--------+
|age|username|
+---+--------+
| 40|  wangwu|
+---+--------+

6. Group by "age" and count the rows in each group

scala> df.groupBy("age").count.show
+---+-----+
|age|count|
+---+-----+
| 20|    1|
| 30|    1|
| 40|    1|
+---+-----+

Converting an RDD to a DataFrame

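When developing a program in IDEA, any interoperation between an RDD and a DataFrame or Dataset requires the following import:

import spark.implicits._

Here spark is not a Scala package name. It is the name of the variable holding the SparkSession object you created, so the SparkSession must be created before the import. The spark object also cannot be declared with var, because Scala can only import members of a stable identifier, such as an object declared with val.

spark-shell performs this import automatically, so it is not needed there.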
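A minimal IDE-style sketch of the rules above, with the hypothetical names ImplicitsDemo and Person. The session is created first and held in a val, and only then are its implicits imported. The case class is declared as an object member rather than inside main. A case class declared inside main is commonly reported to fail with toDF, because Scala provides no TypeTag for method-local classes; treat that explanation as an assumption.

```scala
import org.apache.spark.sql.{DataFrame, SparkSession}

// ImplicitsDemo and Person are hypothetical names used only for illustration
object ImplicitsDemo {
  // Declared outside any method so that Spark can derive an encoder for it
  case class Person(name: String, age: Int)

  def toPeopleDF(spark: SparkSession): DataFrame = {
    // Allowed because `spark` is a stable identifier (a parameter behaves like a val)
    import spark.implicits._
    val rdd = spark.sparkContext.makeRDD(List(("zhangsan", 30), ("lisi", 40)))
    rdd.map { case (name, age) => Person(name, age) }.toDF()
  }

  def main(args: Array[String]): Unit = {
    // Create the session first, then import its implicits
    val spark = SparkSession.builder().master("local[*]").appName("ImplicitsDemo").getOrCreate()
    import spark.implicits._
    toPeopleDF(spark).show()
    // The same import also adds toDF to an RDD of a basic type
    spark.sparkContext.makeRDD(List(1, 2, 3)).toDF("id").show()
    spark.stop()
  }
}
```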

scala> val idRDD = sc.textFile("data/id.txt")
scala> idRDD.toDF("id").show
+---+
| id|
+---+
|  1|
|  2|
|  3|
|  4|
+---+

In real development, an RDD is usually converted to a DataFrame through a case class:

scala> case class User(name:String, age:Int)
defined class User

scala> sc.makeRDD(List(("zhangsan",30), ("lisi",40))).map(t=>User(t._1, t._2)).toDF.show
+--------+---+
|    name|age|
+--------+---+
|zhangsan| 30|
|    lisi| 40|
+--------+---+

Converting a DataFrame to an RDD

A DataFrame is essentially a wrapper around an RDD, so the underlying RDD can be obtained directly:

scala> val df = sc.makeRDD(List(("zhangsan",30), ("lisi",40))).map(t=>User(t._1, t._2)).toDF
df: org.apache.spark.sql.DataFrame = [name: string, age: int]

scala> val rdd = df.rdd
rdd: org.apache.spark.rdd.RDD[org.apache.spark.sql.Row] = MapPartitionsRDD[46] at rdd at <console>:25

scala> val array = rdd.collect
array: Array[org.apache.spark.sql.Row] = Array([zhangsan,30], [lisi,40])

Note: the elements of the resulting RDD are of type Row.

scala> array(0)
res28: org.apache.spark.sql.Row = [zhangsan,30]

scala> array(0)(0)
res29: Any = zhangsan

scala> array(0).getAs[String]("name")
res30: String = zhangsan

II. DataSet

A DataSet is a strongly typed collection of data, so you must supply the corresponding type information.
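To make "strongly typed" concrete, consider a Dataset[User]. In a lambda such as _.age, the field access is checked by the Scala compiler. A misspelled column name in an untyped DataFrame operation, by contrast, is only caught when Spark analyzes the query at run time. The sketch assumes the hypothetical names TypedDemo and User.

```scala
import org.apache.spark.sql.{Dataset, SparkSession}
import scala.util.Try

// TypedDemo and User are hypothetical names used only for illustration
object TypedDemo {
  case class User(name: String, age: Int)

  // Returns (ages + 1 computed through the typed API, whether the misspelled column failed)
  def run(spark: SparkSession): (List[Int], Boolean) = {
    import spark.implicits._
    val ds: Dataset[User] = Seq(User("zhangsan", 30), User("lisi", 40)).toDS()

    // Typed: the lambda receives a User, so `_.age` is checked at compile time
    val nextAges = ds.map(_.age + 1).collect().toList
    // ds.map(_.agee + 1) would not compile

    // Untyped: the typo is only detected when Spark analyzes the plan
    val typoFails = Try(ds.toDF().select("agee").collect()).isFailure
    (nextAges, typoFails)
  }

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder().master("local[*]").appName("TypedDemo").getOrCreate()
    println(run(spark))
    spark.stop()
  }
}
```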

Creating a DataSet

1. Create a DataSet from a sequence of case class instances

scala> case class Person(name: String, age: Long)
defined class Person

scala> val caseClassDS = Seq(Person("zhangsan",2)).toDS()
caseClassDS: org.apache.spark.sql.Dataset[Person] = [name: string, age: bigint]

scala> caseClassDS.show
+--------+---+
|    name|age|
+--------+---+
|zhangsan|  2|
+--------+---+

2. Create a DataSet from a sequence of a basic type

scala> val ds = Seq(1,2,3,4,5).toDS
ds: org.apache.spark.sql.Dataset[Int] = [value: int]

scala> ds.show
+-----+
|value|
+-----+
|    1|
|    2|
|    3|
|    4|
|    5|
+-----+

Note: in practice, a sequence is rarely converted into a DataSet. A DataSet is more often obtained from an RDD.

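Converting an RDD to a DataSet

Spark SQL can automatically convert an RDD that contains case class instances into a DataSet. The case class defines the structure of the table, and reflection turns its properties into the table's column names. A case class can also contain complex structures such as Seq or Array.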
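A sketch of the last point, using a hypothetical case class Student with a Seq[String] field and a hypothetical object NestedCaseClassDemo. When the RDD is converted with toDS(), the Seq field should become an array column, and printSchema shows it as array<string>.

```scala
import org.apache.spark.sql.{Dataset, SparkSession}

// NestedCaseClassDemo and Student are hypothetical names used only for illustration
object NestedCaseClassDemo {
  // A case class with a collection-typed field
  case class Student(name: String, courses: Seq[String])

  def build(spark: SparkSession): Dataset[Student] = {
    import spark.implicits._
    spark.sparkContext
      .makeRDD(List(("zhangsan", Seq("math", "art")), ("lisi", Seq("music"))))
      .map { case (name, courses) => Student(name, courses) }
      .toDS()
  }

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder().master("local[*]").appName("NestedCaseClassDemo").getOrCreate()
    val ds = build(spark)
    ds.printSchema() // courses is listed as an array of strings
    ds.show(false)
    spark.stop()
  }
}
```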

scala> case class User(name:String, age:Int)
defined class User

scala> sc.makeRDD(List(("zhangsan",30), ("lisi",49))).map(t=>User(t._1, t._2)).toDS
res11: org.apache.spark.sql.Dataset[User] = [name: string, age: int]

Converting a DataSet to an RDD

A DataSet is likewise a wrapper around an RDD, so the underlying RDD can be obtained directly:

scala> case class User(name:String, age:Int)
defined class User

scala> sc.makeRDD(List(("zhangsan",30), ("lisi",49))).map(t=>User(t._1, t._2)).toDS
res11: org.apache.spark.sql.Dataset[User] = [name: string, age: int]

scala> val rdd = res11.rdd
rdd: org.apache.spark.rdd.RDD[User] = MapPartitionsRDD[51] at rdd at <console>:25

scala> rdd.collect
res12: Array[User] = Array(User(zhangsan,30), User(lisi,49))

Converting between DataFrame and DataSet

A DataFrame is in fact a special case of a DataSet, so the two can be converted into each other.
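This sketch shows two consequences of that relationship, using the hypothetical names AliasDemo and User. First, Spark declares type DataFrame = Dataset[Row], so a DataFrame can be assigned to a Dataset[Row] without any conversion. Second, as[User] binds case class fields to columns by name rather than by position. For that reason, the columns below are deliberately in the opposite order to the fields.

```scala
import org.apache.spark.sql.{DataFrame, Dataset, Row, SparkSession}

// AliasDemo and User are hypothetical names used only for illustration
object AliasDemo {
  case class User(name: String, age: Int)

  def run(spark: SparkSession): (List[User], Long) = {
    import spark.implicits._
    // Columns in the opposite order to the case class fields
    val df: DataFrame = Seq((30, "zhangsan"), (49, "lisi")).toDF("age", "name")

    // DataFrame is an alias for Dataset[Row], so no conversion is needed
    val rows: Dataset[Row] = df

    // as[User] matches columns to fields by name
    val users = df.as[User].collect().toList
    (users, rows.count())
  }

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder().master("local[*]").appName("AliasDemo").getOrCreate()
    println(run(spark))
    spark.stop()
  }
}
```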

Converting a DataFrame to a DataSet

scala> case class User(name:String, age:Int)
defined class User

scala> val df = sc.makeRDD(List(("zhangsan",30), ("lisi",49))).toDF("name","age")
df: org.apache.spark.sql.DataFrame = [name: string, age: int]

scala> val ds = df.as[User]
ds: org.apache.spark.sql.Dataset[User] = [name: string, age: int]

Converting a DataSet to a DataFrame

scala> val ds = df.as[User]
ds: org.apache.spark.sql.Dataset[User] = [name: string, age: int]

scala> val df = ds.toDF
df: org.apache.spark.sql.DataFrame = [name: string, age: int]

The relationship among RDD, DataFrame, and DataSet

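Spark SQL gives us two new abstractions, DataFrame and DataSet. How do they differ from RDD? Start with the versions in which each one appeared:

- Spark 1.0 => RDD

- Spark 1.3 => DataFrame

- Spark 1.6 => Dataset

Given the same data, all three produce the same results. What differs is their execution efficiency and the way they execute. In later Spark versions, DataSet may gradually replace RDD and DataFrame as the single API. Since Spark 2.0, DataFrame has in fact already been defined as Dataset[Row].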
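The sketch below illustrates "same result, different execution" by computing the average age three ways, using in-memory data and the hypothetical names ThreeApisDemo and User. The RDD version runs plain Scala functions that Spark executes as given. The DataFrame and Dataset versions are planned by Spark SQL's Catalyst optimizer, although a typed lambda such as the one passed to map remains opaque to it. All three return the same value.

```scala
import org.apache.spark.sql.SparkSession
import org.apache.spark.sql.functions.avg

// ThreeApisDemo and User are hypothetical names used only for illustration
object ThreeApisDemo {
  case class User(name: String, age: Int)

  def averageAges(spark: SparkSession): (Double, Double, Double) = {
    import spark.implicits._
    val data = Seq(User("zhangsan", 20), User("lisi", 30), User("wangwu", 40))

    // RDD: plain Scala functions, executed as written
    val rdd = spark.sparkContext.parallelize(data)
    val rddAvg = rdd.map(_.age.toDouble).sum() / rdd.count()

    // DataFrame: an untyped aggregate that Catalyst can optimize
    val dfAvg = data.toDF().agg(avg("age")).first().getDouble(0)

    // Dataset: a typed map over User objects, then an aggregate on the resulting "value" column
    val dsAvg = data.toDS().map(_.age.toDouble).agg(avg("value")).first().getDouble(0)

    (rddAvg, dfAvg, dsAvg)
  }

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder().master("local[*]").appName("ThreeApisDemo").getOrCreate()
    println(averageAges(spark))
    spark.stop()
  }
}
```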

What the three have in common

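- RDD, DataFrame, and DataSet are all resilient distributed datasets on the Spark platform, and they make it convenient to process very large data;

- All three are lazy: creating them or applying transformations such as map does not execute anything immediately. Computation starts only when an action such as foreach is encountered;

- The three share many functions, such as filter and sorting;

- Many DataFrame and Dataset operations need import spark.implicits._, so import it right after creating the SparkSession object;

- All three run under Spark's memory management, which can spill intermediate data to disk when memory runs short. This reduces the risk of out-of-memory errors on large data, but data is cached only when cache() or persist() is called;

- All three have the concept of partitions;

- DataFrame and DataSet can both use pattern matching to obtain the value and type of each field;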
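A sketch of the laziness and pattern-matching points in the list above, using the hypothetical object name RowMatchDemo. The map is only a transformation, so nothing is computed until the collect() action runs. Inside the map, each Row is taken apart with Row's pattern-matching extractor.

```scala
import org.apache.spark.sql.{Row, SparkSession}

// RowMatchDemo is a hypothetical name used only for illustration
object RowMatchDemo {
  def describe(spark: SparkSession): List[String] = {
    import spark.implicits._
    val df = Seq(("zhangsan", 30), ("lisi", 40)).toDF("name", "age")

    // A transformation: this only records the computation, nothing runs yet
    val described = df.rdd.map {
      case Row(name: String, age: Int) => s"$name is $age"
      case other                       => s"unexpected row: $other"
    }

    // collect() is an action, so the computation happens here
    described.collect().toList
  }

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder().master("local[*]").appName("RowMatchDemo").getOrCreate()
    describe(spark).foreach(println)
    spark.stop()
  }
}
```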

Differences among the three

RDD

1. RDD is generally used together with Spark MLlib's RDD-based API (spark.mllib);

2. RDD does not support Spark SQL operations.

DataFrame

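1. Unlike RDD and Dataset, every row of a DataFrame has the fixed type Row. Column values cannot be accessed directly and can only be obtained by parsing the row;

2. DataFrame and DataSet are generally not used with the RDD-based spark.mllib API; the DataFrame-based spark.ml API is used with them instead;

3. DataFrame and DataSet both support Spark SQL operations such as select and groupBy. They can also be registered as temporary tables or views and queried with SQL statements;

4. DataFrame and DataSet support some very convenient ways of saving data. For example, they can be saved as CSV with a header row, which makes every column's field name clear at a glance.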
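A sketch of the CSV point, assuming a writable temporary directory and the hypothetical object name CsvHeaderDemo. Setting option("header", "true") on the writer makes it emit the column names as the first line. The same option on the reader turns that line back into column names. Without inferSchema, the columns are read back as strings.

```scala
import java.nio.file.Files
import org.apache.spark.sql.{DataFrame, SparkSession}

// CsvHeaderDemo is a hypothetical name used only for illustration
object CsvHeaderDemo {
  // Writes a small DataFrame as CSV with a header row, then reads it back
  def roundTrip(spark: SparkSession): DataFrame = {
    import spark.implicits._
    val df = Seq(("zhangsan", 30), ("lisi", 40)).toDF("name", "age")
    val out = Files.createTempDirectory("csv-demo").resolve("users").toString

    df.write
      .option("header", "true") // each part file starts with the column names
      .mode("overwrite")
      .csv(out)

    spark.read.option("header", "true").csv(out)
  }

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder().master("local[*]").appName("CsvHeaderDemo").getOrCreate()
    roundTrip(spark).show()
    spark.stop()
  }
}
```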

DataSet

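1. Dataset and DataFrame have exactly the same member functions; they differ only in the data type of each row. A DataFrame is simply a special case of Dataset: type DataFrame = Dataset[Row].

2. A DataFrame can also be called a Dataset[Row]. Each row is of type Row, and without parsing it there is no way to know which fields a row has or what type each field is. Specific fields can only be extracted with the getAs method shown earlier or with the pattern matching mentioned in the seventh item of the list of commonalities. In a Dataset, by contrast, the row type is not fixed in advance: once you define a case class, you can freely access the information in each row.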

Hands-on example

1. Add the dependencies (Maven coordinates, groupId:artifactId:version)

- org.codehaus.janino:janino:3.0.8

- org.apache.spark:spark-sql_2.12:3.0.0

2. Example code

Using a DataFrame

import org.apache.spark.SparkConf
import org.apache.spark.sql.{DataFrame, SparkSession}

object SparkSql1 {
  def main(args: Array[String]): Unit = {
    // Create the Spark SQL runtime environment
    val sparkConf = new SparkConf().setMaster("local[*]").setAppName("sparkSQL")
    val spark = SparkSession.builder().config(sparkConf).getOrCreate()
    import spark.implicits._

    // Display data through a DataFrame
    val df: DataFrame = spark.read.json("E:\\code-self\\spi\\datas\\user.json")
    df.show()

    // Query the DataFrame with SQL through a temporary view
    df.createTempView("user")
    spark.sql("select * from user").show()
    spark.sql("select avg(age) from user").show()

    spark.close()
  }
}

Run the code above and observe the output.

Using a DataSet

import org.apache.spark.SparkConf
import org.apache.spark.sql.{Dataset, SparkSession}

object SparkSql1 {
  def main(args: Array[String]): Unit = {
    // Create the Spark SQL runtime environment
    val sparkConf = new SparkConf().setMaster("local[*]").setAppName("sparkSQL")
    val spark = SparkSession.builder().config(sparkConf).getOrCreate()
    import spark.implicits._

    // Create a DataSet from a sequence of a basic type
    val seq = Seq(1, 2, 3, 4)
    val dsVal: Dataset[Int] = seq.toDS()
    dsVal.show()

    spark.close()
  }

  case class User(id: Int, name: String, age: Int)
}

Converting among RDD, DataFrame, and DataSet

import org.apache.spark.SparkConf
import org.apache.spark.rdd.RDD
import org.apache.spark.sql.{DataFrame, Dataset, Row, SparkSession}

object SparkSql1 {
  def main(args: Array[String]): Unit = {
    // Create the Spark SQL runtime environment
    val sparkConf = new SparkConf().setMaster("local[*]").setAppName("sparkSQL")
    val spark = SparkSession.builder().config(sparkConf).getOrCreate()
    import spark.implicits._

    // Display data through a DataFrame
    /*val df: DataFrame = spark.read.json("E:\\code-self\\spi\\datas\\user.json")
    df.show()

    // Query the DataFrame with SQL
    df.createTempView("user")
    spark.sql("select * from user").show()
    spark.sql("select avg(age) from user").show()*/

    // Query the DataFrame with the DSL
    //df.select("age","username").show()

    // DataSet
    /*val seq = Seq(1,2,3,4)
    val dsVal = seq.toDS()
    dsVal.show()*/

    // RDD -> DataFrame
    val rdd1: RDD[(Int, String, Int)] = spark.sparkContext.makeRDD(List(
      (1, "张三", 30), (2, "李四", 25), (3, "王五", 28)
    ))
    val dataFrame: DataFrame = rdd1.toDF("id", "name", "age")

    // DataFrame -> RDD (the elements are Rows)
    val rdd: RDD[Row] = dataFrame.rdd

    // DataFrame -> DataSet (requires a case class)
    val ds: Dataset[User] = dataFrame.as[User]

    // DataSet -> DataFrame
    val df2: DataFrame = ds.toDF()

    // RDD -> DataSet (requires a case class)
    val ds1: Dataset[User] = rdd1.map {
      case (id, name, age) => User(id, name, age)
    }.toDS()

    // DataSet -> RDD (the elements are User objects)
    val userRdd: RDD[User] = ds1.rdd

    spark.close()
  }

  // Case class used by the DataSet conversions above
  case class User(id: Int, name: String, age: Int)
}