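[DETR] Training on Your Own Dataset: Practice Notes

DETR (DEtection TRansformer): Training on Your Own Dataset, Practice Notes & Troubleshooting Summary

DETR (DEtection TRansformer) is a transformer-based, end-to-end object detector. It needs no NMS post-processing step and no anchors.

These notes train DETR on the NWPU VHR-10 dataset.

The NWPU VHR-10 dataset covers ten object classes and contains 650 positive images and 150 negative images (the negative images are not used here).

NWPU_CATEGORIES=['airplane','ship','storage tank','baseball diamond','tennis court',
                 'basketball court','ground track field','harbor','bridge','vehicle']

Code: https://github.com/facebookresearch/detr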

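Contents

- DETR (DEtection TRansformer): Training on Your Own Dataset, Practice Notes & Troubleshooting Summary
  - I. Training
    - 1. Dataset preparation
    - 2. Environment setup
    - 3. Generating the .pth file
    - 4. Parameter changes
    - 5. Training
  - II. Bugs encountered
    - 1. KeyError: 'area'
  - III. Evaluation and prediction
    - 1. Evaluation
    - 2. Prediction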

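I. Training

1. Dataset preparation

DETR expects the dataset in COCO format. Images and labels are stored in four folders (training set, test set, validation set, and annotations); the annotations folder holds the label files in JSON format.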
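As a quick sanity check, the layout can be verified before training. The sketch below assumes the folder and file names that the DETR repository's COCO loader looks for under --coco_path (train2017, val2017, annotations/instances_train2017.json and annotations/instances_val2017.json). The helper name check_coco_layout is hypothetical, and the root path is only an example.

```python
from pathlib import Path

# Entries the DETR COCO loader is assumed to look for under --coco_path
REQUIRED = [
    "train2017",
    "val2017",
    "annotations/instances_train2017.json",
    "annotations/instances_val2017.json",
]

def check_coco_layout(root):
    """Return the required entries that are missing under root (hypothetical helper)."""
    root = Path(root)
    return [rel for rel in REQUIRED if not (root / rel).exists()]

if __name__ == "__main__":
    # Example path; replace it with your own dataset root
    missing = check_coco_layout("/home/NWPUVHR-10")
    print("missing:", missing if missing else "nothing, layout looks complete")
```

An empty result means all four entries exist; it says nothing about whether the JSON contents are valid.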

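The script below converts the label files of several datasets (RSOD, NWPU, DIOR, and YOLO-format datasets) to COCO JSON. Create a new file tojson.py and use the code to generate the JSON files you need.

To generate instances_train2017.json:

(a) Change the default of --image_path to the path of train2017;

(b) Change the default of --annotation_path to the label folder (the train and val labels all live in this folder, so this path does not need to change when generating instances_val2017.json);

(c) Change the default of --dataset to the name of your dataset, NWPU;

(d) Change the default of --save to where the JSON file should be saved: …/NWPUVHR-10/annotations/instances_train2017.json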

import os
import cv2
import json
import argparse
from tqdm import tqdm
import xml.etree.ElementTree as ET

# Top-level keys of a COCO annotation file, and the fields of each entry type
COCO_DICT=['images','annotations','categories']
IMAGES_DICT=['file_name','height','width','id']
ANNOTATIONS_DICT=['image_id','iscrowd','area','bbox','category_id','id']
CATEGORIES_DICT=['id','name']

## Example COCO category entries:
## {'supercategory': 'person', 'id': 1, 'name': 'person'}
## {'supercategory': 'vehicle', 'id': 2, 'name': 'bicycle'}

YOLO_CATEGORIES=['person']
RSOD_CATEGORIES=['aircraft','playground','overpass','oiltank']
NWPU_CATEGORIES=['airplane','ship','storage tank','baseball diamond','tennis court',
                 'basketball court','ground track field','harbor','bridge','vehicle']
VOC_CATEGORIES=['aeroplane','bicycle','bird','boat','bottle','bus','car','cat','chair','cow',
                'diningtable','dog','horse','motorbike','person','pottedplant','sheep','sofa','train','tvmonitor']
DIOR_CATEGORIES=['golffield','Expressway-toll-station','vehicle','trainstation','chimney','storagetank',
                 'ship','harbor','airplane','groundtrackfield','tenniscourt','dam','basketballcourt',
                 'Expressway-Service-area','stadium','airport','baseballfield','bridge','windmill','overpass']

parser=argparse.ArgumentParser(description='2COCO')
#parser.add_argument('--image_path',type=str,default=r'T:/shujuji/DIOR/JPEGImages-trainval/',help='config file')
parser.add_argument('--image_path',type=str,default=r'G:/NWPU VHR-10 dataset/positive image set/',help='image folder (must end with /)')
#parser.add_argument('--annotation_path',type=str,default=r'T:/shujuji/DIOR/Annotations/',help='config file')
parser.add_argument('--annotation_path',type=str,default=r'G:/NWPU VHR-10 dataset/ground truth/',help='label folder (must end with /)')
parser.add_argument('--dataset',type=str,default='NWPU',help='dataset name: RSOD, NWPU, DIOR or YOLO')
parser.add_argument('--save',type=str,default='G:/NWPU VHR-10 dataset/instances_train2017.json',help='output JSON path')
args=parser.parse_args()

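def load_json(path):
    # Debug helper: print the top-level keys and the annotation list of a COCO JSON file
    with open(path,'r') as f:
        json_dict=json.load(f)
    for i in json_dict:
        print(i)
    print(json_dict['annotations'])

def save_json(json_dict,path):
    print('SAVE_JSON...')
    with open(path,'w') as f:
        json.dump(json_dict,f)
    print('SUCCESSFUL_SAVE_JSON:',path)

def load_image(path):
    # Return (height, width) of the image
    img=cv2.imread(path)
    if img is None: # cv2.imread returns None when the file cannot be read
        raise FileNotFoundError(path)
    return img.shape[0],img.shape[1]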

def generate_categories_dict(category): # CATEGORIES_DICT=['id','name']
    print('GENERATE_CATEGORIES_DICT...')
    # Category ids start at 1
    return [{CATEGORIES_DICT[0]:category.index(x)+1,CATEGORIES_DICT[1]:x} for x in category]

def generate_images_dict(imagelist,image_path,start_image_id=11725): # IMAGES_DICT=['file_name','height','width','id']
    print('GENERATE_IMAGES_DICT...')
    images_dict=[]
    with tqdm(total=len(imagelist)) as load_bar:
        for idx,x in enumerate(imagelist): # x is the image file name
            height,width=load_image(image_path+x) # read each image only once
            image_info={IMAGES_DICT[0]:x,IMAGES_DICT[1]:height,
                        IMAGES_DICT[2]:width,IMAGES_DICT[3]:idx+start_image_id}
            load_bar.update(1)
            images_dict.append(image_info)
    return images_dict

def DIOR_Dataset(image_path,annotation_path,start_image_id=11725,start_id=0):
    categories_dict=generate_categories_dict(DIOR_CATEGORIES) # category ids start at 1
    imgname=os.listdir(image_path)
    images_dict=generate_images_dict(imgname,image_path,start_image_id) # image ids start at start_image_id
    print('GENERATE_ANNOTATIONS_DICT...') # build the COCO annotations; fields are listed in ANNOTATIONS_DICT
    annotations_dict=[]
    ann_id=start_id
    for i in images_dict:
        image_id=i['id']
        print(image_id)
        image_name=i['file_name']
        annotation_xml=annotation_path+image_name.split('.')[0]+'.xml'
        tree=ET.parse(annotation_xml)
        root=tree.getroot()
        for j in root.findall('object'):
            category=j.find('name').text
            category_id=DIOR_CATEGORIES.index(category)+1 # +1 so ids match generate_categories_dict (start at 1)
            x_min=float(j.find('bndbox').find('xmin').text)
            y_min=float(j.find('bndbox').find('ymin').text)
            w=float(j.find('bndbox').find('xmax').text)-x_min
            h=float(j.find('bndbox').find('ymax').text)-y_min
            bbox=[x_min,y_min,w,h]
            # 'area' is required by DETR's COCO loader (see the KeyError in section II)
            ann={'image_id':image_id,'iscrowd':0,'area':w*h,'bbox':bbox,'category_id':category_id,'id':ann_id}
            annotations_dict.append(ann)
            ann_id=ann_id+1
    print('SUCCESSFUL_GENERATE_DIOR_JSON')
    return {COCO_DICT[0]:images_dict,COCO_DICT[1]:annotations_dict,COCO_DICT[2]:categories_dict}

def NWPU_Dataset(image_path,annotation_path,start_image_id=0,start_id=0):
    categories_dict=generate_categories_dict(NWPU_CATEGORIES)
    imgname=os.listdir(image_path)
    images_dict=generate_images_dict(imgname,image_path,start_image_id)
    print('GENERATE_ANNOTATIONS_DICT...')
    annotations_dict=[]
    ann_id=start_id
    for i in images_dict:
        image_id=i['id']
        image_name=i['file_name']
        annotation_txt=annotation_path+image_name.split('.')[0]+'.txt'
        with open(annotation_txt,'r') as txt:
            lines=txt.readlines()
        for j in lines: # each line is parsed as (x1,y1),(x2,y2),class_id
            if not j.strip(): # skip blank lines
                continue
            category_id=int(j.split(',')[4])
            category=NWPU_CATEGORIES[category_id-1]
            print(category_id,' ',category)
            x_min=float(j.split(',')[0].split('(')[1])
            y_min=float(j.split(',')[1].split(')')[0])
            w=float(j.split(',')[2].split('(')[1])-x_min
            h=float(j.split(',')[3].split(')')[0])-y_min
            bbox=[x_min,y_min,w,h]
            # 'area' is required by DETR's COCO loader (see the KeyError in section II)
            ann={'image_id':image_id,'iscrowd':0,'area':w*h,'bbox':bbox,'category_id':category_id,'id':ann_id}
            ann_id=ann_id+1
            annotations_dict.append(ann)
    print('SUCCESSFUL_GENERATE_NWPU_JSON')
    return {COCO_DICT[0]:images_dict,COCO_DICT[1]:annotations_dict,COCO_DICT[2]:categories_dict}

def YOLO_Dataset(image_path,annotation_path,start_image_id=0,start_id=0):
    categories_dict=generate_categories_dict(YOLO_CATEGORIES)
    imgname=os.listdir(image_path)
    images_dict=generate_images_dict(imgname,image_path,start_image_id) # pass start_image_id instead of falling back to the default
    print('GENERATE_ANNOTATIONS_DICT...')
    annotations_dict=[]
    ann_id=start_id
    for i in images_dict:
        image_id=i['id']
        image_name=i['file_name']
        W,H=i['width'],i['height']
        annotation_txt=annotation_path+image_name.split('.')[0]+'.txt'
        with open(annotation_txt,'r') as txt:
            lines=txt.readlines()
        for j in lines: # each line: class x_center y_center width height (normalized to 0..1)
            if not j.strip(): # skip blank lines
                continue
            fields=j.split()
            category_id=int(fields[0])+1 # YOLO class indices start at 0, COCO ids here start at 1
            x=float(fields[1])
            y=float(fields[2])
            w=float(fields[3])
            h=float(fields[4])
            # Convert the normalized center format to absolute [x_min, y_min, width, height]
            x_min=(x-w/2)*W
            y_min=(y-h/2)*H
            w=w*W
            h=h*H
            area=w*h
            bbox=[x_min,y_min,w,h]
            ann={'image_id':image_id,'iscrowd':0,'area':area,'bbox':bbox,'category_id':category_id,'id':ann_id}
            annotations_dict.append(ann)
            ann_id=ann_id+1
    print('SUCCESSFUL_GENERATE_YOLO_JSON')
    return {COCO_DICT[0]:images_dict,COCO_DICT[1]:annotations_dict,COCO_DICT[2]:categories_dict}

def RSOD_Dataset(image_path,annotation_path,start_image_id=0,start_id=0):
    categories_dict=generate_categories_dict(RSOD_CATEGORIES)
    imgname=os.listdir(image_path)
    images_dict=generate_images_dict(imgname,image_path,start_image_id)
    print('GENERATE_ANNOTATIONS_DICT...')
    annotations_dict=[]
    ann_id=start_id
    for i in images_dict:
        image_id=i['id']
        image_name=i['file_name']
        annotation_txt=annotation_path+image_name.split('.')[0]+'.txt'
        with open(annotation_txt,'r') as txt:
            lines=txt.readlines()
        for j in lines: # tab-separated fields; category, x_min, y_min, x_max, y_max are fields 1..5
            if not j.strip(): # skip blank lines
                continue
            category=j.split('\t')[1]
            category_id=RSOD_CATEGORIES.index(category)+1
            x_min=float(j.split('\t')[2])
            y_min=float(j.split('\t')[3])
            w=float(j.split('\t')[4])-x_min
            h=float(j.split('\t')[5])-y_min
            bbox=[x_min,y_min,w,h]
            # 'area' is required by DETR's COCO loader (see the KeyError in section II)
            ann={'image_id':image_id,'iscrowd':0,'area':w*h,'bbox':bbox,'category_id':category_id,'id':ann_id}
            annotations_dict.append(ann)
            ann_id=ann_id+1
    print('SUCCESSFUL_GENERATE_RSOD_JSON')
    return {COCO_DICT[0]:images_dict,COCO_DICT[1]:annotations_dict,COCO_DICT[2]:categories_dict}

if __name__=='__main__':
    dataset=args.dataset # dataset name
    save=args.save # where to save the JSON file
    image_path=args.image_path # image folder
    annotation_path=args.annotation_path # label folder
    if dataset=='RSOD':
        json_dict=RSOD_Dataset(image_path,annotation_path,0)
    elif dataset=='NWPU':
        json_dict=NWPU_Dataset(image_path,annotation_path,0)
    elif dataset=='DIOR':
        json_dict=DIOR_Dataset(image_path,annotation_path,11725)
    elif dataset=='YOLO':
        json_dict=YOLO_Dataset(image_path,annotation_path,0)
    else:
        raise ValueError('unknown dataset: '+dataset)
    save_json(json_dict,save)

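Run the script to generate instances_train2017.json, then change the paths (the image path to val2017 and the save path to instances_val2017.json) and run it again to generate instances_val2017.json.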
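Before training, it can be worth checking the generated files. The following is a minimal sketch, not part of DETR: validate_coco and validate_file are hypothetical helpers that report annotations missing one of the expected keys, or pointing at an image or category id that does not exist in the file.

```python
import json

# Keys each annotation is expected to carry (same list as ANNOTATIONS_DICT in tojson.py)
REQUIRED_KEYS = ("image_id", "iscrowd", "area", "bbox", "category_id", "id")

def validate_coco(coco):
    """Return a list of human-readable problems found in a COCO-style dict."""
    problems = []
    image_ids = {img["id"] for img in coco["images"]}
    category_ids = {cat["id"] for cat in coco["categories"]}
    for ann in coco["annotations"]:
        missing = [k for k in REQUIRED_KEYS if k not in ann]
        if missing:
            problems.append(f"annotation {ann.get('id')}: missing keys {missing}")
        if ann.get("image_id") not in image_ids:
            problems.append(f"annotation {ann.get('id')}: unknown image_id {ann.get('image_id')}")
        if ann.get("category_id") not in category_ids:
            problems.append(f"annotation {ann.get('id')}: unknown category_id {ann.get('category_id')}")
    return problems

def validate_file(path):
    """Load a JSON file from disk and validate it."""
    with open(path, "r") as f:
        return validate_coco(json.load(f))

if __name__ == "__main__":
    # Tiny in-memory example with a deliberately missing 'area' key
    sample = {
        "images": [{"id": 0}],
        "categories": [{"id": 1, "name": "airplane"}],
        "annotations": [{"image_id": 0, "iscrowd": 0, "bbox": [1, 2, 3, 4], "category_id": 1, "id": 0}],
    }
    for problem in validate_coco(sample):
        print(problem)
```

For a real file, call validate_file on the path of instances_train2017.json or instances_val2017.json; an empty list means none of these checks failed.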

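If your dataset is in VOC format, you can use the code given by the author of reference 1.

2. Environment setup

Activate the environment used for this project, then install the dependencies with:

pip install -r requirements.txt

3. Generating the .pth file

First download the pretrained weights. The official repository provides two kinds of models, DETR and DETR-DC5; pick one and download it.

Create a new file mydataset.py with the code below, and set num_class to your number of classes + 1.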

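import torch

pretrained_weights = torch.load('detr-r50-e632da11.pth', map_location='cpu')

# NWPU dataset, 10 classes
num_class = 11 # number of classes + 1 (category ids run 1..10, so this is the largest id + 1)
# The head gets num_class + 1 outputs; the extra one is DETR's "no object" (background) class
pretrained_weights["model"]["class_embed.weight"].resize_(num_class+1, 256)
pretrained_weights["model"]["class_embed.bias"].resize_(num_class+1)
torch.save(pretrained_weights, "detr-r50_%d.pth"%num_class)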

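Run it to generate detr-r50_11.pth.

4. Parameter changes

Edit models/detr.py and set num_classes (around line 313) for your dataset. It should equal the num_class value used in step 3 (11 for NWPU, i.e. number of classes + 1 when category ids start at 1); otherwise the classification head will not match the resized checkpoint.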
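The relation between the category ids in the JSON file and the two sizes above can be sketched as follows. This assumes, as the comments in models/detr.py describe, that DETR's num_classes means the largest category id + 1, and that the classification head has one extra output for "no object". The helper name head_sizes is hypothetical.

```python
def head_sizes(category_ids):
    """Return (num_classes, class_embed_rows) for an iterable of COCO category ids."""
    num_classes = max(category_ids) + 1   # ids 1..10 give 11
    class_embed_rows = num_classes + 1    # one extra row for the "no object" class
    return num_classes, class_embed_rows

if __name__ == "__main__":
    nwpu_ids = list(range(1, 11))         # tojson.py numbers the NWPU categories 1..10
    print(head_sizes(nwpu_ids))           # (11, 12)
```

With these numbers, num_class = 11 in mydataset.py and the resize to 12 rows agree with num_classes = 11 in models/detr.py.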

5.训练

进行训练需要设置一些参数,可以在main.py文件中直接修改或者使用命令行

【a】main.py文件直接修改

修改main.py文件的epochs、lr、batch_size等训练参数

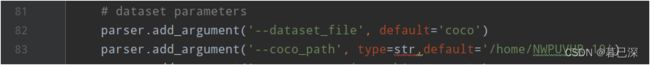

修改自己的数据集路径:

设置输出路径:

![]()

修改resume为自己的预训练权重文件路径

![]()

【b】命令行

python main.py --dataset_file "coco" --coco_path "/myData/coco" --epoch 300 --lr=1e-4 --batch_size=8 --num_workers=4 --output_dir="outputs" --resume="detr_r50_11.pth"

运行main.py文件

II. Bugs encountered

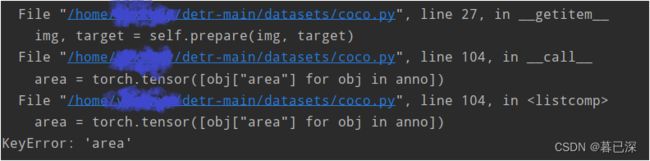

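1. KeyError: 'area'

area = torch.tensor([obj["area"] for obj in anno])

KeyError: 'area'

The statement in the traceback shows that the dict obj has no key named area. I checked my label file and it had indeed been generated incorrectly: the annotations carried no area field. After regenerating it with area included, training ran successfully.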
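If regenerating is inconvenient, the missing field can also be filled in afterwards. The sketch below is only one possible workaround: it sets area to the width times the height of each bbox, which is all that box-only labels can provide. add_missing_area and patch_file are hypothetical helpers.

```python
import json

def add_missing_area(coco):
    """Fill in 'area' as bbox width * height where it is absent; return the number patched."""
    patched = 0
    for ann in coco["annotations"]:
        if "area" not in ann:
            _, _, w, h = ann["bbox"]      # COCO bbox is [x_min, y_min, width, height]
            ann["area"] = w * h
            patched += 1
    return patched

def patch_file(path):
    """Patch a COCO JSON file in place and return the number of annotations changed."""
    with open(path, "r") as f:
        coco = json.load(f)
    patched = add_missing_area(coco)
    with open(path, "w") as f:
        json.dump(coco, f)
    return patched

if __name__ == "__main__":
    sample = {"annotations": [{"id": 0, "bbox": [10.0, 20.0, 30.0, 40.0]}]}
    print(add_missing_area(sample), sample["annotations"][0]["area"])  # 1 1200.0
```

Annotations that already have an area are left untouched, so running it twice is harmless.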

III. Evaluation and prediction

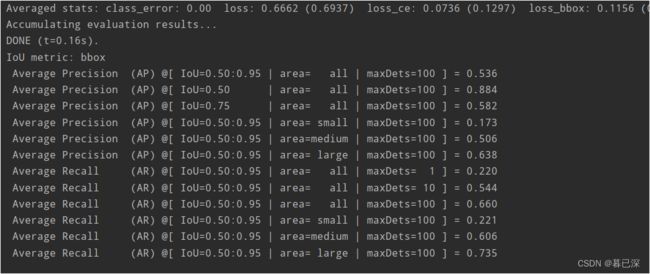

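1. Evaluation

During training DETR already runs an accuracy evaluation after every epoch, so the results can simply be read from the console output or from the intermediate files it writes. You can also evaluate again with main.py: in the command below, set --resume to the checkpoint produced by the last epoch and --coco_path to your dataset path.

python main.py --batch_size 6 --no_aux_loss --eval --resume /home/detr-main/outputs/checkpoint0299.pth --coco_path /home/NWPUVHR-10

Evaluation results:

After 300 epochs of training, AP at IoU=0.5 is around 88%.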
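To read the per-epoch numbers without scrolling through console output, the log file can be parsed. This sketch assumes that main.py appends one JSON object per epoch to log.txt in the output directory, and that the COCO bbox statistics are stored under the key test_coco_eval_bbox with index 1 holding AP at IoU=0.5. Check both assumptions against your own log first. ap50_per_epoch is a hypothetical helper.

```python
import json

def ap50_per_epoch(lines):
    """Return (epoch, AP at IoU=0.5) pairs from an iterable of JSON log lines."""
    results = []
    for line in lines:
        line = line.strip()
        if not line:                      # skip blank lines
            continue
        stats = json.loads(line)
        bbox_stats = stats.get("test_coco_eval_bbox")
        if bbox_stats:                    # epochs without evaluation results are skipped
            results.append((stats.get("epoch"), bbox_stats[1]))
    return results

if __name__ == "__main__":
    # Synthetic line with made-up numbers, only to show the expected shape
    demo = ['{"epoch": 0, "test_coco_eval_bbox": [0.1, 0.25, 0.05]}']
    print(ap50_per_epoch(demo))           # [(0, 0.25)]
```

For a real run, open the log file (for example outputs/log.txt if --output_dir was "outputs") and pass the file object to ap50_per_epoch.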

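2. Prediction

Use the code from reference 3. Put the images to predict in one folder; a single run outputs the predictions for all of them.

The parameters to modify are:

Line 30: the backbone I had downloaded was resnet50, so set it accordingly (the DETR weights with a ResNet-50 backbone were already downloaded for training and placed in the project's main folder).

Around lines 70-80:

--coco_path: set to your dataset path

--outputdir: set to the folder created for saving the predicted images

--resume: set to the path of the trained model file

Change the paths on lines 182 and 187 to the folder of images to predict.

PS: I run on a server that cannot send images back for display, which raised an error, so I commented out these two lines: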

#cv2.imshow("images",image)

#cv2.waitKey(1)

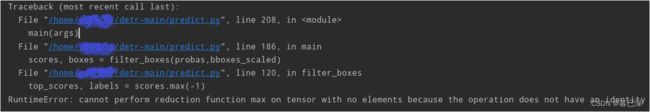

You may also hit an error during prediction; it is caused by an image in which no object was detected.
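One way to avoid this, sketched under the assumption that the inference script filters predictions by a confidence threshold before drawing them, is to check whether anything survived the filter and skip the image otherwise. The names keep_detections and process_image, the threshold of 0.7, and the data layout (parallel lists of scores and boxes) are all hypothetical and not taken from the referenced script.

```python
def keep_detections(scores, boxes, threshold=0.7):
    """Return the (score, box) pairs whose score exceeds the threshold."""
    return [(s, b) for s, b in zip(scores, boxes) if s > threshold]

def process_image(name, scores, boxes, threshold=0.7):
    """Return the kept detections, or an empty list (with a message) if none remain."""
    kept = keep_detections(scores, boxes, threshold)
    if not kept:
        # Nothing to draw: skip this image instead of indexing into an empty result
        print(f"{name}: no detections above {threshold}, skipping")
        return []
    return kept

if __name__ == "__main__":
    process_image("empty.jpg", [0.1, 0.3], [[0, 0, 5, 5], [1, 1, 4, 4]])
    print(process_image("plane.jpg", [0.9, 0.2], [[0, 0, 5, 5], [1, 1, 4, 4]]))
```

The drawing and saving code would then run only when the returned list is non-empty.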

Prediction results:

References:

1. Reproducing DEtection TRansformers (DETR) on Windows 10

2. How to train your own dataset with DETR (detection transformer)

3. A PyTorch inference program for DETR