k8s: Kubernetes binary multi-node deployment (built on the single-node deployment)

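- 1. Kubernetes cluster architecture and components
  - 1.1 Master components
    - 1.1.1 kube-apiserver
    - 1.1.2 kube-controller-manager
    - 1.1.3 kube-scheduler
    - 1.1.4 etcd
  - 1.2 Node components
    - 1.2.1 kubelet
    - 1.2.2 kube-proxy
    - 1.2.3 docker or rocket
- 2. Kubernetes cluster deployment
  - 2.1 Deployment process
  - 2.2 Experiment topology
- 3. Binary multi-node deployment
  - 3.1 Experiment environment plan
  - 3.2 Experiment steps
    - 1. K8S single-node deployment
    - 2. Multi-node deployment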
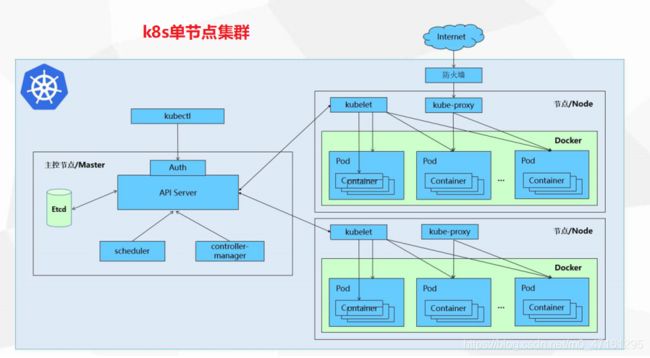

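1. Kubernetes cluster architecture and components

1.1 Master components

1.1.1 kube-apiserver

The Kubernetes API server is the single entry point to the cluster and the coordinator of all other components. It exposes its services as a RESTful API: every create, delete, update, query, and watch operation on a resource object is handled by the API server and then persisted to etcd.

1.1.2 kube-controller-manager

Handles the routine background tasks of the cluster. Each resource has a corresponding controller, and the controller manager is responsible for managing those controllers.

1.1.3 kube-scheduler

Selects a Node for each newly created Pod according to the scheduling algorithm. It can be deployed anywhere: on the same node as the other Master components or on a different node.

1.1.4 etcd

A distributed key-value store that holds the cluster state, such as Pod and Service object information.

1.2 Node components

1.2.1 kubelet

The kubelet is the Master's agent on each Node. It manages the lifecycle of the containers running on that machine: creating containers, mounting Pod volumes, downloading Secrets, and reporting container and node status. The kubelet turns each Pod into a set of containers.

1.2.2 kube-proxy

Implements the Pod network proxy on each Node, maintaining the network rules and layer-4 load balancing.

1.2.3 docker or rocket (rkt)

The container engine that actually runs the containers.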

2. Kubernetes cluster deployment

2.1 Deployment process

1. The three officially supported deployment methods

2. Kubernetes platform environment planning

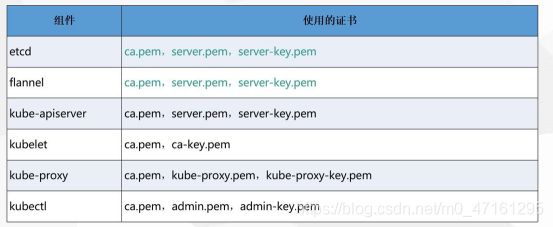

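3. Self-signed SSL certificates

4. etcd database cluster deployment

5. Install Docker on the nodes

6. Flannel container cluster network deployment

7. Deploy the Master components

8. Deploy the Node components

9. Deploy a test example

10. Deploy the Web UI (Dashboard)

11. Deploy the in-cluster DNS service (CoreDNS)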

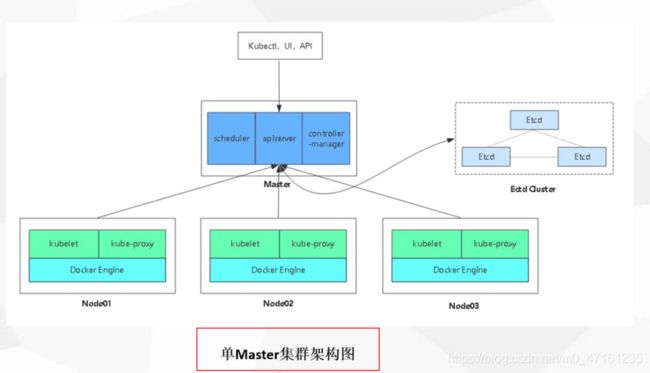

2.2 实验拓扑图

3.二进制多节点部署

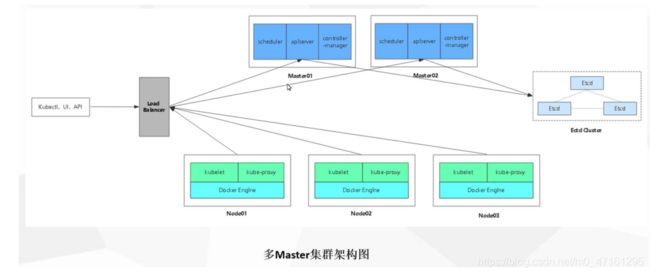

3.1 实验平台环境规划

master1:192.168.200.100

master2:192.168.200.90

node1:192.168.200.110

node2:192.168.200.120

load balancer (master): 192.168.200.80

load balancer (backup): 192.168.200.70

vip: 192.168.200.200
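Every machine in this plan is meant to sit in the same 192.168.200.0/24 lab network, so a one-character slip in an address is easy to miss. The following is a minimal sketch of a check for that; the function name `check_plan` and the host labels are illustrative, and the backup load balancer is taken as 192.168.200.70, the address used later in the API server certificate.

```shell
#!/bin/sh
# Hypothetical helper: read "name ip" pairs on stdin and print every host
# whose address does not start with the expected prefix.
check_plan() {
    prefix=$1   # e.g. "192.168.200."
    while read -r name ip; do
        [ -z "$name" ] && continue
        case $ip in
            "$prefix"*) ;;   # address is inside the lab subnet
            *) echo "$name $ip is outside ${prefix}0/24" ;;
        esac
    done
}

# The plan from this section; a correct plan prints nothing.
check_plan "192.168.200." <<'EOF'
master1 192.168.200.100
master2 192.168.200.90
node1 192.168.200.110
node2 192.168.200.120
lb-master 192.168.200.80
lb-backup 192.168.200.70
vip 192.168.200.200
EOF
```

The check only compares string prefixes, which is enough for a /24; other prefix lengths would need real address arithmetic.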

3.2 Experiment steps

1. K8S single-node deployment

master01 192.168.200.100

[root@localhost ~]# iptables -F

[root@localhost ~]# setenforce 0

[root@localhost ~]# hostnamectl set-hostname master01

[root@localhost ~]# su

[root@master01 ~]# mkdir k8s

[root@master01 ~]# cd k8s/

[root@master01 k8s]# rz -E

rz waiting to receive.

[root@master01 k8s]# ls

etcd-cert.sh etcd.sh

[root@master01 k8s]# mkdir etcd-cert

[root@master01 k8s]# ls

etcd-cert etcd-cert.sh etcd.sh

[root@master01 k8s]# mv etcd-cert.sh etcd-cert

[root@master01 k8s]# ls

etcd-cert etcd.sh

[root@master01 k8s]# cd /usr/local/bin/

[root@master01 bin]# ls

[root@master01 bin]# rz -E

rz waiting to receive.

[root@master01 bin]# ls

cfssl cfssl-certinfo cfssljson

[root@master01 bin]# chmod +x /usr/local/bin/cfssl /usr/local/bin/cfssljson /usr/local/bin/cfssl-certinfo

[root@master01 bin]# ls

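cfssl cfssl-certinfo cfssljson # cfssl: certificate generation tool; cfssljson: writes certificate files from the JSON it is given; cfssl-certinfo: displays certificate information

# Start creating the certificates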

[root@master01 bin]# cd ~/k8s/etcd-cert/

# Define the CA signing configuration

cat > ca-config.json <<EOF

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"www": {

"expiry": "87600h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

}

}

}

}

EOF
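The `expiry` values in this configuration are expressed in hours. As a quick sanity check, the sketch below converts such a value into days and years; the helper names are illustrative and not part of cfssl.

```shell
#!/bin/sh
# Convert a cfssl-style expiry such as "87600h" into whole days.
expiry_days() {
    hours=${1%h}            # strip the trailing "h"
    echo $(( hours / 24 ))
}

# Convert the same value into whole 365-day years.
expiry_years() {
    echo $(( $(expiry_days "$1") / 365 ))
}

echo "87600h = $(expiry_days 87600h) days, about $(expiry_years 87600h) years"
```

So the certificates signed with the `www` profile stay valid for roughly ten years.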

[root@master01 etcd-cert]# ls

ca-config.json etcd-cert.sh

# Define the CA certificate signing request

cat > ca-csr.json <<EOF

{

"CN": "etcd CA",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Beijing",

"ST": "Beijing"

}

]

}

EOF

[root@master01 etcd-cert]# ls

ca-config.json ca-csr.json etcd-cert.sh

# Generate the CA certificate; this produces ca-key.pem and ca.pem

[root@master01 etcd-cert]# cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

2020/10/08 00:46:06 [INFO] generating a new CA key and certificate from CSR

2020/10/08 00:46:06 [INFO] generate received request

2020/10/08 00:46:06 [INFO] received CSR

2020/10/08 00:46:06 [INFO] generating key: rsa-2048

2020/10/08 00:46:07 [INFO] encoded CSR

2020/10/08 00:46:07 [INFO] signed certificate with serial number 682011236265898836699745690623627317797100291414

[root@master01 etcd-cert]# ls

ca-config.json ca.csr ca-csr.json ca-key.pem ca.pem etcd-cert.sh

# List the three etcd nodes whose mutual communication the certificate must verify

cat > server-csr.json <<EOF

{

"CN": "etcd",

"hosts": [

"192.168.200.100",

"192.168.200.110",

"192.168.200.120"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing"

}

]

}

EOF

[root@master01 etcd-cert]# ls

ca-config.json ca.csr ca-csr.json ca-key.pem ca.pem etcd-cert.sh server-csr.json

# Generate the etcd certificate: server-key.pem and server.pem

[root@master01 etcd-cert]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=www server-csr.json | cfssljson -bare server

2020/10/08 00:47:56 [INFO] generate received request

2020/10/08 00:47:56 [INFO] received CSR

2020/10/08 00:47:56 [INFO] generating key: rsa-2048

2020/10/08 00:47:57 [INFO] encoded CSR

2020/10/08 00:47:57 [INFO] signed certificate with serial number 622675374353910529509628185945252518303577262750

2020/10/08 00:47:57 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for

websites. For more information see the Baseline Requirements for the Issuance and Management

of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);

specifically, section 10.2.3 ("Information Requirements").

[root@master01 etcd-cert]# ls

ca-config.json ca-csr.json ca.pem server.csr server-key.pem

ca.csr ca-key.pem etcd-cert.sh server-csr.json server.pem

# Download the etcd binary package (here it has already been placed in ~/k8s)

[root@master01 etcd-cert]# cd ~/k8s/

[root@master01 k8s]# ls

etcd-cert etcd-v3.3.10-linux-amd64.tar.gz kubernetes-server-linux-amd64.tar.gz

etcd.sh flannel-v0.10.0-linux-amd64.tar.gz

[root@master01 k8s]# tar zxvf etcd-v3.3.10-linux-amd64.tar.gz

[root@master01 k8s]# ls etcd-v3.3.10-linux-amd64

Documentation etcd etcdctl README-etcdctl.md README.md READMEv2-etcdctl.md

[root@master01 k8s]# mkdir /opt/etcd/{cfg,bin,ssl} -p # Create the etcd directories for configuration files, binaries, and certificates

[root@master01 k8s]# mv etcd-v3.3.10-linux-amd64/etcd etcd-v3.3.10-linux-amd64/etcdctl /opt/etcd/bin/

# Copy the certificates

[root@master01 k8s]# cp etcd-cert/*.pem /opt/etcd/ssl/

[root@master01 k8s]# ls /opt/etcd/ssl/

ca-key.pem ca.pem server-key.pem server.pem

# The etcd.sh script is shown below; when it is run it appears to hang while waiting for the other nodes to join

[root@master01 k8s]# vim etcd.sh

#!/bin/bash

# example: ./etcd.sh etcd01 192.168.1.10 etcd02=https://192.168.1.11:2380,etcd03=https://192.168.1.12:2380

ETCD_NAME=$1

ETCD_IP=$2

ETCD_CLUSTER=$3

WORK_DIR=/opt/etcd

cat <<EOF >$WORK_DIR/cfg/etcd

#[Member]

ETCD_NAME="${ETCD_NAME}"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://${ETCD_IP}:2380"

ETCD_LISTEN_CLIENT_URLS="https://${ETCD_IP}:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://${ETCD_IP}:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://${ETCD_IP}:2379"

ETCD_INITIAL_CLUSTER="etcd01=https://${ETCD_IP}:2380,${ETCD_CLUSTER}"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

EOF

cat <<EOF >/usr/lib/systemd/system/etcd.service

[Unit]

Description=Etcd Server

After=network.target

After=network-online.target

Wants=network-online.target

[Service]

Type=notify

EnvironmentFile=${WORK_DIR}/cfg/etcd

ExecStart=${WORK_DIR}/bin/etcd \

--name=\${ETCD_NAME} \

--data-dir=\${ETCD_DATA_DIR} \

--listen-peer-urls=\${ETCD_LISTEN_PEER_URLS} \

--listen-client-urls=\${ETCD_LISTEN_CLIENT_URLS},http://127.0.0.1:2379 \

--advertise-client-urls=\${ETCD_ADVERTISE_CLIENT_URLS} \

--initial-advertise-peer-urls=\${ETCD_INITIAL_ADVERTISE_PEER_URLS} \

--initial-cluster=\${ETCD_INITIAL_CLUSTER} \

--initial-cluster-token=\${ETCD_INITIAL_CLUSTER_TOKEN} \

--initial-cluster-state=new \

--cert-file=${WORK_DIR}/ssl/server.pem \

--key-file=${WORK_DIR}/ssl/server-key.pem \

--peer-cert-file=${WORK_DIR}/ssl/server.pem \

--peer-key-file=${WORK_DIR}/ssl/server-key.pem \

--trusted-ca-file=${WORK_DIR}/ssl/ca.pem \

--peer-trusted-ca-file=${WORK_DIR}/ssl/ca.pem

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl enable etcd

systemctl restart etcd

[root@master01 k8s]# bash etcd.sh etcd01 192.168.200.100 etcd02=https://192.168.200.110:2380,etcd03=https://192.168.200.120:2380

Created symlink from /etc/systemd/system/multi-user.target.wants/etcd.service to /usr/lib/systemd/system/etcd.service.
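The script builds the `ETCD_INITIAL_CLUSTER` value from its arguments, but it hard-codes the local member as `etcd01`, so it only produces a correct value on the first node. A minimal sketch of the same assembly with the member name taken from the first argument (the function name `initial_cluster` is hypothetical, not part of etcd.sh):

```shell
#!/bin/sh
# Build the --initial-cluster value from the local member plus its peers.
# Arguments: member name, member IP, comma-separated peer list.
initial_cluster() {
    name=$1; ip=$2; peers=$3
    echo "${name}=https://${ip}:2380,${peers}"
}

# Same arguments as the etcd.sh invocation above.
initial_cluster etcd01 192.168.200.100 \
    "etcd02=https://192.168.200.110:2380,etcd03=https://192.168.200.120:2380"
```

With these arguments the result matches the `--initial-cluster` flag visible in the etcd process listing.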

# Open another session; the etcd process is already running

[root@master01 ~]# ps -ef | grep etcd

root 13066 12731 0 00:59 pts/1 00:00:00 bash etcd.sh etcd01 192.168.200.100 etcd02=https://192.168.200.110:2380,etcd03=https://192.168.200.120:2380

root 13113 13066 0 00:59 pts/1 00:00:00 systemctl restart etcd

root 13119 1 4 00:59 ? 00:00:02 /opt/etcd/bin/etcd --name=etcd01 --data-dir=/var/lib/etcd/default.etcd --listen-peer-urls=https://192.168.200.100:2380 --listen-client-urls=https://192.168.200.100:2379,http://127.0.0.1:2379 --advertise-client-urls=https://192.168.200.100:2379 --initial-advertise-peer-urls=https://192.168.200.100:2380 --initial-cluster=etcd01=https://192.168.200.100:2380,etcd02=https://192.168.200.110:2380,etcd03=https://192.168.200.120:2380 --initial-cluster-token=etcd-cluster --initial-cluster-state=new --cert-file=/opt/etcd/ssl/server.pem --key-file=/opt/etcd/ssl/server-key.pem --peer-cert-file=/opt/etcd/ssl/server.pem --peer-key-file=/opt/etcd/ssl/server-key.pem --trusted-ca-file=/opt/etcd/ssl/ca.pem --peer-trusted-ca-file=/opt/etcd/ssl/ca.pem

root 13192 13149 0 01:00 pts/2 00:00:00 grep --color=auto etcd

# Copy the certificates to the other nodes

[root@master01 ~]# scp -r /opt/etcd/ root@192.168.200.110:/opt/

The authenticity of host '192.168.200.110 (192.168.200.110)' can't be established.

ECDSA key fingerprint is SHA256:eLdHi9BvCNVro0zGiYPq1F+Psfoo9V+9EDIvdZDR8vM.

ECDSA key fingerprint is MD5:2d:34:ae:97:fd:bc:af:4f:e1:6b:92:22:48:4d:69:b4.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added '192.168.200.110' (ECDSA) to the list of known hosts.

root@192.168.200.110's password:

etcd 100% 523 508.0KB/s 00:00

etcd 100% 18MB 85.5MB/s 00:00

etcdctl 100% 15MB 95.2MB/s 00:00

ca-key.pem 100% 1679 2.4MB/s 00:00

ca.pem 100% 1265 1.4MB/s 00:00

server-key.pem 100% 1679 651.0KB/s 00:00

server.pem 100% 1338 737.7KB/s 00:00

[root@master01 ~]# scp -r /opt/etcd/ root@192.168.200.120:/opt/

The authenticity of host '192.168.200.120 (192.168.200.120)' can't be established.

ECDSA key fingerprint is SHA256:dMvbIJyuN9aFqJR+OwwLY436gqKEgtipcBLofzOilgU.

ECDSA key fingerprint is MD5:7a:d8:f0:f5:c5:ff:95:36:11:fe:e8:b3:c0:dc:d7:2e.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added '192.168.200.120' (ECDSA) to the list of known hosts.

root@192.168.200.120's password:

etcd 100% 523 362.4KB/s 00:00

etcd 100% 18MB 95.3MB/s 00:00

etcdctl 100% 15MB 104.1MB/s 00:00

ca-key.pem 100% 1679 2.2MB/s 00:00

ca.pem 100% 1265 2.1MB/s 00:00

server-key.pem 100% 1679 715.4KB/s 00:00

server.pem 100% 1338 759.8KB/s 00:00

# Copy the systemd unit file to the other nodes

[root@master01 ~]# scp /usr/lib/systemd/system/etcd.service root@192.168.200.110:/usr/lib/systemd/system/

root@192.168.200.110's password:

etcd.service 100% 923 412.9KB/s 00:00

[root@master01 ~]# scp /usr/lib/systemd/system/etcd.service root@192.168.200.120:/usr/lib/systemd/system/

root@192.168.200.120's password:

etcd.service 100% 923 805.5KB/s 00:00

Start etcd

[root@master01 k8s]# systemctl start etcd

[root@master01 k8s]# systemctl enable etcd

[root@master01 k8s]# systemctl status etcd

● etcd.service - Etcd Server

Loaded: loaded (/usr/lib/systemd/system/etcd.service; enabled; vendor preset: disabled)

Active: active (running) since 四 2020-10-08 01:07:12 CST; 1min 44s ago

# Check the cluster status

[root@master01 k8s]# cd etcd-cert/ # The check must be run from the directory that holds the certificates

[root@master01 etcd-cert]# /opt/etcd/bin/etcdctl --ca-file=ca.pem --cert-file=server.pem --key-file=server-key.pem --endpoints="https://192.168.200.100:2379,https://192.168.200.110:2379,https://192.168.200.120:2379" cluster-health

member 6a670a4e5fc9896c is healthy: got healthy result from https://192.168.200.100:2379

member 8f01b24208072c50 is healthy: got healthy result from https://192.168.200.120:2379

member 9f5aa0e1c7d6b024 is healthy: got healthy result from https://192.168.200.110:2379

cluster is healthy
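For scripted use, the health report can be reduced to an exit status. The sketch below assumes the output format shown above (one `member ... is healthy` line per member, then `cluster is healthy`); the function name `cluster_ok` is illustrative.

```shell
#!/bin/sh
# Read `etcdctl cluster-health` output on stdin. Succeed only if the summary
# line is present and exactly the expected number of members report healthy.
cluster_ok() {
    expected=$1
    report=$(cat)
    healthy=$(printf '%s\n' "$report" | grep -c '^member .* is healthy')
    printf '%s\n' "$report" | grep -q '^cluster is healthy$' || return 1
    [ "$healthy" -eq "$expected" ]
}

# Demo with the output captured above; in practice, pipe etcdctl into it.
cluster_ok 3 <<'EOF' && echo "etcd cluster ready"
member 6a670a4e5fc9896c is healthy: got healthy result from https://192.168.200.100:2379
member 8f01b24208072c50 is healthy: got healthy result from https://192.168.200.120:2379
member 9f5aa0e1c7d6b024 is healthy: got healthy result from https://192.168.200.110:2379
cluster is healthy
EOF
```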

2. Configure node01 192.168.200.110

[root@promote ~]# iptables -F

[root@promote ~]# setenforce 0

[root@promote ~]# hostnamectl set-hostname node01

[root@promote ~]# su

# Edit the configuration on node01

[root@node01 ~]# vim /opt/etcd/cfg/etcd

#[Member]

ETCD_NAME="etcd02" ##02

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://192.168.200.110:2380" ## 110

ETCD_LISTEN_CLIENT_URLS="https://192.168.200.110:2379" ## 110

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.200.110:2380" ## 110

ETCD_ADVERTISE_CLIENT_URLS="https://192.168.200.110:2379" ## 110

ETCD_INITIAL_CLUSTER="etcd01=https://192.168.200.100:2380,etcd02=https://192.168.200.110:2380,etcd03=https://192.168.200.120:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

Start etcd

[root@node01 ~]# systemctl start etcd

[root@node01 ~]# systemctl enable etcd

Created symlink from /etc/systemd/system/multi-user.target.wants/etcd.service to /usr/lib/systemd/system/etcd.service.

[root@node01 ~]# systemctl status etcd

● etcd.service - Etcd Server

Loaded: loaded (/usr/lib/systemd/system/etcd.service; enabled; vendor preset: disabled)

Active: active (running) since 四 2020-10-08 01:07:21 CST; 25s ago

3. Configure node02 192.168.200.120

[root@localhost ~]# iptables -F

[root@localhost ~]# setenforce 0

[root@localhost ~]# hostnamectl set-hostname node02

[root@localhost ~]# su

# Edit the configuration on node02

[root@node02 ~]# vim /opt/etcd/cfg/etcd

#[Member]

ETCD_NAME="etcd03" ## 03

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://192.168.200.120:2380" ## 120

ETCD_LISTEN_CLIENT_URLS="https://192.168.200.120:2379" ## 120

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.200.120:2380" ## 120

ETCD_ADVERTISE_CLIENT_URLS="https://192.168.200.120:2379" ## 120

ETCD_INITIAL_CLUSTER="etcd01=https://192.168.200.100:2380,etcd02=https://192.168.200.110:2380,etcd03=https://192.168.200.120:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

Start etcd

[root@node02 ~]# systemctl start etcd

[root@node02 ~]# systemctl enable etcd

Created symlink from /etc/systemd/system/multi-user.target.wants/etcd.service to /usr/lib/systemd/system/etcd.service.

[root@node02 ~]# systemctl status etcd

● etcd.service - Etcd Server

Loaded: loaded (/usr/lib/systemd/system/etcd.service; enabled; vendor preset: disabled)

Active: active (running) since 四 2020-10-08 01:07:19 CST; 38s ago
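The manual edits on node01 and node02 change the same five lines each time: the member name and the four per-member URLs, while `ETCD_INITIAL_CLUSTER` stays as it is. A sketch of doing that with `sed` instead of an editor follows; the function name `retarget_etcd_cfg` is hypothetical, and it filters stdin to stdout so the original file is never modified in place.

```shell
#!/bin/sh
# Rewrite an etcd environment file for another member.
# Arguments: new member name, new member IP. Reads stdin, writes stdout.
retarget_etcd_cfg() {
    name=$1; ip=$2
    sed -E \
        -e "s|^ETCD_NAME=.*|ETCD_NAME=\"${name}\"|" \
        -e "/^ETCD_(LISTEN_PEER|LISTEN_CLIENT|INITIAL_ADVERTISE_PEER|ADVERTISE_CLIENT)_URLS=/s|https://[0-9.]+:|https://${ip}:|"
}

# Demo on a shortened copy of master01's file, retargeted for node01.
retarget_etcd_cfg etcd02 192.168.200.110 <<'EOF'
ETCD_NAME="etcd01"
ETCD_LISTEN_CLIENT_URLS="https://192.168.200.100:2379"
ETCD_INITIAL_CLUSTER="etcd01=https://192.168.200.100:2380,etcd02=https://192.168.200.110:2380,etcd03=https://192.168.200.120:2380"
EOF
```

On a real node this could be used as `retarget_etcd_cfg etcd02 192.168.200.110 < /opt/etcd/cfg/etcd > /tmp/etcd.new`, with the result reviewed before it replaces the original.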

4. Deploy the Docker engine on all node machines

1. Remove any previously installed Docker version

yum remove docker \

docker-client \

docker-client-latest \

docker-common \

docker-latest \

docker-latest-logrotate \

docker-logrotate \

docker-engine

2. Install the required packages

yum install -y yum-utils device-mapper-persistent-data lvm2

3. Add the Aliyun mirror repository

yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

4. Update the yum package index

yum makecache fast

5. Install Docker CE and its related packages (docker-ce is the Community Edition; EE is the Enterprise Edition)

yum -y install docker-ce docker-ce-cli containerd.io

6. Start Docker

systemctl start docker

systemctl enable docker

7. Configure a registry mirror to speed up image pulls

sudo mkdir -p /etc/docker

sudo tee /etc/docker/daemon.json <<-'EOF'

{

"registry-mirrors": ["https://sno1b9w3.mirror.aliyuncs.com"]

}

EOF

sudo systemctl daemon-reload

sudo systemctl restart docker

8. Run docker version to check that the installation succeeded

9. Network tuning: enable IP forwarding

vim /etc/sysctl.conf

net.ipv4.ip_forward=1

sysctl -p

Restart the network

service network restart

systemctl restart docker
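Editing /etc/sysctl.conf by hand on every node invites duplicate or conflicting entries. The sketch below sets a key in a sysctl-style file without appending duplicates; the function name `ensure_setting` is hypothetical, and the demo writes to a temporary file rather than to /etc/sysctl.conf.

```shell
#!/bin/sh
# Set key=value in a sysctl-style file: rewrite an existing entry (even a
# commented-out one), otherwise append a new line.
# Note: the key is used as a regex, so "." matches any character.
ensure_setting() {
    file=$1; key=$2; value=$3
    if grep -Eq "^[#[:space:]]*${key}[[:space:]]*=" "$file" 2>/dev/null; then
        sed -E "s|^[#[:space:]]*${key}[[:space:]]*=.*|${key}=${value}|" "$file" > "$file.tmp" &&
            mv "$file.tmp" "$file"
    else
        echo "${key}=${value}" >> "$file"
    fi
}

# Demo: calling it twice still leaves a single line.
demo=$(mktemp)
ensure_setting "$demo" net.ipv4.ip_forward 1
ensure_setting "$demo" net.ipv4.ip_forward 1
cat "$demo"
rm -f "$demo"
```

On a node, `ensure_setting /etc/sysctl.conf net.ipv4.ip_forward 1` followed by `sysctl -p` would replace the manual edit.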

5. Configure the flannel network

# Write the allocated subnet range into etcd for flannel to use

[root@master01 etcd-cert]# /opt/etcd/bin/etcdctl --ca-file=ca.pem --cert-file=server.pem --key-file=server-key.pem --endpoints="https://192.168.200.100:2379,https://192.168.200.110:2379,https://192.168.200.120:2379" set /coreos.com/network/config '{ "Network": "172.17.0.0/16", "Backend": {"Type": "vxlan"}}'

{ "Network": "172.17.0.0/16", "Backend": {"Type": "vxlan"}}

# Read back what was written

[root@master01 etcd-cert]# /opt/etcd/bin/etcdctl --ca-file=ca.pem --cert-file=server.pem --key-file=server-key.pem --endpoints="https://192.168.200.100:2379,https://192.168.200.110:2379,https://192.168.200.120:2379" get /coreos.com/network/config

{ "Network": "172.17.0.0/16", "Backend": {"Type": "vxlan"}}

# Copy the flannel package to every node (it only needs to be deployed on the nodes)

[root@master01 etcd-cert]# cd ~/k8s/

[root@master01 k8s]# ls

etcd-cert etcd-v3.3.10-linux-amd64 flannel-v0.10.0-linux-amd64.tar.gz

etcd.sh etcd-v3.3.10-linux-amd64.tar.gz kubernetes-server-linux-amd64.tar.gz

[root@master01 k8s]# scp flannel-v0.10.0-linux-amd64.tar.gz root@192.168.200.110:/root

root@192.168.200.110's password:

flannel-v0.10.0-linux-amd64.tar.gz 100% 9479KB 73.2MB/s 00:00

[root@master01 k8s]# scp flannel-v0.10.0-linux-amd64.tar.gz root@192.168.200.120:/root

root@192.168.200.120's password:

flannel-v0.10.0-linux-amd64.tar.gz 100% 9479KB 58.7MB/s 00:00

# Unpack the archive on every node

[root@node01 ~]# tar zxvf flannel-v0.10.0-linux-amd64.tar.gz

flanneld

mk-docker-opts.sh

README.md

[root@node01 ~]# ls

anaconda-ks.cfg initial-setup-ks.cfg 公共 图片 音乐

flanneld mk-docker-opts.sh 模板 文档 桌面

flannel-v0.10.0-linux-amd64.tar.gz README.md 视频 下载

[root@node01 ~]# mkdir /opt/kubernetes/{cfg,bin,ssl} -p

[root@node01 ~]# mv mk-docker-opts.sh flanneld /opt/kubernetes/bin/

[root@node01 ~]# vim flannel.sh

#!/bin/bash

ETCD_ENDPOINTS=${1:-"http://127.0.0.1:2379"} ## In production, point this at one of the masters; the masters usually also serve as the etcd servers

cat <<EOF >/opt/kubernetes/cfg/flanneld

FLANNEL_OPTIONS="--etcd-endpoints=${ETCD_ENDPOINTS} \

-etcd-cafile=/opt/etcd/ssl/ca.pem \

-etcd-certfile=/opt/etcd/ssl/server.pem \

-etcd-keyfile=/opt/etcd/ssl/server-key.pem"

EOF

cat <<EOF >/usr/lib/systemd/system/flanneld.service

[Unit]

Description=Flanneld overlay address etcd agent

After=network-online.target network.target

Before=docker.service

[Service]

Type=notify

EnvironmentFile=/opt/kubernetes/cfg/flanneld

ExecStart=/opt/kubernetes/bin/flanneld --ip-masq \$FLANNEL_OPTIONS

ExecStartPost=/opt/kubernetes/bin/mk-docker-opts.sh -k DOCKER_NETWORK_OPTIONS -d /run/flannel/subnet.env

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl enable flanneld

systemctl restart flanneld

# Start the flannel network

[root@node01 ~]# bash flannel.sh https://192.168.200.100:2379,https://192.168.200.110:2379,https://192.168.200.120:2379

Created symlink from /etc/systemd/system/multi-user.target.wants/flanneld.service to /usr/lib/systemd/system/flanneld.service.

# Configure Docker to use the flannel network

[root@node01 ~]# vim /usr/lib/systemd/system/docker.service

[Service]

Type=notify

# the default is not to use systemd for cgroups because the delegate issues still

# exists and systemd currently does not support the cgroup feature set required

# for containers run by docker

EnvironmentFile=/run/flannel/subnet.env ## Add this line

#ExecStart=/usr/bin/dockerd -H fd:// --containerd=/run/containerd/containerd.sock

ExecStart=/usr/bin/dockerd $DOCKER_NETWORK_OPTIONS -H fd:// --containerd=/run/containerd/containerd.sock ## Comment out the line above and add this one

ExecReload=/bin/kill -s HUP $MAINPID

TimeoutSec=0

RestartSec=2

Restart=always

[root@node01 ~]# cat /run/flannel/subnet.env

DOCKER_OPT_BIP="--bip=172.17.25.1/24"

DOCKER_OPT_IPMASQ="--ip-masq=false"

DOCKER_OPT_MTU="--mtu=1450"

DOCKER_NETWORK_OPTIONS=" --bip=172.17.25.1/24 --ip-masq=false --mtu=1450" # Note: bip sets the subnet that docker0 uses at startup
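Each node's `--bip` address should fall inside the 172.17.0.0/16 network that was written to etcd for flannel. A small sketch of that membership test in plain shell arithmetic follows; the helper names `ip_to_int` and `in_network` are illustrative, and 64-bit shell arithmetic is assumed.

```shell
#!/bin/sh
# Convert a dotted IPv4 address to an integer.
ip_to_int() {
    old_ifs=$IFS; IFS=.
    set -- $1            # split the address into its four octets
    IFS=$old_ifs
    echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

# Succeed if address $1 lies inside network $2, given as a.b.c.d/len.
in_network() {
    addr=$(ip_to_int "$1")
    net=$(ip_to_int "${2%/*}")
    len=${2#*/}
    mask=$(( (0xFFFFFFFF << (32 - len)) & 0xFFFFFFFF ))
    [ $(( addr & mask )) -eq $(( net & mask )) ]
}

# The docker0 addresses that appear on node01 and node02 in this walkthrough.
for bip in 172.17.25.1 172.17.4.1; do
    in_network "$bip" 172.17.0.0/16 && echo "$bip is inside 172.17.0.0/16"
done
```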

# Restart the Docker service

[root@node01 ~]# systemctl daemon-reload

[root@node01 ~]# systemctl restart docker

[root@node01 ~]# ifconfig

docker0: flags=4099<UP,BROADCAST,MULTICAST> mtu 1500

inet 172.17.25.1 netmask 255.255.255.0 broadcast 172.17.25.255

ether 02:42:cd:34:d8:9f txqueuelen 0 (Ethernet)

RX packets 0 bytes 0 (0.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 0 bytes 0 (0.0 B)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

ens33: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet 192.168.200.110 netmask 255.255.255.0 broadcast 192.168.200.255

inet6 fe80::7fc5:140b:bf58:bfb8 prefixlen 64 scopeid 0x20<link>

ether 00:0c:29:60:ee:f7 txqueuelen 1000 (Ethernet)

RX packets 215434 bytes 175791247 (167.6 MiB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 119849 bytes 13699087 (13.0 MiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

flannel.1: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1450

inet 172.17.25.0 netmask 255.255.255.255 broadcast 0.0.0.0

inet6 fe80::d8fd:c4ff:fe97:35c0 prefixlen 64 scopeid 0x20<link>

ether da:fd:c4:97:35:c0 txqueuelen 0 (Ethernet)

RX packets 0 bytes 0 (0.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 0 bytes 0 (0.0 B)

TX errors 0 dropped 36 overruns 0 carrier 0 collisions 0

#### Repeat the same steps on node2 ####

[root@node02 ~]# ifconfig

docker0: flags=4099<UP,BROADCAST,MULTICAST> mtu 1500

inet 172.17.4.1 netmask 255.255.255.0 broadcast 172.17.4.255

ether 02:42:51:7e:94:e3 txqueuelen 0 (Ethernet)

RX packets 0 bytes 0 (0.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 0 bytes 0 (0.0 B)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

ens33: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet 192.168.200.120 netmask 255.255.255.0 broadcast 192.168.200.255

inet6 fe80::2ddd:910e:9dd7:3804 prefixlen 64 scopeid 0x20<link>

ether 00:0c:29:49:1a:03 txqueuelen 1000 (Ethernet)

RX packets 222680 bytes 181133492 (172.7 MiB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 124770 bytes 14292218 (13.6 MiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

flannel.1: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1450

inet 172.17.4.0 netmask 255.255.255.255 broadcast 0.0.0.0

inet6 fe80::782c:42ff:feca:531a prefixlen 64 scopeid 0x20<link>

ether 7a:2c:42:ca:53:1a txqueuelen 0 (Ethernet)

RX packets 0 bytes 0 (0.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 0 bytes 0 (0.0 B)

TX errors 0 dropped 35 overruns 0 carrier 0 collisions 0

# Ping the other node's docker0 interface to confirm that flannel is routing between the nodes

[root@node01 ~]# docker run -it centos:7 /bin/bash

[root@deaaa18a94fa /]# yum install net-tools -y

[root@deaaa18a94fa /]# ifconfig

eth0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1450

inet 172.17.25.2 netmask 255.255.255.0 broadcast 172.17.25.255

[root@deaaa18a94fa /]# ping 172.17.4.1

PING 172.17.4.1 (172.17.4.1) 56(84) bytes of data.

64 bytes from 172.17.4.1: icmp_seq=1 ttl=63 time=0.755 ms

64 bytes from 172.17.4.1: icmp_seq=2 ttl=63 time=2.13 ms

# Test again by pinging between the centos:7 containers on the two nodes

[root@node02 ~]# docker run -it centos:7 /bin/bash

[root@6c63efd0712d /]# yum install net-tools -y

[root@6c63efd0712d /]# ifconfig

eth0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1450

inet 172.17.4.2 netmask 255.255.255.0 broadcast 172.17.4.255

[root@6c63efd0712d /]# ping 172.17.25.2

PING 172.17.25.2 (172.17.25.2) 56(84) bytes of data.

64 bytes from 172.17.25.2: icmp_seq=1 ttl=62 time=0.488 ms

64 bytes from 172.17.25.2: icmp_seq=2 ttl=62 time=0.549 ms

6. Deploy the master components

On the master, generate the certificates for the API server

[root@master01 k8s]# ls

etcd-cert etcd-v3.3.10-linux-amd64 flannel-v0.10.0-linux-amd64.tar.gz master.zip

etcd.sh etcd-v3.3.10-linux-amd64.tar.gz kubernetes-server-linux-amd64.tar.gz

[root@master01 k8s]# unzip master.zip

Archive: master.zip

inflating: apiserver.sh

inflating: controller-manager.sh

inflating: scheduler.sh

[root@master01 k8s]# mkdir /opt/kubernetes/{cfg,bin,ssl} -p

[root@master01 k8s]# mkdir k8s-cert

[root@master01 k8s]# cd k8s-cert/

cat > ca-config.json <<EOF

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"kubernetes": {

"expiry": "87600h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

}

}

}

}

EOF

[root@master01 k8s-cert]# ls

ca-config.json

cat > ca-csr.json <<EOF

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Beijing",

"ST": "Beijing",

"O": "k8s",

"OU": "System"

}

]

}

EOF

[root@master01 k8s-cert]# ls

ca-config.json ca-csr.json

[root@master01 k8s-cert]# cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

2020/10/08 02:03:35 [INFO] generating a new CA key and certificate from CSR

2020/10/08 02:03:35 [INFO] generate received request

2020/10/08 02:03:35 [INFO] received CSR

2020/10/08 02:03:35 [INFO] generating key: rsa-2048

2020/10/08 02:03:35 [INFO] encoded CSR

2020/10/08 02:03:35 [INFO] signed certificate with serial number 117717371577420932601042163897098685802446401912

[root@master01 k8s-cert]# ls

ca-config.json ca.csr ca-csr.json ca-key.pem ca.pem

# hosts: 192.168.200.100 = master1, 192.168.200.90 = master2, 192.168.200.200 = vip, 192.168.200.80 = lb (master), 192.168.200.70 = lb (backup)

cat > server-csr.json <<EOF

{

"CN": "kubernetes",

"hosts": [

"10.0.0.1",

"127.0.0.1",

"192.168.200.100",

"192.168.200.90",

"192.168.200.200",

"192.168.200.80",

"192.168.200.70",

"kubernetes",

"kubernetes.default",

"kubernetes.default.svc",

"kubernetes.default.svc.cluster",

"kubernetes.default.svc.cluster.local"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

EOF
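JSON has no comment syntax, so labels such as "master1" or "vip" cannot live inside server-csr.json itself. One way to keep each label next to its address is to hold the addresses in shell variables and generate the `hosts` array from them. This is only a sketch: the function name `json_hosts` and the variable names are hypothetical, and it assumes the values contain no quotes or backslashes.

```shell
#!/bin/sh
# Print the arguments as a JSON array of strings.
json_hosts() {
    out=''
    for h in "$@"; do
        out="${out:+$out, }\"$h\""
    done
    printf '[%s]\n' "$out"
}

MASTER1=192.168.200.100     # master1
MASTER2=192.168.200.90      # master2
VIP=192.168.200.200         # vip
LB_MASTER=192.168.200.80    # lb (master)
LB_BACKUP=192.168.200.70    # lb (backup)

json_hosts 10.0.0.1 127.0.0.1 "$MASTER1" "$MASTER2" "$VIP" "$LB_MASTER" "$LB_BACKUP" \
    kubernetes kubernetes.default kubernetes.default.svc \
    kubernetes.default.svc.cluster kubernetes.default.svc.cluster.local
```

The printed array can then be pasted in as the value of `"hosts"`.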

[root@master01 k8s-cert]# ls

ca-config.json ca.csr ca-csr.json ca-key.pem ca.pem server-csr.json

[root@master01 k8s-cert]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes server-csr.json | cfssljson -bare server

2020/10/08 02:07:24 [INFO] generate received request

2020/10/08 02:07:24 [INFO] received CSR

2020/10/08 02:07:24 [INFO] generating key: rsa-2048

2020/10/08 02:07:24 [INFO] encoded CSR

2020/10/08 02:07:24 [INFO] signed certificate with serial number 430083805945050994239855620117268877861873072530

2020/10/08 02:07:24 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for

websites. For more information see the Baseline Requirements for the Issuance and Management

of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);

specifically, section 10.2.3 ("Information Requirements").

[root@master01 k8s-cert]# ls

ca-config.json ca-csr.json ca.pem server-csr.json server.pem

ca.csr ca-key.pem server.csr server-key.pem

cat > admin-csr.json <<EOF

{

"CN": "admin",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "system:masters",

"OU": "System"

}

]

}

EOF

[root@master01 k8s-cert]# ls

admin-csr.json ca.csr ca-key.pem server.csr server-key.pem

ca-config.json ca-csr.json ca.pem server-csr.json server.pem

[root@master01 k8s-cert]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes admin-csr.json | cfssljson -bare admin

2020/10/08 02:08:16 [INFO] generate received request

2020/10/08 02:08:16 [INFO] received CSR

2020/10/08 02:08:16 [INFO] generating key: rsa-2048

2020/10/08 02:08:16 [INFO] encoded CSR

2020/10/08 02:08:16 [INFO] signed certificate with serial number 128703393960965588319835722368006072668825407118

2020/10/08 02:08:16 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for

websites. For more information see the Baseline Requirements for the Issuance and Management

of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);

specifically, section 10.2.3 ("Information Requirements").

[root@master01 k8s-cert]# ls

admin.csr admin.pem ca-csr.json server.csr server.pem

admin-csr.json ca-config.json ca-key.pem server-csr.json

admin-key.pem ca.csr ca.pem server-key.pem

cat > kube-proxy-csr.json <<EOF

{

"CN": "system:kube-proxy",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

EOF

[root@master01 k8s-cert]# ls

admin.csr admin.pem ca-csr.json kube-proxy-csr.json server-key.pem

admin-csr.json ca-config.json ca-key.pem server.csr server.pem

admin-key.pem ca.csr ca.pem server-csr.json

[root@master01 k8s-cert]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy

2020/10/08 02:09:22 [INFO] generate received request

2020/10/08 02:09:22 [INFO] received CSR

2020/10/08 02:09:22 [INFO] generating key: rsa-2048

2020/10/08 02:09:23 [INFO] encoded CSR

2020/10/08 02:09:23 [INFO] signed certificate with serial number 657166277082708480716187649317888036937464272665

2020/10/08 02:09:23 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for

websites. For more information see the Baseline Requirements for the Issuance and Management

of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);

specifically, section 10.2.3 ("Information Requirements").

[root@master01 k8s-cert]# ls

admin.csr ca-config.json ca.pem kube-proxy.pem server.pem

admin-csr.json ca.csr kube-proxy.csr server.csr

admin-key.pem ca-csr.json kube-proxy-csr.json server-csr.json

admin.pem ca-key.pem kube-proxy-key.pem server-key.pem

[root@master01 k8s-cert]# ls *pem

admin-key.pem ca-key.pem kube-proxy-key.pem server-key.pem

admin.pem ca.pem kube-proxy.pem server.pem

[root@master01 k8s-cert]# cp ca*pem server*pem /opt/kubernetes/ssl/

[root@master01 k8s-cert]# cd ..

# Unpack the kubernetes archive

[root@master01 k8s]# tar zxvf kubernetes-server-linux-amd64.tar.gz

[root@master01 k8s]# cd kubernetes/server/bin/

# Copy the key binaries

[root@master01 bin]# cp kube-apiserver kubectl kube-controller-manager kube-scheduler /opt/kubernetes/bin/

[root@master01 bin]# cd /root/k8s

# head -c 16 /dev/urandom | od -An -t x | tr -d ' ' generates a random token

[root@master01 k8s]# head -c 16 /dev/urandom | od -An -t x | tr -d ' '

2616bd5c1fa27f74c3ddd35d5e9c29f2

[root@master01 k8s]# vim /opt/kubernetes/cfg/token.csv

2616bd5c1fa27f74c3ddd35d5e9c29f2,kubelet-bootstrap,10001,"system:kubelet-bootstrap"

Fields: token, user name, UID, group
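The token generation and the token.csv line can be combined into two small functions. This is a sketch with illustrative function names; it prints the record instead of writing /opt/kubernetes/cfg/token.csv, and it also strips newlines in case `od` wraps its output.

```shell
#!/bin/sh
# Generate a 128-bit random token as 32 hex characters, using the same
# pipeline as above.
new_token() {
    head -c 16 /dev/urandom | od -An -t x | tr -d ' \n'
}

# Format one token.csv record: token,user,uid,"group"
token_record() {
    printf '%s,%s,%s,"%s"\n' "$1" "$2" "$3" "$4"
}

token_record "$(new_token)" kubelet-bootstrap 10001 system:kubelet-bootstrap
```

Redirecting the output to /opt/kubernetes/cfg/token.csv would replace the manual `vim` step.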

# With the binaries, token, and certificates in place, start the API server

[root@master01 k8s]# bash apiserver.sh 192.168.200.100 https://192.168.200.100:2379,https://192.168.200.110:2379,https://192.168.200.120:2379

Created symlink from /etc/systemd/system/multi-user.target.wants/kube-apiserver.service to /usr/lib/systemd/system/kube-apiserver.service.

# Check that the process started successfully

[root@master01 k8s]# ps aux | grep kube

root 69333 37.5 8.3 405152 321744 ? Ssl 02:16 0:12 /opt/kubernetes/bin/kube-apiserver --logtostderr=true --v=4 --etcd-servers=https://192.168.200.100:2379,https://192.168.200.110:2379,https://192.168.200.120:2379 --bind-address=192.168.200.100 --secure-port=6443 --advertise-address=192.168.200.100 --allow-privileged=true --service-cluster-ip-range=10.0.0.0/24 --enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,ResourceQuota,NodeRestriction --authorization-mode=RBAC,Node --kubelet-https=true --enable-bootstrap-token-auth --token-auth-file=/opt/kubernetes/cfg/token.csv --service-node-port-range=30000-50000 --tls-cert-file=/opt/kubernetes/ssl/server.pem --tls-private-key-file=/opt/kubernetes/ssl/server-key.pem --client-ca-file=/opt/kubernetes/ssl/ca.pem --service-account-key-file=/opt/kubernetes/ssl/ca-key.pem --etcd-cafile=/opt/etcd/ssl/ca.pem --etcd-certfile=/opt/etcd/ssl/server.pem --etcd-keyfile=/opt/etcd/ssl/server-key.pem

root 69348 0.0 0.0 112724 988 pts/1 S+ 02:17 0:00 grep --color=auto kube

# View the configuration file

[root@master01 k8s]# cat /opt/kubernetes/cfg/kube-apiserver

KUBE_APISERVER_OPTS="--logtostderr=true \

--v=4 \

--etcd-servers=https://192.168.200.100:2379,https://192.168.200.110:2379,https://192.168.200.120:2379 \

--bind-address=192.168.200.100 \

--secure-port=6443 \

--advertise-address=192.168.200.100 \

--allow-privileged=true \

--service-cluster-ip-range=10.0.0.0/24 \

--enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,ResourceQuota,NodeRestriction \

--authorization-mode=RBAC,Node \

--kubelet-https=true \

--enable-bootstrap-token-auth \

--token-auth-file=/opt/kubernetes/cfg/token.csv \

--service-node-port-range=30000-50000 \

--tls-cert-file=/opt/kubernetes/ssl/server.pem \

--tls-private-key-file=/opt/kubernetes/ssl/server-key.pem \

--client-ca-file=/opt/kubernetes/ssl/ca.pem \

--service-account-key-file=/opt/kubernetes/ssl/ca-key.pem \

--etcd-cafile=/opt/etcd/ssl/ca.pem \

--etcd-certfile=/opt/etcd/ssl/server.pem \

--etcd-keyfile=/opt/etcd/ssl/server-key.pem"

# Check the HTTPS port the API server listens on

[root@master01 k8s]# netstat -ntap | grep 6443

tcp 0 0 192.168.200.100:6443 0.0.0.0:* LISTEN 69333/kube-apiserve

tcp 0 0 192.168.200.100:46264 192.168.200.100:6443 ESTABLISHED 69333/kube-apiserve

tcp 0 0 192.168.200.100:6443 192.168.200.100:46264 ESTABLISHED 69333/kube-apiserve

[root@master01 k8s]# netstat -ntap | grep 8080

tcp 0 0 127.0.0.1:8080 0.0.0.0:* LISTEN 69333/kube-apiserve

# Start the scheduler service

[root@master01 k8s]# ./scheduler.sh 127.0.0.1

Created symlink from /etc/systemd/system/multi-user.target.wants/kube-scheduler.service to /usr/lib/systemd/system/kube-scheduler.service.

[root@master01 k8s]# ps aux | grep ku

postfix 69261 0.0 0.1 91732 4084 ? S 02:14 0:00 pickup -l -t unix -u

root 69333 9.3 8.3 405216 322440 ? Ssl 02:16 0:18 /opt/kubernetes/bin/kube-apiserver --logtostderr=true --v=4 --etcd-servers=https://192.168.200.100:2379,https://192.168.200.110:2379,https://192.168.200.120:2379 --bind-address=192.168.200.100 --secure-port=6443 --advertise-address=192.168.200.100 --allow-privileged=true --service-cluster-ip-range=10.0.0.0/24 --enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,ResourceQuota,NodeRestriction --authorization-mode=RBAC,Node --kubelet-https=true --enable-bootstrap-token-auth --token-auth-file=/opt/kubernetes/cfg/token.csv --service-node-port-range=30000-50000 --tls-cert-file=/opt/kubernetes/ssl/server.pem --tls-private-key-file=/opt/kubernetes/ssl/server-key.pem --client-ca-file=/opt/kubernetes/ssl/ca.pem --service-account-key-file=/opt/kubernetes/ssl/ca-key.pem --etcd-cafile=/opt/etcd/ssl/ca.pem --etcd-certfile=/opt/etcd/ssl/server.pem --etcd-keyfile=/opt/etcd/ssl/server-key.pem

root 69432 2.9 0.5 46128 19488 ? Ssl 02:19 0:00 /opt/kubernetes/bin/kube-scheduler --logtostderr=true --v=4 --master=127.0.0.1:8080 --leader-elect

root 69452 0.0 0.0 112728 984 pts/1 S+ 02:20 0:00 grep --color=auto ku

[root@master01 k8s]# chmod +x controller-manager.sh

#Start controller-manager

[root@master01 k8s]# ./controller-manager.sh 127.0.0.1

Created symlink from /etc/systemd/system/multi-user.target.wants/kube-controller-manager.service to /usr/lib/systemd/system/kube-controller-manager.service.

[root@master01 k8s]# ps aux | grep kube

root 69333 7.4 8.3 405216 322912 ? Ssl 02:16 0:22 /opt/kubernetes/bin/kube-apiserver --logtostderr=true --v=4 --etcd-servers=https://192.168.200.100:2379,https://192.168.200.110:2379,https://192.168.200.120:2379 --bind-address=192.168.200.100 --secure-port=6443 --advertise-address=192.168.200.100 --allow-privileged=true --service-cluster-ip-range=10.0.0.0/24 --enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,ResourceQuota,NodeRestriction --authorization-mode=RBAC,Node --kubelet-https=true --enable-bootstrap-token-auth --token-auth-file=/opt/kubernetes/cfg/token.csv --service-node-port-range=30000-50000 --tls-cert-file=/opt/kubernetes/ssl/server.pem --tls-private-key-file=/opt/kubernetes/ssl/server-key.pem --client-ca-file=/opt/kubernetes/ssl/ca.pem --service-account-key-file=/opt/kubernetes/ssl/ca-key.pem --etcd-cafile=/opt/etcd/ssl/ca.pem --etcd-certfile=/opt/etcd/ssl/server.pem --etcd-keyfile=/opt/etcd/ssl/server-key.pem

root 69432 2.4 0.5 47184 20392 ? Ssl 02:19 0:03 /opt/kubernetes/bin/kube-scheduler --logtostderr=true --v=4 --master=127.0.0.1:8080 --leader-elect

root 69517 5.0 1.5 137560 58608 ? Ssl 02:21 0:02 /opt/kubernetes/bin/kube-controller-manager --logtostderr=true --v=4 --master=127.0.0.1:8080 --leader-elect=true --address=127.0.0.1 --service-cluster-ip-range=10.0.0.0/24 --cluster-name=kubernetes --cluster-signing-cert-file=/opt/kubernetes/ssl/ca.pem --cluster-signing-key-file=/opt/kubernetes/ssl/ca-key.pem --root-ca-file=/opt/kubernetes/ssl/ca.pem --service-account-private-key-file=/opt/kubernetes/ssl/ca-key.pem --experimental-cluster-signing-duration=87600h0m0s

root 69549 0.0 0.0 112724 988 pts/1 S+ 02:22 0:00 grep --color=auto kube

#Check the status of the master components

[root@master01 k8s]# /opt/kubernetes/bin/kubectl get cs

NAME STATUS MESSAGE ERROR

controller-manager Healthy ok

scheduler Healthy ok

etcd-2 Healthy {"health":"true"}

etcd-1 Healthy {"health":"true"}

etcd-0 Healthy {"health":"true"}
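The health check above can also be scripted. The following is a minimal sketch, assuming the column layout shown in the `kubectl get cs` output (component name first, STATUS second); `all_components_healthy` is a hypothetical helper name, not part of kubectl.

```shell
#!/usr/bin/env bash
# Hypothetical helper: reads `kubectl get cs` output on stdin and succeeds
# only if every row after the header reports STATUS "Healthy".
all_components_healthy() {
    awk 'BEGIN { bad = 0 }
         NR > 1 && NF > 0 && $2 != "Healthy" { bad = 1 }
         END { exit bad }'
}
```

It could be used as `/opt/kubernetes/bin/kubectl get cs | all_components_healthy && echo "control plane OK"`.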

7. Node deployment

//On the master

//Copy kubelet and kube-proxy to the node machines

[root@master01 k8s]# cd kubernetes/server/bin/

[root@master01 bin]# scp kubelet kube-proxy root@192.168.200.110:/opt/kubernetes/bin/

root@192.168.200.110's password:

kubelet 100% 168MB 72.0MB/s 00:02

kube-proxy 100% 48MB 76.0MB/s 00:00

[root@master01 bin]# scp kubelet kube-proxy root@192.168.200.120:/opt/kubernetes/bin/

root@192.168.200.120's password:

kubelet 100% 168MB 101.4MB/s 00:01

kube-proxy 100% 48MB 94.3MB/s 00:00

#On node01 (copy node.zip to /root, then unpack it)

[root@node01 ~]# ls

anaconda-ks.cfg flannel-v0.10.0-linux-amd64.tar.gz node.zip 公共 视频 文档 音乐

flannel.sh initial-setup-ks.cfg README.md 模板 图片 下载 桌面

#Unpack node.zip to get kubelet.sh and proxy.sh

[root@node01 ~]# unzip node.zip

Archive: node.zip

inflating: proxy.sh

inflating: kubelet.sh

#On the master

[root@master01 bin]# cd /root/k8s/

[root@master01 k8s]# mkdir kubeconfig

[root@master01 k8s]# cd kubeconfig/

[root@master01 kubeconfig]# ls

[root@master01 kubeconfig]# rz -E

rz waiting to receive.

[root@master01 kubeconfig]# ls

kubeconfig.sh

[root@master01 kubeconfig]# mv kubeconfig.sh kubeconfig

[root@master01 kubeconfig]# cat /opt/kubernetes/cfg/token.csv

2616bd5c1fa27f74c3ddd35d5e9c29f2,kubelet-bootstrap,10001,"system:kubelet-bootstrap"

[root@master01 kubeconfig]# vim kubeconfig

APISERVER=$1

SSL_DIR=$2

# Create the kubelet bootstrapping kubeconfig

export KUBE_APISERVER="https://$APISERVER:6443"

# Set cluster parameters

kubectl config set-cluster kubernetes \

--certificate-authority=$SSL_DIR/ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=bootstrap.kubeconfig

# Set client authentication parameters

# (replace the token below with the one from /opt/kubernetes/cfg/token.csv)

kubectl config set-credentials kubelet-bootstrap \

--token=2616bd5c1fa27f74c3ddd35d5e9c29f2 \

--kubeconfig=bootstrap.kubeconfig

# Set context parameters

kubectl config set-context default \

--cluster=kubernetes \

--user=kubelet-bootstrap \

--kubeconfig=bootstrap.kubeconfig

# Set the default context

kubectl config use-context default --kubeconfig=bootstrap.kubeconfig

#----------------------

# Create the kube-proxy kubeconfig file

kubectl config set-cluster kubernetes \

--certificate-authority=$SSL_DIR/ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=kube-proxy.kubeconfig

kubectl config set-credentials kube-proxy \

--client-certificate=$SSL_DIR/kube-proxy.pem \

--client-key=$SSL_DIR/kube-proxy-key.pem \

--embed-certs=true \

--kubeconfig=kube-proxy.kubeconfig

kubectl config set-context default \

--cluster=kubernetes \

--user=kube-proxy \

--kubeconfig=kube-proxy.kubeconfig

kubectl config use-context default --kubeconfig=kube-proxy.kubeconfig
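The script above hard-codes the bootstrap token, which has to match the first field of /opt/kubernetes/cfg/token.csv. As a minimal sketch (not the author's script), the token could instead be read from that file; `read_bootstrap_token` is a hypothetical helper name, and the file layout is assumed to be the one shown by `cat /opt/kubernetes/cfg/token.csv` earlier.

```shell
#!/usr/bin/env bash
# Hypothetical helper: prints the first comma-separated field (the token)
# from the first line of a token.csv file.
read_bootstrap_token() {
    local token_file="$1"
    awk -F, 'NR == 1 { print $1 }' "$token_file"
}
```

Inside the script one could then write `BOOTSTRAP_TOKEN=$(read_bootstrap_token /opt/kubernetes/cfg/token.csv)` and pass `--token=${BOOTSTRAP_TOKEN}` to `kubectl config set-credentials`, so the two values cannot drift apart.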

#Set the environment variable (can be written into /etc/profile)

[root@master01 kubeconfig]# vim /etc/profile

export PATH=$PATH:/opt/kubernetes/bin/

[root@master01 kubeconfig]# source /etc/profile

[root@master01 kubeconfig]# kubectl get cs

NAME STATUS MESSAGE ERROR

controller-manager Healthy ok

scheduler Healthy ok

etcd-0 Healthy {"health":"true"}

etcd-1 Healthy {"health":"true"}

etcd-2 Healthy {"health":"true"}

#Generate the configuration files

[root@master01 kubeconfig]# bash kubeconfig 192.168.200.100 /root/k8s/k8s-cert/

Cluster "kubernetes" set.

User "kubelet-bootstrap" set.

Context "default" created.

Switched to context "default".

Cluster "kubernetes" set.

User "kube-proxy" set.

Context "default" created.

Switched to context "default".

[root@master01 kubeconfig]# ls

bootstrap.kubeconfig kubeconfig kube-proxy.kubeconfig

#Copy the configuration files to the nodes

[root@master01 kubeconfig]# scp bootstrap.kubeconfig kube-proxy.kubeconfig root@192.168.200.110:/opt/kubernetes/cfg/

root@192.168.200.110's password:

bootstrap.kubeconfig 100% 2169 1.7MB/s 00:00

kube-proxy.kubeconfig 100% 6275 3.5MB/s 00:00

[root@master01 kubeconfig]# scp bootstrap.kubeconfig kube-proxy.kubeconfig root@192.168.200.120:/opt/kubernetes/cfg/

root@192.168.200.120's password:

bootstrap.kubeconfig 100% 2169 1.7MB/s 00:00

kube-proxy.kubeconfig 100% 6275 3.4MB/s 00:00

#Create the bootstrap role binding so that kubelet can connect to the apiserver and request certificate signing (key step)

[root@master01 kubeconfig]# kubectl create clusterrolebinding kubelet-bootstrap --clusterrole=system:node-bootstrapper --user=kubelet-bootstrap

clusterrolebinding.rbac.authorization.k8s.io/kubelet-bootstrap created

#On node01

[root@node01 ~]# bash kubelet.sh 192.168.200.110

Created symlink from /etc/systemd/system/multi-user.target.wants/kubelet.service to /usr/lib/systemd/system/kubelet.service.

#Check that the kubelet service has started

[root@node01 ~]# ps aux | grep kube

root 71302 0.1 0.5 325908 22272 ? Ssl 01:40 0:03 /opt/kubernetes/bin/flanneld --ip-masq --etcd-endpoints=https://192.168.200.100:2379,https://192.168.200.110:2379,https://192.168.200.120:2379 -etcd-cafile=/opt/etcd/ssl/ca.pem -etcd-certfile=/opt/etcd/ssl/server.pem -etcd-keyfile=/opt/etcd/ssl/server-key.pem

root 76483 1.0 1.1 470876 45012 ? Ssl 02:37 0:00 /opt/kubernetes/bin/kubelet --logtostderr=true --v=4 --hostname-override=192.168.200.110 --kubeconfig=/opt/kubernetes/cfg/kubelet.kubeconfig --bootstrap-kubeconfig=/opt/kubernetes/cfg/bootstrap.kubeconfig --config=/opt/kubernetes/cfg/kubelet.config --cert-dir=/opt/kubernetes/ssl --pod-infra-container-image=registry.cn-hangzhou.aliyuncs.com/google-containers/pause-amd64:3.0

root 76539 0.0 0.0 112724 988 pts/1 S+ 02:38 0:00 grep --color=auto kube

#On the master

#Check for the request from node01

[root@master01 kubeconfig]# kubectl get csr

NAME AGE REQUESTOR CONDITION

node-csr-BogyYYmDDbmbX0aBxMsx_ETxdt1mPCtSj9FiP3irWsk 87s kubelet-bootstrap Pending (waiting for the cluster to issue a certificate to this node)

#The master issues the certificate

[root@master01 kubeconfig]# kubectl certificate approve node-csr-BogyYYmDDbmbX0aBxMsx_ETxdt1mPCtSj9FiP3irWsk

certificatesigningrequest.certificates.k8s.io/node-csr-BogyYYmDDbmbX0aBxMsx_ETxdt1mPCtSj9FiP3irWsk approved

#Check the certificate status again

[root@master01 kubeconfig]# kubectl get csr

NAME AGE REQUESTOR CONDITION

node-csr-BogyYYmDDbmbX0aBxMsx_ETxdt1mPCtSj9FiP3irWsk 2m51s kubelet-bootstrap Approved,Issued (the node is now allowed to join the cluster)
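Copying the long CSR name by hand is error-prone. The following is a minimal sketch that picks the pending request names out of `kubectl get csr` output, assuming the four-column layout shown above with CONDITION as the last field (the parenthetical notes in the listings are the author's annotations, not real output); `pending_csrs` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Hypothetical helper: reads `kubectl get csr` output on stdin and prints
# the NAME of every request whose CONDITION column is exactly "Pending".
pending_csrs() {
    awk 'NR > 1 && $NF == "Pending" { print $1 }'
}
```

With GNU xargs this could be combined as `kubectl get csr | pending_csrs | xargs -r kubectl certificate approve`. Approving everything that is pending is only reasonable in a lab where every requesting node is known.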

#List the cluster nodes; node01 has joined successfully

[root@master01 kubeconfig]# kubectl get node

NAME STATUS ROLES AGE VERSION

192.168.200.110 Ready <none> 75s v1.12.3

#On node01, start the proxy service

[root@node01 ~]# bash proxy.sh 192.168.200.110

Created symlink from /etc/systemd/system/multi-user.target.wants/kube-proxy.service to /usr/lib/systemd/system/kube-proxy.service.

[root@node01 ~]# systemctl status kube-proxy.service

● kube-proxy.service - Kubernetes Proxy

Loaded: loaded (/usr/lib/systemd/system/kube-proxy.service; enabled; vendor preset: disabled)

Active: active (running) since 四 2020-10-08 02:42:16 CST; 15s ago

[root@node01 ~]# systemctl enable kubelet.service

[root@node01 ~]# systemctl enable kube-proxy.service

#node02 deployment

//On node01

//Copy the existing /opt/kubernetes directory to the other node, then adjust it there

[root@node01 ~]# scp -r /opt/kubernetes/ root@192.168.200.120:/opt/

The authenticity of host '192.168.200.120 (192.168.200.120)' can't be established.

ECDSA key fingerprint is SHA256:dMvbIJyuN9aFqJR+OwwLY436gqKEgtipcBLofzOilgU.

ECDSA key fingerprint is MD5:7a:d8:f0:f5:c5:ff:95:36:11:fe:e8:b3:c0:dc:d7:2e.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added '192.168.200.120' (ECDSA) to the list of known hosts.

root@192.168.200.120's password:

flanneld 100% 241 160.0KB/s 00:00

bootstrap.kubeconfig 100% 2169 1.4MB/s 00:00

kube-proxy.kubeconfig 100% 6275 3.0MB/s 00:00

kubelet 100% 379 275.9KB/s 00:00

kubelet.config 100% 269 179.0KB/s 00:00

kubelet.kubeconfig 100% 2298 841.6KB/s 00:00

kube-proxy 100% 191 172.1KB/s 00:00

mk-docker-opts.sh 100% 2139 1.9MB/s 00:00

scp: /opt//kubernetes/bin/flanneld: Text file busy

kubelet 100% 168MB 116.8MB/s 00:01

kube-proxy 100% 48MB 110.6MB/s 00:00

kubelet.crt 100% 2197 760.0KB/s 00:00

kubelet.key 100% 1675 851.2KB/s 00:00

kubelet-client-2020-10-08-02-40-21.pem 100% 1277 612.3KB/s 00:00

kubelet-client-current.pem 100% 1277 825.1KB/s 00:00

#Copy the kubelet and kube-proxy service files to node02

[root@node01 ~]# scp /usr/lib/systemd/system/{kubelet,kube-proxy}.service root@192.168.200.120:/usr/lib/systemd/system/

root@192.168.200.120's password:

kubelet.service 100% 264 262.6KB/s 00:00

kube-proxy.service 100% 231 105.4KB/s 00:00

//On node02, make the changes

//First delete the copied certificates; node02 will request its own certificates later

[root@node02 ~]# cd /opt/kubernetes/ssl/

[root@node02 ssl]# ls

kubelet-client-2020-10-08-02-40-21.pem kubelet-client-current.pem kubelet.crt kubelet.key

[root@node02 ssl]# rm -rf *

//Edit the three configuration files kubelet, kubelet.config and kube-proxy, changing the node IP to 192.168.200.120

[root@node02 ssl]# cd ../cfg/

[root@node02 cfg]# vim kubelet

KUBELET_OPTS="--logtostderr=true \

--v=4 \

--hostname-override=192.168.200.120 \

--kubeconfig=/opt/kubernetes/cfg/kubelet.kubeconfig \

--bootstrap-kubeconfig=/opt/kubernetes/cfg/bootstrap.kubeconfig \

--config=/opt/kubernetes/cfg/kubelet.config \

--cert-dir=/opt/kubernetes/ssl \

--pod-infra-container-image=registry.cn-hangzhou.aliyuncs.com/google-containers/pause-amd64:3.0"

[root@node02 cfg]# vim kubelet.config

kind: KubeletConfiguration

apiVersion: kubelet.config.k8s.io/v1beta1

address: 192.168.200.120 # change to 120

port: 10250

readOnlyPort: 10255

cgroupDriver: cgroupfs

clusterDNS:

- 10.0.0.2

clusterDomain: cluster.local.

failSwapOn: false

authentication:

anonymous:

enabled: true

//In kube-proxy, set --hostname-override to 192.168.200.120

[root@node02 cfg]# vim kube-proxy

KUBE_PROXY_OPTS="--logtostderr=true \

--v=4 \

--hostname-override=192.168.200.120 \

--cluster-cidr=10.0.0.0/24 \

--proxy-mode=ipvs \

--kubeconfig=/opt/kubernetes/cfg/kube-proxy.kubeconfig"
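The three manual edits above all replace node01's address with node02's. A minimal sketch of doing the same with GNU sed, assuming the files were copied unchanged from node01 (192.168.200.110); `retarget_node_ip` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Hypothetical helper: replaces every occurrence of OLD_IP with NEW_IP,
# in place, in the given files (GNU sed -i).
retarget_node_ip() {
    local old_ip="$1" new_ip="$2"
    shift 2
    # Escape the dots so they match literally instead of "any character".
    local old_pattern
    old_pattern=$(printf '%s' "$old_ip" | sed 's/\./\\./g')
    sed -i "s/${old_pattern}/${new_ip}/g" "$@"
}
```

On node02 this could be run from /opt/kubernetes/cfg as `retarget_node_ip 192.168.200.110 192.168.200.120 kubelet kubelet.config kube-proxy`. The flanneld file is deliberately left out because its etcd endpoint list legitimately contains node01's address.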

//Start the services

[root@node02 cfg]# systemctl start kubelet.service

[root@node02 cfg]# systemctl enable kubelet.service

Created symlink from /etc/systemd/system/multi-user.target.wants/kubelet.service to /usr/lib/systemd/system/kubelet.service.

[root@node02 cfg]# systemctl start kube-proxy.service

[root@node02 cfg]# systemctl enable kube-proxy.service

Created symlink from /etc/systemd/system/multi-user.target.wants/kube-proxy.service to /usr/lib/systemd/system/kube-proxy.service.

//On the master, check for the request

[root@master01 kubeconfig]# kubectl get csr

NAME AGE REQUESTOR CONDITION

node-csr-BogyYYmDDbmbX0aBxMsx_ETxdt1mPCtSj9FiP3irWsk 13m kubelet-bootstrap Approved,Issued

node-csr-lgGd4HsdfVfC56DL3j8U_liiI7ZquBaBfEeAg0OTMkQ 46s kubelet-bootstrap Pending

//Approve the request so the node can join the cluster

[root@master01 kubeconfig]# kubectl certificate approve node-csr-lgGd4HsdfVfC56DL3j8U_liiI7ZquBaBfEeAg0OTMkQ

certificatesigningrequest.certificates.k8s.io/node-csr-lgGd4HsdfVfC56DL3j8U_liiI7ZquBaBfEeAg0OTMkQ approved

[root@master01 kubeconfig]# kubectl get csr

NAME AGE REQUESTOR CONDITION

node-csr-BogyYYmDDbmbX0aBxMsx_ETxdt1mPCtSj9FiP3irWsk 14m kubelet-bootstrap Approved,Issued

node-csr-lgGd4HsdfVfC56DL3j8U_liiI7ZquBaBfEeAg0OTMkQ 84s kubelet-bootstrap Approved,Issued

//List the nodes in the cluster

[root@master01 kubeconfig]# kubectl get node

NAME STATUS ROLES AGE VERSION

192.168.200.110 Ready <none> 12m v1.12.3

192.168.200.120 Ready <none> 44s v1.12.3

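Single-node deployment complete!

2. Multi-node deployment

The multi-node deployment builds on the single-node deployment above.

1. master02 deployment

//First flush the firewall rules and disable SELinux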

[root@promote ~]# iptables -F

[root@promote ~]# setenforce 0

[root@promote ~]# hostnamectl set-hostname master02

[root@promote ~]# su

//On master01

//Copy the kubernetes directory to master02

[root@master01 k8s]# scp -r /opt/kubernetes/ root@192.168.200.90:/opt

The authenticity of host '192.168.200.90 (192.168.200.90)' can't be established.

ECDSA key fingerprint is SHA256:LGVQSrzmeWOjKsn2nM6C187BdfANy9jsFvmzXotxD7M.

ECDSA key fingerprint is MD5:d2:cd:3a:66:ab:05:b8:16:f8:42:4a:88:4c:60:14:b4.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added '192.168.200.90' (ECDSA) to the list of known hosts.

root@192.168.200.90's password:

token.csv 100% 84 26.7KB/s 00:00

kube-apiserver 100% 939 474.1KB/s 00:00

kube-scheduler 100% 94 37.7KB/s 00:00

kube-controller-manager 100% 483 341.2KB/s 00:00

kube-apiserver 100% 184MB 105.1MB/s 00:01

kubectl 100% 55MB 114.6MB/s 00:00

kube-controller-manager 100% 155MB 115.7MB/s 00:01

kube-scheduler 100% 55MB 103.4MB/s 00:00

ca-key.pem 100% 1679 365.7KB/s 00:00

ca.pem 100% 1359 429.0KB/s 00:00

server-key.pem 100% 1675 1.2MB/s 00:00

server.pem 100% 1643 754.4KB/s 00:00

//Copy the systemd unit files of the three master components: kube-apiserver.service kube-controller-manager.service kube-scheduler.service

[root@master01 k8s]# scp /usr/lib/systemd/system/{kube-apiserver,kube-controller-manager,kube-scheduler}.service root@192.168.200.90:/usr/lib/systemd/system/

root@192.168.200.90's password:

kube-apiserver.service 100% 282 110.1KB/s 00:00

kube-controller-manager.service 100% 317 277.0KB/s 00:00

kube-scheduler.service 100% 281 496.7KB/s 00:00

//On master02

//Edit the kube-apiserver configuration file: set --bind-address and --advertise-address to 192.168.200.90

[root@master02 ~]# cd /opt/kubernetes/cfg/

[root@master02 cfg]# vim kube-apiserver

KUBE_APISERVER_OPTS="--logtostderr=true \

--v=4 \

--etcd-servers=https://192.168.200.100:2379,https://192.168.200.110:2379,https://192.168.200.120:2379 \

--bind-address=192.168.200.90 \

--secure-port=6443 \

--advertise-address=192.168.200.90 \

--allow-privileged=true \

--service-cluster-ip-range=10.0.0.0/24 \

--enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,ResourceQuota,NodeRestriction \

--authorization-mode=RBAC,Node \

--kubelet-https=true \

--enable-bootstrap-token-auth \

--token-auth-file=/opt/kubernetes/cfg/token.csv \

--service-node-port-range=30000-50000 \

--tls-cert-file=/opt/kubernetes/ssl/server.pem \

--tls-private-key-file=/opt/kubernetes/ssl/server-key.pem \

--client-ca-file=/opt/kubernetes/ssl/ca.pem \

--service-account-key-file=/opt/kubernetes/ssl/ca-key.pem \

--etcd-cafile=/opt/etcd/ssl/ca.pem \

--etcd-certfile=/opt/etcd/ssl/server.pem \

--etcd-keyfile=/opt/etcd/ssl/server-key.pem"

//Important: master02 must have the etcd certificates

//Copy the existing etcd certificates from master01 to master02

[root@master01 k8s]# scp -r /opt/etcd/ root@192.168.200.90:/opt/

root@192.168.200.90's password:

etcd 100% 523 274.8KB/s 00:00

etcd 100% 18MB 85.9MB/s 00:00

etcdctl 100% 15MB 71.6MB/s 00:00

ca-key.pem 100% 1679 266.4KB/s 00:00

ca.pem 100% 1265 671.4KB/s 00:00

server-key.pem 100% 1679 516.1KB/s 00:00

server.pem 100% 1338 822.6KB/s 00:00

//Start the three component services on master02

[root@master02 cfg]# systemctl start kube-apiserver.service

[root@master02 cfg]# systemctl start kube-controller-manager.service

[root@master02 cfg]# systemctl start kube-scheduler.service

//Add the environment variable

[root@master02 cfg]# vim /etc/profile

export PATH=$PATH:/opt/kubernetes/bin/

[root@master02 cfg]# source /etc/profile

[root@master02 cfg]# kubectl get node

NAME STATUS ROLES AGE VERSION

192.168.200.110 Ready <none> 30m v1.12.3

192.168.200.120 Ready <none> 18m v1.12.3

2. lb01 configuration

[root@localhost ~]# systemctl stop firewalld.service

[root@localhost ~]# setenforce 0

[root@localhost ~]# hostnamectl set-hostname nginx01

[root@localhost ~]# su

[root@nginx01 ~]# vim /etc/yum.repos.d/nginx.repo

[nginx]

name=nginx repo

baseurl=http://nginx.org/packages/centos/7/$basearch/

gpgcheck=0

[root@nginx01 ~]# yum install nginx -y

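//Add layer-4 forwarding: insert the stream block between the events and http blocks

[root@nginx01 ~]# vim /etc/nginx/nginx.conf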

events {

worker_connections 1024;

}

stream {

log_format main '$remote_addr $upstream_addr - [$time_local] $status $upstream_bytes_sent';

access_log /var/log/nginx/k8s-access.log main;

upstream k8s-apiserver {

server 192.168.200.100:6443;

server 192.168.200.90:6443;

}

server {

listen 6443;

proxy_pass k8s-apiserver;

}

}

http {

[root@nginx01 ~]# systemctl start nginx

//Deploy the keepalived service

[root@nginx01 ~]# yum install keepalived -y

//Modify the configuration file

[root@nginx01 ~]# rz -E

rz waiting to receive.

[root@nginx01 ~]# ls

anaconda-ks.cfg keepalived.conf 模板 图片 下载 桌面

initial-setup-ks.cfg 公共 视频 文档 音乐

[root@nginx01 ~]# cp keepalived.conf /etc/keepalived/keepalived.conf

cp:是否覆盖"/etc/keepalived/keepalived.conf"? yes

//Note: lb01 is the MASTER; its configuration is as follows:

[root@nginx01 ~]# vim /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

# Notification recipient addresses

notification_email {

acassen@firewall.loc

failover@firewall.loc

sysadmin@firewall.loc

}

# Notification sender address

notification_email_from Alexandre.Cassen@firewall.loc

smtp_server 127.0.0.1

smtp_connect_timeout 30

router_id NGINX_MASTER

}

vrrp_script check_nginx {

script "/etc/nginx/check_nginx.sh"

}

vrrp_instance VI_1 {

state MASTER

interface ens33

virtual_router_id 51 # VRRP router ID; unique per instance

priority 100 # Priority; set 90 on the backup server

advert_int 1 # VRRP advertisement (heartbeat) interval; default 1 second

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress {

192.168.200.200/24 ###

}

track_script {

check_nginx ###

}

}

[root@nginx01 ~]# vim /etc/nginx/check_nginx.sh

count=$(ps -ef |grep nginx |egrep -cv "grep|$$")

if [ "$count" -eq 0 ];then

systemctl stop keepalived

fi

[root@nginx01 ~]# chmod +x /etc/nginx/check_nginx.sh

[root@nginx01 ~]# systemctl start keepalived
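The check script above counts `ps` lines and stops keepalived when nginx is gone. An alternative sketch, not the author's script, relies on the exit status instead: keepalived treats a non-zero exit from a `vrrp_script` as a failed check. The function names below are hypothetical, and `pgrep` (from procps) is assumed to be installed.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeeds only if a process with exactly this name
# is currently running.
process_running() {
    pgrep -x "$1" > /dev/null
}

# Hypothetical body for a check script: returns non-zero when nginx is
# not running, which keepalived would interpret as a failed check.
check_nginx() {
    process_running nginx
}
```

A script built this way would end with `check_nginx` as its last command so that the function's status becomes the script's exit status. How keepalived reacts to a failed check (releasing the VIP or only lowering the priority) depends on the `weight` setting, so the behaviour should be verified against the keepalived version in use.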

3. lb02 configuration

[root@promote ~]# systemctl stop firewalld.service

[root@promote ~]# setenforce 0

[root@promote ~]# hostnamectl set-hostname nginx02

[root@promote ~]# su

[root@nginx02 ~]# vim /etc/yum.repos.d/nginx.repo

[nginx]

name=nginx repo

baseurl=http://nginx.org/packages/centos/7/$basearch/

gpgcheck=0

[root@nginx02 ~]# yum install nginx -y

//Add layer-4 forwarding

[root@nginx02 ~]# vim /etc/nginx/nginx.conf

events {

worker_connections 1024;

}

stream {

log_format main '$remote_addr $upstream_addr - [$time_local] $status $upstream_bytes_sent';

access_log /var/log/nginx/k8s-access.log main;

upstream k8s-apiserver {

server 192.168.200.100:6443;

server 192.168.200.90:6443;

}

server {

listen 6443;

proxy_pass k8s-apiserver;

}

}

http {

[root@nginx02 ~]# systemctl restart nginx

//Deploy the keepalived service

[root@nginx02 ~]# yum install keepalived -y

//Modify the configuration file

[root@nginx02 ~]# rz -E

rz waiting to receive.

[root@nginx02 ~]# ls

anaconda-ks.cfg keepalived.conf 模板 图片 下载 桌面

initial-setup-ks.cfg 公共 视频 文档 音乐

[root@nginx02 ~]# cp keepalived.conf /etc/keepalived/keepalived.conf

cp:是否覆盖"/etc/keepalived/keepalived.conf"? yes

[root@nginx02 ~]# vim /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

# Notification recipient addresses

notification_email {

acassen@firewall.loc

failover@firewall.loc

sysadmin@firewall.loc

}

# Notification sender address

notification_email_from Alexandre.Cassen@firewall.loc

smtp_server 127.0.0.1

smtp_connect_timeout 30

router_id NGINX_MASTER

}

vrrp_script check_nginx {

script "/etc/nginx/check_nginx.sh"

}

vrrp_instance VI_1 {

state BACKUP

interface ens33

virtual_router_id 51

priority 90

advert_int 1

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress {

192.168.200.200/24

}

track_script {

check_nginx

}

}

[root@nginx02 ~]# vim /etc/nginx/check_nginx.sh

count=$(ps -ef |grep nginx |egrep -cv "grep|$$")

if [ "$count" -eq 0 ];then

systemctl stop keepalived

fi

[root@nginx02 ~]# chmod +x /etc/nginx/check_nginx.sh

[root@nginx02 ~]# systemctl start keepalived

4. Verify the configuration

//Check the address information on lb01

[root@nginx01 ~]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP group default qlen 1000

link/ether 00:0c:29:79:9c:93 brd ff:ff:ff:ff:ff:ff

inet 192.168.200.80/24 brd 192.168.200.255 scope global noprefixroute ens33

valid_lft forever preferred_lft forever

inet 192.168.200.200/24 scope global secondary ens33 //the floating address (VIP) is on lb01

valid_lft forever preferred_lft forever

inet6 fe80::b948:c5a0:c6f:e7b7/64 scope link noprefixroute

valid_lft forever preferred_lft forever

3: virbr0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state DOWN group default qlen 1000

link/ether 52:54:00:e8:d2:e6 brd ff:ff:ff:ff:ff:ff

inet 192.168.122.1/24 brd 192.168.122.255 scope global virbr0

valid_lft forever preferred_lft forever

4: virbr0-nic: <BROADCAST,MULTICAST> mtu 1500 qdisc pfifo_fast master virbr0 state DOWN group default qlen 1000

link/ether 52:54:00:e8:d2:e6 brd ff:ff:ff:ff:ff:ff

//Check the address information on lb02

[root@nginx02 ~]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP group default qlen 1000

link/ether 00:0c:29:05:81:3c brd ff:ff:ff:ff:ff:ff

inet 192.168.200.70/24 brd 192.168.200.255 scope global noprefixroute ens33

valid_lft forever preferred_lft forever

inet6 fe80::fc8b:3133:2445:5d32/64 scope link noprefixroute

valid_lft forever preferred_lft forever

3: virbr0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state DOWN group default qlen 1000

link/ether 52:54:00:64:ff:7e brd ff:ff:ff:ff:ff:ff

inet 192.168.122.1/24 brd 192.168.122.255 scope global virbr0

valid_lft forever preferred_lft forever

4: virbr0-nic: <BROADCAST,MULTICAST> mtu 1500 qdisc pfifo_fast master virbr0 state DOWN group default qlen 1000

link/ether 52:54:00:64:ff:7e brd ff:ff:ff:ff:ff:ff

//Verify VIP failover (run pkill nginx on lb01, then check with ip a on lb02)

[root@nginx01 ~]# pkill nginx

[root@nginx01 ~]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP group default qlen 1000

link/ether 00:0c:29:79:9c:93 brd ff:ff:ff:ff:ff:ff

inet 192.168.200.80/24 brd 192.168.200.255 scope global noprefixroute ens33

valid_lft forever preferred_lft forever

inet6 fe80::b948:c5a0:c6f:e7b7/64 scope link noprefixroute

valid_lft forever preferred_lft forever

[root@nginx02 ~]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP group default qlen 1000

link/ether 00:0c:29:05:81:3c brd ff:ff:ff:ff:ff:ff

inet 192.168.200.70/24 brd 192.168.200.255 scope global noprefixroute ens33

valid_lft forever preferred_lft forever

inet 192.168.200.200/24 scope global secondary ens33 #the VIP has moved to lb02

//Recovery (on lb01, start nginx first, then keepalived)

[root@nginx01 ~]# systemctl start nginx

[root@nginx01 ~]# systemctl start keepalived

[root@nginx01 ~]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP group default qlen 1000

link/ether 00:0c:29:79:9c:93 brd ff:ff:ff:ff:ff:ff

inet 192.168.200.80/24 brd 192.168.200.255 scope global noprefixroute ens33

valid_lft forever preferred_lft forever

inet 192.168.200.200/24 scope global secondary ens33 #the VIP has moved back
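Scanning the `ip a` listings by eye for the VIP can be replaced by a small filter. This is a minimal sketch that assumes the `inet ADDRESS/PREFIX` line format shown above; `has_vip` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Hypothetical helper: reads `ip a` output on stdin and succeeds if the
# given IPv4 address appears on an "inet ADDRESS/PREFIX" line.
# grep -F matches the string literally, so the dots are not wildcards.
has_vip() {
    grep -qF "inet $1/"
}
```

On either load balancer, `ip -4 addr show ens33 | has_vip 192.168.200.200 && echo "this host holds the VIP"` would then report which machine currently owns the address.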

//nginx site root: /usr/share/nginx/html

5. Point the node configuration files at the VIP

//Update the node configuration files to use the VIP (bootstrap.kubeconfig, kubelet.kubeconfig, kube-proxy.kubeconfig)

[root@node02 cfg]# vim /opt/kubernetes/cfg/bootstrap.kubeconfig

server: https://192.168.200.200:6443

[root@node02 cfg]# vim /opt/kubernetes/cfg/kubelet.kubeconfig

server: https://192.168.200.200:6443

[root@node02 cfg]# vim /opt/kubernetes/cfg/kube-proxy.kubeconfig

server: https://192.168.200.200:6443

[root@node02 cfg]# systemctl restart kube-proxy.service

[root@node02 cfg]# systemctl restart kubelet.service

//Repeat the same changes on node01

[root@node01 ~]# cd /opt/kubernetes/cfg/

[root@node01 cfg]# ls

bootstrap.kubeconfig kubelet kubelet.kubeconfig kube-proxy.kubeconfig

flanneld kubelet.config kube-proxy

[root@node01 cfg]# vim /opt/kubernetes/cfg/bootstrap.kubeconfig

[root@node01 cfg]# vim /opt/kubernetes/cfg/kubelet.kubeconfig

[root@node01 cfg]# vim /opt/kubernetes/cfg/kube-proxy.kubeconfig

server: https://192.168.200.200:6443

[root@node01 cfg]# systemctl restart kubelet.service

[root@node01 cfg]# systemctl restart kube-proxy.service

After the replacement, verify the result

[root@node01 cfg]# grep 200.200 *

bootstrap.kubeconfig: server: https://192.168.200.200:6443

kubelet.kubeconfig: server: https://192.168.200.200:6443

kube-proxy.kubeconfig: server: https://192.168.200.200:6443

[root@node02 cfg]# grep 200.200 *

bootstrap.kubeconfig: server: https://192.168.200.200:6443

kubelet.kubeconfig: server: https://192.168.200.200:6443

kube-proxy.kubeconfig: server: https://192.168.200.200:6443
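The manual vim edits above change one `server:` line in each kubeconfig file. A minimal sketch of the same change with GNU sed, assuming every file contains a line of the form `server: https://<ip>:6443`; `point_to_vip` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Hypothetical helper: rewrites the apiserver address on every
# "server: https://<ip>:6443" line of the given files, in place.
point_to_vip() {
    local vip="$1"
    shift
    sed -i -E "s#(server: https://)[0-9.]+(:6443)#\1${vip}\2#" "$@"
}
```

Run from /opt/kubernetes/cfg on each node, `point_to_vip 192.168.200.200 bootstrap.kubeconfig kubelet.kubeconfig kube-proxy.kubeconfig` followed by restarting kubelet and kube-proxy would correspond to the steps above; the `grep 200.200 *` check then confirms the result.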

//On lb01, check the nginx k8s access log (restarting the node services generates entries)

[root@nginx01 ~]# tail /var/log/nginx/k8s-access.log

192.168.200.110 192.168.200.100:6443 - [08/Oct/2020:04:14:23 +0800] 200 1121

192.168.200.110 192.168.200.90:6443 - [08/Oct/2020:04:14:23 +0800] 200 1120

192.168.200.120 192.168.200.90:6443 - [08/Oct/2020:04:14:40 +0800] 200 1120

192.168.200.120 192.168.200.90:6443 - [08/Oct/2020:04:14:40 +0800] 200 1121
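To see how connections are spread over the two apiservers, the log can be tallied per upstream. This sketch assumes the `log_format` defined in the stream block earlier, where the second field is `$upstream_addr`; `upstream_counts` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Hypothetical helper: reads k8s-access.log lines on stdin and prints
# "<upstream_addr> <connection count>" per upstream, sorted by address.
upstream_counts() {
    awk 'NF >= 2 { count[$2]++ }
         END { for (u in count) print u, count[u] }' | LC_ALL=C sort
}
```

For example, `upstream_counts < /var/log/nginx/k8s-access.log`; for the four log lines shown above it reports one connection to 192.168.200.100:6443 and three to 192.168.200.90:6443.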

6. Status check

//On master01

//Create a test pod

[root@master01 ~]# kubectl run nginx --image=nginx

kubectl run --generator=deployment/apps.v1beta1 is DEPRECATED and will be removed in a future version. Use kubectl create instead.

deployment.apps/nginx created

//Check the status

[root@master01 ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

nginx-dbddb74b8-rksr7 1/1 Running 0 39s

Note: viewing the pod logs at this point fails with an error

[root@master01 ~]# kubectl logs nginx-dbddb74b8-rksr7

Error from server (Forbidden): Forbidden (user=system:anonymous, verb=get, resource=nodes, subresource=proxy) ( pods/log nginx-dbddb74b8-rksr7)

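Workaround for this lab: bind the anonymous user to cluster-admin (this grants full cluster access to unauthenticated requests, so it is only suitable for a test environment)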

[root@master01 ~]# kubectl create clusterrolebinding cluster-system-anonymous --clusterrole=cluster-admin --user=system:anonymous

clusterrolebinding.rbac.authorization.k8s.io/cluster-system-anonymous created

//View the logs again

[root@master01 ~]# kubectl logs nginx-dbddb74b8-rksr7

/docker-entrypoint.sh: /docker-entrypoint.d/ is not empty, will attempt to perform configuration

/docker-entrypoint.sh: Looking for shell scripts in /docker-entrypoint.d/

/docker-entrypoint.sh: Launching /docker-entrypoint.d/10-listen-on-ipv6-by-default.sh

10-listen-on-ipv6-by-default.sh: Getting the checksum of /etc/nginx/conf.d/default.conf

10-listen-on-ipv6-by-default.sh: Enabled listen on IPv6 in /etc/nginx/conf.d/default.conf

/docker-entrypoint.sh: Launching /docker-entrypoint.d/20-envsubst-on-templates.sh

/docker-entrypoint.sh: Configuration complete; ready for start up

//Check the pod network

[root@master01 ~]# kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE

nginx-dbddb74b8-rksr7 1/1 Running 0 3m2s 172.17.25.2 192.168.200.110 <none>

//The pod can be reached directly from a node on the corresponding subnet

[root@node01 cfg]# curl 172.17.25.2

<!DOCTYPE html>

<html>

<head>

<title>Welcome to nginx!</title>

<style>

body {

width: 35em;

margin: 0 auto;

font-family: Tahoma, Verdana, Arial, sans-serif;

}

</style>

</head>

<body>

<h1>Welcome to nginx!</h1>

<p>If you see this page, the nginx web server is successfully installed and

working. Further configuration is required.</p>

<p>For online documentation and support please refer to

<a href="http://nginx.org/">nginx.org</a>.<br/>

Commercial support is available at

<a href="http://nginx.com/">nginx.com</a>.</p>

<p><em>Thank you for using nginx.</em></p>

</body>

</html>

// Each request to the pod produces an access-log entry

// Back on master01

[root@master01 ~]# kubectl logs nginx-dbddb74b8-rksr7

/docker-entrypoint.sh: /docker-entrypoint.d/ is not empty, will attempt to perform configuration
/docker-entrypoint.sh: Looking for shell scripts in /docker-entrypoint.d/
/docker-entrypoint.sh: Launching /docker-entrypoint.d/10-listen-on-ipv6-by-default.sh
10-listen-on-ipv6-by-default.sh: Getting the checksum of /etc/nginx/conf.d/default.conf
10-listen-on-ipv6-by-default.sh: Enabled listen on IPv6 in /etc/nginx/conf.d/default.conf
/docker-entrypoint.sh: Launching /docker-entrypoint.d/20-envsubst-on-templates.sh
/docker-entrypoint.sh: Configuration complete; ready for start up
172.17.25.1 - - [07/Oct/2020:20:21:12 +0000] "GET / HTTP/1.1" 200 612 "-" "curl/7.29.0" "-"

</code></pre>
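The last line of the `kubectl logs` output above is an nginx access-log entry for the `curl` request made from node01. As a minimal sketch, assuming the log keeps the layout shown in that line, the fields can be pulled apart with `awk`. The function name `parse_access_line` is hypothetical and is not part of the original deployment scripts.

```shell
#!/bin/sh
# Hypothetical helper: summarize one nginx access-log line.
# Assumes the whitespace-separated layout seen in the log output above:
#   client - - [time zone] "METHOD path PROTO" status bytes ...
parse_access_line() {
    printf '%s\n' "$1" | awk '{
        gsub(/"/, "", $6)   # drop the opening quote in front of the method
        print "client=" $1, "method=" $6, "path=" $7, "status=" $9, "bytes=" $10
    }'
}

# Sample line copied from the kubectl logs output above
line='172.17.25.1 - - [07/Oct/2020:20:21:12 +0000] "GET / HTTP/1.1" 200 612 "-" "curl/7.29.0" "-"'
parse_access_line "$line"
```

On master01 the same function could be fed from `kubectl logs nginx-dbddb74b8-rksr7 --tail=1`. The client address `172.17.25.1` sits in the same 172.17.25.0/24 range as the pod IP, which is consistent with the request having come from node01 itself.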

</div>

</div>

</div>

</div>

</div>


</div>


</div>

</div>

</div>

<div class="container">


<div id="paradigm-article-related">

<div class="recommend-post mb30">

<ul class="widget-links">


<li><a href="/article/1892638206986350592.htm"

title="Docker部署Kibana8" target="_blank">Docker部署Kibana8</a>

<span class="text-muted">GitIDEA</span>

<a class="tag" taget="_blank" href="/search/docker/1.htm">docker</a><a class="tag" taget="_blank" href="/search/%E5%AE%B9%E5%99%A8/1.htm">容器</a><a class="tag" taget="_blank" href="/search/%E8%BF%90%E7%BB%B4/1.htm">运维</a>