好好学习第5天:Xception动物识别

>- ** 本文为[365天深度学习训练营](https://mp.weixin.qq.com/s/k-vYaC8l7uxX51WoypLkTw) 中的学习记录博客**

活动地址:CSDN21天学习挑战赛

一、Xception介绍

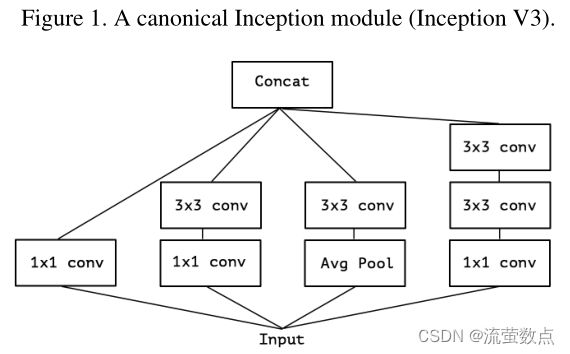

Xception作为Inception v3的改进,主要是在Inception v3的基础上引入了depthwise separable convolution,在基本不增加网络复杂度的前提下提高了模型的效果。

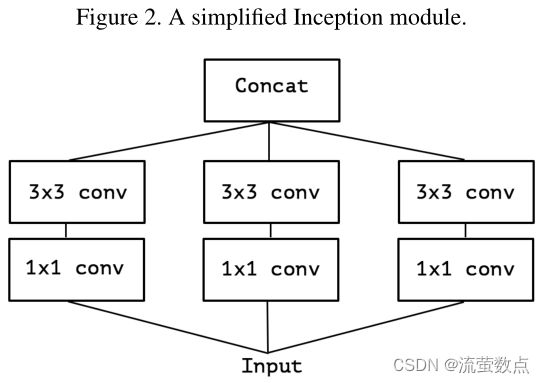

简化 的Inception模块

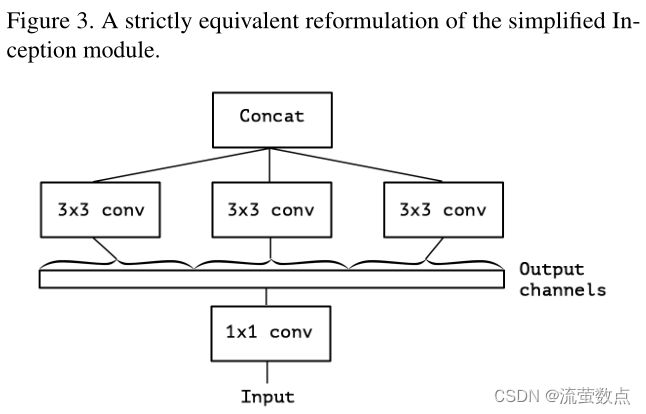

简化 的Inception模块的严格重构

Inception 模块的“极端”版本,1x1 卷积的每个输出通道都有一个空间卷积。

参考论文:Xception: Deep Learning with Depthwise Separable Convolutions

将卷积神经网络中的 Inception 模块解释为介于常规卷积和深度可分离卷积操作(深度卷积后跟点卷积)之间的中间步骤。从这个角度来看,深度可分离卷积可以理解为具有最大数量的塔的 Inception 模块。这一观察使我们提出了一种受 Inception 启发的新型深度卷积神经网络架构,其中 Inception 模块已被深度可分离卷积取代。我们表明,这种被称为 Xception 的架构在 ImageNet 数据集(Inception V3 的设计目标)上略微优于 Inception V3,并且在包含 3.5 亿张图像和 17,000 个类别的更大图像分类数据集上显着优于 Inception V3。由于 Xception 架构与 Inception V3 具有相同数量的参数,因此性能提升不是由于容量增加,而是更有效地使用模型参数。

深度可分离卷积,由深度卷积组成,即在输入的每个通道上独立执行的空间卷积,然后是逐点卷积,即 1x1 卷积,将深度卷积输出的通道投影到新的通道空间上。不要将其与空间可分离卷积混淆,后者在图像处理社区中通常也称为“可分离卷积”。

Xception网络结构:

Xception 架构有 36 个卷积层,构成网络的特征提取基础。在论文的实验评估中,专门研究图像分类,因此卷积基础之后将是逻辑回归层。可以选择在逻辑回归层之前插入全连接层。36 个卷积层被构造成 14 个模块,除了第一个和最后一个模块外,所有这些模块周围都有线性残差连接。

二、实验过程

1.导入库

# 查看当前kernel下已安装的包 list packages

!pip list --format=columnspip install tensorflow==2.4.1刚开始使用的tensorflow版本是1.4.0,后面会报错。

import matplotlib.pyplot as plt

# 支持中文

plt.rcParams['font.sans-serif'] = ['SimHei'] # 用来正常显示中文标签

plt.rcParams['axes.unicode_minus'] = False # 用来正常显示负号

import os,PIL

# 设置随机种子尽可能使结果可以重现

import numpy as np

np.random.seed(1)

# 设置随机种子尽可能使结果可以重现

import tensorflow as tf

tf.random.set_seed(1)

import pathlib2.导入数据集

data_dir = "data-xception"

data_dir = pathlib.Path(data_dir)

image_count = len(list(data_dir.glob('*/*')))

print("图片总数为:",image_count)

应该是4000,不知道为啥只有3998。

batch_size = 2

img_height = 299

img_width = 299

就下面这一段代码需要tensorflow版本2.2.0以上

"""

关于image_dataset_from_directory()的详细介绍可以参考文章:https://mtyjkh.blog.csdn.net/article/details/117018789

"""

train_ds = tf.keras.preprocessing.image_dataset_from_directory(

data_dir,

validation_split=0.2,

subset="training",

seed=12,

image_size=(img_height, img_width),

batch_size=batch_size)

"""

关于image_dataset_from_directory()的详细介绍可以参考文章:https://mtyjkh.blog.csdn.net/article/details/117018789

"""

val_ds = tf.keras.preprocessing.image_dataset_from_directory(

data_dir,

validation_split=0.2,

subset="validation",

seed=12,

image_size=(img_height, img_width),

batch_size=batch_size)

class_names = train_ds.class_names

print(class_names)

![]()

为什么会出现“.ipynb_checkpoints”?

for image_batch, labels_batch in train_ds:

print(image_batch.shape)

print(labels_batch.shape)

break

AUTOTUNE = tf.data.AUTOTUNE

train_ds = (

train_ds.cache()

.shuffle(1000)

# .map(train_preprocessing) # 这里可以设置预处理函数

# .batch(batch_size) # 在image_dataset_from_directory处已经设置了batch_size

.prefetch(buffer_size=AUTOTUNE)

)

val_ds = (

val_ds.cache()

.shuffle(1000)

# .map(val_preprocessing) # 这里可以设置预处理函数

# .batch(batch_size) # 在image_dataset_from_directory处已经设置了batch_size

.prefetch(buffer_size=AUTOTUNE)

)

3.网络结构

#====================================#

# Xception的网络部分

#====================================#

from tensorflow.keras.preprocessing import image

from tensorflow.keras.models import Model

from tensorflow.keras import layers

from tensorflow.keras.layers import Dense,Input,BatchNormalization,Activation,Conv2D,SeparableConv2D,MaxPooling2D

from tensorflow.keras.layers import GlobalAveragePooling2D,GlobalMaxPooling2D

from tensorflow.keras import backend as K

from tensorflow.keras.applications.imagenet_utils import decode_predictions

def Xception(input_shape = [299,299,3],classes=1000):

img_input = Input(shape=input_shape)

#=================#

# Entry flow

#=================#

# block1

# 299,299,3 -> 149,149,64

x = Conv2D(32, (3, 3), strides=(2, 2), use_bias=False, name='block1_conv1')(img_input)

x = BatchNormalization(name='block1_conv1_bn')(x)

x = Activation('relu', name='block1_conv1_act')(x)

x = Conv2D(64, (3, 3), use_bias=False, name='block1_conv2')(x)

x = BatchNormalization(name='block1_conv2_bn')(x)

x = Activation('relu', name='block1_conv2_act')(x)

# block2

# 149,149,64 -> 75,75,128

residual = Conv2D(128, (1, 1), strides=(2, 2), padding='same', use_bias=False)(x)

residual = BatchNormalization()(residual)

x = SeparableConv2D(128, (3, 3), padding='same', use_bias=False, name='block2_sepconv1')(x)

x = BatchNormalization(name='block2_sepconv1_bn')(x)

x = Activation('relu', name='block2_sepconv2_act')(x)

x = SeparableConv2D(128, (3, 3), padding='same', use_bias=False, name='block2_sepconv2')(x)

x = BatchNormalization(name='block2_sepconv2_bn')(x)

x = MaxPooling2D((3, 3), strides=(2, 2), padding='same', name='block2_pool')(x)

x = layers.add([x, residual])

# block3

# 75,75,128 -> 38,38,256

residual = Conv2D(256, (1, 1), strides=(2, 2),padding='same', use_bias=False)(x)

residual = BatchNormalization()(residual)

x = Activation('relu', name='block3_sepconv1_act')(x)

x = SeparableConv2D(256, (3, 3), padding='same', use_bias=False, name='block3_sepconv1')(x)

x = BatchNormalization(name='block3_sepconv1_bn')(x)

x = Activation('relu', name='block3_sepconv2_act')(x)

x = SeparableConv2D(256, (3, 3), padding='same', use_bias=False, name='block3_sepconv2')(x)

x = BatchNormalization(name='block3_sepconv2_bn')(x)

x = MaxPooling2D((3, 3), strides=(2, 2), padding='same', name='block3_pool')(x)

x = layers.add([x, residual])

# block4

# 38,38,256 -> 19,19,728

residual = Conv2D(728, (1, 1), strides=(2, 2),padding='same', use_bias=False)(x)

residual = BatchNormalization()(residual)

x = Activation('relu', name='block4_sepconv1_act')(x)

x = SeparableConv2D(728, (3, 3), padding='same', use_bias=False, name='block4_sepconv1')(x)

x = BatchNormalization(name='block4_sepconv1_bn')(x)

x = Activation('relu', name='block4_sepconv2_act')(x)

x = SeparableConv2D(728, (3, 3), padding='same', use_bias=False, name='block4_sepconv2')(x)

x = BatchNormalization(name='block4_sepconv2_bn')(x)

x = MaxPooling2D((3, 3), strides=(2, 2), padding='same', name='block4_pool')(x)

x = layers.add([x, residual])

#=================#

# Middle flow

#=================#

# block5--block12

# 19,19,728 -> 19,19,728

for i in range(8):

residual = x

prefix = 'block' + str(i + 5)

x = Activation('relu', name=prefix + '_sepconv1_act')(x)

x = SeparableConv2D(728, (3, 3), padding='same', use_bias=False, name=prefix + '_sepconv1')(x)

x = BatchNormalization(name=prefix + '_sepconv1_bn')(x)

x = Activation('relu', name=prefix + '_sepconv2_act')(x)

x = SeparableConv2D(728, (3, 3), padding='same', use_bias=False, name=prefix + '_sepconv2')(x)

x = BatchNormalization(name=prefix + '_sepconv2_bn')(x)

x = Activation('relu', name=prefix + '_sepconv3_act')(x)

x = SeparableConv2D(728, (3, 3), padding='same', use_bias=False, name=prefix + '_sepconv3')(x)

x = BatchNormalization(name=prefix + '_sepconv3_bn')(x)

x = layers.add([x, residual])

#=================#

# Exit flow

#=================#

# block13

# 19,19,728 -> 10,10,1024

residual = Conv2D(1024, (1, 1), strides=(2, 2),

padding='same', use_bias=False)(x)

residual = BatchNormalization()(residual)

x = Activation('relu', name='block13_sepconv1_act')(x)

x = SeparableConv2D(728, (3, 3), padding='same', use_bias=False, name='block13_sepconv1')(x)

x = BatchNormalization(name='block13_sepconv1_bn')(x)

x = Activation('relu', name='block13_sepconv2_act')(x)

x = SeparableConv2D(1024, (3, 3), padding='same', use_bias=False, name='block13_sepconv2')(x)

x = BatchNormalization(name='block13_sepconv2_bn')(x)

x = MaxPooling2D((3, 3), strides=(2, 2), padding='same', name='block13_pool')(x)

x = layers.add([x, residual])

# block14

# 10,10,1024 -> 10,10,2048

x = SeparableConv2D(1536, (3, 3), padding='same', use_bias=False, name='block14_sepconv1')(x)

x = BatchNormalization(name='block14_sepconv1_bn')(x)

x = Activation('relu', name='block14_sepconv1_act')(x)

x = SeparableConv2D(2048, (3, 3), padding='same', use_bias=False, name='block14_sepconv2')(x)

x = BatchNormalization(name='block14_sepconv2_bn')(x)

x = Activation('relu', name='block14_sepconv2_act')(x)

x = GlobalAveragePooling2D(name='avg_pool')(x)

x = Dense(classes, activation='softmax', name='predictions')(x)

inputs = img_input

model = Model(inputs, x, name='xception')

return model

model = Xception()

# 打印模型信息

model.summary()

4.训练过程

# 设置初始学习率

initial_learning_rate = 1e-4

lr_schedule = tf.keras.optimizers.schedules.ExponentialDecay(

initial_learning_rate,

decay_steps=300, # 敲黑板!!!这里是指 steps,不是指epochs

decay_rate=0.96, # lr经过一次衰减就会变成 decay_rate*lr

staircase=True)

# 将指数衰减学习率送入优化器

optimizer = tf.keras.optimizers.Adam(learning_rate=lr_schedule)

model.compile(optimizer=optimizer,

loss ='sparse_categorical_crossentropy',

metrics =['accuracy'])epochs = 3

history = model.fit(

train_ds,

validation_data=val_ds,

epochs=epochs

)

才训练两下就搞不动了,CPU不给力啊,我真的会谢!

5.评估

# 模型评估

acc = history.history['accuracy']

val_acc = history.history['val_accuracy']

loss = history.history['loss']

val_loss = history.history['val_loss']

epochs_range = range(epochs)

plt.figure(figsize=(12, 4))

plt.subplot(1, 2, 1)

plt.plot(epochs_range, acc, label='Training Accuracy')

plt.plot(epochs_range, val_acc, label='Validation Accuracy')

plt.legend(loc='lower right')

plt.title('Training and Validation Accuracy')

plt.subplot(1, 2, 2)

plt.plot(epochs_range, loss, label='Training Loss')

plt.plot(epochs_range, val_loss, label='Validation Loss')

plt.legend(loc='upper right')

plt.title('Training and Validation Loss')

plt.show()

等我搞出来上一步!