Li Mu d2l (11): Anchor Boxes

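Contents

- I. Concepts
- II. Code
  - 1 Generating anchor boxes
  - 2 IoU (Intersection over Union)
  - 3 Assigning ground-truth bounding boxes to anchor boxes
  - 4 Labeling classes and offsets
  - 5 Applying the inverse offset transform to obtain predicted bounding-box coordinates
  - 6 NMS
  - 7 Applying non-maximum suppression to predicted bounding boxes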

I. Concepts

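Object detection algorithms usually sample a large number of regions in the input image, decide whether each region contains an object of interest, and adjust the region's edges to predict the object's ground-truth bounding box more accurately. One such sampling scheme, used here, generates several boxes with different scales and aspect ratios centered on each pixel; these sampled boxes are the anchor boxes.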

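For each anchor box the model makes two predictions:

- Class: the class of the object contained in the anchor box
- Location: the offset from the anchor box to the ground-truth bounding box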

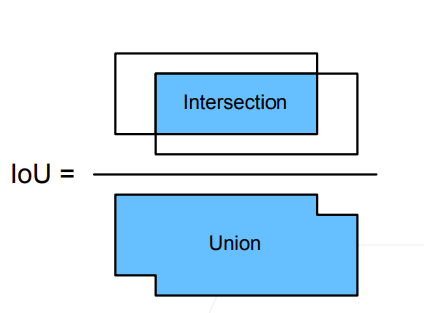

IoU: Intersection over Union

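IoU measures the similarity between two boxes: it is the area of their intersection divided by the area of their union, $\mathrm{IoU}(A, B) = |A \cap B| / |A \cup B|$. A value of 0 means the boxes do not overlap and 1 means they coincide.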
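As a minimal illustration of the definition, here is a plain-Python IoU for a single pair of boxes. It assumes the corner format (xmin, ymin, xmax, ymax) that the code section uses; the function name `iou` is only for this sketch.

```python
def iou(box_a, box_b):
    """IoU of two boxes in corner format (xmin, ymin, xmax, ymax)."""
    # Intersection rectangle: the larger of the mins, the smaller of the maxes
    ix_min = max(box_a[0], box_b[0])
    iy_min = max(box_a[1], box_b[1])
    ix_max = min(box_a[2], box_b[2])
    iy_max = min(box_a[3], box_b[3])
    # Clamp at 0 so that disjoint boxes have zero intersection
    inter = max(0.0, ix_max - ix_min) * max(0.0, iy_max - iy_min)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union


print(iou((0, 0, 2, 2), (0, 0, 2, 2)))  # 1.0: identical boxes
print(iou((0, 0, 1, 1), (2, 2, 3, 3)))  # 0.0: no overlap
print(iou((0, 0, 2, 2), (1, 1, 3, 3)))  # 1/7: intersection 1, union 4 + 4 - 1
```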

Labeling anchor boxes

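In the training set, each anchor box is treated as one training example. To train an object detection model, every anchor box needs two labels: a class and an offset (the offset of the ground-truth bounding box relative to the anchor box).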

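At prediction time, we first generate multiple anchor boxes for each image, predict the class and offset of every anchor box, shift each anchor box by its predicted offset to obtain a predicted bounding box, and finally output only the predicted bounding boxes that satisfy certain criteria.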

Procedure

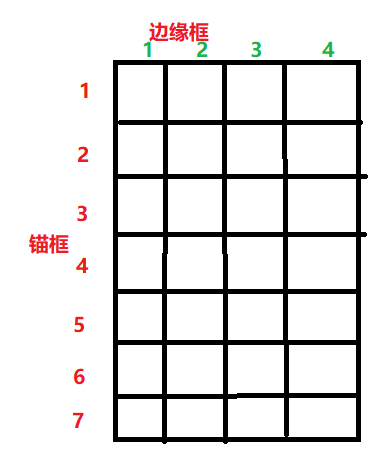

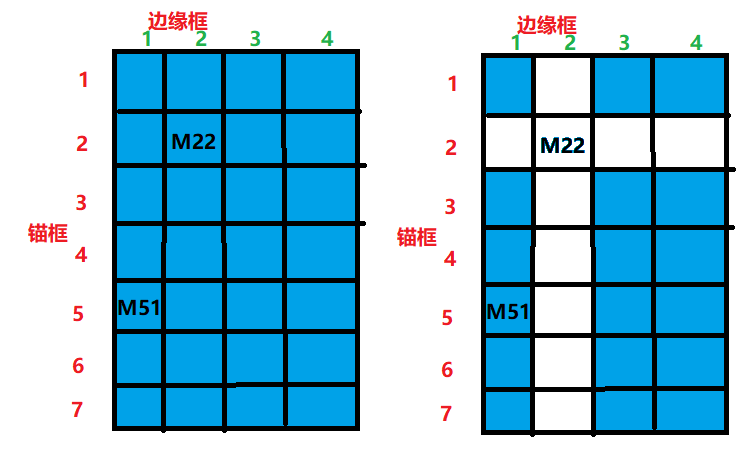

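In the figure there are 4 bounding boxes, one per object, and 7 anchor boxes: each column of the IoU matrix corresponds to one bounding box and holds its IoU with each of the 7 anchor boxes.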

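First compute the IoU between every anchor box and every bounding box; the blue cells are the computed IoU values. Suppose M22 is the largest IoU: anchor box 2 is then responsible for predicting bounding box 2, and row 2 and column 2 of the matrix are removed (right panel).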

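Now M51 is the largest remaining IoU, so anchor box 5 is responsible for predicting bounding box 1. Continue in the same way until every bounding box (every column) has been assigned an anchor box. Each remaining anchor box is then assigned the bounding box with which it has the highest IoU, provided that IoU reaches a threshold (0.5 by default in `assign_anchor_to_bbox`); otherwise it is labeled as background.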
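The assignment procedure can be sketched in plain Python on an IoU matrix with one row per anchor box and one column per bounding box. This is an illustrative sketch rather than the post's code: the name `assign_greedy`, the default threshold of 0.5 and the toy matrix are assumptions, and it assumes there are at least as many anchor boxes as bounding boxes.

```python
def assign_greedy(iou, threshold=0.5):
    """Map each anchor (row of iou) to a box index (column) or -1 for background.

    Assumes len(iou) >= len(iou[0]), i.e. at least as many anchors as boxes.
    """
    num_anchors, num_boxes = len(iou), len(iou[0])
    assignment = [-1] * num_anchors
    m = [row[:] for row in iou]  # working copy whose rows/columns get discarded
    # Greedy step: take the global maximum, assign it, discard its row and column
    for _ in range(num_boxes):
        flat = [v for row in m for v in row]
        flat_idx = max(range(len(flat)), key=flat.__getitem__)
        anc, box = divmod(flat_idx, num_boxes)  # row-major index -> (row, col)
        assignment[anc] = box
        m[anc] = [-1.0] * num_boxes
        for row in m:
            row[box] = -1.0
    # Threshold step: leftover anchors take their best box if IoU >= threshold
    for i, row in enumerate(iou):
        if assignment[i] == -1:
            best = max(range(num_boxes), key=row.__getitem__)
            if row[best] >= threshold:
                assignment[i] = best
    return assignment


ious = [
    [0.1, 0.0, 0.6],   # anchor 0
    [0.7, 0.2, 0.0],   # anchor 1
    [0.0, 0.3, 0.1],   # anchor 2
    [0.55, 0.1, 0.0],  # anchor 3
]
print(assign_greedy(ious))  # [2, 0, 1, 0]
```

Anchor 2 ends up with box 1 even though their IoU is only 0.3, because the greedy step guarantees every bounding box at least one anchor; anchor 3 is labeled only by the threshold step.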

Output with non-maximum suppression (NMS)

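Each anchor box yields one predicted bounding box, and many of these predictions are near-duplicates of each other. NMS merges similar predictions so that the output is cleaner.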

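Procedure:

- Select the remaining prediction with the highest non-background class confidence.
- Remove every other prediction whose IoU with it is greater than θ (that is, drop the boxes that are too similar to the selected one).
- Repeat on the remaining predictions until every prediction has been either selected or removed.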
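The three steps can be sketched in plain Python. This is a minimal illustration, assuming `scores` already holds each predicted box's highest non-background confidence and boxes are in corner format; the helper names and the four toy boxes are made up for the example. Like the `nms` function in the code section, it keeps a box when its IoU with the selected box is at most θ.

```python
def box_area(b):
    """Area of a box in corner format (xmin, ymin, xmax, ymax)."""
    return (b[2] - b[0]) * (b[3] - b[1])


def iou(a, b):
    """IoU of two boxes in corner format."""
    w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = w * h
    return inter / (box_area(a) + box_area(b) - inter)


def nms_sketch(boxes, scores, theta):
    """Greedy NMS: return indices of kept boxes, highest score first."""
    # Candidate indices by descending score (sorted() is stable on ties)
    order = sorted(range(len(boxes)), key=lambda i: -scores[i])
    keep = []
    while order:
        best = order.pop(0)  # highest-scoring remaining prediction
        keep.append(best)
        # Remove every remaining prediction whose IoU with it exceeds theta
        order = [i for i in order if iou(boxes[best], boxes[i]) <= theta]
    return keep


toy_boxes = [(0.1, 0.08, 0.52, 0.92), (0.08, 0.2, 0.56, 0.95),
             (0.15, 0.3, 0.62, 0.91), (0.55, 0.2, 0.9, 0.88)]
toy_scores = [0.9, 0.8, 0.7, 0.9]
print(nms_sketch(toy_boxes, toy_scores, theta=0.5))  # [0, 3]
```

Boxes 1 and 2 overlap box 0 with IoU of roughly 0.74 and 0.55, so with θ = 0.5 both are suppressed, while box 3 does not overlap box 0 and survives.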

II. Code

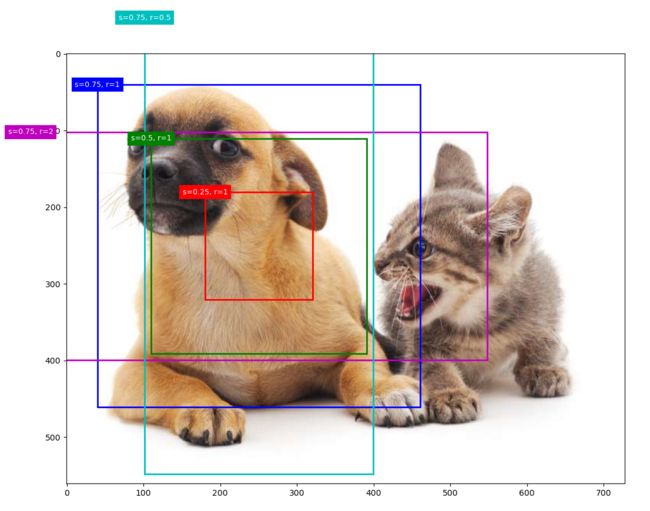

1 Generating anchor boxes

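Anchor boxes of different widths and heights are generated centered on each pixel. Let the input image have width $w$ and height $h$, let $s \in (0, 1]$ be the scale (the size of the anchor box relative to the image) and $r > 0$ the aspect ratio (width to height). The anchor box then has width $ws\sqrt{r}$ and height $hs/\sqrt{r}$. Pairing every one of $n$ scales $s_1, \ldots, s_n$ with every one of $m$ ratios $r_1, \ldots, r_m$ would give $nm$ anchor boxes per pixel, which is too many, so in practice only the combinations that contain $s_1$ or $r_1$ are used:

$$(s_1, r_1), (s_1, r_2), \ldots, (s_1, r_m), (s_2, r_1), (s_3, r_1), \ldots, (s_n, r_1)$$

This gives $n + m - 1$ anchor boxes per pixel (`boxes_per_pixel` in the code).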
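The (s, r) combinations can be enumerated in a few lines of plain Python. This sketch, with the hypothetical helper `anchor_shapes`, follows the formula in the text and reports each anchor's width and height as fractions of the image width and height. Note that `multibox_prior` additionally multiplies the normalized widths by `in_height / in_width`, which makes each anchor's width-to-height ratio in pixels exactly r on non-square images. The 561 x 728 size is the one printed for catdog.jpg in this post.

```python
import math


def anchor_shapes(sizes, ratios):
    """Return (s, r, width, height) for the anchor shapes used at every pixel.

    Widths/heights are fractions of the image width/height (s*sqrt(r), s/sqrt(r)).
    Only pairs containing sizes[0] or ratios[0] are used.
    """
    pairs = ([(s, ratios[0]) for s in sizes]
             + [(sizes[0], r) for r in ratios[1:]])
    return [(s, r, s * math.sqrt(r), s / math.sqrt(r)) for s, r in pairs]


shapes = anchor_shapes(sizes=[0.75, 0.5, 0.25], ratios=[1, 2, 0.5])
print(len(shapes))  # 5 = 3 + 3 - 1
for s, r, w_frac, h_frac in shapes:
    print(f"s={s}, r={r}: width {w_frac:.3f} x image width, "
          f"height {h_frac:.3f} x image height")

# Total anchors for the 561 x 728 (height x width) image: one set per pixel
print(561 * 728 * len(shapes))  # 2042040
```

The order of the pairs matches the labels used when drawing the five anchors centered on pixel (250, 250) in the code that follows.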

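```python
import torch
from d2l import torch as d2l


def multibox_prior(data, sizes, ratios):
    """Generate anchor boxes of different shapes centered on each pixel."""
    in_height, in_width = data.shape[-2:]
    device, num_sizes, num_ratios = data.device, len(sizes), len(ratios)
    boxes_per_pixel = (num_sizes + num_ratios - 1)
    size_tensor = torch.tensor(sizes, device=device)
    ratio_tensor = torch.tensor(ratios, device=device)
    # A pixel has height and width 1, so its center is offset by 0.5
    offset_h, offset_w = 0.5, 0.5
    steps_h = 1.0 / in_height  # normalized step along the y axis
    steps_w = 1.0 / in_width   # normalized step along the x axis

    # Normalized center coordinates of all pixels
    center_h = (torch.arange(in_height, device=device) + offset_h) * steps_h
    center_w = (torch.arange(in_width, device=device) + offset_w) * steps_w
    # indexing='ij' (PyTorch >= 1.10) keeps the old default and avoids a warning
    shift_y, shift_x = torch.meshgrid(center_h, center_w, indexing='ij')
    shift_y, shift_x = shift_y.reshape(-1), shift_x.reshape(-1)

    # Widths and heights of the boxes_per_pixel shapes; the
    # in_height / in_width factor handles rectangular inputs
    w = torch.cat((size_tensor * torch.sqrt(ratio_tensor[0]),
                   sizes[0] * torch.sqrt(ratio_tensor[1:]))) \
        * in_height / in_width
    h = torch.cat((size_tensor / torch.sqrt(ratio_tensor[0]),
                   sizes[0] / torch.sqrt(ratio_tensor[1:])))
    # Half-width/half-height offsets (xmin, ymin, xmax, ymax) from the center
    anchor_manipulations = torch.stack(
        (-w, -h, w, h)).T.repeat(in_height * in_width, 1) / 2

    # Repeat each center boxes_per_pixel times, once per anchor shape
    out_grid = torch.stack([shift_x, shift_y, shift_x, shift_y],
                           dim=1).repeat_interleave(boxes_per_pixel, dim=0)
    output = out_grid + anchor_manipulations
    return output.unsqueeze(0)  # (1, in_height * in_width * boxes_per_pixel, 4)
```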

Display all anchor boxes centered on one pixel

```python
img = d2l.plt.imread('catdog.jpg')
h, w = img.shape[:2]  # 561, 728 for this image
X = torch.rand(size=(1, 3, h, w))
# 3 sizes + 3 ratios - 1 = 5 anchors per pixel
Y = multibox_prior(X, sizes=[0.75, 0.5, 0.25], ratios=[1, 2, 0.5])
print(Y.shape)  # torch.Size([1, 2042040, 4])
# Reshape to (image height, image width, anchors per pixel, 4 coordinates)
boxes = Y.reshape(h, w, 5, 4)
print(boxes[250, 250, 0, :])  # approx. [0.06, 0.07, 0.63, 0.82]


def show_bboxes(axes, bboxes, labels=None, colors=None):
    """Show all bounding boxes."""
    def _make_list(obj, default_values=None):
        if obj is None:
            obj = default_values
        elif not isinstance(obj, (list, tuple)):
            obj = [obj]
        return obj

    labels = _make_list(labels)
    colors = _make_list(colors, ['b', 'g', 'r', 'm', 'c'])
    for i, bbox in enumerate(bboxes):
        color = colors[i % len(colors)]
        rect = d2l.bbox_to_rect(bbox.detach().numpy(), color)
        axes.add_patch(rect)
        if labels and len(labels) > i:
            # Black text on a white label, white text otherwise
            text_color = 'k' if color == 'w' else 'w'
            axes.text(rect.xy[0], rect.xy[1], labels[i], va='center',
                      ha='center', fontsize=9, color=text_color,
                      bbox=dict(facecolor=color, lw=0))


d2l.set_figsize()
# Scale normalized coordinates back to pixels
bbox_scale = torch.tensor((w, h, w, h))
fig = d2l.plt.imshow(img)
# The five anchors centered on pixel (250, 250)
show_bboxes(fig.axes, boxes[250, 250, :, :] * bbox_scale, [
    's=0.75, r=1', 's=0.5, r=1', 's=0.25, r=1', 's=0.75, r=2', 's=0.75, r=0.5'
])
d2l.plt.show()
```

2 IoU (Intersection over Union)

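```python
def box_iou(boxes1, boxes2):
    """Compute pairwise IoU between two lists of anchor or bounding boxes.

    boxes1: (n, 4), boxes2: (m, 4), both in corner format
    (xmin, ymin, xmax, ymax). Returns an (n, m) tensor.
    """
    box_area = lambda boxes: ((boxes[:, 2] - boxes[:, 0]) *
                              (boxes[:, 3] - boxes[:, 1]))
    areas1 = box_area(boxes1)  # (n,) areas of the boxes in boxes1
    areas2 = box_area(boxes2)  # (m,) areas of the boxes in boxes2
    # Broadcasting (n, 1, 2) against (m, 2) gives (n, m, 2)
    inter_upperlefts = torch.max(boxes1[:, None, :2], boxes2[:, :2])
    inter_lowerrights = torch.min(boxes1[:, None, 2:], boxes2[:, 2:])
    # Clamp at 0 so that non-overlapping pairs get zero width/height
    inters = (inter_lowerrights - inter_upperlefts).clamp(min=0)
    inter_areas = inters[:, :, 0] * inters[:, :, 1]  # (n, m) intersection areas
    # Union = sum of the two areas minus the intersection
    union_areas = areas1[:, None] + areas2 - inter_areas
    return inter_areas / union_areas
```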

3 Assigning ground-truth bounding boxes to anchor boxes

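```python
def assign_anchor_to_bbox(ground_truth, anchors, device, iou_threshold=0.5):
    """Assign the closest ground-truth bounding box to each anchor box.

    Returns a (num_anchors,) tensor holding a ground-truth index or -1.
    """
    num_anchors, num_gt_boxes = anchors.shape[0], ground_truth.shape[0]
    # jaccard[i, j]: IoU of anchor i and ground-truth box j
    jaccard = box_iou(anchors, ground_truth)
    # -1 means "not assigned" (background)
    anchors_bbox_map = torch.full((num_anchors,), -1, dtype=torch.long,
                                  device=device)
    # Anchors whose best IoU reaches the threshold take that box
    max_ious, indices = torch.max(jaccard, dim=1)
    anc_i = torch.nonzero(max_ious >= iou_threshold).reshape(-1)
    box_j = indices[max_ious >= iou_threshold]
    anchors_bbox_map[anc_i] = box_j
    # Greedy step: every ground-truth box gets its best remaining anchor
    col_discard = torch.full((num_anchors,), -1.0, device=device)
    row_discard = torch.full((num_gt_boxes,), -1.0, device=device)
    for _ in range(num_gt_boxes):
        max_idx = torch.argmax(jaccard)  # index into the flattened matrix
        box_idx = (max_idx % num_gt_boxes).long()
        anc_idx = torch.div(max_idx, num_gt_boxes, rounding_mode='floor')
        anchors_bbox_map[anc_idx] = box_idx
        # Discard this column and row so they cannot be picked again
        jaccard[:, box_idx] = col_discard
        jaccard[anc_idx, :] = row_discard
    return anchors_bbox_map
```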

4 Labeling classes and offsets

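```python
def offset_boxes(anchors, assigned_bb, eps=1e-6):
    """Compute offset labels of anchor boxes relative to their assigned boxes."""
    # Convert (xmin, ymin, xmax, ymax) to (center_x, center_y, width, height)
    c_anc = d2l.box_corner_to_center(anchors)
    c_assigned_bb = d2l.box_corner_to_center(assigned_bb)
    # Center offsets, normalized by the anchor's width/height and scaled by 10
    offset_xy = 10 * (c_assigned_bb[:, :2] - c_anc[:, :2]) / c_anc[:, 2:]
    # Log size ratios scaled by 5; eps avoids log(0) for unassigned anchors
    offset_wh = 5 * torch.log(eps + c_assigned_bb[:, 2:] / c_anc[:, 2:])
    offset = torch.cat([offset_xy, offset_wh], dim=1)
    return offset
```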
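Written out, ignoring the small `eps`, `offset_boxes` labels an anchor with center $(x_a, y_a)$, width $w_a$ and height $h_a$, whose assigned box has center $(x_b, y_b)$, width $w_b$ and height $h_b$, with the four offsets below. The factors 10 and 5 come straight from the code; in d2l they correspond to normalizing with zero means and standard deviations 0.1 (centers) and 0.2 (log sizes).

$$
(t_x, t_y, t_w, t_h) = \left(
\frac{10\,(x_b - x_a)}{w_a},\quad
\frac{10\,(y_b - y_a)}{h_a},\quad
5 \log \frac{w_b}{w_a},\quad
5 \log \frac{h_b}{h_a}
\right)
$$

Inverting each component gives $x_b = x_a + w_a t_x / 10$ and $w_b = w_a \exp(t_w / 5)$, and likewise for $y$ and $h$, which is what `offset_inverse` in step 5 computes.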

```python
def multibox_target(anchors, labels):
    """Label anchor boxes using ground-truth bounding boxes.

    anchors: (1, num_anchors, 4); labels: (batch_size, num_gt, 5), where each
    row is (class, xmin, ymin, xmax, ymax).
    """
    batch_size, anchors = labels.shape[0], anchors.squeeze(0)
    batch_offset, batch_mask, batch_class_labels = [], [], []
    device, num_anchors = anchors.device, anchors.shape[0]
    for i in range(batch_size):
        label = labels[i, :, :]
        anchors_bbox_map = assign_anchor_to_bbox(label[:, 1:], anchors,
                                                 device)
        # Mask is 1 for anchors assigned to an object, 0 for background
        bbox_mask = ((anchors_bbox_map >= 0).float().unsqueeze(-1)).repeat(
            1, 4)
        # Class labels and assigned boxes start at zero (class 0 = background)
        class_labels = torch.zeros(num_anchors, dtype=torch.long,
                                   device=device)
        assigned_bb = torch.zeros((num_anchors, 4), dtype=torch.float32,
                                  device=device)
        # Assigned anchors: shift the class id by 1 and copy the box
        indices_true = torch.nonzero(anchors_bbox_map >= 0)
        bb_idx = anchors_bbox_map[indices_true]
        class_labels[indices_true] = label[bb_idx, 0].long() + 1
        assigned_bb[indices_true] = label[bb_idx, 1:]
        # The mask zeroes out the offsets of background anchors
        offset = offset_boxes(anchors, assigned_bb) * bbox_mask
        batch_offset.append(offset.reshape(-1))
        batch_mask.append(bbox_mask.reshape(-1))
        batch_class_labels.append(class_labels)
    bbox_offset = torch.stack(batch_offset)
    bbox_mask = torch.stack(batch_mask)
    class_labels = torch.stack(batch_class_labels)
    return (bbox_offset, bbox_mask, class_labels)
```

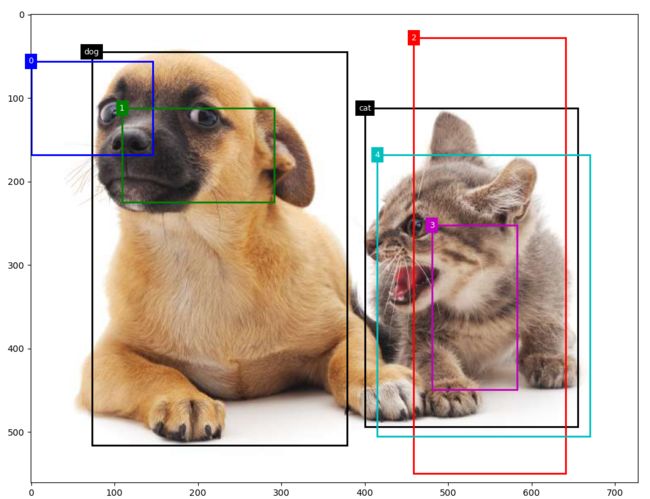

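Define two ground-truth bounding boxes (each row is class, xmin, ymin, xmax, ymax; class 0 is the dog and class 1 the cat) and five anchor boxes, then draw them on the image: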

```python
# Each row: (class, xmin, ymin, xmax, ymax), coordinates normalized to [0, 1]
ground_truth = torch.tensor([[0, 0.1, 0.08, 0.52, 0.92],
                             [1, 0.55, 0.2, 0.9, 0.88]])
anchors = torch.tensor([[0, 0.1, 0.2, 0.3], [0.15, 0.2, 0.4, 0.4],
                        [0.63, 0.05, 0.88, 0.98], [0.66, 0.45, 0.8, 0.8],
                        [0.57, 0.3, 0.92, 0.9]])

fig = d2l.plt.imshow(img)
show_bboxes(fig.axes, ground_truth[:, 1:] * bbox_scale, ['dog', 'cat'], 'k')
show_bboxes(fig.axes, anchors * bbox_scale, ['0', '1', '2', '3', '4'])
d2l.plt.show()
```
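The class labels that `multibox_target(anchors.unsqueeze(0), ground_truth.unsqueeze(0))` should return (its third output) for these five anchors can be worked out by hand. This plain-Python sketch recomputes the IoU matrix and applies the same two rules as `assign_anchor_to_bbox` (greedy matching, then a 0.5 threshold). It reimplements those steps instead of calling the torch functions in this post, so treat it as a cross-check; the list names are chosen so as not to overwrite the torch tensors above.

```python
def iou(a, b):
    """IoU of two boxes in corner format (xmin, ymin, xmax, ymax)."""
    w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = w * h
    area = lambda c: (c[2] - c[0]) * (c[3] - c[1])
    return inter / (area(a) + area(b) - inter)


# Same values as ground_truth / anchors above (class id first for ground truth)
gt_list = [(0, 0.1, 0.08, 0.52, 0.92), (1, 0.55, 0.2, 0.9, 0.88)]
anchor_list = [(0, 0.1, 0.2, 0.3), (0.15, 0.2, 0.4, 0.4),
               (0.63, 0.05, 0.88, 0.98), (0.66, 0.45, 0.8, 0.8),
               (0.57, 0.3, 0.92, 0.9)]

# IoU matrix: one row per anchor, one column per ground-truth box
jaccard = [[iou(a, g[1:]) for g in gt_list] for a in anchor_list]

assigned = [-1] * len(anchor_list)
free_anchors, free_boxes = set(range(len(anchor_list))), set(range(len(gt_list)))
# Greedy step: each ground-truth box takes the best remaining anchor
for _ in range(len(gt_list)):
    i, j = max(((i, j) for i in free_anchors for j in free_boxes),
               key=lambda ij: jaccard[ij[0]][ij[1]])
    assigned[i] = j
    free_anchors.discard(i)
    free_boxes.discard(j)
# Threshold step: leftover anchors take their best box if IoU >= 0.5
for i in free_anchors:
    j = max(range(len(gt_list)), key=lambda j: jaccard[i][j])
    if jaccard[i][j] >= 0.5:
        assigned[i] = j

# Class labels as multibox_target builds them: 0 = background, else class + 1
class_labels = [0 if j < 0 else gt_list[j][0] + 1 for j in assigned]
print(class_labels)  # [0, 1, 2, 0, 2]
```

Anchor 1 is labeled dog only through the greedy step (its IoU with the dog is about 0.14), while anchor 2 reaches the cat through the threshold step (IoU about 0.57).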

5 Applying the inverse offset transform to obtain predicted bounding-box coordinates

```python
def offset_inverse(anchors, offset_preds):
    """Predict bounding boxes from anchor boxes and predicted offsets."""
    anc = d2l.box_corner_to_center(anchors)
    # Undo the scaling used in offset_boxes: /10 for centers, /5 for log sizes
    pred_bbox_xy = (offset_preds[:, :2] * anc[:, 2:] / 10) + anc[:, :2]
    pred_bbox_wh = torch.exp(offset_preds[:, 2:] / 5) * anc[:, 2:]
    pred_bbox = torch.cat((pred_bbox_xy, pred_bbox_wh), dim=1)
    # Back to corner format (xmin, ymin, xmax, ymax)
    predicted_bbox = d2l.box_center_to_corner(pred_bbox)
    return predicted_bbox
```
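To check that this transform really undoes `offset_boxes`, here is a plain-Python sketch mirroring both formulas (without `eps`). The helpers `corner_to_center`, `center_to_corner`, `encode` and `decode` are hypothetical stand-ins for the d2l/torch versions, and the anchor and box reuse anchor 4 and the cat box from the example in step 4.

```python
import math


def corner_to_center(b):
    """(xmin, ymin, xmax, ymax) -> (cx, cy, w, h)."""
    return ((b[0] + b[2]) / 2, (b[1] + b[3]) / 2, b[2] - b[0], b[3] - b[1])


def center_to_corner(c):
    """(cx, cy, w, h) -> (xmin, ymin, xmax, ymax)."""
    return (c[0] - c[2] / 2, c[1] - c[3] / 2, c[0] + c[2] / 2, c[1] + c[3] / 2)


def encode(anchor, box):
    """Same scaling as offset_boxes: 10 for centers, 5 for log sizes."""
    xa, ya, wa, ha = corner_to_center(anchor)
    xb, yb, wb, hb = corner_to_center(box)
    return (10 * (xb - xa) / wa, 10 * (yb - ya) / ha,
            5 * math.log(wb / wa), 5 * math.log(hb / ha))


def decode(anchor, offset):
    """Inverse transform, mirroring offset_inverse."""
    xa, ya, wa, ha = corner_to_center(anchor)
    cx = offset[0] * wa / 10 + xa
    cy = offset[1] * ha / 10 + ya
    w = math.exp(offset[2] / 5) * wa
    h = math.exp(offset[3] / 5) * ha
    return center_to_corner((cx, cy, w, h))


anchor = (0.57, 0.3, 0.92, 0.9)
box = (0.55, 0.2, 0.9, 0.88)
recovered = decode(anchor, encode(anchor, box))
print([round(v, 6) for v in recovered])  # [0.55, 0.2, 0.9, 0.88]
```

An anchor that already matches its box encodes to all-zero offsets, which is why the prediction example with zero offsets returns the anchors themselves.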

6 NMS

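```python
def nms(boxes, scores, iou_threshold):
    """Non-maximum suppression: return indices of kept boxes, highest score first."""
    # Box indices sorted by confidence, highest first
    B = torch.argsort(scores, dim=-1, descending=True)
    keep = []  # indices of the boxes that are kept
    while B.numel() > 0:
        i = B[0]
        keep.append(i)
        if B.numel() == 1:
            break
        iou = box_iou(boxes[i, :].reshape(-1, 4),
                      boxes[B[1:], :].reshape(-1, 4)).reshape(-1)
        # Keep the remaining boxes whose IoU with box i is <= the threshold;
        # +1 because iou was computed against B[1:]
        inds = torch.nonzero(iou <= iou_threshold).reshape(-1)
        B = B[inds + 1]
    return torch.tensor(keep, device=boxes.device)
```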

7 Applying non-maximum suppression to predicted bounding boxes

```python
def multibox_detection(cls_probs, offset_preds, anchors, nms_threshold=0.5,
                       pos_threshold=0.009999999):
    """Predict bounding boxes using non-maximum suppression.

    Returns (batch_size, num_anchors, 6); each row is
    (class_id, confidence, xmin, ymin, xmax, ymax), with class_id -1 for
    background or boxes removed by NMS.
    """
    device, batch_size = cls_probs.device, cls_probs.shape[0]
    anchors = anchors.squeeze(0)
    num_classes, num_anchors = cls_probs.shape[1], cls_probs.shape[2]
    out = []
    for i in range(batch_size):
        cls_prob, offset_pred = cls_probs[i], offset_preds[i].reshape(-1, 4)
        # Highest non-background probability and its class (row 0 = background)
        conf, class_id = torch.max(cls_prob[1:], 0)
        predicted_bb = offset_inverse(anchors, offset_pred)
        keep = nms(predicted_bb, conf, nms_threshold)

        # Indices that appear once in (keep, all_idx) are those not kept
        all_idx = torch.arange(num_anchors, dtype=torch.long, device=device)
        combined = torch.cat((keep, all_idx))
        uniques, counts = combined.unique(return_counts=True)
        non_keep = uniques[counts == 1]
        all_id_sorted = torch.cat((keep, non_keep))
        class_id[non_keep] = -1
        class_id = class_id[all_id_sorted]
        conf, predicted_bb = conf[all_id_sorted], predicted_bb[all_id_sorted]
        # Below pos_threshold: treat as background and store 1 - conf
        below_min_idx = (conf < pos_threshold)
        class_id[below_min_idx] = -1
        conf[below_min_idx] = 1 - conf[below_min_idx]
        pred_info = torch.cat(
            (class_id.unsqueeze(1), conf.unsqueeze(1), predicted_bb), dim=1)
        out.append(pred_info)
    return torch.stack(out)
```
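The bookkeeping after NMS can be mirrored in plain Python: the `unique(return_counts=True)` step finds the indices that occur only once in `torch.cat((keep, all_idx))`, that is, the anchors not in `keep`. A sketch with a hypothetical `assemble_rows` helper, assuming per-anchor lists for `class_id`, `conf` and boxes and a `keep` list produced by NMS; the values are toy numbers.

```python
def assemble_rows(class_id, conf, boxes, keep, pos_threshold=0.009999999):
    """Rows of (class_id, confidence, xmin, ymin, xmax, ymax), kept boxes first."""
    kept = list(keep)
    # Indices not kept by NMS, ascending (what the unique/counts trick computes)
    non_keep = sorted(set(range(len(conf))) - set(kept))
    rows = []
    for idx in kept + non_keep:
        # Boxes suppressed by NMS get class -1
        cid = -1 if idx in non_keep else class_id[idx]
        c = conf[idx]
        # Too unconfident: treat as background and store 1 - conf
        if c < pos_threshold:
            cid, c = -1, 1 - c
        rows.append([cid, c, *boxes[idx]])
    return rows


toy_boxes = [(0.1, 0.08, 0.52, 0.92), (0.08, 0.2, 0.56, 0.95),
             (0.15, 0.3, 0.62, 0.91), (0.55, 0.2, 0.9, 0.88)]
rows = assemble_rows(class_id=[0, 0, 0, 1], conf=[0.9, 0.8, 0.7, 0.9],
                     boxes=toy_boxes, keep=[0, 3])
for row in rows:
    print(row)
```

Rows with class_id -1 would typically be skipped when drawing, so only one box per object remains visible.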

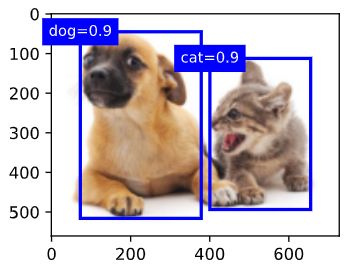

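In the final output, only the highest-confidence predicted box for each object is kept; the suppressed boxes are marked with class -1.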