HBase: Architecture Principles and Cluster Setup

HBase Architecture

High-Level Architecture

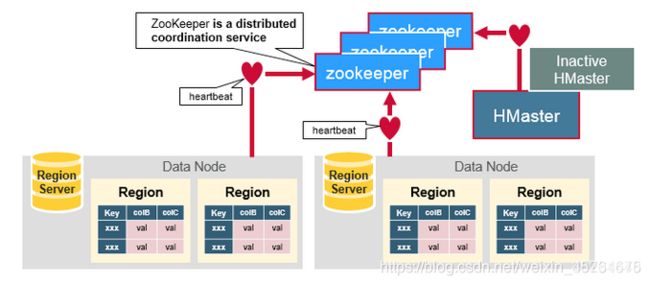

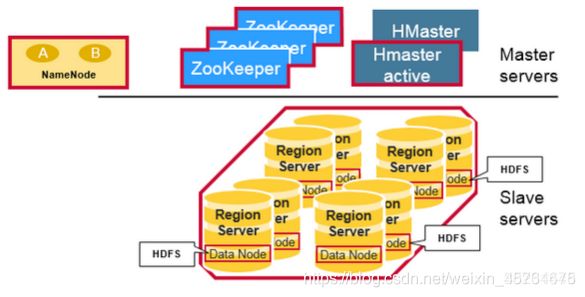

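HBase builds its clusters on a Master/Slave architecture and belongs to the Hadoop ecosystem. A cluster consists of the following node types: HMaster nodes, HRegionServer nodes, and a ZooKeeper cluster. At the storage layer, HBase keeps its data in HDFS, so the HDFS NameNodes and DataNodes are involved as well. The overall structure is as follows:

Physically, HBase consists of three types of servers arranged in a master/slave architecture. RegionServers serve data for reads and writes; when accessing data, clients communicate with the RegionServers directly. Region assignment and DDL operations (creating and deleting tables) are handled by the HMaster. ZooKeeper maintains the live cluster state. It is a separate Apache project in the Hadoop ecosystem, not part of HDFS. Hadoop DataNodes store the data that the RegionServers manage, and all HBase data is stored in HDFS files. RegionServers are co-located with HDFS DataNodes, which gives the data served by each RegionServer data locality. HBase data is local when it is written. When a region is moved (for example, after a server failure or during load balancing), its data is not local until a compaction rewrites the files on the new RegionServer. The NameNode maintains metadata for all the physical data blocks that make up the files.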

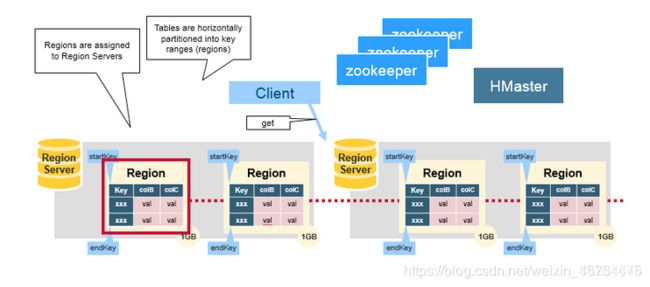

Regions

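HBase tables are divided horizontally by RowKey range into "Regions". A region contains all rows of the table between the region's start key (inclusive) and end key (exclusive). Regions are assigned to cluster nodes called "RegionServers", which serve data for reads and writes. A RegionServer can serve roughly 1,000 regions.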
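The key-range rule can be made concrete with a small sketch of how a row key maps to a region. It assumes a hypothetical in-memory list of regions sorted by start key. A real HBase client looks this information up in the hbase:meta table and caches it. The `Region` class, the `locate_region` function, the key boundaries, and the host assignment are all illustrative.

```python
import bisect
from dataclasses import dataclass


@dataclass(frozen=True)
class Region:
    start_key: bytes  # inclusive; b"" means "from the first row of the table"
    end_key: bytes    # exclusive; b"" means "through the last row of the table"
    server: str       # RegionServer currently hosting this region (hypothetical)


def locate_region(regions, row_key):
    """Return the region whose [start_key, end_key) range contains row_key.

    `regions` must be sorted by start_key and together cover the whole key space.
    """
    starts = [r.start_key for r in regions]
    # The rightmost region whose start key is <= row_key is the only candidate.
    idx = bisect.bisect_right(starts, row_key) - 1
    region = regions[idx]
    if region.end_key and row_key >= region.end_key:
        raise KeyError(f"no region covers {row_key!r}")
    return region


# Hypothetical table split into three regions, one per RegionServer.
regions = [
    Region(b"", b"g", "CentOSA"),
    Region(b"g", b"p", "CentOSB"),
    Region(b"p", b"", "CentOSC"),
]

for key in (b"apple", b"g", b"zebra"):
    print(key, "->", locate_region(regions, key).server)
```

A binary search works here because the regions are contiguous and non-overlapping. The region whose start key is the largest one not greater than the row key must be the region that contains that row.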

HBase HMaster

Region assignment and DDL operations (creating and deleting tables) are handled by the HBase Master.

The HBase Master is mainly responsible for:

- Coordinating the RegionServers
  - Assigning regions on startup, re-assigning regions for recovery or load balancing
  - Monitoring all RegionServer instances in the cluster (listens for notifications from ZooKeeper)
- Admin functions
  - Interface for creating, deleting, and updating tables

ZooKeeper

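ZooKeeper provides coordination services for the HBase cluster. It tracks the state (available/alive, etc.) of the HMasters and HRegionServers and notifies the HMaster when one of them goes down. The HMaster can then fail over between HMasters, or recover the HRegions of a failed HRegionServer by reassigning them to other HRegionServers. The ZooKeeper ensemble itself keeps the state of its nodes consistent using its own consensus protocol, ZAB (ZooKeeper Atomic Broadcast), which is similar in spirit to Paxos.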
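Like Paxos, ZAB only makes progress while a strict majority (a quorum) of the ensemble is reachable. The arithmetic below is a small illustrative sketch, not ZooKeeper code. It shows why the three-node ensemble used later in this guide tolerates one failure, and why adding a fourth node would not improve on that.

```python
def quorum_size(ensemble_size: int) -> int:
    """Smallest number of servers that forms a strict majority."""
    return ensemble_size // 2 + 1


def tolerated_failures(ensemble_size: int) -> int:
    """How many servers can fail while a majority is still reachable."""
    return ensemble_size - quorum_size(ensemble_size)


for n in (1, 2, 3, 4, 5):
    print(f"{n} servers: quorum={quorum_size(n)}, tolerates {tolerated_failures(n)} failure(s)")
```

Even-sized ensembles add a machine without adding fault tolerance, which is why odd sizes such as 3 or 5 are the usual choice.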

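Summary

ZooKeeper is used to coordinate shared state information among the members of a distributed system. The RegionServers and the active HMaster connect to ZooKeeper through sessions, and ZooKeeper maintains ephemeral nodes for the active sessions via heartbeats.

Each RegionServer creates an ephemeral node. The HMaster monitors these nodes to discover available RegionServers and to detect server failures. The HMasters compete to create an ephemeral node. ZooKeeper determines which Master created it first and uses this to ensure that only one Master is active. The active HMaster sends heartbeats to ZooKeeper, while the inactive HMasters listen for notifications that the active HMaster has failed. If a RegionServer or the active HMaster fails to send heartbeats, its session expires and the corresponding ephemeral node is deleted. Listeners registered for updates are then notified of the deleted node. The active HMaster listens for RegionServers and recovers them on failure by reassigning their regions. The inactive HMasters listen for failure of the active HMaster; if it fails, one of the inactive HMasters becomes active.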
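The ephemeral-node election described above can be simulated in a few lines. The following is a toy, single-process model under stated assumptions. `FakeZooKeeper`, `start_master`, and the simplified "first creator wins, the others watch" rule are hypothetical illustrations. They are not the ZooKeeper client API or HBase's actual election code, and the `/hbase/master` path is chosen only to resemble HBase's default znode layout.

```python
from collections import defaultdict


class FakeZooKeeper:
    """Toy in-memory stand-in for ZooKeeper ephemeral nodes and watches.

    Hypothetical and heavily simplified: no real sessions, heartbeats or network.
    """

    def __init__(self):
        self.nodes = {}                    # path -> owning session name
        self.watchers = defaultdict(list)  # path -> callbacks fired on deletion

    def create_ephemeral(self, path, session):
        """Create `path` owned by `session`; return False if it already exists."""
        if path in self.nodes:
            return False
        self.nodes[path] = session
        return True

    def watch(self, path, callback):
        self.watchers[path].append(callback)

    def expire_session(self, session):
        """Simulate missed heartbeats: delete the session's ephemeral nodes."""
        for path in [p for p, owner in self.nodes.items() if owner == session]:
            del self.nodes[path]
            # Watches are one-shot: take the current list and reset it first.
            callbacks, self.watchers[path] = self.watchers[path], []
            for callback in callbacks:
                callback(path)


MASTER_PATH = "/hbase/master"


def start_master(zk, name):
    """Try to become the active master; otherwise wait as a backup and retry."""
    def try_acquire(_path=None):
        if zk.create_ephemeral(MASTER_PATH, name):
            print(f"{name} is now the active HMaster")
        else:
            zk.watch(MASTER_PATH, try_acquire)

    try_acquire()


zk = FakeZooKeeper()
for host in ("CentOSA", "CentOSB", "CentOSC"):
    start_master(zk, host)

zk.expire_session("CentOSA")  # the active master stops sending heartbeats
print("active:", zk.nodes[MASTER_PATH])
```

When the active master's session expires, every backup is notified. Only the first backup to recreate the node wins; the other re-registers its watch and stays a backup.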

HBase HA Setup

![image 4](assets/1578474400154.png)

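Basic Configuration

1. Synchronize the clocks of all physical hosts; otherwise the cluster setup will fail.

[root@CentOSX ~]# yum install -y ntp

[root@CentOSX ~]# ntpdate time.apple.com

[root@CentOSX ~]# clock -w

HBase servers use heartbeats to determine whether the other servers are running normally. For this reason, make sure the clocks of all physical hosts are synchronized when you set them up.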
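As I understand HBase 1.x, the HMaster compares each RegionServer's reported time with its own when the RegionServer starts. If the difference exceeds `hbase.master.maxclockskew` (assumed default: 30000 ms), it rejects the RegionServer with a ClockOutOfSyncException. The following sketch of that comparison uses hypothetical helper names and sample clock readings.

```python
# Assumed default of hbase.master.maxclockskew in HBase 1.x (milliseconds).
DEFAULT_MAX_CLOCK_SKEW_MS = 30_000


def out_of_sync_hosts(master_ms, host_clocks_ms, max_skew_ms=DEFAULT_MAX_CLOCK_SKEW_MS):
    """Return hosts whose clock differs from the master's by more than max_skew_ms."""
    return sorted(
        host for host, ms in host_clocks_ms.items()
        if abs(ms - master_ms) > max_skew_ms
    )


master = 1_587_000_000_000  # hypothetical master clock reading (epoch ms)
clocks = {
    "CentOSA": master,
    "CentOSB": master + 2_000,   # 2 s ahead: within the limit
    "CentOSC": master - 45_000,  # 45 s behind: would be rejected
}
print(out_of_sync_hosts(master, clocks))
```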

2. To make sure the servers can communicate with each other, you usually need to configure the following:

- Hostname-to-IP mappings

[root@CentOSX ~]# cat /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

192.168.186.152 CentOSA

192.168.186.153 CentOSB

192.168.186.154 CentOSC
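A quick sanity check is that every cluster hostname has an entry and that none of them resolves to a loopback address. A hostname mapped to 127.x is a common source of trouble, because the other nodes cannot reach that address. The following minimal sketch parses hosts-file text; the helper names are hypothetical, and the sample text mirrors the file above.

```python
SAMPLE_HOSTS = """\
127.0.0.1   localhost localhost.localdomain localhost4 localhost4.localdomain4
::1         localhost localhost.localdomain localhost6 localhost6.localdomain6
192.168.186.152 CentOSA
192.168.186.153 CentOSB
192.168.186.154 CentOSC
"""

CLUSTER_HOSTS = ["CentOSA", "CentOSB", "CentOSC"]


def parse_hosts(text):
    """Map every hostname/alias in /etc/hosts-style text to its IP address."""
    mapping = {}
    for raw in text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments and surrounding blanks
        if not line:
            continue
        ip, *names = line.split()
        for name in names:
            mapping.setdefault(name, ip)  # keep the first match for each name
    return mapping


def check_cluster_hosts(mapping, hosts):
    """Return (missing, loopback): hosts with no entry, and hosts mapped to 127.x."""
    missing = [h for h in hosts if h not in mapping]
    loopback = [h for h in hosts if mapping.get(h, "").startswith("127.")]
    return missing, loopback


# On a real node you could pass open("/etc/hosts").read() instead of SAMPLE_HOSTS.
mapping = parse_hosts(SAMPLE_HOSTS)
print(check_cluster_hosts(mapping, CLUSTER_HOSTS))
```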

- Disable the firewall

[root@CentOSX ~]# systemctl stop firewalld

[root@CentOSX ~]# systemctl disable firewalld

[root@CentOSX ~]# firewall-cmd --state

not running

3. Install the JDK on all physical hosts

[root@CentOSX ~]# rpm -ivh jdk-8u171-linux-x64.rpm

[root@CentOSX ~]# vi .bashrc

JAVA_HOME=/usr/java/latest/

CLASSPATH=.

PATH=$PATH:$JAVA_HOME/bin

export JAVA_HOME

export CLASSPATH

export PATH

[root@CentOSX ~]# source .bashrc

ZooKeeper Cluster

1. Upload the ZooKeeper installation package and extract it to /usr

[root@CentOSX ~]# tar -zxf zookeeper-3.4.12.tar.gz -C /usr/

2. Configure ZooKeeper's zoo.cfg


[root@CentOSX ~]# cd /usr/zookeeper-3.4.12/

[root@CentOSX zookeeper-3.4.12]# cp conf/zoo_sample.cfg conf/zoo.cfg

[root@CentOSX zookeeper-3.4.12]# vi conf/zoo.cfg

# The number of milliseconds of each tick

tickTime=2000

# The number of ticks that the initial

# synchronization phase can take

initLimit=10

# The number of ticks that can pass between

# sending a request and getting an acknowledgement

syncLimit=5

# the directory where the snapshot is stored.

# do not use /tmp for storage, /tmp here is just

# example sakes.

dataDir=/root/zkdata

# the port at which the clients will connect

clientPort=2181

# the maximum number of client connections.

# increase this if you need to handle more clients

#maxClientCnxns=60

#

# Be sure to read the maintenance section of the

# administrator guide before turning on autopurge.

#

# http://zookeeper.apache.org/doc/current/zookeeperAdmin.html#sc_maintenance

#

# The number of snapshots to retain in dataDir

#autopurge.snapRetainCount=3

# Purge task interval in hours

# Set to "0" to disable auto purge feature

#autopurge.purgeInterval=1

server.1=CentOSA:2888:3888

server.2=CentOSB:2888:3888

server.3=CentOSC:2888:3888
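In zoo.cfg, `tickTime` is the base time unit, and `initLimit` and `syncLimit` are counted in ticks. With the values above, followers get 10 × 2000 ms = 20 s to connect and sync to the leader, and may fall at most 5 × 2000 ms = 10 s behind it. In `server.N=host:port1:port2`, followers use the first port to connect to the leader, and the second port is used for leader election. ZooKeeper's default client session timeout is bounded to between 2 and 20 ticks. The following sketch uses a hypothetical parser (not part of ZooKeeper) to print those derived values.

```python
def parse_zoo_cfg(text):
    """Parse key=value lines of a zoo.cfg, ignoring comments and blank lines."""
    conf = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        key, _, value = line.partition("=")
        conf[key.strip()] = value.strip()
    return conf


cfg = parse_zoo_cfg("""
tickTime=2000
initLimit=10
syncLimit=5
dataDir=/root/zkdata
clientPort=2181
server.1=CentOSA:2888:3888
server.2=CentOSB:2888:3888
server.3=CentOSC:2888:3888
""")

tick_ms = int(cfg["tickTime"])
print("followers must connect and sync within", int(cfg["initLimit"]) * tick_ms, "ms")
print("followers may lag the leader by at most", int(cfg["syncLimit"]) * tick_ms, "ms")
print("default session timeout range:", 2 * tick_ms, "-", 20 * tick_ms, "ms")

for key, value in sorted(cfg.items()):
    if key.startswith("server."):
        host, quorum_port, election_port = value.split(":")
        print(f"id {key.split('.')[1]}: {host} (quorum {quorum_port}, election {election_port})")
```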

3. Create the ZooKeeper data directory

[root@CentOSX ~]# mkdir /root/zkdata

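4. On CentOSA, CentOSB, and CentOSC, create a myid file in ZooKeeper's dataDir. Its content must match the N of that host's server.N line in zoo.cfg.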

[root@CentOSA ~]# echo 1 > /root/zkdata/myid

[root@CentOSB ~]# echo 2 > /root/zkdata/myid

[root@CentOSC ~]# echo 3 > /root/zkdata/myid
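The myid value can be derived from zoo.cfg instead of being typed by hand on each host. The following minimal sketch uses hypothetical helpers: `myid_for` looks up the `server.N` entry for a hostname, and `write_myid` writes the file. It uses a temporary directory as a stand-in for `/root/zkdata`.

```python
import tempfile
from pathlib import Path

SERVERS = """\
server.1=CentOSA:2888:3888
server.2=CentOSB:2888:3888
server.3=CentOSC:2888:3888
"""


def myid_for(zoo_cfg_text, hostname):
    """Return the N from the server.N line whose host matches `hostname`."""
    for line in zoo_cfg_text.splitlines():
        line = line.strip()
        if line.startswith("server.") and "=" in line:
            key, value = line.split("=", 1)
            if value.split(":")[0] == hostname:
                return int(key.split(".", 1)[1])
    raise LookupError(f"{hostname} is not listed in zoo.cfg")


def write_myid(data_dir, myid):
    """Write the id the same way `echo N > dataDir/myid` would."""
    Path(data_dir, "myid").write_text(f"{myid}\n")


with tempfile.TemporaryDirectory() as data_dir:  # stand-in for /root/zkdata
    write_myid(data_dir, myid_for(SERVERS, "CentOSB"))
    print(Path(data_dir, "myid").read_text().strip())
```

On a real node you would pass `socket.gethostname()` as the hostname, provided it matches the names used in zoo.cfg.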

5. Start the ZooKeeper service on all three hosts

[root@CentOSX ~]# cd /usr/zookeeper-3.4.12/

[root@CentOSX zookeeper-3.4.12]# ./bin/zkServer.sh start zoo.cfg

ZooKeeper JMX enabled by default

Using config: /usr/zookeeper-3.4.12/bin/../conf/zoo.cfg

Starting zookeeper ... STARTED

[root@CentOSX zookeeper-3.4.12]# ./bin/zkServer.sh status zoo.cfg

ZooKeeper JMX enabled by default

Using config: /usr/zookeeper-3.4.12/bin/../conf/zoo.cfg

Mode: leader|follower

HDFS-HA

1. Extract the Hadoop package to /usr and configure HADOOP_HOME

[root@CentOSX ~]# tar -zxf hadoop-2.9.2.tar.gz -C /usr/

[root@CentOSX ~]# vi .bashrc

HADOOP_HOME=/usr/hadoop-2.9.2

JAVA_HOME=/usr/java/latest/

CLASSPATH=.

PATH=$PATH:$JAVA_HOME/bin:$HADOOP_HOME/bin:$HADOOP_HOME/sbin

export JAVA_HOME

export CLASSPATH

export PATH

export HADOOP_HOME

[root@CentOSX ~]# source .bashrc

[root@CentOSX ~]# hadoop classpath

/usr/hadoop-2.9.2/etc/hadoop:/usr/hadoop-2.9.2/share/hadoop/common/lib/*:/usr/hadoop-2.9.2/share/hadoop/common/*:/usr/hadoop-2.9.2/share/hadoop/hdfs:/usr/hadoop-2.9.2/share/hadoop/hdfs/lib/*:/usr/hadoop-2.9.2/share/hadoop/hdfs/*:/usr/hadoop-2.9.2/share/hadoop/yarn:/usr/hadoop-2.9.2/share/hadoop/yarn/lib/*:/usr/hadoop-2.9.2/share/hadoop/yarn/*:/usr/hadoop-2.9.2/share/hadoop/mapreduce/lib/*:/usr/hadoop-2.9.2/share/hadoop/mapreduce/*:/usr/hadoop-2.9.2/contrib/capacity-scheduler/*.jar

2. Configure core-site.xml

<property>
  <name>fs.defaultFS</name>
  <value>hdfs://mycluster</value>
</property>
<property>
  <name>hadoop.tmp.dir</name>
  <value>/usr/hadoop-2.9.2/hadoop-${user.name}</value>
</property>
<property>
  <name>ha.zookeeper.quorum</name>
  <value>CentOSA:2181,CentOSB:2181,CentOSC:2181</value>
</property>
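Hand-editing these files is error-prone, because a single unclosed tag stops the daemons from parsing their configuration. One way to avoid that is to generate the `<configuration>` document from a dictionary. The following sketch uses a hypothetical helper, `hadoop_config_xml`, built on Python's standard `xml.etree.ElementTree` (`ET.indent` needs Python 3.9+). The same helper works for hdfs-site.xml and hbase-site.xml. Hadoop itself only reads the resulting file and expands `${user.name}` on its own.

```python
import xml.etree.ElementTree as ET


def hadoop_config_xml(properties):
    """Render a dict of name -> value as a Hadoop-style <configuration> document."""
    root = ET.Element("configuration")
    for name, value in properties.items():
        prop = ET.SubElement(root, "property")
        ET.SubElement(prop, "name").text = name
        ET.SubElement(prop, "value").text = value
    ET.indent(root)  # pretty-print; available since Python 3.9
    return ET.tostring(root, encoding="unicode")


core_site = {
    "fs.defaultFS": "hdfs://mycluster",
    "hadoop.tmp.dir": "/usr/hadoop-2.9.2/hadoop-${user.name}",
    "ha.zookeeper.quorum": "CentOSA:2181,CentOSB:2181,CentOSC:2181",
}
print(hadoop_config_xml(core_site))
```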

3. Configure hdfs-site.xml

<property>
  <name>dfs.nameservices</name>
  <value>mycluster</value>
</property>
<property>
  <name>dfs.ha.namenodes.mycluster</name>
  <value>nn1,nn2</value>
</property>
<property>
  <name>dfs.namenode.rpc-address.mycluster.nn1</name>
  <value>CentOSA:9000</value>
</property>
<property>
  <name>dfs.namenode.rpc-address.mycluster.nn2</name>
  <value>CentOSB:9000</value>
</property>
<property>
  <name>dfs.namenode.shared.edits.dir</name>
  <value>qjournal://CentOSA:8485;CentOSB:8485;CentOSC:8485/mycluster</value>
</property>
<property>
  <name>dfs.journalnode.edits.dir</name>
  <value>/usr/hadoop-2.9.2/hadoop-journaldata</value>
</property>
<property>
  <name>dfs.ha.automatic-failover.enabled</name>
  <value>true</value>
</property>
<property>
  <name>dfs.client.failover.proxy.provider.mycluster</name>
  <value>org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider</value>
</property>
<property>
  <name>dfs.ha.fencing.methods</name>
  <value>sshfence</value>
</property>
<property>
  <name>dfs.ha.fencing.ssh.private-key-files</name>
  <value>/root/.ssh/id_rsa</value>
</property>
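The logical name `mycluster` is not a DNS name. With ConfiguredFailoverProxyProvider, an HDFS client reads `dfs.ha.namenodes.mycluster` and the matching `dfs.namenode.rpc-address.mycluster.*` keys, then tries those NameNodes in turn until it reaches the active one. The following sketch mimics only the lookup step. `namenode_addresses` is a hypothetical helper, not Hadoop code.

```python
from urllib.parse import urlparse


def namenode_addresses(conf, uri):
    """List the RPC addresses behind an HA nameservice URI such as hdfs://mycluster."""
    nameservice = urlparse(uri).netloc
    configured = [ns.strip() for ns in conf.get("dfs.nameservices", "").split(",")]
    if nameservice not in configured:
        raise ValueError(f"{nameservice!r} is not a configured nameservice")
    nn_ids = conf[f"dfs.ha.namenodes.{nameservice}"].split(",")
    return [conf[f"dfs.namenode.rpc-address.{nameservice}.{nn.strip()}"] for nn in nn_ids]


hdfs_site = {
    "dfs.nameservices": "mycluster",
    "dfs.ha.namenodes.mycluster": "nn1,nn2",
    "dfs.namenode.rpc-address.mycluster.nn1": "CentOSA:9000",
    "dfs.namenode.rpc-address.mycluster.nn2": "CentOSB:9000",
}
print(namenode_addresses(hdfs_site, "hdfs://mycluster"))
```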

4. Configure passwordless SSH login among the three hosts

[root@CentOSX ~]# ssh-keygen -t rsa

[root@CentOSX ~]# ssh-copy-id CentOSA

[root@CentOSX ~]# ssh-copy-id CentOSB

[root@CentOSX ~]# ssh-copy-id CentOSC

5. Initialize HDFS-HA

[root@CentOSX ~]# hadoop-daemon.sh start journalnode

[root@CentOSA ~]# hdfs namenode -format

[root@CentOSA ~]# hadoop-daemon.sh start namenode

[root@CentOSB ~]# hdfs namenode -bootstrapStandby

[root@CentOSB ~]# hadoop-daemon.sh start namenode

[root@CentOSA|B ~]# hdfs zkfc -formatZK

[root@CentOSA ~]# hadoop-daemon.sh start zkfc

[root@CentOSB ~]# hadoop-daemon.sh start zkfc

[root@CentOSX ~]# hadoop-daemon.sh start datanode

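Note: on CentOS 7 you must also install psmisc (yum install psmisc -y) for automatic failover to work. The sshfence fencing method relies on the fuser command, which psmisc provides.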

HBase-HA

1. Extract and configure HBase

[root@CentOSX ~]# tar -zxf hbase-1.2.4-bin.tar.gz -C /usr/

2. Configure the HBASE_HOME environment variable

[root@CentOSX ~]# vi .bashrc

HBASE_HOME=/usr/hbase-1.2.4

HADOOP_HOME=/usr/hadoop-2.9.2

JAVA_HOME=/usr/java/latest

CLASSPATH=.

PATH=$PATH:$JAVA_HOME/bin:$HADOOP_HOME/bin:$HADOOP_HOME/sbin:$HBASE_HOME/bin

export JAVA_HOME

export CLASSPATH

export PATH

export HADOOP_HOME

export HBASE_HOME

[root@CentOSX ~]# source .bashrc

[root@CentOSX ~]# hbase classpath # check that HBase picks up the Hadoop classpath

/usr/hbase-1.2.4/conf:/usr/java/latest/lib/tools.jar:/usr/hbase-1.2.4:/usr/hbase-1.2.4/lib/activation-1.1.jar:/usr/hbase-1.2.4/lib/aopalliance-1.0.jar:/usr/hbase-1.2.4/lib/apacheds-i18n-2.0.0-M15.jar:/usr/hbase-1.2.4/lib/apacheds-kerberos-codec-2.0.0-M15.jar:/usr/hbase-1.2.4/lib/api-asn1-api-1.0.0-M20.jar:/usr/hbase-1.2.4/lib/api-util-1.0.0-M20.jar:/usr/hbase-1.2.4/lib/asm-3.1.jar:/usr/hbase-1.2.4/lib/avro-

...

1.7.4.jar:/usr/hbase-1.2.4/lib/commons-beanutils-1.7.0.jar:/usr/hbase-1.2.4/lib/commons-

2.9.2/share/hadoop/yarn/*:/usr/hadoop-2.9.2/share/hadoop/mapreduce/lib/*:/usr/hadoop-2.9.2/share/hadoop/mapreduce/*:/usr/hadoop-2.9.2/contrib/capacity-scheduler/*.jar

3. Configure hbase-site.xml

[root@CentOSX ~]# cd /usr/hbase-1.2.4/

[root@CentOSX hbase-1.2.4]# vim conf/hbase-site.xml

<property>
  <name>hbase.rootdir</name>
  <value>hdfs://mycluster/hbase</value>
</property>
<property>
  <name>hbase.cluster.distributed</name>
  <value>true</value>
</property>
<property>
  <name>hbase.zookeeper.quorum</name>
  <value>CentOSA,CentOSB,CentOSC</value>
</property>
<property>
  <name>hbase.zookeeper.property.clientPort</name>
  <value>2181</value>
</property>
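Because `hbase.rootdir` points at the HA nameservice (`hdfs://mycluster`) rather than a single NameNode host, HBase should keep working after a NameNode failover. This requires HBase to see the HDFS client configuration (hdfs-site.xml) on its classpath. The following sketch implements my own sanity-check rule, not a documented HBase requirement: the rootdir should use the same filesystem authority as `fs.defaultFS`, and distributed mode must be on. `check_hbase_site` is a hypothetical helper.

```python
from urllib.parse import urlparse


def check_hbase_site(hbase_site, core_site):
    """Return human-readable problems; an empty list means the checks passed."""
    problems = []
    rootdir = urlparse(hbase_site.get("hbase.rootdir", ""))
    default_fs = urlparse(core_site.get("fs.defaultFS", ""))
    if rootdir.scheme != "hdfs":
        problems.append(f"hbase.rootdir should be an hdfs:// URI, got scheme {rootdir.scheme!r}")
    elif rootdir.netloc != default_fs.netloc:
        # e.g. hdfs://CentOSA:9000/hbase would bypass the HA nameservice
        problems.append(
            f"hbase.rootdir targets {rootdir.netloc!r} but fs.defaultFS is {default_fs.netloc!r}"
        )
    if hbase_site.get("hbase.cluster.distributed") != "true":
        problems.append("hbase.cluster.distributed must be 'true' for a fully distributed cluster")
    return problems


hbase_site = {
    "hbase.rootdir": "hdfs://mycluster/hbase",
    "hbase.cluster.distributed": "true",
    "hbase.zookeeper.quorum": "CentOSA,CentOSB,CentOSC",
}
core_site = {"fs.defaultFS": "hdfs://mycluster"}
print(check_hbase_site(hbase_site, core_site) or "hbase-site.xml looks consistent")
```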

4. Edit hbase-env.sh and set HBASE_MANAGES_ZK to false

[root@CentOSX ~]# cd /usr/hbase-1.2.4/

[root@CentOSX hbase-1.2.4]# grep -i HBASE_MANAGES_ZK conf/hbase-env.sh

# export HBASE_MANAGES_ZK=true

[root@CentOSX hbase-1.2.4]# vim conf/hbase-env.sh

export HBASE_MANAGES_ZK=false

[root@CentOSX hbase-1.2.4]# grep -i HBASE_MANAGES_ZK conf/hbase-env.sh

export HBASE_MANAGES_ZK=false

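Setting export HBASE_MANAGES_ZK=false tells HBase to use the external ZooKeeper cluster instead of starting and managing its own.

5. Edit conf/regionservers so that it lists one RegionServer host per line: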

CentOSA

CentOSB

CentOSC

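6. Start HBase (run both commands on every host; the first HMaster to register with ZooKeeper becomes active, and the others become backup masters)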

[root@CentOSX hbase-1.2.4]# ./bin/hbase-daemon.sh start master

[root@CentOSX hbase-1.2.4]# ./bin/hbase-daemon.sh start regionserver

7. Verify that HBase was installed successfully

- Web UI: http://192.168.186.152:16010/

- HBase shell (more reliable)

[root@CentOSB hbase-1.2.4]# ./bin/hbase shell

SLF4J: Class path contains multiple SLF4J bindings.

SLF4J: Found binding in [jar:file:/usr/hbase-1.2.4/lib/slf4j-log4j12-1.7.5.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/usr/hadoop-2.9.2/share/hadoop/common/lib/slf4j-log4j12-1.7.25.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.

SLF4J: Actual binding is of type [org.slf4j.impl.Log4jLoggerFactory]

HBase Shell; enter 'help' for list of supported commands.

Type "exit" to leave the HBase Shell

Version 1.2.4, rUnknown, Wed Feb 15 18:58:00 CST 2017

hbase(main):001:0> status

1 active master, 2 backup masters, 3 servers, 0 dead, 0.6667 average load
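To script the verification, for example as a smoke test after startup, you can parse the one-line summary that `status` prints once you have captured it from the shell. The following minimal sketch assumes that the output format shown above stays the same; `parse_status` and `looks_healthy` are hypothetical helpers. The average load is the mean number of regions per RegionServer. Here, 0.6667 across 3 servers means 2 regions, presumably the hbase:meta and hbase:namespace system tables of a fresh install.

```python
import re

STATUS_RE = re.compile(
    r"(?P<active>\d+) active masters?, (?P<backup>\d+) backup masters?, "
    r"(?P<servers>\d+) servers?, (?P<dead>\d+) dead, (?P<load>[\d.]+) average load"
)


def parse_status(line):
    """Parse the one-line summary printed by the HBase shell `status` command."""
    m = STATUS_RE.search(line)
    if m is None:
        raise ValueError(f"unrecognised status line: {line!r}")
    return {
        "active": int(m["active"]),
        "backup": int(m["backup"]),
        "servers": int(m["servers"]),
        "dead": int(m["dead"]),
        "average_load": float(m["load"]),
    }


def looks_healthy(status, expected_servers):
    """One active master, no dead RegionServers, and all expected RegionServers live."""
    return status["active"] == 1 and status["dead"] == 0 and status["servers"] == expected_servers


status = parse_status("1 active master, 2 backup masters, 3 servers, 0 dead, 0.6667 average load")
print(looks_healthy(status, expected_servers=3))
# Average load is regions per RegionServer, so this recovers the total region count.
print(round(status["average_load"] * status["servers"]))
```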
