Deep Learning Week 3 Programming Assignment (Planar Data Classification with One Hidden Layer)

Reference: https://blog.csdn.net/u013733326/article/details/79702148

1. Requirements

Build a neural network with one hidden layer that classifies planar (2D) data.

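2. Libraries used

| Library | Description |
|---|---|
| sklearn | Data mining and data analysis |
| matplotlib.pyplot | pyplot is a submodule of matplotlib used to draw 2D plots |
| numpy | Matrix computation |
| testCases | (custom module from Andrew Ng's course) test examples for checking that the functions are correct |
| planar_utils | (custom module from Andrew Ng's course) contains the raw dataset, activation functions, and other helpers |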

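3. Steps

- 1. Import the libraries
- 2. Inspect and visualize the dataset (for understanding only; not part of the final code)
- 3. Check how well logistic regression classifies the data (for understanding only; not part of the final code)
- 4. Build the neural network (define the network structure, initialize the model parameters, loop)
- 5. Define the functions (forward propagation, cost function, backward propagation, parameter update)
- 6. Model integration
- 7. Prediction
- 8. Test results
- 9. Change the number of hidden-layer nodes and test again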

4. Code implementation

1. Import the libraries:

# Import the libraries
import numpy as np
import matplotlib.pyplot as plt
from testCases import *
import sklearn
import sklearn.datasets
import sklearn.linear_model
from planar_utils import plot_decision_boundary, sigmoid, load_planar_dataset, load_extra_datasets

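2. Inspect and visualize the dataset (for understanding only; not part of the final code)

If you are not familiar with plt.scatter(), see my other article: https://blog.csdn.net/meini32/article/details/126510146

# Load the dataset and visualize it
# X holds the x/y coordinates of each point; Y holds its label, drawn as a color (red = 0, blue = 1)
X, Y = load_planar_dataset()
plt.scatter(X[0, :], X[1, :], c=np.squeeze(Y), s=40, cmap=plt.cm.Spectral)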

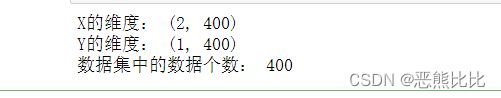

# Inspect the dataset
shape_X = X.shape
shape_Y = Y.shape
m = Y.shape[1]  # number of training examples
print("Shape of X:", str(shape_X))
print("Shape of Y:", str(shape_Y))
print("Number of examples in the dataset:", str(m))

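3. Check how well logistic regression classifies the data (for understanding only; not part of the final code)

About the sklearn library:

sklearn (scikit-learn) is a Python machine learning toolkit that covers both supervised and unsupervised learning. It is built on NumPy, SciPy, and Matplotlib, and bundles tools such as data preprocessing and feature selection.

- sklearn.datasets offers three main families of functions for obtaining data:
  - loaders: small, standard datasets that need no preprocessing and can be used for training directly; named load_*()
  - fetchers: download larger real-world datasets; named fetch_*()
  - generators: produce synthetic data; named make_*()
- sklearn.linear_model: provides linear models

For more on LogisticRegressionCV in sklearn and where it applies, see: https://blog.csdn.net/qq_41076797/article/details/102692799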

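# Logistic regression classifier
clf = sklearn.linear_model.LogisticRegressionCV()
clf.fit(X.T, Y.T.ravel())  # sklearn expects a 1-D label vector
plot_decision_boundary(lambda x: clf.predict(x), X, Y)  # plot the decision boundary
plt.title("Logistic Regression")
LR_predictions = clf.predict(X.T)  # logistic regression predictions
# Correct predictions = true positives + true negatives
correct = np.dot(Y, LR_predictions) + np.dot(1 - Y, 1 - LR_predictions)
print("Logistic regression accuracy: %d%% (percentage of correctly labeled data points)" % (float(np.squeeze(correct)) / Y.size * 100))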

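4. Define the neural network structure

Following the layer-size test case in the testCases module from Andrew Ng's course, the network structure is defined as:

# Neural network structure
def layer_sizes(X, Y):
    n_x = X.shape[0]  # input layer size
    n_y = Y.shape[0]  # output layer size
    n_h = 4           # hidden layer size
    return n_x, n_h, n_y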

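5. Initialize the model parameters

Goal: make sure every parameter has the correct shape.

# Initialize the model parameters
def initialize_parameters(n_x, n_h, n_y):
    # n_x, n_h, n_y: number of nodes in the input, hidden, and output layers
    # W, b: weight matrices and bias vectors
    np.random.seed(2)
    W1 = np.random.randn(n_h, n_x) * 0.01
    b1 = np.zeros(shape=(n_h, 1))
    W2 = np.random.randn(n_y, n_h) * 0.01
    b2 = np.zeros(shape=(n_y, 1))
    parameters = {'W1': W1, 'W2': W2, 'b1': b1, 'b2': b2}
    return parameters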
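As a quick sanity check on those shapes, the sketch below restates a minimal initializer and prints the shape of each array. The name `init_params`, the `seed` argument, and the layer sizes 2, 4, 1 are chosen here for illustration only. It follows the same scheme as above: small random weights and zero biases.

```python
import numpy as np

def init_params(n_x, n_h, n_y, seed=2):
    """Small random weights, zero biases (illustrative restatement)."""
    rng = np.random.RandomState(seed)
    return {
        "W1": rng.randn(n_h, n_x) * 0.01,  # (hidden, input)
        "b1": np.zeros((n_h, 1)),          # (hidden, 1)
        "W2": rng.randn(n_y, n_h) * 0.01,  # (output, hidden)
        "b2": np.zeros((n_y, 1)),          # (output, 1)
    }

params = init_params(n_x=2, n_h=4, n_y=1)
shapes = {name: value.shape for name, value in params.items()}
print(shapes)
```

The weights are random rather than zero because, with identical weights, every hidden unit would compute the same output and receive the same gradient, so the units could never become different from each other.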

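6. Define the functions (forward propagation, cost function, backward propagation, parameter update)

- Forward propagation: compute Z[1], A[1], Z[2], A[2]

# Define the functions
# Forward propagation
# The hidden layer can use either sigmoid or tanh as its activation; tanh is used here
def forward_propagation(X, parameters):
    W1 = parameters['W1']
    W2 = parameters['W2']
    b1 = parameters['b1']
    b2 = parameters['b2']
    Z1 = np.dot(W1, X) + b1
    A1 = np.tanh(Z1)
    Z2 = np.dot(W2, A1) + b2
    A2 = sigmoid(Z2)
    cache = {'Z1': Z1, 'Z2': Z2, 'A1': A1, 'A2': A2}
    return A2, cache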
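Written out as equations, the forward pass computed by this function is as follows. This is only a restatement of the code in bracketed-superscript layer notation, and it assumes that `sigmoid` from planar_utils is the standard logistic function, denoted σ here.

```latex
\begin{aligned}
Z^{[1]} &= W^{[1]} X + b^{[1]} \\
A^{[1]} &= \tanh\left(Z^{[1]}\right) \\
Z^{[2]} &= W^{[2]} A^{[1]} + b^{[2]} \\
A^{[2]} &= \sigma\left(Z^{[2]}\right) = \frac{1}{1 + e^{-Z^{[2]}}}
\end{aligned}
```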

- Cost function:

# Cost function (mean cross-entropy over the m examples)
def compute_cost(A2, Y, parameters):
    m = Y.shape[1]  # number of examples
    # Compute the cost
    logprobs = np.multiply(np.log(A2), Y) + np.multiply((1 - Y), np.log(1 - A2))
    cost = - np.sum(logprobs) / m
    cost = float(np.squeeze(cost))
    return cost
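To make the cost concrete, here is a small worked sketch with made-up predictions and labels. The function name `cross_entropy_cost` and the `eps` clipping are additions for this illustration and are not part of the function above. The clipping only keeps `np.log` away from exactly 0, which would otherwise produce `inf` or `nan` for a fully saturated prediction.

```python
import numpy as np

def cross_entropy_cost(A2, Y, eps=1e-12):
    """Mean binary cross-entropy over the m columns of A2 and Y.

    The clipping by `eps` is an addition for this sketch (not in the
    original function): it keeps np.log away from exactly 0.
    """
    m = Y.shape[1]
    A2 = np.clip(A2, eps, 1.0 - eps)
    logprobs = Y * np.log(A2) + (1 - Y) * np.log(1 - A2)
    return float(-np.sum(logprobs) / m)

# Made-up predictions and labels for three examples.
A2 = np.array([[0.9, 0.2, 0.6]])
Y = np.array([[1, 0, 1]])
cost = cross_entropy_cost(A2, Y)
print(cost)  # equals -(ln 0.9 + ln 0.8 + ln 0.6) / 3

# A saturated (and wrong) prediction gives a large but finite cost.
saturated_cost = cross_entropy_cost(np.array([[1.0, 0.0]]), np.array([[0, 1]]))
print(saturated_cost)
```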

- Backward propagation:

# Backward propagation
def backward_propagation(parameters, cache, X, Y):
    m = X.shape[1]  # number of examples
    W2 = parameters["W2"]
    A1 = cache["A1"]
    A2 = cache["A2"]
    dZ2 = A2 - Y
    dW2 = (1 / m) * np.dot(dZ2, A1.T)
    db2 = (1 / m) * np.sum(dZ2, axis=1, keepdims=True)
    # 1 - A1^2 is the derivative of tanh
    dZ1 = np.multiply(np.dot(W2.T, dZ2), 1 - np.power(A1, 2))
    dW1 = (1 / m) * np.dot(dZ1, X.T)
    db1 = (1 / m) * np.sum(dZ1, axis=1, keepdims=True)
    grads = {"dW1": dW1,
             "db1": db1,
             "dW2": dW2,
             "db2": db2}
    return grads
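One way to gain confidence in these gradient formulas is a numerical gradient check, which compares them against central finite differences of the cost. The sketch below is self-contained and is not part of the original assignment code. The helper names, the random test data, and the step size `h` are all assumptions made for illustration, and it defines its own `sigmoid` instead of importing the one from planar_utils.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def forward(X, p):
    A1 = np.tanh(p["W1"] @ X + p["b1"])
    A2 = sigmoid(p["W2"] @ A1 + p["b2"])
    return A1, A2

def cost(X, Y, p):
    _, A2 = forward(X, p)
    return float(-np.mean(Y * np.log(A2) + (1 - Y) * np.log(1 - A2)))

def backward(X, Y, p):
    """Analytic gradients, same formulas as backward_propagation above."""
    m = X.shape[1]
    A1, A2 = forward(X, p)
    dZ2 = A2 - Y
    dZ1 = (p["W2"].T @ dZ2) * (1 - A1 ** 2)
    return {
        "W1": dZ1 @ X.T / m,
        "b1": dZ1.sum(axis=1, keepdims=True) / m,
        "W2": dZ2 @ A1.T / m,
        "b2": dZ2.sum(axis=1, keepdims=True) / m,
    }

def numerical_grad(X, Y, p, name, h=1e-5):
    """Central-difference estimate of d(cost)/d(p[name])."""
    grad = np.zeros_like(p[name])
    for idx in np.ndindex(*p[name].shape):
        original = p[name][idx]
        p[name][idx] = original + h
        cost_plus = cost(X, Y, p)
        p[name][idx] = original - h
        cost_minus = cost(X, Y, p)
        p[name][idx] = original  # restore the parameter
        grad[idx] = (cost_plus - cost_minus) / (2 * h)
    return grad

# Small random problem: 2 inputs, 4 hidden units, 1 output, 5 examples.
rng = np.random.RandomState(0)
X = rng.randn(2, 5)
Y = (rng.rand(1, 5) > 0.5).astype(float)
p = {"W1": rng.randn(4, 2), "b1": rng.randn(4, 1),
     "W2": rng.randn(1, 4), "b2": rng.randn(1, 1)}

analytic = backward(X, Y, p)
max_diff = max(np.max(np.abs(analytic[k] - numerical_grad(X, Y, p, k))) for k in p)
print("largest absolute difference:", max_diff)
```

If the analytic and numerical gradients agree to several decimal places, the backward pass is very likely consistent with the cost function. A large difference usually points to a sign error or a missing 1/m factor.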

- Parameter update

# Update the parameters
# Use the gradients (dW1, db1, dW2, db2) to update (W1, b1, W2, b2) by gradient descent; the goal is to minimize the cost
def update_parameters(parameters, grads, learning_rate=1.2):
    W1, W2 = parameters["W1"], parameters["W2"]
    b1, b2 = parameters["b1"], parameters["b2"]
    dW1, dW2 = grads["dW1"], grads["dW2"]
    db1, db2 = grads["db1"], grads["db2"]
    W1 = W1 - learning_rate * dW1
    b1 = b1 - learning_rate * db1
    W2 = W2 - learning_rate * dW2
    b2 = b2 - learning_rate * db2
    parameters = {"W1": W1,
                  "b1": b1,
                  "W2": W2,
                  "b2": b2}
    return parameters

7. Model integration

# Model integration
def nn_model(X, Y, n_h, num_iterations, print_cost=False):
    np.random.seed(3)  # fix the random seed
    n_x, _, n_y = layer_sizes(X, Y)
    parameters = initialize_parameters(n_x, n_h, n_y)
    for i in range(num_iterations):
        A2, cache = forward_propagation(X, parameters)
        cost = compute_cost(A2, Y, parameters)
        grads = backward_propagation(parameters, cache, X, Y)
        parameters = update_parameters(parameters, grads, learning_rate=0.5)
        # Print the cost every 1000 iterations
        if print_cost and i % 1000 == 0:
            print("Iteration", i, "cost: " + str(cost))
    return parameters

8. Prediction

# Prediction
def predict(parameters, X):
    A2, cache = forward_propagation(X, parameters)
    predictions = np.round(A2)  # probabilities above 0.5 become 1, the rest 0
    return predictions
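Rounding the output probabilities amounts to thresholding them at 0.5. The short sketch below uses made-up probabilities to compare `np.round` with an explicit comparison. Note that NumPy rounds halves to the nearest even value, so a probability of exactly 0.5 becomes 0 in both forms.

```python
import numpy as np

# Made-up output probabilities for four examples.
A2 = np.array([[0.1, 0.5, 0.51, 0.97]])

rounded = np.round(A2)                  # what predict() does
thresholded = (A2 > 0.5).astype(float)  # explicit threshold at 0.5

print(rounded)
print(thresholded)
```

The explicit comparison makes the decision threshold visible and easy to change, for example when false positives and false negatives have different costs.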

9. Test results

# Test
parameters = nn_model(X, Y, n_h=4, num_iterations=10000, print_cost=True)
# Plot the decision boundary
plot_decision_boundary(lambda x: predict(parameters, x.T), X, Y)
plt.title("Decision Boundary for hidden layer size " + str(4))
predictions = predict(parameters, X)
# Correct predictions = true positives + true negatives
correct = np.dot(Y, predictions.T) + np.dot(1 - Y, 1 - predictions.T)
print('Accuracy: %d%%' % (float(np.squeeze(correct)) / Y.size * 100))
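The accuracy expression may look opaque at first: `np.dot(Y, predictions.T)` counts the points labeled 1 that were predicted 1, and `np.dot(1 - Y, 1 - predictions.T)` counts the points labeled 0 that were predicted 0. The sketch below uses made-up labels and predictions to show that this gives the same result as the shorter `np.mean(predictions == Y)`.

```python
import numpy as np

# Made-up labels and predictions for five points.
Y = np.array([[1, 0, 1, 1, 0]])
predictions = np.array([[1.0, 0.0, 0.0, 1.0, 1.0]])

true_pos = np.dot(Y, predictions.T)          # label 1, predicted 1
true_neg = np.dot(1 - Y, 1 - predictions.T)  # label 0, predicted 0
acc_dot = float(np.squeeze(true_pos + true_neg)) / Y.size * 100

acc_mean = float(np.mean(predictions == Y)) * 100

print(acc_dot, acc_mean)
```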

10. Change the number of hidden-layer nodes and test again

plt.figure(figsize=(16, 32))
hidden_layer_sizes = [1, 2, 3, 4, 5, 20, 50]  # number of nodes in the hidden layer
for i, n_h in enumerate(hidden_layer_sizes):
    plt.subplot(5, 2, i + 1)
    plt.title('Hidden Layer of size %d' % n_h)
    parameters = nn_model(X, Y, n_h, num_iterations=5000)
    plot_decision_boundary(lambda x: predict(parameters, x.T), X, Y)
    predictions = predict(parameters, X)
    correct = np.dot(Y, predictions.T) + np.dot(1 - Y, 1 - predictions.T)
    accuracy = float(np.squeeze(correct)) / Y.size * 100
    print("Hidden layer nodes: {}, accuracy: {} %".format(n_h, accuracy))