Segformer Decoder - Segformer_Head

创新点一:

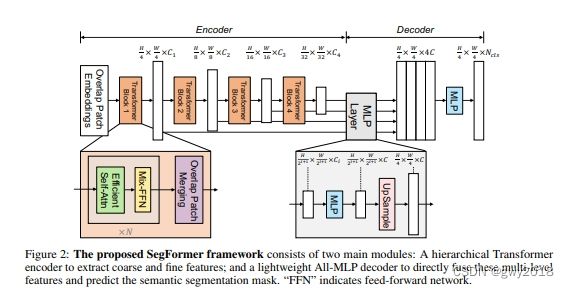

SegFormer consists of two main modules:

LightWeight Decoder - All_MLP【主角】

在Encoder特征提取过程类似采用FPN方法,经过Encoder后得到feature,通过linear_fuse卷及操作实现融合。

self.linear_fuse = ConvModule(

c1=embedding_dim * 4, c2=embedding_dim, k=1,

)

1.ConvModule的实现:

class ConvModule(nn.Module):

def __init__(self, c1, c2, k=1, s=1, p=0, g=1, act=True):

super(ConvModule, self).__init__()

self.conv = nn.Conv2d(c1, c2, k, s, p, groups=g, bias=False)

self.bn = nn.BatchNorm2d(c2, eps=0.001, momentum=0.03)

self.act = nn.ReLU() if act is True else (act if isinstance(act, nn.Module) else nn.Identity())

def forward(self, x):

return self.act(self.bn(self.conv(x)))

def fuse_forward(self, x):

return self.act(self.conv(x))

kernel_size = 1x1;实现维度变换

Decoder MLP【区别Encoder MLP】

class MLP(nn.Module):

"""

Linear Embedding

"""

def __init__(self, input_dim=2048, embed_dim=768):

super().__init__()

self.proj = nn.Linear(input_dim, embed_dim)

def forward(self, x):

x = x.flatten(2).transpose(1, 2)

x = self.proj(x)

return x

x - input,数据类型[N,C,H,W],数据转换x = x.flatten(2)表示将H,W合并,Transformer的输入格式[N, HxW, C]

再次通过线性映射维度转换。input_dim=2048, embed_dim=768不要太在意,会通过传参获取的。

SegFormer Head

self.linear_c4 = MLP(input_dim=c4_in_channels, embed_dim=embedding_dim)

_c4 = self.linear_c4(c4).permute(0, 2, 1).reshape(n, -1, c4.shape[2], c4.shape[3])

_c4 = F.interpolate(_c4, size=c1.size()[2:], mode='bilinear', align_corners=False)

经过MLP数据格式[N, HxW, C],卷积的要求的数据格式[N,C,H,W],使用reshape得到,其中C通过自动推理得到。C = 总数/NHW

F.interpolate上采样的双线性差值计算得到。学习过数值分析的理解双线性插值的原理,可了解一下。

class MLP(nn.Module):

"""

Linear Embedding

"""

def __init__(self, input_dim=2048, embed_dim=768):

super().__init__()

self.proj = nn.Linear(input_dim, embed_dim)

def forward(self, x):

x = x.flatten(2).transpose(1, 2)

x = self.proj(x)

return x

class ConvModule(nn.Module):

def __init__(self, c1, c2, k=1, s=1, p=0, g=1, act=True):

super(ConvModule, self).__init__()

self.conv = nn.Conv2d(c1, c2, k, s, p, groups=g, bias=False)

self.bn = nn.BatchNorm2d(c2, eps=0.001, momentum=0.03)

self.act = nn.ReLU() if act is True else (act if isinstance(act, nn.Module) else nn.Identity())

def forward(self, x):

return self.act(self.bn(self.conv(x)))

def fuseforward(self, x):

return self.act(self.conv(x))

class SegFormerHead(nn.Module):

"""

SegFormer: Simple and Efficient Design for Semantic Segmentation with Transformers

"""

def __init__(self, num_classes=20, in_channels=[32, 64, 160, 256], embedding_dim=768, dropout_ratio=0.1):

super(SegFormerHead, self).__init__()

c1_in_channels, c2_in_channels, c3_in_channels, c4_in_channels = in_channels

self.linear_c4 = MLP(input_dim=c4_in_channels, embed_dim=embedding_dim)

self.linear_c3 = MLP(input_dim=c3_in_channels, embed_dim=embedding_dim)

self.linear_c2 = MLP(input_dim=c2_in_channels, embed_dim=embedding_dim)

self.linear_c1 = MLP(input_dim=c1_in_channels, embed_dim=embedding_dim)

self.linear_fuse = ConvModule(

c1=embedding_dim * 4,

c2=embedding_dim,

k=1,

)

self.linear_pred = nn.Conv2d(embedding_dim, num_classes, kernel_size=1)

self.dropout = nn.Dropout2d(dropout_ratio)

def forward(self, inputs):

c1, c2, c3, c4 = inputs

# ############# MLP decoder on C1-C4 ###########

n, _, h, w = c4.shape

_c4 = self.linear_c4(c4).permute(0, 2, 1).reshape(n, -1, c4.shape[2], c4.shape[3])

_c4 = F.interpolate(_c4, size=c1.size()[2:], mode='bilinear', align_corners=False)

_c3 = self.linear_c3(c3).permute(0, 2, 1).reshape(n, -1, c3.shape[2], c3.shape[3])

_c3 = F.interpolate(_c3, size=c1.size()[2:], mode='bilinear', align_corners=False)

_c2 = self.linear_c2(c2).permute(0, 2, 1).reshape(n, -1, c2.shape[2], c2.shape[3])

_c2 = F.interpolate(_c2, size=c1.size()[2:], mode='bilinear', align_corners=False)

_c1 = self.linear_c1(c1).permute(0, 2, 1).reshape(n, -1, c1.shape[2], c1.shape[3])

_c = self.linear_fuse(torch.cat([_c4, _c3, _c2, _c1], dim=1))

x = self.dropout(_c)

x = self.linear_pred(x)

return x