ZooKeeper: Cluster Operations

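- 1. Cluster operations

    - 1. Cluster planning

    - 2. Election mechanism (key interview topic)

    - 3. ZK cluster start/stop script

- 2. Client command-line operations

    - 1. Command-line syntax

    - 2. znode data information

    - 3. Node types (persistent / ephemeral / sequential / non-sequential)

    - 4. How watchers work

    - 5. Deleting and inspecting nodes

- 3. Client API operations

    - 1. IDEA environment setup

    - 2. Create the ZooKeeper client

    - 3. Create a child node

    - 4. Get child nodes and watch for node changes

    - 5. Check whether a znode exists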

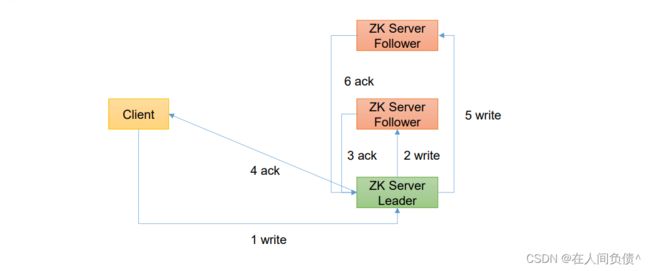

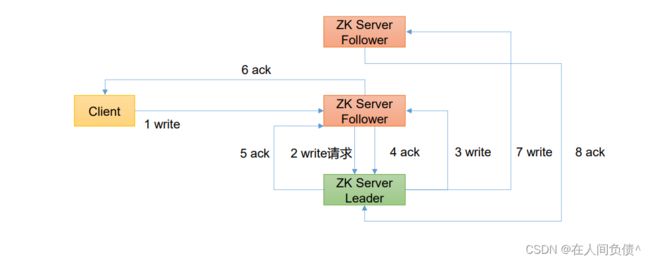

- 4. How a client writes data to the servers

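1. Cluster operations

1. Cluster planning

1) Cluster plan

Deploy ZooKeeper on all three nodes: hadoop102, hadoop103, and hadoop104.

2) Extract and install

- Extract the archive into the target directory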

[fickler@hadoop102 software]$ tar -zxvf apache-zookeeper-3.5.7-bin.tar.gz -C /opt/module/

- Rename the directory

[fickler@hadoop102 module]$ mv apache-zookeeper-3.5.7-bin/ zookeeper-3.5.7

3) Configure the server ID

- Create a zkData directory under /opt/module/zookeeper-3.5.7/

[fickler@hadoop102 zookeeper-3.5.7]$ mkdir zkData

- Create a file named myid in the /opt/module/zookeeper-3.5.7/zkData directory

[fickler@hadoop102 zkData]$ vi myid

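Put the number that corresponds to this server in the file (note: no blank lines above or below it, and no spaces to its left or right)

2

Note: create the myid file on Linux. If you create it in Notepad++, the encoding is likely to end up garbled.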
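The formatting rule above (a bare number, no surrounding whitespace) can be checked before the file is distributed. The following is a minimal sketch of such a check; the class name `MyIdCheck` and its method are hypothetical helpers, not part of ZooKeeper, and the decision to tolerate exactly one trailing newline (which editors such as vi add) is an assumption.

```java
final class MyIdCheck {
    /**
     * Validates the text of a myid file and returns the server ID.
     * Accepts digits only, optionally followed by a single newline.
     */
    static long parseMyId(String fileContent) {
        String s = fileContent;
        // Assumption: one trailing newline is harmless, since editors add it.
        if (s.endsWith("\n")) {
            s = s.substring(0, s.length() - 1);
        }
        if (!s.matches("\\d+")) {
            throw new IllegalArgumentException(
                    "myid must contain only a number, got: '" + s + "'");
        }
        return Long.parseLong(s);
    }

    public static void main(String[] args) {
        System.out.println(parseMyId("2\n")); // prints 2
    }
}
```

Passing `" 2"` or `"2\n\n"` to `parseMyId` throws an `IllegalArgumentException`, which matches the two mistakes the note warns about.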

- Copy the configured zookeeper directory to the other machines

[fickler@hadoop102 module]$ xsync zookeeper-3.5.7

Then change the content of the myid file to 3 on hadoop103 and to 4 on hadoop104.

4) Configure the zoo.cfg file

- In the /opt/module/zookeeper-3.5.7/conf directory, rename zoo_sample.cfg to zoo.cfg

[fickler@hadoop102 conf]$ mv zoo_sample.cfg zoo.cfg

- Open the zoo.cfg file

[fickler@hadoop102 conf]$ vim zoo.cfg

Change the dataDir path

dataDir=/opt/module/zookeeper-3.5.7/zkData

Add the following configuration

#######################cluster##########################

server.2=hadoop102:2888:3888

server.3=hadoop103:2888:3888

server.4=hadoop104:2888:3888

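- Explanation of the configuration parameters

server.A=B:C:D

A: a number that identifies which server this is.

In cluster mode each server has a file named myid in its dataDir directory, and that file holds the value of A. On startup ZooKeeper reads the file and compares the value with the server entries in zoo.cfg to determine which server it is.

B: the address of this server.

C: the port this server uses, as a Follower, to exchange information with the cluster's Leader.

D: the port the servers use to talk to each other during an election. If the Leader goes down, the cluster needs a port over which to hold a new election and choose a new Leader; this is that port.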
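To make the four fields concrete, here is a minimal sketch that splits one `server.A=B:C:D` line into its parts. The class `ServerEntry` and its field names are hypothetical and chosen for illustration; ZooKeeper's own configuration parser accepts more variants than this pattern does.

```java
import java.util.regex.Matcher;
import java.util.regex.Pattern;

final class ServerEntry {
    final int id;            // A: server number, must match that server's myid
    final String host;       // B: server address
    final int quorumPort;    // C: Follower <-> Leader data exchange port
    final int electionPort;  // D: leader election port

    private static final Pattern LINE =
            Pattern.compile("server\\.(\\d+)=([^:]+):(\\d+):(\\d+)");

    ServerEntry(int id, String host, int quorumPort, int electionPort) {
        this.id = id;
        this.host = host;
        this.quorumPort = quorumPort;
        this.electionPort = electionPort;
    }

    /** Parses a line of the simple form server.A=B:C:D. */
    static ServerEntry parse(String line) {
        Matcher m = LINE.matcher(line.trim());
        if (!m.matches()) {
            throw new IllegalArgumentException("not a server.A=B:C:D line: " + line);
        }
        return new ServerEntry(
                Integer.parseInt(m.group(1)),
                m.group(2),
                Integer.parseInt(m.group(3)),
                Integer.parseInt(m.group(4)));
    }

    public static void main(String[] args) {
        ServerEntry e = parse("server.2=hadoop102:2888:3888");
        // prints: 2 hadoop102 2888 3888
        System.out.println(e.id + " " + e.host + " " + e.quorumPort + " " + e.electionPort);
    }
}
```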

- Sync the zoo.cfg configuration file to the other machines

[fickler@hadoop102 conf]$ xsync zoo.cfg

5) Cluster operations

- Start ZooKeeper on each node

[fickler@hadoop102 zookeeper-3.5.7]$ bin/zkServer.sh start

[fickler@hadoop103 zookeeper-3.5.7]$ bin/zkServer.sh start

[fickler@hadoop104 zookeeper-3.5.7]$ bin/zkServer.sh start

- Check the status

[fickler@hadoop102 zookeeper-3.5.7]$ bin/zkServer.sh status

ZooKeeper JMX enabled by default

Using config: /opt/module/zookeeper-3.5.7/bin/../conf/zoo.cfg

Client port found: 2181. Client address: localhost.

Mode: standalone

[fickler@hadoop103 zookeeper-3.5.7]$ bin/zkServer.sh status

ZooKeeper JMX enabled by default

Using config: /opt/module/zookeeper-3.5.7/bin/../conf/zoo.cfg

Client port found: 2181. Client address: localhost.

Mode: follower

[fickler@hadoop104 zookeeper-3.5.7]$ bin/zkServer.sh status

ZooKeeper JMX enabled by default

Using config: /opt/module/zookeeper-3.5.7/bin/../conf/zoo.cfg

Client port found: 2181. Client address: localhost.

Mode: leader

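2. Election mechanism (key interview topic)

SID: server ID. It uniquely identifies a machine in the ZooKeeper cluster. No two machines may share one, and it matches the value in myid.

ZXID: transaction ID. It identifies one change of server state. At any given moment the ZXID values on the machines in the cluster are not necessarily identical, which follows from how the ZooKeeper servers process client update requests.

Epoch: the identifier of each Leader's term. While there is no Leader, the logical clock is the same for every vote within one round of voting, and it increases after each completed round.

ZooKeeper election mechanism: first startup

ZooKeeper election mechanism: not the first startup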
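The three values defined above are what servers compare when they exchange votes. The sketch below shows the commonly described comparison order (larger Epoch wins, then larger ZXID, then larger SID) together with the majority rule. It is a simplified model written for this walkthrough, not ZooKeeper's election code; the class `Vote` and its methods are hypothetical names.

```java
final class Vote {
    final long epoch; // Leader term the voter has seen
    final long zxid;  // latest transaction ID the candidate holds
    final long sid;   // candidate's server ID (same as myid)

    Vote(long epoch, long zxid, long sid) {
        this.epoch = epoch;
        this.zxid = zxid;
        this.sid = sid;
    }

    /** Returns true if the candidate vote should replace the current vote. */
    static boolean beats(Vote candidate, Vote current) {
        if (candidate.epoch != current.epoch) {
            return candidate.epoch > current.epoch;
        }
        if (candidate.zxid != current.zxid) {
            return candidate.zxid > current.zxid;
        }
        return candidate.sid > current.sid;
    }

    /** A candidate wins once it holds more than half of the cluster's votes. */
    static boolean hasQuorum(int votes, int clusterSize) {
        return votes > clusterSize / 2;
    }

    public static void main(String[] args) {
        // First startup: every server begins with epoch 0 and zxid 0,
        // so only the sid (2, 3, 4 in this cluster) breaks the tie.
        Vote s2 = new Vote(0, 0, 2);
        Vote s3 = new Vote(0, 0, 3);
        System.out.println(hasQuorum(1, 3)); // false: one server alone is not a majority
        System.out.println(beats(s3, s2));   // true: equal epoch and zxid, larger sid
        System.out.println(hasQuorum(2, 3)); // true: two of three votes elect a Leader
    }
}
```

Which server actually becomes Leader on a real cluster also depends on start order and timing, which this model leaves out.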

3. ZK cluster start/stop script

- Create the script in the /home/fickler/bin directory on hadoop102

[fickler@hadoop102 bin]$ vim zk.sh

Put the following content in the script

#!/bin/bash

case $1 in

"start"){

for i in hadoop102 hadoop103 hadoop104

do

echo ---------- zookeeper $i start ------------

ssh $i "/opt/module/zookeeper-3.5.7/bin/zkServer.sh start"

done

};;

"stop"){

for i in hadoop102 hadoop103 hadoop104

do

echo ---------- zookeeper $i stop ------------

ssh $i "/opt/module/zookeeper-3.5.7/bin/zkServer.sh stop"

done

};;

"status"){

for i in hadoop102 hadoop103 hadoop104

do

echo ---------- zookeeper $i status ------------

ssh $i "/opt/module/zookeeper-3.5.7/bin/zkServer.sh status"

done

};;

esac

- Make the script executable

[fickler@hadoop102 bin]$ chmod u+x zk.sh

- Start the ZooKeeper cluster with the script

[fickler@hadoop102 bin]$ zk.sh start

---------- zookeeper hadoop102 start ------------

ZooKeeper JMX enabled by default

Using config: /opt/module/zookeeper-3.5.7/bin/../conf/zoo.cfg

Starting zookeeper ... STARTED

---------- zookeeper hadoop103 start ------------

ZooKeeper JMX enabled by default

Using config: /opt/module/zookeeper-3.5.7/bin/../conf/zoo.cfg

Starting zookeeper ... STARTED

---------- zookeeper hadoop104 start ------------

ZooKeeper JMX enabled by default

Using config: /opt/module/zookeeper-3.5.7/bin/../conf/zoo.cfg

Starting zookeeper ... STARTED

- Stop the ZooKeeper cluster with the script

[fickler@hadoop102 bin]$ zk.sh stop

---------- zookeeper hadoop102 stop ------------

ZooKeeper JMX enabled by default

Using config: /opt/module/zookeeper-3.5.7/bin/../conf/zoo.cfg

Stopping zookeeper ... STOPPED

---------- zookeeper hadoop103 stop ------------

ZooKeeper JMX enabled by default

Using config: /opt/module/zookeeper-3.5.7/bin/../conf/zoo.cfg

Stopping zookeeper ... STOPPED

---------- zookeeper hadoop104 stop ------------

ZooKeeper JMX enabled by default

Using config: /opt/module/zookeeper-3.5.7/bin/../conf/zoo.cfg

Stopping zookeeper ... STOPPED

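2. Client command-line operations

1. Command-line syntax

| Basic command syntax | Description |
|---|---|
| help | Show all available commands |
| ls path | List the child nodes of the znode at path (watchable). -w watches for child changes; -s shows additional stat information |
| create | Create a regular node. -s appends a sequence number; -e creates an ephemeral node (removed on restart or session timeout) |
| get path | Get the value of a node (watchable). -w watches for changes to the node's data; -s shows additional stat information |
| set | Set the value of a node |
| stat | Show the status of a node |
| delete | Delete a node |
| deleteall | Delete a node recursively |

1) Start the client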

[fickler@hadoop102 zookeeper-3.5.7]$ bin/zkCli.sh -server

2) Show all commands

[zk: localhost:2181(CONNECTED) 3] help

2. znode data information

1) List what the current znode contains

[zk: localhost:2181(CONNECTED) 4] ls /

[zookeeper]

2) Show detailed data for the current node

[zk: localhost:2181(CONNECTED) 5] ls -s /

[zookeeper]

cZxid = 0x0

ctime = Thu Jan 01 08:00:00 CST 1970

mZxid = 0x0

mtime = Thu Jan 01 08:00:00 CST 1970

pZxid = 0x0

cversion = -1

dataVersion = 0

aclVersion = 0

ephemeralOwner = 0x0

dataLength = 0

numChildren = 1

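- cZxid: the zxid of the transaction that created the node

Every change to ZooKeeper state produces a ZooKeeper transaction ID (zxid). Zxids define a total order over all changes in ZooKeeper: each change has a unique zxid, and if zxid1 is less than zxid2, then zxid1 happened before zxid2.

- ctime: the time the znode was created, in milliseconds since 1970

- mZxid: the zxid of the transaction that last updated the znode

- mtime: the time the znode was last modified, in milliseconds since 1970

- pZxid: the zxid of the last change to the znode's children

- cversion: the child version number, that is, how many times the znode's children have changed

- dataVersion: the version number of the znode's data

- aclVersion: the version number of the znode's access control list

- ephemeralOwner: for an ephemeral node, the session id of the znode's owner; 0 if the node is not ephemeral

- dataLength: the length of the znode's data

- numChildren: the number of children of the znode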
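A zxid is a 64-bit number whose high 32 bits carry the Leader epoch and whose low 32 bits carry a counter within that epoch, which is why the values printed by `ls -s` and `get -s` look like `0x300000002`. The sketch below splits a zxid into those two parts; the class and method names are hypothetical.

```java
final class ZxidParts {
    /** High 32 bits: the Leader epoch in which the transaction was issued. */
    static long epochOf(long zxid) {
        return zxid >>> 32;
    }

    /** Low 32 bits: the transaction counter within that epoch. */
    static long counterOf(long zxid) {
        return zxid & 0xFFFFFFFFL;
    }

    public static void main(String[] args) {
        long zxid = 0x300000002L; // the cZxid of /sanguo shown later in this walkthrough
        // prints: epoch=3 counter=2
        System.out.println("epoch=" + epochOf(zxid) + " counter=" + counterOf(zxid));
    }
}
```

Because the epoch occupies the high bits, comparing two zxids as plain numbers orders transactions first by epoch and then by counter.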

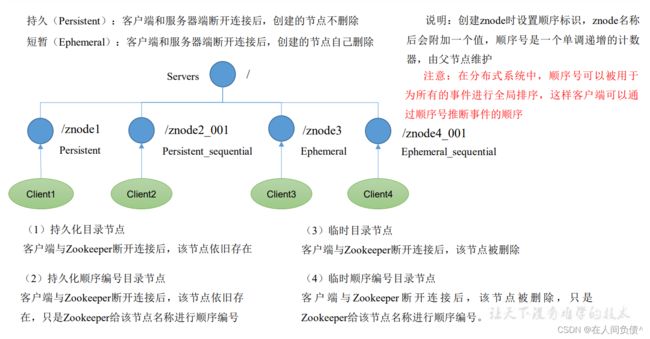

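3. Node types (persistent / ephemeral / sequential / non-sequential)

1) Create two regular nodes (persistent, without sequence numbers)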

[zk: localhost:2181(CONNECTED) 6] create /sanguo "diaochan"

Created /sanguo

[zk: localhost:2181(CONNECTED) 7] create /sanguo/shuguo "liubei"

Created /sanguo/shuguo

2) Get the value of a node

[zk: localhost:2181(CONNECTED) 8] get -s /sanguo

diaochan

cZxid = 0x300000002

ctime = Mon Oct 10 13:35:13 CST 2022

mZxid = 0x300000002

mtime = Mon Oct 10 13:35:13 CST 2022

pZxid = 0x300000003

cversion = 1

dataVersion = 0

aclVersion = 0

ephemeralOwner = 0x0

dataLength = 8

numChildren = 1

[zk: localhost:2181(CONNECTED) 9] get -s /sanguo/shuguo

liubei

cZxid = 0x300000003

ctime = Mon Oct 10 13:35:37 CST 2022

mZxid = 0x300000003

mtime = Mon Oct 10 13:35:37 CST 2022

pZxid = 0x300000003

cversion = 0

dataVersion = 0

aclVersion = 0

ephemeralOwner = 0x0

dataLength = 6

numChildren = 0

3) Create sequential nodes (persistent, with sequence numbers)

- First create a regular parent node /sanguo/weiguo

[zk: localhost:2181(CONNECTED) 10] create /sanguo/weiguo "caocao"

Created /sanguo/weiguo

- Create the sequential nodes

[zk: localhost:2181(CONNECTED) 11] create -s /sanguo/weiguo/zhangliao "zhangliao"

Created /sanguo/weiguo/zhangliao0000000000

[zk: localhost:2181(CONNECTED) 12] create -s /sanguo/weiguo/zhaogliao "zhangliao"

Created /sanguo/weiguo/zhaogliao0000000001

[zk: localhost:2181(CONNECTED) 13] create -s /sanguo/weiguo/xuchu "xuchu"

Created /sanguo/weiguo/xuchu0000000002

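If the parent has no children yet, the sequence number starts at 0 and increases by one for each new node. If the parent already has 2 children, numbering starts at 2, and so on.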
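The names in the output above follow a fixed pattern: the requested path followed by the parent's counter as a zero-padded 10-digit decimal. A minimal sketch of that formatting, using a hypothetical helper class (the server does this itself when `-s` is given; the client never has to):

```java
import java.util.Locale;

final class SequentialName {
    /** Appends a 10-digit, zero-padded sequence number to the requested path. */
    static String withSequence(String path, int counter) {
        return path + String.format(Locale.ROOT, "%010d", counter);
    }

    public static void main(String[] args) {
        // prints: /sanguo/weiguo/xuchu0000000002
        System.out.println(withSequence("/sanguo/weiguo/xuchu", 2));
    }
}
```

The fixed width keeps the names sortable as plain strings, so a sorted child list also reflects creation order.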

4) Create ephemeral nodes (ephemeral, with or without sequence numbers)

- Create an ephemeral node without a sequence number

[zk: localhost:2181(CONNECTED) 14] create -e /sanguo/wuguo "zhangyu"

Created /sanguo/wuguo

- Create an ephemeral node with a sequence number

[zk: localhost:2181(CONNECTED) 15] create -e -s /sanguo/wuguo "zhangyu"

Created /sanguo/wuguo0000000003

- The nodes are visible from the current client

[zk: localhost:2181(CONNECTED) 16] ls /sanguo

[shuguo, weiguo, wuguo, wuguo0000000003]

- Quit the current client, then start the client again

[zk: localhost:2181(CONNECTED) 17] quit

[fickler@hadoop102 zookeeper-3.5.7]$ bin/zkCli.sh

- List /sanguo again: the ephemeral nodes have been removed

[zk: localhost:2181(CONNECTED) 0] ls /sanguo

[shuguo, weiguo]

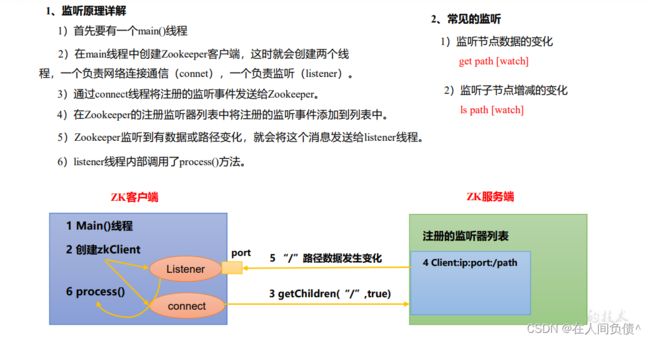

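4. How watchers work

A client registers a watch on a directory node it cares about. When that node changes (its data changes, the node is deleted, or a child is added or removed), ZooKeeper notifies the client. The watch mechanism ensures that any change to any data stored in ZooKeeper is quickly propagated to the applications watching that node.

1) Watch for changes to a node's value

- On hadoop104, register a watch for data changes on the /sanguo node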

[zk: localhost:2181(CONNECTED) 0] get -w /sanguo

diaochan

- On hadoop103, change the data of the /sanguo node

[zk: localhost:2181(CONNECTED) 0] set /sanguo "xisi"

- Observe the data-change notification received on hadoop104

[zk: localhost:2181(CONNECTED) 1]

WATCHER::

WatchedEvent state:SyncConnected type:NodeDataChanged path:/sanguo

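Note: further changes to /sanguo made on hadoop103 produce no more notifications on hadoop104. A watch that is registered once fires only once; to keep watching, register it again.

2) Watch for changes to a node's children (path changes)

- On hadoop104, register a watch for changes to the children of /sanguo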

[zk: localhost:2181(CONNECTED) 2] ls -w /sanguo

[shuguo, weiguo]

- On hadoop103, create a child node under /sanguo

[zk: localhost:2181(CONNECTED) 2] create /sanguo/jin "simayi"

Created /sanguo/jin

- Observe the child-change notification received on hadoop104

[zk: localhost:2181(CONNECTED) 3]

WATCHER::

WatchedEvent state:SyncConnected type:NodeChildrenChanged path:/sanguo

Note: a watch on path (child) changes is also one-shot: register once, fire once. To be notified several times, register several times.
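The register-once, fire-once behavior in both notes can be modeled in a few lines. The class below is a toy in-memory model written only to illustrate the semantics; it is not the ZooKeeper client API, and every name in it is hypothetical.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.function.Consumer;

final class OneShotWatchModel {
    private final Map<String, String> data = new HashMap<>();
    private final Map<String, List<Consumer<String>>> watchers = new HashMap<>();

    /** Like `get -w`: returns the current value and registers a one-time watcher. */
    String getAndWatch(String path, Consumer<String> watcher) {
        watchers.computeIfAbsent(path, p -> new ArrayList<>()).add(watcher);
        return data.get(path);
    }

    /** Like `set`: stores the value, then fires and discards the path's watchers. */
    void set(String path, String value) {
        data.put(path, value);
        // Removing the list before firing is what makes each watch one-shot,
        // and it lets a callback safely register a fresh watch.
        List<Consumer<String>> fired = watchers.remove(path);
        if (fired != null) {
            for (Consumer<String> w : fired) {
                w.accept(path);
            }
        }
    }

    public static void main(String[] args) {
        OneShotWatchModel zk = new OneShotWatchModel();
        zk.set("/sanguo", "diaochan");
        List<String> events = new ArrayList<>();
        zk.getAndWatch("/sanguo", events::add);
        zk.set("/sanguo", "xisi");    // the registered watch fires
        zk.set("/sanguo", "another"); // no watch is left, so nothing fires
        System.out.println(events.size()); // prints 1
    }
}
```

To keep receiving notifications in this model, the callback would call `getAndWatch` again, which mirrors running `get -w` again after each event.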

5. Deleting and inspecting nodes

1) Delete a node

[zk: localhost:2181(CONNECTED) 3] delete /sanguo/jin

2) Delete a node recursively

[zk: localhost:2181(CONNECTED) 4] deleteall /sanguo/shuguo

3) Show the status of a node

[zk: localhost:2181(CONNECTED) 5] stat /sanguo

cZxid = 0x300000002

ctime = Mon Oct 10 13:35:13 CST 2022

mZxid = 0x30000000e

mtime = Mon Oct 10 14:01:03 CST 2022

pZxid = 0x300000012

cversion = 9

dataVersion = 1

aclVersion = 0

ephemeralOwner = 0x0

dataLength = 4

numChildren = 1

3. Client API operations

Prerequisite: the ZooKeeper cluster must be running on hadoop102, hadoop103, and hadoop104.

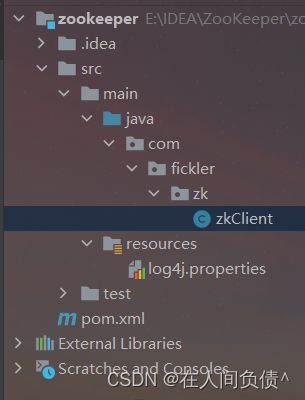

1. IDEA environment setup

1) Create a project named zookeeper

2) Add the following dependencies to the pom file

<dependencies>

<dependency>

<groupId>junit</groupId>

<artifactId>junit</artifactId>

<version>RELEASE</version>

</dependency>

<dependency>

<groupId>org.apache.logging.log4j</groupId>

<artifactId>log4j-core</artifactId>

<version>2.8.2</version>

</dependency>

<dependency>

<groupId>org.apache.zookeeper</groupId>

<artifactId>zookeeper</artifactId>

<version>3.5.7</version>

</dependency>

</dependencies>

3) Add a log4j.properties file to the project

In the project's src/main/resources directory, create a new file named log4j.properties with the following content:

log4j.rootLogger=INFO, stdout

log4j.appender.stdout=org.apache.log4j.ConsoleAppender

log4j.appender.stdout.layout=org.apache.log4j.PatternLayout

log4j.appender.stdout.layout.ConversionPattern=%d %p [%c] - %m%n

log4j.appender.logfile=org.apache.log4j.FileAppender

log4j.appender.logfile.File=target/spring.log

log4j.appender.logfile.layout=org.apache.log4j.PatternLayout

log4j.appender.logfile.layout.ConversionPattern=%d %p [%c] - %m%n

4) Create the package com.fickler.zk

5) Create the class zkClient

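2. Create the ZooKeeper client

// Imports for the zkClient class (they go at the top of the file)

import java.io.IOException;

import java.util.List;

import org.apache.zookeeper.*;

import org.apache.zookeeper.data.Stat;

import org.junit.Before;

import org.junit.Test;

// Note: no spaces around the commas

private String connectString = "hadoop102:2181,hadoop103:2181,hadoop104:2181";

private int sessionTimeout = 2000;

// Shared client, assigned before each test so the test methods can use it

private ZooKeeper zooKeeper;

@Before

public void init() throws IOException {

zooKeeper = new ZooKeeper(connectString, sessionTimeout, new Watcher() {

@Override

public void process(WatchedEvent watchedEvent) {

}

});

}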

The ZooKeeper client is created successfully.

3. Create a child node

@Test

public void create() throws InterruptedException, KeeperException {

// Argument 1: path of the node to create; argument 2: node data; argument 3: node ACL; argument 4: node type

String nodeCreated = zooKeeper.create("/atguigu", "shuaige".getBytes(), ZooDefs.Ids.OPEN_ACL_UNSAFE, CreateMode.PERSISTENT);

}

Test: check the created node from the zk client on hadoop102

[zk: localhost:2181(CONNECTED) 7] ls /

[atguigu, sanguo, sanguojin, zookeeper]

4. Get child nodes and watch for node changes

@Test

public void getChildren() throws InterruptedException, KeeperException {

List<String> children = zooKeeper.getChildren("/", true);

for (String child : children) {

System.out.println(child);

}

// Block so the test keeps running and can receive watch events

Thread.sleep(Long.MAX_VALUE);

}

The following nodes appear in the IDEA console:

5. Check whether a znode exists

@Test

public void exist() throws InterruptedException, KeeperException {

Stat stat = zooKeeper.exists("/atguigu", false);

System.out.println(stat == null ? "not exist" : "exist");

}