Deploying a highly available Kubernetes 1.25.3 cluster on Ubuntu 20.04 with cri-dockerd

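Contents

1. Overlay overview

2. Overlay communication process

3. Overlay use cases

4. Underlay overview

5. Underlay implementation modes

6. MAC VLAN working modes

7. Kubernetes pod communication summary

8. Underlay lab example

8.2 Install Docker

8.3 Install cri-dockerd

8.4 Prepare for cluster initialization

8.5 Initialize the cluster

8.6 Add cluster nodes

8.6.2 Add worker nodes

9. Install the underlay network components

9.2 Deploy hybridnet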

1. Overlay overview

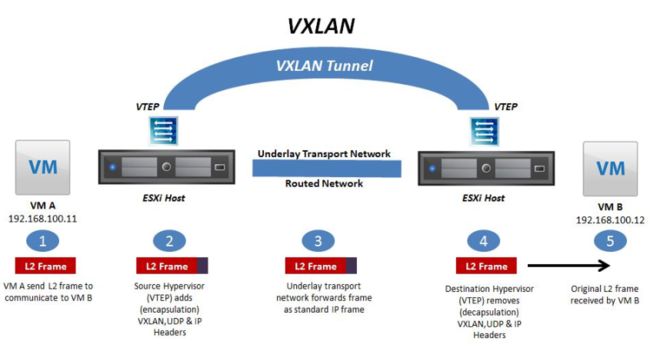

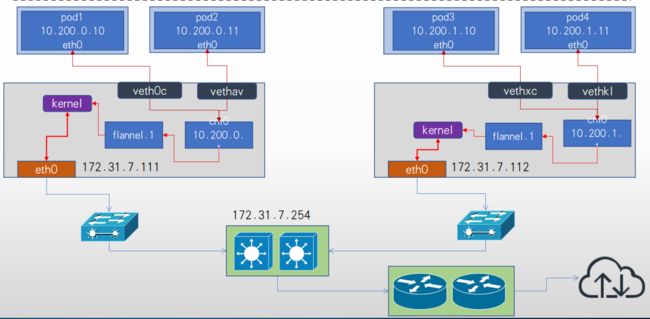

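- VXLAN: Virtual eXtensible Local Area Network, promoted mainly by Cisco. VXLAN is an extension of VLAN and one of the NVO3 (Network Virtualization over Layer 3) technologies defined by the IETF. It encapsulates L2 Ethernet frames in UDP datagrams (L2 over L4, also called MAC-in-UDP) and carries them across an L3 network. VXLAN is essentially an overlay tunneling technique: the frames behave as if they were travelling inside a single broadcast domain, while in fact they cross the L3 network without being constrained by it. VXLAN identifies a segment with a 24-bit ID, so it supports 2^24 = 16777216 segments. That is far more than the 12-bit VLAN ID allows and is enough for large data-center networks.

- VTEP (VXLAN Tunnel Endpoint): the edge device of a VXLAN network and the start and end point of a VXLAN tunnel. Encapsulation and decapsulation of the user's original frames both happen on the VTEP. A VTEP is attached to the physical network and is assigned a physical-network IP address. In a VXLAN packet the source IP is the local VTEP address and the destination IP is the peer VTEP address, so one pair of VTEP addresses corresponds to one VXLAN tunnel. On a server the VTEP is the virtual switch (for example, the flannel.1 tunnel interface). A virtual machine network with several VXLANs needs several VTEPs to encapsulate and decapsulate the packets of each network.

- VNI (VXLAN Network Identifier): similar to a VLAN ID, the VNI distinguishes VXLAN segments. Virtual machines in different VXLAN segments cannot communicate directly at layer 2. One VNI represents one tenant, even when several end users belong to that VNI.

- NVGRE: Network Virtualization using Generic Routing Encapsulation, backed mainly by Microsoft. Unlike VXLAN, NVGRE does not use a standard transport protocol (TCP/UDP) but relies on Generic Routing Encapsulation (GRE). It uses 24 bits of the GRE header as the Tenant Network Identifier (TNI), so like VXLAN it supports 16777216 virtual networks.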
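To make the scale difference concrete, here is a minimal sketch that computes the size of the identifier space from the width of the ID field. The function name `id_space` is made up for this illustration.

```shell
#!/usr/bin/env bash
# Number of distinct IDs that fit in an identifier of the given bit width.
id_space() {
  local bits=$1
  echo $((1 << bits))
}

echo "VLAN  (12-bit ID):  $(id_space 12)"
echo "VXLAN (24-bit VNI): $(id_space 24)"
```

The 12-bit figure is the raw ID space of a VLAN tag; a few values are reserved in practice, so the usable count is slightly lower.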

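2. Overlay communication process

- VM A sends an L2 frame requesting communication with VM B.

- The VTEP on the source host adds (encapsulates) the VXLAN, UDP, and IP headers.

- Network devices forward the encapsulated packet across the L3 network to the destination host as an ordinary IP packet.

- The VTEP on the destination host removes (decapsulates) the VXLAN, UDP, and IP headers.

- The original L2 frame is delivered to the destination VM.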
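The headers added in the encapsulation step take space away from the payload. The sketch below estimates the MTU left for the inner IP packet, assuming an IPv4 underlay without options (20 bytes), UDP (8 bytes), the VXLAN header (8 bytes), and an untagged inner Ethernet header (14 bytes). The function name is hypothetical.

```shell
#!/usr/bin/env bash
# MTU available to the inner IP packet after VXLAN encapsulation.
# Assumes outer IPv4 without options and an untagged inner Ethernet frame.
vxlan_inner_mtu() {
  local underlay_mtu=$1
  local outer_ipv4=20 udp=8 vxlan=8 inner_eth=14
  echo $((underlay_mtu - outer_ipv4 - udp - vxlan - inner_eth))
}

echo "inner MTU on a 1500-byte underlay: $(vxlan_inner_mtu 1500)"
```

This 50-byte difference is part of the encapsulation overhead that overlay networks pay.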

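3. Overlay use cases

- An overlay (superimposed) network builds a new virtual network on top of the physical network so that the containers in it can reach one another.

- Advantage: good compatibility with the physical network; pods can communicate across hosts and across host subnets.

- Network plugins such as Calico and Flannel support overlay networking.

- Disadvantage: encapsulation and decapsulation add performance overhead.

- Currently used mostly in private clouds.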

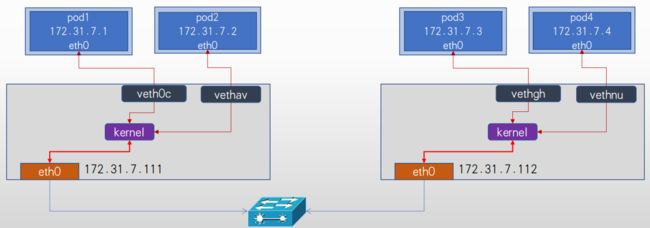

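4. Underlay overview

1. An underlay network is the traditional IT infrastructure network, made up of switches, routers, and similar devices and driven by Ethernet, routing protocols, and VLAN. It is also the network beneath an overlay and provides the overlay with its data transport. In container networking, underlay refers to a technique in which a driver exposes the host's underlying network interface directly to containers. Common solutions include MAC VLAN, IP VLAN, and direct routing.

2. Underlay relies on the physical network for cross-host communication.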

五、underlay实现模式简介

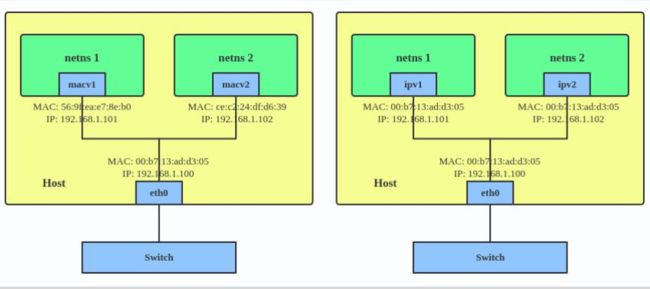

1、Mac Vlan模式:

- MAC VLAN: 支持在同一个以太网接口上虚拟出多个网络接口(子接口), 每个虚拟接口都拥有唯一的MAC地址并可配置网卡子接口IP。

2、IP VLAN模式:

- IP VLAN类似于MAC VLAN, 它同样创建新的虚拟网络接口并为每个接口分配唯一的IP地址, 不同之处在于, 每个虚拟接口将共享使用物理接口的MAC地址。

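6. MAC VLAN working modes

Private mode:

- In private mode, containers on the same host cannot communicate with each other, not even when the switch forwards the packets back.

VEPA mode:

- Virtual Ethernet Port Aggregator (VEPA): in this mode, macvlan containers on the same physical NIC cannot receive each other's packets directly, but they can communicate when the switch reflects the traffic back (port hairpinning).

Passthru mode:

- In passthru mode the macvlan can back only one container; creating another container after the first one is running fails with an error.

Bridge mode:

- In bridge mode, macvlan containers that share the same host network can communicate directly. This is the recommended mode.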
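As an illustration of how a mode is selected, the sketch below prints the iproute2 command that would create a macvlan sub-interface. It does not run the command, since that requires root and a real parent NIC. The interface names `eth0` and `macvlan0` are placeholders, and the helper name is made up for this example.

```shell
#!/usr/bin/env bash
# Print (do not execute) the iproute2 command that creates a macvlan
# sub-interface on a parent NIC in the requested mode.
macvlan_cmd() {
  local parent=$1 name=$2 mode=$3
  case "$mode" in
    private|vepa|bridge|passthru) ;;
    *) echo "unsupported macvlan mode: $mode" >&2; return 1 ;;
  esac
  echo "ip link add link $parent name $name type macvlan mode $mode"
}

macvlan_cmd eth0 macvlan0 bridge
```

Running the printed command as root, then assigning an address and bringing the interface up, would give a sub-interface with its own MAC address on the parent's L2 segment.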

Overlay: 基于VXLAN、 NVGRE等封装技术实现overlay叠加网络。

Macvlan: 基于Docker宿主机物理网卡的不同子接口实现多个虚拟vlan, 一个子接口就是一个虚拟vlan, 容器通过宿主机的路由功能和外网保持通信。

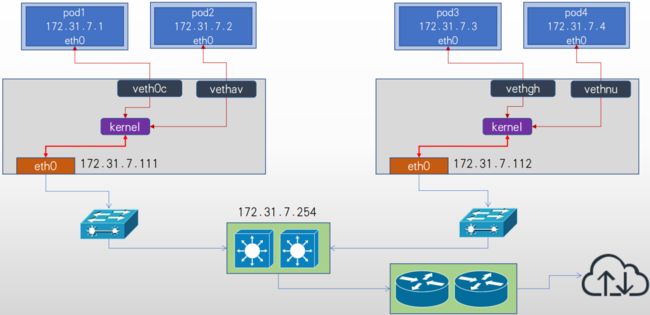

Underlay架构图

网络通信总结:

Overlay:基于VXLAN、 NVGRE等封装技术实现overlay叠加网络。

Macvlan:基于Docker宿主机物理网卡的不同子接口实现多个虚拟vlan,一个子接口就是一个虚拟vlan,容器通过宿主机的路由功能和外网保持通信。

七、kubernetes pod通信总结

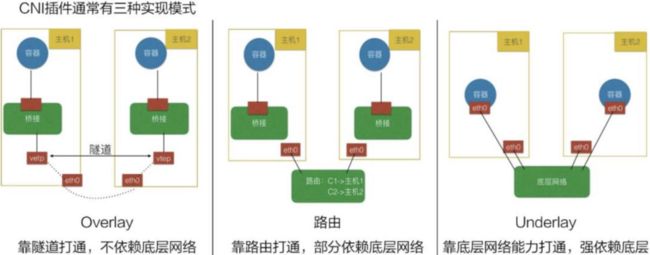

7.1、CNS插件三种模式

7.2、k8s 网络通信模式

Overlay网络:

- Flannel Vxlan、 Calico BGP、 Calico Vxlan

- 将pod 地址信息封装在宿主机地址信息以内, 实现跨主机且可跨node子网的通信报文。

直接路由:

- Flannel Host-gw、 Flannel VXLAN Directrouting、 Calico Directrouting

- 基于主机路由, 实现报文从源主机到目的主机的直接转发, 不需要进行报文的叠加封装, 性能比overlay更好。

Underlay:

- 需要为pod启用单独的虚拟机网络, 而是直接使用宿主机物理网络, pod甚至可以在k8s环境之外的节点直接访问(与node节点的网络被打通), 相当于把pod当桥接模式的虚拟机使用, 比较方便k8s环境以外的访问访问k8s环境中的pod中的服务, 而且由于主机使用的宿主机网络, 其性能最好。

8. Underlay lab example

192.168.247.71 k8s-master-underlay-01 2vcpu 4G 50G

192.168.247.72 k8s-master-underlay-02 2vcpu 4G 50G

192.168.247.73 k8s-master-underlay-03 2vcpu 4G 50G

192.168.247.74 k8s-node-underlay-01 2vcpu 4G 50G

# Set the hostname (run the matching command on each node)

hostnamectl set-hostname k8s-master-underlay-01

hostnamectl set-hostname k8s-master-underlay-02

hostnamectl set-hostname k8s-master-underlay-03

hostnamectl set-hostname k8s-node-underlay-01

# Configure /etc/hosts

cat > /etc/hosts << EOF

127.0.0.1 localhost

127.0.1.1 cyh

# The following lines are desirable for IPv6 capable hosts

::1 ip6-localhost ip6-loopback

fe00::0 ip6-localnet

ff00::0 ip6-mcastprefix

ff02::1 ip6-allnodes

ff02::2 ip6-allrouters

192.168.247.71 k8s-master-underlay-01

192.168.247.72 k8s-master-underlay-02

192.168.247.73 k8s-master-underlay-03

192.168.247.74 k8s-node-underlay-01

EOF

swapoff -a # turn swap off now

sed -i 's@/swap.img@#swap.img@g' /etc/fstab # keep swap from being enabled at boot
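The sed command above depends on the swap file being named /swap.img. A slightly more general sketch comments out any active line whose filesystem type is swap. It is demonstrated on a scratch file here, and the function name is hypothetical; editing the real /etc/fstab needs root and a backup.

```shell
#!/usr/bin/env bash
# Comment out every active swap entry in an fstab-style file (GNU sed).
comment_swap_entries() {
  local fstab=$1
  sed -i -E '/^[^#].*[[:space:]]swap[[:space:]]/ s/^/#/' "$fstab"
}

# Demo on a temporary copy instead of /etc/fstab.
demo=$(mktemp)
printf '%s\n' '/dev/sda1 / ext4 defaults 0 1' '/swap.img none swap sw 0 0' > "$demo"
comment_swap_entries "$demo"
cat "$demo"
rm -f "$demo"
```

After a reboot, `swapon --show` printing nothing indicates that no swap is active.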

apt-get update # refresh the local package index

8.2 Install Docker

# Install the required system tools

apt -y install apt-transport-https ca-certificates curl software-properties-common

# Add the GPG key

curl -fsSL http://mirrors.aliyun.com/docker-ce/linux/ubuntu/gpg | sudo apt-key add -

# Add the package repository

add-apt-repository "deb [arch=amd64] http://mirrors.aliyun.com/docker-ce/linux/ubuntu $(lsb_release -cs) stable"

# Refresh the package index

apt-get -y update

# List the Docker versions available for installation

apt-cache madison docker-ce docker-ce-cli

apt install -y docker-ce docker-ce-cli

systemctl start docker && systemctl enable docker

Tune the daemon: configure a registry mirror and use the systemd cgroup driver:

mkdir -p /etc/docker
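A daemon configuration of the kind described here (a registry mirror plus the systemd cgroup driver) might look like the following sketch. The mirror URL is a placeholder, and the function name and file layout are assumptions rather than the author's exact settings.

```shell
#!/usr/bin/env bash
# Write a minimal daemon.json: systemd cgroup driver plus one registry mirror.
# The mirror URL passed in is a placeholder, not a real endpoint.
write_daemon_json() {
  local target=$1 mirror=$2
  cat > "$target" <<EOF
{
  "exec-opts": ["native.cgroupdriver=systemd"],
  "registry-mirrors": ["$mirror"]
}
EOF
}

# Demo against a scratch file; the real target would be /etc/docker/daemon.json.
demo=$(mktemp)
write_daemon_json "$demo" "https://mirror.example.com"
cat "$demo"
rm -f "$demo"
```

After changing the real file, Docker has to be restarted (`systemctl daemon-reload && systemctl restart docker`). The cgroup driver matters because kubeadm configures the kubelet for systemd by default, and the two must agree.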

cat > /etc/docker/daemon.json <

8.3 Install cri-dockerd

[root@k8s-master-underlay-01 ~]# wget https://github.com/Mirantis/cri-dockerd/releases/download/v0.2.6/cri-dockerd-0.2.6.amd64.tgz

[root@k8s-master-underlay-01 ~]# tar xvf cri-dockerd-0.2.6.amd64.tgz

[root@k8s-master-underlay-01 ~]# cp cri-dockerd/cri-dockerd /usr/local/bin/

[root@k8s-master-underlay-01 ~]# scp cri-dockerd/cri-dockerd [email protected]:/usr/local/bin/

[root@k8s-master-underlay-01 ~]# scp cri-dockerd/cri-dockerd [email protected]:/usr/local/bin/

[root@k8s-master-underlay-01 ~]# scp cri-dockerd/cri-dockerd [email protected]:/usr/local/bin/
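The three copy steps can also be written as a loop over the remaining nodes. The sketch below only prints the commands. The addresses come from the host list of this lab, while the root login and the helper name are assumptions.

```shell
#!/usr/bin/env bash
# The other three nodes from the host list of this lab.
nodes=(192.168.247.72 192.168.247.73 192.168.247.74)

# Print one scp command per node; the root login is an assumption.
print_copy_cmds() {
  local binary=$1; shift
  local node
  for node in "$@"; do
    echo "scp $binary root@$node:/usr/local/bin/"
  done
}

print_copy_cmds cri-dockerd/cri-dockerd "${nodes[@]}"
```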

# Configure the cri-docker.service and cri-docker.socket units on every node
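For orientation, here is a sketch of what the two unit files could contain. It is modelled on the units shipped in the upstream cri-dockerd repository as I recall them and is not verified against v0.2.6. The binary path follows the cp step above, and the pause image is taken from the v1.25.3 image list on the Alibaba Cloud mirror. All of these are assumptions, not the author's exact files.

```shell
#!/usr/bin/env bash
# Write sketch unit files for cri-dockerd into the given directory.
# Assumptions: binary in /usr/local/bin, pause:3.8 pulled from the mirror.
write_cri_docker_units() {
  local dir=$1
  cat > "$dir/cri-docker.service" <<'EOF'
[Unit]
Description=CRI Interface for Docker Application Container Engine
After=network-online.target docker.service
Wants=network-online.target
Requires=cri-docker.socket

[Service]
Type=notify
ExecStart=/usr/local/bin/cri-dockerd --container-runtime-endpoint fd:// --network-plugin=cni --pod-infra-container-image=registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.8
ExecReload=/bin/kill -s HUP $MAINPID
Restart=always
RestartSec=2

[Install]
WantedBy=multi-user.target
EOF
  cat > "$dir/cri-docker.socket" <<'EOF'
[Unit]
Description=CRI Docker Socket for the API
PartOf=cri-docker.service

[Socket]
ListenStream=%t/cri-dockerd.sock
SocketMode=0660
SocketUser=root
SocketGroup=docker

[Install]
WantedBy=sockets.target
EOF
}

# Demo in a scratch directory rather than the systemd unit directories.
demo=$(mktemp -d)
write_cri_docker_units "$demo"
ls "$demo"
rm -rf "$demo"
```

With `%t` resolving to /run, the socket ends up at /run/cri-dockerd.sock, which is the same file as the /var/run/cri-dockerd.sock path passed to kubeadm later, since /var/run is a symlink to /run on Ubuntu. Once the real units are in place, `systemctl daemon-reload` followed by `systemctl enable --now cri-docker.socket cri-docker.service` would start them.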

cat > /lib/systemd/system/cri-docker.service < /etc/systemd/system/cri-docker.socket <

8.4 Prepare for cluster initialization

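Install kubeadm, kubelet, and kubectl, version ≥ 1.24.0.

Install kubelet, kubeadm, and kubectl on every node, using the Alibaba Cloud Kubernetes package mirror as the apt source.

Either the Alibaba Cloud or the Tsinghua University Kubernetes mirror can be used.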

apt-get update && apt-get install -y apt-transport-https

curl https://mirrors.aliyun.com/kubernetes/apt/doc/apt-key.gpg | apt-key add -

cat <<EOF >/etc/apt/sources.list.d/kubernetes.list

deb https://mirrors.aliyun.com/kubernetes/apt/ kubernetes-xenial main

EOF

# Install kubeadm:

apt-get update

apt-cache madison kubeadm

apt-get install -y kubelet=1.25.3-00 kubeadm=1.25.3-00 kubectl=1.25.3-00

# Verify the version

# kubeadm version

kubeadm version: &version.Info{Major:"1", Minor:"25", GitVersion:"v1.25.3", GitCommit:"434bfd82814af038ad94d62ebe59b133fcb50506", GitTreeState:"clean", BuildDate:"2022-10-12T10:55:36Z", GoVersion:"go1.19.2", Compiler:"gc", Platform:"linux/amd64"}

Prepare the images

[root@k8s-master-underlay-01 ~]# kubeadm config images list --kubernetes-version v1.25.3

registry.k8s.io/kube-apiserver:v1.25.3

registry.k8s.io/kube-controller-manager:v1.25.3

registry.k8s.io/kube-scheduler:v1.25.3

registry.k8s.io/kube-proxy:v1.25.3

registry.k8s.io/pause:3.8

registry.k8s.io/etcd:3.5.4-0

registry.k8s.io/coredns/coredns:v1.9.3

[root@k8s-master-underlay-01 ~]#
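The upstream names in this list can be mapped to mirror names mechanically. The sketch below assumes that the mirror keeps a flat namespace, so that `coredns/coredns` becomes plain `coredns`; that matches how kubeadm names the CoreDNS image for a custom `--image-repository` as far as I know, but treat it as an assumption. The function name is made up for this example.

```shell
#!/usr/bin/env bash
mirror=registry.cn-hangzhou.aliyuncs.com/google_containers

# Map an upstream image reference to its name on the mirror.
# Assumption: flat namespace on the mirror (coredns/coredns -> coredns).
to_mirror() {
  local image=${1#registry.k8s.io/}
  image=${image#coredns/}
  echo "$mirror/$image"
}

to_mirror registry.k8s.io/kube-apiserver:v1.25.3
to_mirror registry.k8s.io/coredns/coredns:v1.9.3
```

Piping the output of `kubeadm config images list` through such a function and into `docker pull` would avoid maintaining the image list by hand.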

[root@k8s-master-underlay-01 ~]# vi images-download.sh

[root@k8s-master-underlay-01 ~]# cat images-download.sh

#!/bin/bash

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-apiserver:v1.25.3

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-controller-manager:v1.25.3

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-scheduler:v1.25.3

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-proxy:v1.25.3

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.8

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/etcd:3.5.4-0

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/coredns:v1.9.3

[root@k8s-master-underlay-01 ~]# bash images-download.sh

8.5 Initialize the cluster

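Scenario 1: pods may use either overlay or underlay, and Services use overlay. (If Services were to use underlay, the Service CIDR would have to be a subnet of the host network.) In the command below, --pod-network-cidr is used later for overlay pods and --service-cidr for overlay Services.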

kubeadm init --apiserver-advertise-address=192.168.247.71 \

--apiserver-bind-port=6443 \

--kubernetes-version=v1.25.3 \

--pod-network-cidr=10.200.0.0/16 \

--service-cidr=10.100.0.0/16 \

--service-dns-domain=cluster.local \

--image-repository=registry.cn-hangzhou.aliyuncs.com/google_containers \

--cri-socket unix:///var/run/cri-dockerd.sock

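Scenario 2: pods may use either overlay or underlay, and Services are initialized for underlay. --pod-network-cidr=10.200.0.0/16 is used later for the overlay network; the underlay CIDR is specified separately later, and overlay and underlay coexist. --service-cidr=192.168.200.0/24 is used later for underlay Services, so that pods can be reached directly through a Service.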

kubeadm init --apiserver-advertise-address=192.168.247.71 \

--apiserver-bind-port=6443 \

--kubernetes-version=v1.25.3 \

--pod-network-cidr=10.200.0.0/16 \

--service-cidr=192.168.200.0/24 \

--service-dns-domain=cluster.local \

--image-repository=registry.cn-hangzhou.aliyuncs.com/google_containers \

--cri-socket unix:///var/run/cri-dockerd.sock
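Both scenarios depend on the pod, Service, and node networks not colliding. A small pure-bash check can confirm that before running kubeadm init. In the sketch below the node network 192.168.247.0/24 is an assumption (the prefix length is not stated in this article), and the helper names are hypothetical.

```shell
#!/usr/bin/env bash
# Convert a dotted IPv4 address to an integer.
ip_to_int() {
  local IFS=.
  local -a o=($1)
  echo $(( (o[0] << 24) | (o[1] << 16) | (o[2] << 8) | o[3] ))
}

# Print "first last" (as integers) for an IPv4 CIDR such as 10.200.0.0/16.
cidr_range() {
  local base=${1%/*} bits=${1#*/}
  local ip mask
  ip=$(ip_to_int "$base")
  mask=$(( (0xFFFFFFFF << (32 - bits)) & 0xFFFFFFFF ))
  echo "$(( ip & mask )) $(( (ip & mask) | (~mask & 0xFFFFFFFF) ))"
}

# Exit status 0 when the two CIDRs share at least one address.
cidrs_overlap() {
  local a_first a_last b_first b_last
  read -r a_first a_last <<< "$(cidr_range "$1")"
  read -r b_first b_last <<< "$(cidr_range "$2")"
  (( a_first <= b_last && b_first <= a_last ))
}

# Pod CIDR vs. Service CIDR (scenario 1), then vs. the assumed node network.
for pair in "10.200.0.0/16 10.100.0.0/16" "10.200.0.0/16 192.168.247.0/24" "192.168.200.0/24 192.168.247.0/24"; do
  set -- $pair
  if cidrs_overlap "$1" "$2"; then
    echo "$1 overlaps $2"
  else
    echo "$1 and $2 are disjoint"
  fi
done
```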

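Note: to reach a Service later, a static route has to be configured on the network devices, because a Service is only a set of iptables or IPVS rules and does not take part in ARP broadcasts: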

-A KUBE-SERVICES -d 172.31.5.148/32 -p tcp -m comment --comment "myserver/myserver-tomcat-app1-service-underlay:http cluster IP" -m tcp --dport 80 -j KUBE-SVC-DXPW2IL54XTPIKP5

-A KUBE-SVC-DXPW2IL54XTPIKP5 ! -s 10.200.0.0/16 -d 172.31.5.148/32 -p tcp -m comment --comment "myserver/myserver-tomcat-app1-service-underlay:http cluster IP" -m tcp --dport 80 -j KUBE-MARK-MASQ

Scenario 1: initialization

[root@k8s-master-underlay-01 ~]# kubeadm init --control-plane-endpoint "192.168.247.71" \

> --upload-certs \

> --apiserver-advertise-address=192.168.247.71 \

> --apiserver-bind-port=6443 \

> --kubernetes-version=v1.25.3 \

> --pod-network-cidr=10.200.0.0/16 \

> --service-cidr=10.100.0.0/16 \

> --service-dns-domain=cluster.local \

> --image-repository=registry.cn-hangzhou.aliyuncs.com/google_containers \

> --cri-socket unix:///var/run/cri-dockerd.sock

[init] Using Kubernetes version: v1.25.3

[preflight] Running pre-flight checks

[preflight] Pulling images required for setting up a Kubernetes cluster

[preflight] This might take a minute or two, depending on the speed of your internet connection

[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'

[certs] Using certificateDir folder "/etc/kubernetes/pki"

[certs] Generating "ca" certificate and key

[certs] Generating "apiserver" certificate and key

[certs] apiserver serving cert is signed for DNS names [k8s-master-underlay-01 kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local] and IPs [10.100.0.1 192.168.247.71]

[certs] Generating "apiserver-kubelet-client" certificate and key

[certs] Generating "front-proxy-ca" certificate and key

[certs] Generating "front-proxy-client" certificate and key

[certs] Generating "etcd/ca" certificate and key

[certs] Generating "etcd/server" certificate and key

[certs] etcd/server serving cert is signed for DNS names [k8s-master-underlay-01 localhost] and IPs [192.168.247.71 127.0.0.1 ::1]

[certs] Generating "etcd/peer" certificate and key

[certs] etcd/peer serving cert is signed for DNS names [k8s-master-underlay-01 localhost] and IPs [192.168.247.71 127.0.0.1 ::1]

[certs] Generating "etcd/healthcheck-client" certificate and key

[certs] Generating "apiserver-etcd-client" certificate and key

[certs] Generating "sa" key and public key

[kubeconfig] Using kubeconfig folder "/etc/kubernetes"

[kubeconfig] Writing "admin.conf" kubeconfig file

[kubeconfig] Writing "kubelet.conf" kubeconfig file

[kubeconfig] Writing "controller-manager.conf" kubeconfig file

[kubeconfig] Writing "scheduler.conf" kubeconfig file

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Starting the kubelet

[control-plane] Using manifest folder "/etc/kubernetes/manifests"

[control-plane] Creating static Pod manifest for "kube-apiserver"

[control-plane] Creating static Pod manifest for "kube-controller-manager"

[control-plane] Creating static Pod manifest for "kube-scheduler"

[etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"

[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s

[apiclient] All control plane components are healthy after 19.007146 seconds

[upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace

[kubelet] Creating a ConfigMap "kubelet-config" in namespace kube-system with the configuration for the kubelets in the cluster

[upload-certs] Storing the certificates in Secret "kubeadm-certs" in the "kube-system" Namespace

[upload-certs] Using certificate key:

f8450272a7f24480172a246c55d0fa7f542e19f5769b6e91e2cd26421183896b

[mark-control-plane] Marking the node k8s-master-underlay-01 as control-plane by adding the labels: [node-role.kubernetes.io/control-plane node.kubernetes.io/exclude-from-external-load-balancers]

[mark-control-plane] Marking the node k8s-master-underlay-01 as control-plane by adding the taints [node-role.kubernetes.io/control-plane:NoSchedule]

[bootstrap-token] Using token: 7vag71.8v7mqsajsya5n00t

[bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles

[bootstrap-token] Configured RBAC rules to allow Node Bootstrap tokens to get nodes

[bootstrap-token] Configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials

[bootstrap-token] Configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token

[bootstrap-token] Configured RBAC rules to allow certificate rotation for all node client certificates in the cluster

[bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace

[kubelet-finalize] Updating "/etc/kubernetes/kubelet.conf" to point to a rotatable kubelet client certificate and key

[addons] Applied essential addon: CoreDNS

[addons] Applied essential addon: kube-proxy

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Alternatively, if you are the root user, you can run:

export KUBECONFIG=/etc/kubernetes/admin.conf

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

You can now join any number of the control-plane node running the following command on each as root:

kubeadm join 192.168.247.71:6443 --token 7vag71.8v7mqsajsya5n00t \

--discovery-token-ca-cert-hash sha256:471386ff8802bd6aff33e41518f1644153ea475ecfa4eb9f94bda8be66ebb388 \

--control-plane --certificate-key f8450272a7f24480172a246c55d0fa7f542e19f5769b6e91e2cd26421183896b

Please note that the certificate-key gives access to cluster sensitive data, keep it secret!

As a safeguard, uploaded-certs will be deleted in two hours; If necessary, you can use

"kubeadm init phase upload-certs --upload-certs" to reload certs afterward.

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.247.71:6443 --token 7vag71.8v7mqsajsya5n00t \

--discovery-token-ca-cert-hash sha256:471386ff8802bd6aff33e41518f1644153ea475ecfa4eb9f94bda8be66ebb388

[root@k8s-master-underlay-01 ~]#

8.6 Add cluster nodes

8.6.1 Add master nodes

[root@k8s-master-underlay-02 ~]# kubeadm join 192.168.247.71:6443 --token 7vag71.8v7mqsajsya5n00t \

> --discovery-token-ca-cert-hash sha256:471386ff8802bd6aff33e41518f1644153ea475ecfa4eb9f94bda8be66ebb388 \

> --control-plane --certificate-key f8450272a7f24480172a246c55d0fa7f542e19f5769b6e91e2cd26421183896b \

> --cri-socket unix:///var/run/cri-dockerd.sock

[preflight] Running pre-flight checks

[preflight] Reading configuration from the cluster...

[preflight] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -o yaml'

[preflight] Running pre-flight checks before initializing the new control plane instance

[preflight] Pulling images required for setting up a Kubernetes cluster

[preflight] This might take a minute or two, depending on the speed of your internet connection

[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'

[download-certs] Downloading the certificates in Secret "kubeadm-certs" in the "kube-system" Namespace

[certs] Using certificateDir folder "/etc/kubernetes/pki"

[certs] Generating "etcd/healthcheck-client" certificate and key

[certs] Generating "apiserver-etcd-client" certificate and key

[certs] Generating "etcd/server" certificate and key

[certs] etcd/server serving cert is signed for DNS names [k8s-master-underlay-02 localhost] and IPs [192.168.247.72 127.0.0.1 ::1]

[certs] Generating "etcd/peer" certificate and key

[certs] etcd/peer serving cert is signed for DNS names [k8s-master-underlay-02 localhost] and IPs [192.168.247.72 127.0.0.1 ::1]

[certs] Generating "apiserver" certificate and key

[certs] apiserver serving cert is signed for DNS names [k8s-master-underlay-02 kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local] and IPs [10.100.0.1 192.168.247.72 192.168.247.71]

[certs] Generating "apiserver-kubelet-client" certificate and key

[certs] Generating "front-proxy-client" certificate and key

[certs] Valid certificates and keys now exist in "/etc/kubernetes/pki"

[certs] Using the existing "sa" key

[kubeconfig] Generating kubeconfig files

[kubeconfig] Using kubeconfig folder "/etc/kubernetes"

[kubeconfig] Writing "admin.conf" kubeconfig file

[kubeconfig] Writing "controller-manager.conf" kubeconfig file

[kubeconfig] Writing "scheduler.conf" kubeconfig file

[control-plane] Using manifest folder "/etc/kubernetes/manifests"

[control-plane] Creating static Pod manifest for "kube-apiserver"

[control-plane] Creating static Pod manifest for "kube-controller-manager"

[control-plane] Creating static Pod manifest for "kube-scheduler"

[check-etcd] Checking that the etcd cluster is healthy

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Starting the kubelet

[kubelet-start] Waiting for the kubelet to perform the TLS Bootstrap...

[etcd] Announced new etcd member joining to the existing etcd cluster

[etcd] Creating static Pod manifest for "etcd"

[etcd] Waiting for the new etcd member to join the cluster. This can take up to 40s

The 'update-status' phase is deprecated and will be removed in a future release. Currently it performs no operation

[mark-control-plane] Marking the node k8s-master-underlay-02 as control-plane by adding the labels: [node-role.kubernetes.io/control-plane node.kubernetes.io/exclude-from-external-load-balancers]

[mark-control-plane] Marking the node k8s-master-underlay-02 as control-plane by adding the taints [node-role.kubernetes.io/control-plane:NoSchedule]

This node has joined the cluster and a new control plane instance was created:

* Certificate signing request was sent to apiserver and approval was received.

* The Kubelet was informed of the new secure connection details.

* Control plane label and taint were applied to the new node.

* The Kubernetes control plane instances scaled up.

* A new etcd member was added to the local/stacked etcd cluster.

To start administering your cluster from this node, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Run 'kubectl get nodes' to see this node join the cluster.

[root@k8s-master-underlay-02 ~]# mkdir -p $HOME/.kube

[root@k8s-master-underlay-02 ~]# sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

[root@k8s-master-underlay-02 ~]# sudo chown $(id -u):$(id -g) $HOME/.kube/config

[root@k8s-master-underlay-02 ~]#
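The join command printed by kubeadm init does not carry the --cri-socket flag, which is why it is appended by hand in the transcript above. A small helper can add it consistently. This is a sketch with a made-up function name; the token and hash in the demo are placeholders, not real credentials.

```shell
#!/usr/bin/env bash
# Append the CRI socket flag to a join command unless it is already present.
with_cri_socket() {
  local join_cmd=$1 socket=${2:-unix:///var/run/cri-dockerd.sock}
  case "$join_cmd" in
    *--cri-socket*) echo "$join_cmd" ;;
    *) echo "$join_cmd --cri-socket $socket" ;;
  esac
}

# Placeholder token and hash, for illustration only.
with_cri_socket "kubeadm join 192.168.247.71:6443 --token <token> --discovery-token-ca-cert-hash sha256:<hash>"
```

If the bootstrap token has expired by the time a node is added, `kubeadm token create --print-join-command` on a control-plane node prints a fresh join command that can be passed through the same helper.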

[root@k8s-master-underlay-03 ~]# kubeadm join 192.168.247.71:6443 --token 7vag71.8v7mqsajsya5n00t \

> --discovery-token-ca-cert-hash sha256:471386ff8802bd6aff33e41518f1644153ea475ecfa4eb9f94bda8be66ebb388 \

> --control-plane --certificate-key f8450272a7f24480172a246c55d0fa7f542e19f5769b6e91e2cd26421183896b \

> --cri-socket unix:///var/run/cri-dockerd.sock

[preflight] Running pre-flight checks

[preflight] Reading configuration from the cluster...

[preflight] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -o yaml'

[preflight] Running pre-flight checks before initializing the new control plane instance

[preflight] Pulling images required for setting up a Kubernetes cluster

[preflight] This might take a minute or two, depending on the speed of your internet connection

[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'

[download-certs] Downloading the certificates in Secret "kubeadm-certs" in the "kube-system" Namespace

[certs] Using certificateDir folder "/etc/kubernetes/pki"

[certs] Generating "apiserver-kubelet-client" certificate and key

[certs] Generating "apiserver" certificate and key

[certs] apiserver serving cert is signed for DNS names [k8s-master-underlay-03 kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local] and IPs [10.100.0.1 192.168.247.73 192.168.247.71]

[certs] Generating "front-proxy-client" certificate and key

[certs] Generating "etcd/peer" certificate and key

[certs] etcd/peer serving cert is signed for DNS names [k8s-master-underlay-03 localhost] and IPs [192.168.247.73 127.0.0.1 ::1]

[certs] Generating "apiserver-etcd-client" certificate and key

[certs] Generating "etcd/server" certificate and key

[certs] etcd/server serving cert is signed for DNS names [k8s-master-underlay-03 localhost] and IPs [192.168.247.73 127.0.0.1 ::1]

[certs] Generating "etcd/healthcheck-client" certificate and key

[certs] Valid certificates and keys now exist in "/etc/kubernetes/pki"

[certs] Using the existing "sa" key

[kubeconfig] Generating kubeconfig files

[kubeconfig] Using kubeconfig folder "/etc/kubernetes"

[kubeconfig] Writing "admin.conf" kubeconfig file

[kubeconfig] Writing "controller-manager.conf" kubeconfig file

[kubeconfig] Writing "scheduler.conf" kubeconfig file

[control-plane] Using manifest folder "/etc/kubernetes/manifests"

[control-plane] Creating static Pod manifest for "kube-apiserver"

[control-plane] Creating static Pod manifest for "kube-controller-manager"

[control-plane] Creating static Pod manifest for "kube-scheduler"

[check-etcd] Checking that the etcd cluster is healthy

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Starting the kubelet

[kubelet-start] Waiting for the kubelet to perform the TLS Bootstrap...

[etcd] Announced new etcd member joining to the existing etcd cluster

[etcd] Creating static Pod manifest for "etcd"

[etcd] Waiting for the new etcd member to join the cluster. This can take up to 40s

The 'update-status' phase is deprecated and will be removed in a future release. Currently it performs no operation

[mark-control-plane] Marking the node k8s-master-underlay-03 as control-plane by adding the labels: [node-role.kubernetes.io/control-plane node.kubernetes.io/exclude-from-external-load-balancers]

[mark-control-plane] Marking the node k8s-master-underlay-03 as control-plane by adding the taints [node-role.kubernetes.io/control-plane:NoSchedule]

This node has joined the cluster and a new control plane instance was created:

* Certificate signing request was sent to apiserver and approval was received.

* The Kubelet was informed of the new secure connection details.

* Control plane label and taint were applied to the new node.

* The Kubernetes control plane instances scaled up.

* A new etcd member was added to the local/stacked etcd cluster.

To start administering your cluster from this node, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Run 'kubectl get nodes' to see this node join the cluster.

[root@k8s-master-underlay-03 ~]# mkdir -p $HOME/.kube

[root@k8s-master-underlay-03 ~]# sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

[root@k8s-master-underlay-03 ~]# sudo chown $(id -u):$(id -g) $HOME/.kube/config

[root@k8s-master-underlay-03 ~]#

8.6.2 Add worker nodes

[root@k8s-node-underlay-01 ~]# kubeadm join 192.168.247.71:6443 --token 7vag71.8v7mqsajsya5n00t \

> --discovery-token-ca-cert-hash sha256:471386ff8802bd6aff33e41518f1644153ea475ecfa4eb9f94bda8be66ebb388 \

> --cri-socket unix:///var/run/cri-dockerd.sock

[preflight] Running pre-flight checks

[preflight] Reading configuration from the cluster...

[preflight] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -o yaml'

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Starting the kubelet

[kubelet-start] Waiting for the kubelet to perform the TLS Bootstrap...

This node has joined the cluster:

* Certificate signing request was sent to apiserver and a response was received.

* The Kubelet was informed of the new secure connection details.

Run 'kubectl get nodes' on the control-plane to see this node join the cluster.

[root@k8s-node-underlay-01 ~]#

9. Install the underlay network components

9.1 Prepare Helm

Official sites:

https://helm.sh/docs/intro/install/

https://github.com/helm/helm/releases

[root@k8s-master-underlay-01 ~]# wget https://get.helm.sh/helm-v3.9.0-linux-amd64.tar.gz

[root@k8s-master-underlay-01 ~]# tar xvf helm-v3.9.0-linux-amd64.tar.gz

[root@k8s-master-underlay-01 ~]# mv linux-amd64/helm /usr/local/bin/

[root@k8s-master-underlay-01 ~]# helm version

version.BuildInfo{Version:"v3.9.0", GitCommit:"7ceeda6c585217a19a1131663d8cd1f7d641b2a7", GitTreeState:"clean", GoVersion:"go1.17.5"}

[root@k8s-master-underlay-01 ~]#

9.2 Deploy hybridnet

[root@k8s-master-underlay-01 ~]# helm repo add hybridnet https://alibaba.github.io/hybridnet/

"hybridnet" has been added to your repositories

[root@k8s-master-underlay-01 ~]# helm repo update

Hang tight while we grab the latest from your chart repositories...

...Successfully got an update from the "hybridnet" chart repository

Update Complete. ⎈Happy Helming!⎈

[root@k8s-master-underlay-01 ~]# helm install hybridnet hybridnet/hybridnet -n kube-system --set init.cidr=10.200.0.0/16 # sets the overlay pod network; without --set init.cidr=10.200.0.0/16 the default 100.64.0.0/16 is used

NAME: hybridnet

LAST DEPLOYED: Fri Oct 28 01:01:18 2022

NAMESPACE: kube-system

STATUS: deployed

REVISION: 1

TEST SUITE: None

[root@k8s-master-underlay-01 ~]#

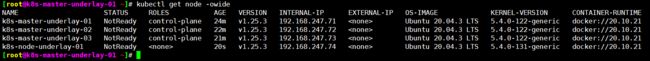

[root@k8s-master-underlay-01 ~]# kubectl get node -owide

NAME STATUS ROLES AGE VERSION INTERNAL-IP EXTERNAL-IP OS-IMAGE KERNEL-VERSION CONTAINER-RUNTIME

k8s-master-underlay-01 Ready control-plane 46m v1.25.3 192.168.247.71 <none> Ubuntu 20.04.3 LTS 5.4.0-122-generic docker://20.10.21

k8s-master-underlay-02 Ready control-plane 44m v1.25.3 192.168.247.72 <none> Ubuntu 20.04.3 LTS 5.4.0-122-generic docker://20.10.21

k8s-master-underlay-03 Ready control-plane 43m v1.25.3 192.168.247.73 <none> Ubuntu 20.04.3 LTS 5.4.0-122-generic docker://20.10.21

k8s-node-underlay-01 Ready <none> 22m v1.25.3 192.168.247.74 <none> Ubuntu 20.04.3 LTS 5.4.0-131-generic docker://20.10.21

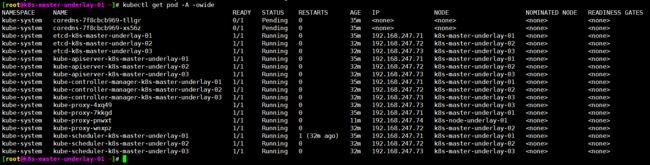

[root@k8s-master-underlay-01 ~]# kubectl get pod -A

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system coredns-7f8cbcb969-tllgr 0/1 ContainerCreating 0 46m

kube-system coredns-7f8cbcb969-xs56z 0/1 ContainerCreating 0 46m

kube-system etcd-k8s-master-underlay-01 1/1 Running 0 46m

kube-system etcd-k8s-master-underlay-02 1/1 Running 0 44m

kube-system etcd-k8s-master-underlay-03 1/1 Running 0 43m

kube-system hybridnet-daemon-956nw 2/2 Running 0 3m41s

kube-system hybridnet-daemon-f86fj 2/2 Running 0 3m43s

kube-system hybridnet-daemon-mvfbf 2/2 Running 0 3m48s

kube-system hybridnet-daemon-p9jnd 2/2 Running 0 3m43s

kube-system hybridnet-manager-5fcd869c59-bwwdc 0/1 Pending 0 3m43s

kube-system hybridnet-manager-5fcd869c59-crdf8 0/1 Pending 0 3m41s

kube-system hybridnet-manager-5fcd869c59-vpj7s 0/1 Pending 0 3m41s

kube-system hybridnet-webhook-5dc9fc7d9d-8r5zp 0/1 Pending 0 3m48s

kube-system hybridnet-webhook-5dc9fc7d9d-kwxz4 0/1 Pending 0 3m48s

kube-system hybridnet-webhook-5dc9fc7d9d-rkvz4 0/1 Pending 0 3m48s

kube-system kube-apiserver-k8s-master-underlay-01 1/1 Running 0 46m

kube-system kube-apiserver-k8s-master-underlay-02 1/1 Running 0 43m

kube-system kube-apiserver-k8s-master-underlay-03 1/1 Running 0 43m

kube-system kube-controller-manager-k8s-master-underlay-01 1/1 Running 0 46m

kube-system kube-controller-manager-k8s-master-underlay-02 1/1 Running 0 44m

kube-system kube-controller-manager-k8s-master-underlay-03 1/1 Running 0 43m

kube-system kube-proxy-4xq49 1/1 Running 0 43m

kube-system kube-proxy-7kkgd 1/1 Running 0 46m

kube-system kube-proxy-pnwxt 1/1 Running 0 22m

kube-system kube-proxy-wnxpz 1/1 Running 0 44m

kube-system kube-scheduler-k8s-master-underlay-01 1/1 Running 1 (44m ago) 46m

kube-system kube-scheduler-k8s-master-underlay-02 1/1 Running 0 43m

kube-system kube-scheduler-k8s-master-underlay-03 1/1 Running 0 43m

[root@k8s-master-underlay-01 ~]#

At this point the hybridnet-manager and hybridnet-webhook pods are Pending. kubectl describe shows the cause: no node in the cluster carries the master label.

[root@k8s-master-underlay-01 ~]# kubectl get pod -A

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system coredns-7f8cbcb969-tllgr 0/1 ContainerCreating 0 45m

kube-system coredns-7f8cbcb969-xs56z 0/1 ContainerCreating 0 45m

kube-system etcd-k8s-master-underlay-01 1/1 Running 0 45m

kube-system etcd-k8s-master-underlay-02 1/1 Running 0 43m

kube-system etcd-k8s-master-underlay-03 1/1 Running 0 42m

kube-system hybridnet-daemon-956nw 2/2 Running 0 2m42s

kube-system hybridnet-daemon-f86fj 2/2 Running 0 2m44s

kube-system hybridnet-daemon-mvfbf 2/2 Running 0 2m49s

kube-system hybridnet-daemon-p9jnd 2/2 Running 0 2m44s

kube-system hybridnet-manager-5fcd869c59-bwwdc 0/1 Pending 0 2m44s

kube-system hybridnet-manager-5fcd869c59-crdf8 0/1 Pending 0 2m42s

kube-system hybridnet-manager-5fcd869c59-vpj7s 0/1 Pending 0 2m42s

kube-system hybridnet-webhook-5dc9fc7d9d-8r5zp 0/1 Pending 0 2m49s

kube-system hybridnet-webhook-5dc9fc7d9d-kwxz4 0/1 Pending 0 2m49s

kube-system hybridnet-webhook-5dc9fc7d9d-rkvz4 0/1 Pending 0 2m49s

kube-system kube-apiserver-k8s-master-underlay-01 1/1 Running 0 45m

kube-system kube-apiserver-k8s-master-underlay-02 1/1 Running 0 42m

kube-system kube-apiserver-k8s-master-underlay-03 1/1 Running 0 42m

kube-system kube-controller-manager-k8s-master-underlay-01 1/1 Running 0 45m

kube-system kube-controller-manager-k8s-master-underlay-02 1/1 Running 0 43m

kube-system kube-controller-manager-k8s-master-underlay-03 1/1 Running 0 42m

kube-system kube-proxy-4xq49 1/1 Running 0 42m

kube-system kube-proxy-7kkgd 1/1 Running 0 45m

kube-system kube-proxy-pnwxt 1/1 Running 0 21m

kube-system kube-proxy-wnxpz 1/1 Running 0 43m

kube-system kube-scheduler-k8s-master-underlay-01 1/1 Running 1 (43m ago) 45m

kube-system kube-scheduler-k8s-master-underlay-02 1/1 Running 0 42m

kube-system kube-scheduler-k8s-master-underlay-03 1/1 Running 0 42m

[root@k8s-master-underlay-01 ~]#

[root@k8s-master-underlay-01 ~]# kubectl describe pod -n kube-system hybridnet-manager-5fcd869c59-bwwdc

Name: hybridnet-manager-5fcd869c59-bwwdc

Namespace: kube-system

Priority: 2000000000

Priority Class Name: system-cluster-critical

Service Account: hybridnet

Node:

Labels: app=hybridnet-manager

pod-template-hash=5fcd869c59

Annotations: <none>

Status: Pending

IP:

IPs: <none>

Controlled By: ReplicaSet/hybridnet-manager-5fcd869c59

Containers:

hybridnet-manager:

Image: docker.io/hybridnetdev/hybridnet:v0.7.4

Port: 9899/TCP

Host Port: 9899/TCP

Command:

/hybridnet/hybridnet-manager

--default-ip-retain=true

--feature-gates=MultiCluster=false,VMIPRetain=false

--controller-concurrency=Pod=1,IPAM=1,IPInstance=1

--kube-client-qps=300

--kube-client-burst=600

--metrics-port=9899

Environment:

DEFAULT_NETWORK_TYPE: Overlay

DEFAULT_IP_FAMILY: IPv4

NAMESPACE: kube-system (v1:metadata.namespace)

Mounts:

/var/run/secrets/kubernetes.io/serviceaccount from kube-api-access-nr5vh (ro)

Conditions:

Type Status

PodScheduled False

Volumes:

kube-api-access-nr5vh:

Type: Projected (a volume that contains injected data from multiple sources)

TokenExpirationSeconds: 3607

ConfigMapName: kube-root-ca.crt

ConfigMapOptional: <nil>

DownwardAPI: true

QoS Class: BestEffort

Node-Selectors: node-role.kubernetes.io/master=

Tolerations: :NoSchedule op=Exists

node.kubernetes.io/not-ready:NoExecute op=Exists for 300s

node.kubernetes.io/unreachable:NoExecute op=Exists for 300s

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Warning FailedScheduling 6m3s default-scheduler 0/4 nodes are available: 4 node(s) didn't match Pod's node affinity/selector. preemption: 0/4 nodes are available: 4 Preemption is not helpful for scheduling.

Warning FailedScheduling 56s default-scheduler 0/4 nodes are available: 4 node(s) didn't match Pod's node affinity/selector. preemption: 0/4 nodes are available: 4 Preemption is not helpful for scheduling.

[root@k8s-master-underlay-01 ~]#

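Solution: the pod's node selector requires the label node-role.kubernetes.io/master=, but the control-plane nodes of this v1.25.3 cluster only carry node-role.kubernetes.io/control-plane=, so no node matches. Check the node labels, then add the missing label to the three control-plane nodes: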

[root@k8s-master-underlay-01 ~]# kubectl get node --show-labels

NAME STATUS ROLES AGE VERSION LABELS

k8s-master-underlay-01 Ready control-plane 50m v1.25.3 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,kubernetes.io/arch=amd64,kubernetes.io/hostname=k8s-master-underlay-01,kubernetes.io/os=linux,node-role.kubernetes.io/control-plane=,node.kubernetes.io/exclude-from-external-load-balancers=

k8s-master-underlay-02 Ready control-plane 47m v1.25.3 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,kubernetes.io/arch=amd64,kubernetes.io/hostname=k8s-master-underlay-02,kubernetes.io/os=linux,node-role.kubernetes.io/control-plane=,node.kubernetes.io/exclude-from-external-load-balancers=

k8s-master-underlay-03 Ready control-plane 47m v1.25.3 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,kubernetes.io/arch=amd64,kubernetes.io/hostname=k8s-master-underlay-03,kubernetes.io/os=linux,node-role.kubernetes.io/control-plane=,node.kubernetes.io/exclude-from-external-load-balancers=

k8s-node-underlay-01 Ready <none> 25m v1.25.3 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,kubernetes.io/arch=amd64,kubernetes.io/hostname=k8s-node-underlay-01,kubernetes.io/os=linux

[root@k8s-master-underlay-01 ~]#
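The label listing above explains the FailedScheduling events: a nodeSelector entry only matches a node that carries exactly that key=value pair. The following is a minimal sketch of that matching rule in plain bash, not how the scheduler is implemented; `has_label` is a hypothetical helper, and the `before` string is copied from the k8s-master-underlay-01 row above.

```shell
#!/usr/bin/env bash
# has_label LABELS PAIR: succeed when the comma-separated LABELS list
# contains PAIR exactly (hypothetical helper, not part of kubectl).
has_label() {
  local labels=",$1," want="$2"
  [[ "$labels" == *",${want},"* ]]
}

# Node selector taken from the `kubectl describe pod` output.
selector='node-role.kubernetes.io/master='
# Labels of k8s-master-underlay-01 before the fix.
before='beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,kubernetes.io/arch=amd64,kubernetes.io/hostname=k8s-master-underlay-01,kubernetes.io/os=linux,node-role.kubernetes.io/control-plane=,node.kubernetes.io/exclude-from-external-load-balancers='
# The same list once the missing label has been added.
after="${before},${selector}"

for name in before after; do
  if has_label "${!name}" "$selector"; then
    echo "$name: selector matches"
  else
    echo "$name: selector does not match"
  fi
done
```

The first line printed reports a mismatch and the second a match, which is the state the label commands below bring the three control-plane nodes into.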

[root@k8s-master-underlay-01 ~]# kubectl label node k8s-master-underlay-01 node-role.kubernetes.io/master=

node/k8s-master-underlay-01 labeled

[root@k8s-master-underlay-01 ~]# kubectl label node k8s-master-underlay-02 node-role.kubernetes.io/master=

node/k8s-master-underlay-02 labeled

[root@k8s-master-underlay-01 ~]# kubectl label node k8s-master-underlay-03 node-role.kubernetes.io/master=

node/k8s-master-underlay-03 labeled

[root@k8s-master-underlay-01 ~]#
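The three label commands above can also be collapsed into one selector-based call. This is a minimal sketch, assuming every node that should receive the label already carries node-role.kubernetes.io/control-plane= (true for the three masters in this cluster); the function name is made up for illustration.

```shell
#!/usr/bin/env bash
# label_control_plane_as_master: add node-role.kubernetes.io/master= to
# every node that already has the control-plane role label.
# --overwrite keeps the call idempotent if the label is already present.
label_control_plane_as_master() {
  local selector='node-role.kubernetes.io/control-plane='
  local label='node-role.kubernetes.io/master='
  kubectl label nodes -l "$selector" "$label" --overwrite
}
```

Calling `label_control_plane_as_master` once on a master replaces the per-node commands and stays correct if more control-plane nodes join later.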

[root@k8s-master-underlay-01 ~]# kubectl get node --show-labels

NAME STATUS ROLES AGE VERSION LABELS

k8s-master-underlay-01 Ready control-plane,master 52m v1.25.3 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,kubernetes.io/arch=amd64,kubernetes.io/hostname=k8s-master-underlay-01,kubernetes.io/os=linux,networking.alibaba.com/overlay-network-attachment=true,node-role.kubernetes.io/control-plane=,node-role.kubernetes.io/master=,node.kubernetes.io/exclude-from-external-load-balancers=

k8s-master-underlay-02 Ready control-plane,master 49m v1.25.3 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,kubernetes.io/arch=amd64,kubernetes.io/hostname=k8s-master-underlay-02,kubernetes.io/os=linux,networking.alibaba.com/overlay-network-attachment=true,node-role.kubernetes.io/control-plane=,node-role.kubernetes.io/master=,node.kubernetes.io/exclude-from-external-load-balancers=

k8s-master-underlay-03 Ready control-plane,master 49m v1.25.3 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,kubernetes.io/arch=amd64,kubernetes.io/hostname=k8s-master-underlay-03,kubernetes.io/os=linux,networking.alibaba.com/overlay-network-attachment=true,node-role.kubernetes.io/control-plane=,node-role.kubernetes.io/master=,node.kubernetes.io/exclude-from-external-load-balancers=

k8s-node-underlay-01 Ready <none> 27m v1.25.3 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,kubernetes.io/arch=amd64,kubernetes.io/hostname=k8s-node-underlay-01,kubernetes.io/os=linux,networking.alibaba.com/overlay-network-attachment=true

[root@k8s-master-underlay-01 ~]#

[root@k8s-master-underlay-01 ~]# kubectl get pod -A -owide

NAMESPACE NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

kube-system coredns-7f8cbcb969-tllgr 0/1 Running 0 51m 10.200.0.1 k8s-node-underlay-01

kube-system coredns-7f8cbcb969-xs56z 0/1 Running 0 51m 10.200.0.2 k8s-node-underlay-01

kube-system etcd-k8s-master-underlay-01 1/1 Running 0 51m 192.168.247.71 k8s-master-underlay-01

kube-system etcd-k8s-master-underlay-02 1/1 Running 0 49m 192.168.247.72 k8s-master-underlay-02

kube-system etcd-k8s-master-underlay-03 1/1 Running 0 48m 192.168.247.73 k8s-master-underlay-03

kube-system hybridnet-daemon-956nw 2/2 Running 0 8m57s 192.168.247.74 k8s-node-underlay-01

kube-system hybridnet-daemon-f86fj 2/2 Running 0 8m59s 192.168.247.72 k8s-master-underlay-02

kube-system hybridnet-daemon-mvfbf 2/2 Running 0 9m4s 192.168.247.71 k8s-master-underlay-01

kube-system hybridnet-daemon-p9jnd 2/2 Running 0 8m59s 192.168.247.73 k8s-master-underlay-03

kube-system hybridnet-manager-5fcd869c59-bwwdc 1/1 Running 0 8m59s 192.168.247.71 k8s-master-underlay-01

kube-system hybridnet-manager-5fcd869c59-crdf8 1/1 Running 0 8m57s 192.168.247.73 k8s-master-underlay-03

kube-system hybridnet-manager-5fcd869c59-vpj7s 1/1 Running 0 8m57s 192.168.247.72 k8s-master-underlay-02

kube-system hybridnet-webhook-5dc9fc7d9d-8r5zp 1/1 Running 0 9m4s 192.168.247.73 k8s-master-underlay-03

kube-system hybridnet-webhook-5dc9fc7d9d-kwxz4 1/1 Running 0 9m4s 192.168.247.72 k8s-master-underlay-02

kube-system hybridnet-webhook-5dc9fc7d9d-rkvz4 1/1 Running 0 9m4s 192.168.247.71 k8s-master-underlay-01

kube-system kube-apiserver-k8s-master-underlay-01 1/1 Running 0 51m 192.168.247.71 k8s-master-underlay-01

kube-system kube-apiserver-k8s-master-underlay-02 1/1 Running 0 48m 192.168.247.72 k8s-master-underlay-02

kube-system kube-apiserver-k8s-master-underlay-03 1/1 Running 0 48m 192.168.247.73 k8s-master-underlay-03

kube-system kube-controller-manager-k8s-master-underlay-01 1/1 Running 0 51m 192.168.247.71 k8s-master-underlay-01

kube-system kube-controller-manager-k8s-master-underlay-02 1/1 Running 0 49m 192.168.247.72 k8s-master-underlay-02

kube-system kube-controller-manager-k8s-master-underlay-03 1/1 Running 0 48m 192.168.247.73 k8s-master-underlay-03

kube-system kube-proxy-4xq49 1/1 Running 0 48m 192.168.247.73 k8s-master-underlay-03

kube-system kube-proxy-7kkgd 1/1 Running 0 51m 192.168.247.71 k8s-master-underlay-01

kube-system kube-proxy-pnwxt 1/1 Running 0 27m 192.168.247.74 k8s-node-underlay-01

kube-system kube-proxy-wnxpz 1/1 Running 0 49m 192.168.247.72 k8s-master-underlay-02

kube-system kube-scheduler-k8s-master-underlay-01 1/1 Running 1 (49m ago) 51m 192.168.247.71 k8s-master-underlay-01

kube-system kube-scheduler-k8s-master-underlay-02 1/1 Running 0 49m 192.168.247.72 k8s-master-underlay-02

kube-system kube-scheduler-k8s-master-underlay-03 1/1 Running 0 48m 192.168.247.73 k8s-master-underlay-03
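Scanning a listing of this size by eye is error-prone, so a small filter can help. This is a sketch only: `not_ready` is a hypothetical helper that assumes the default `kubectl get pod -A` column order (NAMESPACE, NAME, READY, STATUS) and prints pods that are not Running or whose READY count is incomplete.

```shell
#!/usr/bin/env bash
# not_ready: read `kubectl get pod -A` style rows on stdin and print
# NAME, READY and STATUS for every pod that needs attention.
not_ready() {
  awk '$1 != "NAMESPACE" && NF >= 4 {
         split($3, ready, "/")            # "0/1" -> ready[1]=0, ready[2]=1
         if ($4 != "Running" || ready[1] != ready[2])
           print $2, $3, $4
       }'
}

# Two rows copied from the listing above, used as sample input.
not_ready <<'EOF'
NAMESPACE NAME READY STATUS RESTARTS AGE
kube-system coredns-7f8cbcb969-tllgr 0/1 Running 0 51m
kube-system hybridnet-manager-5fcd869c59-bwwdc 1/1 Running 0 8m59s
EOF
```

On a live cluster the same function can be fed with `kubectl get pod -A | not_ready`. For the listing above it would flag only the two coredns pods, which are Running but still 0/1 ready.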

9.3、Verify underlay

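Create the underlay network and associate it with the nodes. First create a working directory and label the nodes that will attach to the underlay network: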

[root@k8s-master-underlay-01 ~]# mkdir /root/hybridnet

[root@k8s-master-underlay-01 ~]# cd hybridnet/

[root@k8s-master-underlay-01 hybridnet]# pwd

/root/hybridnet

[root@k8s-master-underlay-01 hybridnet]#

[root@k8s-master-underlay-01 hybridnet]# kubectl label node k8s-master-underlay-01 network=underlay-nethost

node/k8s-master-underlay-01 labeled

[root@k8s-master-underlay-01 hybridnet]# kubectl label node k8s-master-underlay-02 network=underlay-nethost

node/k8s-master-underlay-02 labeled

[root@k8s-master-underlay-01 hybridnet]# kubectl label node k8s-master-underlay-03 network=underlay-nethost

node/k8s-master-underlay-03 labeled

[root@k8s-master-underlay-01 hybridnet]#
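The labeling step above can be wrapped so that it also confirms the result. This is a minimal sketch under the assumption that `kubectl` is already configured on the host; `label_nodes` is a hypothetical helper, not a kubectl subcommand.

```shell
#!/usr/bin/env bash
# label_nodes LABEL NODE...: apply LABEL (key=value) to each NODE, then
# list the nodes that match it so the result can be checked at a glance.
label_nodes() {
  local label="$1"; shift
  local node
  for node in "$@"; do
    kubectl label node "$node" "$label" --overwrite
  done
  kubectl get nodes -l "$label" -o name
}
```

For the three nodes labeled above, the call would be `label_nodes network=underlay-nethost k8s-master-underlay-01 k8s-master-underlay-02 k8s-master-underlay-03`; the final `kubectl get nodes` prints one `node/<name>` line per matching node.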