[MMDetection3D] A Hands-On Introduction to Monocular 3D Object Detection

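Contents

- Preface

- Monocular 3D Detection

  - Overview

  - Detection Algorithms

- nuScenes-Mini Dataset

  - Introduction

  - Download

- MMDetection3D

  - Dataset Preparation

    - KITTI

    - nuScenes-Mini

  - Configuration Files

  - Multi-GPU Training

  - Testing and Visualization

- References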

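Preface

This article gives a brief introduction to monocular (single-camera) 3D object detection and uses the MMDetection3D library to train, test, and visualize models on KITTI (SMOKE) and nuScenes-Mini (FCOS3D and PGD).

Monocular 3D Detection

Overview

Monocular 3D detection, as the name suggests, takes images captured by a single camera as the model input and predicts a 3D bounding box and a class label for every object of interest. An image, however, does not carry enough 3D information (depth is missing), which is why research in earlier years concentrated on LIDAR-based algorithms (e.g. PointNet, VoxelNet, PointPillars) that greatly improved the accuracy of 3D object detection.

The drawbacks of LIDAR-based methods are their high cost and their sensitivity to weather and environmental conditions. Camera-based algorithms can make a detection system more robust, especially when other, more expensive modules fail. Achieving reliable and accurate 3D detection from single- or multi-camera data is therefore important. Many efforts have been made to address object localization in the camera-based setting, for example inferring depth from images and exploiting geometric constraints and shape priors. The problem is nevertheless far from solved: because of their poor 3D localization ability, camera-based detectors still perform much worse than LIDAR-based ones.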
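To make the missing-depth problem concrete, here is a minimal sketch of a pinhole camera projection. The intrinsics (`fx`, `fy`, `cx`, `cy`) are made-up values for illustration and are not taken from KITTI or nuScenes. Two points on the same viewing ray at different depths land on the same pixel, so a single image cannot tell them apart without extra cues.

```python
def project(point_3d, fx=1000.0, fy=1000.0, cx=800.0, cy=450.0):
    """Project a 3D point (x, y, z) in camera coordinates to a pixel (u, v).

    The intrinsics are hypothetical values chosen for illustration only.
    """
    x, y, z = point_3d
    if z <= 0:
        raise ValueError("point must be in front of the camera (z > 0)")
    return (fx * x / z + cx, fy * y / z + cy)


# Two points on the same viewing ray: the second is twice as far away.
near = (1.0, 0.5, 10.0)
far = (2.0, 1.0, 20.0)

print(project(near))  # (900.0, 500.0)
print(project(far))   # (900.0, 500.0)
```

This is why monocular detectors need depth estimation, geometric constraints, or shape priors to recover the third dimension.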

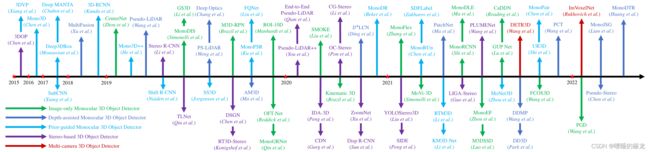

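Detection Algorithms

The figure below comes from the paper 3D Object Detection for Autonomous Driving: A Review and New Outlooks. The paper lists in detail the research on monocular 3D object detection published between 2015 and the first half of 2022 and divides it into five sub-directions: Image-only Monocular 3D Object Detector, Depth-assisted Monocular 3D Object Detector, Prior-guided Monocular 3D Object Detector, Stereo-based 3D Object Detector, and Multi-camera 3D Object Detector. In my view it is a fairly comprehensive and objective overview.

For the individual papers, see: Learning-Deep-Learning

You can also follow the Detection leaderboard on the nuScenes website and select the Camera modality to see the continuously updated SOTA algorithms.

nuScenes-Mini Dataset

Official site: https://www.nuscenes.org/

Company: Motional (formerly nuTonomy)

More information: https://www.nuscenes.org/nuscenes

Introduction

The nuScenes dataset (pronounced /nuːsiːnz/) is a large-scale autonomous driving dataset with 3D object annotations, developed by the team at Motional (formerly nuTonomy). Its features: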

- Full sensor suite (1x LIDAR, 5x RADAR, 6x camera, IMU, GPS)

- 1000 scenes of 20s each

- 1,400,000 camera images

- 390,000 lidar sweeps

- Two diverse cities: Boston and Singapore

- Left versus right hand traffic

- Detailed map information

- 1.4M 3D bounding boxes manually annotated for 23 object classes

- Attributes such as visibility, activity and pose

- New: 1.1B lidar points manually annotated for 32 classes

- New: Explore nuScenes on Strada

- Free to use for non-commercial use

Download

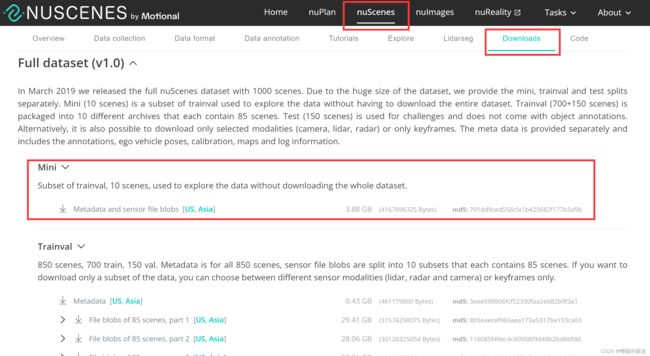

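The full nuScenes dataset takes up more than 500 GB (brutal). To keep things manageable, save time, and make it easier to get started (so many excuses, haha), the hands-on part below uses the nuScenes-Mini dataset. Here is what the official site says: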

Full dataset (v1.0)

In March 2019 we released the full nuScenes dataset with 1000 scenes. Due to the huge size of the dataset, we provide the mini, trainval and test splits separately. Mini (10 scenes) is a subset of trainval used to explore the data without having to download the entire dataset. Trainval (700+150 scenes) is packaged into 10 different archives that each contain 85 scenes. Test (150 scenes) is used for challenges and does not come with object annotations. Alternatively, it is also possible to download only selected modalities (camera, lidar, radar) or only keyframes. The meta data is provided separately and includes the annotations, ego vehicle poses, calibration, maps and log information.
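As a quick sanity check of the numbers in the quote, the split sizes can be added up. The counts below are copied from the quoted text; nothing else is assumed.

```python
# Scene counts quoted from the nuScenes download page.
total_scenes = 1000
train_scenes, val_scenes, test_scenes = 700, 150, 150
mini_scenes = 10
archives, scenes_per_archive = 10, 85

trainval_scenes = train_scenes + val_scenes
assert trainval_scenes == archives * scenes_per_archive  # 850 scenes in 10 archives
assert trainval_scenes + test_scenes == total_scenes     # 850 + 150 = 1000

# Mini is about 1% of the full release by scene count.
mini_fraction = mini_scenes / total_scenes
print(f"trainval={trainval_scenes}, mini fraction={mini_fraction:.0%}")
```

With only 10 scenes, Mini is good for checking that a pipeline runs end to end, but metrics obtained on it should not be compared with leaderboard numbers.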

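Download method 1: download and extract manually (not recommended)

First register an account on the official site, then open the nuScenes Downloads page and scroll to the bottom, where you will find the download links for Full dataset (v1.0) and the Mini dataset.

The nuScenes website has a detailed tutorial: Tutorial. To make the later steps easier, we download the data into mmdetection3d/data/nuscenes-mini/:

# Run from the mmdetection3d root directory.

# Make the directory to store the nuScenes dataset in.

mkdir -p data/nuscenes-mini

cd data/nuscenes-mini

# Download the nuScenes mini split.

wget https://www.nuscenes.org/data/v1.0-mini.tgz

# Uncompress the nuScenes mini split into the current directory.

tar -xf v1.0-mini.tgz

# Install the nuScenes devkit.

pip install nuscenes-devkit &> /dev/null

After installation, the dataset is laid out as follows: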

mmdetection3d

├── mmdet3d

├── tools

├── configs

├── data

│   ├── nuscenes-mini

│   │   ├── maps

│   │   ├── samples

│   │   ├── sweeps

│   │   ├── v1.0-mini
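A small check like the following can confirm that the archive was extracted into the right place before going further. It is only a sketch: the path `data/nuscenes-mini` is the one assumed in this article, and the helper name `missing_subdirs` is made up for illustration. Note that the nuScenes archive names the sweep directory `sweeps`.

```python
from pathlib import Path

# Sub-directories expected after extracting v1.0-mini.tgz.
EXPECTED_SUBDIRS = ("maps", "samples", "sweeps", "v1.0-mini")


def missing_subdirs(data_root):
    """Return the expected sub-directories that are absent under data_root."""
    root = Path(data_root)
    return [name for name in EXPECTED_SUBDIRS if not (root / name).is_dir()]


# Path assumed from this article; adjust it to your own checkout.
missing = missing_subdirs("data/nuscenes-mini")
print("layout OK" if not missing else f"missing: {missing}")
```

Run it from the mmdetection3d root; an empty result means all four directories are present.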

MMDetection3D

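Version: Release V1.1.0rc0

Installation and environment setup: [MMDetection3D] Environment Setup: Training, Testing, and Visualizing the KITTI Dataset with PointPillars

Below, we run the following experiments with the MMDet3D library:

| Monocular algorithm | KITTI | nuScenes-Mini |
|---|---|---|
| PGD | √ | √ |
| SMOKE | √ | |
| FCOS3D | | √ |

That is, PGD and SMOKE are trained, tested, and visualized on KITTI, and PGD and FCOS3D are trained, tested, and visualized on nuScenes-Mini.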

Dataset Preparation

KITTI

See: KITTI Dataset for 3D Object Detection

nuScenes-Mini

See: NuScenes Dataset for 3D Object Detection
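Before training, MMDetection3D needs annotation files generated from the raw data. The sketch below only builds the command line for the mini split and does not run it. The script `tools/create_data.py` and the flags `--root-path`, `--out-dir`, `--extra-tag`, and `--version` are written from my recollection of the MMDetection3D documentation and should be checked with `python tools/create_data.py --help` for your version. The data path is the one assumed in this article, and `build_create_data_cmd` is a hypothetical helper.

```python
import shlex


def build_create_data_cmd(data_root="./data/nuscenes-mini", version="v1.0-mini"):
    """Build (but do not run) the data-preparation command as an argument list."""
    return [
        "python", "tools/create_data.py", "nuscenes",
        "--root-path", data_root,
        "--out-dir", data_root,
        "--extra-tag", "nuscenes",   # prefix of the generated info files (assumed)
        "--version", version,        # select the mini split instead of the full one
    ]


cmd = build_create_data_cmd()
print(shlex.join(cmd))
```

To execute it, pass `cmd` to `subprocess.run(cmd, check=True)` from the mmdetection3d root.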

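Configuration Files

1. First, change the dataset path

Taking the nuScenes-Mini dataset as an example, create a new file nus-mini-mono3d.py in /mmdetection3d/configs/_base_/datasets/. This is the nuScenes-Mini data config for monocular detection. Copy the contents of nus-mono3d.py from the same folder into the new file and change the data_root parameter: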

dataset_type = 'NuScenesMonoDataset'

# Change this to your dataset path; keep a trailing '/' because ann_file is built as data_root + filename

data_root = 'your_dataset_root'

class_names = [

'car', 'truck', 'trailer', 'bus', 'construction_vehicle', 'bicycle',

'motorcycle', 'pedestrian', 'traffic_cone', 'barrier'

]

# Input modality for nuScenes dataset, this is consistent with the submission

# format which requires the information in input_modality.

input_modality = dict(

use_lidar=False,

use_camera=True,

use_radar=False,

use_map=False,

use_external=False)

img_norm_cfg = dict(

mean=[123.675, 116.28, 103.53], std=[58.395, 57.12, 57.375], to_rgb=True)

train_pipeline = [

dict(type='LoadImageFromFileMono3D'),

dict(

type='LoadAnnotations3D',

with_bbox=True,

with_label=True,

with_attr_label=True,

with_bbox_3d=True,

with_label_3d=True,

with_bbox_depth=True),

dict(type='Resize', img_scale=(1600, 900), keep_ratio=True),

dict(type='RandomFlip3D', flip_ratio_bev_horizontal=0.5),

dict(type='Normalize', **img_norm_cfg),

dict(type='Pad', size_divisor=32),

dict(type='DefaultFormatBundle3D', class_names=class_names),

dict(

type='Collect3D',

keys=[

'img', 'gt_bboxes', 'gt_labels', 'attr_labels', 'gt_bboxes_3d',

'gt_labels_3d', 'centers2d', 'depths'

]),

]

test_pipeline = [

dict(type='LoadImageFromFileMono3D'),

dict(

type='MultiScaleFlipAug',

scale_factor=1.0,

flip=False,

transforms=[

dict(type='RandomFlip3D'),

dict(type='Normalize', **img_norm_cfg),

dict(type='Pad', size_divisor=32),

dict(

type='DefaultFormatBundle3D',

class_names=class_names,

with_label=False),

dict(type='Collect3D', keys=['img']),

])

]

# construct a pipeline for data and gt loading in show function

# please keep its loading function consistent with test_pipeline (e.g. client)

eval_pipeline = [

dict(type='LoadImageFromFileMono3D'),

dict(

type='DefaultFormatBundle3D',

class_names=class_names,

with_label=False),

dict(type='Collect3D', keys=['img'])

]

data = dict(

samples_per_gpu=2,

workers_per_gpu=2,

train=dict(

type=dataset_type,

data_root=data_root,

ann_file=data_root + 'nuscenes_infos_train_mono3d.coco.json',

img_prefix=data_root,

classes=class_names,

pipeline=train_pipeline,

modality=input_modality,

test_mode=False,

box_type_3d='Camera'),

val=dict(

type=dataset_type,

data_root=data_root,

ann_file=data_root + 'nuscenes_infos_val_mono3d.coco.json',

img_prefix=data_root,

classes=class_names,

pipeline=test_pipeline,

modality=input_modality,

test_mode=True,

box_type_3d='Camera'),

test=dict(

type=dataset_type,

data_root=data_root,

ann_file=data_root + 'nuscenes_infos_val_mono3d.coco.json',

img_prefix=data_root,

classes=class_names,

pipeline=test_pipeline,

modality=input_modality,

test_mode=True,

box_type_3d='Camera'))

evaluation = dict(interval=2)
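One detail in the config above is easy to trip over: `ann_file` is built by plain string concatenation (`data_root + '...json'`), so `data_root` has to end with a slash. The sketch below illustrates this with the path assumed in this article; the two helper functions are made up for the example.

```python
def ann_path(data_root, filename="nuscenes_infos_train_mono3d.coco.json"):
    """Mimic the config's `data_root + '...'` string concatenation."""
    return data_root + filename


def with_trailing_slash(data_root):
    """Append a slash if it is missing so that concatenation yields a valid path."""
    return data_root if data_root.endswith("/") else data_root + "/"


bad = ann_path("data/nuscenes-mini")
good = ann_path(with_trailing_slash("data/nuscenes-mini"))
print(bad)   # data/nuscenes-mininuscenes_infos_train_mono3d.coco.json
print(good)  # data/nuscenes-mini/nuscenes_infos_train_mono3d.coco.json
```

If training fails with a file-not-found error on the annotation file, this is the first thing worth checking.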

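2. Modify the model config file (using PGD as an example)

In /mmdetection3d/configs/pgd/, create a new file pgd_r101_caffe_fpn_gn-head_2x16_1x_nus-mini-mono3d.py, copy the contents of pgd_r101_caffe_fpn_gn-head_2x16_1x_nus-mono3d.py from the same folder into it, and change the dataset entry in _base_ to '../_base_/datasets/nus-mini-mono3d.py':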

_base_ = [

'../_base_/datasets/nus-mini-mono3d.py', '../_base_/models/pgd.py',

'../_base_/schedules/mmdet_schedule_1x.py', '../_base_/default_runtime.py'

]

# model settings

model = dict(

backbone=dict(

dcn=dict(type='DCNv2', deform_groups=1, fallback_on_stride=False),

stage_with_dcn=(False, False, True, True)),

bbox_head=dict(

pred_bbox2d=True,

group_reg_dims=(2, 1, 3, 1, 2,

4), # offset, depth, size, rot, velo, bbox2d

reg_branch=(

(256, ), # offset

(256, ), # depth

(256, ), # size

(256, ), # rot

(), # velo

(256, ) # bbox2d

),

loss_depth=dict(type='SmoothL1Loss', beta=1.0 / 9.0, loss_weight=1.0),

bbox_coder=dict(

type='PGDBBoxCoder',

base_depths=((31.99, 21.12), (37.15, 24.63), (39.69, 23.97),

(40.91, 26.34), (34.16, 20.11), (22.35, 13.70),

(24.28, 16.05), (27.26, 15.50), (20.61, 13.68),

(22.74, 15.01)),

base_dims=((4.62, 1.73, 1.96), (6.93, 2.83, 2.51),

(12.56, 3.89, 2.94), (11.22, 3.50, 2.95),

(6.68, 3.21, 2.85), (6.68, 3.21, 2.85),

(2.11, 1.46, 0.78), (0.73, 1.77, 0.67),

(0.41, 1.08, 0.41), (0.50, 0.99, 2.52)),

code_size=9)),

# set weight 1.0 for base 7 dims (offset, depth, size, rot)

# 0.05 for 2-dim velocity and 0.2 for 4-dim 2D distance targets

train_cfg=dict(code_weight=[

1.0, 1.0, 0.2, 1.0, 1.0, 1.0, 1.0, 0.05, 0.05, 0.2, 0.2, 0.2, 0.2

]),

test_cfg=dict(nms_pre=1000, nms_thr=0.8, score_thr=0.01, max_per_img=200))

class_names = [

'car', 'truck', 'trailer', 'bus', 'construction_vehicle', 'bicycle',

'motorcycle', 'pedestrian', 'traffic_cone', 'barrier'

]

img_norm_cfg = dict(

mean=[103.530, 116.280, 123.675], std=[1.0, 1.0, 1.0], to_rgb=False)

train_pipeline = [

dict(type='LoadImageFromFileMono3D'),

dict(

type='LoadAnnotations3D',

with_bbox=True,

with_label=True,

with_attr_label=True,

with_bbox_3d=True,

with_label_3d=True,

with_bbox_depth=True),

dict(type='Resize', img_scale=(1600, 900), keep_ratio=True),

dict(type='RandomFlip3D', flip_ratio_bev_horizontal=0.5),

dict(type='Normalize', **img_norm_cfg),

dict(type='Pad', size_divisor=32),

dict(type='DefaultFormatBundle3D', class_names=class_names),

dict(

type='Collect3D',

keys=[

'img', 'gt_bboxes', 'gt_labels', 'attr_labels', 'gt_bboxes_3d',

'gt_labels_3d', 'centers2d', 'depths'

]),

]

test_pipeline = [

dict(type='LoadImageFromFileMono3D'),

dict(

type='MultiScaleFlipAug',

scale_factor=1.0,

flip=False,

transforms=[

dict(type='RandomFlip3D'),

dict(type='Normalize', **img_norm_cfg),

dict(type='Pad', size_divisor=32),

dict(

type='DefaultFormatBundle3D',

class_names=class_names,

with_label=False),

dict(type='Collect3D', keys=['img']),

])

]

data = dict(

samples_per_gpu=2,

workers_per_gpu=2,

train=dict(pipeline=train_pipeline),

val=dict(pipeline=test_pipeline),

test=dict(pipeline=test_pipeline))

# optimizer

optimizer = dict(

lr=0.004, paramwise_cfg=dict(bias_lr_mult=2., bias_decay_mult=0.))

optimizer_config = dict(

_delete_=True, grad_clip=dict(max_norm=35, norm_type=2))

# learning policy

lr_config = dict(

policy='step',

warmup='linear',

warmup_iters=500,

warmup_ratio=1.0 / 3,

step=[8, 11])

total_epochs = 12

evaluation = dict(interval=4)

runner = dict(max_epochs=total_epochs)
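The relationship between `group_reg_dims` and `code_weight` in the config above is worth spelling out. The sketch below copies both values from the config and slices the flat weight list back into named groups, assuming the weights follow the same order as `group_reg_dims` (which is what the inline comments suggest).

```python
# Values copied from the PGD config above.
group_reg_dims = (2, 1, 3, 1, 2, 4)
group_names = ("offset", "depth", "size", "rot", "velo", "bbox2d")
code_weight = [1.0, 1.0, 0.2, 1.0, 1.0, 1.0, 1.0, 0.05, 0.05, 0.2, 0.2, 0.2, 0.2]

# There must be exactly one weight per regressed value.
assert sum(group_reg_dims) == len(code_weight) == 13

# Slice the flat weight list back into its named groups.
weights_by_group = {}
start = 0
for name, dim in zip(group_names, group_reg_dims):
    weights_by_group[name] = code_weight[start:start + dim]
    start += dim

for name, weights in weights_by_group.items():
    print(f"{name:7s} {weights}")
```

Under that ordering assumption, the depth entry is weighted 0.2, the two velocity entries 0.05, and the four 2D box entries 0.2. If you change `group_reg_dims` (for example by disabling `pred_bbox2d`), the length of `code_weight` has to change with it.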

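3. Change the number of training epochs, the checkpoint interval, and so on (optional)

- In each model's config file, if the runner is an EpochBasedRunner, you can change the number of training epochs directly through the max_epochs parameter.

- In /mmdetection3d/configs/_base_/default_runtime.py, change the interval parameter in the first line of code to change how often checkpoints are saved.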
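The two optional changes could look like the config fragment below. The numbers are hypothetical, and the key name `checkpoint_config` is my assumption about what the first line of default_runtime.py contains in the config style used in this article, so check it against your own file. The last lines just list the epochs at which a checkpoint would be written if checkpoints are saved every `interval` epochs.

```python
# Hypothetical values for illustration only.
total_epochs = 24
runner = dict(type='EpochBasedRunner', max_epochs=total_epochs)

# Assumed key name: save a checkpoint every 2 epochs.
checkpoint_config = dict(interval=2)

# Evaluate on the validation set every 4 epochs, as in the PGD config above.
evaluation = dict(interval=4)

# Epochs after which a checkpoint would be written under these settings.
saved_epochs = [epoch for epoch in range(1, total_epochs + 1)
                if epoch % checkpoint_config['interval'] == 0]
print(saved_epochs)
```

A larger interval saves disk space, which matters with a ResNet-101 backbone where each checkpoint is large.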

Multi-GPU Training

Taking PGD on nuScenes-Mini as an example, run the following command in a terminal. By default, the training outputs are saved to /mmdetection3d/work_dirs/pgd_r101_caffe_fpn_gn-head_2x16_1x_nus-mini-mono3d:

CUDA_VISIBLE_DEVICES=0,1,2,3 tools/dist_train.sh configs/pgd/pgd_r101_caffe_fpn_gn-head_2x16_1x_nus-mini-mono3d.py 4
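When the number of GPUs differs from the setting a config was tuned for, a common heuristic is to scale the learning rate linearly with the total batch size. The sketch below applies it to the command above. It assumes that "2x16" in the config name means 2 samples per GPU on 16 GPUs and that lr=0.004 was tuned for that setting. The linear scaling rule itself is a rule of thumb and not something this article's experiments verified.

```python
def scaled_lr(base_lr, base_batch_size, gpus, samples_per_gpu):
    """Scale the learning rate linearly with the total batch size."""
    return base_lr * (gpus * samples_per_gpu) / base_batch_size


# Reference setting read off the config: lr=0.004, "2x16" = 2 samples x 16 GPUs (assumed).
base_lr = 0.004
base_batch_size = 2 * 16

# The command above uses 4 GPUs with samples_per_gpu=2.
lr = scaled_lr(base_lr, base_batch_size, gpus=4, samples_per_gpu=2)
print(f"total batch size = {4 * 2}, suggested lr = {lr:g}")
```

With 4 GPUs the total batch size drops from 32 to 8, so the heuristic suggests a learning rate around 0.001, set through `optimizer = dict(lr=...)` in the model config.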

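Testing and Visualization

If you run testing and visualization from VS Code, you first need to set the $DISPLAY variable:

First run echo $DISPLAY in MobaXterm to check the value of the DISPLAY environment variable for the current window: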

(mmdet3d) xxx@xxx:~/det3d/mmdetection3d$ echo $DISPLAY

localhost:10.0

Then, in the VS Code terminal, set the DISPLAY environment variable to :10.0 and check it:

(mmdet3d) xxx@xxx:~/det3d/mmdetection3d$ export DISPLAY=:10.0

(mmdet3d) xxx@xxx:~/det3d/mmdetection3d$ echo $DISPLAY

:10.0

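Taking the PGD model as an example, run the following command in a terminal. The test outputs are saved to the directory given by --show-dir, here /mmdetection3d/outputs/pgd/pgd_nus_mini: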

python tools/test.py configs/pgd/pgd_r101_caffe_fpn_gn-head_2x16_2x_nus-mini-mono3d_finetune.py work_dirs/pgd_r101_caffe_fpn_gn-head_2x16_2x_nus-mini-mono3d_finetune/latest.pth --show --show-dir ./outputs/pgd/pgd_nus_mini

The visualization results are as follows:

KITTI

[Figures: PGD and SMOKE visualization results on KITTI]

nuScenes-Mini

[Figures: visualization results on nuScenes-Mini]

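As you can see, there are a lot of redundant boxes, most likely because of the NMS and score threshold settings. Let's adjust the thresholds. Taking PGD on nuScenes as an example, change the test parameters in configs/pgd/pgd_r101_caffe_fpn_gn-head_2x16_2x_nus-mini-mono3d_finetune.py: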

test_cfg=dict(nms_pre=1000, nms_thr=0.2, score_thr=0.1, max_per_img=200))

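That is, the NMS threshold is lowered from 0.8 to nms_thr=0.2, so boxes that overlap a higher-scoring box by more than 0.2 IoU are suppressed, and the score threshold is raised from 0.01 to score_thr=0.1, so low-confidence boxes are dropped.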
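The effect of the two thresholds can be seen on a toy example. The sketch below is a simplified stand-in, not MMDetection3D's implementation: it uses axis-aligned 2D boxes and greedy NMS, whereas, as far as I know, the library suppresses boxes based on their rotated bird's-eye-view footprint. The boxes and scores are made up.

```python
def iou(a, b):
    """IoU of two axis-aligned boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    return inter / (area_a + area_b - inter)


def filter_and_nms(boxes, scores, score_thr, nms_thr):
    """Drop low-score boxes, then greedily suppress overlapping ones."""
    order = sorted(
        (i for i, s in enumerate(scores) if s > score_thr),
        key=lambda i: scores[i],
        reverse=True,
    )
    keep = []
    for i in order:
        # Keep a box only if it does not overlap any kept box too much.
        if all(iou(boxes[i], boxes[j]) <= nms_thr for j in keep):
            keep.append(i)
    return keep


# Toy detections: three overlapping boxes on one object plus a weak stray box.
boxes = [(0, 0, 10, 10), (2, 0, 12, 10), (4, 0, 14, 10), (30, 30, 40, 40)]
scores = [0.90, 0.60, 0.30, 0.05]

print(filter_and_nms(boxes, scores, score_thr=0.01, nms_thr=0.8))  # [0, 1, 2, 3]
print(filter_and_nms(boxes, scores, score_thr=0.1, nms_thr=0.2))   # [0]
```

With the original thresholds all four boxes survive. With the adjusted ones only the highest-scoring box remains, which matches the cleaner visualization after the change.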

Run the test and visualization again:

[Figures: visualization results after adjusting the thresholds]

References

3D Object Detection for Autonomous Driving: A Review and New Outlooks

nuScenes dataset

MMDetection3D documentation: Vision-Based 3D Detection

Questions about FCOS3D and PGD model 3D box