NNDL 作业6:基于CNN的XO识别

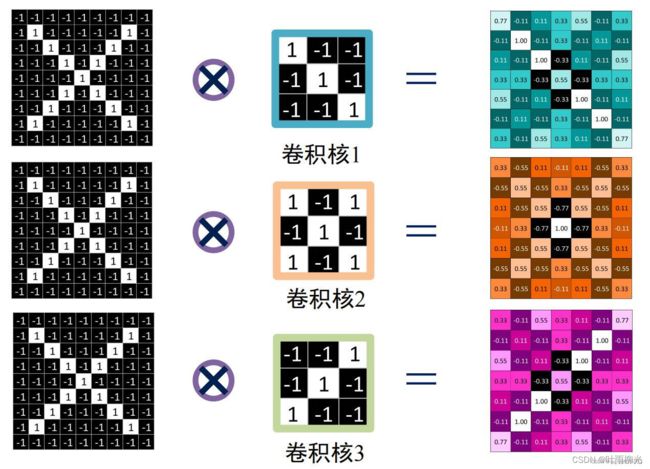

一、实现卷积-池化-激活

1. Numpy版本:手工实现 卷积-池化-激活

自定义卷积算子、池化算子实现

import numpy as np

# 初始化一张X图片矩阵

x = np.array([[-1, -1, -1, -1, -1, -1, -1, -1, -1],

[-1, 1, -1, -1, -1, -1, -1, 1, -1],

[-1, -1, 1, -1, -1, -1, 1, -1, -1],

[-1, -1, -1, 1, -1, 1, -1, -1, -1],

[-1, -1, -1, -1, 1, -1, -1, -1, -1],

[-1, -1, -1, 1, -1, 1, -1, -1, -1],

[-1, -1, 1, -1, -1, -1, 1, -1, -1],

[-1, 1, -1, -1, -1, -1, -1, 1, -1],

[-1, -1, -1, -1, -1, -1, -1, -1, -1]])

# 输出原矩阵

print("x=\n", x)

# 初始化 三个 卷积核

Kernel = [[0 for i in range(0, 3)] for j in range(0, 3)]

# 参考图卷积核1

Kernel[0] = np.array([[1, -1, -1],

[-1, 1, -1],

[-1, -1, 1]])

# 参考图卷积核2

Kernel[1] = np.array([[1, -1, 1],

[-1, 1, -1],

[1, -1, 1]])

# 参考图卷积核3

Kernel[2] = np.array([[-1, -1, 1],

[-1, 1, -1],

[1, -1, -1]])

# --------------- 卷积 ---------------

stride = 1 # 步长

feature_map_h = 7 # 特征图的高

feature_map_w = 7 # 特征图的宽

feature_map = [0 for i in range(0, 3)] # 初始化3个特征图

for i in range(0, 3):

feature_map[i] = np.zeros((feature_map_h, feature_map_w)) # 初始化特征图

for h in range(feature_map_h): # 向下滑动,得到卷积后的固定行

for w in range(feature_map_w): # 向右滑动,得到卷积后的固定行的列

v_start = h * stride # 滑动窗口的起始行(高)

v_end = v_start + 3 # 滑动窗口的结束行(高)

h_start = w * stride # 滑动窗口的起始列(宽)

h_end = h_start + 3 # 滑动窗口的结束列(宽)

window = x[v_start:v_end, h_start:h_end] # 从图切出一个滑动窗口

for i in range(0, 3):

feature_map[i][h, w] = np.divide(np.sum(np.multiply(window, Kernel[i][:, :])), 9)

print("feature_map:\n", np.around(feature_map, decimals=2))

# --------------- 池化 ---------------

pooling_stride = 2 # 步长

pooling_h = 4 # 特征图的高

pooling_w = 4 # 特征图的宽

feature_map_pad_0 = [[0 for i in range(0, 8)] for j in range(0, 8)]

for i in range(0, 3): # 特征图 补 0 ,行 列 都要加 1 (因为上一层是奇数,池化窗口用的偶数)

feature_map_pad_0[i] = np.pad(feature_map[i], ((0, 1), (0, 1)), 'constant', constant_values=(0, 0))

# print("feature_map_pad_0 0:\n", np.around(feature_map_pad_0[0], decimals=2))

pooling = [0 for i in range(0, 3)]

for i in range(0, 3):

pooling[i] = np.zeros((pooling_h, pooling_w)) # 初始化特征图

for h in range(pooling_h): # 向下滑动,得到卷积后的固定行

for w in range(pooling_w): # 向右滑动,得到卷积后的固定行的列

v_start = h * pooling_stride # 滑动窗口的起始行(高)

v_end = v_start + 2 # 滑动窗口的结束行(高)

h_start = w * pooling_stride # 滑动窗口的起始列(宽)

h_end = h_start + 2 # 滑动窗口的结束列(宽)

for i in range(0, 3):

pooling[i][h, w] = np.max(feature_map_pad_0[i][v_start:v_end, h_start:h_end])

print("pooling:\n", np.around(pooling[0], decimals=2))

print("pooling:\n", np.around(pooling[1], decimals=2))

print("pooling:\n", np.around(pooling[2], decimals=2))

# --------------- 激活 ---------------

def relu(x):

return (abs(x) + x) / 2

relu_map_h = 7 # 特征图的高

relu_map_w = 7 # 特征图的宽

relu_map = [0 for i in range(0, 3)] # 初始化3个特征图

for i in range(0, 3):

relu_map[i] = np.zeros((relu_map_h, relu_map_w)) # 初始化特征图

for i in range(0, 3):

relu_map[i] = relu(feature_map[i])

print("relu map :\n", np.around(relu_map[0], decimals=2))

print("relu map :\n", np.around(relu_map[1], decimals=2))

print("relu map :\n", np.around(relu_map[2], decimals=2))

C:\Users\74502\ven\Scripts\python.exe C:/Users/74502/PycharmProjects/pythonProject/fdh.py

x=

[[-1 -1 -1 -1 -1 -1 -1 -1 -1]

[-1 1 -1 -1 -1 -1 -1 1 -1]

[-1 -1 1 -1 -1 -1 1 -1 -1]

[-1 -1 -1 1 -1 1 -1 -1 -1]

[-1 -1 -1 -1 1 -1 -1 -1 -1]

[-1 -1 -1 1 -1 1 -1 -1 -1]

[-1 -1 1 -1 -1 -1 1 -1 -1]

[-1 1 -1 -1 -1 -1 -1 1 -1]

[-1 -1 -1 -1 -1 -1 -1 -1 -1]]

feature_map:

[[[ 0.78 -0.11 0.11 0.33 0.56 -0.11 0.33]

[-0.11 1. -0.11 0.33 -0.11 0.11 -0.11]

[ 0.11 -0.11 1. -0.33 0.11 -0.11 0.56]

[ 0.33 0.33 -0.33 0.56 -0.33 0.33 0.33]

[ 0.56 -0.11 0.11 -0.33 1. -0.11 0.11]

[-0.11 0.11 -0.11 0.33 -0.11 1. -0.11]

[ 0.33 -0.11 0.56 0.33 0.11 -0.11 0.78]]

[[ 0.33 -0.56 0.11 -0.11 0.11 -0.56 0.33]

[-0.56 0.56 -0.56 0.33 -0.56 0.56 -0.56]

[ 0.11 -0.56 0.56 -0.78 0.56 -0.56 0.11]

[-0.11 0.33 -0.78 1. -0.78 0.33 -0.11]

[ 0.11 -0.56 0.56 -0.78 0.56 -0.56 0.11]

[-0.56 0.56 -0.56 0.33 -0.56 0.56 -0.56]

[ 0.33 -0.56 0.11 -0.11 0.11 -0.56 0.33]]

[[ 0.33 -0.11 0.56 0.33 0.11 -0.11 0.78]

[-0.11 0.11 -0.11 0.33 -0.11 1. -0.11]

[ 0.56 -0.11 0.11 -0.33 1. -0.11 0.11]

[ 0.33 0.33 -0.33 0.56 -0.33 0.33 0.33]

[ 0.11 -0.11 1. -0.33 0.11 -0.11 0.56]

[-0.11 1. -0.11 0.33 -0.11 0.11 -0.11]

[ 0.78 -0.11 0.11 0.33 0.56 -0.11 0.33]]]

pooling:

[[1. 0.33 0.56 0.33]

[0.33 1. 0.33 0.56]

[0.56 0.33 1. 0.11]

[0.33 0.56 0.11 0.78]]

pooling:

[[0.56 0.33 0.56 0.33]

[0.33 1. 0.56 0.11]

[0.56 0.56 0.56 0.11]

[0.33 0.11 0.11 0.33]]

pooling:

[[0.33 0.56 1. 0.78]

[0.56 0.56 1. 0.33]

[1. 1. 0.11 0.56]

[0.78 0.33 0.56 0.33]]

relu map :

[[0.78 0. 0.11 0.33 0.56 0. 0.33]

[0. 1. 0. 0.33 0. 0.11 0. ]

[0.11 0. 1. 0. 0.11 0. 0.56]

[0.33 0.33 0. 0.56 0. 0.33 0.33]

[0.56 0. 0.11 0. 1. 0. 0.11]

[0. 0.11 0. 0.33 0. 1. 0. ]

[0.33 0. 0.56 0.33 0.11 0. 0.78]]

relu map :

[[0.33 0. 0.11 0. 0.11 0. 0.33]

[0. 0.56 0. 0.33 0. 0.56 0. ]

[0.11 0. 0.56 0. 0.56 0. 0.11]

[0. 0.33 0. 1. 0. 0.33 0. ]

[0.11 0. 0.56 0. 0.56 0. 0.11]

[0. 0.56 0. 0.33 0. 0.56 0. ]

[0.33 0. 0.11 0. 0.11 0. 0.33]]

relu map :

[[0.33 0. 0.56 0.33 0.11 0. 0.78]

[0. 0.11 0. 0.33 0. 1. 0. ]

[0.56 0. 0.11 0. 1. 0. 0.11]

[0.33 0.33 0. 0.56 0. 0.33 0.33]

[0.11 0. 1. 0. 0.11 0. 0.56]

[0. 1. 0. 0.33 0. 0.11 0. ]

[0.78 0. 0.11 0.33 0.56 0. 0.33]]

进程已结束,退出代码为 0

2. Pytorch版本:调用函数实现 卷积-池化-激活

调用框架自带算子实现,对比自定义算子

import torch

import torch.nn as nn

x = torch.tensor([[[[-1, -1, -1, -1, -1, -1, -1, -1, -1],

[-1, 1, -1, -1, -1, -1, -1, 1, -1],

[-1, -1, 1, -1, -1, -1, 1, -1, -1],

[-1, -1, -1, 1, -1, 1, -1, -1, -1],

[-1, -1, -1, -1, 1, -1, -1, -1, -1],

[-1, -1, -1, 1, -1, 1, -1, -1, -1],

[-1, -1, 1, -1, -1, -1, 1, -1, -1],

[-1, 1, -1, -1, -1, -1, -1, 1, -1],

[-1, -1, -1, -1, -1, -1, -1, -1, -1]]]], dtype=torch.float)

print(x.shape)

print(x)

print("--------------- 卷积 ---------------")

conv1 = nn.Conv2d(1, 1, (3, 3), 1) # in_channel , out_channel , kennel_size , stride

conv1.weight.data = torch.Tensor([[[[1, -1, -1],

[-1, 1, -1],

[-1, -1, 1]]

]])

conv2 = nn.Conv2d(1, 1, (3, 3), 1) # in_channel , out_channel , kennel_size , stride

conv2.weight.data = torch.Tensor([[[[1, -1, 1],

[-1, 1, -1],

[1, -1, 1]]

]])

conv3 = nn.Conv2d(1, 1, (3, 3), 1) # in_channel , out_channel , kennel_size , stride

conv3.weight.data = torch.Tensor([[[[-1, -1, 1],

[-1, 1, -1],

[1, -1, -1]]

]])

feature_map1 = conv1(x)

feature_map2 = conv2(x)

feature_map3 = conv3(x)

print(feature_map1 / 9)

print(feature_map2 / 9)

print(feature_map3 / 9)

print("--------------- 池化 ---------------")

max_pool = nn.MaxPool2d(2, padding=0, stride=2) # Pooling

zeroPad = nn.ZeroPad2d(padding=(0, 1, 0, 1)) # pad 0 , Left Right Up Down

feature_map_pad_0_1 = zeroPad(feature_map1)

feature_pool_1 = max_pool(feature_map_pad_0_1)

feature_map_pad_0_2 = zeroPad(feature_map2)

feature_pool_2 = max_pool(feature_map_pad_0_2)

feature_map_pad_0_3 = zeroPad(feature_map3)

feature_pool_3 = max_pool(feature_map_pad_0_3)

print(feature_pool_1.size())

print(feature_pool_1 / 9)

print(feature_pool_2 / 9)

print(feature_pool_3 / 9)

print("--------------- 激活 ---------------")

activation_function = nn.ReLU()

feature_relu1 = activation_function(feature_map1)

feature_relu2 = activation_function(feature_map2)

feature_relu3 = activation_function(feature_map3)

print(feature_relu1 / 9)

print(feature_relu2 / 9)

print(feature_relu3 / 9)

C:\Users\74502\ven\Scripts\python.exe C:/Users/74502/PycharmProjects/pythonProject/fdh.py

torch.Size([1, 1, 9, 9])

tensor([[[[-1., -1., -1., -1., -1., -1., -1., -1., -1.],

[-1., 1., -1., -1., -1., -1., -1., 1., -1.],

[-1., -1., 1., -1., -1., -1., 1., -1., -1.],

[-1., -1., -1., 1., -1., 1., -1., -1., -1.],

[-1., -1., -1., -1., 1., -1., -1., -1., -1.],

[-1., -1., -1., 1., -1., 1., -1., -1., -1.],

[-1., -1., 1., -1., -1., -1., 1., -1., -1.],

[-1., 1., -1., -1., -1., -1., -1., 1., -1.],

[-1., -1., -1., -1., -1., -1., -1., -1., -1.]]]])

--------------- 卷积 ---------------

tensor([[[[ 0.7612, -0.1277, 0.0945, 0.3167, 0.5390, -0.1277, 0.3167],

[-0.1277, 0.9834, -0.1277, 0.3167, -0.1277, 0.0945, -0.1277],

[ 0.0945, -0.1277, 0.9834, -0.3499, 0.0945, -0.1277, 0.5390],

[ 0.3167, 0.3167, -0.3499, 0.5390, -0.3499, 0.3167, 0.3167],

[ 0.5390, -0.1277, 0.0945, -0.3499, 0.9834, -0.1277, 0.0945],

[-0.1277, 0.0945, -0.1277, 0.3167, -0.1277, 0.9834, -0.1277],

[ 0.3167, -0.1277, 0.5390, 0.3167, 0.0945, -0.1277, 0.7612]]]],

grad_fn=<DivBackward0>)

tensor([[[[ 0.3026, -0.5863, 0.0804, -0.1418, 0.0804, -0.5863, 0.3026],

[-0.5863, 0.5248, -0.5863, 0.3026, -0.5863, 0.5248, -0.5863],

[ 0.0804, -0.5863, 0.5248, -0.8085, 0.5248, -0.5863, 0.0804],

[-0.1418, 0.3026, -0.8085, 0.9693, -0.8085, 0.3026, -0.1418],

[ 0.0804, -0.5863, 0.5248, -0.8085, 0.5248, -0.5863, 0.0804],

[-0.5863, 0.5248, -0.5863, 0.3026, -0.5863, 0.5248, -0.5863],

[ 0.3026, -0.5863, 0.0804, -0.1418, 0.0804, -0.5863, 0.3026]]]],

grad_fn=<DivBackward0>)

tensor([[[[ 0.3514, -0.0931, 0.5736, 0.3514, 0.1292, -0.0931, 0.7958],

[-0.0931, 0.1292, -0.0931, 0.3514, -0.0931, 1.0180, -0.0931],

[ 0.5736, -0.0931, 0.1292, -0.3153, 1.0180, -0.0931, 0.1292],

[ 0.3514, 0.3514, -0.3153, 0.5736, -0.3153, 0.3514, 0.3514],

[ 0.1292, -0.0931, 1.0180, -0.3153, 0.1292, -0.0931, 0.5736],

[-0.0931, 1.0180, -0.0931, 0.3514, -0.0931, 0.1292, -0.0931],

[ 0.7958, -0.0931, 0.1292, 0.3514, 0.5736, -0.0931, 0.3514]]]],

grad_fn=<DivBackward0>)

--------------- 池化 ---------------

torch.Size([1, 1, 4, 4])

tensor([[[[0.9834, 0.3167, 0.5390, 0.3167],

[0.3167, 0.9834, 0.3167, 0.5390],

[0.5390, 0.3167, 0.9834, 0.0945],

[0.3167, 0.5390, 0.0945, 0.7612]]]], grad_fn=<DivBackward0>)

tensor([[[[0.5248, 0.3026, 0.5248, 0.3026],

[0.3026, 0.9693, 0.5248, 0.0804],

[0.5248, 0.5248, 0.5248, 0.0804],

[0.3026, 0.0804, 0.0804, 0.3026]]]], grad_fn=<DivBackward0>)

tensor([[[[0.3514, 0.5736, 1.0180, 0.7958],

[0.5736, 0.5736, 1.0180, 0.3514],

[1.0180, 1.0180, 0.1292, 0.5736],

[0.7958, 0.3514, 0.5736, 0.3514]]]], grad_fn=<DivBackward0>)

--------------- 激活 ---------------

tensor([[[[0.7612, 0.0000, 0.0945, 0.3167, 0.5390, 0.0000, 0.3167],

[0.0000, 0.9834, 0.0000, 0.3167, 0.0000, 0.0945, 0.0000],

[0.0945, 0.0000, 0.9834, 0.0000, 0.0945, 0.0000, 0.5390],

[0.3167, 0.3167, 0.0000, 0.5390, 0.0000, 0.3167, 0.3167],

[0.5390, 0.0000, 0.0945, 0.0000, 0.9834, 0.0000, 0.0945],

[0.0000, 0.0945, 0.0000, 0.3167, 0.0000, 0.9834, 0.0000],

[0.3167, 0.0000, 0.5390, 0.3167, 0.0945, 0.0000, 0.7612]]]],

grad_fn=<DivBackward0>)

tensor([[[[0.3026, 0.0000, 0.0804, 0.0000, 0.0804, 0.0000, 0.3026],

[0.0000, 0.5248, 0.0000, 0.3026, 0.0000, 0.5248, 0.0000],

[0.0804, 0.0000, 0.5248, 0.0000, 0.5248, 0.0000, 0.0804],

[0.0000, 0.3026, 0.0000, 0.9693, 0.0000, 0.3026, 0.0000],

[0.0804, 0.0000, 0.5248, 0.0000, 0.5248, 0.0000, 0.0804],

[0.0000, 0.5248, 0.0000, 0.3026, 0.0000, 0.5248, 0.0000],

[0.3026, 0.0000, 0.0804, 0.0000, 0.0804, 0.0000, 0.3026]]]],

grad_fn=<DivBackward0>)

tensor([[[[0.3514, 0.0000, 0.5736, 0.3514, 0.1292, 0.0000, 0.7958],

[0.0000, 0.1292, 0.0000, 0.3514, 0.0000, 1.0180, 0.0000],

[0.5736, 0.0000, 0.1292, 0.0000, 1.0180, 0.0000, 0.1292],

[0.3514, 0.3514, 0.0000, 0.5736, 0.0000, 0.3514, 0.3514],

[0.1292, 0.0000, 1.0180, 0.0000, 0.1292, 0.0000, 0.5736],

[0.0000, 1.0180, 0.0000, 0.3514, 0.0000, 0.1292, 0.0000],

[0.7958, 0.0000, 0.1292, 0.3514, 0.5736, 0.0000, 0.3514]]]],

grad_fn=<DivBackward0>)

进程已结束,退出代码为 0

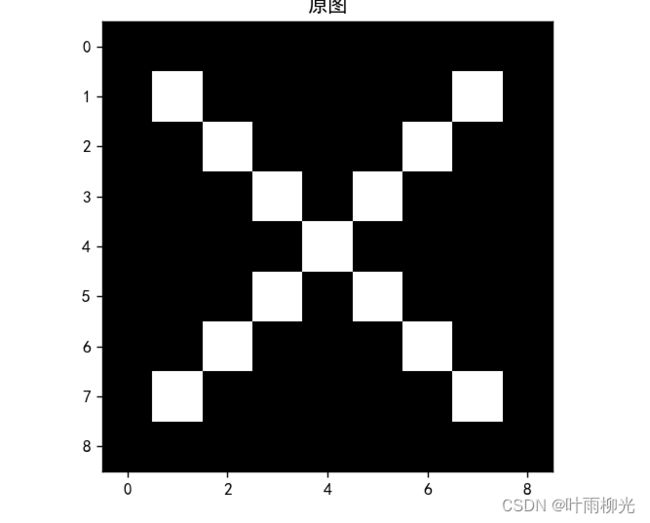

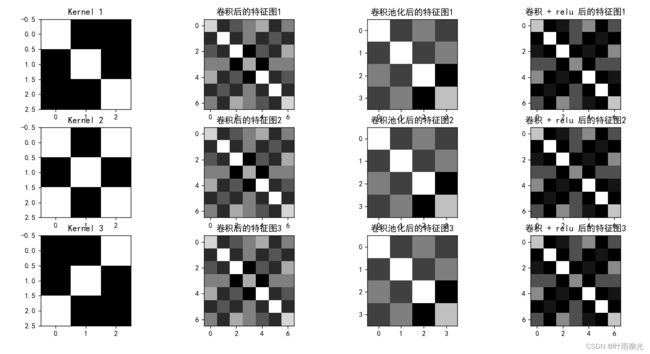

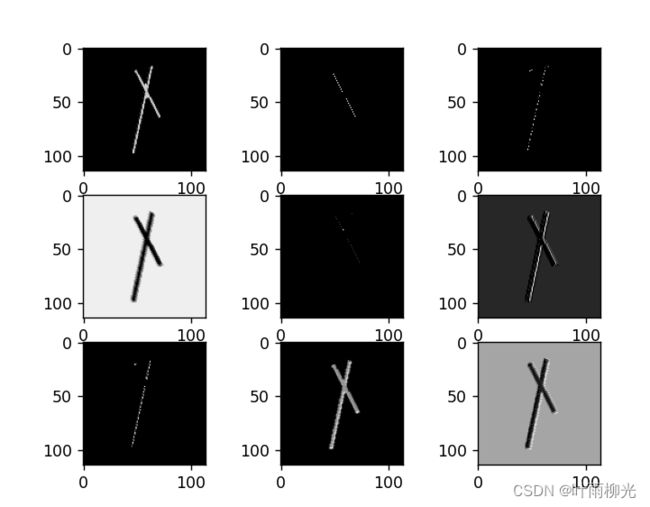

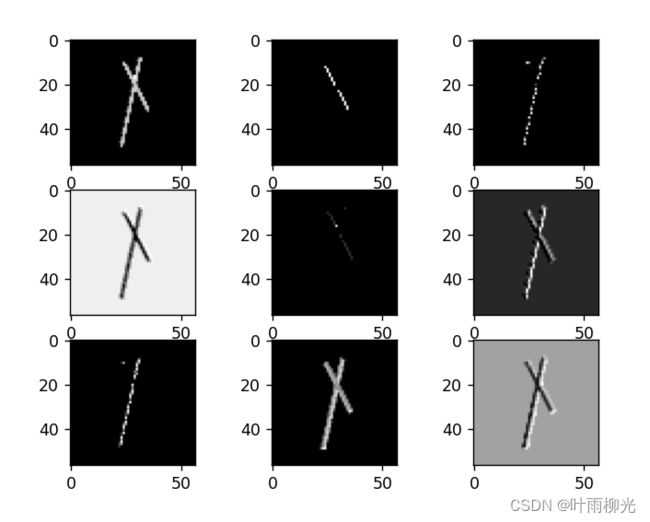

3. 可视化:了解数字与图像之间的关系

可视化卷积核和特征图

import torch

import torch.nn as nn

import matplotlib.pyplot as plt

plt.rcParams['font.sans-serif'] = ['SimHei'] # 用来正常显示中文标签

plt.rcParams['axes.unicode_minus'] = False # 用来正常显示负号 #有中文出现的情况,需要u'内容

x = torch.tensor([[[[-1, -1, -1, -1, -1, -1, -1, -1, -1],

[-1, 1, -1, -1, -1, -1, -1, 1, -1],

[-1, -1, 1, -1, -1, -1, 1, -1, -1],

[-1, -1, -1, 1, -1, 1, -1, -1, -1],

[-1, -1, -1, -1, 1, -1, -1, -1, -1],

[-1, -1, -1, 1, -1, 1, -1, -1, -1],

[-1, -1, 1, -1, -1, -1, 1, -1, -1],

[-1, 1, -1, -1, -1, -1, -1, 1, -1],

[-1, -1, -1, -1, -1, -1, -1, -1, -1]]]], dtype=torch.float)

print(x.shape)

print(x)

img = x.data.squeeze().numpy() # 将输出转换为图片的格式

plt.imshow(img, cmap='gray')

plt.title('原图')

plt.show()

print("--------------- 卷积 ---------------")

conv1 = nn.Conv2d(1, 1, (3, 3), 1) # in_channel , out_channel , kennel_size , stride

conv1.weight.data = torch.Tensor([[[[1, -1, -1],

[-1, 1, -1],

[-1, -1, 1]]

]])

img = conv1.weight.data.squeeze().numpy() # 将输出转换为图片的格式

plt.subplot(3, 4, 1)

plt.imshow(img, cmap='gray')

plt.title('Kernel 1')

conv2 = nn.Conv2d(1, 1, (3, 3), 1) # in_channel , out_channel , kennel_size , stride

conv2.weight.data = torch.Tensor([[[[1, -1, 1],

[-1, 1, -1],

[1, -1, 1]]

]])

img = conv2.weight.data.squeeze().numpy() # 将输出转换为图片的格式

plt.subplot(3, 4, 5)

plt.imshow(img, cmap='gray')

plt.title('Kernel 2')

conv3 = nn.Conv2d(1, 1, (3, 3), 1) # in_channel , out_channel , kennel_size , stride

conv3.weight.data = torch.Tensor([[[[-1, -1, 1],

[-1, 1, -1],

[1, -1, -1]]

]])

img = conv3.weight.data.squeeze().numpy() # 将输出转换为图片的格式

plt.subplot(3, 4, 9)

plt.imshow(img, cmap='gray')

plt.title('Kernel 3')

feature_map1 = conv1(x)

feature_map2 = conv2(x)

feature_map3 = conv3(x)

print(feature_map1 / 9)

print(feature_map2 / 9)

print(feature_map3 / 9)

img = feature_map1.data.squeeze().numpy() # 将输出转换为图片的格式

plt.subplot(3, 4, 2)

plt.imshow(img, cmap='gray')

plt.title('卷积后的特征图1')

img2 = feature_map2.data.squeeze().numpy() # 将输出转换为图片的格式

plt.subplot(3, 4, 6)

plt.imshow(img, cmap='gray')

plt.title('卷积后的特征图2')

img3 = feature_map3.data.squeeze().numpy() # 将输出转换为图片的格式

plt.subplot(3, 4, 10)

plt.imshow(img, cmap='gray')

plt.title('卷积后的特征图3')

print("--------------- 池化 ---------------")

max_pool = nn.MaxPool2d(2, padding=0, stride=2) # Pooling

zeroPad = nn.ZeroPad2d(padding=(0, 1, 0, 1)) # pad 0 , Left Right Up Down

feature_map_pad_0_1 = zeroPad(feature_map1)

feature_pool_1 = max_pool(feature_map_pad_0_1)

feature_map_pad_0_2 = zeroPad(feature_map2)

feature_pool_2 = max_pool(feature_map_pad_0_2)

feature_map_pad_0_3 = zeroPad(feature_map3)

feature_pool_3 = max_pool(feature_map_pad_0_3)

print(feature_pool_1.size())

print(feature_pool_1 / 9)

print(feature_pool_2 / 9)

print(feature_pool_3 / 9)

img = feature_pool_1.data.squeeze().numpy() # 将输出转换为图片的格式

plt.subplot(3, 4, 3)

plt.imshow(img, cmap='gray')

plt.title('卷积池化后的特征图1')

img2 = feature_pool_2.data.squeeze().numpy() # 将输出转换为图片的格式

plt.subplot(3, 4, 7)

plt.imshow(img, cmap='gray')

plt.title('卷积池化后的特征图2')

img3 = feature_pool_3.data.squeeze().numpy() # 将输出转换为图片的格式

plt.subplot(3, 4, 11)

plt.imshow(img, cmap='gray')

plt.title('卷积池化后的特征图3')

print("--------------- 激活 ---------------")

activation_function = nn.ReLU()

feature_relu1 = activation_function(feature_map1)

feature_relu2 = activation_function(feature_map2)

feature_relu3 = activation_function(feature_map3)

print(feature_relu1 / 9)

print(feature_relu2 / 9)

print(feature_relu3 / 9)

img = feature_relu1.data.squeeze().numpy() # 将输出转换为图片的格式

plt.subplot(3, 4, 4)

plt.imshow(img, cmap='gray')

plt.title('卷积 + relu 后的特征图1')

img2 = feature_relu2.data.squeeze().numpy() # 将输出转换为图片的格式

plt.subplot(3, 4, 8)

plt.imshow(img, cmap='gray')

plt.title('卷积 + relu 后的特征图2')

img3 = feature_relu3.data.squeeze().numpy() # 将输出转换为图片的格式

plt.subplot(3, 4, 12)

plt.imshow(img, cmap='gray')

plt.title('卷积 + relu 后的特征图3')

plt.show()

C:\Users\74502\ven\Scripts\python.exe C:/Users/74502/PycharmProjects/pythonProject/fdh.py

torch.Size([1, 1, 9, 9])

tensor([[[[-1., -1., -1., -1., -1., -1., -1., -1., -1.],

[-1., 1., -1., -1., -1., -1., -1., 1., -1.],

[-1., -1., 1., -1., -1., -1., 1., -1., -1.],

[-1., -1., -1., 1., -1., 1., -1., -1., -1.],

[-1., -1., -1., -1., 1., -1., -1., -1., -1.],

[-1., -1., -1., 1., -1., 1., -1., -1., -1.],

[-1., -1., 1., -1., -1., -1., 1., -1., -1.],

[-1., 1., -1., -1., -1., -1., -1., 1., -1.],

[-1., -1., -1., -1., -1., -1., -1., -1., -1.]]]])

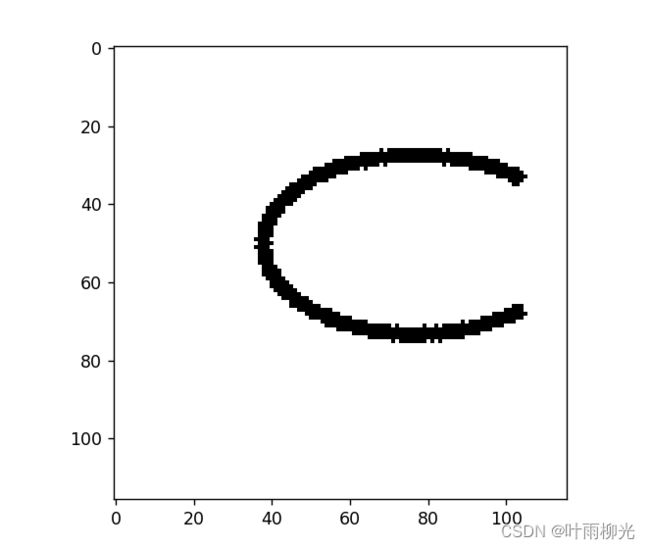

二、 基于CNN的XO识别

构建模型

class Net(nn.Module):

def __init__(self):

super(Net, self).__init__()

self.conv1 = nn.Conv2d(1, 9, 3)

self.maxpool = nn.MaxPool2d(2, 2)

self.conv2 = nn.Conv2d(9, 5, 3)

self.relu = nn.ReLU()

self.fc1 = nn.Linear(27 * 27 * 5, 1200)

self.fc2 = nn.Linear(1200, 64)

self.fc3 = nn.Linear(64, 2)

def forward(self, x):

x = self.maxpool(self.relu(self.conv1(x)))

x = self.maxpool(self.relu(self.conv2(x)))

x = x.view(-1, 27 * 27 * 5)

x = self.relu(self.fc1(x))

x = self.relu(self.fc2(x))

x = self.fc3(x)

return x

训练模型

model = Net()

criterion = torch.nn.CrossEntropyLoss() # 损失函数 交叉熵损失函数

optimizer = optim.SGD(model.parameters(), lr=0.1) # 优化函数:随机梯度下降

use_gpu = torch.cuda.is_available()

if use_gpu: # 有cuda

print("use gpu for training")

criterion = criterion.cuda()

model = model.to('cuda')

epochs = 10

for epoch in range(epochs):

running_loss = 0.0

for i, data in enumerate(data_loader):

images, label = data

out = model(images.to('cuda')).to('cuda')

loss = criterion(out, label.to('cuda'))

optimizer.zero_grad()

loss.backward()

optimizer.step()

running_loss += loss.item()

if (i + 1) % 10 == 0:

print('[%d %5d] loss: %.3f' % (epoch + 1, i + 1, running_loss / 100))

running_loss = 0.0

print('finished train')

# 保存模型

torch.save(model, 'model_name.pth') # 保存的是模型, 不止是w和b权重值

# torch.save(model.state_dict(), 'model_name1.pth') # 保存的是w和b权重值

[1 10] loss: 0.069

[1 20] loss: 0.069

[2 10] loss: 0.069

[2 20] loss: 0.068

[3 10] loss: 0.066

[3 20] loss: 0.061

[4 10] loss: 0.067

[4 20] loss: 0.062

[5 10] loss: 0.061

[5 20] loss: 0.065

[6 10] loss: 0.069

[6 20] loss: 0.069

[7 10] loss: 0.069

[7 20] loss: 0.069

[8 10] loss: 0.069

[8 20] loss: 0.068

[9 10] loss: 0.061

[9 20] loss: 0.045

[10 10] loss: 0.018

[10 20] loss: 0.077

finished train

进程已结束,退出代码为 0

测试训练好的模型

# 读取模型

model_load = torch.load('model_name.pth')

# 读取一张图片 images[0],测试

print("labels[0] truth:\t", labels[0])

x = images[0]

x = x.reshape([1, x.shape[0], x.shape[1], x.shape[2]])

predicted = torch.max(model_load(x), 1)

print("labels[0] predict:\t", predicted.indices)

img = images[0].data.squeeze().numpy() # 将输出转换为图片的格式

plt.imshow(img, cmap='gray')

plt.show()

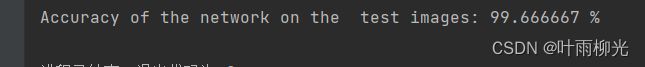

计算模型的准确率

model = Net()

model.load_state_dict(torch.load('model_name1.pth', map_location='cpu')) # 导入网络的参数

correct = 0

total = 0

with torch.no_grad(): # 进行评测的时候网络不更新梯度

for data in data_loader_test: # 读取测试集

images, labels = data

outputs = model(images)

_, predicted = torch.max(outputs.data, 1) # 取出 最大值的索引 作为 分类结果

total += labels.size(0) # labels 的长度

correct += (predicted == labels).sum().item() # 预测正确的数目

print('Accuracy of the network on the test images: %f %%' % (100. * correct / total))

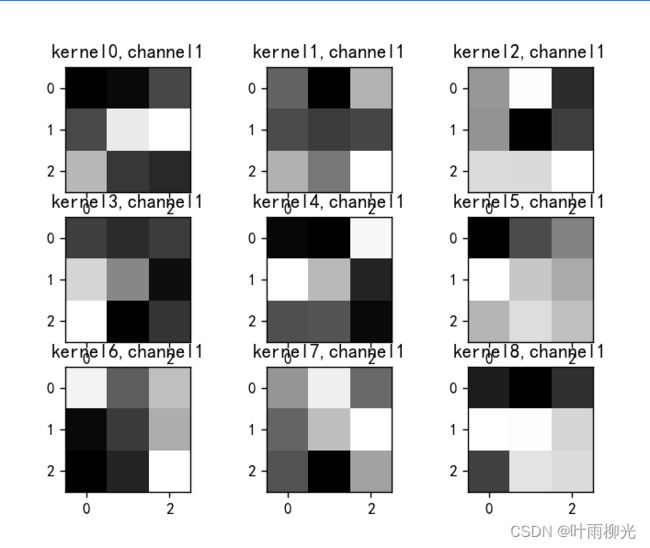

查看训练好的模型的卷积核

import torch

import matplotlib.pyplot as plt

import numpy as np

from PIL import Image

from torchvision import transforms, datasets

import torch.nn as nn

from torch.utils.data import DataLoader

plt.rcParams['font.sans-serif'] = ['SimHei'] # 用来正常显示中文标签

plt.rcParams['axes.unicode_minus'] = False # 用来正常显示负号 #有中文出现的情况,需要u'内容

# 定义图像预处理过程(要与网络模型训练过程中的预处理过程一致)

transforms = transforms.Compose([

transforms.ToTensor(), # 把图片进行归一化,并把数据转换成Tensor类型

transforms.Grayscale(1) # 把图片 转为灰度图

])

path = r'D:\project\DL\training_data_sm'

data_train = datasets.ImageFolder(path, transform=transforms)

data_loader = DataLoader(data_train, batch_size=64, shuffle=True)

for i, data in enumerate(data_loader):

images, labels = data

break

class Net(nn.Module):

def __init__(self):

super(Net, self).__init__()

self.conv1 = nn.Conv2d(1, 9, 3) # in_channel , out_channel , kennel_size , stride

self.maxpool = nn.MaxPool2d(2, 2)

self.conv2 = nn.Conv2d(9, 5, 3) # in_channel , out_channel , kennel_size , stride

self.relu = nn.ReLU()

self.fc1 = nn.Linear(27 * 27 * 5, 1200) # full connect 1

self.fc2 = nn.Linear(1200, 64) # full connect 2

self.fc3 = nn.Linear(64, 2) # full connect 3

def forward(self, x):

outputs = []

x = self.maxpool(self.relu(self.conv1(x)))

# outputs.append(x)

x = self.maxpool(self.relu(self.conv2(x)))

outputs.append(x)

x = x.view(-1, 27 * 27 * 5)

x = self.relu(self.fc1(x))

x = self.relu(self.fc2(x))

x = self.fc3(x)

return outputs

# create model

model1 = Net()

# load model weights加载预训练权重

model_weight_path = "model_name1.pth"

model1.load_state_dict(torch.load(model_weight_path))

x = images[0]

x = x.reshape([1, x.shape[0], x.shape[1], x.shape[2]])

# forward正向传播过程

out_put = model1(x)

weights_keys = model1.state_dict().keys()

for key in weights_keys:

print("key :", key)

# 卷积核通道排列顺序 [kernel_number, kernel_channel, kernel_height, kernel_width]

if key == "conv1.weight":

weight_t = model1.state_dict()[key].numpy()

print("weight_t.shape", weight_t.shape)

k = weight_t[:, 0, :, :] # 获取第一个卷积核的信息参数

# show 9 kernel ,1 channel

plt.figure()

for i in range(9):

ax = plt.subplot(3, 3, i + 1) # 参数意义:3:图片绘制行数,5:绘制图片列数,i+1:图的索引

plt.imshow(k[i, :, :], cmap='gray')

title_name = 'kernel' + str(i) + ',channel1'

plt.title(title_name)

plt.show()

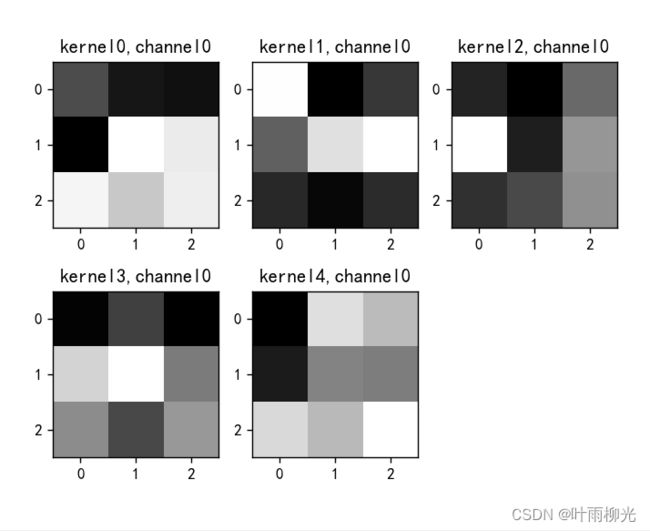

if key == "conv2.weight":

weight_t = model1.state_dict()[key].numpy()

print("weight_t.shape", weight_t.shape)

k = weight_t[:, :, :, :] # 获取第一个卷积核的信息参数

print(k.shape)

print(k)

plt.figure()

for c in range(9):

channel = k[:, c, :, :]

for i in range(5):

ax = plt.subplot(2, 3, i + 1) # 参数意义:3:图片绘制行数,5:绘制图片列数,i+1:图的索引

plt.imshow(channel[i, :, :], cmap='gray')

title_name = 'kernel' + str(i) + ',channel' + str(c)

plt.title(title_name)

plt.show()

总结

第一部分作业为numpy卷积-池化-激活和使用Pytorch包装好的卷积-池化-激活层,加深了一下这三层的印象第二部分作业主要是基于Pytorch搭建简单的卷积网络来实现XO识别,熟悉了操作的流程。