Deep Learning Week 9: Cat vs. Dog Recognition 2

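- This post is a learning-record blog entry from the 365天深度学习训练营 (365-Day Deep Learning Training Camp)

- Reference article: 365天深度学习训练营, Week 9: Cat vs. Dog Recognition 2 (readable by training-camp members only)

- Original author: K同学啊 | tutoring and custom projects available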

Week 9: Cat vs. Dog Recognition 2

● Difficulty: consolidating the basics ⭐⭐

● Language: Python3, TensorFlow2

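Requirements:

- Find and fix the bug in the Week 8 program (this post gives the answer)

The Week 8 bug lies in the recorded loss and accuracy history for the training and test sets: a value is produced once per batch rather than once per epoch, so the per-batch values have to be averaged over each epoch before they are stored.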
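The fix described above amounts to collecting the per-batch values during an epoch and storing only their mean once the epoch ends. A minimal sketch in plain Python; the numbers and the helper name `epoch_mean` are illustrative and do not come from the original notebook:

```python
from statistics import fmean

def epoch_mean(batch_values):
    """Collapse the per-batch values of one epoch into a single number."""
    return fmean(batch_values)

# Hypothetical per-batch losses for two epochs (made-up numbers).
per_batch_loss = [
    [0.70, 0.66, 0.62, 0.58],   # epoch 1
    [0.50, 0.46, 0.44, 0.40],   # epoch 2
]

# One history entry per epoch, not one per batch.
history_loss = [epoch_mean(epoch) for epoch in per_batch_loss]
print(history_loss)
```

A plain mean weights every batch equally, which matches a per-sample average only when all batches have the same size.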

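Stretch goals (optional):

- Try adding data augmentation to improve accuracy

- Which methods can be used for data augmentation? (The answer is given next week)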
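As one possible direction for the augmentation question, and not the training camp's official answer, the sketch below applies a random horizontal flip and a brightness change to a single image using NumPy only. The function name `augment`, the 50% flip probability, and the 0.8 to 1.2 brightness range are assumptions chosen for illustration.

```python
import numpy as np

def augment(image, rng):
    """Randomly flip an HxWxC image left-right and jitter its brightness."""
    if rng.random() < 0.5:
        image = image[:, ::-1, :]              # horizontal flip
    factor = rng.uniform(0.8, 1.2)             # brightness scale (assumed range)
    return np.clip(image * factor, 0.0, 1.0)   # keep pixel values in [0, 1]

rng = np.random.default_rng(0)
image = rng.random((224, 224, 3)).astype("float32")  # stand-in for a normalized photo
augmented = augment(image, rng)
print(augmented.shape)
```

Augmentation of this kind is normally applied to the training set only, so that validation results stay comparable between runs.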

Exploration (fairly difficult)

- The code in this post is quite redundant; please streamline it

I. Preparation

import tensorflow as tf

import matplotlib.pyplot as plt

gpus = tf.config.list_physical_devices("GPU")

if gpus:

    tf.config.experimental.set_memory_growth(gpus[0], True)  # allocate GPU memory on demand

    tf.config.set_visible_devices([gpus[0]], "GPU")

# Print the GPU info to confirm that a GPU is available

print(gpus)

[PhysicalDevice(name='/physical_device:GPU:0', device_type='GPU')]

2. Import the data

import PIL,pathlib

dir_path = r'/home/mw/input/88559128/dataset/dataset/365-7-data'

dir_path = pathlib.Path(dir_path)

image_cnt = len(list(dir_path.glob('*/*.jpg')))

image_cnt

3400

II. Data preprocessing

1. Load the data

batch_size = 8

img_height = 224

img_width = 224

train_ds = tf.keras.preprocessing.image_dataset_from_directory(dir_path,

                                                               batch_size=batch_size,

                                                               image_size=(img_height, img_width),

                                                               seed=12,

                                                               shuffle=True,

                                                               validation_split=0.2,

                                                               subset="training")

val_ds = tf.keras.preprocessing.image_dataset_from_directory(dir_path,

                                                             batch_size=batch_size,

                                                             image_size=(img_height, img_width),

                                                             seed=12,

                                                             shuffle=True,

                                                             validation_split=0.2,

                                                             subset="validation")

class_names = train_ds.class_names

class_names

['cat', 'dog']

2. Check the data again

for image_batch, labels_batch in train_ds:

    print(image_batch.shape)

    print(labels_batch.shape)

    break

(8, 224, 224, 3)

(8,)
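The shapes above show 8 images per batch. The arithmetic below works out how many images and batches each subset should contain, assuming the validation subset is taken as `int(0.2 * 3400)` images and the last batch is kept even if it is smaller. The variable names are chosen for this sketch.

```python
image_cnt = 3400          # number of images reported earlier in this post
validation_split = 0.2
batch_size = 8

val_count = int(image_cnt * validation_split)   # images held out for validation
train_count = image_cnt - val_count             # images left for training

# Ceiling division: a final, smaller batch still counts as one batch.
train_batches = -(-train_count // batch_size)
val_batches = -(-val_count // batch_size)

print(train_count, val_count, train_batches, val_batches)  # 2720 680 340 85
```

These batch counts are what `len(train_ds)` and `len(val_ds)` would be expected to return under the same assumptions.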

3. Configure the dataset

from tensorflow.keras import models,layers

import numpy as np

AUTOTUNE = tf.data.experimental.AUTOTUNE

def preprocess_image(image, label):

    return (image / 255.0, label)

# Normalize pixel values to [0, 1]

train_ds = train_ds.map(preprocess_image, num_parallel_calls=AUTOTUNE)

val_ds = val_ds.map(preprocess_image, num_parallel_calls=AUTOTUNE)

train_ds = train_ds.cache().shuffle(1000).prefetch(buffer_size=AUTOTUNE)

val_ds = val_ds.cache().prefetch(buffer_size=AUTOTUNE)

4. View the images
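A minimal sketch of how one batch could be displayed, assuming the images are already normalized to [0, 1] and the labels are integer class indices. The helper `show_batch` is hypothetical, and random pixels stand in for real photos so the block runs on its own. The `Agg` backend is selected only so the sketch works without a display; drop that line in a notebook.

```python
import matplotlib
matplotlib.use("Agg")  # headless backend; remove this line in a notebook
import matplotlib.pyplot as plt
import numpy as np

def show_batch(images, labels, class_names, cols=8):
    """Plot up to `cols` images of one batch in a single row, titled by class."""
    n = min(cols, len(images))
    fig, axes = plt.subplots(1, n, figsize=(2 * n, 2.5), squeeze=False)
    for ax, image, label in zip(axes[0], images, labels):
        ax.imshow(image)                       # expects floats in [0, 1]
        ax.set_title(class_names[int(label)])
        ax.axis("off")
    return fig

# Stand-in batch: random pixels in place of real photos.
rng = np.random.default_rng(0)
images = rng.random((8, 224, 224, 3))
labels = np.array([0, 1, 1, 0, 0, 1, 0, 1])
fig = show_batch(images, labels, ["cat", "dog"])
```

With the dataset from this post, the same helper could be called on one batch, for example `for images, labels in train_ds.take(1): show_batch(images.numpy(), labels.numpy(), class_names)`.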


III. Build the network model

from tensorflow.keras import layers, models, Input

from tensorflow.keras.models import Model

from tensorflow.keras.layers import Conv2D, MaxPooling2D, Dense, Flatten, Dropout

def VGG16(nb_classes, input_shape):

    input_tensor = Input(shape=input_shape)

    # 1st block

    x = Conv2D(64, (3,3), activation='relu', padding='same', name='block1_conv1')(input_tensor)

    x = Conv2D(64, (3,3), activation='relu', padding='same', name='block1_conv2')(x)

    x = MaxPooling2D((2,2), strides=(2,2), name='block1_pool')(x)

    # 2nd block

    x = Conv2D(128, (3,3), activation='relu', padding='same', name='block2_conv1')(x)

    x = Conv2D(128, (3,3), activation='relu', padding='same', name='block2_conv2')(x)

    x = MaxPooling2D((2,2), strides=(2,2), name='block2_pool')(x)

    # 3rd block

    x = Conv2D(256, (3,3), activation='relu', padding='same', name='block3_conv1')(x)

    x = Conv2D(256, (3,3), activation='relu', padding='same', name='block3_conv2')(x)

    x = Conv2D(256, (3,3), activation='relu', padding='same', name='block3_conv3')(x)

    x = MaxPooling2D((2,2), strides=(2,2), name='block3_pool')(x)

    # 4th block

    x = Conv2D(512, (3,3), activation='relu', padding='same', name='block4_conv1')(x)

    x = Conv2D(512, (3,3), activation='relu', padding='same', name='block4_conv2')(x)

    x = Conv2D(512, (3,3), activation='relu', padding='same', name='block4_conv3')(x)

    x = MaxPooling2D((2,2), strides=(2,2), name='block4_pool')(x)

    # 5th block

    x = Conv2D(512, (3,3), activation='relu', padding='same', name='block5_conv1')(x)

    x = Conv2D(512, (3,3), activation='relu', padding='same', name='block5_conv2')(x)

    x = Conv2D(512, (3,3), activation='relu', padding='same', name='block5_conv3')(x)

    x = MaxPooling2D((2,2), strides=(2,2), name='block5_pool')(x)

    # fully connected layers

    x = Flatten()(x)

    x = Dense(1024, activation='relu', name='fc1')(x)

    x = Dense(128, activation='relu', name='fc2')(x)

    output_tensor = Dense(nb_classes, activation='softmax', name='predictions')(x)

    model = Model(input_tensor, output_tensor)

    return model

# Keras expects (height, width, channels); the output layer has 2 classes instead of the original 1000

model = VGG16(len(class_names), (img_height, img_width, 3))

model.summary()

model.compile(optimizer='adam',

              loss=tf.keras.losses.sparse_categorical_crossentropy,

              metrics=['accuracy'])
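To see why the first dense layer dominates the parameter count reported by `model.summary()`, the arithmetic can be done by hand. The sketch below assumes a 224x224 input, five 2x2 max-pooling layers with stride 2, and 512 channels in the last block, as in the model above. The helper name `vgg16_flatten_size` is chosen for this sketch.

```python
def vgg16_flatten_size(height, width, pools=5, channels=512):
    """Size of the Flatten output after `pools` 2x2, stride-2 max-pooling layers."""
    for _ in range(pools):
        height //= 2   # each pooling layer halves the spatial size
        width //= 2
    return height * width * channels

flat = vgg16_flatten_size(224, 224)   # 7 * 7 * 512
fc1_params = flat * 1024 + 1024       # weights plus biases of Dense(1024)
print(flat, fc1_params)               # 25088 25691136
```

The convolutions use `padding='same'`, so only the pooling layers change the spatial size.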

IV. Model training

New concepts

tqdm parameters

class tqdm(object):

    """

    Decorate an iterable object, returning an iterator which acts exactly

    like the original iterable, but prints a dynamically updating

    progressbar every time a value is requested.

    """

    def __init__(self, iterable=None, desc=None, total=None, leave=False,

                 file=sys.stderr, ncols=None, mininterval=0.1,

                 maxinterval=10.0, miniters=None, ascii=None,

                 disable=False, unit='it', unit_scale=False,

                 dynamic_ncols=False, smoothing=0.3, nested=False,

                 bar_format=None, initial=0, gui=False):

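iterable: the iterable to wrap; not needed when the bar is updated manually

desc: str, descriptive text shown to the left of the progress bar

total: total number of items

leave: bool, whether to keep the progress bar on screen after iteration finishes

file: where the output is written; defaults to the terminal and usually does not need to be set

ncols: width of the progress bar; by default it adapts to the environment, and if set to 0 no bar is drawn, only the statistics

unit: text describing the items being processed, 'it' by default, e.g. 100 it/s; when processing images, setting it to 'img' gives 100 img/s

unit_scale: automatically scales the processing rate using SI prefixes, e.g. 100000 it/s >> 100k it/s

colour: colour of the progress bar, e.g. 'green', '#00ff00'. Supported colours: "BLACK, RED, GREEN, YELLOW, BLUE, MAGENTA, CYAN, WHITE"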

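train_on_batch function prototype (TensorFlow 2.x)

results = Model.train_on_batch(

    x,

    y=None,

    sample_weight=None,

    class_weight=None,

    reset_metrics=True,

    return_dict=False,

)

Parameter details:

- x: model input; a single NumPy array for a single-input model, a list of NumPy arrays for a multi-input model

- y: labels; a single NumPy array for a single-output model, a list of NumPy arrays for a multi-output model

- sample_weight: weight of each sample in the mini-batch, with shape (batch_size)

- class_weight: class weights applied to the loss function so that each class contributes a weighted loss; mainly used for imbalanced classes, with shape (num_classes)

- reset_metrics: defaults to True, in which case the returned metrics cover only this mini-batch; if False, the metrics accumulate across batches

- return_dict: defaults to False, in which case results is the scalar loss, or a list of the loss followed by the metric values when the model has metrics; if True, results is a dict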

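- Advantages

● Finer-grained control over the training process and more precise collection of loss and metrics

● Step-by-step model training, as needed when implementing a GAN

● More convenient model saving when training on multiple GPUs

● More flexible ways of loading data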
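The difference between per-batch and accumulated metrics that `reset_metrics` controls can be pictured with plain arithmetic. The sketch below does not call Keras; it only mimics the two reporting styles for an accuracy metric, using made-up counts of correct predictions.

```python
# Hypothetical (correct predictions, batch size) pairs for three batches.
batches = [(6, 8), (7, 8), (8, 8)]

# Per-batch view: each value describes one mini-batch only.
per_batch = [correct / size for correct, size in batches]

# Accumulated view: each value describes all samples seen so far.
running = []
seen_correct = seen_total = 0
for correct, size in batches:
    seen_correct += correct
    seen_total += size
    running.append(seen_correct / seen_total)

print(per_batch)  # [0.75, 0.875, 1.0]
print(running)    # [0.75, 0.8125, 0.875]
```

With equal batch sizes, the mean of the per-batch values (0.875 here) equals the last accumulated value, which is why averaging per-batch results gives a sensible epoch-level figure.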

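tf.keras.backend

The Keras backend API

A context manager for use when defining a Python op

…backend.set_value function

Sets the value of a variable from a NumPy array.

Parameters:

x: the variable (tensor) to set to a new value.

value: the value to assign, given as a NumPy array of the same shape.

tf.keras.backend.get_value(x) returns the value of a variable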

from tqdm import tqdm

import tensorflow.keras.backend as K

epochs = 12

lr = 1e-4

#记录训练数据,方便后续分析

history_train_loss = []

history_train_accuracy = []

history_val_loss = []

history_val_accuracy = []

for epoch in range(epochs):

train_total = len(train_ds)

val_total = len(val_ds)

"""

total:预期的迭代数目

ncols:控制进度条宽度

mininterval:进度更新最小间隔,以秒为单位(默认值:0.1)

"""

with tqdm(total=train_total,desc=f'Epoch {epoch+1}/{epochs}',mininterval=1,ncols=100) as pbar:

lr = lr*0.9 #随epoch减小学习率

K.set_value(model.optimizer.lr,lr) ##通过K.set_value对原模型的学习率进行更新

train_loss = []

train_accuracy = []

for image,label in train_ds:

history = model.train_on_batch(image,label) #输入数据与对应标签

train_loss.append(history[0]) #这里我们是既有loss也由metrics的单输出模型

train_accuracy.append(history[1])

pbar.set_postfix({"loss": "%.4f"%history[0],

"accuracy":"%.4f"%history[1],

"lr": K.get_value(model.optimizer.lr)})

pbar.update(1)

history_train_loss.append(np.mean(train_loss))

history_train_accuracy.append(np.mean(train_accuracy))

print('开始验证!')

with tqdm(total=val_total, desc=f'Epoch {epoch + 1}/{epochs}',mininterval=0.5,ncols=100) as pbar:

val_loss = []

val_accuracy = []

for image,label in val_ds:

# 这里生成的是每一个batch的acc与loss而不是每一个echo,因此要取平均值,这也是上一周的逻辑错误

history = model.test_on_batch(image,label)

val_loss.append(history[0])

val_accuracy.append(history[1])

pbar.set_postfix({"val_loss": "%.4f"%history[0],

"val_acc":"%.4f"%history[1]})

pbar.update(1)

history_val_loss.append(np.mean(val_loss))

history_val_accuracy.append(np.mean(val_accuracy))

print('结束验证!')

print("验证loss为:%.4f"%np.mean(val_loss))

print("验证准确率为:%.4f"%np.mean(val_accuracy))

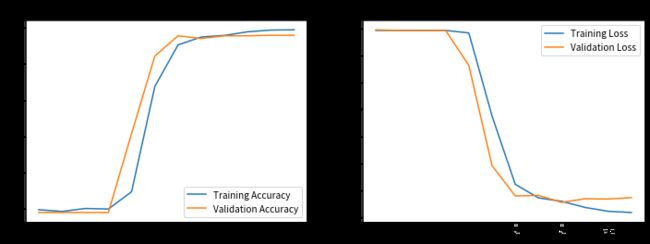

最终

验证loss为:0.0728

验证准确率为:0.9794
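Because the loop multiplies the learning rate by 0.9 at the start of every epoch, the value used in each epoch can be listed ahead of time. A small sketch of that schedule, using the starting value and epoch count from the code above; the name `schedule` is chosen for this sketch:

```python
initial_lr = 1e-4
decay = 0.9
epochs = 12

# The decay is applied before the first batch of each epoch,
# so epoch k (1-based) trains with initial_lr * decay**k.
schedule = [initial_lr * decay ** k for k in range(1, epochs + 1)]

for k, lr in enumerate(schedule, start=1):
    print(f"epoch {k:2d}: lr = {lr:.3e}")
```

The first epoch therefore runs at 9e-5 rather than 1e-4, and the last one at roughly 2.8e-5.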

V. Model evaluation

epochs_range = range(epochs)

plt.figure(figsize=(12, 4))

plt.subplot(1, 2, 1)

plt.plot(epochs_range, history_train_accuracy, label='Training Accuracy')

plt.plot(epochs_range, history_val_accuracy, label='Validation Accuracy')

plt.legend(loc='lower right')

plt.title('Training and Validation Accuracy')

plt.subplot(1, 2, 2)

plt.plot(epochs_range, history_train_loss, label='Training Loss')

plt.plot(epochs_range, history_val_loss, label='Validation Loss')

plt.legend(loc='upper right')

plt.title('Training and Validation Loss')

plt.show()

import numpy as np

# Use the trained model to look at some predictions

plt.figure(figsize=(18, 3))  # figure is 18 wide and 3 high

plt.suptitle("Prediction results")

for images, labels in val_ds.take(1):

    for i in range(8):

        ax = plt.subplot(1, 8, i + 1)

        # Show the image

        plt.imshow(images[i].numpy())

        # Add a batch dimension to the image

        img_array = tf.expand_dims(images[i], 0)

        # Use the model to predict the animal in the image

        predictions = model.predict(img_array)

        plt.title(class_names[np.argmax(predictions)])

        plt.axis("off")
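As a possible simplification, the model could be asked for predictions on the whole batch at once, with the class index taken per row. The sketch below uses a hand-written array in place of the output of `model.predict(images)`; the probabilities are made up, and only the `argmax` step is the point.

```python
import numpy as np

class_names = ["cat", "dog"]

# Stand-in for model.predict(images): one softmax row per image (made-up numbers).
predictions = np.array([
    [0.92, 0.08],
    [0.15, 0.85],
    [0.40, 0.60],
])

predicted_ids = np.argmax(predictions, axis=1)   # index of the largest probability in each row
predicted_names = [class_names[i] for i in predicted_ids]
print(predicted_names)  # ['cat', 'dog', 'dog']
```

Predicting once per batch avoids eight separate `predict` calls inside the plotting loop.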
