Tutorial: Running MindSpore Programs on ModelArts

I. Running Hello World

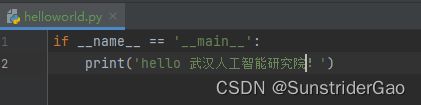

1. Create helloworld.py

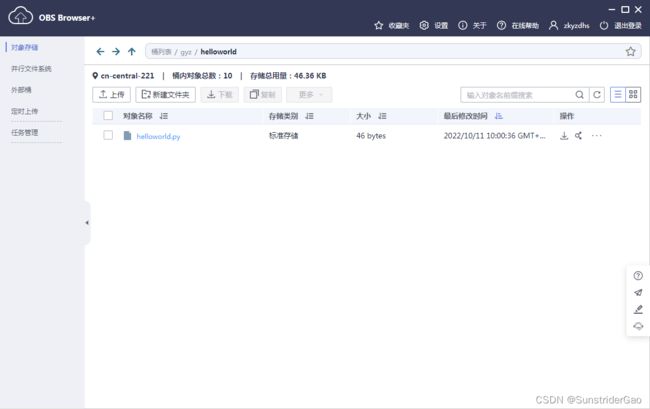
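The contents of helloworld.py are only shown in the screenshot. As a minimal sketch, assuming the script only needs to print a recognizable string that can later be searched for in the job log, it could look like the following. The marker text and the `main` function are hypothetical and not taken from the screenshot.

```python
# helloworld.py: hypothetical minimal script for a first ModelArts job.
# The marker text is an assumption; any distinctive string works.
MARKER = "Hello, ModelArts!"


def main():
    # flush=True so the line reaches the job log without buffering delays
    print(MARKER, flush=True)
    return MARKER


if __name__ == "__main__":
    main()
```

A distinctive marker makes the log search in step 5 unambiguous.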

2. Upload to OBS

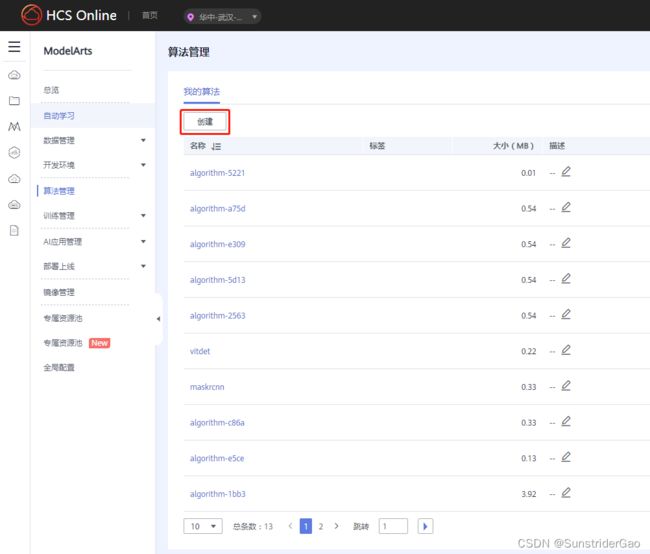

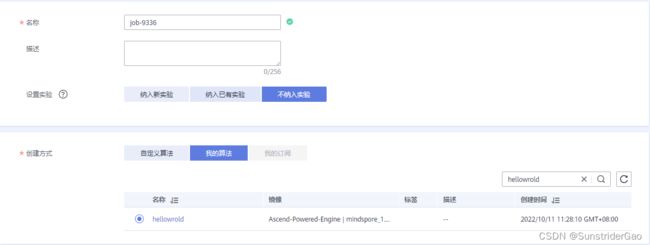

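3. Create an algorithm

Click Algorithm Management, then Create.

Enter a name. For the boot mode, select a preset framework and the corresponding MindSpore version. For the code directory, select the OBS path where the code is stored. The boot file is the Python script to run.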

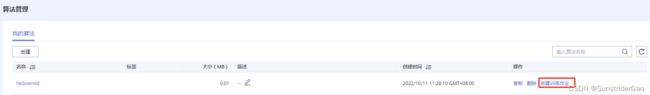

Finally, click Submit to finish creating the algorithm.

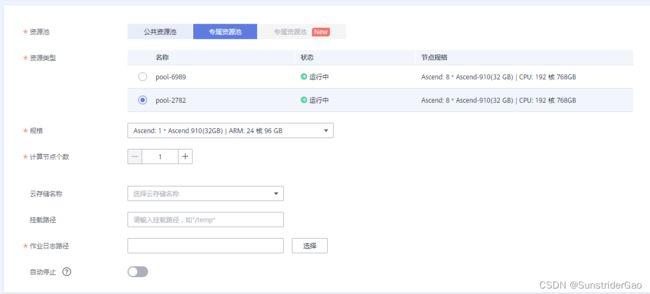

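4. Create a training job

Once the algorithm has been created, click Create Training Job.

Choose not to include the job in an experiment.

Select the dedicated resource pool pool-2782. pool-6989 currently has a problem: jobs that use it hang at "Wait for Rank table file ready" after starting.

Select the storage path for the job logs.

Finally, click Submit to finish creating the training job.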

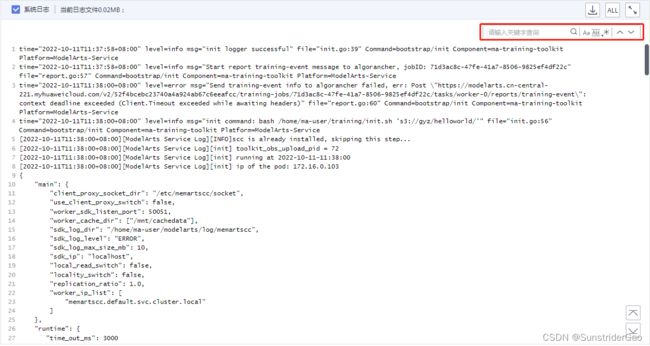

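5. View the logs

After the training job is created, wait for the program to finish running, then click the job name to view its run log.

Search the log for the string the program prints. If it was printed, the program ran correctly.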
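If the log has been downloaded to a local file, the same check can be scripted. This is a minimal sketch using only the Python standard library; the `log_contains` helper and the file name in the usage comment are hypothetical.

```python
from pathlib import Path


def log_contains(log_path, marker):
    """Return True if any line of the log file contains the marker string."""
    # errors="replace" keeps the scan going if the log has odd bytes in it
    with Path(log_path).open(encoding="utf-8", errors="replace") as f:
        return any(marker in line for line in f)


# Example (hypothetical file name):
#   log_contains("job.log", "Hello, ModelArts!")
```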

II. Training LeNet5

1. Download the data

Download the MNIST dataset from http://yann.lecun.com/exdb/mnist/ .
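The MNIST files are distributed as gzip archives, while the training code in this tutorial reads the uncompressed files from `train` and `test` sub-folders. The sketch below assumes the four `.gz` files were downloaded into a single folder and unpacks them into that layout. The `unpack_mnist` helper and its parameters are hypothetical names used for illustration.

```python
import gzip
import shutil
from pathlib import Path

# Archive names from the MNIST distribution, mapped to the sub-folder
# that the training script expects them in.
MNIST_FILES = {
    "train-images-idx3-ubyte.gz": "train",
    "train-labels-idx1-ubyte.gz": "train",
    "t10k-images-idx3-ubyte.gz": "test",
    "t10k-labels-idx1-ubyte.gz": "test",
}


def unpack_mnist(download_dir, out_dir="MNIST"):
    """Decompress the .gz files into out_dir/train and out_dir/test."""
    written = []
    for name, subset in MNIST_FILES.items():
        src = Path(download_dir) / name
        dst = Path(out_dir) / subset / name[: -len(".gz")]
        dst.parent.mkdir(parents=True, exist_ok=True)
        with gzip.open(src, "rb") as fin, open(dst, "wb") as fout:
            shutil.copyfileobj(fin, fout)
        written.append(dst)
    return written
```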

2. Prepare the training code

Write the code in main.py:

    # LeNet5 MNIST
    import os
    # os.environ['DEVICE_ID'] = '0'

    import mindspore as ms
    import mindspore.context as context
    import mindspore.dataset.transforms.c_transforms as C
    import mindspore.dataset.vision.c_transforms as CV
    from mindspore import nn
    from mindspore.train import Model
    from mindspore.train.callback import LossMonitor

    context.set_context(mode=context.GRAPH_MODE, device_target='Ascend')  # Ascend, CPU, GPU


    def create_dataset(data_dir, training=True, batch_size=32, resize=(32, 32),
                       rescale=1/(255*0.3081), shift=-0.1307/0.3081, buffer_size=64):
        data_train = os.path.join(data_dir, 'train')  # train set
        data_test = os.path.join(data_dir, 'test')  # test set
        ds = ms.dataset.MnistDataset(data_train if training else data_test)

        ds = ds.map(input_columns=["image"], operations=[CV.Resize(resize), CV.Rescale(rescale, shift), CV.HWC2CHW()])
        ds = ds.map(input_columns=["label"], operations=C.TypeCast(ms.int32))
        # When `dataset_sink_mode=True` on Ascend, append `ds = ds.repeat(num_epochs)` to the end
        ds = ds.shuffle(buffer_size=buffer_size).batch(batch_size, drop_remainder=True)

        return ds


    class LeNet5(nn.Cell):
        def __init__(self):
            super(LeNet5, self).__init__()
            self.conv1 = nn.Conv2d(1, 6, 5, stride=1, pad_mode='valid')
            self.conv2 = nn.Conv2d(6, 16, 5, stride=1, pad_mode='valid')
            self.relu = nn.ReLU()
            self.pool = nn.MaxPool2d(kernel_size=2, stride=2)
            self.flatten = nn.Flatten()
            self.fc1 = nn.Dense(400, 120)
            self.fc2 = nn.Dense(120, 84)
            self.fc3 = nn.Dense(84, 10)

        def construct(self, x):
            x = self.relu(self.conv1(x))
            x = self.pool(x)
            x = self.relu(self.conv2(x))
            x = self.pool(x)
            x = self.flatten(x)
            x = self.fc1(x)
            x = self.fc2(x)
            x = self.fc3(x)

            return x


    def train(data_dir, lr=0.01, momentum=0.9, num_epochs=3):
        ds_train = create_dataset(data_dir)
        ds_eval = create_dataset(data_dir, training=False)

        net = LeNet5()
        loss = nn.loss.SoftmaxCrossEntropyWithLogits(sparse=True, reduction='mean')
        opt = nn.Momentum(net.trainable_params(), lr, momentum)
        loss_cb = LossMonitor(per_print_times=ds_train.get_dataset_size())

        model = Model(net, loss, opt, metrics={'acc', 'loss'})
        # dataset_sink_mode can be True when using Ascend
        model.train(num_epochs, ds_train, callbacks=[loss_cb], dataset_sink_mode=False)
        metrics = model.eval(ds_eval, dataset_sink_mode=False)
        print('Metrics:', metrics)


    if __name__ == "__main__":
        import argparse
        parser = argparse.ArgumentParser()
        parser.add_argument('--data_url', required=False, default='MNIST/', help='Location of data.')
        parser.add_argument('--train_url', required=False, default=None, help='Location of training outputs.')
        args, unknown = parser.parse_known_args()

        data_path = os.path.abspath(args.data_url)
        train(data_path)
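The default `rescale` and `shift` arguments of `create_dataset` fold two steps into one linear map, since `CV.Rescale` computes `pixel * rescale + shift`. Reading 0.1307 and 0.3081 as the mean and standard deviation of MNIST pixels after scaling to [0, 1] (an interpretation of the constants, not stated in the code), the sketch below checks in plain Python that the fused form matches the two-step form. The function names are hypothetical.

```python
import math

MEAN, STD = 0.1307, 0.3081  # constants taken from create_dataset's defaults

rescale = 1 / (255 * STD)
shift = -MEAN / STD


def fused(pixel):
    # What Rescale(rescale, shift) applies to each pixel value
    return pixel * rescale + shift


def two_step(pixel):
    # Scale to [0, 1], then standardize with MEAN and STD
    return (pixel / 255 - MEAN) / STD


for p in (0, 128, 255):
    assert math.isclose(fused(p), two_step(p), rel_tol=1e-9, abs_tol=1e-12)
```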

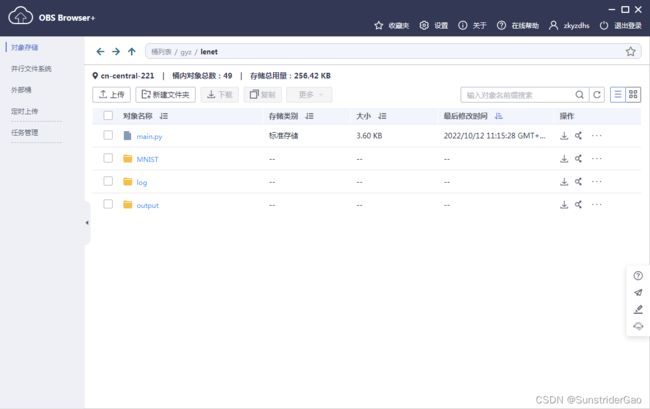
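The input size of `fc1` (400) follows from the layer settings in `LeNet5`: each 5x5 convolution with `pad_mode='valid'` shrinks the side length by 4, and each 2x2 pooling with stride 2 halves it. A short sketch of that arithmetic in plain Python, with hypothetical helper names:

```python
def valid_output(size, kernel, stride):
    # Output side length for a convolution or pooling without padding
    return (size - kernel) // stride + 1


def lenet5_flat_features(input_size=32, channels=16):
    size = valid_output(input_size, kernel=5, stride=1)  # conv1
    size = valid_output(size, kernel=2, stride=2)        # pool
    size = valid_output(size, kernel=5, stride=1)        # conv2
    size = valid_output(size, kernel=2, stride=2)        # pool
    return channels * size * size


print(lenet5_flat_features(32))  # 32 -> 28 -> 14 -> 10 -> 5, so 16 * 5 * 5 = 400
```

With the native 28x28 MNIST images the same trace gives 16 * 4 * 4 = 256, which is why `create_dataset` resizes images to 32x32 before they reach the network.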

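3. Copy the dataset and code to OBS

Copy the dataset and main.py to OBS, and create two new folders, output and log, to hold the model output files and the log files. The directory structure is as follows: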

    lenet
    ├── MNIST
    │   ├── test
    │   │   ├── t10k-images-idx3-ubyte
    │   │   └── t10k-labels-idx1-ubyte
    │   └── train
    │       ├── train-images-idx3-ubyte
    │       └── train-labels-idx1-ubyte
    ├── main.py
    ├── log
    └── output
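Before uploading, it can help to confirm that a local copy of the `lenet` folder matches this layout. The sketch below uses only the standard library and assumes the folder is first assembled locally; the `missing_entries` helper is a hypothetical name.

```python
from pathlib import Path

EXPECTED_FILES = [
    "MNIST/train/train-images-idx3-ubyte",
    "MNIST/train/train-labels-idx1-ubyte",
    "MNIST/test/t10k-images-idx3-ubyte",
    "MNIST/test/t10k-labels-idx1-ubyte",
    "main.py",
]
EXPECTED_DIRS = ["log", "output"]


def missing_entries(root):
    """Return the expected files and folders that are absent under root."""
    root = Path(root)
    missing = [p for p in EXPECTED_FILES if not (root / p).is_file()]
    missing += [d for d in EXPECTED_DIRS if not (root / d).is_dir()]
    return missing
```

An empty list means the folder is ready to copy to OBS.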

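4. Create an algorithm

Click Algorithm Management and create an algorithm. For the boot mode, select a preset framework, then specify the code directory and the boot file. Next, under the input data configuration, click Add Input Data Configuration and set the code path parameter to data_url. Finally, click Submit to finish creating the algorithm.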
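The `data_url` parameter name has to match the `--data_url` argument that main.py declares with argparse. The sketch below isolates that parsing step, assuming the platform passes the data location as a `--data_url` command-line argument; the `parse_job_args` wrapper, the example path, and `--extra_flag` are hypothetical.

```python
import argparse


def parse_job_args(argv):
    parser = argparse.ArgumentParser()
    parser.add_argument('--data_url', default='MNIST/', help='Location of data.')
    parser.add_argument('--train_url', default=None, help='Location of training outputs.')
    # parse_known_args tolerates arguments the script does not declare,
    # returning them separately instead of exiting with an error
    return parser.parse_known_args(argv)


args, unknown = parse_job_args(['--data_url', '/tmp/MNIST', '--extra_flag', '1'])
print(args.data_url)  # /tmp/MNIST
print(unknown)        # ['--extra_flag', '1']
```

Using `parse_known_args` rather than `parse_args` means any additional arguments supplied at launch do not make the script fail.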

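5. Create a training job

In addition to the parameters selected when running helloworld, data_url must be specified. Click the data storage location and select the location of the MNIST folder.

Finally, click Create Now to create the training job, then wait for training to finish.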