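Cats vs. Dogs classification with a fine-tuned VGG model

Assignment 4: the Cats vs. Dogs challenge

Assignment overview

This assignment is based on the Dogs vs. Cats competition that Kaggle hosted in 2013: given an input image, decide whether it shows a cat or a dog. The tutorial uses a VGG network pretrained on ImageNet. Because the original network outputs 1000 classes, transfer learning is applied here. The network is fine-tuned by freezing the earlier layers so that they act as a feature extractor, replacing the last two layers, and training only the new fully connected layer.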

Transfer learning with the VGG model

- First prepare the data, then load a general-purpose CNN model pretrained on ImageNet (1.2 million training images).

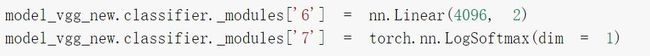

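- Adapt the model: the final nn.Linear layer of VGG16 is changed from a 1000-class output to a 2-class output. To make sure that only the parameters of this last layer are updated, the parameters of all earlier layers are frozen during training, as shown in the figure below.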

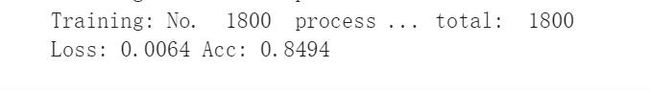

- The accuracy from my own training run is shown below. The training set contains 1800 images (900 cats and 900 dogs).

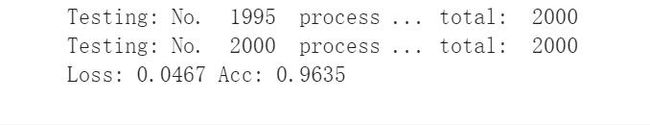

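- My test accuracy was 0.96, which is a fairly good result. The test set contains 2000 images.

- Takeaway: replacing the 1000-class output with a 2-class output adapts the network to the data. Simple data calls for a simplified network, while more complex data calls for fine-tuning the network. This is Occam's razor in practice.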
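The freezing step described in the list above can be checked with a small sketch. It uses a tiny stand-in network instead of VGG16, an assumption made so that no pretrained weights have to be downloaded; the layer sizes are hypothetical and only the pattern (freeze everything, then replace the head) mirrors the approach in this post.

```python
import torch.nn as nn

# Hypothetical stand-in for a pretrained network: a feature part and a 1000-class head.
model = nn.Sequential(
    nn.Linear(8, 16),     # plays the role of the pretrained feature layers
    nn.ReLU(),
    nn.Linear(16, 1000),  # plays the role of the original 1000-class output
)

# Freeze every existing parameter so backpropagation leaves it unchanged.
for param in model.parameters():
    param.requires_grad = False

# Replace the head with a new 2-class layer; newly created modules are trainable by default.
model[2] = nn.Linear(16, 2)

trainable = [name for name, p in model.named_parameters() if p.requires_grad]
frozen = [name for name, p in model.named_parameters() if not p.requires_grad]
print("trainable:", trainable)
print("frozen:", frozen)
```

Listing the parameter names this way is a quick sanity check that only the new output layer will receive gradient updates.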

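Testing cat/dog classification with a fine-tuned, pretrained VGG16 network

Training procedure

- Import the required packages and files, and train on the GPU when one is available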

import numpy as np
import matplotlib.pyplot as plt
import os
import torch
import torch.nn as nn
import torchvision
from torchvision import models, transforms, datasets
import time
import json

# Check whether a GPU device is available
device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")
print('Using gpu: %s ' % torch.cuda.is_available())

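- Unzip and load the data

Images have to be preprocessed before a CNN can process them. Each image is center-cropped to $224 \times 224 \times 3$ and then normalized. The train, val, and test sets from AI研习社 are all loaded, but only train is used for training.

The batch_size is also increased to 128, so each gradient is averaged over more samples and is a less noisy estimate.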
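To make the normalization step concrete, here is a minimal pure-Python sketch of what happens to a single pixel. It assumes the usual two-stage pipeline: scaling 8-bit values to the range 0 to 1, then subtracting the per-channel mean and dividing by the per-channel standard deviation. The helper name `normalize_pixel` and the example pixel are hypothetical.

```python
# ImageNet channel statistics, the same values passed to transforms.Normalize in this post
mean = [0.485, 0.456, 0.406]
std = [0.229, 0.224, 0.225]

def normalize_pixel(rgb):
    """Map one 8-bit RGB pixel to the values the network receives."""
    scaled = [v / 255.0 for v in rgb]  # scale [0, 255] to [0, 1]
    return [(s - m) / d for s, m, d in zip(scaled, mean, std)]  # (x - mean) / std

mid_gray = normalize_pixel((128, 128, 128))  # example pixel chosen for illustration
black = normalize_pixel((0, 0, 0))
print([round(v, 3) for v in mid_gray])
print([round(v, 3) for v in black])
```

After this step the inputs are roughly centered around zero in each channel, which matches the statistics the pretrained weights were trained with.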

# Unzip the archive
!unzip "/content/drive/MyDrive/cat_dog.zip"

# Preprocess the data
normalize = transforms.Normalize(mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225])
vgg_format = transforms.Compose([
    transforms.CenterCrop(224),
    transforms.ToTensor(),
    normalize,
])

data_dir = './cat_dog'
dsets = {x: datasets.ImageFolder(os.path.join(data_dir, x), vgg_format)
         for x in ['train', 'val', 'test']}
dset_sizes = {x: len(dsets[x]) for x in ['train', 'val', 'test']}
dset_classes = dsets['train'].classes

# Changed batch_size: 128 for the training loader
loader_train = torch.utils.data.DataLoader(dsets['train'], batch_size=128, shuffle=True, num_workers=6)
loader_valid = torch.utils.data.DataLoader(dsets['val'], batch_size=5, shuffle=False, num_workers=6)
loader_test = torch.utils.data.DataLoader(dsets['test'], batch_size=5, shuffle=False, num_workers=6)

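- Download the general-purpose VGG16 model pretrained on ImageNet, freeze the parameters of the earlier layers (backpropagation does not change them), and replace only the final fully connected layer with a 2-class output (0 for cat, 1 for dog).

★ I tried adding several convolutional layers, but the results were about the same while the computational cost went up, so in the end I used the instructor's most direct approach. Tuning other parameters might give better results, but I did not explore this further.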

# Download the ImageNet class index file
!wget https://s3.amazonaws.com/deep-learning-models/image-models/imagenet_class_index.json

# Load the pretrained VGG16 model
model_vgg = models.vgg16(pretrained=True)
print(model_vgg)

# Note: this is a second name for the same object, not a copy
model_vgg_new = model_vgg

# Freeze the VGG16 parameters so that gradient descent does not update them
for param in model_vgg_new.parameters():
    param.requires_grad = False

# Replace the last two layers of the classifier; the new Linear layer is trainable
model_vgg_new.classifier._modules['6'] = nn.Linear(4096, 2)
model_vgg_new.classifier._modules['7'] = torch.nn.LogSoftmax(dim=1)

model_vgg_new = model_vgg_new.to(device)
print(model_vgg_new.classifier)

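- Train and test the model on the dataset provided by the competition

The training set contains 25,000 images and the test set contains 12,500 images.

★ The optimizer was switched from the original SGD. SGD can reach a minimum, but it takes a long time and may get stuck at saddle points. To speed up convergence and reduce oscillation, I chose Adam, a widely used adaptive optimizer.

★ With a larger batch_size there are fewer parameter updates per epoch, so reaching the same accuracy takes more epochs. The number of epochs was therefore raised from 1 to 10.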

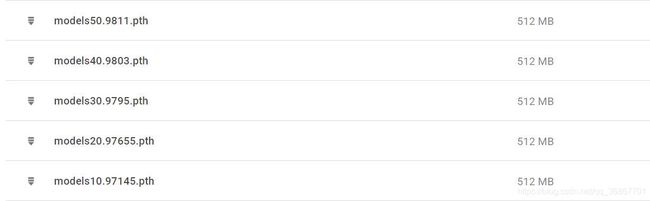
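The trade-off between batch size and number of epochs comes down to how many optimizer steps are taken. The sketch below counts them, assuming one step per batch and a final partial batch that is still used; the function name `updates` is hypothetical, and 1800 is the training-set size quoted earlier in this post.

```python
import math

def updates(num_images, batch_size, epochs):
    """Number of optimizer steps: one per batch, rounded up for the last partial batch."""
    return math.ceil(num_images / batch_size) * epochs

num_images = 1800  # training-set size quoted earlier in this post
steps_one_epoch = updates(num_images, batch_size=128, epochs=1)
steps_ten_epochs = updates(num_images, batch_size=128, epochs=10)
print(steps_one_epoch, steps_ten_epochs)
```

With so few steps in a single epoch at batch size 128, training for several epochs is a natural way to give the new output layer enough updates.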

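★ max_acc tracks the best accuracy seen so far and is used to pick the best model. (The accuracy is written directly into the model file name, which is easy to read and convenient when moving the file around.)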

# Step 1: create the loss function and the optimizer.
# NLLLoss() takes a vector of log-probabilities and a target label as input.
# It does not compute the log-probabilities for us, so it suits a network
# whose last layer is log_softmax().
criterion = nn.NLLLoss()

# Learning rate
lr = 0.001

# Switched to the Adam optimizer; only the new final Linear layer is optimized
optimizer_vgg = torch.optim.Adam(model_vgg_new.classifier[6].parameters(), lr=lr)

# Step 2: train the model
def train_model(model, dataloader, size, epochs=1, optimizer=None):
    model.train()
    max_acc = 0
    for epoch in range(epochs):
        running_loss = 0.0
        running_corrects = 0
        count = 0
        for inputs, classes in dataloader:
            inputs = inputs.to(device)
            classes = classes.to(device)
            outputs = model(inputs)
            loss = criterion(outputs, classes)
            optimizer.zero_grad()
            loss.backward()
            optimizer.step()
            _, preds = torch.max(outputs.data, 1)
            # statistics (loss is the mean over the batch)
            running_loss += loss.item()
            running_corrects += torch.sum(preds == classes.data)
            count += len(inputs)
            # print('Training: No. ', count, ' process ... total: ', size)
        epoch_loss = running_loss / size
        epoch_acc = running_corrects.item() / size
        print('epoch: {:} Loss: {:.4f} Acc: {:.4f}\n'.format(epoch, epoch_loss, epoch_acc))
        # Save the model whenever the epoch accuracy improves on the best so far
        if epoch_acc > max_acc:
            max_acc = epoch_acc
            path = './drive/MyDrive/models' + str(epoch+1) + '' + str(epoch_acc) + '' + '.pth'
            torch.save(model, path)
            print("save: ", path, "\n")

# Train the model, with the number of epochs changed to 10
train_model(model_vgg_new, loader_train, size=dset_sizes['train'], epochs=10,
            optimizer=optimizer_vgg)
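The loss in step one pairs a LogSoftmax output layer with NLLLoss. As a minimal pure-Python sketch of that pairing for a single sample (the logits are hypothetical, and the helper names `log_softmax` and `nll_loss` are illustrative rather than library calls):

```python
import math

def log_softmax(logits):
    """Log-probabilities for one sample, computed stably by subtracting the max."""
    m = max(logits)
    log_sum = m + math.log(sum(math.exp(x - m) for x in logits))
    return [x - log_sum for x in logits]

def nll_loss(log_probs, target):
    """Negative log-likelihood: minus the log-probability of the true class."""
    return -log_probs[target]

logits = [2.0, 0.5]  # hypothetical raw scores for (cat, dog)
log_probs = log_softmax(logits)
loss = nll_loss(log_probs, target=0)  # the true class is cat (index 0)
print(log_probs, loss)
```

The loss is small when the log-probability of the true class is close to zero, that is, when the network assigns it a probability close to 1.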

# Step 3: test the model
def test_model(model, dataloader, size):
    model.eval()
    predictions = np.zeros(size)
    all_classes = np.zeros(size)
    all_proba = np.zeros((size, 2))
    i = 0
    running_loss = 0.0
    running_corrects = 0
    # Gradients are not needed for evaluation
    with torch.no_grad():
        for inputs, classes in dataloader:
            inputs = inputs.to(device)
            classes = classes.to(device)
            outputs = model(inputs)
            loss = criterion(outputs, classes)
            _, preds = torch.max(outputs.data, 1)
            # statistics
            running_loss += loss.item()
            running_corrects += torch.sum(preds == classes.data)
            predictions[i:i+len(classes)] = preds.to('cpu').numpy()
            all_classes[i:i+len(classes)] = classes.to('cpu').numpy()
            # The outputs are log-probabilities (LogSoftmax) and are stored as such
            all_proba[i:i+len(classes), :] = outputs.data.to('cpu').numpy()
            i += len(classes)
            print('Testing: No. ', i, ' process ... total: ', size)
    epoch_loss = running_loss / size
    epoch_acc = running_corrects.item() / size
    print('Loss: {:.4f} Acc: {:.4f}'.format(
        epoch_loss, epoch_acc))
    return predictions, all_proba, all_classes

# Test the model
predictions, all_proba, all_classes = test_model(model_vgg_new, loader_valid, size=dset_sizes['val'])
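Because the network ends in LogSoftmax, the values collected in all_proba are log-probabilities. A minimal sketch of turning such a row back into probabilities and a label follows; the numbers are hypothetical, and `to_probs` and `to_label` are illustrative helper names.

```python
import math

def to_probs(log_row):
    """Undo the log: exponentiate each log-probability."""
    return [math.exp(v) for v in log_row]

def to_label(log_row):
    """Index of the largest entry, the same choice torch.max(outputs, 1) makes."""
    return max(range(len(log_row)), key=lambda k: log_row[k])

# Hypothetical rows shaped like all_proba: one (cat, dog) pair per image
log_proba = [[-0.05, -3.02], [-2.30, -0.105]]

for row in log_proba:
    label = to_label(row)
    print([round(p, 3) for p in to_probs(row)], "->", "cat" if label == 0 else "dog")
```

Exponentiating preserves the ordering, so the predicted label is the same whether it is read from the log-probabilities or from the probabilities.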

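Training results

Over 10 epochs of training, the model from epoch 5 reached the best accuracy, 98.11%, so that is the one I use.

Model test: the accuracy on the test set is 0.96.

Final test

Load the trained model models50.9811, run it on the Val set of the AI研习社 Cats vs. Dogs competition, and save the results to a .csv file.

★ Some simple data handling is done here: the images are stored in an array according to batch_size.

★ To save time, the test uses the 2000 val images originally set up by the instructor.

CV test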

import numpy as np
import matplotlib.pyplot as plt
import os
import torch
import torch.nn as nn
import torchvision
from torchvision import models, transforms, datasets
import time
import json

# Check whether a GPU device is available
device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")
print('Using gpu: %s ' % torch.cuda.is_available())

normalize = transforms.Normalize(mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225])
vgg_format = transforms.Compose([
    transforms.CenterCrop(224),
    transforms.ToTensor(),
    normalize,
])

data_dir = '/content/cat_dog/test'
dsets = {'test': datasets.ImageFolder(data_dir, vgg_format)}
dset_sizes = {x: len(dsets[x]) for x in ['test']}
loader_test = torch.utils.data.DataLoader(dsets['test'], batch_size=1, shuffle=False, num_workers=0)

# Load the whole saved model object. Recent PyTorch releases may need
# weights_only=False here, because the file holds a pickled model rather than a state_dict.
model_vgg_new = torch.load('/content/drive/MyDrive/models50.9811.pth')
model_vgg_new = model_vgg_new.to(device)

def test(model, dataloader, size):
    model.eval()
    i = 0
    all_preds = {}
    with torch.no_grad():
        for inputs, classes in dataloader:
            inputs = inputs.to(device)
            outputs = model(inputs)
            _, preds = torch.max(outputs.data, 1)
            # With batch_size=1 and shuffle=False, the i-th batch is the i-th entry of
            # dsets['test'].imgs. Predictions are keyed by file path because the dataset
            # does not list the images in the numeric order of their names.
            key = dsets['test'].imgs[i][0]
            print(key)
            # Store a plain int so the CSV gets 0 or 1 instead of a tensor repr
            all_preds[key] = preds[0].item()
            i += 1
            print('Testing: No. ', i, ' process ... total: ', size)
    with open("./drive/MyDrive/result.csv", 'a+') as f:
        for i in range(2000):
            # Build the key from data_dir so that it matches the paths stored by ImageFolder
            f.write("{},{}\n".format(i, all_preds[os.path.join(data_dir, "TT", str(i) + ".jpg")]))

test(model_vgg_new, loader_test, size=dset_sizes['test'])
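The reason the predictions above are keyed by file path is that a sorted directory listing orders file names as strings, not as numbers. A minimal sketch with hypothetical file names shows the difference; it assumes, as the code comment above suggests, that the dataset lists its files in string-sorted order.

```python
names = ["0.jpg", "1.jpg", "2.jpg", "10.jpg", "11.jpg"]  # hypothetical file names

# String order: "10.jpg" sorts before "2.jpg" because "1" < "2"
lexicographic = sorted(names)

# Numeric order: sort by the integer in front of the extension
numeric = sorted(names, key=lambda n: int(n.split(".")[0]))

print(lexicographic)
print(numeric)
```

Looking each prediction up by its path, as the test function does, avoids relying on either ordering when the CSV rows are written.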

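Results

Takeaways

By modifying the final layers and fine-tuning the model, it can be trained on more data and reach a higher accuracy. I also learned the concepts, strengths, and weaknesses of batch_size, epochs, and several optimizers, and the mistakes I made while writing the code gave me a deeper understanding of Python.

Attached: the model and the test pages, with the complete code!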