NNDL Assignment 3: Implementing the FNN Example with NumPy and PyTorch

Contents

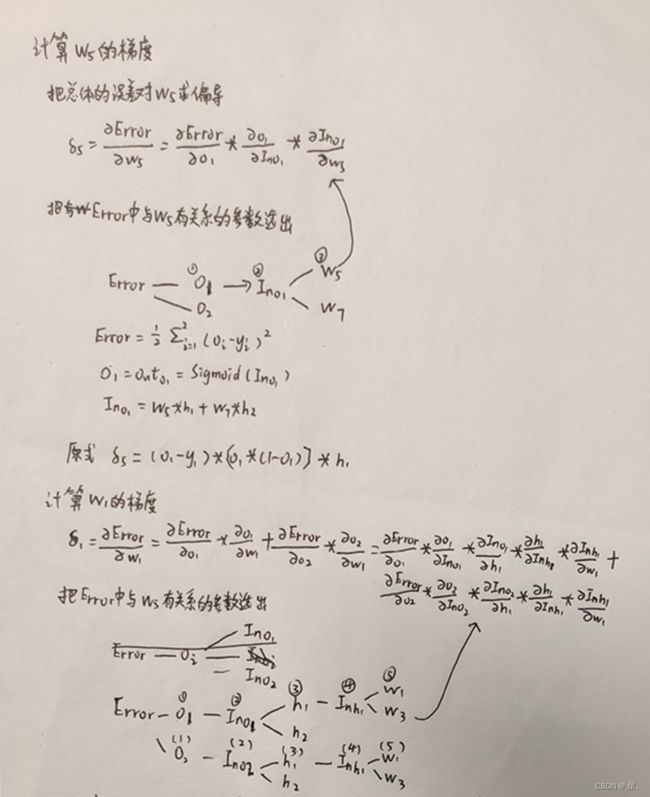

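1. Derivation - understanding how BP works

2. Numerical calculation - working it through by hand to master the details

3. Code implementation - hand-derived NumPy + automatic PyTorch

3.1 Compare the NumPy and PyTorch programs; summarize and discuss.

3.2 Replace the hand-written Sigmoid with PyTorch's built-in torch.sigmoid(); observe, summarize, and discuss.

3.3 Change the activation function from Sigmoid to ReLU; observe, summarize, and discuss.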

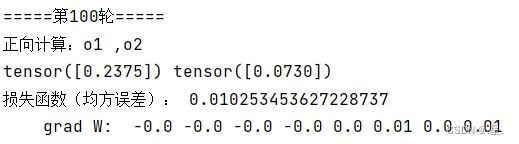

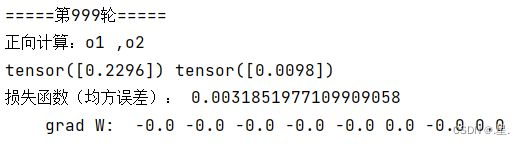

3.4 Replace the hand-written MSE loss with PyTorch's built-in torch.nn.MSELoss(); observe, summarize, and discuss.

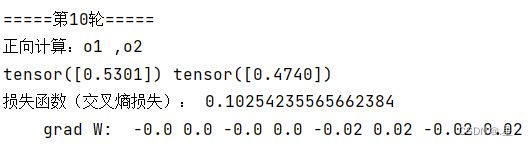

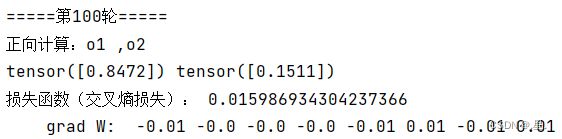

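3.5 Change the loss function from MSE to cross-entropy; observe, summarize, and discuss.

3.6 Change the step size and the number of training rounds; observe, summarize, and discuss.

3.7 Replace the initial values of w1-w8 with random numbers and compare with the "specified weights" results; observe, summarize, and discuss.

3.8 Replace the initial values of w1-w8 with 0; observe, summarize, and discuss.

3.9 Reflections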

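1. Derivation - understanding how BP works

2. Numerical calculation - working it through by hand to master the details

3. Code implementation - hand-derived NumPy + automatic PyTorch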

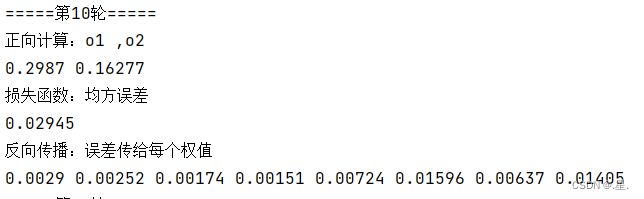

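Inputs: x1, x2 = 0.5, 0.3

Target outputs: y1, y2 = 0.23, -0.07

Activation function: sigmoid

Loss function: MSE

Initial weights (w1 to w8): 0.2 -0.4 0.5 0.6 0.1 -0.5 -0.3 0.8

Goal: optimize the weights through backpropagation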
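For reference, the forward pass and the gradients that backpropagation has to produce can be written out as below. This is a sketch that assumes the wiring used in the code listings of Section 3.1 (w1 and w3 feed h1, w2 and w4 feed h2, w5 and w7 feed o1, w6 and w8 feed o2) and no bias terms. The symbols h, o, E, and δ are notation introduced here for illustration, with σ the sigmoid function.

```latex
\begin{aligned}
h_1 &= \sigma(w_1 x_1 + w_3 x_2), \qquad h_2 = \sigma(w_2 x_1 + w_4 x_2) \\
o_1 &= \sigma(w_5 h_1 + w_7 h_2), \qquad o_2 = \sigma(w_6 h_1 + w_8 h_2) \\
E &= \tfrac{1}{2}(o_1 - y_1)^2 + \tfrac{1}{2}(o_2 - y_2)^2 \\[4pt]
\delta_{o_k} &= (o_k - y_k)\, o_k (1 - o_k), \qquad k = 1, 2 \\
\frac{\partial E}{\partial w_5} &= \delta_{o_1} h_1, \quad
\frac{\partial E}{\partial w_7} = \delta_{o_1} h_2, \quad
\frac{\partial E}{\partial w_6} = \delta_{o_2} h_1, \quad
\frac{\partial E}{\partial w_8} = \delta_{o_2} h_2 \\[4pt]
\delta_{h_1} &= (\delta_{o_1} w_5 + \delta_{o_2} w_6)\, h_1 (1 - h_1), \qquad
\delta_{h_2} = (\delta_{o_1} w_7 + \delta_{o_2} w_8)\, h_2 (1 - h_2) \\
\frac{\partial E}{\partial w_1} &= \delta_{h_1} x_1, \quad
\frac{\partial E}{\partial w_3} = \delta_{h_1} x_2, \quad
\frac{\partial E}{\partial w_2} = \delta_{h_2} x_1, \quad
\frac{\partial E}{\partial w_4} = \delta_{h_2} x_2
\end{aligned}
```

Each weight is then updated as w ← w − step · ∂E/∂w.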

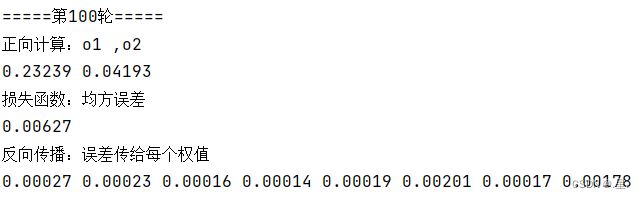

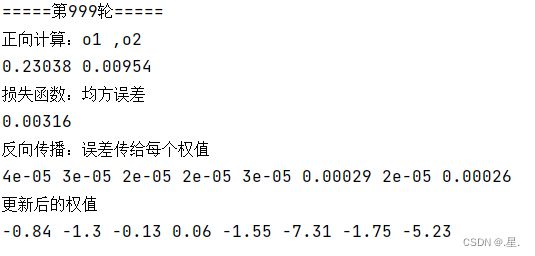

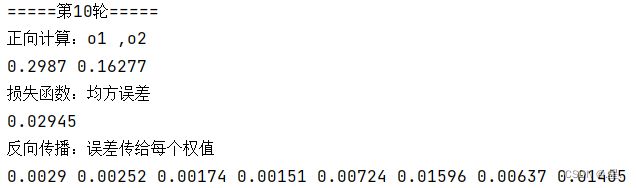

3.1 Compare the NumPy and PyTorch programs; summarize and discuss.

NumPy code:

    import numpy as np


    def sigmoid(z):
        a = 1 / (1 + np.exp(-z))
        return a


    def forward_propagate(x1, x2, y1, y2, w1, w2, w3, w4, w5, w6, w7, w8):
        in_h1 = w1 * x1 + w3 * x2
        out_h1 = sigmoid(in_h1)
        in_h2 = w2 * x1 + w4 * x2
        out_h2 = sigmoid(in_h2)

        in_o1 = w5 * out_h1 + w7 * out_h2
        out_o1 = sigmoid(in_o1)
        in_o2 = w6 * out_h1 + w8 * out_h2
        out_o2 = sigmoid(in_o2)

        print("Forward pass: o1, o2")
        print(round(out_o1, 5), round(out_o2, 5))

        error = (1 / 2) * (out_o1 - y1) ** 2 + (1 / 2) * (out_o2 - y2) ** 2
        print("Loss (mean squared error):")
        print(round(error, 5))

        return out_o1, out_o2, out_h1, out_h2


    def back_propagate(out_o1, out_o2, out_h1, out_h2):
        # Backward pass. x1, x2, y1, y2 and the current w5..w8 are read from module scope.
        d_o1 = out_o1 - y1
        d_o2 = out_o2 - y2

        # Output layer: (out - y) * sigmoid'(in_o) * hidden-unit output.
        d_w5 = d_o1 * out_o1 * (1 - out_o1) * out_h1
        d_w7 = d_o1 * out_o1 * (1 - out_o1) * out_h2
        d_w6 = d_o2 * out_o2 * (1 - out_o2) * out_h1
        d_w8 = d_o2 * out_o2 * (1 - out_o2) * out_h2

        # Hidden layer: each output error reaches a hidden unit through the weight
        # that connects them (w5, w6 for h1; w7, w8 for h2), per the chain rule.
        d_h1 = d_o1 * out_o1 * (1 - out_o1) * w5 + d_o2 * out_o2 * (1 - out_o2) * w6
        d_h2 = d_o1 * out_o1 * (1 - out_o1) * w7 + d_o2 * out_o2 * (1 - out_o2) * w8
        d_w1 = d_h1 * out_h1 * (1 - out_h1) * x1
        d_w3 = d_h1 * out_h1 * (1 - out_h1) * x2
        d_w2 = d_h2 * out_h2 * (1 - out_h2) * x1
        d_w4 = d_h2 * out_h2 * (1 - out_h2) * x2

        print("Backward pass: error attributed to each weight")
        print(round(d_w1, 5), round(d_w2, 5), round(d_w3, 5), round(d_w4, 5),
              round(d_w5, 5), round(d_w6, 5), round(d_w7, 5), round(d_w8, 5))

        return d_w1, d_w2, d_w3, d_w4, d_w5, d_w6, d_w7, d_w8


    def update_w(w1, w2, w3, w4, w5, w6, w7, w8):
        # Step size (learning rate). The gradients d_w1..d_w8 are read from module scope.
        step = 5
        w1 = w1 - step * d_w1
        w2 = w2 - step * d_w2
        w3 = w3 - step * d_w3
        w4 = w4 - step * d_w4
        w5 = w5 - step * d_w5
        w6 = w6 - step * d_w6
        w7 = w7 - step * d_w7
        w8 = w8 - step * d_w8
        return w1, w2, w3, w4, w5, w6, w7, w8


    if __name__ == "__main__":
        w1, w2, w3, w4, w5, w6, w7, w8 = 0.2, -0.4, 0.5, 0.6, 0.1, -0.5, -0.3, 0.8
        x1, x2 = 0.5, 0.3
        y1, y2 = 0.23, -0.07
        print("===== Inputs x1, x2; target outputs y1, y2 =====")
        print(x1, x2, y1, y2)
        print("===== Weights before updating =====")
        print(round(w1, 2), round(w2, 2), round(w3, 2), round(w4, 2),
              round(w5, 2), round(w6, 2), round(w7, 2), round(w8, 2))

        for i in range(1000):
            print("===== Round " + str(i) + " =====")
            out_o1, out_o2, out_h1, out_h2 = forward_propagate(x1, x2, y1, y2, w1, w2, w3, w4, w5, w6, w7, w8)
            d_w1, d_w2, d_w3, d_w4, d_w5, d_w6, d_w7, d_w8 = back_propagate(out_o1, out_o2, out_h1, out_h2)
            w1, w2, w3, w4, w5, w6, w7, w8 = update_w(w1, w2, w3, w4, w5, w6, w7, w8)

        print("Updated weights")
        print(round(w1, 2), round(w2, 2), round(w3, 2), round(w4, 2),
              round(w5, 2), round(w6, 2), round(w7, 2), round(w8, 2))

PyTorch code:

import torch

x1, x2 = torch.Tensor([0.5]), torch.Tensor([0.3])

y1, y2 = torch.Tensor([0.23]), torch.Tensor([-0.07])

print("=====输入值:x1, x2;真实输出值:y1, y2=====")

print(x1, x2, y1, y2)

w1, w2, w3, w4, w5, w6, w7, w8 = torch.Tensor([0.2]), torch.Tensor([-0.4]), torch.Tensor([0.5]), torch.Tensor(

[0.6]), torch.Tensor([0.1]), torch.Tensor([-0.5]), torch.Tensor([-0.3]), torch.Tensor([0.8]) # 权重初始值

w1.requires_grad = True

w2.requires_grad = True

w3.requires_grad = True

w4.requires_grad = True

w5.requires_grad = True

w6.requires_grad = True

w7.requires_grad = True

w8.requires_grad = True

def sigmoid(z):

a = 1 / (1 + torch.exp(-z))

return a

def forward_propagate(x1, x2):

in_h1 = w1 * x1 + w3 * x2

out_h1 = sigmoid(in_h1) # out_h1 = torch.sigmoid(in_h1)

in_h2 = w2 * x1 + w4 * x2

out_h2 = sigmoid(in_h2) # out_h2 = torch.sigmoid(in_h2)

in_o1 = w5 * out_h1 + w7 * out_h2

out_o1 = sigmoid(in_o1) # out_o1 = torch.sigmoid(in_o1)

in_o2 = w6 * out_h1 + w8 * out_h2

out_o2 = sigmoid(in_o2) # out_o2 = torch.sigmoid(in_o2)

print("正向计算:o1 ,o2")

print(out_o1.data, out_o2.data)

return out_o1, out_o2

def loss_fuction(x1, x2, y1, y2): # 损失函数

y1_pred, y2_pred = forward_propagate(x1, x2) # 前向传播

loss = (1 / 2) * (y1_pred - y1) ** 2 + (1 / 2) * (y2_pred - y2) ** 2 # 考虑 : t.nn.MSELoss()

print("损失函数(均方误差):", loss.item())

return loss

def update_w(w1, w2, w3, w4, w5, w6, w7, w8):

# 步长

step = 1

w1.data = w1.data - step * w1.grad.data

w2.data = w2.data - step * w2.grad.data

w3.data = w3.data - step * w3.grad.data

w4.data = w4.data - step * w4.grad.data

w5.data = w5.data - step * w5.grad.data

w6.data = w6.data - step * w6.grad.data

w7.data = w7.data - step * w7.grad.data

w8.data = w8.data - step * w8.grad.data

w1.grad.data.zero_() # 注意:将w中所有梯度清零

w2.grad.data.zero_()

w3.grad.data.zero_()

w4.grad.data.zero_()

w5.grad.data.zero_()

w6.grad.data.zero_()

w7.grad.data.zero_()

w8.grad.data.zero_()

return w1, w2, w3, w4, w5, w6, w7, w8

if __name__ == "__main__":

print("=====更新前的权值=====")

print(w1.data, w2.data, w3.data, w4.data, w5.data, w6.data, w7.data, w8.data)

for i in range(1):

print("=====第" + str(i) + "轮=====")

L = loss_fuction(x1, x2, y1, y2) # 前向传播,求 Loss,构建计算图

L.backward() # 自动求梯度,不需要人工编程实现。反向传播,求出计算图中所有梯度存入w中

print("\tgrad W: ", round(w1.grad.item(), 2), round(w2.grad.item(), 2), round(w3.grad.item(), 2),

round(w4.grad.item(), 2), round(w5.grad.item(), 2), round(w6.grad.item(), 2), round(w7.grad.item(), 2),

round(w8.grad.item(), 2))

w1, w2, w3, w4, w5, w6, w7, w8 = update_w(w1, w2, w3, w4, w5, w6, w7, w8)

print("更新后的权值")

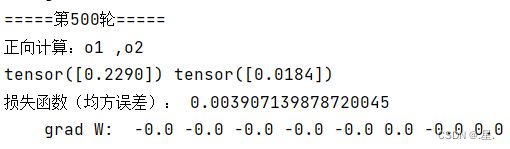

print(w1.data, w2.data, w3.data, w4.data, w5.data, w6.data, w7.data, w8.data)运行结果:

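Run with the same step size and number of rounds, the two programs give essentially the same results. PyTorch, however, computes the gradients automatically (L.backward()), so the backward pass does not have to be coded by hand, which makes it more convenient than NumPy.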
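A quick way to gain confidence in hand-derived gradients, independent of PyTorch, is to compare them with central finite differences of the loss. The sketch below uses plain Python and the example's initial values. The function names (`loss`, `analytic_grad`, `numeric_grad`) and the perturbation size `eps` are choices made for this illustration, and the analytic part follows the chain rule with each output delta weighted by the connecting weight.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def forward(w, x):
    w1, w2, w3, w4, w5, w6, w7, w8 = w
    h1 = sigmoid(w1 * x[0] + w3 * x[1])
    h2 = sigmoid(w2 * x[0] + w4 * x[1])
    o1 = sigmoid(w5 * h1 + w7 * h2)
    o2 = sigmoid(w6 * h1 + w8 * h2)
    return h1, h2, o1, o2


def loss(w, x=(0.5, 0.3), y=(0.23, -0.07)):
    """Half squared error of the network output for weights w = [w1..w8]."""
    _, _, o1, o2 = forward(w, x)
    return 0.5 * (o1 - y[0]) ** 2 + 0.5 * (o2 - y[1]) ** 2


def analytic_grad(w, x=(0.5, 0.3), y=(0.23, -0.07)):
    """Gradient [dE/dw1 .. dE/dw8] obtained with the chain rule."""
    h1, h2, o1, o2 = forward(w, x)
    d_o1 = (o1 - y[0]) * o1 * (1 - o1)
    d_o2 = (o2 - y[1]) * o2 * (1 - o2)
    # Hidden deltas: output deltas weighted by the connecting weights.
    d_h1 = (d_o1 * w[4] + d_o2 * w[5]) * h1 * (1 - h1)
    d_h2 = (d_o1 * w[6] + d_o2 * w[7]) * h2 * (1 - h2)
    return [d_h1 * x[0], d_h2 * x[0], d_h1 * x[1], d_h2 * x[1],
            d_o1 * h1, d_o2 * h1, d_o1 * h2, d_o2 * h2]


def numeric_grad(w, eps=1e-6):
    """Central finite-difference approximation of the same gradient."""
    grads = []
    for i in range(len(w)):
        up, down = list(w), list(w)
        up[i] += eps
        down[i] -= eps
        grads.append((loss(up) - loss(down)) / (2 * eps))
    return grads


w0 = [0.2, -0.4, 0.5, 0.6, 0.1, -0.5, -0.3, 0.8]
max_gap = max(abs(a - n) for a, n in zip(analytic_grad(w0), numeric_grad(w0)))
print("initial loss:", round(loss(w0), 5))
print("largest |analytic - numeric| gap:", max_gap)
```

If the largest gap is not tiny compared with the gradients themselves, the hand-written backward pass most likely contains a mistake.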

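3.2 Replace the hand-written Sigmoid with PyTorch's built-in torch.sigmoid(); observe, summarize, and discuss.

When only a few training rounds are run, the version using PyTorch's built-in torch.sigmoid() shows a larger mean squared error, but the final results of the two versions do not differ. torch.sigmoid() and the hand-written 1 / (1 + torch.exp(-z)) compute the same function, so any early gap should stay at rounding level unless another setting (step size, number of rounds) also changed between the runs.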
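Where a hand-written sigmoid and a carefully written routine can genuinely differ is at extreme inputs, because exp() of a large positive number overflows. The plain-Python sketch below shows one common numerically stable formulation. It is an illustration only, not PyTorch's actual implementation, and the function names are hypothetical.

```python
import math


def naive_sigmoid(z):
    # Direct translation of the formula; math.exp(-z) overflows for very negative z.
    return 1.0 / (1.0 + math.exp(-z))


def stable_sigmoid(z):
    # Only ever exponentiate a non-positive number, which cannot overflow.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


# For the moderate inputs of this example the two agree.
print(naive_sigmoid(0.25), stable_sigmoid(0.25))

# For an extreme input only the stable version returns a value.
print(stable_sigmoid(-1000.0))
try:
    naive_sigmoid(-1000.0)
except OverflowError as err:
    print("naive version failed:", err)
```

For the small weights and inputs of this assignment the pre-activations stay close to zero, so both forms behave the same.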

3.3 Change the activation function from Sigmoid to ReLU; observe, summarize, and discuss.

Replace

    def sigmoid(z):
        a = 1 / (1 + np.exp(-z))
        return a

with

    def relu(z):
        return np.maximum(0, z)

Output:

In this experiment, with ReLU as the activation function, the mean squared error falls faster.
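One caveat for the NumPy version: swapping only the activation function is not enough, because back_propagate still multiplies by out * (1 - out), which is the derivative of sigmoid. With ReLU that factor has to become ReLU's own derivative. A minimal plain-Python sketch follows. The name `relu_grad` is hypothetical, and treating the derivative at z == 0 as 0 is a convention assumed here.

```python
def relu(z):
    return max(0.0, z)


def relu_grad(z):
    """Derivative of ReLU with respect to its input z (taken as 0 at z == 0)."""
    return 1.0 if z > 0 else 0.0


# Pre-activations of the two hidden units for the example's initial weights.
in_h1 = 0.2 * 0.5 + 0.5 * 0.3    # positive: the unit is active
in_h2 = -0.4 * 0.5 + 0.6 * 0.3   # slightly negative: the unit is inactive

print("h1:", relu(in_h1), "gradient factor:", relu_grad(in_h1))
print("h2:", relu(in_h2), "gradient factor:", relu_grad(in_h2))
```

With the initial weights, h2 starts out inactive, so w2 and w4 receive a zero gradient on the first update. The PyTorch version needs no such change, since autograd differentiates whatever activation the forward pass uses.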

3.4 Replace the hand-written MSE loss with PyTorch's built-in torch.nn.MSELoss(); observe, summarize, and discuss.

    def loss_fuction(x1, x2, y1, y2):  # loss function
        y1_pred, y2_pred = forward_propagate(x1, x2)  # forward pass
        # loss = (1 / 2) * (y1_pred - y1) ** 2 + (1 / 2) * (y2_pred - y2) ** 2
        loss_func = torch.nn.MSELoss()  # create the loss function
        y_pred = torch.cat((y1_pred, y2_pred), dim=0)  # merge y1_pred and y2_pred into one vector
        y = torch.cat((y1, y2), dim=0)
        loss = loss_func(y_pred, y)  # compute the loss
        print("Loss (mean squared error):", loss.item())
        return loss

Output:

The results before and after the replacement are essentially the same.
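The match has a simple explanation. With its default reduction="mean", torch.nn.MSELoss() averages the squared errors over all elements. With exactly two outputs, that average (e1² + e2²) / 2 is the same number as the hand-written ½·e1² + ½·e2². The plain-Python sketch below mimics both formulas; the function names are hypothetical, and the two-output predictions are the rounded forward-pass outputs for the initial weights.

```python
def half_sum_squares(pred, target):
    """Hand-written loss from the listing: sum of 1/2 * (pred - target)^2."""
    return sum(0.5 * (p - t) ** 2 for p, t in zip(pred, target))


def mean_squared_error(pred, target):
    """Mean of squared errors, mimicking MSELoss with reduction='mean'."""
    return sum((p - t) ** 2 for p, t in zip(pred, target)) / len(pred)


# Two outputs: the two formulas coincide.
pred2, target2 = [0.47695, 0.5287], [0.23, -0.07]
print(half_sum_squares(pred2, target2), mean_squared_error(pred2, target2))

# Three outputs (made-up numbers): they differ by a constant factor of 3/2.
pred3, target3 = [0.5, 0.5, 0.5], [0.0, 0.0, 0.0]
print(half_sum_squares(pred3, target3), mean_squared_error(pred3, target3))
```

For a network with a different number of outputs, the gradients from the two losses would differ by that constant factor, which acts like a rescaled step size.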

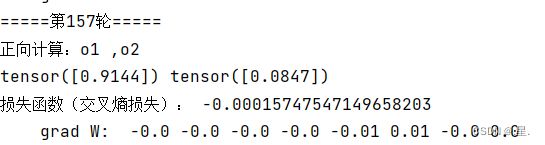

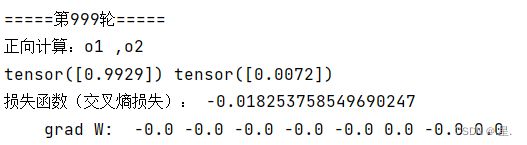

3.5 Change the loss function from MSE to cross-entropy; observe, summarize, and discuss.

    def loss_fuction(x1, x2, y1, y2):
        y1_pred, y2_pred = forward_propagate(x1, x2)
        loss_func = torch.nn.CrossEntropyLoss()  # create the cross-entropy loss function
        y_pred = torch.stack([y1_pred, y2_pred], dim=1)
        y = torch.stack([y1, y2], dim=1)
        loss = loss_func(y_pred, y)  # compute the loss
        print("Loss (cross-entropy):", loss.item())
        return loss

Output:

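By around round 150 the loss has already become negative, which shows that cross-entropy loss is not suitable for this network and these target values.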
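The negative values follow from how the loss is defined. torch.nn.CrossEntropyLoss() applies log-softmax to its input and, when the target is a float tensor of the same shape, treats the target as class probabilities. The target here, (0.23, -0.07), contains a negative entry and does not sum to 1, so the usual guarantee that cross-entropy is non-negative no longer holds. The plain-Python sketch below reproduces the formula for a single sample. The function name is hypothetical, and the inputs are illustrative values inside the (0, 1) range that sigmoid outputs can take.

```python
import math


def soft_label_cross_entropy(logits, target):
    """-sum_c target[c] * log(softmax(logits)[c]) for one sample."""
    m = max(logits)
    log_norm = m + math.log(sum(math.exp(v - m) for v in logits))
    return -sum(t * (v - log_norm) for v, t in zip(logits, target))


target = [0.23, -0.07]

# Near the network's initial outputs the loss is still positive.
loss_start = soft_label_cross_entropy([0.47695, 0.5287], target)

# Pushing o1 toward 1 and o2 toward 0 drives the loss below zero.
loss_extreme = soft_label_cross_entropy([1.0, 0.0], target)

# With a valid probability distribution as target the loss stays non-negative.
loss_valid = soft_label_cross_entropy([1.0, 0.0], [0.3, 0.7])

print(loss_start, loss_extreme, loss_valid)
```

Gradient descent simply keeps lowering this quantity, so the sign change says more about the mismatch between the targets and the loss than about the training itself.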

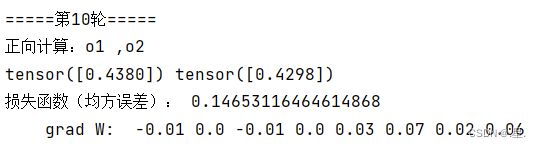

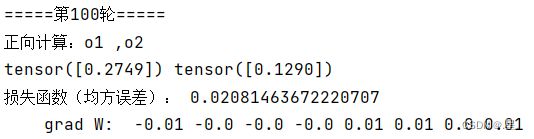

3.6 Change the step size and the number of training rounds; observe, summarize, and discuss.

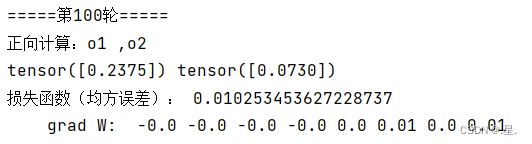

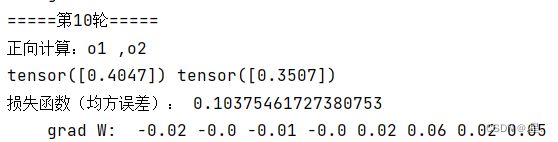

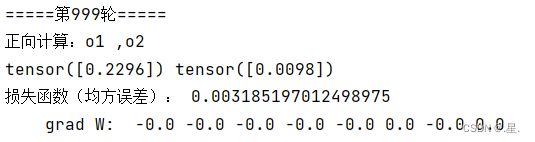

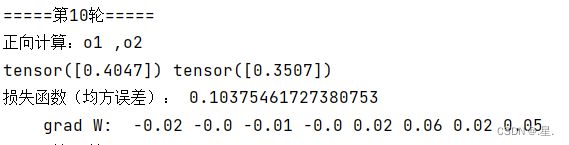

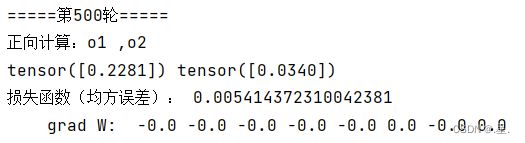

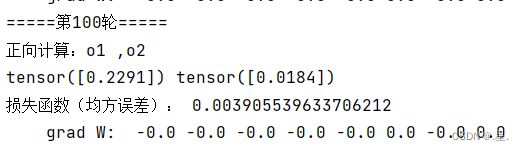

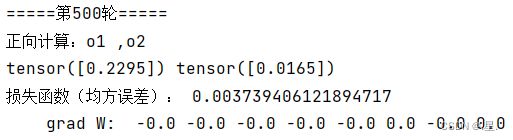

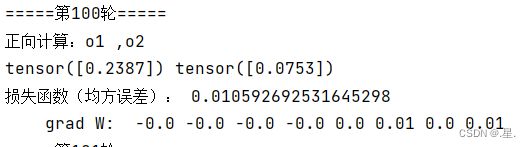

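Step size 1:

Step size 0.5:

Step size 5:

In these runs (step sizes 0.5, 1, and 5), a larger step size converged faster, and more training rounds gave a more accurate result.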
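This trend should not be read as a general rule: a larger step helps only while gradient descent stays stable, and a step that is too large can overshoot the minimum. The experiment is easy to repeat for other settings with a small helper. The sketch below is a plain-Python re-implementation of the same network (the names `forward` and `train` are hypothetical) that returns the loss after a given number of updates.

```python
import math


def sigmoid(z):
    # Two-branch form so that math.exp never receives a large positive argument.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def forward(w, x):
    w1, w2, w3, w4, w5, w6, w7, w8 = w
    h1 = sigmoid(w1 * x[0] + w3 * x[1])
    h2 = sigmoid(w2 * x[0] + w4 * x[1])
    o1 = sigmoid(w5 * h1 + w7 * h2)
    o2 = sigmoid(w6 * h1 + w8 * h2)
    return h1, h2, o1, o2


def train(step, epochs, w=(0.2, -0.4, 0.5, 0.6, 0.1, -0.5, -0.3, 0.8),
          x=(0.5, 0.3), y=(0.23, -0.07)):
    """Run `epochs` gradient-descent updates and return the resulting loss."""
    w = list(w)
    for _ in range(epochs):
        h1, h2, o1, o2 = forward(w, x)
        d_o1 = (o1 - y[0]) * o1 * (1 - o1)
        d_o2 = (o2 - y[1]) * o2 * (1 - o2)
        d_h1 = (d_o1 * w[4] + d_o2 * w[5]) * h1 * (1 - h1)
        d_h2 = (d_o1 * w[6] + d_o2 * w[7]) * h2 * (1 - h2)
        grads = [d_h1 * x[0], d_h2 * x[0], d_h1 * x[1], d_h2 * x[1],
                 d_o1 * h1, d_o2 * h1, d_o1 * h2, d_o2 * h2]
        w = [wi - step * gi for wi, gi in zip(w, grads)]
    _, _, o1, o2 = forward(w, x)
    return 0.5 * (o1 - y[0]) ** 2 + 0.5 * (o2 - y[1]) ** 2


for step in (0.5, 1, 5):
    for epochs in (10, 100):
        print(f"step={step}, epochs={epochs}: loss={train(step, epochs):.5f}")
```

Because the target y2 = -0.07 lies outside the range of sigmoid, the loss cannot reach zero however long the training runs; it can only approach ½ · 0.07² ≈ 0.00245.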

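3.7 Replace the initial values of w1-w8 with random numbers and compare with the "specified weights" results; observe, summarize, and discuss.

After the weights were replaced with random numbers, the mean squared error fell faster in this run.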
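How a random start compares with the specified weights depends on the particular draw, so it helps to fix the seed and to look at several seeds before generalizing. A minimal sketch using Python's standard `random` module follows. The function name and the sampling range [-1, 1] are assumptions made for illustration, since the distribution used for the run above is not stated.

```python
import random


def random_weights(seed, low=-1.0, high=1.0, n=8):
    """Draw n initial weights uniformly from [low, high], reproducibly."""
    rng = random.Random(seed)  # private generator; the global random state is untouched
    return [rng.uniform(low, high) for _ in range(n)]


for seed in range(3):
    print(seed, [round(w, 2) for w in random_weights(seed)])
```

Each list can then be used as w1 to w8 in either program, and the same seed always reproduces the same starting point.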

3.8 Replace the initial values of w1-w8 with 0; observe, summarize, and discuss.
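No output is recorded for this experiment, so the following is only a sketch of what the chain rule predicts, not a reproduction of a run. With every weight at zero, both hidden units receive the same input and the same gradient, so they remain identical after every update: w1 = w2, w3 = w4, w5 = w7, and w6 = w8. The plain-Python code below checks this. The function name, the step size of 1, and the 100 rounds are assumptions made for illustration.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_from(w, step=1.0, epochs=100, x=(0.5, 0.3), y=(0.23, -0.07)):
    """Return the weights [w1..w8] after `epochs` gradient-descent updates."""
    w = list(w)
    for _ in range(epochs):
        h1 = sigmoid(w[0] * x[0] + w[2] * x[1])
        h2 = sigmoid(w[1] * x[0] + w[3] * x[1])
        o1 = sigmoid(w[4] * h1 + w[6] * h2)
        o2 = sigmoid(w[5] * h1 + w[7] * h2)
        d_o1 = (o1 - y[0]) * o1 * (1 - o1)
        d_o2 = (o2 - y[1]) * o2 * (1 - o2)
        d_h1 = (d_o1 * w[4] + d_o2 * w[5]) * h1 * (1 - h1)
        d_h2 = (d_o1 * w[6] + d_o2 * w[7]) * h2 * (1 - h2)
        grads = [d_h1 * x[0], d_h2 * x[0], d_h1 * x[1], d_h2 * x[1],
                 d_o1 * h1, d_o2 * h1, d_o1 * h2, d_o2 * h2]
        w = [wi - step * gi for wi, gi in zip(w, grads)]
    return w


w_zero = train_from([0.0] * 8)
print("w1..w8 after training from zeros:", [round(v, 4) for v in w_zero])
print("hidden units still identical:",
      math.isclose(w_zero[0], w_zero[1]) and math.isclose(w_zero[2], w_zero[3]))
```

The weights do move away from zero, but the two hidden units never differ from each other, so the network behaves as if it had a single hidden unit. Random initialization avoids this symmetry.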

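3.9 Reflections

This assignment gave me a much firmer grasp of how backpropagation works and how to implement it in code; the parts of the theory I had not fully understood became clear after working through the MOOC material. On the implementation side, the choice of loss function, the step size, and the number of training rounds all affect both efficiency and the final result, and the best outcome comes from choosing settings that suit the problem.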

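References:

[2021-2022 Spring Semester] Artificial Intelligence - Assignment 2: Reproducing the Example Program

[2021-2022 Spring Semester] Artificial Intelligence - Assignment 3: Reproducing the Example Program, PyTorch Version

[Introduction to Artificial Intelligence: Models and Algorithms] MOOC 8.3 Error Backpropagation (BP) Example, Programming Verification, NumPy Version

[Introduction to Artificial Intelligence: Models and Algorithms] MOOC 8.3 Error Backpropagation (BP) Example, Programming Verification, PyTorch Version

[Introduction to Artificial Intelligence: Models and Algorithms] Error Backpropagation (BP) Example, Programming Verification (Corrected Version)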