Pytorch实战 | P9 YOLOv5-Backbone模块实现天气识别

● 本文为365天深度学习训练营 中的学习记录博客

● 参考文章:Pytorch实战 | 第P9天:YOLOv5-Backbone模块

● 原作者:K同学啊|接辅导、项目定制

一、我的环境

● 语言环境:Python3.8

● 编译器:pycharm

● 深度学习环境:Pytorch

● 数据来源:链接: https://pan.baidu.com/s/1SEfd8mvWt7BpzmWOeaIRkQ 提取码: gdie

二、代码实现

1、mian.py

# -*- coding: utf-8 -*-

import copy

import torch.utils.data

from torchvision import datasets, transforms

from model import train1, test1, YOLOv5f_backbone

import torch.nn as nn

# 一、导入和转换数据

img_path = './data/'

# 加载数据

train_transforms = transforms.Compose([

transforms.Resize([224, 224]),

transforms.ToTensor(),

transforms.Normalize(mean=[0.485, 0.456, 0.406],

std=[0.229, 0.224, 0.225])

])

total_data = datasets.ImageFolder(img_path, transform=train_transforms)

# 训练数据和测试数据切分

train_size = int(len(total_data) * 0.8)

test_size = len(total_data) - train_size

train_data, test_data = torch.utils.data.random_split(total_data, [train_size, test_size])

# 按批次处理

batch_size = 4

train_dl = torch.utils.data.DataLoader(train_data, batch_size=batch_size, shuffle=True)

test_dl = torch.utils.data.DataLoader(test_data, batch_size=batch_size, shuffle=True)

# 二、模型网络结构 model.py

# 三、训练函数 model.py train1()和test1()函数

device = 'cuda' if torch.cuda.is_available() else 'cpu'

print('using:{}'.format(device))

model = YOLOv5f_backbone().to(device)

optimizer = torch.optim.Adam(model.parameters(), lr=1e-4)

loss_fn = nn.CrossEntropyLoss()

epochs = 60

train_loss = []

test_loss = []

train_acc = []

test_acc = []

best_acc = 0

best_model = None

for epoch in range(epochs):

model.train()

epoch_train_acc, epoch_train_loss = train1(train_dl, model, loss_fn, optimizer, device)

model.eval()

epoch_test_acc, epoch_test_loss = test1(test_dl, model, loss_fn, device)

# 保存最佳模型到best_model

if epoch_test_acc > best_acc:

best_acc = epoch_test_acc

best_model = copy.deepcopy(model)

train_acc.append(epoch_train_acc)

train_loss.append(epoch_train_loss)

test_acc.append(epoch_test_acc)

test_loss.append(epoch_test_loss)

lr = optimizer.state_dict()['param_groups'][0]['lr']

template = ('Epoch:{:2d}, Train_acc:{:.1f}%, Train_loss:{:.3f}, Test_acc:{:.1f}, Test_loss:{:.3f}, Lr:{:.2E}')

print(

template.format(epoch + 1, epoch_train_acc * 100, epoch_train_loss, epoch_test_acc * 100, epoch_test_loss, lr))

model_path = './model/model.pth'

torch.save(best_model.state_dict(), model_path)

print('Done!')

# 四、模型评估

import matplotlib.pyplot as plt

# 隐藏警告

import warnings

warnings.filterwarnings("ignore") # 忽略警告信息

plt.rcParams['font.sans-serif'] = ['SimHei'] # 用来正常显示中文标签

plt.rcParams['axes.unicode_minus'] = False # 用来正常显示负号

plt.rcParams['figure.dpi'] = 100 # 分辨率

epochs_range = range(epochs)

plt.figure(figsize=(12, 3))

plt.subplot(1, 2, 1)

plt.plot(epochs_range, train_acc, label='Training Accuracy')

plt.plot(epochs_range, test_acc, label='Test Accuracy')

plt.legend(loc='lower right')

plt.title('Training and Validation Accuracy')

plt.subplot(1, 2, 2)

plt.plot(epochs_range, train_loss, label='Training Loss')

plt.plot(epochs_range, test_loss, label='Test Loss')

plt.legend(loc='upper right')

plt.title('Training and Validation Loss')

plt.show()

2、model.py

# -*- coding: utf-8 -*-

import warnings

import torch.nn.functional as F

import torch.nn as nn

import torch

def autopad(k, p=None):

# Pad to 'same

if p is None:

p = k // 2 if isinstance(k, int) else [x // 2 for x in k] # auto-pad

return p

class Conv(nn.Module):

# Standard convolution

def __init__(self, c1, c2, k=1, s=1, p=None, g=1, act=True): # ch_in, ch_out, kernel, stride, padding, groups

super(Conv, self).__init__()

self.conv = nn.Conv2d(c1, c2, k, s, autopad(k, p), groups=g, bias=False)

self.bn = nn.BatchNorm2d(c2)

self.act = nn.SiLU() if act is True else (act if isinstance(act, nn.Module) else nn.Identity())

def forward(self, x):

return self.act(self.bn(self.conv(x)))

class Bottleneck(nn.Module):

# Standard bottleneck

def __init__(self, c1, c2, shortcut=True, g=1, e=0.5): # ch_in, ch_out, shortcut, groups, expansion

super().__init__()

c_ = int(c2 * e) # hidden channels

self.cv1 = Conv(c1, c_, 1, 1)

self.cv2 = Conv(c_, c2, 3, 1, g=g)

self.add = shortcut and c1 == c2

def forward(self, x):

return x + self.cv2(self.cv1(x)) if self.add else self.cv2(self.cv1(x))

class C3(nn.Module):

def __init__(self, c1, c2, n=1, shortcut=True, g=1, e=0.5):

super(C3, self).__init__()

c_ = int(c2 * e) # hidden channels

self.cv1 = Conv(c1, c_, 1, 1)

self.cv2 = Conv(c1, c_, 1, 1)

self.cv3 = Conv(2 * c_, c2, 1) # act=FReLU(c2)

self.m = nn.Sequential(*(Bottleneck(c_, c_, shortcut, g, e=1.0) for _ in range(n)))

def forward(self, x):

a = self.m(self.cv1(x))

b = self.cv2(x)

return self.cv3(torch.cat((self.m(self.cv1(x)), self.cv2(x)), dim=1))

class SPPF(nn.Module):

def __init__(self, c1, c2, k=5):

super(SPPF, self).__init__()

c_ = c1 // 2 # hidden channels

self.cv1 = Conv(c1, c_, 1, 1)

self.cv2 = Conv(c_ * 4, c2, 1, 1)

self.m = nn.MaxPool2d(kernel_size=k, stride=1, padding=k // 2)

def forward(self, x):

x = self.cv1(x)

with warnings.catch_warnings():

warnings.simplefilter('ignore')

y1 = self.m(x)

y2 = self.m(y1)

return self.cv2(torch.cat([x, y1, y2, self.m(y2)], 1))

class YOLOv5f_backbone(nn.Module):

def __init__(self):

super(YOLOv5f_backbone, self).__init__()

self.Conv_1 = Conv(3, 64, 3, 2, 2)

self.Conv_2 = Conv(64, 128, 3, 2)

self.C3_3 = C3(128, 128)

self.Conv_4 = Conv(128, 256, 3, 2)

self.C3_5 = C3(256, 256)

self.Conv_6 = Conv(256, 512, 3, 2)

self.C3_7 = C3(512, 512)

self.Conv_8 = Conv(512, 1024, 3, 2)

self.C3_9 = C3(1024, 1024)

self.SPPF = SPPF(1024, 1024, 5)

self.classifier = nn.Sequential(

nn.Linear(in_features=65536, out_features=100),

nn.ReLU(),

nn.Linear(in_features=100, out_features=4)

)

def forward(self, x):

x = self.Conv_1(x)

x = self.Conv_2(x)

x = self.C3_3(x)

x = self.Conv_4(x)

x = self.C3_5(x)

x = self.Conv_6(x)

x = self.C3_7(x)

x = self.Conv_8(x)

x = self.C3_9(x)

x = self.SPPF(x)

x = torch.flatten(x, start_dim=1)

x = self.classifier(x)

return x

def train1(dataloader, model, fn_loss, optimizer, device):

size = len(dataloader.dataset)

batch_num = len(dataloader)

train_loss, train_acc = 0, 0

for X, y in dataloader:

X, y = X.to(device), y.to(device)

pred = model(X)

loss = fn_loss(pred, y)

optimizer.zero_grad()

loss.backward()

optimizer.step()

train_acc += (pred.argmax(1) == y).type(torch.float).sum().item()

train_loss += loss.item()

train_acc /= size

train_loss /= batch_num

return train_acc, train_loss

def test1(dataloader, model, fn_loss, device):

size = len(dataloader.dataset)

num_batchs = len(dataloader)

test_acc, test_loss = 0, 0

# 当不训练时停止梯度更新

with torch.no_grad():

for X, y in dataloader:

X, y = X.to(device), y.to(device)

pred = model(X)

loss = fn_loss(pred, y)

test_loss += loss.item()

test_acc += (pred.argmax(1) == y).type(torch.float).sum().item()

test_acc /= size

test_loss /= num_batchs

return test_acc, test_loss

三、遇到的主要问题

1、把__init__写成了__int__,报错TypeError: init() takes 1 positional argument but 3 were given,找了半天才发现问题

2、forward写成了farward,导致报错

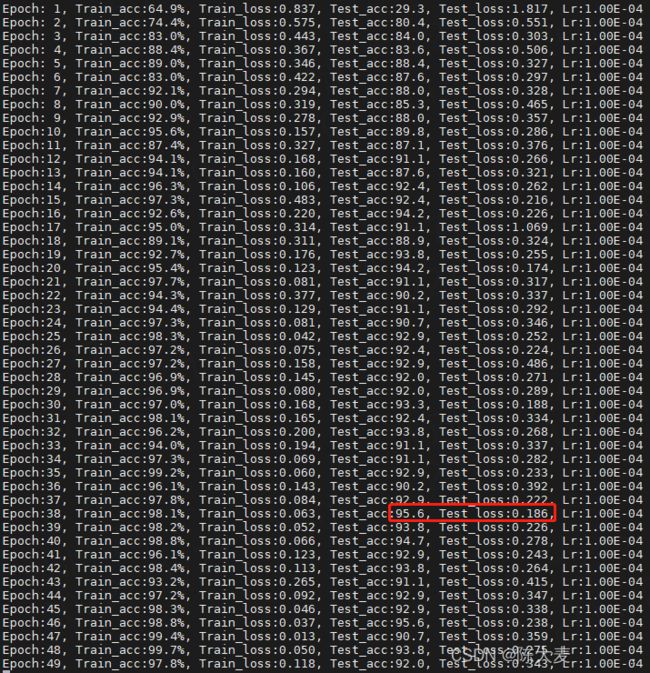

3、最终跑的结果