Pytorch学习笔记(三):神经网络基本骨架

一、Containers

Module:给所有神经网络提供基本骨架,输入的参数x会经过一次卷积,一次非线性,再进行一次卷积与非线性才会得到输出。

神经网络基本结构的使用:

1. 卷积层

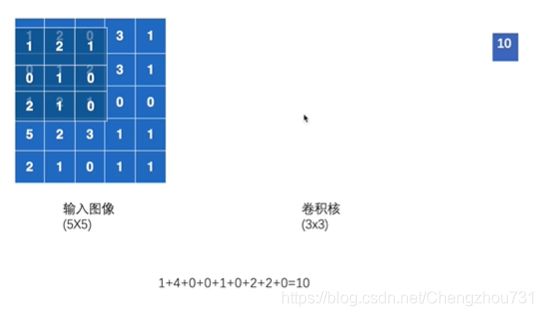

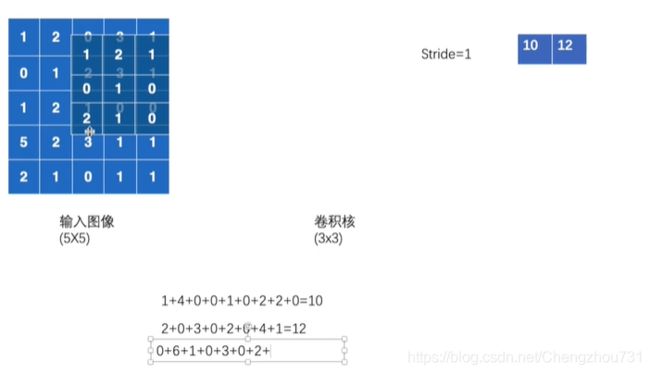

接下来卷积核会在图像上逐渐移动,移动的步长可以通过stride参数进行调整。输入的参数可以是一个数,也可以通过元祖来设置横向和纵向移动的步长。

代码实现:

##----nn.conv2d

import torch

import torchvision

from torch.utils.data import DataLoader

from torch.nn import Conv2d

dataset = torchvision.datasets.CIFAR10("dataset",train = False , transform = torchvision.transforms.ToTensor(),download= True)

dataloader = DataLoader(dataset, batch_size= 64)

class Test(nn.Module):

def __init__(self):

super().__init__()

self.conv1 = Conv2d(in_channels=3,out_channels=6,kernel_size=3,stride=1,padding=0) # 网络中有卷积层

def forward(self,x):

x = self.conv1(x)

return x

test = Test()

from torch.utils.tensorboard import SummaryWriter

writer = SummaryWriter("logs")

step = 0

for data in dataloader:

imgs,targets = data

output = test(imgs)

print(imgs.shape)

print(output.shape)

# torch.Size([64, 3, 32, 32])

writer.add_images("input",imgs,step)

# torch.Size([64, 6, 30, 30])

output = torch.reshape(output,(-1,3,30,30))

writer.add_images("output",output,step)

step += 1可以根据以下公式,确定padding和dilation去进行实现。

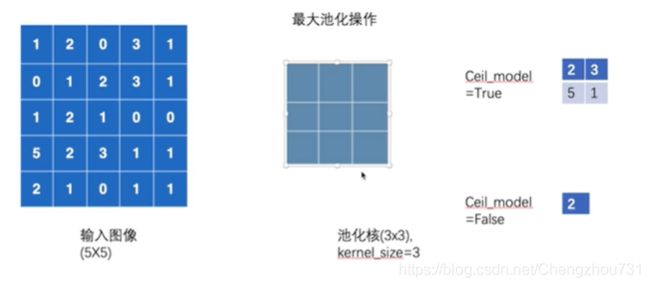

2. 池化层:池化层夹在连续的卷积层中间, 用于压缩数据和参数的量,减小过拟合。

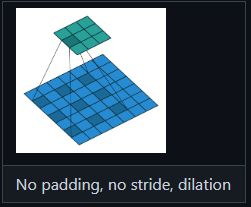

dilation: 每一个核当中的元素与核当中另外一个元素会有空格,也称其为空洞卷积

ceil_mode: 当设置为True时,则为ceil模式

最大池化操作:减少数据量,训练速度更快

代码实现:

## nn_maxpool

import torch

from torch import nn

from torch.nn import MaxPool2d

input = torch.tensor([[1,2,0,3,1],

[0,1,2,3,1],

[1,2,1,0,0],

[5,2,3,1,1],

[2,1,0,1,1]],dtype = torch.float32) # 设置为浮点数

input = torch.reshape(input,(-1,1,5,5))

class model(nn.Module):

def __init__(self):

super().__init__()

self.maxpool1 = MaxPool2d(kernel_size = 3, ceil_mode = True)

def forward(self,input):

output = self.maxpool1(input)

return output

Model = model()

output = Model(input)

print(output)

dataset = torchvision.datasets.CIFAR10("dataset",train = False , transform = torchvision.transforms.ToTensor(),download= True)

dataloader = DataLoader(dataset, batch_size= 64)

writer = SummaryWriter("logs_maxpool")

step = 0

for data in dataloader:

imgs,target = data

writer.add_images("input",imgs,step)

output = Model(imgs)

writer.add_images("output",imgs,step)

print(imgs.shape) # 通道数是没有进行变化的

print(output.shape)

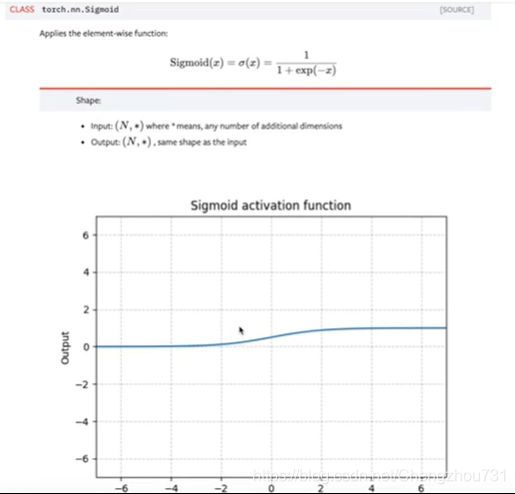

step += 13. 非线性激活:给神经网络中引入非线性特质

RELU:当Input>0时,保持原函数值;当Input<0时,取值变为0。

Sigmoid:

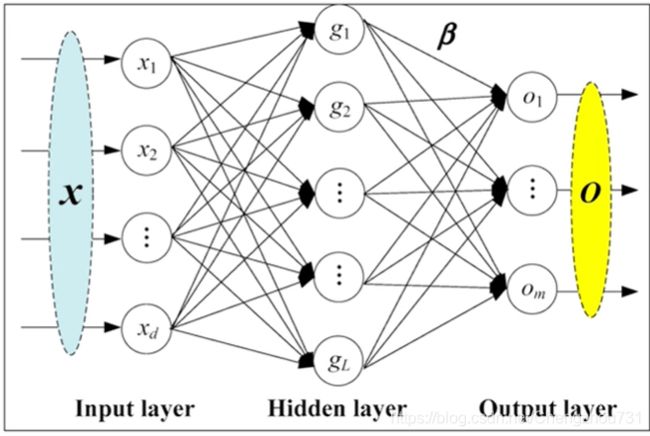

4. 线性层及其他层介绍

CLASS torch.nn.Linear(in_features,out_features,bias = True)

in_features:输入特征 x1, x2, x3

out_features:输出特征g1, g2, g3

bias:决定是否附加bias

例:对于一张5 * 5的图片,将其变成1 * 3的图片

## nn.linear

from torch import nn

import torch

import torchvision

from torch.utils.data import DataLoader

from torch.nn import Linear

dataset = torchvision.datasets.CIFAR10("dataset",train = False , transform = torchvision.transforms.ToTensor(),download= True)

dataloader = DataLoader(dataset, batch_size= 64,drop_last= True)

class linear(nn.Module):

def __init__(self):

super().__init__()

self.linear1 = Linear(196608,10)

def forward(self,input):

output = self.linear1(input)

return output

linear = linear()

for data in dataloader:

imgs,targets = data

# output = torch.reshape(imgs,(1,1,1,-1))

output = torch.flatten(imgs) # 与上方函数作用相同

output = linear(output)

print(output.shape)5. Sequential:A sequential container.Modules will be added to it in the order they are passed in the constructor. Alternatively, an ordered dict of modules can also be passed in.

## 搭建一个简单的神经网络

from torch import nn

from torch.nn import Conv2d,MaxPool2d,Flatten,Linear

class model(nn.Module):

def __init__(self):

super().__init__()

self.model1 = nn.Sequential(

Conv2d(3,32,5,1,2),

MaxPool2d(2),

Conv2d(32,32,5,1,2),

MaxPool2d(2),

Conv2d(32,64,5,1,2),

MaxPool2d(2),

Flatten(),

Linear(1024,64),

Linear(64,10)

) # Sequential 简洁了后续操作

def forward(self,input):

output = self.model1(input)

return output

model = model()

x = torch.ones([64,3,32,32])

output = model(x)

print(output.shape)

from torch.utils.tensorboard import SummaryWriter

writer = SummaryWriter("logs")

writer.add_graph(model,input)

writer.close()6. 损失函数与反向传播:计算实际输出和目标之间的差距,为我们更新输出提供一定的依据(反向传播)

L1loss:绝对值损失

import torch

inputs = torch.tensor([1,2,3],dtype = torch.float32)

targets = torch.tensor([1,2,5],dtype = torch.float32)

inputs = torch.reshape(inputs,(1,1,1,3))

targets = torch.reshape(targets,(1,1,1,3))

loss = torch.nn.L1Loss()

loss(inputs,targets)MSEloss:均方误差

import torch

from torch import nn

inputs = torch.tensor([1,2,3],dtype = torch.float32)

targets = torch.tensor([1,2,5],dtype = torch.float32)

inputs = torch.reshape(inputs,(1,1,1,3))

targets = torch.reshape(targets,(1,1,1,3))

# loss = torch.nn.L1Loss()

loss_mse = nn.MSELoss()

# loss(inputs,targets)

loss_mse(inputs,targets)CrossEntropyLoss:交叉熵误差——分类问题

x = torch.tensor([0.1,0.2,0.3])

x = torch.reshape(x,(1,3)) # batch size = 1 , 有3类

y = torch.tensor([1])

loss_cross = nn.CrossEntropyLoss()

result_cross = loss_cross(x,y)

print(result_cross)反向传播:计算实际输出和目标之间的差距,为我们更新输出提供一定的依据。

优化器:

para:所需传入的模型参数

lr:learning rate 学习速率

## Optimizer

import torch

loss = nn.CrossEntropyLoss()

optim = torch.optim.SGD(model.parameters(),lr = 0.01)

for epoch in range(20):

running_loss = 0.0

for data in dataloader:

imgs,targets = data

outputs = model(imgs)

result_loss = loss(outputs,targets)

optim.zero_grad()

result_loss.backward() #允许其可以反向传播

optim.step()

running_loss = running_loss + result_loss

print(running_loss)