Hardware Decoding of RTSP Streams with GStreamer, with Code

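Background

Our application has to do its own decoding. Software decoding uses too much CPU, so we need hardware acceleration, that is, hardware decoding. Either FFmpeg or GStreamer can do the job; we chose GStreamer.

System environment: Ubuntu 20.04

What the code does: hardware-decodes the H.264 video of an RTSP stream and retrieves the decoded data.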

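1. Introduction to GStreamer

GStreamer is an open-source framework for building streaming media applications. Its architecture is based on plugins and pipelines: every functional module in the framework is implemented as a pluggable element that can easily be added to any pipeline. Because all plugins exchange data through the same pipeline mechanism, it is easy to assemble a full-featured streaming media application from existing plugins.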

2. Basic GStreamer Concepts

2.1 Element

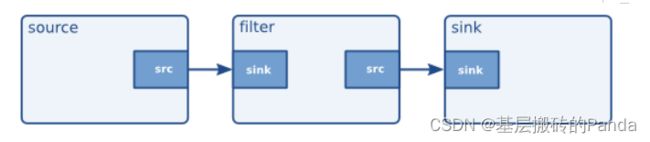

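From GStreamer's own point of view, a GstElement can be described as a black box with specific properties. It interacts with the outside world through link points (pads, in GStreamer terms) and exposes its characteristics or capabilities to the rest of the framework.

An element implements a single function (such as reading a file, decoding, or output). A program creates several elements and links them in order to form a complete pipeline.

Based on their function, GstElements fall into the following categories: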

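- Source element: a data source with only an output end. It can only produce data for the pipeline to consume and does no processing of its own. A typical example is an audio capture unit, which reads raw audio data from the sound card and supplies it to other modules.

- Filter element: has both an input end and an output end. It takes data from its input, processes it, and passes the result to its output. A typical example is an audio encoder, which receives audio data, encodes it with a particular compression algorithm, and hands the encoded result to other modules.

- Sink element: a receiver with only an input end. It can only consume data and is the endpoint of a media pipeline. A typical example is an audio playback unit, which writes the data it receives to the sound card; this is usually the last stage of audio processing.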

3. General Steps for Encoding and Decoding with GStreamer

- Create the required elements

- Add the created elements to the pipeline with gst_bin_add_many

- Link the elements

- Set the pipeline to the Playing state

- Set the sample callback

4. Complete Code Example

#include