Unusual Deep Learning (水很深的深度学习): Convolutional Neural Networks

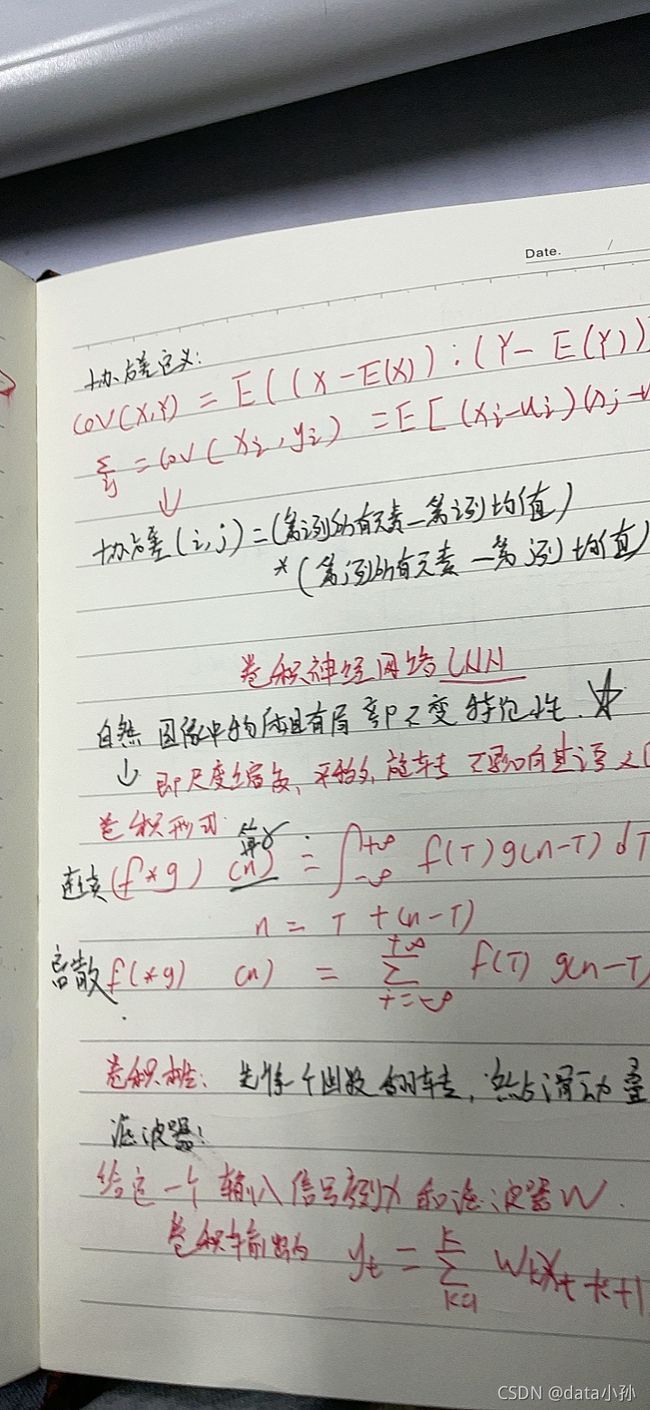

What is convolution

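Flip one of the two functions, then slide it across the other, multiplying the overlapping values and summing them at each position.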
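To make the "flip and slide" description concrete, here is a minimal pure-Python sketch of 1D discrete convolution, `(f * g)[n] = sum over k of f[k] * g[n - k]`. The function name `convolve` is illustrative only. The index `n - k` is where the flip happens, and `n` is how far the flipped function has slid. For the same inputs it should agree with NumPy's `numpy.convolve` in its default "full" mode.

```python
def convolve(f, g):
    """Full 1D discrete convolution: (f * g)[n] = sum_k f[k] * g[n - k]."""
    out = [0] * (len(f) + len(g) - 1)
    for n in range(len(out)):          # n: how far g has slid along f
        for k in range(len(f)):
            if 0 <= n - k < len(g):    # g[n - k]: g is read backwards (flipped)
                out[n] += f[k] * g[n - k]
    return out


print(convolve([1, 2, 3], [0, 1, 2]))  # [0, 1, 4, 7, 6]
```

The result matches multiplying the polynomials `1 + 2x + 3x^2` and `x + 2x^2`, which is another way to see that convolution sums every pairing of indices that add up to `n`.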

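Reference: "The Easiest-to-Understand Explanation of Convolution" (最容易理解的对卷积(convolution)的解释), bitcarmanlee's blog on CSDN

This article is a good way to understand convolution in more depth.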

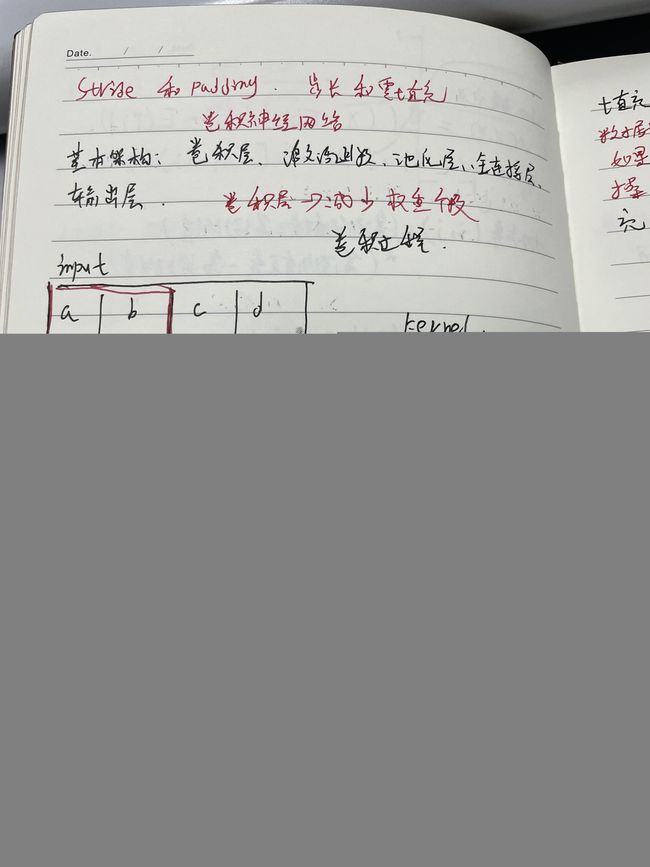

The convolution operation

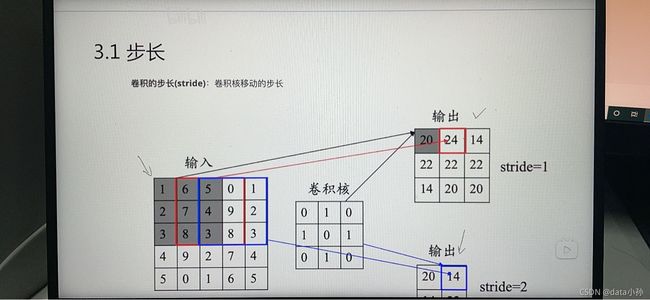

Stride: the number of pixels the kernel moves at each step.
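A minimal sketch of the 2D convolution operation with stride and zero padding, written in plain Python on nested lists (the name `conv2d` is illustrative, not a library function). Like the convolution layers in common frameworks (for example PyTorch's `nn.Conv2d`), it does not flip the kernel, so strictly speaking it computes cross-correlation. The output size follows `floor((H + 2p - k) / s) + 1`, which is why a stride of 2 roughly halves the output.

```python
def conv2d(image, kernel, stride=1, padding=0):
    """2D convolution as used in CNN layers (no kernel flip, i.e. cross-correlation)."""
    h, w = len(image), len(image[0])
    kh, kw = len(kernel), len(kernel[0])
    # Zero-pad the input on all four sides
    padded = [[0] * (w + 2 * padding) for _ in range(h + 2 * padding)]
    for i in range(h):
        for j in range(w):
            padded[i + padding][j + padding] = image[i][j]
    # Output size: floor((H + 2p - k) / s) + 1
    out_h = (h + 2 * padding - kh) // stride + 1
    out_w = (w + 2 * padding - kw) // stride + 1
    out = [[0] * out_w for _ in range(out_h)]
    for i in range(out_h):
        for j in range(out_w):
            top, left = i * stride, j * stride  # the kernel jumps `stride` pixels per step
            out[i][j] = sum(padded[top + a][left + b] * kernel[a][b]
                            for a in range(kh) for b in range(kw))
    return out


image = [[4 * r + c for c in range(4)] for r in range(4)]  # 4x4 image, values 0..15
kernel = [[1, 0],
          [0, 1]]                                          # 2x2 kernel: sums a diagonal pair
print(conv2d(image, kernel, stride=1))  # [[5, 7, 9], [13, 15, 17], [21, 23, 25]]
print(conv2d(image, kernel, stride=2))  # [[5, 9], [21, 25]]
```

With `padding=1` and stride 1 the same 4×4 input gives a 5×5 output, since `(4 + 2 - 2) // 1 + 1 = 5`.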

Other types of convolution

Transposed convolution / deconvolution

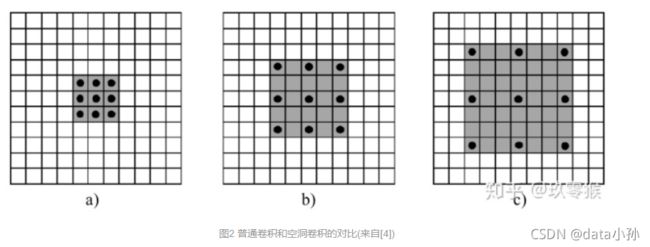
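Transposed convolution is mainly used for upsampling. Despite the name "deconvolution", it is not the inverse of convolution: it applies the transpose of the convolution's matrix. Below is a minimal 1D sketch, assuming no padding (function names are illustrative): each input value stamps a scaled copy of the kernel into the output, `stride` positions apart, so the output length is `(n - 1) * s + k`. The last lines check the transpose relationship numerically through the identity `<conv(y), x> == <y, conv_transpose(x)>`.

```python
def conv1d(y, kernel, stride=1):
    """Ordinary strided 1D convolution (cross-correlation, no padding)."""
    k = len(kernel)
    return [sum(y[i * stride + j] * kernel[j] for j in range(k))
            for i in range((len(y) - k) // stride + 1)]


def conv_transpose1d(x, kernel, stride=1):
    """1D transposed convolution (no padding): output length (n - 1) * stride + k."""
    out = [0] * ((len(x) - 1) * stride + len(kernel))
    for i, v in enumerate(x):
        for j, w in enumerate(kernel):
            out[i * stride + j] += v * w   # overlapping stamps are summed
    return out


x = [1, 2, 3]
w = [1, 1, 1]
up = conv_transpose1d(x, w, stride=2)
print(up)  # [1, 1, 3, 2, 5, 3, 3]: length 3 upsampled to length 7

# Transpose check: <conv1d(y), x> == <y, conv_transpose1d(x)> for any y of length 7
y = [1, 0, 2, 0, 1, 1, 0]
lhs = sum(a * b for a, b in zip(conv1d(y, w, stride=2), x))
rhs = sum(a * b for a, b in zip(y, up))
print(lhs == rhs)  # True
```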

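Dilated (atrous) convolution

Dilated convolution enlarges the receptive field without adding parameters, and with suitable padding it does not shrink the output feature map.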

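Here is my own naive way of understanding it.

On the dilation rate: compare the three figures above. Going from (a) to (b), imagine the top-left kernel element is a chess piece; to reach the middle position it has to move two steps instead of one. In (c) it moves three steps. The dilation rate is that number of steps, i.e. the spacing between adjacent kernel elements. I'm not sure whether this is the best way to put it.

All three figures use the same 3×3 kernel, yet (b) and (c) cover the same receptive field as a 5×5 and a 7×7 kernel respectively.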
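The "3×3 acting like 5×5 or 7×7" observation follows from the effective kernel size `k + (k - 1) * (d - 1)` for a kernel of size k with dilation rate d. The sketch below (plain Python, 1D for brevity, names illustrative) computes that size and runs a dilated convolution with padding `d * (k - 1) / 2`, which keeps the output as long as the input; it assumes an odd kernel size. Feeding in a single impulse shows the three taps landing d positions apart.

```python
def effective_kernel_size(k, d):
    """Span covered by a k-tap kernel whose taps are d positions apart."""
    return k + (k - 1) * (d - 1)


def dilated_conv1d(x, kernel, d=1):
    """Stride-1 dilated convolution (cross-correlation) that keeps len(output) == len(x)."""
    k = len(kernel)
    assert k % 2 == 1, "this sketch assumes an odd kernel size"
    pad = d * (k - 1) // 2                  # 'same' padding for dilation d
    xp = [0] * pad + list(x) + [0] * pad
    # Taps read x[i], x[i + d], x[i + 2d], ...: same k weights, wider view
    return [sum(kernel[j] * xp[i + j * d] for j in range(k)) for i in range(len(x))]


for d in (1, 2, 3):
    print(d, effective_kernel_size(3, d))   # prints 3, 5, 7 for d = 1, 2, 3

impulse = [0, 0, 0, 0, 1, 0, 0, 0, 0]
print(dilated_conv1d(impulse, [1, 1, 1], d=2))  # [0, 0, 1, 0, 1, 0, 1, 0, 0]
```

The number of weights stays at 3 for every d; only the spacing between them changes.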

Reference: "Mastering Dilated Convolutions" (吃透空洞卷积(Dilated Convolutions)), 程序客栈 (@qq704783475), CSDN blog

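Convolutional neural networks

A convolutional neural network is made up of convolutional layers, activation functions, pooling layers, fully connected layers, and an output layer.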

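Feature maps

Shallow convolutional layers: extract basic image features such as edges, orientations, and textures.

Deep convolutional layers: extract higher-order features, in which high-level semantic patterns emerge.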
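Why shallow layers end up acting as edge detectors can be illustrated with a hand-set kernel. The sketch below applies a Sobel-style kernel (chosen by hand here, not learned weights) to a tiny image with a vertical dark-to-bright boundary; the response is non-zero only where the 3×3 window straddles the edge. Learned first-layer filters often look similar, but this is an illustration, not a trained network, and `conv2d_valid` is an illustrative name.

```python
def conv2d_valid(image, kernel):
    """Stride-1 convolution (cross-correlation) without padding."""
    kh, kw = len(kernel), len(kernel[0])
    return [[sum(image[i + a][j + b] * kernel[a][b]
                 for a in range(kh) for b in range(kw))
             for j in range(len(image[0]) - kw + 1)]
            for i in range(len(image) - kh + 1)]


# 5x6 image: dark (0) on the left half, bright (1) on the right half
image = [[0, 0, 0, 1, 1, 1] for _ in range(5)]

# Sobel-style kernel that responds to a left-to-right increase in brightness
sobel_x = [[-1, 0, 1],
           [-2, 0, 2],
           [-1, 0, 1]]

for row in conv2d_valid(image, sobel_x):
    print(row)  # [0, 4, 4, 0] on every row: strong response only at the edge
```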

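Feature mapping

The result of applying a convolution to an image is called a feature map.

Pooling layer: replaces the output at each location with a summary statistic of the neighbouring outputs. The most common pooling operations are max pooling and average pooling.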
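A minimal sketch of max and average pooling over 2×2 windows with stride 2, in plain Python (`pool2d` is an illustrative name). Pooling has no learnable weights; it only summarizes each window, shrinking the feature map here from 4×4 to 2×2.

```python
def pool2d(x, size=2, stride=2, mode="max"):
    """Max or average pooling over size x size windows, without padding."""
    if mode == "max":
        agg = max
    elif mode == "avg":
        agg = lambda vals: sum(vals) / len(vals)
    else:
        raise ValueError("mode must be 'max' or 'avg'")
    out_h = (len(x) - size) // stride + 1
    out_w = (len(x[0]) - size) // stride + 1
    return [[agg([x[i * stride + a][j * stride + b]
                  for a in range(size) for b in range(size)])
             for j in range(out_w)]
            for i in range(out_h)]


fmap = [[1, 3, 2, 0],
        [5, 6, 1, 2],
        [0, 2, 4, 4],
        [1, 1, 3, 8]]
print(pool2d(fmap, mode="max"))  # [[6, 2], [2, 8]]
print(pool2d(fmap, mode="avg"))  # [[3.75, 1.25], [1.0, 4.75]]
```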

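What pooling layers do

Reduce the size of the feature maps, and therefore the number of parameters and the amount of computation in later layers, which helps prevent overfitting.

Make the network more robust to small deformations, distortions, and translations of the input image.

Help obtain an image representation that is approximately invariant to changes in scale.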
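The robustness to small translations is only approximate. This small 1D sketch (illustrative names, stride equal to the window size) shows max pooling giving the same output when a strong activation shifts by one position inside a pooling window, and a different output when the shift crosses a window boundary.

```python
def max_pool1d(x, size=2, stride=2):
    """1D max pooling without padding."""
    return [max(x[i:i + size]) for i in range(0, len(x) - size + 1, stride)]


a = [0, 9, 0, 0, 0, 0]  # strong activation at position 1
b = [9, 0, 0, 0, 0, 0]  # same activation shifted one step left (same window)
c = [0, 0, 9, 0, 0, 0]  # shifted one step right (crosses into the next window)
print(max_pool1d(a))  # [9, 0, 0]
print(max_pool1d(b))  # [9, 0, 0]  unchanged
print(max_pool1d(c))  # [0, 9, 0]  changed
```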

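Fully connected layer:

Flattens the feature maps output by the convolutional and pooling layers into a vector and maps it to a lower-dimensional representation.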
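A sketch of what the fully connected layer does, in plain Python (names are illustrative): flatten the C×H×W feature maps channel by channel (the same order as `x.view(x.size(0), -1)` on an N×C×H×W tensor), then compute `y = Wx + b`. The parameter count `in * out + out` shows why this layer dominates model size: VGG16's first fully connected layer maps 512×7×7 = 25088 values to 4096.

```python
def flatten(fmaps):
    """C x H x W nested lists -> flat vector, channel-major."""
    return [v for channel in fmaps for row in channel for v in row]


def linear(x, weight, bias):
    """y = W x + b; weight holds one row of in_features values per output unit."""
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weight, bias)]


def linear_params(in_features, out_features):
    """Weights plus biases of a fully connected layer."""
    return in_features * out_features + out_features


# Two 2x2 feature maps -> vector of 8 values -> 3 outputs
fmaps = [[[1, 2], [3, 4]], [[5, 6], [7, 8]]]
x = flatten(fmaps)                      # [1, 2, 3, 4, 5, 6, 7, 8]
weight = [[1] * 8, [0] * 8, [1, -1] * 4]
bias = [0, 1, 0]
print(linear(x, weight, bias))          # [36, 1, -4]

# First fully connected layer of VGG16: 512 feature maps of 7x7 -> 4096 units
print(linear_params(512 * 7 * 7, 4096))  # 102764544
```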

Output layer: a softmax function for classification problems

For regression problems: a linear function
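A numerically stable softmax in plain Python, as a sketch: subtracting the maximum score before exponentiating leaves the result unchanged but avoids overflow for large scores. For regression the output layer simply returns `Wx + b` without any squashing, so it needs no extra code.

```python
import math


def softmax(logits):
    """Turn raw class scores into probabilities that sum to 1."""
    m = max(logits)                           # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


probs = softmax([2.0, 1.0, 0.1])
print([round(p, 3) for p in probs])  # [0.659, 0.242, 0.099]
print(softmax([1000, 1000]))         # [0.5, 0.5], no overflow
```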

Classic convolutional neural networks

VGG, LeNet

ResNet, AlexNet, Inception Net

LeNet-5 (not fully understood yet; I will keep updating this with my own understanding)

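Input layer: input images are resized (normalized) to 32×32

C1: convolutional layer

S2: pooling (subsampling) layer

C3: convolutional layer

S4: pooling layer

C5: convolutional layer

F6: fully connected layer

Output layer: fully connected layer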

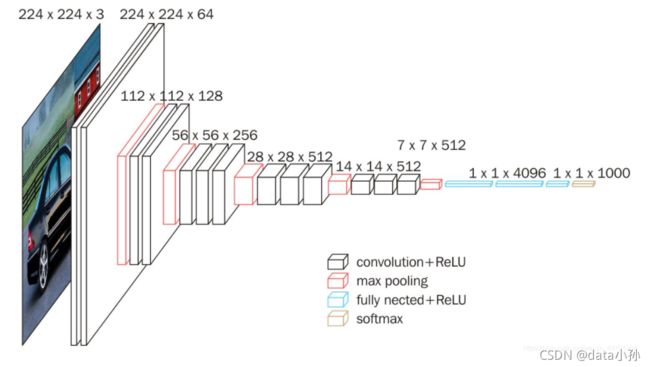
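A shape trace of the LeNet-5 layers listed above, as a sketch. The layer sizes used here (6, 16 and 120 feature maps with 5×5 kernels, 2×2 subsampling, then 84 and 10 units) are the commonly cited configuration from the original LeNet-5 paper, not taken from these notes. The trace ignores details that do not affect shapes, such as the trainable coefficients in S2/S4, the partial connectivity of C3, and the RBF output units; `out_size` and `trace_lenet5` are illustrative names.

```python
def out_size(size, kernel, stride=1, padding=0):
    """Spatial output size of a convolution or pooling window."""
    return (size + 2 * padding - kernel) // stride + 1


# (name, layer type, feature maps or units, kernel/window size)
LENET5 = [
    ("C1", "conv", 6, 5),
    ("S2", "pool", 6, 2),
    ("C3", "conv", 16, 5),
    ("S4", "pool", 16, 2),
    ("C5", "conv", 120, 5),
    ("F6", "fc", 84, None),
    ("Output", "fc", 10, None),
]


def trace_lenet5(size=32, channels=1):
    """Return (layer name, output shape) pairs for a size x size input."""
    shapes = []
    for name, kind, n, k in LENET5:
        if kind == "conv":      # 5x5 convolution, stride 1, no padding
            size, channels = out_size(size, k), n
            shape = (channels, size, size)
        elif kind == "pool":    # 2x2 subsampling with stride 2
            size = out_size(size, k, stride=k)
            shape = (channels, size, size)
        else:                   # fully connected: a vector of n units
            shape = (n,)
        shapes.append((name, shape))
    return shapes


for name, shape in trace_lenet5():
    print(name, shape)  # C1 (6, 28, 28), S2 (6, 14, 14), ..., Output (10,)
```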

VGGNet (implemented following a PyTorch deep learning e-book)

https://blog.csdn.net/weixin_44791964/article/details/102585038?ops_request_misc=%257B%2522request%255Fid%2522%253A%2522163799975316780255242987%2522%252C%2522scm%2522%253A%252220140713.130102334.pc%255Fall.%2522%257D&request_id=163799975316780255242987&biz_id=0&utm_medium=distribute.pc_search_result.none-task-blog-2~all~first_rank_ecpm_v1~rank_v31_ecpm-1-102585038.pc_search_result_cache&utm_term=vgg16&spm=1018.2226.3001.4187
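Before the PyTorch code, a quick arithmetic check of the layout it is meant to implement. The configuration below is the standard VGG16 one (13 3×3 convolutions in five blocks, then three fully connected layers); it is assumed here because the classifier's `512*7*7` input implies 224×224 images and five 2×2 poolings. The sketch traces the final feature-map shape and counts weights plus biases, with 1000 output classes as for ImageNet; `VGG16_CFG` and `vgg16_summary` are illustrative names.

```python
# Numbers are output channels of 3x3 convs (stride 1, padding 1);
# "M" is a 2x2 max pool with stride 2.
VGG16_CFG = [64, 64, "M", 128, 128, "M", 256, 256, 256, "M",
             512, 512, 512, "M", 512, 512, 512, "M"]


def vgg16_summary(size=224, in_ch=3, num_classes=1000):
    """Return (final feature-map shape, conv parameters, total parameters)."""
    params, ch = 0, in_ch
    for v in VGG16_CFG:
        if v == "M":
            size //= 2                      # 2x2 pool halves height and width
        else:
            params += ch * v * 3 * 3 + v    # 3x3 weights + biases
            ch = v                          # padding=1 keeps the spatial size
    conv_params = params
    for fin, fout in [(ch * size * size, 4096), (4096, 4096), (4096, num_classes)]:
        params += fin * fout + fout         # fully connected weights + biases
    return (ch, size, size), conv_params, params


print(vgg16_summary())  # ((512, 7, 7), 14714688, 138357544)
```

Roughly 123.6M of the 138.4M parameters sit in the three fully connected layers.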


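```python
# Understanding and implementing VGG (VGG16 configuration)
import torch.nn as nn


class VGG(nn.Module):
    def __init__(self, num_classes=1000):
        super(VGG, self).__init__()
        # Feature extractor: 13 conv layers (3x3, padding 1) in 5 blocks,
        # each block ending with a 2x2 max pool that halves height and width
        self.features = nn.Sequential(
            # Block 1: 3 -> 64 channels
            nn.Conv2d(3, 64, kernel_size=3, padding=1),
            nn.ReLU(True),
            nn.Conv2d(64, 64, kernel_size=3, padding=1),
            nn.ReLU(True),
            nn.MaxPool2d(kernel_size=2, stride=2),
            # Block 2: 64 -> 128 channels
            nn.Conv2d(64, 128, kernel_size=3, padding=1),
            nn.ReLU(True),
            nn.Conv2d(128, 128, kernel_size=3, padding=1),
            nn.ReLU(True),
            nn.MaxPool2d(kernel_size=2, stride=2),
            # Block 3: 128 -> 256 channels
            nn.Conv2d(128, 256, kernel_size=3, padding=1),
            nn.ReLU(True),
            nn.Conv2d(256, 256, kernel_size=3, padding=1),
            nn.ReLU(True),
            nn.Conv2d(256, 256, kernel_size=3, padding=1),
            nn.ReLU(True),
            nn.MaxPool2d(kernel_size=2, stride=2),
            # Block 4: 256 -> 512 channels
            nn.Conv2d(256, 512, kernel_size=3, padding=1),
            nn.ReLU(True),
            nn.Conv2d(512, 512, kernel_size=3, padding=1),
            nn.ReLU(True),
            nn.Conv2d(512, 512, kernel_size=3, padding=1),
            nn.ReLU(True),
            nn.MaxPool2d(kernel_size=2, stride=2),
            # Block 5: 512 -> 512 channels
            nn.Conv2d(512, 512, kernel_size=3, padding=1),
            nn.ReLU(True),
            nn.Conv2d(512, 512, kernel_size=3, padding=1),
            nn.ReLU(True),
            nn.Conv2d(512, 512, kernel_size=3, padding=1),
            nn.ReLU(True),
            nn.MaxPool2d(kernel_size=2, stride=2),
        )
        # Classifier: assumes 224x224 input, so the final feature map is 512 x 7 x 7
        self.classifier = nn.Sequential(
            nn.Linear(512 * 7 * 7, 4096),
            nn.ReLU(True),
            nn.Dropout(),
            nn.Linear(4096, 4096),
            nn.ReLU(True),
            nn.Dropout(),
            nn.Linear(4096, num_classes),
        )
        self._initialize_weights()

    def forward(self, x):
        x = self.features(x)
        x = x.view(x.size(0), -1)  # flatten to (batch, 512*7*7)
        x = self.classifier(x)
        return x

    def _initialize_weights(self):
        # Follows the scheme used by torchvision's VGG (an assumption; the e-book may differ):
        # Kaiming init for conv layers, small Gaussian for linear layers, zero biases
        for m in self.modules():
            if isinstance(m, nn.Conv2d):
                nn.init.kaiming_normal_(m.weight, mode="fan_out", nonlinearity="relu")
                nn.init.constant_(m.bias, 0)
            elif isinstance(m, nn.Linear):
                nn.init.normal_(m.weight, 0, 0.01)
                nn.init.constant_(m.bias, 0)
```

Inception Module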

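ResNet

The core of ResNet is the residual block, which can be viewed as a standard neural network with an added skip (shortcut) connection: the block adds its input x to the output F(x) of its layers, giving F(x) + x.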
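A minimal sketch of a residual block in plain Python. Small dense layers stand in for the 3×3 convolutions of a real ResNet block, and batch normalization is omitted; both are simplifications, and the names are illustrative. The block returns `ReLU(F(x) + x)`, so when the residual branch F outputs zero the block passes a non-negative input through unchanged, which is why learning a mapping close to the identity is easy.

```python
def relu(v):
    return [max(0.0, a) for a in v]


def dense(v, weight):
    """Matrix-vector product (bias omitted); stands in for a conv layer."""
    return [sum(w * a for w, a in zip(row, v)) for row in weight]


def residual_block(x, w1, w2):
    """ReLU(F(x) + x) with residual branch F(x) = W2 * ReLU(W1 * x)."""
    f = dense(relu(dense(x, w1)), w2)                # residual branch F(x)
    return relu([fi + xi for fi, xi in zip(f, x)])   # skip connection adds x back


x = [1.0, 2.0, 3.0]
zeros = [[0.0] * 3 for _ in range(3)]
identity = [[1.0 if i == j else 0.0 for j in range(3)] for i in range(3)]
print(residual_block(x, zeros, zeros))        # [1.0, 2.0, 3.0]: F(x) = 0, x passes straight through
print(residual_block(x, identity, identity))  # [2.0, 4.0, 6.0]: F(x) = x, so the output is 2x
```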

I am still studying this part.

References

https://github.com/datawhalechina/unusual-deep-learning/blob/main/docs/5.CNN.md