Performance-test monitoring with Locust + Prometheus + Grafana on Docker

1. Locust + Prometheus + Grafana

In short: write a Prometheus exporter for Locust, let Prometheus scrape the data from it, and then visualize the data in Grafana.

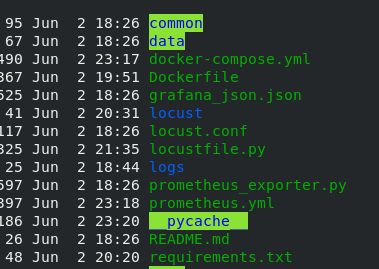
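To make the exporter idea concrete, here is a minimal, standard-library-only sketch of what any Prometheus exporter does: it serves the current values as plain text in the Prometheus exposition format on an HTTP path that Prometheus scrapes. The metric values, port 8000 and the `SAMPLE_METRICS` list are hypothetical. The actual Locust exporter used in this article builds its metrics with `prometheus_client` instead.

```python
from http.server import BaseHTTPRequestHandler, HTTPServer

# Hypothetical snapshot of Locust numbers; a real exporter reads them from the runner.
SAMPLE_METRICS = [
    ("locust_user_count", "gauge", "Swarmed users", {}, 10),
    ("locust_stats_current_rps", "gauge", "Locust stats current_rps",
     {"path": "/user/api/getApps", "method": "GET"}, 42.5),
]


def escape_label(value):
    # Escape backslash, double quote and newline, as the text format requires
    return str(value).replace("\\", "\\\\").replace('"', '\\"').replace("\n", "\\n")


def render_metrics(samples):
    """Render (name, type, help, labels, value) tuples in Prometheus text format.

    Samples that share a metric name are expected to be adjacent in the list.
    """
    lines, seen = [], set()
    for name, mtype, help_text, labels, value in samples:
        if name not in seen:
            # HELP/TYPE header is written once, before the first sample of a metric
            lines.append(f"# HELP {name} {help_text}")
            lines.append(f"# TYPE {name} {mtype}")
            seen.add(name)
        if labels:
            label_str = ",".join(f'{k}="{escape_label(v)}"' for k, v in sorted(labels.items()))
            lines.append(f"{name}{{{label_str}}} {value}")
        else:
            lines.append(f"{name} {value}")
    return "\n".join(lines) + "\n"


class MetricsHandler(BaseHTTPRequestHandler):
    def do_GET(self):
        if self.path != "/export/prometheus":
            self.send_error(404)
            return
        body = render_metrics(SAMPLE_METRICS).encode("utf-8")
        self.send_response(200)
        self.send_header("Content-Type", "text/plain; version=0.0.4; charset=utf-8")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def serve(port=8000):
    # Prometheus would then scrape http://<host>:<port>/export/prometheus
    HTTPServer(("0.0.0.0", port), MetricsHandler).serve_forever()
```

Calling `serve()` and opening http://localhost:8000/export/prometheus shows the same `# HELP` / `# TYPE` / sample layout that the Locust exporter produces.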

2. Install Python and Docker. The project is organized as follows:

common: holds frequently used helpers, such as data retrieval and MySQL operations.

data: holds the test data.

prometheus_exporter: collects Locust's performance results. For this, the boomer project contains a long-unmaintained exporter implementation, prometheus_exporter.py:

```python
from itertools import chain

from flask import request, Response
from locust import stats as locust_stats, runners as locust_runners
from locust import User, task, events, constant
from prometheus_client import Metric, REGISTRY, exposition

# This locustfile adds an external web endpoint to the locust master, and makes it serve as a prometheus exporter.
# Run it as a normal locustfile, then point prometheus to it:
# locust -f prometheus_exporter.py --master
# Lots of code taken from [mbolek's locust_exporter](https://github.com/mbolek/locust_exporter), thx mbolek!


class LocustCollector(object):
    registry = REGISTRY

    def __init__(self, environment, runner):
        self.environment = environment
        self.runner = runner

    def collect(self):
        # Collect metrics only when the locust runner is spawning or running.
        runner = self.runner

        if runner and runner.state in (locust_runners.STATE_SPAWNING, locust_runners.STATE_RUNNING):
            stats = []
            for s in chain(locust_stats.sort_stats(runner.stats.entries), [runner.stats.total]):
                stats.append({
                    "method": s.method,
                    "name": s.name,
                    "num_requests": s.num_requests,
                    "num_failures": s.num_failures,
                    "avg_response_time": s.avg_response_time,
                    "min_response_time": s.min_response_time or 0,
                    "max_response_time": s.max_response_time,
                    "current_rps": s.current_rps,
                    "median_response_time": s.median_response_time,
                    "ninetieth_response_time": s.get_response_time_percentile(0.9),
                    # only total stats can use current_response_time, so sad.
                    # "current_response_time_percentile_95": s.get_current_response_time_percentile(0.95),
                    "avg_content_length": s.avg_content_length,
                    "current_fail_per_sec": s.current_fail_per_sec
                })

            # perhaps StatsError.parse_error in e.to_dict only works in python slave, take notices!
            errors = [e.to_dict() for e in runner.stats.errors.values()]

            metric = Metric('locust_user_count', 'Swarmed users', 'gauge')
            metric.add_sample('locust_user_count', value=runner.user_count, labels={})
            yield metric

            metric = Metric('locust_errors', 'Locust requests errors', 'gauge')
            for err in errors:
                metric.add_sample('locust_errors', value=err['occurrences'],
                                  labels={'path': err['name'], 'method': err['method'],
                                          'error': err['error']})
            yield metric

            is_distributed = isinstance(runner, locust_runners.MasterRunner)
            if is_distributed:
                metric = Metric('locust_slave_count', 'Locust number of slaves', 'gauge')
                metric.add_sample('locust_slave_count', value=len(runner.clients.values()), labels={})
                yield metric

            metric = Metric('locust_fail_ratio', 'Locust failure ratio', 'gauge')
            metric.add_sample('locust_fail_ratio', value=runner.stats.total.fail_ratio, labels={})
            yield metric

            metric = Metric('locust_state', 'State of the locust swarm', 'gauge')
            metric.add_sample('locust_state', value=1, labels={'state': runner.state})
            yield metric

            stats_metrics = ['avg_content_length', 'avg_response_time', 'current_rps', 'current_fail_per_sec',
                             'max_response_time', 'ninetieth_response_time', 'median_response_time',
                             'min_response_time', 'num_failures', 'num_requests']

            for mtr in stats_metrics:
                mtype = 'gauge'
                if mtr in ['num_requests', 'num_failures']:
                    mtype = 'counter'
                metric = Metric('locust_stats_' + mtr, 'Locust stats ' + mtr, mtype)
                for stat in stats:
                    # Aggregated stat's method label is None, so name it as Aggregated
                    # locust has changed name Total to Aggregated since 0.12.1
                    if 'Aggregated' != stat['name']:
                        metric.add_sample('locust_stats_' + mtr, value=stat[mtr],
                                          labels={'path': stat['name'], 'method': stat['method']})
                    else:
                        metric.add_sample('locust_stats_' + mtr, value=stat[mtr],
                                          labels={'path': stat['name'], 'method': 'Aggregated'})
                yield metric


@events.init.add_listener
def locust_init(environment, runner, **kwargs):
    print("locust init event received")
    if environment.web_ui and runner:
        @environment.web_ui.app.route("/export/prometheus")
        def prometheus_exporter():
            registry = REGISTRY
            encoder, content_type = exposition.choose_encoder(request.headers.get('Accept'))
            if 'name[]' in request.args:
                # getlist() returns every name[] value; get() would return a single string,
                # which restricted_registry would iterate character by character
                registry = REGISTRY.restricted_registry(request.args.getlist('name[]'))
            body = encoder(registry)
            return Response(body, content_type=content_type)

        REGISTRY.register(LocustCollector(environment, runner))


class Dummy(User):
    # Explicit wait time so this placeholder user never busy-loops
    wait_time = constant(1)

    @task(2)
    def hello(self):
        pass
```

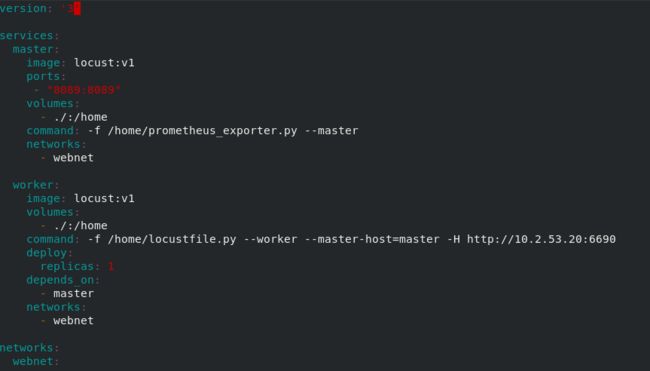

docker-compose or Docker Swarm manages the Locust master and workers; this is a single-host master/worker setup.

locustfile.py contains the API test design; below is a demo found online:

from locust import HttpLocust, TaskSet, task, between

class NoSlowQTaskSet(TaskSet):

def on_start(self):

# 登录

data = {"username": "admin", "password": "admin"}

self.client.post("/user/login", json=data)

@task(50)

def getTables(self):

r = self.client.get("/newsql/api/getTablesByAppId?appId=1")

@task(50)

def get_apps(self):

r = self.client.get("/user/api/getApps")

class MyLocust(HttpUser):

task_set = NoSlowQTaskSet

host = "http://localhost:9528"prometheus.yml文件 prometheus配置文件

global:

scrape_interval: 15s

evaluation_interval: 15s

scrape_configs:

- job_name: prometheus

static_configs:

- targets: ['localhost:9090']

labels:

instance: prometheus

- job_name: locust

metrics_path: '/export/prometheus'

static_configs:

- targets: ['192.168.20.8:8089']

labels:

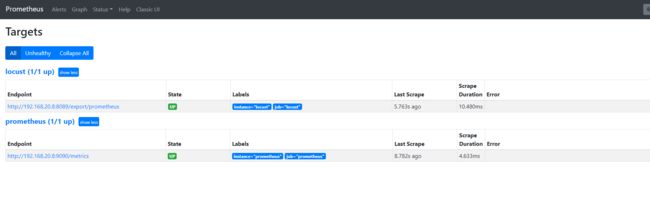

instance: locust3.用docker built构建locust镜像,我这里镜像名为locust:v1,然后执行docker init ,docker stack deploy -c ./docker-compose.yml test (或者执行docker-compose up -d)启动locust matser和work,此时就可以访问locust页面,http://ip:8089,可以进行性能测试
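Besides clicking through the browser, the same web UI can be driven from a script, which is handy for repeatable runs. A minimal sketch, assuming Locust 1.x or later (the form fields are `user_count` and `spawn_rate`; 0.x releases used `locust_count` and `hatch_rate`) and a master at the hypothetical address `LOCUST_URL`:

```python
import json
from urllib.parse import urlencode
from urllib.request import Request, urlopen

LOCUST_URL = "http://127.0.0.1:8089"  # hypothetical: replace with your master's ip:8089


def build_swarm_request(base_url, user_count, spawn_rate):
    # Form-encoded POST to /swarm, the request the web UI's start button sends
    data = urlencode({"user_count": user_count, "spawn_rate": spawn_rate}).encode()
    return Request(f"{base_url}/swarm", data=data,
                   headers={"Content-Type": "application/x-www-form-urlencoded"})


def start_test(base_url, user_count, spawn_rate):
    with urlopen(build_swarm_request(base_url, user_count, spawn_rate), timeout=10) as resp:
        return json.load(resp)


def current_stats(base_url):
    # JSON snapshot that the web UI polls; includes "state" and "user_count"
    with urlopen(f"{base_url}/stats/requests", timeout=10) as resp:
        return json.load(resp)


def stop_test(base_url):
    with urlopen(f"{base_url}/stop", timeout=10) as resp:
        return json.load(resp)
```

For example, `start_test(LOCUST_URL, 10, 2)` starts 10 users at 2 users/s, `current_stats(LOCUST_URL)["state"]` should then report `spawning` or `running`, and `stop_test(LOCUST_URL)` ends the run.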

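4. Open http://masterip:8089/export/prometheus and check that it responds. Then start a performance test and confirm that the page fills with data: the exporter only emits metrics while the runner is spawning or running, so the page stays empty while Locust is idle.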
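The same check can be scripted. This is a rough, standard-library sketch that fetches the exporter page and groups sample lines by metric name; it assumes the samples carry no timestamps (the exporter above emits none), and `EXPORT_URL` is a hypothetical address:

```python
from urllib.request import urlopen

EXPORT_URL = "http://127.0.0.1:8089/export/prometheus"  # hypothetical: use your master ip


def parse_samples(text):
    """Return {metric_name: [(labels_text, value), ...]} from Prometheus text output."""
    samples = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and HELP/TYPE comments
        # The value follows the last space, so label values may contain spaces
        series, _, value = line.rpartition(" ")
        name, _, labels = series.partition("{")
        samples.setdefault(name, []).append((labels.rstrip("}"), float(value)))
    return samples


def check_exporter(url=EXPORT_URL):
    with urlopen(url, timeout=10) as resp:
        samples = parse_samples(resp.read().decode("utf-8"))
    if "locust_user_count" not in samples:
        print("No locust metrics yet: start a test first (the exporter is empty while idle)")
    else:
        print("users:", samples["locust_user_count"][0][1])
        for labels, rps in samples.get("locust_stats_current_rps", []):
            print(labels, "rps =", rps)
    return samples
```

Running `check_exporter()` during a test should print the user count and one RPS line per request path, plus the `path="Aggregated"` total.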

5. Pull the Prometheus and Grafana images:

```shell
docker pull prom/prometheus
docker pull grafana/grafana
```

Then start both services with:

```shell
docker run -d --name prometheus -p 9090:9090 -v $PWD/prometheus.yml:/etc/prometheus/prometheus.yml prom/prometheus
docker run -d --name grafana -p 3000:3000 grafana/grafana
```

Once they are up, open http://ip:9090/ to check that Prometheus started correctly.
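To confirm that Prometheus is actually scraping the Locust master, you can also ask its HTTP API (`/api/v1/query`). The built-in `up` series is 1 when a target's last scrape succeeded. A minimal sketch; `PROMETHEUS_URL` is a hypothetical address:

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen

PROMETHEUS_URL = "http://127.0.0.1:9090"  # hypothetical: your Prometheus host


def instant_query(expr, base_url=PROMETHEUS_URL):
    # Evaluate a PromQL expression at the current time
    url = f"{base_url}/api/v1/query?" + urlencode({"query": expr})
    with urlopen(url, timeout=10) as resp:
        return json.load(resp)


def vector_values(payload):
    """Flatten an instant-vector response into [(labels_dict, float_value), ...]."""
    if payload.get("status") != "success":
        raise RuntimeError(payload.get("error", "query failed"))
    # Each result's "value" is [unix_timestamp, "value_as_string"]
    return [(r["metric"], float(r["value"][1])) for r in payload["data"]["result"]]
```

For example, `vector_values(instant_query('up{job="locust"}'))` should return one entry whose `instance` label is `locust` (set in prometheus.yml) with value `1.0` when scraping works.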

6. Open localhost:3000 in a browser to verify that Grafana is running (the default login is admin/admin).

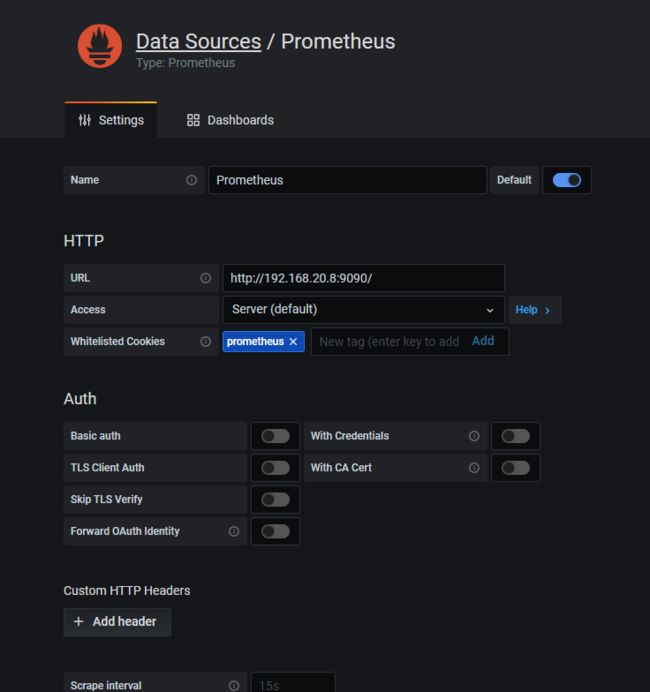

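Add Prometheus as a data source. Because Grafana runs in its own container, use the host's IP (http://ip:9090) as the URL; `localhost` there would refer to the Grafana container itself.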

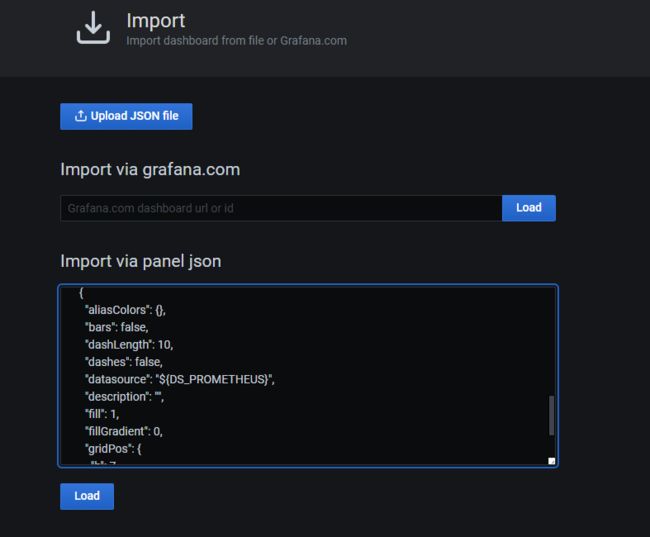
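The data source can also be created through Grafana's HTTP API (`POST /api/datasources`), which is useful when the containers are recreated often. A minimal sketch, assuming the default admin/admin credentials; both URLs are hypothetical placeholders:

```python
import base64
import json
from urllib.request import Request, urlopen

GRAFANA_URL = "http://127.0.0.1:3000"          # hypothetical
PROMETHEUS_URL = "http://prometheus-host:9090"  # hypothetical: host IP reachable from the Grafana container


def auth_header(user, password):
    token = base64.b64encode(f"{user}:{password}".encode()).decode()
    return {"Authorization": f"Basic {token}", "Content-Type": "application/json"}


def build_datasource_request(grafana_url, prometheus_url, user="admin", password="admin"):
    body = {
        "name": "Prometheus",
        "type": "prometheus",
        "url": prometheus_url,
        "access": "proxy",  # Grafana's backend, not the browser, queries Prometheus
        "isDefault": True,
    }
    return Request(f"{grafana_url}/api/datasources", data=json.dumps(body).encode(),
                   headers=auth_header(user, password), method="POST")


def add_datasource(grafana_url=GRAFANA_URL, prometheus_url=PROMETHEUS_URL):
    with urlopen(build_datasource_request(grafana_url, prometheus_url), timeout=10) as resp:
        return json.load(resp)
```

Calling `add_datasource()` once creates the data source; Grafana rejects a second call with the same name, so it is not meant to be run repeatedly as-is.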

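Next, set up a dashboard. Grafana can import a dashboard template in several ways (uploading a JSON file, pasting JSON, or entering a grafana.com dashboard ID or URL); pick one and import the template.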

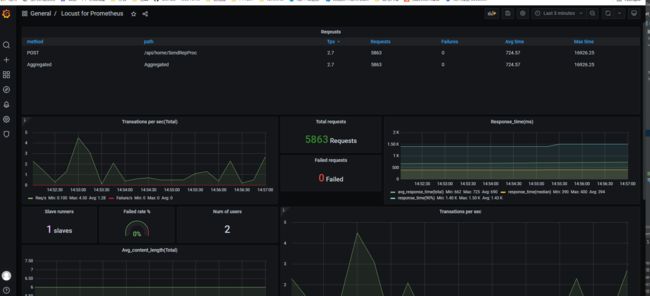
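Importing a ready-made template is the quickest route. If you would rather keep the dashboard in code, Grafana's HTTP API (`POST /api/dashboards/db`) accepts dashboard JSON. A minimal sketch, assuming Grafana 7.4 or later (for the `timeseries` panel type), default admin/admin credentials, and that Prometheus is Grafana's default data source; the panel titles and queries are illustrative, while the metric names and the `path="Aggregated"` label come from the exporter:

```python
import base64
import json
from urllib.request import Request, urlopen

GRAFANA_URL = "http://127.0.0.1:3000"  # hypothetical


def panel(title, expr, y):
    # One full-width time-series panel; no datasource set, so Grafana's default is used
    return {
        "type": "timeseries",
        "title": title,
        "gridPos": {"x": 0, "y": y, "w": 24, "h": 8},
        "targets": [{"expr": expr, "refId": "A"}],
    }


def build_dashboard(title="Locust (sketch)"):
    return {
        "dashboard": {
            "id": None,  # None/null means "create a new dashboard"
            "uid": None,
            "title": title,
            "refresh": "5s",
            "panels": [
                panel("Users", "locust_user_count", 0),
                panel("Total RPS", 'locust_stats_current_rps{path="Aggregated"}', 8),
                panel("Avg response time (ms)", 'locust_stats_avg_response_time{path!="Aggregated"}', 16),
            ],
        },
        "overwrite": True,  # replace an existing dashboard with the same title
    }


def push_dashboard(payload, grafana_url=GRAFANA_URL, user="admin", password="admin"):
    token = base64.b64encode(f"{user}:{password}".encode()).decode()
    req = Request(f"{grafana_url}/api/dashboards/db", data=json.dumps(payload).encode(),
                  headers={"Authorization": f"Basic {token}", "Content-Type": "application/json"},
                  method="POST")
    with urlopen(req, timeout=10) as resp:
        return json.load(resp)
```

`push_dashboard(build_dashboard())` returns Grafana's JSON reply, which includes the new dashboard's URL.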

The final result: