- 深入理解 MultiQueryRetriever:提升向量数据库检索效果的强大工具

nseejrukjhad

数据库python

深入理解MultiQueryRetriever:提升向量数据库检索效果的强大工具引言在人工智能和自然语言处理领域,高效准确的信息检索一直是一个关键挑战。传统的基于距离的向量数据库检索方法虽然广泛应用,但仍存在一些局限性。本文将介绍一种创新的解决方案:MultiQueryRetriever,它通过自动生成多个查询视角来增强检索效果,提高结果的相关性和多样性。MultiQueryRetriever的工

- FlagEmbedding

吉小雨

python库python

FlagEmbedding教程FlagEmbedding是一个用于生成文本嵌入(textembeddings)的库,适合处理自然语言处理(NLP)中的各种任务。嵌入(embeddings)是将文本表示为连续向量,能够捕捉语义上的相似性,常用于文本分类、聚类、信息检索等场景。官方文档链接:FlagEmbedding官方GitHub一、FlagEmbedding库概述1.1什么是FlagEmbeddi

- 基于深度学习的多模态信息检索

SEU-WYL

深度学习dnn深度学习人工智能

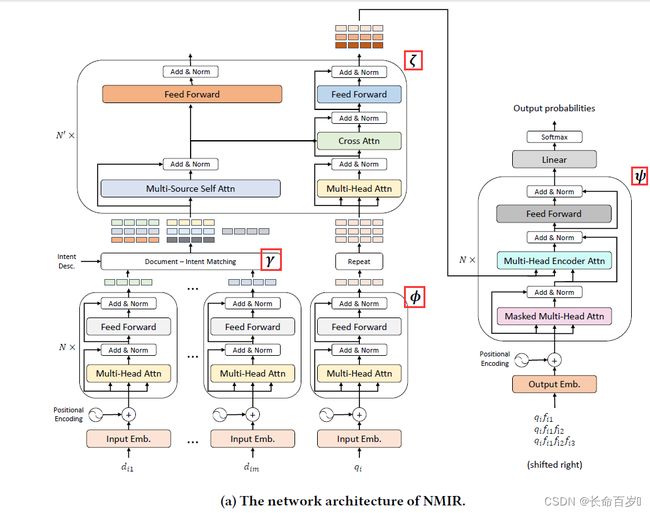

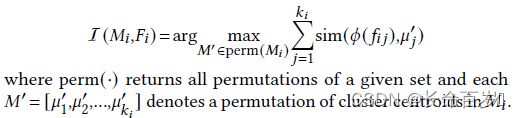

基于深度学习的多模态信息检索(MultimodalInformationRetrieval,MMIR)是指利用深度学习技术,从包含多种模态(如文本、图像、视频、音频等)的数据集中检索出满足用户查询意图的相关信息。这种方法不仅可以处理单一模态的数据,还可以在多种模态之间建立关联,从而更准确地满足用户需求。1.多模态信息检索的挑战异构数据表示:多模态数据通常具有不同的特征和表示形式(如文本的词嵌入与图

- 《互联网时代教师自主成长的模式研究》论文阅读与思考2

宁超群

2.第二部分教师自主成长的模式建构,实质上是对新网师底层逻辑的描述。你认为,新网师的培训模式与传统常见的培训模式有哪些区别?这些区别有什么意义或价值?读完第二部分后,你对新网师有哪些新的认识或理解?你认为新网师目前哪些方面做得好,哪些方面做得还不够?答:我认为新网师的培训模式与传统常见的培训模式有以下区别:(1)培训对象的参与动机不同。新网师学员的参与是自觉自愿、积极主动,而传统培训更多是被迫参与

- 计算机网络笔记分享(第六章 应用层)

寒页_

计算机网络计算机网络笔记

文章目录六、应用层6.1域名系统DNS解析的两种查询方式6.2文件传送协议FTP简单传输协议TFTP6.3远程终端协议TELNET6.4万维网WWW统一资源定位符URL超文本传输协议HTTP万维网的文档HTML万维网的信息检索系统博客和微博社交网站6.5电子邮件6.6动态主机配置协议DHCP6.7简单网络管理协议SNMP6.8应用进程跨越网络的通信几种常用的系统调用6.9P2P应用介绍学习计算机网

- 【定位系列论文阅读】-Patch-NetVLAD: Multi-Scale Fusion of Locally-Global Descriptors for Place Recognition(一)

醉酒柴柴

论文阅读学习笔记

这里写目录标题概述研究内容Abstract第一段(介绍本文算法大致结构与优点)1.Introduction介绍第一段(介绍视觉位置识别的重要性)第二段(VPR的两种常见方法,本文方法结合了两种方法)第三段(本文贡献)第四段(为证明本文方法优越性,进行的测试以及比较)2.RelatedWork相关工作第一段(介绍早期与深度学习的全局图像描述符)第二段(介绍局部关键点描述符)第三段(局部描述符可以进一

- 论文阅读笔记(十九):YOLO9000: Better, Faster, Stronger

__Sunshine__

笔记YOLO9000detectionclassification

WeintroduceYOLO9000,astate-of-the-art,real-timeobjectdetectionsystemthatcandetectover9000objectcategories.FirstweproposevariousimprovementstotheYOLOdetectionmethod,bothnovelanddrawnfrompriorwork.Theim

- 论文阅读笔记: DINOv2: Learning Robust Visual Features without Supervision

小夏refresh

论文计算机视觉深度学习论文阅读笔记深度学习计算机视觉人工智能

DINOv2:LearningRobustVisualFeatureswithoutSupervision论文地址:https://arxiv.org/abs/2304.07193代码地址:https://github.com/facebookresearch/dinov2摘要大量数据上的预训练模型在NLP方面取得突破,为计算机视觉中的类似基础模型开辟了道路。这些模型可以通过生成通用视觉特征(即无

- 德克萨斯大学奥斯汀分校自然语言处理硕士课程汉化版(第十一周) - 自然语言处理扩展研究

Encarta1993

自然语言处理自然语言处理人工智能

自然语言处理扩展研究1.多语言研究2.语言锚定3.伦理问题1.多语言研究多语言(Multilinguality)是NLP的一个重要研究方向,旨在开发能够处理多种语言的模型和算法。由于不同语言在语法、词汇和语义结构上存在差异,这成为一个复杂且具有挑战性的研究领域。多语言性的研究促进了机器翻译、跨语言信息检索和多语言对话系统等应用的发展。以下是多语言的几个主要研究方向和重要技术:多语言模型的构建,开发

- 周四 2020-01-09 08:00 - 24:30 多云 02h10m

么得感情的日更机器

南昌。二〇二〇年一月九日基本科研[1]:1.论文阅读论文--二小时十分2.论文实现实验--小时3.数学SINS推导回顾--O分4.科研参考书【】1)的《》看0/0页-5.科研文档1)组织工作[1]:例会--英语能力[2]:1.听力--十分2.单词--五分3.口语--五分4.英语文档1)编程能力[2]:1.编程语言C语言--O分2.数据结构与算法C语言数据结构--O分3.编程参考书1)陈正冲的《C语

- 【论文阅读】Mamba:选择状态空间模型的线性时间序列建模(二)

syugyou

Mamba状态空间模型论文阅读

文章目录3.4一个简化的SSM结构3.5选择机制的性质3.5.1和门控机制的联系3.5.2选择机制的解释3.6额外的模型细节A讨论:选择机制C选择SSM的机制Mamba论文第一部分Mamba:选择状态空间模型的线性时间序列建模(一)3.4一个简化的SSM结构如同结构SSM,选择SSM是单独序列变换可以灵活地整合进神经网络。H3结构式最知名SSM结构地基础,其通常包括受线性注意力启发的和MLP交替地

- 【机器学习】朴素贝叶斯方法的概率图表示以及贝叶斯统计中的共轭先验方法

Lossya

机器学习概率论人工智能朴素贝叶斯共轭先验

引言朴素贝叶斯方法是一种基于贝叶斯定理的简单概率模型,它假设特征之间相互独立。文章目录引言一、朴素贝叶斯方法的概率图表示1.1节点表示1.2边表示1.3无其他连接1.4总结二、朴素贝叶斯的应用场景2.1文本分类2.2推荐系统2.3医疗诊断2.4欺诈检测2.5情感分析2.6邮件过滤2.7信息检索2.8生物信息学三、朴素贝叶斯的优点四、朴素贝叶斯的局限性4.1特征独立性假设4.2敏感于输入数据的表示4

- 爬取微博热搜榜

带刺的厚崽

python数据挖掘开发语言

201911081102汤昕宇现代信息检索导论实验一程序运行的截图:[外链图片转存失败,源站可能有防盗链机制,建议将图片保存下来直接上传(img-GimpWjCB-1639531088565)(程序运行截图.png)]当时微博热搜的截图[外链图片转存失败,源站可能有防盗链机制,建议将图片保存下来直接上传(img-lDXRgrxa-1639531088568)(微博热搜截图.png)]对应的CSV截

- 使用DuckDuckGo搜索API进行智能信息检索:实用指南与最佳实践

qq_37836323

java前端服务器python

使用DuckDuckGo搜索API进行智能信息检索:实用指南与最佳实践1.引言在当今信息爆炸的时代,快速准确地获取所需信息变得越来越重要。DuckDuckGo作为一个注重隐私的搜索引擎,不仅为普通用户提供了优质的搜索服务,还为开发者提供了强大的搜索API。本文将深入探讨如何利用DuckDuckGo搜索API进行智能信息检索,并提供实用的代码示例和最佳实践。2.DuckDuckGo搜索API概述Du

- SAFEFL: MPC-friendly Framework for Private and Robust Federated Learning论文阅读笔记

慘綠青年627

论文阅读笔记深度学习

SAFEFL:MPC-friendlyFrameworkforPrivateandRobustFederatedLearning适用于私有和鲁棒联邦学习的MPC友好框架SAFEFL,这是一个利用安全多方计算(MPC)来评估联邦学习(FL)技术在防止隐私推断和中毒攻击方面的有效性和性能的框架。概述传统机器学习(ML):集中收集数据->隐私保护问题privacy-preservingML(PPML)采

- MixMAE(MixMIM):用于分层视觉变压器有效预训练的混合和掩码自编码器 论文阅读

皮卡丘ZPC

扩散模型阅读论文阅读

论文:MixMAE(arxiv.org)代码:Sense-X/MixMIM:MixMIM:MixedandMaskedImageModelingforEfficientVisualRepresentationLearning(github.com)摘要:本文提出MixMAE(MixedandmaskAutoEncoder),这是一种简单而有效的预训练方法,适用于各种层次视觉变压器。现有的分层视觉变

- GitHub每周最火火火项目(8.26-9.1)

FutureUniant

Github周推github音视频人工智能计算机视觉ai

项目名称:Cinnamon/kotaemon项目介绍:kotaemon是一个基于开源RAG(检索增强生成)的工具,旨在实现与文档的聊天交互。它为用户提供了一种便捷的方式来与自己的文档进行对话,通过检索文档中的信息来回答用户的问题。这使得用户能够更高效地获取文档中的知识,提高信息检索和利用的效率。项目地址:https://github.com/Cinnamon/kotaemon项目名称:frappe

- 【论文阅读】LLM4CP: Adapting Large Language Models for Channel Prediction(2024)

Bosenya12

科研学习论文阅读语言模型人工智能信道预测时间序列

摘要Channelprediction(信道预测)isaneffectiveapproach(有效方法)forreducingthefeedback(减少反馈)orestimationoverhead(估计开销)inmassivemulti-inputmulti-output(大规模多输入输出)(m-MIMO)systems.However,existingchannelpredictionmet

- 国开(电大)2024秋《文献检索与论文写作》综合练习2

电大题园(1)

学习方法经验分享笔记

国开(电大)2024秋《文献检索与论文写作》综合练习2一、单选题(14题)1.什么数据库为用户提供深入到图书章节和内容的全文检索(C)A、知网B、万方C、读秀知识库D、维普解析:“读秀”是由海量全文数据及资料基本信息组成的超大型数据库,为用户提供深入到图书章节和内容的全文检索。2.信息检索根据检索对象不同,一般分为:(D)A、二次检索、高级检索B、分类检索、主题检索C、计算机检索、手工检索D、数据

- 【论文阅读】AugSteal: Advancing Model Steal With Data Augmentation in Active Learning Frameworks(2024)

Bosenya12

科研学习模型窃取论文阅读模型窃取模型提取数据增强主动学习

摘要Withtheproliferationof(随着)machinelearningmodels(机器学习模型)indiverseapplications,theissueofmodelsecurity(模型的安全问题)hasincreasinglybecomeafocalpoint(日益成为人们关注的焦点).Modelstealattacks(模型窃取攻击)cancausesignifican

- 偏见的亮点:认知偏见如何增强推荐系统

量子位AI

人工智能机器学习

认知偏见,曾被视为人类决策过程中的缺陷,现在被认为对学习和决策有潜在的积极影响。然而,在机器学习中,尤其是在搜索和排序系统中,认知偏见的研究仍需改进。尽管有大量研究集中在探讨这些偏见如何影响模型训练和机器行为的道德性,但信息检索领域大多关注于检测偏见及其对搜索行为的影响。这在利用这些认知偏见来增强检索算法方面带来了挑战,这一领域尚未广泛探讨,对研究者而言提供了机遇和挑战。现有的一些方法,如推荐系统

- 每天一个数据分析题(五百二十一)- 词袋模型

跟着紫枫学姐学CDA

数据分析题库数据分析

词袋模型(英语:Bag-of-wordsmodel)是个在自然语言处理和信息检索(IR)下被简化的表达模型。以下关于词袋模型(BagofWord,BoW)的说法正确的是?A.将所有词语装进一个袋子里,不考虑其词法和语序的问题,即每个词语都是独立的B.词袋模型只能应用在文件分类C.CBOW是词袋模型的一种D.GloVe模型是词袋模型的一种数据分析认证考试介绍:点击进入数据分析考试大纲下载题目来源于C

- 平均精度(Average Precision,AP)以及AP50、AP75、APs、APm、APl、Box AP、Mask AP等不同阈值和细分类别的评估指标说明

fydw_715

深度学习基础分类数据挖掘人工智能

平均精度(AveragePrecision,AP)是信息检索领域和机器学习评价指标中常用的一个衡量方法,特别广泛用于目标检测任务。它在评估模型的表现时结合了准确率(Precision)和召回率(Recall),为我们提供一个综合性的评估指标。关键概念Precision(准确率):精确率表示在模型预测为正例的所有样本中,实际上为正例的比例。它的计算公式为:Precision=TruePositive

- Bert系列:论文阅读Rethink Training of BERT Rerankers in Multi-Stage Retrieval Pipeline

凝眸伏笔

nlp论文阅读bertrerankerretrieval

一句话总结:提出LocalizedContrastiveEstimation(LCE),来优化检索排序。摘要预训练的深度语言模型(LM)在文本检索中表现出色。基于丰富的上下文匹配信息,深度LM微调重新排序器从候选集合中找出更为关联的内容。同时,深度lm也可以用来提高搜索索引,构建更好的召回。当前的reranker方法并不能完全探索到检索结果的效果。因此,本文提出了LocalizedContrast

- python机器学习算法--贝叶斯算法

在下小天n

机器学习python机器学习算法

1.贝叶斯定理在20世纪60年代初就引入到文字信息检索中,仍然是文字分类的一种热门(基准)方法。文字分类是以词频为特征判断文件所属类型或其他(如垃圾邮件、合法性、新闻分类等)的问题。原理牵涉到概率论的问题,不在详细说明。sklearn.naive_bayes.GaussianNB(priors=None,var_smoothing=1e-09)#Bayes函数·priors:矩阵,shape=[n

- A Tutorial on Near-Field XL-MIMO Communications Towards 6G【论文阅读笔记】

Cc小跟班

【论文阅读】相关论文阅读笔记

此系列是本人阅读论文过程中的简单笔记,比较随意且具有严重的偏向性(偏向自己研究方向和感兴趣的),随缘分享,共同进步~论文主要内容:建立XL-MIMO模型,考虑NUSW信道和非平稳性;基于近场信道模型,分析性能(SNRscalinglaws,波束聚焦、速率、DoF)XL-MIMO设计问题:信道估计、波束码本、波束训练、DAMXL-MIMO信道特性变化:UPW➡NUSW空间平稳–>空间非平稳(可视区域

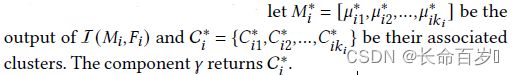

- 论文阅读:scMGCA----模型方法

dundunmm

论文阅读论文阅读人工智能聚类生物聚类单细胞聚类单细胞分析

Yu,Z.,Su,Y.,Lu,Y.etal.Topologicalidentificationandinterpretationforsingle-cellgeneregulationelucidationacrossmultipleplatformsusingscMGCA.NatCommun14,400(2023).https://doi.org/10.1038/s41467-023-36134

- 论文阅读:scHybridBERT

dundunmm

论文阅读机器学习人工智能神经网络深度学习单细胞基因测序

ZhangWei,WuChenjun,XingFeiyang,JiangMingfeng,ZhangYixuan,LiuQi,ShiZhuoxing,DaiQi,scHybridBERT:integratinggeneregulationandcellgraphforspatiotemporaldynamicsinsingle-cellclustering,BriefingsinBioinform

- 【论文阅读】Purloining Deep Learning Models Developed for an Ultrasound Scanner to a Competitor Machine

Bosenya12

科研学习模型窃取论文阅读深度学习人工智能模型安全

TheArtoftheSteal:PurloiningDeepLearningModelsDevelopedforanUltrasoundScannertoaCompetitorMachine(2024)摘要Atransferfunctionapproach(传递函数方法)hasrecentlyproveneffectiveforcalibratingdeeplearning(DL)algorit

- 《Motion Forecasting with Dual Consistency and Multi-Pseudo-Target Supervision》论文阅读之DCMS

山水之间2018

无人驾驶PaperReading大数据轨迹预测自动驾驶人工智能

目录摘要1简介2相关工作3.方法3.1结构3.2双重一致性约束3.3多伪目标监督3.4学习4实验4.1实验装置4.2实验结果4.3消融研究4.4泛化能力5限制6结论DCMS:具有双重一致性和多伪目标监督的运动预测香港科技大学暂无代码。摘要我们提出了一种具有双重一致性约束和多伪目标监督的运动预测新框架。运动预测任务通过结合过去的空间和时间信息来预测车辆的未来轨迹。DCMS的一个关键设计是提出双重一致

- java责任链模式

3213213333332132

java责任链模式村民告县长

责任链模式,通常就是一个请求从最低级开始往上层层的请求,当在某一层满足条件时,请求将被处理,当请求到最高层仍未满足时,则请求不会被处理。

就是一个请求在这个链条的责任范围内,会被相应的处理,如果超出链条的责任范围外,请求不会被相应的处理。

下面代码模拟这样的效果:

创建一个政府抽象类,方便所有的具体政府部门继承它。

package 责任链模式;

/**

*

- linux、mysql、nginx、tomcat 性能参数优化

ronin47

一、linux 系统内核参数

/etc/sysctl.conf文件常用参数 net.core.netdev_max_backlog = 32768 #允许送到队列的数据包的最大数目

net.core.rmem_max = 8388608 #SOCKET读缓存区大小

net.core.wmem_max = 8388608 #SOCKET写缓存区大

- php命令行界面

dcj3sjt126com

PHPcli

常用选项

php -v

php -i PHP安装的有关信息

php -h 访问帮助文件

php -m 列出编译到当前PHP安装的所有模块

执行一段代码

php -r 'echo "hello, world!";'

php -r 'echo "Hello, World!\n";'

php -r '$ts = filemtime("

- Filter&Session

171815164

session

Filter

HttpServletRequest requ = (HttpServletRequest) req;

HttpSession session = requ.getSession();

if (session.getAttribute("admin") == null) {

PrintWriter out = res.ge

- 连接池与Spring,Hibernate结合

g21121

Hibernate

前几篇关于Java连接池的介绍都是基于Java应用的,而我们常用的场景是与Spring和ORM框架结合,下面就利用实例学习一下这方面的配置。

1.下载相关内容: &nb

- [简单]mybatis判断数字类型

53873039oycg

mybatis

昨天同事反馈mybatis保存不了int类型的属性,一直报错,错误信息如下:

Caused by: java.lang.NumberFormatException: For input string: "null"

at sun.mis

- 项目启动时或者启动后ava.lang.OutOfMemoryError: PermGen space

程序员是怎么炼成的

eclipsejvmtomcatcatalina.sheclipse.ini

在启动比较大的项目时,因为存在大量的jsp页面,所以在编译的时候会生成很多的.class文件,.class文件是都会被加载到jvm的方法区中,如果要加载的class文件很多,就会出现方法区溢出异常 java.lang.OutOfMemoryError: PermGen space.

解决办法是点击eclipse里的tomcat,在

- 我的crm小结

aijuans

crm

各种原因吧,crm今天才完了。主要是接触了几个新技术:

Struts2、poi、ibatis这几个都是以前的项目中用过的。

Jsf、tapestry是这次新接触的,都是界面层的框架,用起来也不难。思路和struts不太一样,传说比较简单方便。不过个人感觉还是struts用着顺手啊,当然springmvc也很顺手,不知道是因为习惯还是什么。jsf和tapestry应用的时候需要知道他们的标签、主

- spring里配置使用hibernate的二级缓存几步

antonyup_2006

javaspringHibernatexmlcache

.在spring的配置文件中 applicationContent.xml,hibernate部分加入

xml 代码

<prop key="hibernate.cache.provider_class">org.hibernate.cache.EhCacheProvider</prop>

<prop key="hi

- JAVA基础面试题

百合不是茶

抽象实现接口String类接口继承抽象类继承实体类自定义异常

/* * 栈(stack):主要保存基本类型(或者叫内置类型)(char、byte、short、 *int、long、 float、double、boolean)和对象的引用,数据可以共享,速度仅次于 * 寄存器(register),快于堆。堆(heap):用于存储对象。 */ &

- 让sqlmap文件 "继承" 起来

bijian1013

javaibatissqlmap

多个项目中使用ibatis , 和数据库表对应的 sqlmap文件(增删改查等基本语句),dao, pojo 都是由工具自动生成的, 现在将这些自动生成的文件放在一个单独的工程中,其它项目工程中通过jar包来引用 ,并通过"继承"为基础的sqlmap文件,dao,pojo 添加新的方法来满足项

- 精通Oracle10编程SQL(13)开发触发器

bijian1013

oracle数据库plsql

/*

*开发触发器

*/

--得到日期是周几

select to_char(sysdate+4,'DY','nls_date_language=AMERICAN') from dual;

select to_char(sysdate,'DY','nls_date_language=AMERICAN') from dual;

--建立BEFORE语句触发器

CREATE O

- 【EhCache三】EhCache查询

bit1129

ehcache

本文介绍EhCache查询缓存中数据,EhCache提供了类似Hibernate的查询API,可以按照给定的条件进行查询。

要对EhCache进行查询,需要在ehcache.xml中设定要查询的属性

数据准备

@Before

public void setUp() {

//加载EhCache配置文件

Inpu

- CXF框架入门实例

白糖_

springWeb框架webserviceservlet

CXF是apache旗下的开源框架,由Celtix + XFire这两门经典的框架合成,是一套非常流行的web service框架。

它提供了JAX-WS的全面支持,并且可以根据实际项目的需要,采用代码优先(Code First)或者 WSDL 优先(WSDL First)来轻松地实现 Web Services 的发布和使用,同时它能与spring进行完美结合。

在apache cxf官网提供

- angular.equals

boyitech

AngularJSAngularJS APIAnguarJS 中文APIangular.equals

angular.equals

描述:

比较两个值或者两个对象是不是 相等。还支持值的类型,正则表达式和数组的比较。 两个值或对象被认为是 相等的前提条件是以下的情况至少能满足一项:

两个值或者对象能通过=== (恒等) 的比较

两个值或者对象是同样类型,并且他们的属性都能通过angular

- java-腾讯暑期实习生-输入一个数组A[1,2,...n],求输入B,使得数组B中的第i个数字B[i]=A[0]*A[1]*...*A[i-1]*A[i+1]

bylijinnan

java

这道题的具体思路请参看 何海涛的微博:http://weibo.com/zhedahht

import java.math.BigInteger;

import java.util.Arrays;

public class CreateBFromATencent {

/**

* 题目:输入一个数组A[1,2,...n],求输入B,使得数组B中的第i个数字B[i]=A

- FastDFS 的安装和配置 修订版

Chen.H

linuxfastDFS分布式文件系统

FastDFS Home:http://code.google.com/p/fastdfs/

1. 安装

http://code.google.com/p/fastdfs/wiki/Setup http://hi.baidu.com/leolance/blog/item/3c273327978ae55f93580703.html

安装libevent (对libevent的版本要求为1.4.

- [强人工智能]拓扑扫描与自适应构造器

comsci

人工智能

当我们面对一个有限拓扑网络的时候,在对已知的拓扑结构进行分析之后,发现在连通点之后,还存在若干个子网络,且这些网络的结构是未知的,数据库中并未存在这些网络的拓扑结构数据....这个时候,我们该怎么办呢?

那么,现在我们必须设计新的模块和代码包来处理上面的问题

- oracle merge into的用法

daizj

oraclesqlmerget into

Oracle中merge into的使用

http://blog.csdn.net/yuzhic/article/details/1896878

http://blog.csdn.net/macle2010/article/details/5980965

该命令使用一条语句从一个或者多个数据源中完成对表的更新和插入数据. ORACLE 9i 中,使用此命令必须同时指定UPDATE 和INSE

- 不适合使用Hadoop的场景

datamachine

hadoop

转自:http://dev.yesky.com/296/35381296.shtml。

Hadoop通常被认定是能够帮助你解决所有问题的唯一方案。 当人们提到“大数据”或是“数据分析”等相关问题的时候,会听到脱口而出的回答:Hadoop! 实际上Hadoop被设计和建造出来,是用来解决一系列特定问题的。对某些问题来说,Hadoop至多算是一个不好的选择,对另一些问题来说,选择Ha

- YII findAll的用法

dcj3sjt126com

yii

看文档比较糊涂,其实挺简单的:

$predictions=Prediction::model()->findAll("uid=:uid",array(":uid"=>10));

第一个参数是选择条件:”uid=10″。其中:uid是一个占位符,在后面的array(“:uid”=>10)对齐进行了赋值;

更完善的查询需要

- vim 常用 NERDTree 快捷键

dcj3sjt126com

vim

下面给大家整理了一些vim NERDTree的常用快捷键了,这里几乎包括了所有的快捷键了,希望文章对各位会带来帮助。

切换工作台和目录

ctrl + w + h 光标 focus 左侧树形目录ctrl + w + l 光标 focus 右侧文件显示窗口ctrl + w + w 光标自动在左右侧窗口切换ctrl + w + r 移动当前窗口的布局位置

o 在已有窗口中打开文件、目录或书签,并跳

- Java把目录下的文件打印出来

蕃薯耀

列出目录下的文件文件夹下面的文件目录下的文件

Java把目录下的文件打印出来

>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>

蕃薯耀 2015年7月11日 11:02:

- linux远程桌面----VNCServer与rdesktop

hanqunfeng

Desktop

windows远程桌面到linux,需要在linux上安装vncserver,并开启vnc服务,同时需要在windows下使用vnc-viewer访问Linux。vncserver同时支持linux远程桌面到linux。

linux远程桌面到windows,需要在linux上安装rdesktop,同时开启windows的远程桌面访问。

下面分别介绍,以windo

- guava中的join和split功能

jackyrong

java

guava库中,包含了很好的join和split的功能,例子如下:

1) 将LIST转换为使用字符串连接的字符串

List<String> names = Lists.newArrayList("John", "Jane", "Adam", "Tom");

- Web开发技术十年发展历程

lampcy

androidWeb浏览器html5

回顾web开发技术这十年发展历程:

Ajax

03年的时候我上六年级,那时候网吧刚在小县城的角落萌生。传奇,大话西游第一代网游一时风靡。我抱着试一试的心态给了网吧老板两块钱想申请个号玩玩,然后接下来的一个小时我一直在,注,册,账,号。

彼时网吧用的512k的带宽,注册的时候,填了一堆信息,提交,页面跳转,嘣,”您填写的信息有误,请重填”。然后跳转回注册页面,以此循环。我现在时常想,如果当时a

- 架构师之mima-----------------mina的非NIO控制IOBuffer(说得比较好)

nannan408

buffer

1.前言。

如题。

2.代码。

IoService

IoService是一个接口,有两种实现:IoAcceptor和IoConnector;其中IoAcceptor是针对Server端的实现,IoConnector是针对Client端的实现;IoService的职责包括:

1、监听器管理

2、IoHandler

3、IoSession

- ORA-00054:resource busy and acquire with NOWAIT specified

Everyday都不同

oraclesessionLock

[Oracle]

今天对一个数据量很大的表进行操作时,出现如题所示的异常。此时表明数据库的事务处于“忙”的状态,而且被lock了,所以必须先关闭占用的session。

step1,查看被lock的session:

select t2.username, t2.sid, t2.serial#, t2.logon_time

from v$locked_obj

- javascript学习笔记

tntxia

JavaScript

javascript里面有6种基本类型的值:number、string、boolean、object、function和undefined。number:就是数字值,包括整数、小数、NaN、正负无穷。string:字符串类型、单双引号引起来的内容。boolean:true、false object:表示所有的javascript对象,不用多说function:我们熟悉的方法,也就是

- Java enum的用法详解

xieke90

enum枚举

Java中枚举实现的分析:

示例:

public static enum SEVERITY{

INFO,WARN,ERROR

}

enum很像特殊的class,实际上enum声明定义的类型就是一个类。 而这些类都是类库中Enum类的子类 (java.l

![]()