ML - Ensemble Learning: Code Implementation

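Contents

- What is ensemble learning

- Implementing ensemble learning by hand

- Using a Hard Voting Classifier

- Using a Soft Voting Classifier

- Bagging and Pasting

- OOB (out-of-bag)

- Parallel processing (n_jobs)

- Random feature sampling: bootstrap_features

- Random Forest

- Extra-Trees

- Solving regression problems with ensemble learning

- Boosting

- AdaBoost

- Gradient Boosting

- Solving regression problems with Boosting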

What is ensemble learning

import numpy as np

import matplotlib.pyplot as plt

from sklearn import datasets

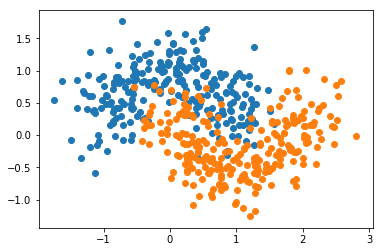

X, y = datasets.make_moons(n_samples=500, noise=0.3, random_state=42) # if n_samples is not given, 100 samples are generated by default

from sklearn.model_selection import train_test_split

X_train, X_test, y_train, y_test = train_test_split(X, y, random_state=42)

plt.scatter(X[y==0,0], X[y==0,1])

plt.scatter(X[y==1,0], X[y==1,1])

plt.show()

Implementing ensemble learning by hand

from sklearn.linear_model import LogisticRegression

log_clf = LogisticRegression()

log_clf.fit(X_train, y_train)

log_clf.score(X_test, y_test) # 0.86399999999999999

from sklearn.svm import SVC

svm_clf = SVC()

svm_clf.fit(X_train, y_train)

svm_clf.score(X_test, y_test) # 0.88800000000000001

from sklearn.tree import DecisionTreeClassifier

dt_clf = DecisionTreeClassifier(random_state=666)

dt_clf.fit(X_train, y_train)

dt_clf.score(X_test, y_test) # 0.86399999999999999

y_predict1 = log_clf.predict(X_test)

y_predict2 = svm_clf.predict(X_test)

y_predict3 = dt_clf.predict(X_test)

# Majority rule: if at least two of the three models predict 1, the result is 1; otherwise it is 0.

y_predict = np.array((y_predict1 + y_predict2 + y_predict3) >= 2, dtype='int')

y_predict[:10]

# array([1, 0, 0, 1, 1, 1, 0, 0, 0, 0])

from sklearn.metrics import accuracy_score

accuracy_score(y_test, y_predict) # 0.89600000000000002
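
The `>= 2` trick above relies on the labels being 0/1 and on there being exactly three models. A minimal sketch of the same majority rule for any number of models and any non-negative integer labels could look like the following. The helper name `majority_vote` and the prediction arrays are made up for illustration; they are not part of scikit-learn.

```python
import numpy as np

def majority_vote(*predictions):
    """Return the most common label per sample (hypothetical helper)."""
    # Stack into shape (n_models, n_samples); labels must be non-negative ints.
    votes = np.vstack(predictions)
    n_labels = votes.max() + 1
    # Count the votes per label, one sample (one column of `votes`) at a time.
    counts = np.apply_along_axis(np.bincount, 0, votes, minlength=n_labels)
    # counts has shape (n_labels, n_samples); argmax breaks ties
    # in favour of the smallest label.
    return counts.argmax(axis=0)

# Made-up predictions from three models on five samples.
p1 = np.array([1, 0, 0, 1, 1])
p2 = np.array([1, 1, 0, 0, 1])
p3 = np.array([0, 0, 0, 1, 1])

voted = majority_vote(p1, p2, p3)
print(voted)  # [1 0 0 1 1]
```

For binary labels and three models this gives the same answer as the sum-and-threshold expression used above.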

Using a Hard Voting Classifier

from sklearn.ensemble import VotingClassifier # "ensemble" is the module for ensemble learning

voting_clf = VotingClassifier(estimators=[

('log_clf', LogisticRegression()),

('svm_clf', SVC()),

('dt_clf', DecisionTreeClassifier(random_state=666))],

voting='hard')

voting_clf.fit(X_train, y_train)

voting_clf.score(X_test, y_test) # 0.89600000000000002

VotingClassifier : https://scikit-learn.org/stable/modules/generated/sklearn.ensemble.VotingClassifier.html

Using a Soft Voting Classifier

voting_clf2 = VotingClassifier(estimators=[

('log_clf', LogisticRegression()),

('svm_clf', SVC(probability=True)), # SVC does not compute probabilities by default; probability must be set to True

('dt_clf', DecisionTreeClassifier(random_state=666))],

voting='soft')

voting_clf2.fit(X_train, y_train)

voting_clf2.score(X_test, y_test) # 0.91200000000000003
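
To make the difference between the two voting modes concrete, here is a small sketch with made-up probabilities for a single sample. It does not call scikit-learn; it only reproduces the arithmetic, assuming equal weights for the three models.

```python
import numpy as np

# Made-up P(class = 1) from three models for one sample.
proba_class1 = np.array([0.45, 0.45, 0.95])

# Hard voting: each model casts one vote for its most likely class.
hard_votes = (proba_class1 >= 0.5).astype(int)           # [0, 0, 1]
hard_result = int(hard_votes.sum() * 2 > len(hard_votes))

# Soft voting: average the probabilities first, then threshold.
soft_score = proba_class1.mean()                          # about 0.617
soft_result = int(soft_score >= 0.5)

print(hard_result, soft_result)  # 0 1
```

Two lukewarm votes for class 0 win under hard voting, while one very confident vote for class 1 wins under soft voting. This is why soft voting needs every sub-model to provide probabilities.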

Bagging and Pasting

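from sklearn.tree import DecisionTreeClassifier # decision trees are used here because they tend to produce sub-models that differ a lot from one another; when an ensemble needs hundreds or thousands of sub-models, decision trees are the usual first choice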

from sklearn.ensemble import BaggingClassifier

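# n_estimators: how many sub-models to ensemble; max_samples: how many samples each sub-model sees; bootstrap: whether to sample with replacement (True means with replacement). In sklearn, Bagging and Pasting both use BaggingClassifier and differ only in the bootstrap argument.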

bagging_clf = BaggingClassifier(DecisionTreeClassifier(),

n_estimators=500, max_samples=100,

bootstrap=True)

bagging_clf.fit(X_train, y_train)

bagging_clf.score(X_test, y_test) # 0.91200000000000003

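# Ensembling more sub-models runs somewhat slower; in theory, the more sub-models there are, the higher the accuracy, although the gain usually levels off.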

bagging_clf = BaggingClassifier(DecisionTreeClassifier(),

n_estimators=5000, max_samples=100,

bootstrap=True)

bagging_clf.fit(X_train, y_train)

bagging_clf.score(X_test, y_test) # 0.92000000000000004
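
The only difference between Bagging and Pasting is how each sub-model's rows are drawn. The following sketch reproduces that sampling step with NumPy alone. The figure of 375 training rows assumes the default 75/25 split of the 500 samples used above.

```python
import numpy as np

rng = np.random.default_rng(42)
n_train, max_samples = 375, 100   # assumed: default 75% split of 500 samples

# Bagging (bootstrap=True): sample with replacement, duplicates are possible.
bagging_idx = rng.choice(n_train, size=max_samples, replace=True)

# Pasting (bootstrap=False): sample without replacement, no duplicates.
pasting_idx = rng.choice(n_train, size=max_samples, replace=False)

print("unique rows with bagging:", len(np.unique(bagging_idx)))
print("unique rows with pasting:", len(np.unique(pasting_idx)))
```

With `BaggingClassifier`, switching from one to the other only means passing `bootstrap=False` instead of `bootstrap=True`.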

OOB (out-of-bag)

from sklearn.tree import DecisionTreeClassifier

from sklearn.ensemble import BaggingClassifier

bagging_clf = BaggingClassifier(DecisionTreeClassifier(),

n_estimators=500, max_samples=100,

bootstrap=True, oob_score=True) # pass oob_score=True at construction time so that the class records the OOB score

bagging_clf.fit(X, y)

BaggingClassifier(base_estimator=DecisionTreeClassifier(class_weight=None, criterion='gini', max_depth=None,

max_features=None, max_leaf_nodes=None,

min_impurity_decrease=0.0, min_impurity_split=None,

min_samples_leaf=1, min_samples_split=2,

min_weight_fraction_leaf=0.0, presort=False, random_state=None,

splitter='best'),

bootstrap=True, bootstrap_features=False, max_features=1.0,

max_samples=100, n_estimators=500, n_jobs=1, oob_score=True,

random_state=None, verbose=0, warm_start=False)

bagging_clf.oob_score_ # 0.91800000000000004
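
OOB scoring works because sampling with replacement leaves part of the data unseen by each sub-model. A back-of-the-envelope sketch of how large that unseen part is for the settings used above (500 samples, 100 draws per sub-model):

```python
import math

n_samples = 500    # size of the dataset passed to fit
max_samples = 100  # draws per sub-model, with replacement

# Probability that a given sample is never picked in `max_samples` draws.
p_oob = (1 - 1 / n_samples) ** max_samples
print(f"expected OOB fraction per sub-model: {p_oob:.3f}")

# Classic case: drawing n samples out of n leaves roughly 1/e (~37%) unseen.
p_oob_full = (1 - 1 / n_samples) ** n_samples
print(f"with max_samples = n_samples: {p_oob_full:.3f}")
```

Since every sub-model skips a large share of the data, those skipped samples can serve as a validation set, which is why the example above calls `fit(X, y)` on the full dataset without a separate test split.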

Parallel processing (n_jobs)

%%time

bagging_clf = BaggingClassifier(DecisionTreeClassifier(),

n_estimators=500, max_samples=100,

bootstrap=True, oob_score=True)

bagging_clf.fit(X, y)

'''

CPU times: user 1.81 s, sys: 27.2 ms, total: 1.84 s

Wall time: 2.95 s

'''

%%time

bagging_clf = BaggingClassifier(DecisionTreeClassifier(),

n_estimators=500, max_samples=100,

bootstrap=True, oob_score=True,

n_jobs=-1) # -1 means use all available cores

bagging_clf.fit(X, y)

'''

CPU times: user 385 ms, sys: 56.1 ms, total: 441 ms

Wall time: 1.83 s

'''

Random feature sampling: bootstrap_features

# max_features: how many features each sub-model draws

random_subspaces_clf = BaggingClassifier(DecisionTreeClassifier(),

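n_estimators=500, max_samples=500, # there are only 500 samples and 500 are drawn, so the samples are barely randomized (the draw is still with replacement because bootstrap=True)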

bootstrap=True, oob_score=True,

max_features=1, bootstrap_features=True)

random_subspaces_clf.fit(X, y)

random_subspaces_clf.oob_score_ # 0.83399999999999996

random_patches_clf = BaggingClassifier(DecisionTreeClassifier(),

n_estimators=500, max_samples=100, # samples the rows at random with replacement and also samples the features...

bootstrap=True, oob_score=True,

max_features=1, bootstrap_features=True)

random_patches_clf.fit(X, y)

random_patches_clf.oob_score_ # 0.85799999999999998
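
To see what feature sampling actually does, the fitted ensemble can be inspected through its `estimators_features_` attribute, which holds the column indices used by each sub-model. The sketch below uses a smaller ensemble (10 sub-models, an arbitrary choice) so the output stays readable.

```python
from sklearn import datasets
from sklearn.ensemble import BaggingClassifier
from sklearn.tree import DecisionTreeClassifier

X, y = datasets.make_moons(n_samples=500, noise=0.3, random_state=42)

clf = BaggingClassifier(DecisionTreeClassifier(),
                        n_estimators=10, max_samples=100, bootstrap=True,
                        max_features=1, bootstrap_features=True,
                        random_state=42)
clf.fit(X, y)

# One array of column indices per sub-model.
for i, cols in enumerate(clf.estimators_features_[:5]):
    print(f"sub-model {i} was trained on feature column(s) {cols}")
```

The moons data has only two features, so with `max_features=1` each sub-model sees a single column. That is a likely reason the OOB scores in this section are lower than in the earlier ones; feature sampling tends to pay off on data with many more features.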

Random Forest

from sklearn.ensemble import RandomForestClassifier

rf_clf = RandomForestClassifier(n_estimators=500, oob_score=True, random_state=666, n_jobs=-1)

rf_clf.fit(X, y)

RandomForestClassifier(bootstrap=True, class_weight=None, criterion='gini',

max_depth=None, max_features='auto', max_leaf_nodes=None,

min_impurity_decrease=0.0, min_impurity_split=None,

min_samples_leaf=1, min_samples_split=2,

min_weight_fraction_leaf=0.0, n_estimators=500, n_jobs=-1,

oob_score=True, random_state=666, verbose=0, warm_start=False)

rf_clf.oob_score_ # 0.89200000000000002

rf_clf2 = RandomForestClassifier(n_estimators=500, max_leaf_nodes=16, oob_score=True, random_state=666, n_jobs=-1)

rf_clf2.fit(X, y)

rf_clf2.oob_score_ # 0.90600000000000003

RandomForestClassifier exposes the parameters of a decision tree together with most of the parameters of BaggingClassifier.
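
As a rough illustration of that overlap, the sketch below sets a tree-level parameter (`max_leaf_nodes`) and bagging-level parameters (`n_estimators`, `oob_score`) on a single `RandomForestClassifier`, then spells out an approximately equivalent `BaggingClassifier` by hand. The two are not guaranteed to be identical internally; the comparison is only meant to show where each parameter belongs.

```python
from sklearn import datasets
from sklearn.ensemble import BaggingClassifier, RandomForestClassifier
from sklearn.tree import DecisionTreeClassifier

X, y = datasets.make_moons(n_samples=500, noise=0.3, random_state=42)

# Tree-level and bagging-level parameters on one object.
rf = RandomForestClassifier(n_estimators=100, max_leaf_nodes=16,
                            oob_score=True, random_state=666)
rf.fit(X, y)

# Roughly the same idea written out: bagged trees that consider a random
# subset of features at every split.
bag = BaggingClassifier(
    DecisionTreeClassifier(max_features="sqrt", max_leaf_nodes=16),
    n_estimators=100, bootstrap=True, oob_score=True, random_state=666)
bag.fit(X, y)

print(rf.oob_score_, bag.oob_score_)
```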

Extra-Trees

from sklearn.ensemble import ExtraTreesClassifier

et_clf = ExtraTreesClassifier(n_estimators=500, bootstrap=True, oob_score=True, random_state=666, n_jobs=-1)

et_clf.fit(X, y)

ExtraTreesClassifier(bootstrap=True, class_weight=None, criterion='gini',

max_depth=None, max_features='auto', max_leaf_nodes=None,

min_impurity_decrease=0.0, min_impurity_split=None,

min_samples_leaf=1, min_samples_split=2,

min_weight_fraction_leaf=0.0, n_estimators=500, n_jobs=-1,

oob_score=True, random_state=666, verbose=0, warm_start=False)

et_clf.oob_score_ # 0.89200000000000002

Solving regression problems with ensemble learning

from sklearn.ensemble import BaggingRegressor

from sklearn.ensemble import RandomForestRegressor

from sklearn.ensemble import ExtraTreesRegressor
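
The regressors are used the same way as their classifier counterparts. A minimal usage sketch follows, on a synthetic noisy sine curve that is an assumption made purely for illustration (the moons data above is a classification dataset and does not fit here).

```python
import numpy as np
from sklearn.ensemble import (BaggingRegressor, ExtraTreesRegressor,
                              RandomForestRegressor)
from sklearn.model_selection import train_test_split

# Synthetic regression data (made up for this example).
rng = np.random.default_rng(42)
X_reg = rng.uniform(0, 6, size=(500, 1))
y_reg = np.sin(X_reg[:, 0]) + rng.normal(0, 0.1, size=500)
Xr_train, Xr_test, yr_train, yr_test = train_test_split(
    X_reg, y_reg, random_state=42)

regressors = {
    "bagging": BaggingRegressor(n_estimators=100, random_state=666),
    "random forest": RandomForestRegressor(n_estimators=100, random_state=666),
    "extra trees": ExtraTreesRegressor(n_estimators=100, random_state=666),
}
scores = {}
for name, reg in regressors.items():
    reg.fit(Xr_train, yr_train)
    scores[name] = reg.score(Xr_test, yr_test)  # R^2 for regressors
    print(name, round(scores[name], 3))
```

Note that `score` returns the R² coefficient for regressors rather than accuracy.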

Boosting

AdaBoost

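Boosting trains every sub-model on the whole training set, so there are no OOB samples any more; we go back to evaluating with train_test_split.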

from sklearn.tree import DecisionTreeClassifier

from sklearn.ensemble import AdaBoostClassifier

ada_clf = AdaBoostClassifier(DecisionTreeClassifier(max_depth=2), n_estimators=500)

ada_clf.fit(X_train, y_train)

AdaBoostClassifier(algorithm='SAMME.R',

base_estimator=DecisionTreeClassifier(class_weight=None, criterion='gini', max_depth=2,

max_features=None, max_leaf_nodes=None,

min_impurity_decrease=0.0, min_impurity_split=None,

min_samples_leaf=1, min_samples_split=2,

min_weight_fraction_leaf=0.0, presort=False, random_state=None,

splitter='best'),

learning_rate=1.0, n_estimators=500, random_state=None)

ada_clf.score(X_test, y_test) # 0.85599999999999998
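
What makes AdaBoost different from Bagging is that each new sub-model pays more attention to the samples the previous one got wrong. The sketch below walks through one round of the textbook (discrete) AdaBoost weight update on made-up labels. It is not necessarily the exact variant scikit-learn runs internally.

```python
import numpy as np

y_true = np.array([1, 1, -1, -1, 1])
y_pred = np.array([1, 1, -1, -1, -1])   # the last sample is misclassified
weights = np.full(len(y_true), 1 / len(y_true))

miss = y_pred != y_true
err = weights[miss].sum()                 # weighted error rate: 0.2
alpha = 0.5 * np.log((1 - err) / err)     # this sub-model's say in the vote

# Raise the weight of the mistakes, lower the rest, then renormalise.
weights = weights * np.exp(np.where(miss, alpha, -alpha))
weights /= weights.sum()
print(weights)  # [0.125 0.125 0.125 0.125 0.5  ]
```

After one round the single misclassified sample carries half of the total weight, so the next sub-model is pushed to get it right.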

Gradient Boosting

from sklearn.ensemble import GradientBoostingClassifier

gb_clf = GradientBoostingClassifier(max_depth=2, n_estimators=30)

gb_clf.fit(X_train, y_train)

GradientBoostingClassifier(criterion='friedman_mse', init=None,

learning_rate=0.1, loss='deviance', max_depth=2,

max_features=None, max_leaf_nodes=None,

min_impurity_decrease=0.0, min_impurity_split=None,

min_samples_leaf=1, min_samples_split=2,

min_weight_fraction_leaf=0.0, n_estimators=30,

presort='auto', random_state=None, subsample=1.0, verbose=0,

warm_start=False)

gb_clf.score(X_test, y_test) # 0.90400000000000003
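
The idea behind Gradient Boosting is easiest to see on a regression problem with squared error: each new tree is fitted to the residuals left by the trees before it. The following is a hand-rolled sketch of that loop on made-up data, with a learning rate of 1 for simplicity. `GradientBoostingClassifier` applies the same principle to a classification loss and shrinks each step by `learning_rate`.

```python
import numpy as np
from sklearn.tree import DecisionTreeRegressor

# Synthetic data (an assumption for illustration): noisy parabola.
rng = np.random.default_rng(42)
X_gb = rng.uniform(-3, 3, size=(200, 1))
y_gb = X_gb[:, 0] ** 2 + rng.normal(0, 0.5, size=200)

prediction = np.zeros_like(y_gb)
mse_per_stage = []
for stage in range(3):
    residual = y_gb - prediction            # what is still unexplained
    tree = DecisionTreeRegressor(max_depth=2, random_state=666)
    tree.fit(X_gb, residual)                # fit the next tree to the residuals
    prediction += tree.predict(X_gb)        # add its correction
    mse_per_stage.append(np.mean((y_gb - prediction) ** 2))

print(mse_per_stage)  # training error after each stage
```

The training error should go down from stage to stage, because every tree only has to correct what the previous ones missed.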

Solving regression problems with Boosting

from sklearn.ensemble import AdaBoostRegressor

from sklearn.ensemble import GradientBoostingRegressor
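
Both regressors follow the same fit/score pattern as the classifiers above. A minimal usage sketch, again on a synthetic noisy sine curve that is assumed only for illustration; the hyperparameters mirror the classifier examples and are not tuned.

```python
import numpy as np
from sklearn.ensemble import AdaBoostRegressor, GradientBoostingRegressor
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeRegressor

# Synthetic regression data (made up for this example).
rng = np.random.default_rng(42)
X_reg = rng.uniform(0, 6, size=(500, 1))
y_reg = np.sin(X_reg[:, 0]) + rng.normal(0, 0.1, size=500)
Xr_train, Xr_test, yr_train, yr_test = train_test_split(
    X_reg, y_reg, random_state=42)

ada_reg = AdaBoostRegressor(DecisionTreeRegressor(max_depth=2),
                            n_estimators=100, random_state=666)
ada_reg.fit(Xr_train, yr_train)
ada_r2 = ada_reg.score(Xr_test, yr_test)   # R^2 on the held-out split

gb_reg = GradientBoostingRegressor(max_depth=2, n_estimators=30,
                                   random_state=666)
gb_reg.fit(Xr_train, yr_train)
gb_r2 = gb_reg.score(Xr_test, yr_test)

print(round(ada_r2, 3), round(gb_r2, 3))
```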