Gradient Descent Algorithm

Contents

- 2. Gradient Descent Algorithm
  - 2.1 The Optimization Problem
  - 2.2 Formula Derivation
  - 2.3 Gradient Descent
  - 2.4 Stochastic Gradient Descent

2. Gradient Descent Algorithm

Video tutorial on Bilibili: PyTorch Deep Learning Practice (PyTorch深度学习实践) - Gradient Descent Algorithm

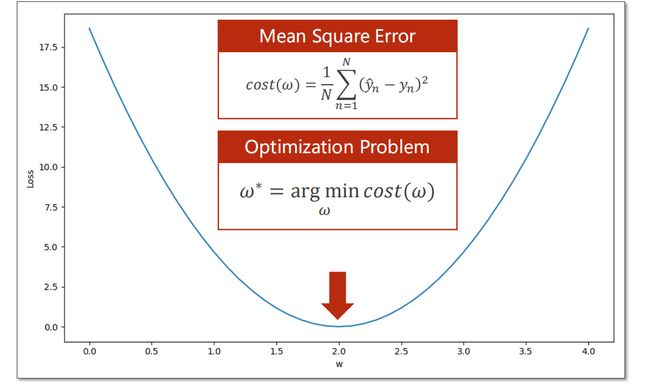

2.1 The Optimization Problem

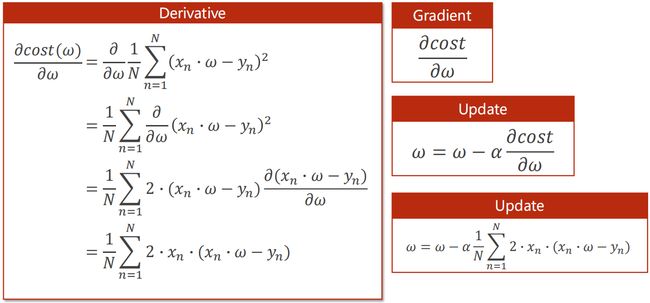
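The figure above is not reproduced as text. As a rough sketch, assuming the problem is to find the weight `w` that minimizes the mean squared error of the linear model `y_pred = x * w` on the data used in section 2.3, a brute-force scan over candidate weights makes the target of the optimization concrete. The helper `mse` and the candidate grid are illustrative choices, not part of the original tutorial.

```python
# Training data from section 2.3; consistent with y = 2 * x.
x_data = [1.0, 2.0, 3.0]
y_data = [2.0, 4.0, 6.0]


def mse(w, xs, ys):
    """Mean squared error of the model y_pred = x * w (illustrative helper)."""
    return sum((x * w - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


# Candidate weights 0.0, 0.1, ..., 4.0 (an arbitrary grid chosen for illustration).
candidates = [i / 10 for i in range(41)]
best_w = min(candidates, key=lambda w: mse(w, x_data, y_data))

print("best w on the grid:", best_w, "cost:", mse(best_w, x_data, y_data))
```

A scan like this only works for a single parameter on a small grid. Gradient descent replaces the exhaustive search with repeated steps in the direction that lowers the cost.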

2.2 Formula Derivation

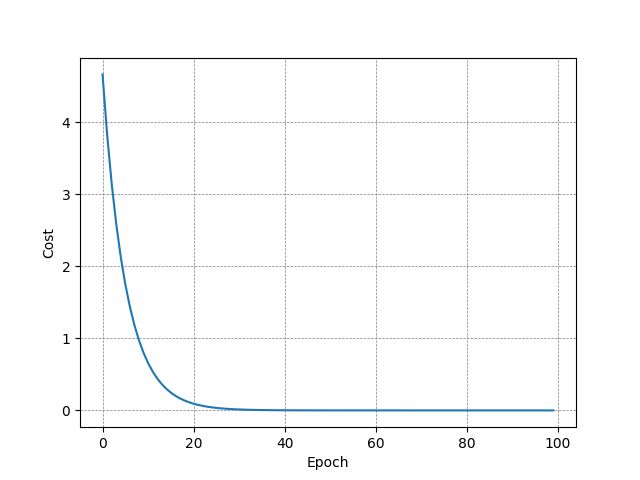
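The derivation in the figure is not available as text. The following is a reconstruction, under the assumption that it matches the `cost` and `gradient` functions in section 2.3. Here $N$ is the number of samples and $\alpha$ is the learning rate (0.01 in the code):

$$
\begin{aligned}
\mathrm{cost}(w) &= \frac{1}{N}\sum_{n=1}^{N}\left(x_n w - y_n\right)^2 \\
\frac{\partial\,\mathrm{cost}}{\partial w} &= \frac{1}{N}\sum_{n=1}^{N} 2\,x_n\left(x_n w - y_n\right) \\
w &\leftarrow w - \alpha\,\frac{\partial\,\mathrm{cost}}{\partial w}
\end{aligned}
$$

With the data $x = (1, 2, 3)$, $y = (2, 4, 6)$ and $w = 1$, the gradient is $\frac{1}{3}(-2 - 8 - 18) = -\frac{28}{3} \approx -9.33$, so the first update gives $w \approx 1 + 0.01 \times 9.33 \approx 1.09$.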

2.3 Gradient Descent

```python
import matplotlib.pyplot as plt

# Training data, consistent with y = 2 * x
x_data = [1.0, 2.0, 3.0]
y_data = [2.0, 4.0, 6.0]

w = 1.0  # initial guess for the weight


def forward(x):
    """Linear model: y_pred = x * w."""
    return x * w


def cost(xs, ys):
    """Mean squared error over the whole data set."""
    total = 0
    for x, y in zip(xs, ys):
        y_pred = forward(x)
        total += (y_pred - y) ** 2
    return total / len(xs)


def gradient(xs, ys):
    """Derivative of the cost with respect to w, averaged over all samples."""
    grad = 0
    for x, y in zip(xs, ys):
        grad += 2 * x * (x * w - y)
    return grad / len(xs)


epoch_list = []
cost_list = []

print('Predict (before training)', 4, forward(4))

for epoch in range(100):
    cost_val = cost(x_data, y_data)  # cost before this epoch's update
    grad_val = gradient(x_data, y_data)
    w -= 0.01 * grad_val  # one update per epoch, learning rate 0.01
    print('Epoch:', epoch, 'W=', round(w, 2), 'Loss=', round(cost_val, 2))
    epoch_list.append(epoch)
    cost_list.append(cost_val)

print('Predict (after training)', 4, forward(4))

plt.plot(epoch_list, cost_list)
plt.grid(True, linestyle="--", color="gray", linewidth=0.5, axis="both")
plt.xlabel('Epoch')
plt.ylabel('Cost')
plt.show()
```

Output:

```
Predict (before training) 4 4.0
Epoch: 0 W= 1.09 Loss= 4.67
...
Epoch: 99 W= 2.0 Loss= 0.0
Predict (after training) 4 7.999777758621207
```
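One way to gain confidence in a hand-derived gradient such as the one used above is to compare it against a finite-difference estimate. The sketch below restates `cost` and `gradient` with `w` as an explicit argument (an adaptation, since the original functions read the global `w`) and adds a hypothetical helper `numerical_gradient`. The step size `eps` and the test points are arbitrary choices.

```python
x_data = [1.0, 2.0, 3.0]
y_data = [2.0, 4.0, 6.0]


def cost(w, xs, ys):
    """Mean squared error of y_pred = x * w."""
    return sum((x * w - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def gradient(w, xs, ys):
    """Analytic derivative of the cost with respect to w."""
    return sum(2 * x * (x * w - y) for x, y in zip(xs, ys)) / len(xs)


def numerical_gradient(w, xs, ys, eps=1e-6):
    """Central-difference approximation of d(cost)/dw (hypothetical helper)."""
    return (cost(w + eps, xs, ys) - cost(w - eps, xs, ys)) / (2 * eps)


# Compare the two estimates at a few arbitrary weights.
for w in (0.0, 1.0, 2.0, 3.5):
    analytic = gradient(w, x_data, y_data)
    numeric = numerical_gradient(w, x_data, y_data)
    print(f"w={w}: analytic={analytic:.6f}, numeric={numeric:.6f}")
```

If the two columns disagree by more than a small rounding error, the derivative formula or its implementation is likely wrong.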

2.4 Stochastic Gradient Descent

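```python
import matplotlib.pyplot as plt

# Training data, consistent with y = 2 * x
x_data = [1.0, 2.0, 3.0]
y_data = [2.0, 4.0, 6.0]

w = 1.0  # initial guess for the weight


def forward(x):
    """Linear model: y_pred = x * w."""
    return x * w


def loss(x, y):
    """Squared error for a single sample."""
    y_pred = forward(x)
    return (y_pred - y) ** 2


def gradient(x, y):
    """Derivative of the single-sample loss with respect to w."""
    return 2 * x * (x * w - y)


epoch_list = []
loss_list = []

print('Predict (before training)', 4, forward(4))

for epoch in range(100):
    for x, y in zip(x_data, y_data):
        grad = gradient(x, y)
        w -= 0.01 * grad  # update after every sample, learning rate 0.01
        print("grad:", x, y, grad)
        loss_val = loss(x, y)  # loss on this sample after the update
    # Record the loss of the last sample in the epoch.
    print("progress:", epoch, "w=", round(w, 2), "loss=", round(loss_val, 2))
    epoch_list.append(epoch)
    loss_list.append(loss_val)

print('Predict (after training)', 4, forward(4))

plt.plot(epoch_list, loss_list)
plt.grid(True, linestyle="--", color="gray", linewidth=0.5, axis="both")
plt.xlabel('Epoch')
plt.ylabel('Loss')
plt.show()
```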

Output:

```
Predict (before training) 4 4.0
grad: 1.0 2.0 -2.0
grad: 2.0 4.0 -7.84
grad: 3.0 6.0 -16.2288
progress: 0 w= 1.26 loss= 4.92
...
grad: 1.0 2.0 -2.0650148258027912e-13
grad: 2.0 4.0 -8.100187187665142e-13
grad: 3.0 6.0 -1.6786572132332367e-12
progress: 99 w= 2.0 loss= 0.0
Predict (after training) 4 7.9999999999996945
```
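To see why the stochastic version moves faster per epoch here, it can help to trace a single pass by hand. The sketch below wraps one epoch of per-sample updates in a hypothetical function `sgd_epoch` that takes `w` as an argument instead of using a global. The learning rate of 0.01 matches the code above. The three gradients it collects should correspond to the first three `grad:` lines in the log above.

```python
x_data = [1.0, 2.0, 3.0]
y_data = [2.0, 4.0, 6.0]


def sgd_epoch(w, xs, ys, lr=0.01):
    """Run one pass of per-sample updates (hypothetical helper).

    Returns the updated weight and the list of gradients seen along the way.
    """
    grads = []
    for x, y in zip(xs, ys):
        grad = 2 * x * (x * w - y)  # gradient of (x * w - y) ** 2 w.r.t. w
        w -= lr * grad              # w changes before the next sample is seen
        grads.append(grad)
    return w, grads


w, grads = sgd_epoch(1.0, x_data, y_data)
print("gradients:", [round(g, 4) for g in grads])
print("w after one epoch:", round(w, 2))
```

Each sample's gradient is computed with the weight already updated by the previous sample, so one epoch contains three steps rather than one. This is consistent with the logs above, where `w` is about 1.26 after the first stochastic epoch compared with about 1.09 after the first batch epoch.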