Testing nnU-Net

https://github.com/MIC-DKFZ/nnUNet

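Getting nnU-Net to run on Windows takes some effort, mainly because of how the project handles paths. So far I have tested it on a two-class remote sensing dataset (any binary segmentation data will do; the kind of data does not matter). Part of the difficulty is self-inflicted: I did not install the package or use its command-line tools, and instead edited the parameters directly in the scripts and ran them. The key parts are explained below.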

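1. Data preparation

The data is the building segmentation dataset from an Alibaba Tianchi competition. The competition is open long-term, so if you are interested you can submit your own results to the system for scoring. The data link is: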

Link: https://pan.baidu.com/s/1A2owAk1gUlycM7_kDPjIng

Extraction code: x5wt


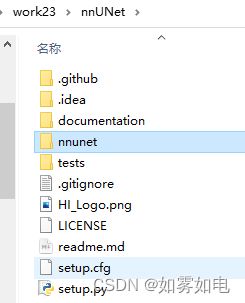

Download the project from the source link above, then create a folder named datasets to hold the data.

First-level directory:

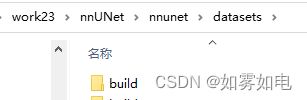

Second-level directory:

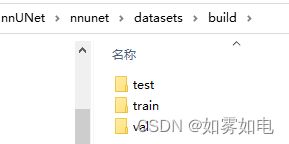

Third-level directory:

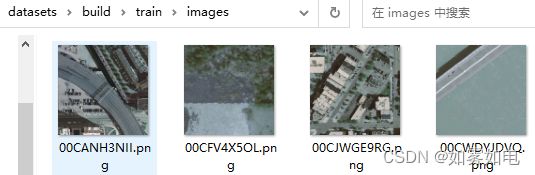

train, val and test share the same structure: images holds the images and labels holds the label masks, whose pixel values are 0 and 1.
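Before converting anything, it can help to confirm that every label has an image with the same file name. The following is a minimal sketch that assumes the images/labels layout described above and .png files; the helper name find_unpaired is made up for this example.

```python
import os

def find_unpaired(split_dir, suffix=".png"):
    """Return (labels without an image, images without a label) for one split folder."""
    images = {f for f in os.listdir(os.path.join(split_dir, "images")) if f.endswith(suffix)}
    labels = {f for f in os.listdir(os.path.join(split_dir, "labels")) if f.endswith(suffix)}
    # Set differences give the file names that exist on one side only.
    return sorted(labels - images), sorted(images - labels)
```

Calling find_unpaired on the train, val and test folders in turn should return two empty lists for each if the dataset is consistent.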

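train contains 30,000 samples. Because nnU-Net trains with 5-fold cross-validation, there is no need to cut out a separate validation or test set, so I simply copied 1,000 samples to serve as both val and test (the two are identical), and train still holds all 30,000. Also note: for the debugging steps below, take just a few dozen images and get the whole pipeline working first. nnU-Net's data conversion and preprocessing take quite a while, so debugging directly on 30,000 images is impractical; every mistake costs a long rerun.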
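One way to build such a small debugging set is to copy the first few dozen image/label pairs into a separate folder. This is only a sketch: copy_subset and its parameters are hypothetical names, and it assumes the images/labels layout and .png suffix used in this post.

```python
import os
import shutil

def copy_subset(src_split, dst_split, n=50, suffix=".png"):
    """Copy the first n image/label pairs (sorted by file name) into a smaller split folder."""
    label_dir = os.path.join(src_split, "labels")
    names = sorted(f for f in os.listdir(label_dir) if f.endswith(suffix))[:n]
    for sub in ("images", "labels"):
        os.makedirs(os.path.join(dst_split, sub), exist_ok=True)
        for name in names:
            shutil.copy(os.path.join(src_split, sub, name),
                        os.path.join(dst_split, sub, name))
    return names
```

Pointing base in the conversion script at the small copy lets the whole pipeline be exercised in minutes before switching back to the full training set.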

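2. Data standardization

Before training, nnU-Net needs the data to be standardized and then preprocessed. Standardization comes first.

nnU-Net was developed on Linux and targets 3D medical data, so the data has to be converted to a medical image format. In addition, some things may not work on Windows; at least that is what I have seen so far. Below is the author's conversion script for 2D data, ./dataset_conversion/Task120_Massachusetts_RoadSegm.py, with some modifications of mine. Be sure to read the explanation inside this file carefully.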

import numpy as np

from batchgenerators.utilities.file_and_folder_operations import *

from nnunet.dataset_conversion.utils import generate_dataset_json

from nnunet.paths import nnUNet_raw_data, preprocessing_output_dir

from nnunet.utilities.file_conversions import convert_2d_image_to_nifti

def mkdir_func(path):
    # create a single directory level if it does not exist yet
    if not os.path.exists(path):
        os.mkdir(path)

if __name__ == '__main__':

"""

nnU-Net was originally built for 3D images. It is also strongest when applied to 3D segmentation problems because a

large proportion of its design choices were built with 3D in mind. Also note that many 2D segmentation problems,

especially in the non-biomedical domain, may benefit from pretrained network architectures which nnU-Net does not

support.

Still, there is certainly a need for an out of the box segmentation solution for 2D segmentation problems. And

also on 2D segmentation tasks nnU-Net cam perform extremely well! We have, for example, won a 2D task in the cell

tracking challenge with nnU-Net (see our Nature Methods paper) and we have also successfully applied nnU-Net to

histopathological segmentation problems.

Working with 2D data in nnU-Net requires a small workaround in the creation of the dataset. Essentially, all images

must be converted to pseudo 3D images (so an image with shape (X, Y) needs to be converted to an image with shape

(1, X, Y). The resulting image must be saved in nifti format. Hereby it is important to set the spacing of the

first axis (the one with shape 1) to a value larger than the others. If you are working with niftis anyways, then

doing this should be easy for you. This example here is intended for demonstrating how nnU-Net can be used with

'regular' 2D images. We selected the massachusetts road segmentation dataset for this because it can be obtained

easily, it comes with a good amount of training cases but is still not too large to be difficult to handle.

"""

# download dataset from https://www.kaggle.com/insaff/massachusetts-roads-dataset

# extract the zip file, then set the following path according to your system:

base = 'D:/csdn/tc/work23/nnUNet/nnunet/datasets/build'

# this folder should have the training and testing subfolders

# now start the conversion to nnU-Net:

task_name = 'Task120_MassRoadsSeg'

target_base = join(nnUNet_raw_data, task_name)

target_imagesTr = join(target_base, "imagesTr")

target_imagesTs = join(target_base, "imagesTs")

target_labelsTs = join(target_base, "labelsTs")

target_labelsTr = join(target_base, "labelsTr")

# maybe_mkdir_p(target_imagesTr)

# maybe_mkdir_p(target_labelsTs)

# maybe_mkdir_p(target_imagesTs)

# maybe_mkdir_p(target_labelsTr)

mkdir_func(target_base)

mkdir_func(target_imagesTr)

mkdir_func(target_labelsTs)

mkdir_func(target_imagesTs)

mkdir_func(target_labelsTr)

# convert the training examples. Not all training images have labels, so we just take the cases for which there are

# labels

labels_dir_tr = join(base, 'train', 'labels')

images_dir_tr = join(base, 'train', 'images')

training_cases = subfiles(labels_dir_tr, suffix='.png', join=False)

for t in training_cases:

unique_name = t[:-4] # just the filename with the extension cropped away, so img-2.png becomes img-2 as unique_name

input_segmentation_file = join(labels_dir_tr, t)

input_image_file = join(images_dir_tr, t)

output_image_file = join(target_imagesTr, unique_name) # do not specify a file ending! This will be done for you

output_seg_file = join(target_labelsTr, unique_name) # do not specify a file ending! This will be done for you

# this utility will convert 2d images that can be read by skimage.io.imread to nifti. You don't need to do anything.

# if this throws an error for your images, please just look at the code for this function and adapt it to your needs

convert_2d_image_to_nifti(input_image_file, output_image_file, is_seg=False)

# the labels are stored as 0: background, 255: road. We need to convert the 255 to 1 because nnU-Net expects

# the labels to be consecutive integers. This can be achieved with setting a transform

# convert_2d_image_to_nifti(input_segmentation_file, output_seg_file, is_seg=True,

# transform=lambda x: (x == 255).astype(int))

convert_2d_image_to_nifti(input_segmentation_file, output_seg_file, is_seg=True)

# now do the same for the test set

labels_dir_ts = join(base, 'val', 'labels')

images_dir_ts = join(base, 'val', 'images')

testing_cases = subfiles(labels_dir_ts, suffix='.png', join=False)

for ts in testing_cases:

unique_name = ts[:-4]

input_segmentation_file = join(labels_dir_ts, ts)

input_image_file = join(images_dir_ts, ts)

output_image_file = join(target_imagesTs, unique_name)

output_seg_file = join(target_labelsTs, unique_name)

convert_2d_image_to_nifti(input_image_file, output_image_file, is_seg=False)

# convert_2d_image_to_nifti(input_segmentation_file, output_seg_file, is_seg=True,

# transform=lambda x: (x == 255).astype(int)) # when the labels are already 0 and 1 this (x == 255) transform must be dropped: it would map every pixel to 0 and produce invalid labels

convert_2d_image_to_nifti(input_segmentation_file, output_seg_file, is_seg=True)

# finally we can call the utility for generating a dataset.json

generate_dataset_json(join(target_base, 'dataset.json'), target_imagesTr, target_imagesTs, ('Red', 'Green', 'Blue'),

labels={0: 'background', 1: 'build'}, dataset_name=task_name, license='hands off!')

"""

once this is completed, you can use the dataset like any other nnU-Net dataset. Note that since this is a 2D

dataset there is no need to run preprocessing for 3D U-Nets. You should therefore run the

`nnUNet_plan_and_preprocess` command like this:

> nnUNet_plan_and_preprocess -t 120 -pl3d None

once that is completed, you can run the trainings as follows:

> nnUNet_train 2d nnUNetTrainerV2 120 FOLD

(where fold is again 0, 1, 2, 3 and 4 - 5-fold cross validation)

there is no need to run nnUNet_find_best_configuration because there is only one model to choose from.

Note that without running nnUNet_find_best_configuration, nnU-Net will not have determined a postprocessing

for the whole cross-validation. Spoiler: it will determine not to run postprocessing anyways. If you are using

a different 2D dataset, you can make nnU-Net determine the postprocessing by using the

`nnUNet_determine_postprocessing` command

"""

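Notes on the changes:

1. I added a mkdir_func function for creating folders, because the original maybe_mkdir_p did not work for me. Every maybe_mkdir_p call here has to be replaced with mkdir_func.

2. base is the root directory of the data; give it an absolute path.

3. Some project paths are imported from paths.py (from nnunet.paths import nnUNet_raw_data, preprocessing_output_dir), so we need to look at that script as well: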

# Copyright 2020 Division of Medical Image Computing, German Cancer Research Center (DKFZ), Heidelberg, Germany

#

# Licensed under the Apache License, Version 2.0 (the "License");

# you may not use this file except in compliance with the License.

# You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

import os

from batchgenerators.utilities.file_and_folder_operations import maybe_mkdir_p, join

def mkdir_func(path):
    # create a single directory level if it does not exist yet
    if not os.path.exists(path):
        os.mkdir(path)

# do not modify these unless you know what you are doing

my_output_identifier = "nnUNet"

default_plans_identifier = "nnUNetPlansv2.1"

default_data_identifier = 'nnUNetData_plans_v2.1'

default_trainer = "nnUNetTrainerV2"

default_cascade_trainer = "nnUNetTrainerV2CascadeFullRes"

"""

PLEASE READ paths.md FOR INFORMATION TO HOW TO SET THIS UP

"""

# base = os.environ['nnUNet_raw_data_base'] if "nnUNet_raw_data_base" in os.environ.keys() else None

# preprocessing_output_dir = os.environ['nnUNet_preprocessed'] if "nnUNet_preprocessed" in os.environ.keys() else None

# network_training_output_dir_base = os.path.join(os.environ['RESULTS_FOLDER']) if "RESULTS_FOLDER" in os.environ.keys() else None

base = 'D:/csdn/tc/work23/nnUNet/nnunet/nnUNet_raw_data_base'

preprocessing_output_dir = base + '/nnUNet_preprocessed'

network_training_output_dir_base = base + '/nnUNet_trained_models'

mkdir_func(base)

mkdir_func(preprocessing_output_dir)

mkdir_func(network_training_output_dir_base)

if base is not None:

nnUNet_raw_data = join(base, "nnUNet_raw_data")

nnUNet_cropped_data = join(base, "nnUNet_cropped_data")

# maybe_mkdir_p(nnUNet_raw_data)

# maybe_mkdir_p(nnUNet_cropped_data)

mkdir_func(nnUNet_raw_data)

mkdir_func(nnUNet_cropped_data)

else:

print("nnUNet_raw_data_base is not defined and nnU-Net can only be used on data for which preprocessed files "

"are already present on your system. nnU-Net cannot be used for experiment planning and preprocessing like "

"this. If this is not intended, please read documentation/setting_up_paths.md for information on how to set this up properly.")

nnUNet_cropped_data = nnUNet_raw_data = None

if preprocessing_output_dir is not None:

maybe_mkdir_p(preprocessing_output_dir)

else:

print("nnUNet_preprocessed is not defined and nnU-Net can not be used for preprocessing "

"or training. If this is not intended, please read documentation/setting_up_paths.md for information on how to set this up.")

preprocessing_output_dir = None

if network_training_output_dir_base is not None:

network_training_output_dir = join(network_training_output_dir_base, my_output_identifier)

maybe_mkdir_p(network_training_output_dir)

else:

print("RESULTS_FOLDER is not defined and nnU-Net cannot be used for training or "

"inference. If this is not intended behavior, please read documentation/setting_up_paths.md for information on how to set this "

"up.")

network_training_output_dir = None

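As you can see, the same approach as in note 1 applies here, and I again gave base an absolute path. A relative path would probably work too, but an absolute one leaves less room for error. Also, I created the nnUNet_raw_data_base folder by hand. Once this is changed, go back to Task120_Massachusetts_RoadSegm.py.

4. Make sure both the images and the labels use the .png extension; if they do not, change suffix='.png'.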

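5. This call

convert_2d_image_to_nifti(input_segmentation_file, output_seg_file, is_seg=True,
                          transform=lambda x: (x == 255).astype(int))

must be changed to

convert_2d_image_to_nifti(input_segmentation_file, output_seg_file, is_seg=True)

The reason is given in the comment in the code: the labels here are already 0 and 1, so the (x == 255) transform would produce invalid labels.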

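6. Note this call

generate_dataset_json(join(target_base, 'dataset.json'), target_imagesTr, target_imagesTs, ('Red', 'Green', 'Blue'),
                      labels={0: 'background', 1: 'build'}, dataset_name=task_name, license='hands off!')

Do not forget to update labels={0: 'background', 1: 'build'} for your own classes.

7. At the very bottom of the file the author tells you what to do next: data preprocessing with nnUNet_plan_and_preprocess -t 120 -pl3d None. Since I did not install the package and did not want to use the command line, I went to the experiment_planning folder, found nnUNet_plan_and_preprocess.py, and edited it.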

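The commented-out lines in paths.py show that the unmodified file reads three environment variables: nnUNet_raw_data_base, nnUNet_preprocessed and RESULTS_FOLDER. As an alternative to hardcoding the paths, they could be set from Python before nnunet is imported. The sketch below is untested against the Windows path problems described in this post; the function name configure_nnunet_paths is hypothetical, and the folder layout simply mirrors the hardcoded values above.

```python
import os

def configure_nnunet_paths(root):
    """Create the three nnU-Net base folders under root and export them as environment variables."""
    # Use forward slashes throughout, since the project splits paths on '/'.
    root = os.path.abspath(root).replace("\\", "/")
    paths = {
        "nnUNet_raw_data_base": root,
        "nnUNet_preprocessed": root + "/nnUNet_preprocessed",
        "RESULTS_FOLDER": root + "/nnUNet_trained_models",
    }
    for name, path in paths.items():
        os.makedirs(path, exist_ok=True)  # also creates missing parent folders
        os.environ[name] = path
    return paths
```

paths.py reads these variables at import time, so the call has to happen before the first import of nnunet in the same process.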

3. Data preprocessing

Running ./experiment_planning/nnUNet_plan_and_preprocess.py produces a lot of errors, but all of them are path errors. The script with my changes is pasted below, followed by notes on what was changed.

# Copyright 2020 Division of Medical Image Computing, German Cancer Research Center (DKFZ), Heidelberg, Germany

#

# Licensed under the Apache License, Version 2.0 (the "License");

# you may not use this file except in compliance with the License.

# You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

import nnunet

from batchgenerators.utilities.file_and_folder_operations import *

from nnunet.experiment_planning.DatasetAnalyzer import DatasetAnalyzer

from nnunet.experiment_planning.utils import crop

from nnunet.paths import *

import shutil

from nnunet.utilities.task_name_id_conversion import convert_id_to_task_name

from nnunet.preprocessing.sanity_checks import verify_dataset_integrity

from nnunet.training.model_restore import recursive_find_python_class

import warnings

warnings.filterwarnings("ignore")

def mkdir_func(path):
    # create a single directory level if it does not exist yet
    if not os.path.exists(path):
        os.mkdir(path)

def main():

import argparse

parser = argparse.ArgumentParser()

parser.add_argument("-t", "--task_ids", nargs="+", default=[120], help="List of integers belonging to the task ids you wish to run"

" experiment planning and preprocessing for. Each of these "

"ids must, have a matching folder 'TaskXXX_' in the raw "

"data folder")

parser.add_argument("-pl3d", "--planner3d", type=str, default="None",

help="Name of the ExperimentPlanner class for the full resolution 3D U-Net and U-Net cascade. "

"Default is ExperimentPlanner3D_v21. Can be 'None', in which case these U-Nets will not be "

"configured")

parser.add_argument("-pl2d", "--planner2d", type=str, default="ExperimentPlanner2D_v21",

help="Name of the ExperimentPlanner class for the 2D U-Net. Default is ExperimentPlanner2D_v21. "

"Can be 'None', in which case this U-Net will not be configured")

parser.add_argument("-no_pp", action="store_true",

help="Set this flag if you dont want to run the preprocessing. If this is set then this script "

"will only run the experiment planning and create the plans file")

parser.add_argument("-tl", type=int, required=False, default=2,

help="Number of processes used for preprocessing the low resolution data for the 3D low "

"resolution U-Net. This can be larger than -tf. Don't overdo it or you will run out of "

"RAM")

parser.add_argument("-tf", type=int, required=False, default=2,

help="Number of processes used for preprocessing the full resolution data of the 2D U-Net and "

"3D U-Net. Don't overdo it or you will run out of RAM")

# parser.add_argument("--verify_dataset_integrity", required=False, default=False, action="store_true",

# help="set this flag to check the dataset integrity. This is useful and should be done once for "

# "each dataset!")

parser.add_argument("--verify_dataset_integrity", required=False, default=True, action="store_true",

help="set this flag to check the dataset integrity. This is useful and should be done once for "

"each dataset!")

parser.add_argument("-overwrite_plans", type=str, default=None, required=False,

help="Use this to specify a plans file that should be used instead of whatever nnU-Net would "

"configure automatically. This will overwrite everything: intensity normalization, "

"network architecture, target spacing etc. Using this is useful for using pretrained "

"model weights as this will guarantee that the network architecture on the target "

"dataset is the same as on the source dataset and the weights can therefore be transferred.\n"

"Pro tip: If you want to pretrain on Hepaticvessel and apply the result to LiTS then use "

"the LiTS plans to run the preprocessing of the HepaticVessel task.\n"

"Make sure to only use plans files that were "

"generated with the same number of modalities as the target dataset (LiTS -> BCV or "

"LiTS -> Task008_HepaticVessel is OK. BraTS -> LiTS is not (BraTS has 4 input modalities, "

"LiTS has just one)). Also only do things that make sense. This functionality is beta with"

"no support given.\n"

"Note that this will first print the old plans (which are going to be overwritten) and "

"then the new ones (provided that -no_pp was NOT set).")

parser.add_argument("-overwrite_plans_identifier", type=str, default=None, required=False,

help="If you set overwrite_plans you need to provide a unique identifier so that nnUNet knows "

"where to look for the correct plans and data. Assume your identifier is called "

"IDENTIFIER, the correct training command would be:\n"

"'nnUNet_train CONFIG TRAINER TASKID FOLD -p nnUNetPlans_pretrained_IDENTIFIER "

"-pretrained_weights FILENAME'")

args = parser.parse_args()

task_ids = args.task_ids

dont_run_preprocessing = args.no_pp

tl = args.tl

tf = args.tf

planner_name3d = args.planner3d

planner_name2d = args.planner2d

if planner_name3d == "None":

planner_name3d = None

if planner_name2d == "None":

planner_name2d = None

if args.overwrite_plans is not None:

if planner_name2d is not None:

print("Overwriting plans only works for the 3d planner. I am setting '--planner2d' to None. This will "

"skip 2d planning and preprocessing.")

assert planner_name3d == 'ExperimentPlanner3D_v21_Pretrained', "When using --overwrite_plans you need to use " \

"'-pl3d ExperimentPlanner3D_v21_Pretrained'"

# we need raw data

tasks = []

for i in task_ids:

i = int(i)

task_name = convert_id_to_task_name(i)

if args.verify_dataset_integrity:

verify_dataset_integrity(join(nnUNet_raw_data, task_name))

crop(task_name, False, tf)

tasks.append(task_name)

search_in = join(nnunet.__path__[0], "experiment_planning")

if planner_name3d is not None:

planner_3d = recursive_find_python_class([search_in], planner_name3d, current_module="nnunet.experiment_planning")

if planner_3d is None:

raise RuntimeError("Could not find the Planner class %s. Make sure it is located somewhere in "

"nnunet.experiment_planning" % planner_name3d)

else:

planner_3d = None

if planner_name2d is not None:

planner_2d = recursive_find_python_class([search_in], planner_name2d, current_module="nnunet.experiment_planning")

if planner_2d is None:

raise RuntimeError("Could not find the Planner class %s. Make sure it is located somewhere in "

"nnunet.experiment_planning" % planner_name2d)

else:

planner_2d = None

for t in tasks:

print("\n\n\n", t)

cropped_out_dir = os.path.join(nnUNet_cropped_data, t).replace('\\', '/') #D:/csdn/tc/work23/nnUNet/nnunet/nnUNet_raw_data_base\nnUNet_cropped_data\Task120_MassRoadsSeg

preprocessing_output_dir_this_task = os.path.join(preprocessing_output_dir, t)

#splitted_4d_output_dir_task = os.path.join(nnUNet_raw_data, t)

#lists, modalities = create_lists_from_splitted_dataset(splitted_4d_output_dir_task)

# we need to figure out if we need the intensity propoerties. We collect them only if one of the modalities is CT

dataset_json = load_json(join(cropped_out_dir, 'dataset.json'))

modalities = list(dataset_json["modality"].values())

collect_intensityproperties = True if (("CT" in modalities) or ("ct" in modalities)) else False

dataset_analyzer = DatasetAnalyzer(cropped_out_dir, overwrite=False, num_processes=tf) # this class creates the fingerprint

_ = dataset_analyzer.analyze_dataset(collect_intensityproperties) # this will write output files that will be used by the ExperimentPlanner

# maybe_mkdir_p(preprocessing_output_dir_this_task)

mkdir_func(preprocessing_output_dir_this_task)

shutil.copy(join(cropped_out_dir, "dataset_properties.pkl"), preprocessing_output_dir_this_task)

shutil.copy(join(nnUNet_raw_data, t, "dataset.json"), preprocessing_output_dir_this_task)

threads = (tl, tf)

print("number of threads: ", threads, "\n")

if planner_3d is not None:

if args.overwrite_plans is not None:

assert args.overwrite_plans_identifier is not None, "You need to specify -overwrite_plans_identifier"

exp_planner = planner_3d(cropped_out_dir, preprocessing_output_dir_this_task, args.overwrite_plans,

args.overwrite_plans_identifier)

else:

exp_planner = planner_3d(cropped_out_dir, preprocessing_output_dir_this_task)

exp_planner.plan_experiment()

if not dont_run_preprocessing: # double negative, yooo

exp_planner.run_preprocessing(threads)

if planner_2d is not None:

# preprocessing_output_dir_this_task D:/csdn/tc/work23/nnUNet/nnunet/nnUNet_raw_data_base/nnUNet_preprocessed\Task120_MassRoadsSeg

exp_planner = planner_2d(cropped_out_dir, preprocessing_output_dir_this_task.replace('\\', '/'))

exp_planner.plan_experiment()

if not dont_run_preprocessing: # double negative, yooo

exp_planner.run_preprocessing(threads)

if __name__ == "__main__":

main()

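Notes on the changes:

1. Because of the path problems, mkdir_func has to be added here as well, and every use of maybe_mkdir_p replaced with it.

2. Set the default directly so the command line is not needed: default=[120]

parser.add_argument("-t", "--task_ids", nargs="+", default=[120]

3. Disable the 3D planner with default="None"

parser.add_argument("-pl3d", "--planner3d", type=str, default="None",

4. Use ExperimentPlanner2D_v21 for 2D

parser.add_argument("-pl2d", "--planner2d", type=str, default="ExperimentPlanner2D_v21",

5. Adjust -tl and -tf to your own machine. The original value was 8 and I changed it to 2; if that still fails, use 1. Otherwise you get system errors and multiprocessing errors. The root cause is how multiprocessing behaves on Windows; nnU-Net was developed on Linux, so problems on Windows are not surprising.

6. Two paths use .replace('\\', '/'). Many functions split the paths they receive with split('/'), so a path that still contains \\ is bound to fail. You would never run into this on Linux.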

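Even after all of the above there will still be many path errors. The scripts that need path fixes are listed below; go through them yourself. I will not cover each one, since the pattern is exactly the same as above.

nnunet/preprocessing/cropping.py: on line 197 replace maybe_mkdir_p with mkdir_func; on lines 59 and 64 add .replace('\\', '/'); on lines 127 and 188 change to i.replace('\\', '/')

nnunet/experiment_planning/utils.py: replace every maybe_mkdir_p with mkdir_func, and append .replace('\\', '/') to every path on lines 100-108 to flip the slashes

nnunet/preprocessing/preprocessing.py: add the mkdir_func function and replace every maybe_mkdir_p with mkdir_func

nnunet/experiment_planning/DatasetAnalyzer.py: on line 37 add .replace('\\', '/')

nnunet/experiment_planning/experiment_planner_baseline_3DUNet.py: on lines 34 and 406 add .replace('\\', '/')

If path errors remain after these changes, the fix is the same: locate the failing line and apply the same logic.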
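The reason these replacements matter can be shown in a few lines. This sketch uses a made-up mixed-separator path similar to the one in the comments of nnUNet_plan_and_preprocess.py; to_forward_slashes is a hypothetical helper name.

```python
def to_forward_slashes(path):
    """Return the path with every backslash replaced by a forward slash."""
    return path.replace("\\", "/")

# os.path.join on Windows inserts backslashes, giving a mixed path like this one.
mixed = "D:/data/nnUNet_cropped_data\\Task120_MassRoadsSeg"

# Code that takes the last piece after split('/') gets two folder names glued together.
print(mixed.split("/")[-1])                      # nnUNet_cropped_data\Task120_MassRoadsSeg
print(to_forward_slashes(mixed).split("/")[-1])  # Task120_MassRoadsSeg
```

Any code that expects the last piece to be the task folder name breaks on the first form, which is why the fix has to be applied wherever a joined path is passed on.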

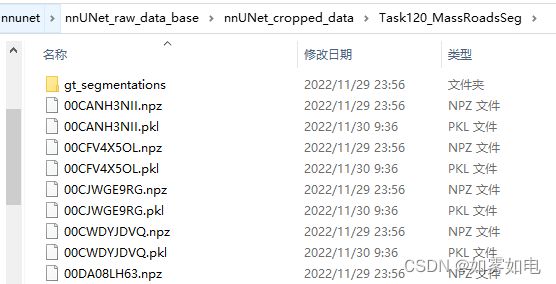

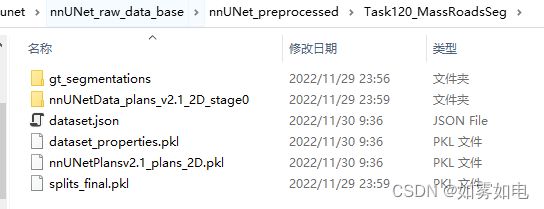

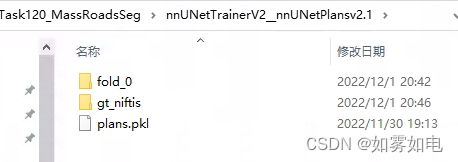

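Once the steps above have finished, nnUNet_cropped_data and nnUNet_preprocessed will contain a large number of files.

4. Model training

Following the author's workflow, training comes after data conversion and preprocessing. The command is

nnUNet_train 2d nnUNetTrainerV2 120 FOLD

Again I skip the command line and edit the code instead.

The training entry point is nnunet/run/run_training.py. The modified part of run_training.py is pasted below.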

def main():

parser = argparse.ArgumentParser()

parser.add_argument("--network", default='2d') # the network types are listed in ./run/default_configuration.py: ['2d', '3d_lowres', '3d_fullres', '3d_cascade_fullres']

parser.add_argument("--network_trainer", default='nnUNetTrainerV2') # trainers available under ./training/network_training/: nnUNetTrainer, nnUNetTrainerCascadeFullRes, nnUNetTrainerV2,

# nnUNetTrainerV2CascadeFullRes, nnUNetTrainerV2_DDP, nnUNetTrainerV2_DP, nnUNetTrainerV2_fp32. This is a single-machine, single-GPU setup, so anything except DDP and DP is worth trying.

parser.add_argument("--task", default='120', help="can be task name or task id") # task name or id

parser.add_argument("--fold", default='0', help='0, 1, ..., 5 or \'all\'') # important: folds 0, 1, 2, 3 and 4 each have to be run once; they are the five folds of the cross-validation

parser.add_argument("-val", "--validation_only", help="use this if you want to only run the validation",

action="store_true", default=False)

parser.add_argument("-c", "--continue_training", help="use this if you want to continue a training",

action="store_true")

parser.add_argument("-p", help="plans identifier. Only change this if you created a custom experiment planner",

default=default_plans_identifier, required=False)

parser.add_argument("--use_compressed_data", default=False, action="store_true",

help="If you set use_compressed_data, the training cases will not be decompressed. Reading compressed data "

"is much more CPU and RAM intensive and should only be used if you know what you are "

"doing", required=False)

parser.add_argument("--deterministic",

help="Makes training deterministic, but reduces training speed substantially. I (Fabian) think "

"this is not necessary. Deterministic training will make you overfit to some random seed. "

"Don't use that.",

required=False, default=False, action="store_true")

parser.add_argument("--npz", required=False, default=False, action="store_true", help="if set then nnUNet will "

"export npz files of "

"predicted segmentations "

"in the validation as well. "

"This is needed to run the "

"ensembling step so unless "

"you are developing nnUNet "

"you should enable this")

parser.add_argument("--find_lr", required=False, default=False, action="store_true",

help="not used here, just for fun")

parser.add_argument("--valbest", required=False, default=False, action="store_true",

help="hands off. This is not intended to be used")

parser.add_argument("--fp32", required=False, default=False, action="store_true",

help="disable mixed precision training and run old school fp32")

parser.add_argument("--val_folder", required=False, default="validation_raw",

help="name of the validation folder. No need to use this for most people")

parser.add_argument("--disable_saving", required=False, action='store_true',

help="If set nnU-Net will not save any parameter files (except a temporary checkpoint that "

"will be removed at the end of the training). Useful for development when you are "

"only interested in the results and want to save some disk space")

parser.add_argument("--disable_postprocessing_on_folds", required=False, action='store_true',

help="Running postprocessing on each fold only makes sense when developing with nnU-Net and "

"closely observing the model performance on specific configurations. You do not need it "

"when applying nnU-Net because the postprocessing for this will be determined only once "

"all five folds have been trained and nnUNet_find_best_configuration is called. Usually "

"running postprocessing on each fold is computationally cheap, but some users have "

"reported issues with very large images. If your images are large (>600x600x600 voxels) "

"you should consider setting this flag.")

# parser.add_argument("--interp_order", required=False, default=3, type=int,

# help="order of interpolation for segmentations. Testing purpose only. Hands off")

# parser.add_argument("--interp_order_z", required=False, default=0, type=int,

# help="order of interpolation along z if z is resampled separately. Testing purpose only. "

# "Hands off")

# parser.add_argument("--force_separate_z", required=False, default="None", type=str,

# help="force_separate_z resampling. Can be None, True or False. Testing purpose only. Hands off")

parser.add_argument('--val_disable_overwrite', action='store_false', default=True,

help='Validation does not overwrite existing segmentations')

parser.add_argument('--disable_next_stage_pred', action='store_true', default=False,

help='do not predict next stage')

parser.add_argument('-pretrained_weights', type=str, required=False, default=None,

help='path to nnU-Net checkpoint file to be used as pretrained model (use .model '

'file, for example model_final_checkpoint.model). Will only be used when actually training. '

'Optional. Beta. Use with caution.')

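args = parser.parse_args()

There is no need to paste the whole file; only the argument configuration was changed. Some arguments have a short comment after them, so take a look at those.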
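Since all five folds have to be trained, editing the default and rerunning by hand five times gets tedious. A small driver could loop over the folds instead. This is a sketch only: it assumes the modified run_training.py above, where --fold is an optional argument (in the unmodified script it is positional), and fold_commands and run_all_folds are hypothetical names.

```python
import subprocess
import sys

def fold_commands(script="nnunet/run/run_training.py", folds=range(5)):
    """Build one command line per fold for the modified run_training.py."""
    return [[sys.executable, script, "--fold", str(k)] for k in folds]

def run_all_folds(script="nnunet/run/run_training.py", folds=range(5), dry_run=True):
    """Print each command and, unless dry_run is set, run the folds one after another."""
    for cmd in fold_commands(script, folds):
        print(" ".join(cmd))
        if not dry_run:
            subprocess.run(cmd, check=True)  # stops at the first fold that fails
```

Calling run_all_folds(dry_run=False) trains the folds sequentially; leaving dry_run at True only prints the commands, which is a cheap way to check the script path first.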

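Things to note:

1. The core trainer class nnUNetTrainer in nnunet/training/network_training/nnUNetTrainer.py inherits from NetworkTrainer in nnunet/training/network_training/network_trainer.py, and nnUNetTrainerV2 in turn inherits from nnUNetTrainer. (This is only to show how they relate so you can find your way around the code; no need to dwell on it.)

network_trainer.py holds many of the basic parameters; to change the number of epochs, edit max_num_epochs there.

nnUNetTrainer.py contains the data loading function get_basic_generators, which leads on to the DataLoader2D class in nnunet/training/dataloading/dataset_loading.py. Keep the location of the data loading code in mind so you can jump to it quickly if you need to change it later.

2. unpack_dataset in nnunet/training/dataloading/dataset_loading.py is the multi-process data loading function. The number of workers it starts comes from default_num_threads in nnunet/configuration.py (change it to 1). This value is used in many places, so setting it to 1 is well worth it; otherwise you get multiprocessing-related errors, which are probably also specific to Windows.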

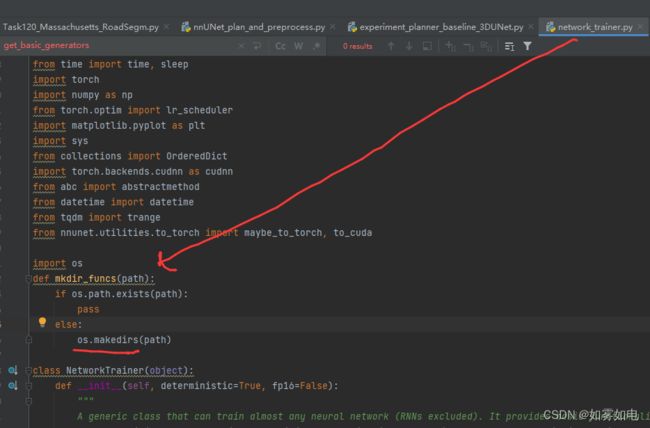

3. nnunet/training/network_training/networking_trainer.py 添加mkdir_funcs函数 且所有maybe_mkdir_p 改为 mkdir_funcs 创建多级目录(和mkdir_func不同)

import os

def mkdir_funcs(path):

if os.path.exists(path):

pass

else:

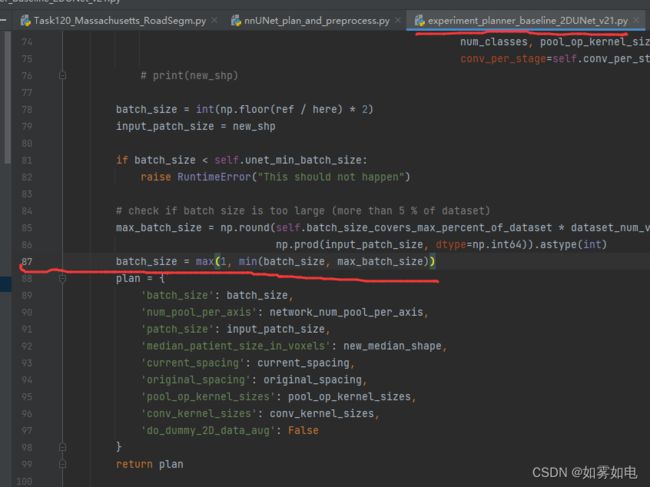

os.makedirs(path)4. batchsize的产生由nnunet/experiment_planning/nnUNet_plan_and_preprocess.py(上一步的数据预处理阶段产生)自动产生,直到因为显存问题开始想改变batchsize的时候才发现上一步不止数据预处理,还根据数据情况生成了一个训练计划,所以上一步没有提这个事,所以如果到这里你的显存爆了,那么你需要先删除上一步产生的nnUNet_cropped_data和nnUNet_preprocessed文件夹及内容,重新跑一遍。

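The script that actually generates the plan is buried deep, so here are the details.

Note the -pl2d argument on line 47 of nnUNet_plan_and_preprocess.py, for which I passed ExperimentPlanner2D_v21, then look at line 133:

planner_2d = recursive_find_python_class([search_in], planner_name2d, current_module="nnunet.experiment_planning")

Rather than importing the planner from nnunet.experiment_planning at the top, the script uses recursive_find_python_class to search nnunet.experiment_planning, finds the ExperimentPlanner2D_v21 class in experiment_planner_baseline_2DUNet_v21.py, and calls it to generate the training plan. ExperimentPlanner2D_v21 inherits from ExperimentPlanner2D in experiment_planner_baseline_2DUNet.py, so if anything else needs adjusting, read the two classes together.

In experiment_planner_baseline_2DUNet_v21.py the batch size is finally set on line 87.

If you run out of GPU memory, change the batch size there: force a value right after line 87, for example batch_size=2.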
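A possible alternative to editing the planner is to change the batch size in the plans file it wrote, which avoids rerunning the planning. The sketch below assumes the plans file is a pickled dict with a plans_per_stage entry that stores a batch_size for each stage; that layout, the helper name set_batch_size, and whether the trainer picks up the edited value in your version are all assumptions, so inspect your own file before relying on it.

```python
import pickle

def set_batch_size(plans_file, batch_size):
    """Overwrite batch_size for every stage in a pickled plans dict and return the old values."""
    with open(plans_file, "rb") as f:
        plans = pickle.load(f)
    old = {}
    # Assumed layout: plans["plans_per_stage"][stage]["batch_size"]
    for stage, stage_plan in plans["plans_per_stage"].items():
        old[stage] = stage_plan["batch_size"]
        stage_plan["batch_size"] = batch_size
    with open(plans_file, "wb") as f:
        pickle.dump(plans, f)
    return old
```

Keeping a backup copy of the plans file before overwriting it makes it easy to return to the automatically planned value.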

5. Other

A task creates the following three folders. If you set up a second task, rename or delete them first, otherwise you get this error:

RuntimeError: More than one task name found for task id 120. Please correct that. (I looked in the following folders:

nnUNet_raw_data Preprocessed nnUNet_cropped_data

In other words, before starting a new task you need to change the previous task's ID, 120.
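To see which ID is causing this error, one could scan the data folders for task folders that share an ID but differ in name. This is a sketch under the assumption that task folders follow the TaskXXX_ naming mentioned in the script's help text; task_names_by_id and conflicting_ids are hypothetical helpers, not part of nnU-Net.

```python
import os
import re
from collections import defaultdict

def task_names_by_id(folders):
    """Map each task id to the set of TaskXXX_name folder names found in the given folders."""
    found = defaultdict(set)
    for folder in folders:
        if not os.path.isdir(folder):
            continue  # skip folders that have not been created yet
        for name in os.listdir(folder):
            match = re.match(r"Task(\d{3})_", name)
            if match and os.path.isdir(os.path.join(folder, name)):
                found[int(match.group(1))].add(name)
    return dict(found)

def conflicting_ids(folders):
    """Return only the ids that are used by more than one task name."""
    return {i: sorted(names) for i, names in task_names_by_id(folders).items() if len(names) > 1}
```

Passing the raw, preprocessed and cropped data folders to conflicting_ids lists every ID that is claimed by more than one task name.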

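After these changes training should start. The training results are shown below.

5. Prediction

Prediction runs into much the same problems, again with Windows-specific errors, mostly around multiprocessing.

The entry point is ./nnunet/inference/predict_simple.py; for the most part it is enough to change the arguments.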

def main():
    parser = argparse.ArgumentParser()
    # Changed: default set to the test images folder (imagesTs) of the task.
    parser.add_argument("--input_folder", default='D:/wcs/nnUNet/nnunet/nnUNet_raw_data_base/nnUNet_raw_data/Task120_MassRoadsSeg/imagesTs/',
                        help="Must contain all modalities for each patient in the correct"
                             " order (same as training). Files must be named "
                             "CASENAME_XXXX.nii.gz where XXXX is the modality "
                             "identifier (0000, 0001, etc)")
    # Changed: default set to the folder that receives the predictions.
    parser.add_argument("--output_folder", default='D:/wcs/nnUNet/nnunet/nnUNet_raw_data_base/nnUNet_raw_data/Task120_MassRoadsSeg/test/',
                        help="folder for saving predictions")
    # Changed: default set to the task ID.
    parser.add_argument("--task_name", help='task name or task ID, required.',
                        default='120')
    parser.add_argument('-tr', '--trainer_class_name',
                        help='Name of the nnUNetTrainer used for 2D U-Net, full resolution 3D U-Net and low resolution '
                             'U-Net. The default is %s. If you are running inference with the cascade and the folder '
                             'pointed to by --lowres_segmentations does not contain the segmentation maps generated by '
                             'the low resolution U-Net then the low resolution segmentation maps will be automatically '
                             'generated. For this case, make sure to set the trainer class here that matches your '
                             '--cascade_trainer_class_name (this part can be ignored if defaults are used).'
                             % default_trainer,
                        required=False,
                        default=default_trainer)
    parser.add_argument('-ctr', '--cascade_trainer_class_name',
                        help="Trainer class name used for predicting the 3D full resolution U-Net part of the cascade."
                             "Default is %s" % default_cascade_trainer, required=False,
                        default=default_cascade_trainer)
    # Changed: default set to "2d" (the help text still names another default).
    parser.add_argument('-m', '--model', help="2d, 3d_lowres, 3d_fullres or 3d_cascade_fullres. Default: 3d_fullres",
                        default="2d", required=False)
    parser.add_argument('-p', '--plans_identifier', help='do not touch this unless you know what you are doing',
                        default=default_plans_identifier, required=False)
    parser.add_argument('-f', '--folds', nargs='+', default='None',
                        help="folds to use for prediction. Default is None which means that folds will be detected "
                             "automatically in the model output folder")
    parser.add_argument('-z', '--save_npz', required=False, action='store_true',
                        help="use this if you want to ensemble these predictions with those of other models. Softmax "
                             "probabilities will be saved as compressed numpy arrays in output_folder and can be "
                             "merged between output_folders with nnUNet_ensemble_predictions")
    parser.add_argument('-l', '--lowres_segmentations', required=False, default='None',
                        help="if model is the highres stage of the cascade then you can use this folder to provide "
                             "predictions from the low resolution 3D U-Net. If this is left at default, the "
                             "predictions will be generated automatically (provided that the 3D low resolution U-Net "
                             "network weights are present")
    parser.add_argument("--part_id", type=int, required=False, default=0,
                        help="Used to parallelize the prediction of "
                             "the folder over several GPUs. If you "
                             "want to use n GPUs to predict this "
                             "folder you need to run this command "
                             "n times with --part_id=0, ... n-1 and "
                             "--num_parts=n (each with a different "
                             "GPU (for example via "
                             "CUDA_VISIBLE_DEVICES=X)")
    parser.add_argument("--num_parts", type=int, required=False, default=1,
                        help="Used to parallelize the prediction of "
                             "the folder over several GPUs. If you "
                             "want to use n GPUs to predict this "
                             "folder you need to run this command "
                             "n times with --part_id=0, ... n-1 and "
                             "--num_parts=n (each with a different "
                             "GPU (via "
                             "CUDA_VISIBLE_DEVICES=X)")
    # Changed: default set to 0. On Windows this must be 0 (the help text still names another default).
    parser.add_argument("--num_threads_preprocessing", required=False, default=0, type=int,
                        help="Determines many background processes will be used for data preprocessing. Reduce this if you "
                             "run into out of memory (RAM) problems. Default: 6")
    # Changed: default set to 1 (the help text still names another default).
    parser.add_argument("--num_threads_nifti_save", required=False, default=1, type=int,
                        help="Determines many background processes will be used for segmentation export. Reduce this if you "
                             "run into out of memory (RAM) problems. Default: 2")
    parser.add_argument("--disable_tta", required=False, default=False, action="store_true",
                        help="set this flag to disable test time data augmentation via mirroring. Speeds up inference "
                             "by roughly factor 4 (2D) or 8 (3D)")
    parser.add_argument("--overwrite_existing", required=False, default=False, action="store_true",
                        help="Set this flag if the target folder contains predictions that you would like to overwrite")
    parser.add_argument("--mode", type=str, default="normal", required=False, help="Hands off!")
    parser.add_argument("--all_in_gpu", type=str, default="None", required=False,
                        help="can be None, False or True. "
                             "Do not touch.")
    parser.add_argument("--step_size", type=float, default=0.5, required=False, help="don't touch")
    # parser.add_argument("--interp_order", required=False, default=3, type=int,
    #                     help="order of interpolation for segmentations, has no effect if mode=fastest. Do not touch this.")
    # parser.add_argument("--interp_order_z", required=False, default=0, type=int,
    #                     help="order of interpolation along z is z is done differently. Do not touch this.")
    # parser.add_argument("--force_separate_z", required=False, default="None", type=str,
    #                     help="force_separate_z resampling. Can be None, True or False, has no effect if mode=fastest. "
    #                          "Do not touch this.")
    parser.add_argument('-chk',
                        help='checkpoint name, default: model_final_checkpoint',
                        required=False,
                        default='model_final_checkpoint')
    parser.add_argument('--disable_mixed_precision', default=False, action='store_true', required=False,
                        help='Predictions are done with mixed precision by default. This improves speed and reduces '
                             'the required vram. If you want to disable mixed precision you can set this flag. Note '
                             'that this is not recommended (mixed precision is ~2x faster!)')

    args = parser.parse_args()

Notes on the changes:

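1. Input arguments: input_folder, output_folder, task_name, m, num_threads_preprocessing and num_threads_nifti_save. The modified defaults are shown in the code above. Pay particular attention to num_threads_preprocessing: it must be 0, otherwise you get this error: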

_pickle.PicklingError: Can't pickle

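Don't bother trying to fix this properly. It is hard to solve on Windows; it is once again a multithreading/multiprocessing problem and does not occur on Linux. If you are curious anyway, the keyword to search for is: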

python3 PicklingError: Can't pickle

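The file involved is ./nnunet/utilities/nd_softmax.py. The lambda defined there causes trouble in Windows worker processes and is not easy to work around: Windows starts new processes with the spawn method, which has to pickle whatever is handed to them, and a lambda cannot be pickled.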
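To make the lambda problem from item 1 concrete, here is a minimal sketch that is independent of nnUNet. The names `can_pickle`, `scale_lambda` and `scale_partial` are made up for this illustration. It only shows the general Python behaviour: a lambda cannot be pickled, while a `functools.partial` over an importable function can, which is one possible direction for a fix.

```python
import operator
import pickle
from functools import partial


def can_pickle(obj):
    """Return True if obj survives pickle.dumps (what a spawned worker needs)."""
    try:
        pickle.dumps(obj)
        return True
    except (pickle.PicklingError, AttributeError, TypeError):
        return False


# A lambda has no importable name, so pickle cannot store a reference to it.
scale_lambda = lambda x: operator.mul(x, 2)

# The same computation as a partial over an importable function pickles fine.
scale_partial = partial(operator.mul, 2)

if __name__ == "__main__":
    print("lambda picklable: ", can_pickle(scale_lambda))
    print("partial picklable:", can_pickle(scale_partial))
```

Whether swapping the lambda for a named function is enough to make multi-process prediction work on Windows is not something I have verified; setting num_threads_preprocessing to 0 remains the simple way out.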

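2. As before, some directories fail to be created. Add the helper function mkdir_func to the script ./nnunet/inference/predict.py, then find every call to maybe_mkdir_p and replace it with mkdir_func.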
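For reference, here is one way such a helper could look. This is a sketch, not the exact helper used earlier in this post: that one calls os.mkdir, while this variant uses os.makedirs so that missing parent folders are created as well. That difference is my own assumption about what is convenient here.

```python
import os
import tempfile


def mkdir_func(path):
    """Create `path`, including missing parent folders; do nothing if it exists."""
    os.makedirs(path, exist_ok=True)


if __name__ == "__main__":
    # Try it in a throwaway temp folder rather than in the real project tree.
    base = tempfile.mkdtemp()
    target = os.path.join(base, "Task120_MassRoadsSeg", "test")
    mkdir_func(target)
    mkdir_func(target)  # calling it again is harmless
    print(os.path.isdir(target))
```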

3. nnunet/training/model_restore.py is used when the model is loaded. Add a replace call to the path built on line 145, i.e.:

all_best_model_files = [join(i, "%s.model" % checkpoint_name).replace('\\', '/') for i in folds]
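The effect of that replace can be checked in isolation. The sketch below assumes that `join` in the line above behaves like os.path.join, and it uses `ntpath` (the Windows flavour of os.path) so that it gives the same result on any OS. The folder names and the function `checkpoint_paths` are hypothetical.

```python
import ntpath  # Windows flavour of os.path, importable on any OS


def checkpoint_paths(folds, checkpoint_name):
    """Join each fold folder with the checkpoint file name, using '/' only."""
    return [
        ntpath.join(fold, "%s.model" % checkpoint_name).replace("\\", "/")
        for fold in folds
    ]


if __name__ == "__main__":
    # Hypothetical fold folders, for illustration only.
    folds = ["D:/models/fold_0", "D:/models/fold_1"]
    # Without replace, the join mixes '/' and '\' in a single path.
    print(ntpath.join(folds[0], "model_final_checkpoint.model"))
    for path in checkpoint_paths(folds, "model_final_checkpoint"):
        print(path)
```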

To be continued...