[PraNet] Paper and Code Walkthrough (Res2Net Part), by peiheng jia


1. Overview of the Res2Net Structure

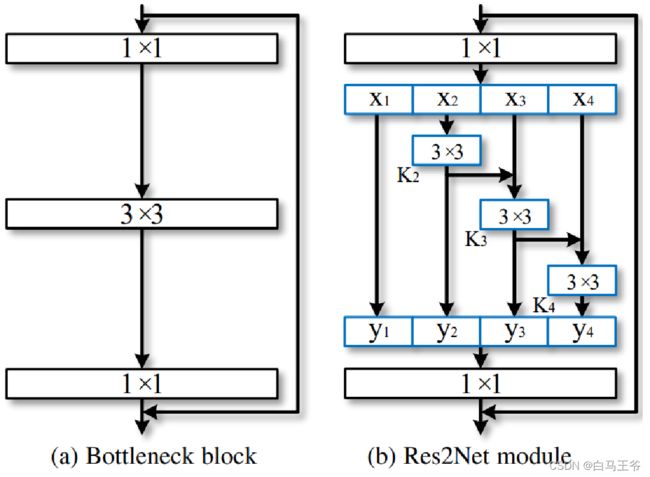

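The core idea of Res2Net is to take the feature map that the 3×3 convolution of a standard residual bottleneck receives (the output of the first 1×1 convolution) and split it along the channel axis into four parts (the number of parts is the scale s; 4 here). The first part is passed through unchanged. The second part goes through a 3×3 convolution. The third part is added to the output of the second part's convolution before going through its own 3×3 convolution, and the fourth part is likewise added to the output of the third part's convolution before its 3×3 convolution. The four resulting feature maps are then concatenated into a map with the same number of channels as the first 1×1 convolution produced, and this map is fed to the final 1×1 convolution.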
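Written out for s splits $x_1, \dots, x_s$, with $K_i$ denoting the 3×3 convolution applied to the i-th split, the hierarchical rule above reads as follows (this mirrors the formulation given in the Res2Net paper; $y$ is what goes into the final 1×1 convolution):

$$
y_i =
\begin{cases}
x_i, & i = 1,\\
K_i(x_i), & i = 2,\\
K_i(x_i + y_{i-1}), & 2 < i \le s,
\end{cases}
\qquad
y = \operatorname{concat}(y_1, \dots, y_s)
$$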

2. Res2Net Code Walkthrough

a. The Bottle2neck class

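The code defines two values of `stype`: `stage` and `normal`. A `stage` block (used for the first block of each layer, see `_make_layer`) does not add the previous split's output; it uses `sp = spx[i]` directly. A `normal` block adds the previous split's output, i.e. `sp = sp + spx[i]`.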
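A minimal pure-Python sketch, for illustration only, of which input each 3×3 conv receives in the two modes. The helper name `conv_inputs` and the string labels (`y[i]` for the output of branch i) are hypothetical; the logic just replays the `if i==0 or self.stype=='stage'` branch of `forward`.

```python
from typing import List


def conv_inputs(scale: int, stype: str) -> List[str]:
    """Hypothetical helper: describe what each 3x3 conv in Bottle2neck.forward receives.

    Mirrors `for i in range(self.nums)` with nums = scale - 1 (or 1 when scale == 1).
    "y[i]" stands for the output of the i-th conv branch.
    """
    nums = 1 if scale == 1 else scale - 1
    inputs = []
    for i in range(nums):
        if i == 0 or stype == "stage":
            # 'stage': each split is convolved on its own, no hierarchical addition
            inputs.append(f"spx[{i}]")
        else:
            # 'normal': add the previous branch's output before convolving
            inputs.append(f"y[{i - 1}] + spx[{i}]")
    return inputs


print(conv_inputs(4, "normal"))  # ['spx[0]', 'y[0] + spx[1]', 'y[1] + spx[2]']
print(conv_inputs(4, "stage"))   # ['spx[0]', 'spx[1]', 'spx[2]']
```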

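Comparing the blocks of ResNet-50 and Res2Net-50 shows that the channel count of the intermediate layers in Bottle2neck is not simply `planes`, as in the ResNet bottleneck. Instead it is computed from the author-defined `baseWidth=26`: each split has `width = floor(planes * baseWidth / 64)` channels, and the first 1×1 convolution outputs `width * scale` channels.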
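To make the channel arithmetic concrete, here is a small sketch that evaluates the same formula as `__init__` for the four `planes` values used by `Res2Net` (64, 128, 256, 512). The helper name `bottle2neck_widths` is hypothetical; for comparison, the standard ResNet-50 bottleneck uses `planes` channels at the same position.

```python
import math


def bottle2neck_widths(planes, base_width=26, scale=4):
    """Hypothetical helper: channel counts inside one Bottle2neck block."""
    width = int(math.floor(planes * (base_width / 64.0)))  # w: channels per split
    return {
        "width": width,
        "conv1_out": width * scale,  # n = s * w, input to the split
        "block_out": planes * 4,     # planes * expansion
    }


for planes in (64, 128, 256, 512):
    w = bottle2neck_widths(planes)
    print(planes, w["width"], w["conv1_out"], w["block_out"])
# 64 26 104 256
# 128 52 208 512
# 256 104 416 1024
# 512 208 832 2048
```

So with 26w×4s, the first stage's intermediate width is 104 channels instead of ResNet-50's 64.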

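Note that although the figure above (from the paper) shows the first split $x_1$ passing straight to $y_1$ without convolution, the code actually lets the last split pass through unconvolved, i.e. $x_4$ goes directly to $y_4$. The two are equivalent: they differ only in the order of the splits along the channel axis, and the following 1×1 convolution can learn any fixed channel ordering.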
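The equivalence can be checked with a toy sketch in which each split is a plain number and the 3×3 conv branches are replaced by one hypothetical function `K` (here `2x + 1`; in the real block each branch has its own weights, which does not change the argument). The paper's ordering applied to splits `[a, b, c, d]` and the code's ordering applied to the rotated splits `[b, c, d, a]` give the same outputs, only rotated.

```python
def K(x):
    # Hypothetical stand-in for a 3x3 conv + BN + ReLU branch
    return 2 * x + 1


def paper_order(xs):
    """Paper: x_1 passes through, x_2..x_s are convolved hierarchically."""
    ys = [xs[0]]
    prev = None
    for i, x in enumerate(xs[1:]):
        prev = K(x) if i == 0 else K(x + prev)
        ys.append(prev)
    return ys


def code_order(xs):
    """Code: splits 0..s-2 are convolved hierarchically, the last split passes through."""
    ys = []
    prev = None
    for i, x in enumerate(xs[:-1]):
        prev = K(x) if i == 0 else K(x + prev)
        ys.append(prev)
    ys.append(xs[-1])
    return ys


a, b, c, d = 1, 2, 3, 4
paper = paper_order([a, b, c, d])
code = code_order([b, c, d, a])
print(paper)  # [1, 5, 17, 43]
print(code)   # [5, 17, 43, 1]
```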

```python
import math

import torch
import torch.nn as nn


class Bottle2neck(nn.Module):
    expansion = 4

    def __init__(self, inplanes, planes, stride=1, downsample=None, baseWidth=26, scale=4, stype='normal'):
        """Constructor

        Args:
            inplanes: input channel dimensionality (number of input channels)
            planes: base output channel dimensionality; the block outputs planes * expansion channels
            stride: conv stride. Replaces pooling layer (strided convs downsample instead of
                pooling, to avoid losing information)
            downsample: projection for the residual branch; None when stride == 1 and
                inplanes == planes * expansion
            baseWidth: basic width of conv3x3; determines the channels per split
            scale: number of scales, i.e. s in the paper (the number of splits)
            stype: 'normal': normal set. 'stage': first block of a new stage.
        """
        super(Bottle2neck, self).__init__()

        # scale: number of splits s, so conv1 outputs n = s * w channels in total
        # width: w in the paper, the number of channels in each split
        # width/baseWidth only control how many channels each split gets
        width = int(math.floor(planes * (baseWidth / 64.0)))
        self.conv1 = nn.Conv2d(inplanes, width * scale, kernel_size=1, bias=False)
        self.bn1 = nn.BatchNorm2d(width * scale)

        if scale == 1:
            self.nums = 1
        else:
            self.nums = scale - 1
        if stype == 'stage':
            self.pool = nn.AvgPool2d(kernel_size=3, stride=stride, padding=1)
        convs = []
        bns = []
        for i in range(self.nums):
            # 3x3 conv whose input and output channels are equal (width -> width)
            convs.append(nn.Conv2d(width, width, kernel_size=3, stride=stride, padding=1, bias=False))
            bns.append(nn.BatchNorm2d(width))
        self.convs = nn.ModuleList(convs)
        self.bns = nn.ModuleList(bns)

        self.conv3 = nn.Conv2d(width * scale, planes * self.expansion, kernel_size=1, bias=False)
        self.bn3 = nn.BatchNorm2d(planes * self.expansion)

        self.relu = nn.ReLU(inplace=True)
        self.downsample = downsample
        self.stype = stype
        self.scale = scale
        self.width = width

    def forward(self, x):
        residual = x

        out = self.conv1(x)
        out = self.bn1(out)
        out = self.relu(out)

        # Split dim 1, i.e. C in (N, C, H, W), into chunks of `width` channels
        spx = torch.split(out, self.width, 1)

        # For stype == 'stage' the previous branch's output is not added: sp = spx[i].
        # Both tensors have `width` channels; the problem is spatial: with stride 2 the
        # previous sp has already been downsampled by its conv while spx[i] has not,
        # so the two cannot be added.
        for i in range(self.nums):
            if i == 0 or self.stype == 'stage':
                sp = spx[i]
            else:
                sp = sp + spx[i]
            sp = self.convs[i](sp)
            sp = self.relu(self.bns[i](sp))
            if i == 0:
                out = sp
            else:
                out = torch.cat((out, sp), 1)

        # Two cases: for 'stage' blocks the loop above always takes the sp = spx[i]
        # branch and applies conv-bn-relu, with the conv doing the stride-2
        # downsampling; the last split is downsampled by pooling instead (below).
        if self.scale != 1 and self.stype == 'normal':
            out = torch.cat((out, spx[self.nums]), 1)
        elif self.scale != 1 and self.stype == 'stage':
            # The pool is needed because the stride of a 'stage' block varies:
            # layer1 uses stride=1, layer2/3/4 use stride=2. With stride 2 the first
            # scale-1 splits (three for scale=4) went through stride-2 3x3 convs, while
            # the last split goes straight to the output, so it must be pooled to the
            # same spatial size; otherwise the final concat would fail.
            out = torch.cat((out, self.pool(spx[self.nums])), 1)

        out = self.conv3(out)
        out = self.bn3(out)

        if self.downsample is not None:
            residual = self.downsample(x)

        out += residual
        out = self.relu(out)

        return out
```
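A shape-only sketch (no PyTorch needed) that follows `Bottle2neck.forward` for a square input, to show why the `'stage'` branch needs `self.pool`. It uses the standard output-size formula for a 3×3 conv or 3×3 average pool with padding 1, `floor((H + 2 - 3) / stride) + 1`; the helper names `out_size` and `bottle2neck_shape` are hypothetical.

```python
import math


def out_size(h, stride):
    """Spatial size after a 3x3 conv or 3x3 avg-pool with padding=1."""
    return (h + 2 * 1 - 3) // stride + 1


def bottle2neck_shape(planes, h, stride=1, stype="normal", base_width=26, scale=4):
    """Hypothetical helper: trace sizes through Bottle2neck.forward.

    Returns (channels fed to conv3, (output channels, output height)).
    Raises ValueError where torch.cat along dim 1 would fail.
    """
    width = int(math.floor(planes * (base_width / 64.0)))
    nums = 1 if scale == 1 else scale - 1
    # every 3x3 conv branch uses the block's stride
    branches = [(width, out_size(h, stride))] * nums
    if scale != 1 and stype == "stage":
        branches.append((width, out_size(h, stride)))  # last split goes through self.pool
    elif scale != 1:
        branches.append((width, h))                    # last split is concatenated unchanged
    sizes = {s for _, s in branches}
    if len(sizes) != 1:
        raise ValueError(f"cannot concatenate spatial sizes {sorted(sizes)}")
    concat_channels = sum(c for c, _ in branches)
    return concat_channels, (planes * 4, sizes.pop())


print(bottle2neck_shape(64, 56, stride=1, stype="stage"))   # (104, (256, 56))
print(bottle2neck_shape(128, 56, stride=2, stype="stage"))  # (208, (512, 28))
```

Calling `bottle2neck_shape(128, 56, stride=2, stype="normal")` raises `ValueError` (sizes 28 and 56), which is why every stride-2 block is built as `'stage'`.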

b. The Res2Net class

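This part closely mirrors the standard ResNet implementation: a 7×7 stem convolution, max pooling, four stages built by `_make_layer`, global average pooling, and a fully connected classifier. The differences are that the blocks are `Bottle2neck` and that the first block of each stage is built with `stype='stage'`.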
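Because the skeleton follows ResNet, the feature-map sizes can be traced with the usual output-size formula. A hedged sketch, assuming a 224×224 input (a common ImageNet size, not stated in the code) together with the stem and stage strides that appear in `Res2Net.__init__` below; the helper names are hypothetical.

```python
def conv_out(h, kernel, stride, padding):
    # Output-size formula for Conv2d / MaxPool2d (dilation 1, floor mode)
    return (h + 2 * padding - kernel) // stride + 1


def res2net_feature_sizes(h=224, expansion=4):
    """Hypothetical helper: (stage, channels, spatial size) through Res2Net.forward."""
    trace = []
    h = conv_out(h, 7, 2, 3)  # conv1: 7x7, stride 2, padding 3
    trace.append(("conv1", 64, h))
    h = conv_out(h, 3, 2, 1)  # maxpool: 3x3, stride 2, padding 1
    trace.append(("maxpool", 64, h))
    for name, planes, stride in [("layer1", 64, 1), ("layer2", 128, 2),
                                 ("layer3", 256, 2), ("layer4", 512, 2)]:
        # only the first ('stage') block of each layer changes the spatial size
        h = conv_out(h, 3, stride, 1)
        trace.append((name, planes * expansion, h))
    return trace


for row in res2net_feature_sizes():
    print(row)
```

The last stage produces 2048 = 512 × `expansion` channels, which matches the input size of `self.fc` after `AdaptiveAvgPool2d(1)`.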

```python
import torch.nn as nn


class Res2Net(nn.Module):

    def __init__(self, block, layers, baseWidth=26, scale=4, num_classes=1000):
        super(Res2Net, self).__init__()
        self.inplanes = 64
        self.baseWidth = baseWidth
        self.scale = scale
        # Stem: 7x7 stride-2 conv followed by 3x3 stride-2 max pooling (as in ResNet)
        self.conv1 = nn.Conv2d(3, 64, kernel_size=7, stride=2, padding=3,
                               bias=False)
        self.bn1 = nn.BatchNorm2d(64)
        self.relu = nn.ReLU(inplace=True)
        self.maxpool = nn.MaxPool2d(kernel_size=3, stride=2, padding=1)
        # Four stages; layer2-4 halve the spatial size in their first block
        self.layer1 = self._make_layer(block, 64, layers[0])
        self.layer2 = self._make_layer(block, 128, layers[1], stride=2)
        self.layer3 = self._make_layer(block, 256, layers[2], stride=2)
        self.layer4 = self._make_layer(block, 512, layers[3], stride=2)
        self.avgpool = nn.AdaptiveAvgPool2d(1)
        self.fc = nn.Linear(512 * block.expansion, num_classes)

        for m in self.modules():
            if isinstance(m, nn.Conv2d):
                nn.init.kaiming_normal_(m.weight, mode='fan_out', nonlinearity='relu')
            elif isinstance(m, nn.BatchNorm2d):
                nn.init.constant_(m.weight, 1)
                nn.init.constant_(m.bias, 0)

    def _make_layer(self, block, planes, blocks, stride=1):
        downsample = None
        # Project the shortcut when the spatial size or the channel count changes
        if stride != 1 or self.inplanes != planes * block.expansion:
            downsample = nn.Sequential(
                nn.Conv2d(self.inplanes, planes * block.expansion,
                          kernel_size=1, stride=stride, bias=False),
                nn.BatchNorm2d(planes * block.expansion),
            )

        layers = []
        # First block of each stage: stype='stage', carries the stride and the downsample branch
        layers.append(block(self.inplanes, planes, stride, downsample=downsample,
                            stype='stage', baseWidth=self.baseWidth, scale=self.scale))
        self.inplanes = planes * block.expansion
        # Remaining blocks: default stype='normal', stride 1, identity shortcut
        for _ in range(1, blocks):
            layers.append(block(self.inplanes, planes, baseWidth=self.baseWidth, scale=self.scale))

        return nn.Sequential(*layers)

    def forward(self, x):
        x = self.conv1(x)
        x = self.bn1(x)
        x = self.relu(x)
        x = self.maxpool(x)

        x = self.layer1(x)
        x = self.layer2(x)
        x = self.layer3(x)
        x = self.layer4(x)

        x = self.avgpool(x)
        x = x.view(x.size(0), -1)  # flatten (N, C, 1, 1) -> (N, C)
        x = self.fc(x)

        return x
```
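To see how `_make_layer` assigns `stype` and `downsample`, here is a bookkeeping sketch that replays its logic without building any modules. The layer counts `(3, 4, 6, 3)` are an assumption (the usual ResNet-50 / Res2Net-50 configuration), since the code that passes `layers` to `Res2Net` is not shown above; the helper name `make_layer_plan` is hypothetical.

```python
def make_layer_plan(layers=(3, 4, 6, 3), expansion=4):
    """Hypothetical helper: replay Res2Net._make_layer bookkeeping for all four layers.

    Each entry is (planes, inplanes, stride, stype, has_downsample).
    """
    inplanes = 64  # channels after the stem
    plan = []
    for planes, blocks, stride in zip((64, 128, 256, 512), layers, (1, 2, 2, 2)):
        for i in range(blocks):
            if i == 0:
                # first block: 'stage', carries the stride and possibly a projection shortcut
                has_downsample = stride != 1 or inplanes != planes * expansion
                plan.append((planes, inplanes, stride, "stage", has_downsample))
                inplanes = planes * expansion
            else:
                # remaining blocks: 'normal', stride 1, identity shortcut
                plan.append((planes, inplanes, 1, "normal", False))
    return plan


for row in make_layer_plan():
    print(row)
```

Note that layer1's first block also gets a `downsample` branch even though its stride is 1, because the channel count changes from 64 to 256.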