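- DeepSeek: AI Algorithms That Break with Tradition, and a Download-Ranking Analysis

smart_ljh

Industry search, artificial intelligence, AI

Contents: DeepSeek's AI algorithm breakthroughs; performance comparison of DeepSeek with OpenAI and other platforms; DeepSeek download-ranking analysis (as of 2025/1/28; among AI-related items DeepSeek was at one point even pushed onto search); future trends; summary. With artificial intelligence developing rapidly, the search-engine market is also seeing new change. DeepSeek, a search engine based on deep learning and big-data algorithms, has drawn wide attention at home and abroad for its distinctive strengths. The following introduces De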

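- Python Data Visualization: Making Sankey Diagrams with Pyecharts

Mr数据杨

Python data visualization, python, data visualization, pyecharts, data analysis

The Sankey diagram is a powerful data visualization tool, often used to show flows between nodes and how quantities are distributed across them. Its connecting links make it easy to see how the parts of a complex system connect and interact. Python's pyecharts library provides a Sankey class for building Sankey diagrams flexibly: it can show flow data and also allows extensive customization of node levels and link styles, making the diagram more expressive both as information and visually. Contents: Sankey: Sankey diagram; Demo; Summary. Sankey: Sankey diagram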

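- License Plate Recognition in Python

菜狗小测试

Python technical column, python, computer vision, opencv

I. Basic principles of license plate recognition. License plate recognition mainly consists of the following steps. Image acquisition: obtain an image containing the plate from a camera or other capture device. Image preprocessing: apply grayscale conversion, filtering, enhancement, and similar operations to improve image quality and clarity for later stages. Plate localization: find the position of the plate in the preprocessed image, which can be done with feature extraction and machine-learning algorithms, for example methods based on color or edge features. Character segmentation: separate the characters in the located plate region so that each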

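- ESP32 Memory Management Explained: From Basics to Advanced

又吹风_Bassy

ESP32, memory management, PSRAM, DRAM, FLASH

I have been learning the ESP32 recently; below are my notes on storage and memory. The ESP32 is a capable IoT chip used widely in embedded development. Managing its memory effectively is essential to application performance and system stability. This article systematically covers the ESP32's memory architecture, storage hardware, memory allocation mechanisms, and common memory problems with their solutions, to help newcomers get a full grasp of ESP32 memory management. I. Memory system overview 1.1 ESP32 memory architecture: the ESP32's memory architecture is complex and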

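- Real-Time Playback of a UDP Multicast Video Stream by Calling the FFmpeg Libraries from Qt

daqinzl

qt, ffmpeg, streaming media, qt, ffmpeg, udp, multicast stream

Based on the reference link below, with improvements, this plays a UDP multicast video stream in real time: https://blog.csdn.net/u012532263/article/details/102736700 The source code, on Windows (qt-opensource-windows-x86-5.12.9.exe), Ubuntu 20.04.6 (x64) (qt-opensource-linux-x64-5.12.12.run), and for arm64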

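- Solon Cloud Gateway Development: Getting Familiar with the Completable Reactive Interface

组合缺一

Solon Java Framework, gateway, solon, java, reactor

Solon-Rx (about 2 KB) is a minimal take on RxJava (about 2 MB), built as a wrapper over reactive-streams. It currently has a single interface, Completable, meaning a publisher that can complete. Use cases and interface (interface: description). Completable: used as a return type. Completable::complete(): builds a completed publisher. Completable::error(cause): builds an error publisher. Completable::create((emit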

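- Solon Cloud Gateway Development: Getting Familiar with ExContext and Related Interfaces

组合缺一

Solon Java Framework, gateway, solon, java, backend

The main job of a distributed gateway is routing and data exchange. When defining one, the following are used often (interface: description). RouteFilterFactory: route filter factory. RoutePredicateFactory: route predicate factory. CloudGatewayFilter: distributed gateway filter. ExFilter: exchange filter. ExPredicate: exchange predicate. ExContext: exchange context. Where ExFilter is used: CloudGatewayFilter extends ExFilte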

- [License Plate Recognition] CNN-Based License Plate Recognition [with GUI, Matlab source code, issue 2638]

Matlab仿真科研站

matlab

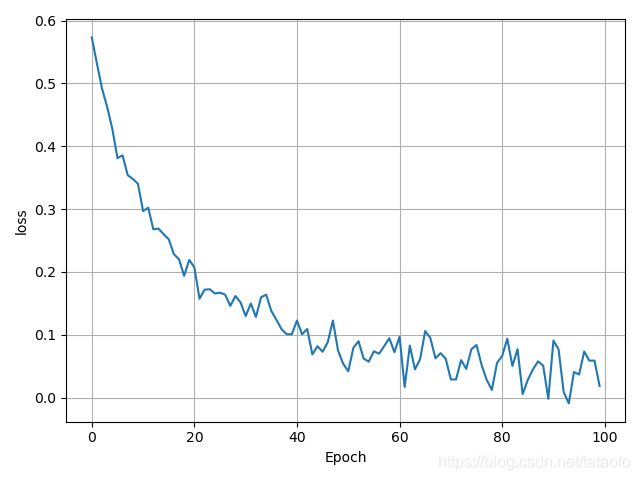

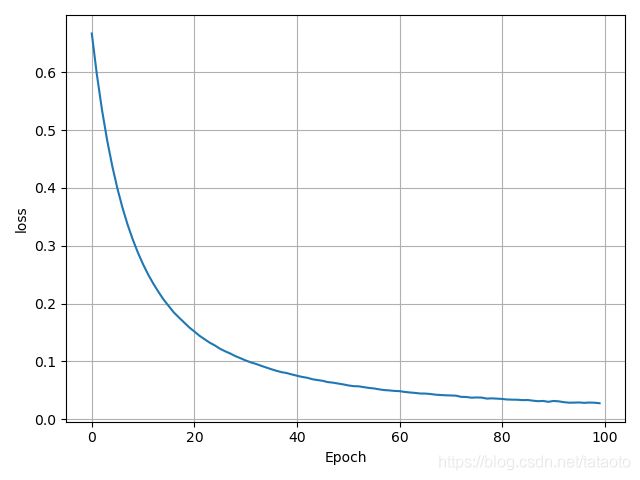

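Welcome to the Matlab仿真科研站 blog. ✅ About the blogger: a Matlab simulation developer who loves research and works on character and skill in equal measure; message privately for Matlab project collaboration. Home page: Matlab仿真科研站 blog. How to get the code: scan the QQ QR code at the bottom of the article. ⛳️ Motto: one who travels a hundred li is only halfway at ninety; the road ahead is long, and I shall search high and low. ⛄ For more Matlab image processing (仿真科研站 edition) simulation content, click Matlab image processing (仿真科研站 edition). ⛄ I. Introduction to CNN license plate recognition 1 Plate localization 1.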

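- An In-Depth Look at the ncnn::Net Class: The Core Component for Efficient Neural Network Deployment

又吹风_Bassy

Artificial intelligence, deep learning, ncnn, ncnnNet, ncnn usage examples

I have been learning the ncnn inference framework recently; below are my notes on using ncnn::Net. Efficient neural network inference on mobile and embedded devices requires a framework that is lightweight, fast, and flexibly extensible. ncnn, the high-performance inference framework open-sourced by Tencent, does well on all of these, and among its core components the ncnn::Net class plays a central role. This article describes the structure, features, and usage of ncnn::Net in detail, to help developers understand and use it better. Contents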

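- A Simple Introduction to the Git Version-Control Workflow in Real-World Scenarios

git, github

Git version control - HowieCong. 1. Project initialization and repository creation: the `git init` command initializes a local git repository; create a remote repository on GitHub and link the local repository to it with `git remote add origin [remote repository URL]`. 2. Branch management strategy (using a common branch-based development model): master or main is the main branch and holds stable, release-ready code; when developing a new feature, create a feature branch from master, for example feature/[feature name], and on the feature branch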

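- Implementing DBSCAN in Python

怎么就重名了

Algorithms, python, programming languages

Implementing DBSCAN in Python. Principle: DBSCAN (Density-Based Spatial Clustering of Applications with Noise) is a representative density-based clustering algorithm. It defines a cluster as the maximal set of density-connected points, can partition regions of sufficiently high density into clusters, and can find clusters of arbitrary shape in a noisy spatial database. Some definitions used in DBSCAN. Ε-neighborhood: the region within radius Ε of a given object is called that object's Ε-neighborhood. Core object: if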
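The Ε-neighborhood definition above can be sketched in a few lines of Python. This is a minimal illustration and not the article's own code: the function names `eps_neighborhood` and `is_core_object`, the 2-D sample points, and the `min_pts` threshold (the usual MinPts parameter of DBSCAN, which the truncated excerpt does not reach) are all assumptions made for the example.

```python
import math

def eps_neighborhood(points, index, eps):
    """Return the indices of all points within distance eps of points[index].

    The point itself is included, since its distance to itself is 0.
    """
    px, py = points[index]
    return [j for j, (qx, qy) in enumerate(points)
            if math.hypot(px - qx, py - qy) <= eps]

def is_core_object(points, index, eps, min_pts):
    """Assumed standard rule: a core object has at least min_pts points
    (itself included) inside its eps-neighborhood."""
    return len(eps_neighborhood(points, index, eps)) >= min_pts

# Hypothetical sample data: three points close together and one far away.
points = [(0.0, 0.0), (0.1, 0.0), (0.0, 0.1), (5.0, 5.0)]

print(eps_neighborhood(points, 0, 0.5))
print(is_core_object(points, 0, 0.5, 3))
print(is_core_object(points, 3, 0.5, 3))
```

With these sample values, the first point has three points in its neighborhood and qualifies as a core object, while the distant point has only itself.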

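- Animal Identification System Implemented in Python

L C H

python, artificial intelligence, algorithms, matrices, linear algebra

Animal identification system. Since tomorrow's lab time is rather tight, I finished the experiment in advance; the code is presented below (with some references consulted): # check whether there are duplicate elements def judge_repeat(value, list=[]): for i in range(0, len(list)): if (list[i] == value): return 1 else: if (i != len(list)-1)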

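- 02 Arrays + Strings + Sliding Window + Prefix Sums and Difference Arrays + Two Pointers (D5_Two Pointers)

Java丨成神之路

06 Data structures and algorithms, java

Contents: I. Basic introduction; II. Algorithmic idea; III. Algorithm models: 1. Colliding pointers, 2. Fast and slow pointers, 3. Sliding window. I. Basic introduction: two pointers is a widely used, fundamental technique; strictly speaking it is less an algorithm than a way of thinking. The "pointers" here are not only the address pointers familiar from C/C++ but also indices and cursors. II. Algorithmic idea: two pointers means traversing an object with two or more pointers and operating on it accordingly. It is mostly used on arrays, exploiting their contiguity. Two pointers is often used to reduce an algorithm's time complexity, because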
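As a rough illustration of the colliding-pointers model listed above, the sketch below looks for two entries of a sorted array that add up to a target, moving one index in from each end. The function name `pair_with_sum` and the sample data are hypothetical and not taken from the article.

```python
def pair_with_sum(sorted_nums, target):
    """Return indices (left, right) with sorted_nums[left] + sorted_nums[right] == target,
    or None if no such pair exists. The input must be sorted in ascending order."""
    left, right = 0, len(sorted_nums) - 1
    while left < right:
        current = sorted_nums[left] + sorted_nums[right]
        if current == target:
            return left, right
        if current < target:
            left += 1    # sum too small: move the left pointer toward larger values
        else:
            right -= 1   # sum too large: move the right pointer toward smaller values
    return None

print(pair_with_sum([1, 2, 4, 7, 11, 15], 15))
print(pair_with_sum([1, 2, 3], 10))
```

Each step discards one candidate index, so the scan is linear in the array length, compared with the quadratic cost of checking every pair.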

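- Image (Edge) Sharpening in Python: Detailed Methods for Gradient Sharpening and the Roberts, Laplace, and Sobel Operators

闲人编程

python, python, computer vision, artificial intelligence, Sobel, Laplace, Roberts, sharpening

Contents: Image (Edge) Sharpening in Python: Detailed Methods for Gradient Sharpening and the Roberts, Laplace, and Sobel Operators. Introduction. I. Basic principles of image sharpening: 1.1 What is image sharpening? 1.2 Basic concepts of edge detection. II. Common image sharpening algorithms: 2.1 Gradient sharpening, 2.1.1 Implementation steps; 2.2 Roberts operator, 2.2.1 Implementation steps; 2.3 Laplace operator, 2.3.1 Implementation steps; 2.4 Sobel operator, 2.4.1 Implementation steps. III. Implementing image sharpening in Python: 3.1 Importing the required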

- CSS: Marquee

sunly_

css, css, frontend

.swiper-container { width: 100%; overflow: hidden; padding: 0.4rem; position: relative; } .swiper-wrapper { display: flex; animation: scroll 30s linear infinite; width: fit-content; // make sure the container width fits its content } .item { flex-shrink: 0; width: 2

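- Large-Model Applications: Exploring 50 Application Scenarios for Large AI Models, Letting Technology Change Life

AGI大模型资料分享员

Artificial intelligence, technology, life, agi, language models, natural language processing

As artificial intelligence advances rapidly, large AI models are being applied ever more widely across fields. Robin Li (李彦宏), founder, chairman, and CEO of Baidu, said at the 2024 World Artificial Intelligence Conference that the direction of AI development has fundamentally changed, from the discriminative AI of the past to the generative AI of the future. He also urged: "Don't compete on models; compete on applications!" This article surveys 50 application scenarios for large AI models, ordered from most to least frequently used, to show how AI is profoundly changing how we work and live. 1. Natural language processing (N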

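- Qt-Ribbon-Widget Project Tutorial

柯戈喻James

Qt-Ribbon-Widget project tutorial. Qt-Ribbon-Widget: A Ribbon widget for Qt. Project address: https://gitcode.com/gh_mirrors/qt/Qt-Ribbon-Widget 1. Directory structure and overview: the directory structure of the Qt-Ribbon-Widget project is as follows: Qt-Ribbon-Widget/ ├── src/ │ ├── main.cpp │ ├── mainwindow.cp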

- Qt Ribbon Usage Example

依梦_728297725

QT, c++, ribbon, SARibbon

An example of creating a simple ribbon interface with SARibbon. The code is as follows. 1. Header file: #pragma once #include #include "SARibbonMainWindow.h" class QTextEdit; class SAProjectDemo1 : public SARibbonMainWindow { Q_OBJECT public: SAProjectDemo1(QWidget* parent = Q_NULLPT

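- 2024 LLM Year in Review: Prices Fall Across the Board, Large Models Run Locally, Multimodal Capabilities Explode…

大模型.

Artificial intelligence, language models, natural language processing, knowledge graphs, architecture, large models

At the start of 2025, Simon Willison, one of the creators of Django, looked back on the major AI developments of 2024, a roundup of large-model milestones. Come and see which breakthroughs changed how we think about AI. The original is long, so here are a few key points. 1. The GPT-4 barrier was broken: GPT-4 used to be seen as an unreachable ceiling of intelligence, but the Chatbot Arena leaderboard now lists nearly 70 models that surpass the March 2023 version of GPT-4. Google's Gemini 1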

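- From Zero to Hand-Building an Agent: AI Agents from Beginner to Proficient

大模型.

Artificial intelligence, chatgpt, big data, deep learning, agents, algorithms, large models

Today's topic: what an Agent is, and how it differs from an LLM. One day your girlfriend asks you (assuming we have one): "Babe, what is an Agent? How is an Agent different from an LLM? What exactly is this Agent everyone has been talking about lately, the one so many articles cover, and what is the Agents white paper Google released? How does it help us, and how does it affect us?" The editor has put together a series that starts from the very basics to clear up the confusion. The tutorials in this series will help you understand the basic con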

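- Why Should We Use Large Language Models to Iterate on Data Security Capabilities?

大模型.

Language models, artificial intelligence, natural language processing, architecture, deep learning, big data, large models

In today's era of rapid technological progress, large language models are without doubt one of the hottest topics. From OpenAI's GPT series to Google's BERT, these models with their huge parameter counts are like intelligent giants reshaping natural language processing (NLP). You may wonder why large language models attract so much attention. The answer starts with a core NLP task: text classification. Text classification is like attaching a suitable "label" to all kinds of text, whether deciding if an email is legitimate or spam, or analyzing whether a social-media comment is positive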

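- A Roundup of 50 Large-Model AI Companies and Their Representative Products

大模型玩家

Artificial intelligence, language models, ai, natural language processing, deep learning, large models

OpenAI: - ChatGPT: a highly influential chatbot from OpenAI that can hold natural, fluent conversations, write text, answer questions, and more; its continual iteration has drawn worldwide attention to large models. - GPT-4o: OpenAI's new-generation AI model, with further improved language understanding and generation; it can perceive the user's emotions and respond in an emotive "voice". - Sora: a text-to-video model that generates complex videos of some length from text instructions, with multiple shots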

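- From Model to Practice: Key Elements for Getting AI Projects into Production

IT猫仔

Technology, artificial intelligence, language models, natural language processing, search engines, servers, machine learning

Introduction: in recent years AI has moved from the lab into real applications, and its potential has been given an initial validation across industries. Getting AI into production does not happen overnight, however. Many companies that try to deploy AI projects find themselves stuck with a model that looks good but an application that is hard to build. Insufficient data preparation, a mismatch between algorithm and scenario, or the lack of a mechanism for continuous optimization can each stall a project or sink it at the last step. A note up front: there is an exclusive large-model AGI-CSDN resource pack at the end of the article! For a company, the value of AI lies not only in a model's high accuracy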

- webview_flutter 4.10.0 Technical Documentation

LuiChun

flutter

https://github.com/flutter/packages/tree/main/packages/webview_flutter/webview_flutter Document 1: webview_flutter_4.10.0_docs.txt webview_flutter4.1

- Qt + MySQL + Python: Implementing Create, Read, Update, and Delete on a Database Table

laocooon523857886

QT, Python, databases, qt, mysql

ui_form.py # -*- coding: utf-8 -*- ################################################################################ ## Form generated from reading UI file 'form.ui' ## ## Created by: Qt User Interface Compiler version 6.8.1 #

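- Hands-On Large-Model AI Project: The Secret of the Smart Campus, an In-Depth Analysis of AI Digital Campus Architecture and Solutions

大模型.

Artificial intelligence, architecture, programming languages, deep learning, machine learning, product management

In this article we walk through a diagram of an AI digital campus architecture, analyze the solutions behind each functional module and layer, and discuss how AI can be deployed on campus to make teaching, administration, and decision-making more intelligent. The article goes layer by layer, from user interaction down to the technical infrastructure, and gives a detailed solution for each module, to show how the architecture uses AI to serve teachers, students, and administrators. I. User layer: multi-role intelligent interaction. The user layer is the surface of the AI digital campus; it directly serves three core types of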

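- MVVM Architecture on Android: Key Concepts, Pros and Cons, and Practical Examples

洪信智能

Android development, android, architecture

Abstract: this article discusses the core concepts, main advantages, and potential drawbacks of the Model-View-ViewModel (MVVM) architectural pattern widely used on Android, and illustrates its use in real projects with example code. As an architecture that promotes separation of concerns and improves software quality, MVVM plays a vital role in Android application development. I. Core concepts of Android MVVM 1.1 Architecture components 1.1.1 Model layer: holds business logic and data entities, is independent of the UI, and interacts with the ViewModel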

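- [Text Analysis of Listed Companies] Extracting Structured Information from Unstructured Text with Python Regular Expressions: The Example of Extracting Alma Mater Information from Listed-Company Executives' Résumés

Ryo_Yuki

#Text analysis of listed companies, Python, python, regular expressions

Résumé text for listed-company executives can be obtained from CSMAR. Although it is unstructured, some regularities show through: the alma mater often appears after phrases such as 毕业于 ("graduated from") or 就读于 ("studied at"), and the major often appears after the university name. These are not absolute, and other patterns occur as well. The code below was written against my 300-odd sample records (leave your email in the comments if you want them for practice) by repeatedly revising the regular-expression rules. It saves roughly 85% of the manual work but cannot guarantee complete accuracy. For other unstructured yet regular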
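A minimal sketch of the kind of rule described above, assuming the school name directly follows 毕业于 or 就读于 and ends in 大学 (university) or 学院 (college). The pattern, the function name `extract_schools`, and the two sample résumé strings are made up for illustration; they are not the author's rules or CSMAR data, and real résumés would need many more patterns.

```python
import re

# Hypothetical rule: after a trigger phrase, capture the shortest run of Chinese
# characters that ends in 大学 (university) or 学院 (college).
SCHOOL_PATTERN = re.compile(r"(?:毕业于|就读于)([\u4e00-\u9fa5]+?(?:大学|学院))")

def extract_schools(resume_text):
    """Return every school name matched by SCHOOL_PATTERN, in order of appearance."""
    return SCHOOL_PATTERN.findall(resume_text)

# Made-up sample text, not taken from CSMAR.
samples = [
    "张三,男,1970年出生,毕业于清华大学经济管理学院,硕士。",
    "李四先生曾就读于北京大学,现任公司董事。",
]

for text in samples:
    print(extract_schools(text))
```

The lazy quantifier stops at the first 大学 or 学院, so the first sample yields the university rather than the longer school-within-university name. Whether that is the right granularity depends on the analysis; it is a design choice of this sketch.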

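- Global and Local Variables in Python Explained_How Python Local and Global Variables Differ

weixin_39998795

1. Local variables: name = "YangLi" def change_name(name): print("before change:", name) name = "你好" print("after change", name) change_name(name) print("在外面看看name改了么?", name) Output: before change: YangLi after change 你好 在外面看看name改了么? YangLi (here 你好 means "hello" and 在外面看看name改了么? means "has name changed outside the function?") 2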
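The behavior shown in the excerpt can be reproduced with the short sketch below. It restructures the excerpt's example so the values are returned rather than printed inside the function; the English string "hello" and the variable names `before` and `after` are substitutions made for this illustration.

```python
name = "YangLi"  # module-level (global) variable

def change_name(name):
    # The parameter `name` is a local variable that shadows the global one.
    before = name
    name = "hello"  # rebinds only the local name; the global is untouched
    return before, name

before, after = change_name(name)
print("before change:", before)
print("after change:", after)
print("global name afterwards:", name)
```

Rebinding a parameter inside a function never affects the caller's variable, which is why the module-level `name` still holds its original value after the call.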

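- Using Global Variables in Python

weixin_33737774

python

In Python, a variable defined at module level can be regarded as a global variable, but there is one thing to watch out for when assigning to a global variable. test.py -------------------------------------------------- import sys username = "muzizongheng" password = "xxxx" def Login(u, p): username = u password = p print("usernam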
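The excerpt is cut off before it states the caveat, so the sketch below only illustrates the standard Python rule that an assignment inside a function creates a local variable unless the name is declared with `global`. The function names `login_without_global` and `login_with_global` and the value "alice" are hypothetical; only `username` and its initial value come from the excerpt.

```python
username = "muzizongheng"  # module-level variable, as in the excerpt

def login_without_global(u):
    # Plain assignment creates a new local `username`; the module-level one is unchanged.
    username = u
    return username

def login_with_global(u):
    # The `global` declaration makes the assignment rebind the module-level variable.
    global username
    username = u

login_without_global("alice")
unchanged = username   # still the original value

login_with_global("alice")
changed = username     # now updated

print(unchanged, changed)
```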

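- Multithreaded Programming: The join() Method

周凡杨

java, JOIN, multithreading, programming, threads

In real life, some jobs have to be completed by team members one after another, which raises a question of ordering. Suppose there are three workers, T1, T2, and T3: how do we ensure that T2 runs after T1 has finished, and T3 after T2 has finished? Analysis: the problem has three entities, T1, T2, and T3, and since this is multithreaded programming each should be designed as a thread class. The key is how to make the threads finish in order.

The Java implementation is as follows: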

public class T1 implements Runnabl

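- Using switch in Java

bingyingao

java, enum, break, continue

In older versions of Java, switch case labels support only int-compatible types and enum (String has been supported since Java 7).

When using an enum, you cannot write the case label in the following form.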

switch (timeType) {

case ProdtransTimeTypeEnum.DAILY:

break;

default:

br

- Hive: HAVING COUNT Cannot Deduplicate

daizj

hive, deduplication, having count, counting

When using HAVING COUNT(), Hive does not support distinct counting.

hive (default)> select imei from t_test_phonenum where ds=20150701 group by imei having count(distinct phone_num)>1 limit 10;

FAILED: SemanticExcep

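- WebSphere's Caching of JSPs

周凡杨

WAS, JSP, cache

For a project running in production, after a JSP is updated on WebSphere the change sometimes has no effect. In particular, after changing the styling of a JSP, the change does not show on the page. This is mainly caused by caching in WebSphere, so the JSP cache in WebSphere has to be cleared. To clear it, first locate the root directory of the WAS installation.

Currently the service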

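- Design Patterns Summary

朱辉辉33

java, design patterns

1. Factory patterns

1.1 Factory method pattern (a factory class manages the construction methods)

1.1.1 Plain factory pattern (the factory class has only one method)

1.1.2 Multi-method factory pattern (the factory class has several methods)

1.1.3 Static factory pattern (the factory class's methods are made static)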


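- Example: Requirements Research Report for Supplier Management Reporting

老A不折腾

finereport, reporting systems, reporting software, IT selection

Introduction

As the group's production scale expands, supporting global supply chain management means that managing suppliers and monitoring the procurement process is no longer limited to simple delivery and price management. At present the steps of procurement and supplier management are carried out in different systems, and each data source exists on its own, so no unified data support is available. To analyze the data in support of procurement decisions, building a reporting system has become a necessity.

Business objectives

1. Provide data analysis and support for procurement decisions through reports

2. Evaluate and manage suppliers comprehensively, and reasonably manage and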

- mysql

林鹤霄

Reposted from: http://blog.sina.com.cn/s/blog_4f925fc30100rx5l.html

mysql -uroot -p

ERROR 1045 (28000): Access denied for user 'root'@'localhost' (using password: YES)

[root@centos var]# service mysql

- Tools for Viewing Multithreaded Stacks on Linux (pstree, ps, pstack)

aigo

linux

Original: http://blog.csdn.net/yfkiss/article/details/6729364

1. pstree

pstree displays processes as a tree. $ pstree -p work | grep ad sshd(22669)---bash(22670)---ad_preprocess(4551)-+-{ad_preprocess}(4552)

- HTML input and textarea Value-Change Events

alxw4616

JavaScript

// Value-change event for text inputs (input) and text areas (textarea)

// onpropertychange(IE) oninput(w3c)

$('input,textarea').on('propertychange input', function(event) {

console.log($(this).val())

});

- Basic Usage of the String Class

百合不是茶

String

String usage:

// Create a string from a byte array

byte[] by = { 'a', 'b', 'c', 'd' };

String newByteString = new String(by);

1. length(): get the length of the string


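- JDK 1.5 Semaphore Example

bijian1013

java, thread, java multithreading, Semaphore

The Semaphore class

A counting semaphore. Conceptually, a semaphore maintains a set of permits. Each acquire() blocks if necessary until a permit is available, and then takes it. Each release() adds a permit, potentially releasing a blocked acquirer. No actual permit objects are used, however; the Semaphore just keeps a count of the number of available permits and acts accordingly.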

S

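- Using GZip to Reduce Transfer Volume

bijian1013

java, GZip

Enabling GZip compression requires an open-source Filter: PJL Compressing Filter. Starting with version 1.5.0 the project is built against JDK 5.0, so in a JDK 1.4 environment only version 1.4.6 can be used.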

PJL Compressi

- [Java Generics, Part 3] Java Generics in Detail: Generic Type Wildcards

bit1129

java

Define a simple generic class as follows:

package com.tom.lang.generics;

public class Generics<T> {

private T value;

public Generics(T value) {

this.value = value;

}

}

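- [Hadoop, Part 12] Common HDFS Commands

bit1129

hadoop

1. Edit log file viewer

hdfs oev -i edits_0000000000000000081-0000000000000000089 -o edits.xml

cat edits.xml

The edit log file is dumped to edits.xml in XML format, where each RECORD is the transaction log entry for one operation

2. fsimage: viewing block information and more in HDFS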


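- How to Tell break from last in nginx rewrite

ronin47

When configuring rewrite in nginx, you often find that last does not work in some places while break does. The reason is a difference in how the root directory is interpreted; according to my tests it goes roughly as follows.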

location /

{

proxy_pass http://test;

- java-21. ZTE interview question: given two integers n and m, pick any numbers from the sequence 1, 2, 3 ... n so that they sum to m

bylijinnan

java

import java.util.ArrayList;

import java.util.List;

import java.util.Stack;

public class CombinationToSum {

/*

Problem 21

2010 ZTE interview question

Solve programmatically:

Given two integers n and m, pick any numbers from the sequence 1, 2, 3 ... n

so that their sum equ

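- Changing the SVN Account and Password in Eclipse

开窍的石头

eclipse, SVN, svn account and password change

Problem description:

Subclipse, the SVN plugin for Eclipse, is well made and offers rich, powerful functionality for svn operations. So far, however, the plugin has only a weak notion of the svn user: you cannot easily switch users, and once a user's account and password have been saved they can no longer be changed.

Approach:

Delete the account and password information recorded by Subclipse and enter it again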

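- [E-commerce] Combining Traditional Commerce with the Internet

comsci

E-commerce

Take a traditional brand-name product that used to sell only in certain regions and social strata. Once it goes online, its user base suddenly expands many times over, but the product's latent weaknesses are magnified many times over as well. This effect, in which sales profit and business risk grow in step, will appear frequently in the next few years...

How to avoid, as sales volume and profit margins rise, the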

- Parsing properties Files in Java with Properties, with a Configurable File Path

cuityang

java, properties

#mq

xdr.mq.url=tcp://192.168.100.15:61618;

import java.io.IOException;

import java.util.Properties;

public class Test {

String conf = "log4j.properties";

private static final

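- Core Java Questions Roundup

darrenzhu

java, fundamentals, core, difficult points

Note: the reference material here comes mostly from Effective Java and the JDK source

1) ConcurrentModificationException

When you iterate over a list with for-each and structurally modify the list (add or remove elements) in the loop body, you get this exception. The solutions are:

1) Use a listIterator, which supports modifying elements during iteration,

2) Without a listIterator, new a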

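- Learn Markdown Syntax in 1 Minute

dcj3sjt126com

markdown

Concise Markdown syntax: basic symbols

The three symbols *, -, and + all have the same effect; they are called Markdown symbols

A blank line starts a new paragraph

` marks inline code and a tab marks a code block; they correspond to the HTML code and pre tags respectively

Line breaks

A single paragraph ( <p>): use one blank line

Two consecutive spaces become a <br>

Three consecutive symbols, followed by a blank line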

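- Using Gson, Part 2 (GsonBuilder)

eksliang

json, gson, GsonBuilder

When reposting, please cite the source: http://eksliang.iteye.com/blog/2175473

I. Overview

GsonBuilder is used to customize the conversion format between Java and JSON

II. Basic usage

Test entity class:

Tip: by default the @Expose annotation has no effect unless, when creating the Gson with GsonBuilder, you call GsonBuilder.excludeField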

- Error: ClassNotFoundException: Didn't find class "...Activity" on path: DexPathList

gundumw100

android

I had a project that ran fine. When I tried to move it to another PC, it reported:

java.lang.RuntimeException: Unable to instantiate activity ComponentInfo{com.mobovip.bgr/com.mobovip.bgr.MainActivity}: java.lang.ClassNotFoundException: Didn't f

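- JavaWeb: JSP Directives

ihuning

javaweb

Key points

Introduction to JSP directives

The page directive

The include directive

Introduction to JSP directives

JSP directives are designed for the JSP engine. They do not directly produce any visible output; they only tell the engine how to handle the rest of the JSP page.

The basic syntax of a JSP directive:

<%@ directive attributeName="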

- Compiling FFmpeg on a Mac to Run on iOS

啸笑天

ffmpeg

1. Download the file from https://github.com/libav/gas-preprocessor, copy gas-preprocessor.pl to /usr/local/bin/, and change the file permissions: chmod 777 /usr/local/bin/gas-preprocessor.pl

2. Install yasm-1.2.0

curl http://www.tortall.net/projects/yasm

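- String Concatenation in SQL: MySQL and Oracle

macroli

oracle, sql, mysql, SQL Server

Sometimes we need to join together data obtained from different columns. Every database provides a way to do this:

MySQL: CONCAT()

Oracle: CONCAT(), ||

SQL Server: +

The syntax of CONCAT() is as follows:

In MySQL, CONCAT(string1, string2, string3, ...): joins string1, string2, string3, and so on together.

Note that Oracle's CON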

- Git fatal: unab SSL certificate problem: unable to get local issuer certificate

qiaolevip

Never stop learning, a little progress every day, git, a broad view of everything

// The error is as follows:

$ git pull origin master

fatal: unable to access 'https://git.xxx.com/': SSL certificate problem: unable to get local issuer certificate

// Cause:

The latest version of git uses SSL verification by default, but the git we are using has not been set

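- Setting Up Wi-Fi from the Windows Command Line

surfingll

windows, wifi, laptop wifi

Aren't you fed up yet with the endless ads in Wi-Fi hotspot tools, and still waiting patiently for them to start up?

Here is how to set up a laptop's Wi-Fi from the command line:

1. Commands to enable Wi-Fi

netsh wlan set hostednetwork mode=allow ssid=surf8 key=bb123456

netsh wlan start hostednetwork

pause

Here pause waits for input and can be removed

2.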

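- Installing sysv-rc-conf on Linux (Ubuntu)

wmlJava

linux, ubuntu, sysv-rc-conf

Install: sudo apt-get install sysv-rc-conf Use: sudo sysv-rc-conf

The interface is very simple: you can click with the mouse or navigate with the arrow keys, select with the space bar, press Ctrl+N for the next page and Ctrl+P for the previous page, and press Q to quit.

Background

sysv-rc-conf is a powerful service management program; the popular view is that sysv-rc-conf, compared with chkconf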

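- After Switching SVN Environments, the Redeployed Application Gained a javaee Label Prefix

zengshaotao

javaee

I changed development environments, moving from Hangzhou to Shanghai. The svn address naturally had to be switched. Before switching, the .svn metadata left by the original svn must be deleted, either manually or by choosing to delete .svn when prompted while discarding the original svn location.

Then create the directory structure according to the new svn address and conventions, and upload the original plain code to the new environment. After checking it out again, every modification shows which files have changed, which is especially useful for incremental-release conventions.

Check out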