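Mu Li's Dive into Deep Learning V2: A Hands-On Kaggle Competition, Image Classification (CIFAR-10), with Code

I. Hands-On Kaggle Competition: Image Classification (CIFAR-10)

1. Dataset information

The competition dataset is divided into a training set and a test set, containing 50,000 and 300,000 images respectively. Only 10,000 of the test images are used for evaluation; the remaining 290,000 are not evaluated and are included only to make it impractical to label the test set by hand and submit those labels. All images in both sets are PNG files, 32 pixels high and 32 pixels wide, with three color channels (RGB). The images cover 10 classes: airplane, automobile, bird, cat, deer, dog, frog, horse, ship, and truck.

Competition page: https://www.kaggle.com/c/cifar-10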

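2. Full dataset structure

After unzipping the downloaded file in …/data and extracting train.7z and test.7z inside it, the layout is as follows. The train and test folders contain the training and test images, trainLabels.csv holds the labels of the training images, and sampleSubmission.csv is a sample submission file: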

…/data/cifar-10/train/[1-50000].png

…/data/cifar-10/test/[1-300000].png

…/data/cifar-10/trainLabels.csv

…/data/cifar-10/sampleSubmission.csv

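To make it easy to get started, a small sample of the dataset is provided, containing the first 1000 training images and 5 random test images. To use the full Kaggle dataset, set the demo variable below to False and place the dataset downloaded from Kaggle in the specified folder.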

import collections
import math
import os.path
import shutil
import d2l.torch
import torch
import torchvision.transforms
import torch.utils.data
from torch import nn
import pandas as pd

d2l.torch.DATA_HUB['cifar10_tiny'] = (d2l.torch.DATA_URL+'kaggle_cifar10_tiny.zip','2068874e4b9a9f0fb07ebe0ad2b29754449ccacd')
demo = True
if demo:
    data_dir = d2l.torch.download_extract('cifar10_tiny')
else:
    data_dir = '../data/cifar-10'

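3. Dataset preprocessing

- First, define the function read_csv_data() to read the labels from the CSV file. It returns a dictionary that maps each file name without its extension (the key) to the label of that image (the value).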

def read_csv_data(fname):
    with open(fname,'r') as f:
        lines = f.readlines()[1:]
    # Each remaining line holds an image id and its label; the first line (the column header) was dropped above
    tokens = [line.rstrip().split(',') for line in lines]
    return dict(((name,label) for name,label in tokens))

labels = read_csv_data(os.path.join(data_dir,'trainLabels.csv'))
print('Number of examples:',len(labels))
print('Number of classes:',len(set(labels.values())))
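For comparison, the same id-to-label mapping can be built with Python's standard csv module. This is only a minimal sketch and not the function used in this post: read_labels and the two-row sample file are hypothetical, and the `id,label` header is an assumption about the layout of trainLabels.csv.

```python
import csv
import os
import tempfile

def read_labels(fname):
    """Map each image id (file name without extension) to its label."""
    with open(fname, newline='') as f:
        reader = csv.reader(f)
        next(reader)  # skip the header row
        return {name: label for name, label in reader}

# Hypothetical two-row file in the assumed `id,label` layout
with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, 'trainLabels.csv')
    with open(path, 'w', newline='') as f:
        f.write('id,label\n1,frog\n2,truck\n')
    sample_labels = read_labels(path)

print(sample_labels)  # {'1': 'frog', '2': 'truck'}
```

The keys are strings, which is why the split code looks labels up with the file name stem rather than with an integer.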

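- Next, define the function split_copy_train_valid to split a validation set off from the original training set. The argument split_to_valid_ratio is the ratio of the number of validation examples to the number of examples in the original training set. Let $n$ be the number of images in the class with the fewest examples and $r$ the ratio; then $\max(\lfloor nr \rfloor, 1)$ images from each class are split off as the validation set. For example, with split_to_valid_ratio=0.1 on the full dataset, the smallest class in the original training set has 5000 images, so 500 images per class go to validation: the path train_valid_test/train will contain 45,000 training images, and the remaining 5,000 images form the validation set under train_valid_test/valid. After the dataset is organized, images of the same class are placed in the same folder.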

def copy_file(fname,target_dir):
    # Create the target folder; if it already exists, nothing happens
    os.makedirs(name=target_dir,exist_ok=True)
    # Copy the source image into the target folder
    shutil.copy(fname,target_dir)

# Split part of the training set off as a validation set and copy the images into the corresponding folders
def split_copy_train_valid(data_dir,labels,split_to_valid_ratio):
    # labels.values() holds the label of every image. collections.Counter() counts the images per class,
    # most_common() orders the classes from most to least frequent, so the last entry is the smallest class
    split_num = collections.Counter(labels.values()).most_common()[-1][1]
    # Number of images to take from each class for the validation set
    num_valid_per_label = max(1,math.floor(split_num*split_to_valid_ratio))
    valid_label_count = {}
    for train_file in os.listdir(os.path.join(data_dir,'train')):
        # Label of the current image
        label = labels[train_file.split('.')[0]]
        train_file_path = os.path.join(data_dir,'train',train_file)
        absolute_path = os.path.join(data_dir,'train_valid_test')
        # Every training image is copied to the 'train_valid' folder
        copy_file(train_file_path,os.path.join(absolute_path,'train_valid',label))
        if label not in valid_label_count or valid_label_count[label]<num_valid_per_label:
            # Copy the image to the 'valid' folder until the class quota is reached
            copy_file(train_file_path,os.path.join(absolute_path,'valid',label))
            valid_label_count[label] = valid_label_count.get(label,0)+1
        else:
            # Copy the image to the 'train' folder
            copy_file(train_file_path,os.path.join(absolute_path,'train',label))
    return num_valid_per_label

# Copy the test images into the target folder
def copy_test(data_dir):
    for test_file in os.listdir(os.path.join(data_dir,'test')):
        # All test images go into 'test/unknown'
        copy_file(os.path.join(data_dir,'test',test_file),os.path.join(data_dir,'train_valid_test','test','unknown'))

def copy_cifar10_data(data_dir,split_to_valid_ratio):
    labels = read_csv_data(fname=os.path.join(data_dir,'trainLabels.csv'))
    split_copy_train_valid(data_dir,labels,split_to_valid_ratio)
    copy_test(data_dir)

batch_size = 32 if demo else 128
# 10% of the training examples are held out as a validation set for tuning hyperparameters
split_to_valid_ratio = 0.1
copy_cifar10_data(data_dir,split_to_valid_ratio)
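The split sizes quoted above can be checked without touching any files. The sketch below is only an illustration: valid_count_per_class is a hypothetical helper that repeats the max(floor(n * r), 1) rule, and full_labels is a made-up, perfectly balanced label table shaped like the full dataset (10 classes with 5000 images each).

```python
import collections
import math

def valid_count_per_class(labels, ratio):
    """Return max(floor(n * ratio), 1), where n is the size of the smallest class."""
    n = min(collections.Counter(labels.values()).values())
    return max(1, math.floor(n * ratio))

# Hypothetical balanced label table: 10 classes x 5000 images
classes = ['airplane', 'automobile', 'bird', 'cat', 'deer',
           'dog', 'frog', 'horse', 'ship', 'truck']
full_labels = {str(i + 1): classes[i % 10] for i in range(50000)}

per_class = valid_count_per_class(full_labels, 0.1)
num_valid = per_class * len(classes)
num_train = len(full_labels) - num_valid
print(per_class, num_valid, num_train)  # 500 5000 45000

# With very few images the max(..., 1) guard keeps at least one image per class
tiny = valid_count_per_class({'1': 'cat', '2': 'dog'}, 0.1)
print(tiny)  # 1
```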

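- Image augmentation

Image augmentation is used to reduce overfitting. For example, during training the images are randomly flipped horizontally. In addition, the three RGB channels of the color images are standardized.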

transform_train = torchvision.transforms.Compose([
    # Enlarge the 32x32 image to 40x40
    torchvision.transforms.Resize(40),
    # Randomly crop a square covering 64% to 100% of the 40x40 image, then scale it to 32x32
    torchvision.transforms.RandomResizedCrop(32,scale=(0.64,1.0),ratio=(1.0,1.0)),
    torchvision.transforms.RandomHorizontalFlip(),
    torchvision.transforms.ToTensor(),
    torchvision.transforms.Normalize([0.4914, 0.4822, 0.4465],[0.2023, 0.1994, 0.2010])
])

# At test time only standardization is applied, to remove randomness from the evaluation results.
transform_test = torchvision.transforms.Compose([
    torchvision.transforms.ToTensor(),
    # Per-channel (RGB) mean of the CIFAR-10 images: [0.4914, 0.4822, 0.4465]; standard deviation: [0.2023, 0.1994, 0.2010]
    torchvision.transforms.Normalize([0.4914, 0.4822, 0.4465],[0.2023, 0.1994, 0.2010])
])
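The numbers in the two pipelines can be worked through by hand. The following plain-Python sketch (no torchvision involved) shows what the standardization does to a single pixel and which crop sizes the scale range 0.64 to 1.0 corresponds to on a 40x40 image. The helper names normalize_pixel and crop_side are hypothetical and exist only for this illustration.

```python
import math

MEAN = [0.4914, 0.4822, 0.4465]
STD = [0.2023, 0.1994, 0.2010]

def normalize_pixel(rgb):
    """Apply (x - mean) / std per channel to one RGB pixel scaled to [0, 1]."""
    return [(x - m) / s for x, m, s in zip(rgb, MEAN, STD)]

def crop_side(image_side, area_fraction):
    """Side length of a square crop covering `area_fraction` of a square image."""
    return math.sqrt(area_fraction) * image_side

# A pixel equal to the channel means maps to zero in every channel
print(normalize_pixel(MEAN))  # [0.0, 0.0, 0.0]

# A white pixel ends up a few standard deviations above zero
white = normalize_pixel([1.0, 1.0, 1.0])
print([round(v, 2) for v in white])

# Square crops with 64% to 100% of the area have sides between about 32 and 40 pixels
smallest, largest = crop_side(40, 0.64), crop_side(40, 1.0)
print(round(smallest), round(largest))  # 32 40
```

So the smallest possible crop is already about 32x32, and larger crops are shrunk back to 32x32, which gives a mild random zoom rather than an aggressive one.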

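- Loading the dataset

torchvision.datasets.ImageFolder loads a dataset made up of raw image files; each example consists of an image and a label.

ImageFolder expects the following organization: in the training and validation sets, the images of each class sit in their own subfolder named after the class; the test set folder contains a single subfolder, unknown, which holds all the test images.

Notes:

4.1. For a dataset created with ImageFolder, the class labels are stored in dataset.classes.

4.2. When the dataset is loaded through torch.utils.data.DataLoader, each label is returned as the index of its class, not as the class name itself.

4.3. During training, use the class indices directly as the target; if needed, convert the indices back to class names after training.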
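The index convention in the notes above can be reproduced in a few lines: ImageFolder sorts the class folder names and numbers them in that order. The sketch below mimics this in plain Python; build_class_index is a hypothetical helper, not a torchvision function.

```python
def build_class_index(folder_names):
    """Mimic ImageFolder's convention: sort class folder names, then number them."""
    classes = sorted(folder_names)
    class_to_idx = {name: i for i, name in enumerate(classes)}
    return classes, class_to_idx

# Folder names in arbitrary order, as a directory listing might return them
folders = ['truck', 'cat', 'airplane', 'ship', 'dog', 'frog',
           'horse', 'deer', 'bird', 'automobile']
classes, class_to_idx = build_class_index(folders)

print(class_to_idx['cat'])  # 3
print(classes[3])           # cat
```

A model trained on these targets predicts indices, so classes[index] is what turns a prediction back into a name for the submission file.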

# Load the reorganized folders with ImageFolder
train_datasets,train_valid_datasets = [torchvision.datasets.ImageFolder(
    root=os.path.join(data_dir,'train_valid_test',folder),transform=transform_train) for folder in ['train','train_valid']]
test_datasets,valid_datasets = [torchvision.datasets.ImageFolder(
    root=os.path.join(data_dir,'train_valid_test',folder),transform=transform_test) for folder in ['test','valid']]

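- Reading the dataset

During training, the image augmentation defined above is applied to the training set. When the validation set is used to evaluate the model during hyperparameter tuning, no augmentation randomness should be introduced. Before the final prediction, the model is trained on the combined training and validation sets so that all labeled data are used.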

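# Create the data iterators. For the training sets shuffle=True, so the data are reshuffled after every epoch and each epoch sees a different order.
# For the test set shuffle must be False: the DataLoader then reads the images in the dataset's (sorted file) order, and the CSV file generated later relies on that order to pair each id with its predicted label. Shuffling would mismatch ids and labels and give a wrong score.
# The validation set is not shuffled either; evaluation gains nothing from it.
train_iter,train_valid_iter = [torch.utils.data.DataLoader(dataset=ds,batch_size=batch_size,shuffle=True,drop_last=True) for ds in [train_datasets,train_valid_datasets]]
test_iter,valid_iter = [torch.utils.data.DataLoader(dataset=ds,batch_size=batch_size,shuffle=False,drop_last=False) for ds in [test_datasets,valid_datasets]]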
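The drop_last flag changes how many batches an iterator yields. As a rough check, the sketch below computes the batch counts for the full-dataset split sizes (45,000 training and 5,000 validation images, batch size 128). The function num_batches is a hypothetical helper that repeats the floor and ceiling rule, not a PyTorch API.

```python
import math

def num_batches(num_samples, batch_size, drop_last):
    """Batches per epoch: floor division if the last partial batch is dropped, ceiling otherwise."""
    if drop_last:
        return num_samples // batch_size
    return math.ceil(num_samples / batch_size)

train_batches = num_batches(45000, 128, drop_last=True)
valid_batches = num_batches(5000, 128, drop_last=False)
dropped = 45000 - train_batches * 128

print(train_batches)  # 351
print(valid_batches)  # 40
print(dropped)        # 72 training images are skipped in each epoch
```

Because the training data are reshuffled every epoch, a different set of 72 images is skipped each time, while the validation and test iterators keep every image.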

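- Define the model (note: the loss is not averaged; it is computed per example and summed over the batch during training)

def get_net():
    num_classes = 10
    net = d2l.torch.resnet18(num_classes=num_classes,in_channels=3)
    return net

# reduction='none' returns one loss value per example instead of the batch mean;
# d2l.torch.train_batch_ch13 then sums these values before calling backward()
loss = nn.CrossEntropyLoss(reduction='none')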
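To make the difference between per-example, summed, and averaged losses concrete, here is a small plain-Python sketch. It is an illustration only: cross_entropy is a hypothetical helper implementing the usual formula, the negative log of the softmax probability of the target class, and the logits are made up.

```python
import math

def cross_entropy(logits, target):
    """Cross-entropy of one example: -log softmax(logits)[target]."""
    m = max(logits)  # subtract the maximum for numerical stability
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum_exp - logits[target]

# Made-up logits for a batch of three examples with three classes
batch_logits = [[2.0, 0.5, 0.1], [0.2, 0.2, 0.2], [0.0, 3.0, 1.0]]
batch_targets = [0, 2, 1]

# One value per example, as with reduction='none'
per_sample = [cross_entropy(z, t) for z, t in zip(batch_logits, batch_targets)]
loss_sum = sum(per_sample)               # what gets backpropagated in this post's setup
loss_mean = loss_sum / len(per_sample)   # what reduction='mean' would return

print([round(v, 4) for v in per_sample])
print(round(loss_sum, 4), round(loss_mean, 4))
```

Since the sum is larger than the mean by a factor of the batch size, the gradients scale the same way, which is worth keeping in mind when comparing learning rates with setups that average the loss.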

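- Define the training function

The model is selected and the hyperparameters are tuned according to the model's performance on the validation set.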

def train(net,train_iter,valid_iter,num_epochs,lr,weight_decay,lr_period,lr_decay,devices):
    # Optimizer: SGD with momentum and weight decay
    optim = torch.optim.SGD(net.parameters(),lr=lr,momentum=0.9,weight_decay=weight_decay)
    # Every lr_period epochs the learning rate is multiplied by lr_decay
    lr_scheduler = torch.optim.lr_scheduler.StepLR(optimizer=optim,step_size=lr_period,gamma=lr_decay)
    timer,num_batches = d2l.torch.Timer(),len(train_iter)
    legend = ['train loss','train acc']
    if valid_iter is not None:
        legend.append('valid acc')
    animator = d2l.torch.Animator(xlabel='epoch',xlim=[1,num_epochs],legend=legend)
    # Run on the GPU(s)
    net = nn.DataParallel(module=net,device_ids=devices).to(devices[0])
    for epoch in range(num_epochs):
        # Running sums: training loss, number of correct predictions, number of examples
        accumulator=d2l.torch.Accumulator(3)
        net.train()  # switch to training mode
        for i,(X,y) in enumerate(train_iter):
            timer.start()
            # Summed loss and number of correct predictions for this batch
            batch_loss,batch_acc = d2l.torch.train_batch_ch13(net,X,y,loss,optim,devices)
            accumulator.add(batch_loss,batch_acc,y.shape[0])
            timer.stop()
            # max(1,...) avoids a modulo by zero when there are fewer than 5 batches
            if i%max(1,num_batches//5)==0 or i == num_batches-1:
                animator.add(epoch+(i+1)/num_batches,(accumulator[0]/accumulator[2],accumulator[1]/accumulator[2],None))
        net.eval()  # switch to evaluation mode after each epoch before validating
        measures = f'train loss {accumulator[0]/accumulator[2]},train acc {accumulator[1]/accumulator[2]},\n'
        if valid_iter is not None:
            valid_acc = d2l.torch.evaluate_accuracy_gpu(net, valid_iter,devices[0])
            animator.add(epoch+1,(None,None,valid_acc))
            measures += f'valid acc {valid_acc},'
        lr_scheduler.step()  # decays the learning rate when an lr_period boundary is reached
    # Printed once after the last epoch: the timer covers all epochs, so throughput is num_epochs * examples per epoch / total time
    print(measures+f'\n{num_epochs*accumulator[2]/timer.sum()} examples/sec,on {str(devices[0])}')
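The training loop reports averages by keeping three running sums and dividing at the end. A minimal stand-in for that bookkeeping is sketched below; RunningSums is a hypothetical class written for this illustration (it is not the d2l implementation), and the batch numbers are made up.

```python
class RunningSums:
    """Hypothetical stand-in for an accumulator that keeps n running sums."""

    def __init__(self, n):
        self.data = [0.0] * n

    def add(self, *args):
        self.data = [a + float(b) for a, b in zip(self.data, args)]

    def __getitem__(self, idx):
        return self.data[idx]

metric = RunningSums(3)
# (summed loss, number of correct predictions, batch size) for three made-up batches
for batch in [(64.0, 10, 32), (48.0, 16, 32), (8.0, 6, 8)]:
    metric.add(*batch)

train_loss = metric[0] / metric[2]  # 120 / 72
train_acc = metric[1] / metric[2]   # 32 / 72
print(round(train_loss, 4), round(train_acc, 4))
```

Dividing the summed loss by the number of examples gives a per-example average even when the last batch is smaller than the others, which a plain mean of per-batch averages would not.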

- Train and validate the model

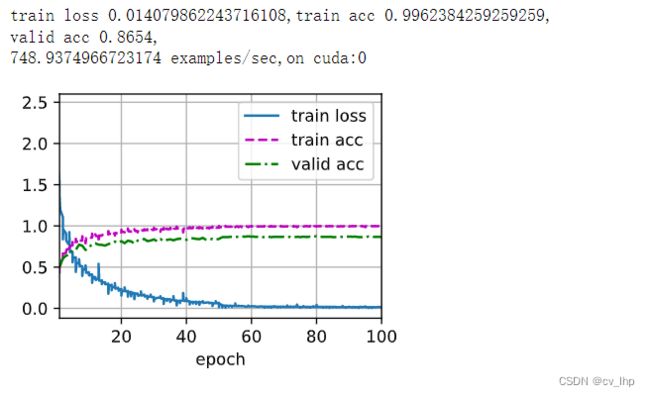

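With lr_period and lr_decay set to 30 and 0.1, the optimizer's learning rate is multiplied by 0.1 every 30 epochs. Early in training the parameters should be updated quickly (the gradients also tend to be larger at the start), so a larger learning rate is used; later in training the learning rate is reduced so that the parameters are updated more slowly (the gradients become smaller as training progresses). The learning rate lr is 1e-5, weight_decay = 5e-4, and the number of epochs num_epochs = 100. The training and validation results are shown in the figure above.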

lr,weight_decay,epochs = 1e-5,5e-4,100

lr_decay,lr_period,net,devices= 0.1,30,get_net(),d2l.torch.try_all_gpus()

train(net,train_iter,valid_iter,epochs,lr,weight_decay,lr_period,lr_decay,devices)
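The resulting learning rate schedule can be tabulated without running any training. The sketch below uses the closed form of a step schedule, base_lr * gamma ** (epoch // step_size); step_lr is a hypothetical helper written for this illustration, with epochs counted from 0.

```python
def step_lr(base_lr, epoch, step_size, gamma):
    """Learning rate used in `epoch` (0-based) under a step decay schedule."""
    return base_lr * gamma ** (epoch // step_size)

# Learning rate at a few epochs for lr=1e-5, lr_period=30, lr_decay=0.1
schedule = {epoch: step_lr(1e-5, epoch, step_size=30, gamma=0.1)
            for epoch in (0, 29, 30, 59, 60, 89, 90, 99)}
for epoch, value in schedule.items():
    print(epoch, f'{value:.1e}')
```

Over 100 epochs the rate therefore drops three times, ending at about 1e-8, which is one reason the curves change little late in training.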

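- Classify the test set and submit the results on Kaggle

Once satisfactory hyperparameters have been found, the model is retrained on all labeled data (including the validation set) and then used to classify the test set. The training and validation results in the figure above show little change after about 60 epochs, so the retraining run was shortened to 60 epochs to save training time. Note that the call below reuses the variable epochs; set epochs = 60 beforehand to retrain for 60 epochs.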

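net,preds = get_net(),[]
# With suitable hyperparameters chosen, retrain on the combined training and validation sets; the resulting network is used for prediction
train(net,train_valid_iter,None,epochs,lr,weight_decay,lr_period,lr_decay,devices)
for X,_ in test_iter:
    # Move the test batch to the GPU
    y_hat = net(X.to(devices[0]))
    # list.extend() appends several items at once, whereas list.append() adds a single item
    # y_hat.argmax(dim=1) gives the index of the largest value in each row; it is cast to int32, moved to the CPU (it will be written to a CSV file) and converted to NumPy
    # The values in preds are class indices, as assigned by ImageFolder
    preds.extend(y_hat.argmax(dim=1).type(torch.int32).cpu().numpy())
indexs = list(range(1,len(test_datasets)+1))  # generate the ids
# Sort the ids as strings (1, 10, 100, ...) so that they follow the order in which ImageFolder read the files
indexs.sort(key=lambda x:str(x))
# Two columns, id and label; the column is named 'label' on the assumption that sampleSubmission.csv uses the header id,label
submission = pd.DataFrame({'id':indexs,'label':preds})
# https://blog.csdn.net/weixin_48249563/article/details/114318425
# Convert each index to its class name; ImageFolder turns each folder name (e.g. the cat folder) into a class name (e.g. the class cat)
submission['label'] = submission['label'].apply(lambda x:train_valid_datasets.classes[x])
# Write the result to a CSV file
submission.to_csv('submission.csv',index=False)
print("CIFAR-10 classes in index order:",train_valid_datasets.classes)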

'''
Output:
['airplane',
 'automobile',
 'bird',
 'cat',
 'deer',
 'dog',
 'frog',
 'horse',
 'ship',
 'truck']
'''
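The string sort of the ids is what keeps the submission rows aligned with the predictions. The short sketch below shows the idea on twelve made-up file names: sorting the file names as strings (the order in which ImageFolder lists the files within a folder) gives the same sequence as sorting the integer ids by their string form.

```python
# Twelve hypothetical test files: 1.png ... 12.png
file_names = [f'{i}.png' for i in range(1, 13)]

# Files are visited in sorted (string) order
read_order = sorted(file_names)
ids_from_files = [int(name.split('.')[0]) for name in read_order]

# The submission code sorts the integer ids by their string form
ids = list(range(1, len(file_names) + 1))
ids.sort(key=lambda x: str(x))

print(ids)  # [1, 10, 11, 12, 2, 3, 4, 5, 6, 7, 8, 9]
print(ids == ids_from_files)  # True
```

Without the string sort, prediction number 2 (made for 10.png) would be written next to id 2, and most rows of the submission would carry the wrong label.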

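4. Summary

- After a dataset of raw image files has been organized into the required format, it is loaded with ImageFolder and read with DataLoader.

- Convolutional neural networks and image augmentation are used for image classification.

- Earlier sections used PyTorch's high-level APIs to obtain image datasets directly in tensor format. In practice, however, image datasets usually come as image files, so this time we start from the raw image files and organize, read, and convert them to tensor format step by step (using ImageFolder to organize the file structure).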

5. Full code

import collections
import math
import os.path
import shutil
import d2l.torch
import torch
import torchvision.transforms
import torch.utils.data
from torch import nn
import pandas as pd

d2l.torch.DATA_HUB['cifar10_tiny'] = (d2l.torch.DATA_URL+'kaggle_cifar10_tiny.zip','2068874e4b9a9f0fb07ebe0ad2b29754449ccacd')
demo = True
if demo:
    data_dir = d2l.torch.download_extract('cifar10_tiny')
else:
    data_dir = '../data/cifar-10'

def read_csv_data(fname):
    with open(fname,'r') as f:
        lines = f.readlines()[1:]
    # Each remaining line holds an image id and its label; the first line (the column header) was dropped above
    tokens = [line.rstrip().split(',') for line in lines]
    return dict(((name,label) for name,label in tokens))

labels = read_csv_data(os.path.join(data_dir,'trainLabels.csv'))
print('Number of examples:',len(labels))
print('Number of classes:',len(set(labels.values())))

def copy_file(fname,target_dir):
    # Create the target folder; if it already exists, nothing happens
    os.makedirs(name=target_dir,exist_ok=True)
    # Copy the source image into the target folder
    shutil.copy(fname,target_dir)

# Split part of the training set off as a validation set and copy the images into the corresponding folders
def split_copy_train_valid(data_dir,labels,split_to_valid_ratio):
    # labels.values() holds the label of every image. collections.Counter() counts the images per class,
    # most_common() orders the classes from most to least frequent, so the last entry is the smallest class
    split_num = collections.Counter(labels.values()).most_common()[-1][1]
    # Number of images to take from each class for the validation set
    num_valid_per_label = max(1,math.floor(split_num*split_to_valid_ratio))
    valid_label_count = {}
    for train_file in os.listdir(os.path.join(data_dir,'train')):
        # Label of the current image
        label = labels[train_file.split('.')[0]]
        train_file_path = os.path.join(data_dir,'train',train_file)
        absolute_path = os.path.join(data_dir,'train_valid_test')
        # Every training image is copied to the 'train_valid' folder
        copy_file(train_file_path,os.path.join(absolute_path,'train_valid',label))
        if label not in valid_label_count or valid_label_count[label]<num_valid_per_label:
            # Copy the image to the 'valid' folder until the class quota is reached
            copy_file(train_file_path,os.path.join(absolute_path,'valid',label))
            valid_label_count[label] = valid_label_count.get(label,0)+1
        else:
            # Copy the image to the 'train' folder
            copy_file(train_file_path,os.path.join(absolute_path,'train',label))
    return num_valid_per_label

# Copy the test images into the target folder
def copy_test(data_dir):
    for test_file in os.listdir(os.path.join(data_dir,'test')):
        # All test images go into 'test/unknown'
        copy_file(os.path.join(data_dir,'test',test_file),os.path.join(data_dir,'train_valid_test','test','unknown'))

def copy_cifar10_data(data_dir,split_to_valid_ratio):
    labels = read_csv_data(fname=os.path.join(data_dir,'trainLabels.csv'))
    split_copy_train_valid(data_dir,labels,split_to_valid_ratio)
    copy_test(data_dir)

batch_size = 32 if demo else 128
split_to_valid_ratio = 0.1
copy_cifar10_data(data_dir,split_to_valid_ratio)

transform_train = torchvision.transforms.Compose([
    torchvision.transforms.Resize(40),
    torchvision.transforms.RandomResizedCrop(32,scale=(0.64,1.0),ratio=(1.0,1.0)),
    torchvision.transforms.RandomHorizontalFlip(),
    torchvision.transforms.ToTensor(),
    torchvision.transforms.Normalize([0.4914, 0.4822, 0.4465],[0.2023, 0.1994, 0.2010])
])

transform_test = torchvision.transforms.Compose([
    torchvision.transforms.ToTensor(),
    # Per-channel (RGB) mean of the CIFAR-10 images: [0.4914, 0.4822, 0.4465]; standard deviation: [0.2023, 0.1994, 0.2010]
    torchvision.transforms.Normalize([0.4914, 0.4822, 0.4465],[0.2023, 0.1994, 0.2010])
])

# Load the reorganized folders with ImageFolder
train_datasets,train_valid_datasets = [torchvision.datasets.ImageFolder(
    root=os.path.join(data_dir,'train_valid_test',folder),transform=transform_train) for folder in ['train','train_valid']]
test_datasets,valid_datasets = [torchvision.datasets.ImageFolder(
    root=os.path.join(data_dir,'train_valid_test',folder),transform=transform_test) for folder in ['test','valid']]

# Create the data iterators
train_iter,train_valid_iter = [torch.utils.data.DataLoader(dataset=ds,batch_size=batch_size,shuffle=True,drop_last=True) for ds in [train_datasets,train_valid_datasets]]
test_iter,valid_iter = [torch.utils.data.DataLoader(dataset=ds,batch_size=batch_size,shuffle=False,drop_last=False) for ds in [test_datasets,valid_datasets]]

def get_net():
    num_classes = 10
    net = d2l.torch.resnet18(num_classes=num_classes,in_channels=3)
    return net

loss = nn.CrossEntropyLoss(reduction='none')

def train(net,train_iter,valid_iter,num_epochs,lr,weight_decay,lr_period,lr_decay,devices):
    # Optimizer: SGD with momentum and weight decay
    optim = torch.optim.SGD(net.parameters(),lr=lr,momentum=0.9,weight_decay=weight_decay)
    # Every lr_period epochs the learning rate is multiplied by lr_decay
    lr_scheduler = torch.optim.lr_scheduler.StepLR(optimizer=optim,step_size=lr_period,gamma=lr_decay)
    timer,num_batches = d2l.torch.Timer(),len(train_iter)
    legend = ['train loss','train acc']
    if valid_iter is not None:
        legend.append('valid acc')
    animator = d2l.torch.Animator(xlabel='epoch',xlim=[1,num_epochs],legend=legend)
    # Run on the GPU(s)
    net = nn.DataParallel(module=net,device_ids=devices).to(devices[0])
    for epoch in range(num_epochs):
        accumulator=d2l.torch.Accumulator(3)
        net.train()  # switch to training mode
        for i,(X,y) in enumerate(train_iter):
            timer.start()
            # Summed loss and number of correct predictions for this batch
            batch_loss,batch_acc = d2l.torch.train_batch_ch13(net,X,y,loss,optim,devices)
            accumulator.add(batch_loss,batch_acc,y.shape[0])
            timer.stop()
            # max(1,...) avoids a modulo by zero when there are fewer than 5 batches
            if i%max(1,num_batches//5)==0 or i == num_batches-1:
                animator.add(epoch+(i+1)/num_batches,(accumulator[0]/accumulator[2],accumulator[1]/accumulator[2],None))
        net.eval()  # switch to evaluation mode after each epoch before validating
        measures = f'train loss {accumulator[0]/accumulator[2]},train acc {accumulator[1]/accumulator[2]},\n'
        if valid_iter is not None:
            valid_acc = d2l.torch.evaluate_accuracy_gpu(net, valid_iter,devices[0])
            animator.add(epoch+1,(None,None,valid_acc))
            measures += f'valid acc {valid_acc},'
        lr_scheduler.step()  # decays the learning rate when an lr_period boundary is reached
    # Printed once after the last epoch: the timer covers all epochs
    print(measures+f'\n{num_epochs*accumulator[2]/timer.sum()} examples/sec,on {str(devices[0])}')

lr,weight_decay,epochs = 1e-5,5e-4,100

lr_decay,lr_period,net,devices= 0.1,30,get_net(),d2l.torch.try_all_gpus()

train(net,train_iter,valid_iter,epochs,lr,weight_decay,lr_period,lr_decay,devices)

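net,preds = get_net(),[]
# With suitable hyperparameters chosen, retrain on the combined training and validation sets; the resulting network is used for prediction
train(net,train_valid_iter,None,epochs,lr,weight_decay,lr_period,lr_decay,devices)
for X,_ in test_iter:
    # Move the test batch to the GPU
    y_hat = net(X.to(devices[0]))
    # list.extend() appends several items at once, whereas list.append() adds a single item
    # y_hat.argmax(dim=1) gives the index of the largest value in each row; it is cast to int32, moved to the CPU (it will be written to a CSV file) and converted to NumPy
    preds.extend(y_hat.argmax(dim=1).type(torch.int32).cpu().numpy())
indexs = list(range(1,len(test_datasets)+1))  # generate the ids
# Sort the ids as strings (1, 10, 100, ...) so that they follow the order in which ImageFolder read the files
indexs.sort(key=lambda x:str(x))
# Two columns, id and label; the column is named 'label' on the assumption that sampleSubmission.csv uses the header id,label
submission = pd.DataFrame({'id':indexs,'label':preds})
# https://blog.csdn.net/weixin_48249563/article/details/114318425
# Convert each index to its class name; ImageFolder turns each folder name (e.g. the cat folder) into a class name (e.g. the class cat)
submission['label'] = submission['label'].apply(lambda x:train_valid_datasets.classes[x])
# Write the result to a CSV file
submission.to_csv('submission.csv',index=False)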