HRNet Source Code Reading Notes (4): The Huge PoseHighResolutionNet Module, stage1

1. Figures and Code

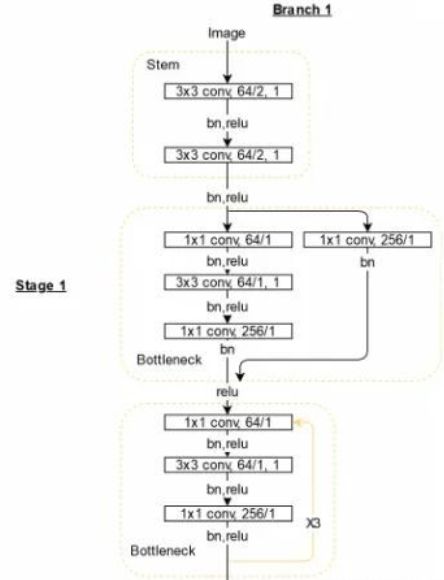

The figure from the previous post contains stage1:

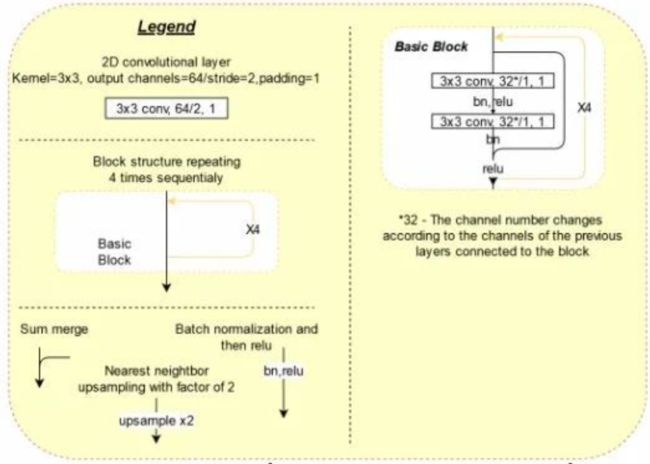

The legend is as follows:

The key is the `forward` function of the `PoseHighResolutionNet` module in `pose_hrnet.py`.

The relevant part is:

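```python
def forward(self, x):
    # stem: two stride-2 3x3 convs, each followed by BN + ReLU
    x = self.conv1(x)
    x = self.bn1(x)
    x = self.relu(x)
    x = self.conv2(x)
    x = self.bn2(x)
    x = self.relu(x)
    # stage1: the stacked Bottleneck blocks
    x = self.layer1(x)
    # ... (later stages omitted)
```

The definitions involved are as follows: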

```python
def __init__(self, cfg, **kwargs):
    self.inplanes = 64
    extra = cfg['MODEL']['EXTRA']
    super(PoseHighResolutionNet, self).__init__()

    # stem net
    self.conv1 = nn.Conv2d(3, 64, kernel_size=3, stride=2, padding=1,
                           bias=False)
    self.bn1 = nn.BatchNorm2d(64, momentum=BN_MOMENTUM)
    self.conv2 = nn.Conv2d(64, 64, kernel_size=3, stride=2, padding=1,
                           bias=False)
    self.bn2 = nn.BatchNorm2d(64, momentum=BN_MOMENTUM)
    self.relu = nn.ReLU(inplace=True)

    # stage1: 4 Bottleneck blocks
    self.layer1 = self._make_layer(Bottleneck, 64, 4)
```

Here, `_make_layer`, called on the last line, is defined as follows:

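```python
def _make_layer(self, block, planes, blocks, stride=1):
    downsample = None
    # The shortcut needs a projection when the stride or the channel count
    # (inplanes vs. planes * expansion) changes.
    if stride != 1 or self.inplanes != planes * block.expansion:
        downsample = nn.Sequential(
            nn.Conv2d(
                self.inplanes, planes * block.expansion,
                kernel_size=1, stride=stride, bias=False
            ),
            nn.BatchNorm2d(planes * block.expansion, momentum=BN_MOMENTUM),
        )

    layers = []
    # only the first block receives the stride and the projection shortcut
    layers.append(block(self.inplanes, planes, stride, downsample))
    self.inplanes = planes * block.expansion
    # the remaining blocks map planes * expansion -> planes * expansion
    for i in range(1, blocks):
        layers.append(block(self.inplanes, planes))

    return nn.Sequential(*layers)
```

In addition, the line of `__init__` that builds `layer1` uses `Bottleneck`, which is defined as follows: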

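```python
import torch.nn as nn

BN_MOMENTUM = 0.1  # module-level constant in pose_hrnet.py


class Bottleneck(nn.Module):
    expansion = 4  # output channels = planes * 4

    def __init__(self, inplanes, planes, stride=1, downsample=None):
        super(Bottleneck, self).__init__()
        # 1x1 conv: inplanes -> planes
        self.conv1 = nn.Conv2d(inplanes, planes, kernel_size=1, bias=False)
        self.bn1 = nn.BatchNorm2d(planes, momentum=BN_MOMENTUM)
        # 3x3 conv: planes -> planes, carries the stride
        self.conv2 = nn.Conv2d(planes, planes, kernel_size=3, stride=stride,
                               padding=1, bias=False)
        self.bn2 = nn.BatchNorm2d(planes, momentum=BN_MOMENTUM)
        # 1x1 conv: planes -> planes * expansion
        self.conv3 = nn.Conv2d(planes, planes * self.expansion, kernel_size=1,
                               bias=False)
        self.bn3 = nn.BatchNorm2d(planes * self.expansion,
                                  momentum=BN_MOMENTUM)
        self.relu = nn.ReLU(inplace=True)
        self.downsample = downsample
        self.stride = stride

    def forward(self, x):
        residual = x

        out = self.conv1(x)
        out = self.bn1(out)
        out = self.relu(out)

        out = self.conv2(out)
        out = self.bn2(out)
        out = self.relu(out)

        out = self.conv3(out)
        out = self.bn3(out)  # no ReLU before the addition

        # project the shortcut when its shape differs from `out`
        if self.downsample is not None:
            residual = self.downsample(x)

        out += residual
        out = self.relu(out)

        return out
```

This `Bottleneck` is a residual block. The input `x`, projected if necessary, is added back to the output of the conv stack (`out += residual`). Residual networks (ResNet) are worth looking up, because their basic idea is important here.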
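To make the residual requirement concrete, here is a shape-only sketch in plain Python (no PyTorch needed) that traces a (C, H, W) shape through one `Bottleneck`. The helper names `conv_out` and `bottleneck_shapes` are hypothetical. The 64×48 feature-map size assumes a 256×192 input image, which this post does not state.

```python
EXPANSION = 4  # same value as Bottleneck.expansion


def conv_out(size, kernel, stride, padding):
    """Output size of nn.Conv2d along one spatial axis (dilation=1)."""
    return (size + 2 * padding - kernel) // stride + 1


def bottleneck_shapes(in_shape, planes, stride=1, downsample=False):
    """Trace a (C, H, W) shape through one Bottleneck.

    Returns (main-branch output shape, shortcut shape); `out += residual`
    only works when the two are equal.
    """
    _, h, w = in_shape
    # conv1 (1x1): channels C -> planes, spatial size unchanged
    # conv2 (3x3, padding=1): keeps planes channels and applies the stride
    h2, w2 = conv_out(h, 3, stride, 1), conv_out(w, 3, stride, 1)
    # conv3 (1x1): channels planes -> planes * 4
    out = (planes * EXPANSION, h2, w2)
    if downsample:
        # 1x1 conv (+ BN) with the same stride, as built in _make_layer
        shortcut = (planes * EXPANSION,
                    conv_out(h, 1, stride, 0), conv_out(w, 1, stride, 0))
    else:
        shortcut = tuple(in_shape)  # identity: x is added unchanged
    return out, shortcut


# first block of layer1, assuming a 64x48 feature map after the stem
for ds in (False, True):
    out, shortcut = bottleneck_shapes((64, 64, 48), planes=64, downsample=ds)
    print(f"downsample={ds}: out={out}, shortcut={shortcut}, addable={out == shortcut}")
```

Without the projection, the main branch has 256 channels and the shortcut has 64, so the addition would fail. With the projection, the two shapes match.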

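Two points to note:

`Bottleneck` and `BasicBlock` are two different things. `Bottleneck` stacks 1x1, 3x3 and 1x1 convolutions and has `expansion = 4`, while `BasicBlock` uses two 3x3 convolutions and has `expansion = 1`.

In `self.layer1 = self._make_layer(Bottleneck, 64, 4)`, the last argument means "repeat 4 times": `layer1` stacks 4 `Bottleneck` blocks.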
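To show what "repeat 4 times" does to the channel counts, and why the choice of block matters, here is a plain-Python sketch that replays only the `inplanes`/`downsample` bookkeeping of `_make_layer`. The names `BottleneckStub`, `BasicBlockStub` and `make_layer_plan` are hypothetical. The stubs carry only the `expansion` values of the real classes.

```python
class BottleneckStub:
    """Hypothetical stand-in: only the expansion factor of Bottleneck."""
    expansion = 4


class BasicBlockStub:
    """Hypothetical stand-in: only the expansion factor of BasicBlock."""
    expansion = 1


def make_layer_plan(block, planes, blocks, inplanes=64, stride=1):
    """Replay _make_layer's bookkeeping without building any modules.

    Returns one (in_channels, out_channels, has_downsample) tuple per block.
    """
    out_channels = planes * block.expansion
    has_downsample = stride != 1 or inplanes != out_channels
    plan = [(inplanes, out_channels, has_downsample)]  # first block
    inplanes = out_channels  # same update as self.inplanes = planes * block.expansion
    for _ in range(1, blocks):
        plan.append((inplanes, out_channels, False))  # no downsample passed
    return plan


for row in make_layer_plan(BottleneckStub, 64, 4):
    print(row)
```

Only the first of the four blocks gets a projection shortcut. With `BasicBlockStub` instead, `planes * expansion` stays 64, so `make_layer_plan(BasicBlockStub, 64, 4)` returns four `(64, 64, False)` entries and no projection is needed at all.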

2. The Quirky `_make_layer`

Consider the condition at the start of the `_make_layer` definition:

```python
if stride != 1 or self.inplanes != planes * block.expansion:
```

Is it satisfied for `self.layer1 = self._make_layer(Bottleneck, 64, 4)`?

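First, `stride=1`, so the first half is false.

Second, `self.inplanes=64`, while `planes * block.expansion = 64 * 4 = 256`. Since 64 ≠ 256, the second half is true!

So the `downsample` branch is created. It is passed only to the first `Bottleneck`. After that, `self.inplanes` becomes 256, so the remaining three blocks use an identity shortcut.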

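Put simply, the input and output of `layer1` have different channel counts (64 vs. 256). To make the residual addition work, the shortcut must first be projected to the same shape, and that is the job of `downsample`.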

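Despite its name, this "downsample" does not lower the resolution here. It is a 1x1 convolution with `stride=1` (the default `stride` of `_make_layer`), so the height and width stay the same. Its only purpose is to make the channel counts match (64 to 256). The stride, not `kernel_size=1`, is what keeps the resolution: the same 1x1 convolution with `stride=2` would halve it.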
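A 1x1 convolution with stride 1 is a per-pixel linear map across channels. That is why it can change the channel count without touching the height and width. The plain-Python sketch below shows this on nested lists. It has no bias, uses toy sizes (2 to 8 channels, the same factor of 4 as 64 to 256), and the helper names `conv1x1` and `shape` are hypothetical.

```python
def conv1x1(x, weight):
    """1x1 convolution, stride 1, no bias.

    x: C_in x H x W nested lists; weight: C_out x C_in nested lists.
    Each output pixel mixes only the channels of the same input pixel.
    """
    c_in, h, w = len(x), len(x[0]), len(x[0][0])
    assert all(len(row) == c_in for row in weight)
    return [
        [
            [sum(weight[o][i] * x[i][r][c] for i in range(c_in)) for c in range(w)]
            for r in range(h)
        ]
        for o in range(len(weight))
    ]


def shape(t):
    return (len(t), len(t[0]), len(t[0][0]))


# toy input: 2 channels on a 3x4 map
x = [[[float(i + r + c) for c in range(4)] for r in range(3)] for i in range(2)]
# 8 output channels; output channel o simply copies input channel o % 2
weight = [[1.0 if i == o % 2 else 0.0 for i in range(2)] for o in range(8)]
y = conv1x1(x, weight)
print(shape(x), "->", shape(y))  # (2, 3, 4) -> (8, 3, 4)
```

Only the channel dimension changes. The 3x4 spatial grid is untouched because every output pixel is computed from the input pixel at the same position.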

Do this code and the diagram match? I think there are slight differences. I won't dig any deeper for now; let's keep reading!
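One way to compare the code with the diagram is to trace the tensor shape through all of stage1. The sketch below does this in plain Python. The 256×192 input size is an assumption (a common HRNet pose-estimation setting, not stated in this post), and `conv_out`/`stage1_shapes` are hypothetical helpers.

```python
def conv_out(size, kernel, stride, padding):
    """Output size of nn.Conv2d along one spatial axis (dilation=1)."""
    return (size + 2 * padding - kernel) // stride + 1


def stage1_shapes(h, w):
    """(C, H, W) after each step of the stem and layer1, following the code above."""
    trace = [("input", (3, h, w))]
    # conv1: 3x3, stride 2, padding 1, 3 -> 64 channels
    h, w = conv_out(h, 3, 2, 1), conv_out(w, 3, 2, 1)
    trace.append(("conv1+bn1+relu", (64, h, w)))
    # conv2: 3x3, stride 2, padding 1, 64 -> 64 channels
    h, w = conv_out(h, 3, 2, 1), conv_out(w, 3, 2, 1)
    trace.append(("conv2+bn2+relu", (64, h, w)))
    # layer1: 4 Bottlenecks, all stride 1 -> resolution kept, 64 -> 256 channels
    trace.append(("layer1", (256, h, w)))
    return trace


for name, s in stage1_shapes(256, 192):
    print(f"{name:16s} {s}")
```

Under this assumption, the stem reduces the resolution by a factor of 4 (256×192 to 64×48), and `layer1` only changes the channel count from 64 to 256.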