PyTorch and Weight Decay (L2 Norm)

Theory

$$l(w_1, w_2, b)=\frac{1}{n}\sum_{i=1}^n\frac{1}{2}\left(x_1^{(i)}w_1+x_2^{(i)}w_2+b-y^{(i)}\right)^2$$

With the penalty term added, the loss becomes:

$$l(w_1, w_2, b)+\frac{\lambda}{2n}\|\boldsymbol{w}\|^2$$

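Here the hyperparameter $\lambda > 0$. The penalty term is smallest when all weight parameters are 0. When $\lambda$ is large, the penalty term carries more weight in the loss function, which usually drives the elements of the learned weights closer to 0. When $\lambda$ is set to 0, the penalty term has no effect at all. Expanding the squared $L_2$ norm $\|\boldsymbol{w}\|^2$ in the expression above gives $w_1^2+w_2^2$. With the $L_2$ norm penalty in place, the minibatch stochastic gradient descent updates for the weights $w_1$ and $w_2$ from the linear regression section change to the following, where $\eta$ is the learning rate and $\mathcal{B}$ is the minibatch:

$$
\begin{aligned}
w_1 &\leftarrow \left(1-\frac{\eta\lambda}{|\mathcal{B}|}\right)w_1-\frac{\eta}{|\mathcal{B}|}\sum_{i\in\mathcal{B}}x_1^{(i)}\left(x_1^{(i)}w_1+x_2^{(i)}w_2+b-y^{(i)}\right),\\
w_2 &\leftarrow \left(1-\frac{\eta\lambda}{|\mathcal{B}|}\right)w_2-\frac{\eta}{|\mathcal{B}|}\sum_{i\in\mathcal{B}}x_2^{(i)}\left(x_1^{(i)}w_1+x_2^{(i)}w_2+b-y^{(i)}\right).
\end{aligned}
$$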

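As shown, $L_2$ norm regularization first multiplies the weights $w_1$ and $w_2$ by a number smaller than 1 and then subtracts the gradient without the penalty term. For this reason, $L_2$ norm regularization is also called weight decay. By penalizing model parameters with large absolute values, weight decay constrains the model being learned, which can help against overfitting. In practice, the sum of squares of the bias elements is sometimes added to the penalty term as well.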
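The "shrink, then step" reading of the update can be checked numerically. The following is a minimal sketch and not part of the original code: the toy minibatch, the learning rate `lr`, the coefficient `lambd`, and the helper names `grad_w`, `step_penalized`, and `step_decay` are all assumptions chosen for illustration. It compares one step on the penalized loss against first scaling the weights and then subtracting the unpenalized gradient.

```python
# Sketch: one SGD step on a penalized loss equals "decay the weight, then
# take the ordinary gradient step". All values below are illustrative.

def grad_w(batch, w, b):
    """Gradient of the summed squared loss 1/2*(x.w + b - y)^2 w.r.t. w."""
    g = [0.0] * len(w)
    for x, y in batch:
        err = sum(xi * wi for xi, wi in zip(x, w)) + b - y
        for k, xk in enumerate(x):
            g[k] += xk * err
    return g

def step_penalized(batch, w, b, lr, lambd):
    """Differentiate loss + lambd/2 * ||w||^2 directly, averaged over the batch."""
    n = len(batch)
    g = grad_w(batch, w, b)
    return [wk - lr * (gk + lambd * wk) / n for wk, gk in zip(w, g)]

def step_decay(batch, w, b, lr, lambd):
    """Scale w by (1 - lr*lambd/|B|), then subtract the unpenalized gradient."""
    n = len(batch)
    g = grad_w(batch, w, b)
    return [(1 - lr * lambd / n) * wk - lr * gk / n for wk, gk in zip(w, g)]

# Toy minibatch of (features, label) pairs with two features each.
batch = [((1.0, 2.0), 0.5), ((-0.5, 1.5), -1.0), ((2.0, -1.0), 2.0)]
w, b, lr, lambd = [0.3, -0.7], 0.1, 0.05, 3.0

w_penalized = step_penalized(batch, w, b, lr, lambd)
w_decay = step_decay(batch, w, b, lr, lambd)
print(w_penalized, w_decay)
```

Both routes should give the same weights up to floating-point rounding, which is why the penalty can be implemented either in the loss or in the update rule.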

Implementing High-Dimensional Linear Regression

Overview

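Below, we use high-dimensional linear regression as an example to introduce an overfitting problem and apply weight decay to counter it. Let the dimension of the sample features be $p$. For any sample in the training and test datasets with features $x_1, x_2, \ldots, x_p$, we generate its label with the following linear function: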

$$y=0.05+\sum_{i=1}^p 0.01x_i+\epsilon$$

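Here the noise term $\epsilon$ follows a normal distribution with mean 0 and standard deviation 0.01. To make overfitting easy to observe, we consider a high-dimensional linear regression problem, for example with dimension $p=200$; we also deliberately keep the training set small, for example 20 samples.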
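To see why this setup invites overfitting, note that with more parameters (200 weights plus a bias) than training samples (20), a linear model can generally reproduce the training labels exactly. The sketch below is an illustration under assumptions, not the method used in this article: it swaps SGD for NumPy's minimum-norm least-squares solver `np.linalg.lstsq`, and the seed and variable names are arbitrary.

```python
import numpy as np

rng = np.random.default_rng(0)  # arbitrary seed, for reproducibility only
n_train, n_test, p = 20, 100, 200

# Labels follow y = 0.05 + sum_i 0.01 * x_i + noise, noise ~ N(0, 0.01^2).
X = rng.standard_normal((n_train + n_test, p))
y = 0.05 + X @ np.full(p, 0.01) + rng.normal(0.0, 0.01, size=n_train + n_test)

# Append a column of ones so the bias is fitted together with the weights.
A = np.hstack([X, np.ones((n_train + n_test, 1))])
A_train, A_test = A[:n_train], A[n_train:]
y_train, y_test = y[:n_train], y[n_train:]

# Underdetermined system (20 equations, 201 unknowns): lstsq returns the
# minimum-norm solution, which fits the training labels.
theta, *_ = np.linalg.lstsq(A_train, y_train, rcond=None)

train_mse = np.mean((A_train @ theta - y_train) ** 2)
test_mse = np.mean((A_test @ theta - y_test) ** 2)
print(f"train MSE: {train_mse:.3e}, test MSE: {test_mse:.3e}")
```

The training error should be at the level of rounding noise while the test error stays orders of magnitude larger, which is the gap the experiments below aim to reduce.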

d2lzh_pytorch.py

import random
import sys
import time

import matplotlib.pyplot as plt
import torch
import torch.nn as nn
import torchvision
import torchvision.transforms as transforms
from IPython import display

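def use_svg_display():
    # Render figures as vector graphics (SVG).
    # Note: newer IPython releases deprecate this call in favor of
    # matplotlib_inline.backend_inline.set_matplotlib_formats.
    display.set_matplotlib_formats('svg')

def set_figsize(figsize=(3.5, 2.5)):
    use_svg_display()
    # Set the figure size.
    plt.rcParams['figure.figsize'] = figsize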

def data_iter(batch_size, features, labels):
    '''Shuffle the data and yield minibatches of (features, labels) of size batch_size.'''
    num_examples = len(features)
    indices = list(range(num_examples))
    random.shuffle(indices)
    for i in range(0, num_examples, batch_size):  # range(start, stop, step)
        # The last minibatch may hold fewer than batch_size examples.
        j = torch.LongTensor(indices[i: min(i + batch_size, num_examples)])
        yield features.index_select(0, j), labels.index_select(0, j)

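def linreg(X, w, b):
    '''Linear regression model.'''
    return torch.mm(X, w) + b

def squared_loss(y_hat, y):
    '''Squared loss for linear regression (per example, not reduced).'''
    return (y_hat - y.view(y_hat.size())) ** 2 / 2

def sgd(params, lr, batch_size):
    '''Minibatch stochastic gradient descent for linear regression.'''
    for param in params:
        # Update param.data so the step itself is not tracked by autograd.
        param.data -= lr * param.grad / batch_size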

def get_fashion_mnist_labels(labels):
    '''Map numeric Fashion-MNIST labels to their text labels.'''
    text_labels = ['t-shirt', 'trouser', 'pullover', 'dress', 'coat',
                   'sandal', 'shirt', 'sneaker', 'bag', 'ankle boot']
    return [text_labels[int(i)] for i in labels]

def show_fashion_mnist(images, labels):
    '''Plot several images and their labels in a single row.'''
    use_svg_display()
    # The underscore marks a variable we ignore (do not use).
    _, figs = plt.subplots(1, len(images), figsize=(12, 12))
    for f, img, lbl in zip(figs, images, labels):
        f.imshow(img.view((28, 28)).numpy())
        f.set_title(lbl)
        f.axes.get_xaxis().set_visible(False)
        f.axes.get_yaxis().set_visible(False)
    plt.show()

def load_data_fashion_mnist(batch_size):
    '''Download and load Fashion-MNIST; return train_iter and test_iter.'''
    mnist_train = torchvision.datasets.FashionMNIST(
        root='Datasets/FashionMNIST', train=True, download=True,
        transform=transforms.ToTensor())
    mnist_test = torchvision.datasets.FashionMNIST(
        root='Datasets/FashionMNIST', train=False, download=True,
        transform=transforms.ToTensor())
    if sys.platform.startswith('win'):
        num_workers = 0  # 0 means no extra worker processes for loading data
    else:
        num_workers = 4
    train_iter = torch.utils.data.DataLoader(
        mnist_train, batch_size=batch_size, shuffle=True, num_workers=num_workers)
    test_iter = torch.utils.data.DataLoader(
        mnist_test, batch_size=batch_size, shuffle=False, num_workers=num_workers)
    return train_iter, test_iter

def evaluate_accuracy(data_iter, net):
    '''Evaluate the accuracy of model net on the dataset data_iter.'''
    acc_sum, n = 0.0, 0
    for X, y in data_iter:
        acc_sum += (net(X).argmax(dim=1) == y).float().sum().item()
        n += y.shape[0]
    return acc_sum / n

def train_ch3(net, train_iter, test_iter, loss, num_epochs, batch_size,
              params=None, lr=None, optimizer=None):
    '''Train a (softmax) model and report loss and accuracy per epoch.'''
    for epoch in range(num_epochs):
        train_l_sum, train_acc_sum, n = 0.0, 0.0, 0
        for X, y in train_iter:
            y_hat = net(X)
            l = loss(y_hat, y).sum()

            # Reset the gradients
            if optimizer is not None:
                optimizer.zero_grad()
            elif params is not None and params[0].grad is not None:
                for param in params:
                    param.grad.data.zero_()

            l.backward()
            if optimizer is None:
                sgd(params, lr, batch_size)
            else:
                optimizer.step()

            train_l_sum += l.item()
            train_acc_sum += (y_hat.argmax(dim=1) == y).sum().item()
            n += y.shape[0]
        test_acc = evaluate_accuracy(test_iter, net)
        print('epoch %d, loss %.4f, train acc %.3f, test acc %.3f'
              % (epoch + 1, train_l_sum / n, train_acc_sum / n, test_acc))

class FlattenLayer(nn.Module):
    '''Reshape x to (batch_size, -1).'''
    def __init__(self):
        super(FlattenLayer, self).__init__()

    def forward(self, x):
        return x.view(x.shape[0], -1)

def semilogy(x_vals, y_vals, x_label, y_label, x2_vals=None, y2_vals=None,
             legend=None, figsize=(3.5, 2.5)):
    '''Plot the training and test loss curves with a logarithmic y-axis.'''
    set_figsize(figsize)
    plt.xlabel(x_label)
    plt.ylabel(y_label)
    plt.semilogy(x_vals, y_vals)
    if x2_vals and y2_vals:
        plt.semilogy(x2_vals, y2_vals, linestyle=':')
        plt.legend(legend)
    plt.show()

Manual Implementation

main.py

import sys

import numpy as np
import torch
import torch.nn as nn

sys.path.append("..")
import d2lzh_pytorch as d2l

# Generate the dataset
n_train, n_test, num_inputs = 20, 100, 200
true_w, true_b = torch.ones(num_inputs, 1) * 0.01, 0.05

features = torch.randn((n_train + n_test, num_inputs))
labels = torch.matmul(features, true_w) + true_b
labels += torch.tensor(np.random.normal(0, 0.01, size=labels.size()), dtype=torch.float)
train_features, test_features = features[:n_train, :], features[n_train:, :]
train_labels, test_labels = labels[:n_train], labels[n_train:]

# Initialize the model parameters
def init_params():
    w = torch.randn((num_inputs, 1), requires_grad=True)
    b = torch.zeros(1, requires_grad=True)
    return [w, b]

# Define the L2 norm penalty
def l2_penalty(w):
    return (w ** 2).sum() / 2

# Define training and testing
batch_size, num_epochs, lr = 1, 100, 0.003
net, loss = d2l.linreg, d2l.squared_loss

dataset = torch.utils.data.TensorDataset(train_features, train_labels)
train_iter = torch.utils.data.DataLoader(dataset, batch_size, shuffle=True)

def fit_and_plot(lambd):
    w, b = init_params()
    train_ls, test_ls = [], []
    for _ in range(num_epochs):
        for X, y in train_iter:
            # Add the L2 norm penalty to the loss
            l = loss(net(X, w, b), y) + lambd * l2_penalty(w)
            l = l.sum()

            # Reset the gradients (they are None before the first backward pass)
            if w.grad is not None:
                w.grad.data.zero_()
                b.grad.data.zero_()
            l.backward()
            d2l.sgd([w, b], lr, batch_size)
        train_ls.append(loss(net(train_features, w, b), train_labels).mean().item())
        test_ls.append(loss(net(test_features, w, b), test_labels).mean().item())
    d2l.semilogy(range(1, num_epochs + 1), train_ls, 'epochs', 'loss',
                 range(1, num_epochs + 1), test_ls, ['train', 'test'])
    print('L2 norm of w:', w.norm().item())

Observing Overfitting

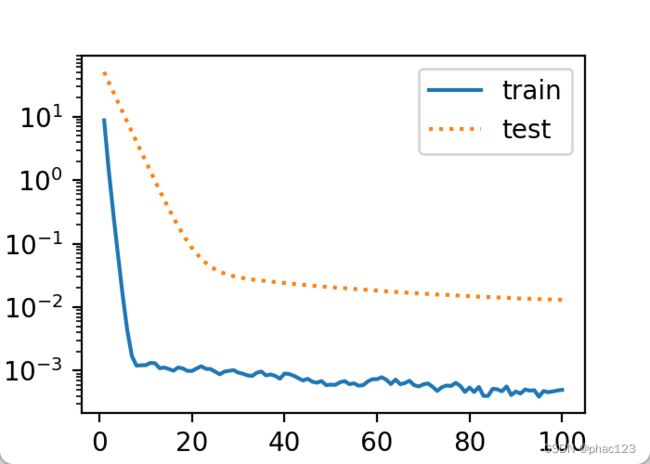

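Next, let us train and test the high-dimensional linear regression model. With $\lambda$ set to 0, no weight decay is applied. The training error ends up far smaller than the error on the test set, which is typical overfitting.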

fit_and_plot(lambd=0)

Output:

L2 norm of w: 14.296510696411133

Using Weight Decay

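Now we apply weight decay. The training error rises somewhat, but the error on the test set falls, so overfitting is alleviated to some degree. In addition, the $L_2$ norm of the weight parameters is smaller than without weight decay, meaning the weights are closer to 0.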

fit_and_plot(lambd=3)
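The shrinking effect of the penalty can also be seen without running SGD. As a hedged illustration, the sketch below uses the closed-form ridge regression solution $(X^\top X + \lambda I)^{-1} X^\top y$ in place of this article's training loop, omits the bias, and uses an arbitrary seed and arbitrary values of `lam`; the helper `ridge_weights` is hypothetical. It only shows that the norm of the fitted weights falls as the penalty grows.

```python
import numpy as np

rng = np.random.default_rng(0)  # arbitrary seed
n_train, p = 20, 200
X = rng.standard_normal((n_train, p))
y = 0.05 + X @ np.full(p, 0.01) + rng.normal(0.0, 0.01, size=n_train)

def ridge_weights(X, y, lam):
    """Minimizer of 1/2*||Xw - y||^2 + lam/2*||w||^2 (no bias term)."""
    return np.linalg.solve(X.T @ X + lam * np.eye(X.shape[1]), X.T @ y)

lams = [0.01, 0.1, 1.0, 10.0, 100.0]  # illustrative penalty strengths
norms = [float(np.linalg.norm(ridge_weights(X, y, lam))) for lam in lams]
for lam, norm in zip(lams, norms):
    print(f"lam={lam:<6} ||w||={norm:.4f}")
```

The printed norms should decrease from one value of `lam` to the next, matching the qualitative behavior described above.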

Concise Implementation

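Here we specify the weight decay hyperparameter directly through the weight_decay argument when constructing the optimizer instance. By default, PyTorch decays both the weights and the biases handed to an optimizer. We can construct separate optimizer instances for the weights and the biases so that only the weights are decayed.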
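To make the meaning of `weight_decay` concrete, the sketch below compares one step of `torch.optim.SGD` (no momentum) with `weight_decay=wd` against one step of plain SGD on a loss that carries the explicit penalty `wd * (w ** 2).sum() / 2`. The tensors, seed, and the values of `lr` and `wd` are assumptions made for illustration.

```python
import torch

torch.manual_seed(0)  # arbitrary seed
lr, wd = 0.1, 3.0     # illustrative values
X, y = torch.randn(4, 5), torch.randn(4, 1)
w0 = torch.randn(5, 1)

# (a) Explicit penalty in the loss, plain SGD without weight_decay.
w_a = w0.clone().requires_grad_(True)
opt_a = torch.optim.SGD([w_a], lr=lr)
loss_a = ((X @ w_a - y) ** 2 / 2).mean() + wd * (w_a ** 2).sum() / 2
opt_a.zero_grad()
loss_a.backward()
opt_a.step()

# (b) Unpenalized loss, penalty delegated to the optimizer.
w_b = w0.clone().requires_grad_(True)
opt_b = torch.optim.SGD([w_b], lr=lr, weight_decay=wd)
loss_b = ((X @ w_b - y) ** 2 / 2).mean()
opt_b.zero_grad()
loss_b.backward()
opt_b.step()

print(torch.allclose(w_a, w_b, atol=1e-6))
```

Under these assumptions the two updates should agree up to floating-point rounding, so `weight_decay` plays the role of $\lambda$ in a penalty of the form $\frac{\lambda}{2}\|\boldsymbol{w}\|^2$.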

main.py

import sys

import numpy as np
import torch
import torch.nn as nn

sys.path.append("..")
import d2lzh_pytorch as d2l

# Generate the dataset
n_train, n_test, num_inputs = 20, 100, 200
true_w, true_b = torch.ones(num_inputs, 1) * 0.01, 0.05

features = torch.randn((n_train + n_test, num_inputs))
labels = torch.matmul(features, true_w) + true_b
labels += torch.tensor(np.random.normal(0, 0.01, size=labels.size()), dtype=torch.float)
train_features, test_features = features[:n_train, :], features[n_train:, :]
train_labels, test_labels = labels[:n_train], labels[n_train:]

batch_size, num_epochs, lr = 1, 100, 0.003
loss = torch.nn.MSELoss()

dataset = torch.utils.data.TensorDataset(train_features, train_labels)
train_iter = torch.utils.data.DataLoader(dataset, batch_size, shuffle=True)

def fit_and_plot_pytorch(wd):
    net = nn.Linear(num_inputs, 1)
    nn.init.normal_(net.weight, mean=0, std=1)
    nn.init.normal_(net.bias, mean=0, std=0.01)
    # Decay the weight parameter only; weight names usually end with "weight".
    optimizer_w = torch.optim.SGD(params=[net.weight], lr=lr, weight_decay=wd)
    # No weight decay for the bias.
    optimizer_b = torch.optim.SGD(params=[net.bias], lr=lr)

    train_ls, test_ls = [], []
    for _ in range(num_epochs):
        for X, y in train_iter:
            l = loss(net(X), y).mean()
            optimizer_w.zero_grad()
            optimizer_b.zero_grad()

            l.backward()

            # Call step on both optimizer instances to update weight and bias.
            optimizer_w.step()
            optimizer_b.step()
        train_ls.append(loss(net(train_features), train_labels).mean().item())
        test_ls.append(loss(net(test_features), test_labels).mean().item())
    d2l.semilogy(range(1, num_epochs + 1), train_ls, 'epochs', 'loss',
                 range(1, num_epochs + 1), test_ls, ['train', 'test'])
    print('L2 norm of w:', net.weight.data.norm().item())

fit_and_plot_pytorch(0)

fit_and_plot_pytorch(3)

As in the from-scratch implementation of weight decay, using weight decay alleviates overfitting to some extent.

fit_and_plot_pytorch(0)

Output:

L2 norm of w: 13.87320613861084

fit_and_plot_pytorch(3)

Output:

L2 norm of w: 0.046947550028562546
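As an alternative to two optimizer instances, PyTorch optimizers also accept a list of parameter groups, each with its own options. The sketch below is one possible arrangement rather than the approach used in this article: it reuses the values of `num_inputs`, `lr`, and `wd` from above, and the random batch is a placeholder for real data.

```python
import torch
import torch.nn as nn

num_inputs, lr, wd = 200, 0.003, 3  # same values as in the article

net = nn.Linear(num_inputs, 1)

# One optimizer, two parameter groups: decay the weight, not the bias.
optimizer = torch.optim.SGD(
    [
        {"params": [net.weight], "weight_decay": wd},
        {"params": [net.bias]},  # falls back to the default weight_decay=0
    ],
    lr=lr,
)

X, y = torch.randn(8, num_inputs), torch.randn(8, 1)  # placeholder batch
loss = nn.MSELoss()(net(X), y)
optimizer.zero_grad()
loss.backward()
optimizer.step()  # a single call updates both groups

for group in optimizer.param_groups:
    print(group["weight_decay"], group["lr"])
```

With parameter groups, the training loop needs only one `zero_grad` and one `step` call per iteration, while the weight and bias still receive different decay settings.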

Summary

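- Regularization adds a penalty term to the model's loss function so that the learned parameter values are smaller; it is a common way to counter overfitting.
- Weight decay is equivalent to $L_2$ norm regularization and usually drives the elements of the learned weights closer to 0.
- Weight decay can be specified through the weight_decay hyperparameter of the optimizer.
- Multiple optimizer instances can be defined to apply different update rules to different model parameters.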