ResNet Model Architecture (PyTorch)

I. Introduction

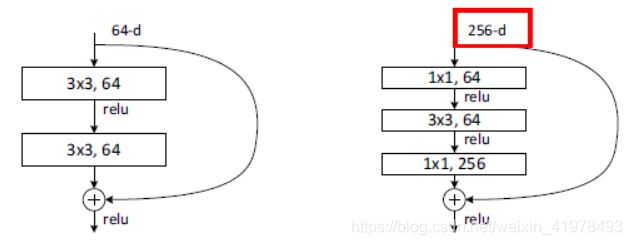

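ResNet was introduced in the paper Deep Residual Learning for Image Recognition. The paper proposes a residual network structure: the input of each block is carried along a shortcut connection and added to the output of the block's second (or third) convolution, and ReLU is then applied to the sum to produce the block's activation. The structure of each residual block is shown in the figure below.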
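In the paper's notation the block described above can be written compactly. Here $\mathcal{F}$ is the residual branch (the stacked convolutions), $x$ is the block input, $\sigma$ is ReLU, and $W_s$ is a projection that is only needed when $x$ and $\mathcal{F}(x)$ differ in shape:

```latex
y = \mathcal{F}(x, \{W_i\}) + x
\qquad\text{or}\qquad
y = \mathcal{F}(x, \{W_i\}) + W_s x,
\qquad
\text{output} = \sigma(y)
```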

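[Note] The building block on the left is the one used by ResNet-34; the one on the right is used by ResNet-50/101/152. There are three options for the shortcut connection: (A) zero-padding when the dimensions increase; (B) a projection shortcut when the dimensions increase and an identity shortcut everywhere else; (C) a projection shortcut everywhere. In this post, ResNet-34 is implemented with both options B and C, while ResNet-50/101/152 use option B only.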
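The shortcut options can be sketched in a few lines of PyTorch. This is only an illustration under assumptions: the helper names `zero_pad_shortcut` and `projection_shortcut` are hypothetical and do not appear in the code later in this post, and the example tensor mimics the step from conv2_x (64 channels, 56x56) to conv3_x (128 channels, 28x28).

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


def zero_pad_shortcut(x, out_channels, stride=2):
    """Option A: subsample spatially, then fill the new channels with zeros."""
    x = x[:, :, ::stride, ::stride]
    extra = out_channels - x.size(1)
    # F.pad reads pairs from the last dimension backwards: (W, W, H, H, C, C).
    return F.pad(x, (0, 0, 0, 0, 0, extra))


def projection_shortcut(in_channels, out_channels, stride=2):
    """Options B and C: 1x1 convolution followed by batch normalization."""
    return nn.Sequential(
        nn.Conv2d(in_channels, out_channels, kernel_size=1, stride=stride),
        nn.BatchNorm2d(out_channels),
    )


x = torch.randn(2, 64, 56, 56)
a = zero_pad_shortcut(x, 128)          # parameter-free
b = projection_shortcut(64, 128)(x)    # learned
print(a.shape, b.shape)
```

Option A adds no parameters, while the projection adds a small learned convolution; an identity shortcut is simply `x` itself and needs no helper.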

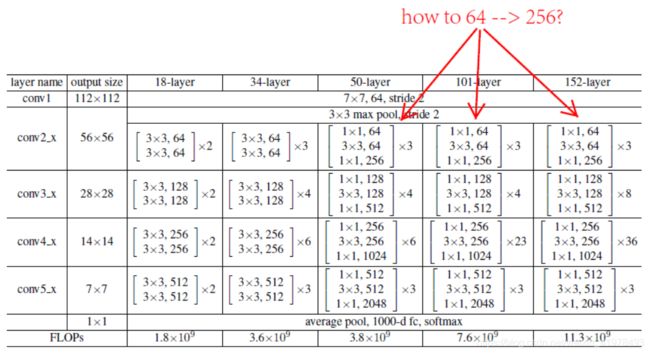

II. Model Architecture Diagram

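[Note] conv3_1, conv4_1, and conv5_1 are downsampling modules with a stride of 2: their shortcut first passes through a convolution with kernel_size 1 and stride 2, followed by batch normalization. (What puzzled me here was how ResNet-50/101/152 gets from the 64 channels of the maxpool output to 256 channels so that it can be added to the output of conv2_1. Under option B this counts as a dimension increase, so conv2_1 takes a 1x1 projection shortcut with stride 1 that maps 64 channels to 256. The ResNet-50/101/152 code in Section III does not do this; it adds a 1x1 convolution to the stem instead.)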
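One way to bridge the 64-to-256 channel gap raised in the note above is a bottleneck block whose shortcut is a 1x1 projection with a configurable stride: stride 1 for conv2_1, stride 2 for conv3_1, conv4_1, and conv5_1. The sketch below is an assumption-laden illustration, not the code used in this post; the class name `BottleneckProj` and its parameter names are hypothetical.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class BottleneckProj(nn.Module):
    """1x1 -> 3x3 -> 1x1 bottleneck with a projection shortcut (hypothetical name)."""

    def __init__(self, in_channels, mid_channels, out_channels, stride=1):
        super().__init__()
        self.residual = nn.Sequential(
            nn.Conv2d(in_channels, mid_channels, kernel_size=1, stride=stride),
            nn.BatchNorm2d(mid_channels),
            nn.ReLU(inplace=True),
            nn.Conv2d(mid_channels, mid_channels, kernel_size=3, padding=1),
            nn.BatchNorm2d(mid_channels),
            nn.ReLU(inplace=True),
            nn.Conv2d(mid_channels, out_channels, kernel_size=1),
            nn.BatchNorm2d(out_channels),
        )
        # The shortcut matches both the channel count and the spatial size.
        self.shortcut = nn.Sequential(
            nn.Conv2d(in_channels, out_channels, kernel_size=1, stride=stride),
            nn.BatchNorm2d(out_channels),
        )

    def forward(self, x):
        # ReLU is applied after the addition, not before it.
        return F.relu(self.residual(x) + self.shortcut(x))


pooled = torch.randn(1, 64, 56, 56)                       # shape after maxpool
conv2_1 = BottleneckProj(64, 64, 256, stride=1)(pooled)   # channels 64 -> 256
conv3_1 = BottleneckProj(256, 128, 512, stride=2)(conv2_1)
print(conv2_1.shape, conv3_1.shape)
```

With stride 1 the block changes only the channel count, so the maxpool output can feed conv2_1 directly without widening the stem.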

III. Code Implementation

1. ResNet-34

import torch
import torch.nn as nn
import torch.nn.functional as F

class ResModule(nn.Module):
    """Basic block (two 3x3 convolutions) with an identity shortcut."""

    def __init__(self, in_channels, first3x3, second3x3):
        super(ResModule, self).__init__()
        # Residual branch: conv-BN-ReLU-conv-BN. The last ReLU comes after the addition.
        self.forward_prop = nn.Sequential(
            nn.Conv2d(in_channels, first3x3, kernel_size=3, padding=1),
            nn.BatchNorm2d(first3x3),
            nn.ReLU(True),
            nn.Conv2d(first3x3, second3x3, kernel_size=3, padding=1),
            nn.BatchNorm2d(second3x3),
        )

    def forward(self, x):
        out = self.forward_prop(x)
        # Identity shortcut: requires in_channels == second3x3.
        out += x
        return F.relu(out)

class ResModuleOptB(nn.Module):
    """Downsampling basic block with a projection shortcut (option B)."""

    def __init__(self, in_channels, first3x3, second3x3):
        super(ResModuleOptB, self).__init__()
        # Residual branch: the first 3x3 convolution halves the spatial size.
        self.forward_prop = nn.Sequential(
            nn.Conv2d(in_channels, first3x3, kernel_size=3, stride=2, padding=1),
            nn.BatchNorm2d(first3x3),
            nn.ReLU(True),
            nn.Conv2d(first3x3, second3x3, kernel_size=3, padding=1),
            nn.BatchNorm2d(second3x3),
        )
        # Projection shortcut: 1x1 convolution with stride 2, then batch normalization.
        self.downsample = nn.Sequential(
            nn.Conv2d(in_channels, second3x3, kernel_size=1, stride=2),
            nn.BatchNorm2d(second3x3),
        )

    def forward(self, x):
        out = self.forward_prop(x)
        match = self.downsample(x)
        out += match
        return F.relu(out)

class ResNet34B(nn.Module):
    """ResNet-34 with option B: projection shortcuts only where the dimensions change."""

    def __init__(self):
        super(ResNet34B, self).__init__()
        self.first_layer = nn.Sequential(
            nn.Conv2d(3, 64, kernel_size=7, stride=2, padding=3),
            nn.BatchNorm2d(64),
            nn.ReLU(True),
            nn.MaxPool2d(kernel_size=3, stride=2, padding=1),
        )
        # Every block is a separate instance, so no weights are shared within a stage.
        self.conv2x = nn.Sequential(*[ResModule(64, 64, 64) for _ in range(3)])
        self.conv3x = nn.Sequential(
            ResModuleOptB(64, 128, 128),
            *[ResModule(128, 128, 128) for _ in range(3)]
        )
        self.conv4x = nn.Sequential(
            ResModuleOptB(128, 256, 256),
            *[ResModule(256, 256, 256) for _ in range(5)]
        )
        self.conv5x = nn.Sequential(
            ResModuleOptB(256, 512, 512),
            *[ResModule(512, 512, 512) for _ in range(2)]
        )
        # The 7x7 average pooling assumes a 224x224 input image.
        self.avgpool = nn.AvgPool2d(kernel_size=7, stride=1)
        self.fc = nn.Linear(512, 1000)

    def forward(self, x):
        out = self.first_layer(x)
        out = self.conv2x(out)
        out = self.conv3x(out)
        out = self.conv4x(out)
        out = self.conv5x(out)
        out = self.avgpool(out)
        out = out.view(out.size(0), -1)
        out = self.fc(out)
        return out
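A note on stacking blocks in PyTorch: applying a single module instance several times in `forward` reuses one set of weights, whereas several instances wrapped in `nn.Sequential` each carry their own. ResNet stages consist of distinct blocks, so the difference matters for the parameter count. The comparison below is a minimal sketch; `SharedStack` and `count_params` are hypothetical names, and a small convolution stands in for a residual block.

```python
import torch
import torch.nn as nn


def count_params(module):
    """Total number of parameters; shared parameters are counted once."""
    return sum(p.numel() for p in module.parameters())


class SharedStack(nn.Module):
    """Applies ONE convolution instance three times, so the weights are shared."""

    def __init__(self):
        super().__init__()
        self.block = nn.Conv2d(8, 8, kernel_size=3, padding=1)

    def forward(self, x):
        return self.block(self.block(self.block(x)))


# Three separate convolution instances, each with its own weights.
separate_stack = nn.Sequential(
    *[nn.Conv2d(8, 8, kernel_size=3, padding=1) for _ in range(3)]
)

shared_params = count_params(SharedStack())      # 8*8*3*3 weights + 8 biases
separate_params = count_params(separate_stack)   # three times as many
print(shared_params, separate_params)
```

Both stacks produce outputs of the same shape, so the difference does not show up as an error; it only shows up in the parameter count and in what the network can learn.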

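class ResModuleOptC(nn.Module):
    """Basic block with a projection shortcut and a configurable stride.

    Added for option C, where every shortcut is a 1x1 projection.
    """

    def __init__(self, in_channels, first3x3, second3x3, stride=1):
        super(ResModuleOptC, self).__init__()
        self.forward_prop = nn.Sequential(
            nn.Conv2d(in_channels, first3x3, kernel_size=3, stride=stride, padding=1),
            nn.BatchNorm2d(first3x3),
            nn.ReLU(True),
            nn.Conv2d(first3x3, second3x3, kernel_size=3, padding=1),
            nn.BatchNorm2d(second3x3),
        )
        self.projection = nn.Sequential(
            nn.Conv2d(in_channels, second3x3, kernel_size=1, stride=stride),
            nn.BatchNorm2d(second3x3),
        )

    def forward(self, x):
        return F.relu(self.forward_prop(x) + self.projection(x))


class ResNet34C(nn.Module):
    """ResNet-34 with option C: every shortcut is a projection shortcut."""

    def __init__(self):
        super(ResNet34C, self).__init__()
        self.first_layer = nn.Sequential(
            nn.Conv2d(3, 64, kernel_size=7, stride=2, padding=3),
            nn.BatchNorm2d(64),
            nn.ReLU(True),
            nn.MaxPool2d(kernel_size=3, stride=2, padding=1),
        )
        # Only the first block of conv3_x to conv5_x downsamples (stride=2).
        self.conv2x = nn.Sequential(*[ResModuleOptC(64, 64, 64) for _ in range(3)])
        self.conv3x = nn.Sequential(
            ResModuleOptC(64, 128, 128, stride=2),
            *[ResModuleOptC(128, 128, 128) for _ in range(3)]
        )
        self.conv4x = nn.Sequential(
            ResModuleOptC(128, 256, 256, stride=2),
            *[ResModuleOptC(256, 256, 256) for _ in range(5)]
        )
        self.conv5x = nn.Sequential(
            ResModuleOptC(256, 512, 512, stride=2),
            *[ResModuleOptC(512, 512, 512) for _ in range(2)]
        )
        self.avgpool = nn.AvgPool2d(kernel_size=7, stride=1)
        self.fc = nn.Linear(512, 1000)

    def forward(self, x):
        out = self.first_layer(x)
        out = self.conv2x(out)
        out = self.conv3x(out)
        out = self.conv4x(out)
        out = self.conv5x(out)
        out = self.avgpool(out)
        out = out.view(out.size(0), -1)
        out = self.fc(out)
        return out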
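Both ResNet-34 variants above end with `AvgPool2d(kernel_size=7)`, which fits only if the feature map is 7x7 when it reaches that layer. The standard convolution output-size formula shows that this holds for a 224x224 input. The sketch below is plain arithmetic with no PyTorch dependency; `conv_out` and `trace_sizes` are hypothetical helper names.

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Output size of a convolution or pooling layer along one spatial axis."""
    return (size + 2 * padding - kernel) // stride + 1


def trace_sizes(size=224):
    """Follow the spatial size through the stem, the four stages, and avgpool."""
    sizes = {}
    size = conv_out(size, kernel=7, stride=2, padding=3)
    sizes["conv1"] = size
    size = conv_out(size, kernel=3, stride=2, padding=1)
    sizes["maxpool"] = size
    sizes["conv2_x"] = size  # stride 1 throughout, size unchanged
    for stage in ("conv3_x", "conv4_x", "conv5_x"):
        # The first block of each of these stages uses a stride-2 3x3 convolution.
        size = conv_out(size, kernel=3, stride=2, padding=1)
        sizes[stage] = size
    sizes["avgpool"] = conv_out(size, kernel=7, stride=1)
    return sizes


sizes = trace_sizes(224)
print(sizes)
```

For other input resolutions the final feature map is no longer 7x7, so the fixed pooling window would have to change accordingly.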

2. ResNet-50/101/152

import torch
import torch.nn as nn
import torch.nn.functional as F

class ResModule(nn.Module):
    """Bottleneck block (1x1 -> 3x3 -> 1x1) with an identity shortcut."""

    def __init__(self, in_channels, first1x1, n3x3, second1x1):
        super(ResModule, self).__init__()
        # Residual branch. The last ReLU comes after the addition in forward().
        self.forward_prop = nn.Sequential(
            nn.Conv2d(in_channels, first1x1, kernel_size=1),
            nn.BatchNorm2d(first1x1),
            nn.ReLU(True),
            nn.Conv2d(first1x1, n3x3, kernel_size=3, padding=1),
            nn.BatchNorm2d(n3x3),
            nn.ReLU(True),
            nn.Conv2d(n3x3, second1x1, kernel_size=1),
            nn.BatchNorm2d(second1x1),
        )

    def forward(self, x):
        out = self.forward_prop(x)
        # Identity shortcut: requires in_channels == second1x1.
        out = out + x
        return F.relu(out)

class ResModuleOptB(nn.Module):
    """Downsampling bottleneck block with a projection shortcut (option B)."""

    def __init__(self, in_channels, first1x1, n3x3, second1x1):
        super(ResModuleOptB, self).__init__()
        # Residual branch: the first 1x1 convolution halves the spatial size.
        # There is no ReLU after the last BatchNorm; it is applied after the addition.
        self.forward_prop = nn.Sequential(
            nn.Conv2d(in_channels, first1x1, kernel_size=1, stride=2),
            nn.BatchNorm2d(first1x1),
            nn.ReLU(True),
            nn.Conv2d(first1x1, n3x3, kernel_size=3, padding=1),
            nn.BatchNorm2d(n3x3),
            nn.ReLU(True),
            nn.Conv2d(n3x3, second1x1, kernel_size=1),
            nn.BatchNorm2d(second1x1),
        )
        # Projection shortcut: 1x1 convolution with stride 2, then batch normalization.
        self.downsample = nn.Sequential(
            nn.Conv2d(in_channels, second1x1, kernel_size=1, stride=2),
            nn.BatchNorm2d(second1x1),
        )

    def forward(self, x):
        out = self.forward_prop(x)
        match = self.downsample(x)
        out += match
        return F.relu(out)

class ResNet50(nn.Module):

    def __init__(self):
        super(ResNet50, self).__init__()
        # Number of blocks in conv2_x to conv5_x, stored at indices 2 to 5.
        net = [0, 0, 3, 4, 6, 3]
        self.first_layer = nn.Sequential(
            nn.Conv2d(3, 64, kernel_size=7, stride=2, padding=3),
            nn.BatchNorm2d(64),
            nn.ReLU(True),
            # Workaround: widen the stem to 256 channels so that conv2_x can use
            # identity shortcuts. The paper uses a projection shortcut in conv2_1 instead.
            nn.Conv2d(64, 256, kernel_size=1),
            nn.BatchNorm2d(256),
            nn.ReLU(True),
            nn.MaxPool2d(kernel_size=3, stride=2, padding=1),
        )
        # Every block is a separate instance, so no weights are shared within a stage.
        self.conv2x = nn.Sequential(
            *[ResModule(256, 64, 64, 256) for _ in range(net[2])]
        )
        # conv3_x to conv5_x: one downsampling block, then the remaining identity blocks.
        self.conv3x = nn.Sequential(
            ResModuleOptB(256, 128, 128, 512),
            *[ResModule(512, 128, 128, 512) for _ in range(net[3] - 1)]
        )
        self.conv4x = nn.Sequential(
            ResModuleOptB(512, 256, 256, 1024),
            *[ResModule(1024, 256, 256, 1024) for _ in range(net[4] - 1)]
        )
        self.conv5x = nn.Sequential(
            ResModuleOptB(1024, 512, 512, 2048),
            *[ResModule(2048, 512, 512, 2048) for _ in range(net[5] - 1)]
        )
        self.avgpool = nn.AvgPool2d(kernel_size=7, stride=1)
        self.fc = nn.Linear(2048, 1000)

    def forward(self, x):
        out = self.first_layer(x)
        out = self.conv2x(out)
        out = self.conv3x(out)
        out = self.conv4x(out)
        out = self.conv5x(out)
        out = self.avgpool(out)
        out = out.view(out.size(0), -1)
        out = self.fc(out)
        return out

class ResNet101(nn.Module):

    def __init__(self):
        super(ResNet101, self).__init__()
        # Number of blocks in conv2_x to conv5_x, stored at indices 2 to 5.
        net = [0, 0, 3, 4, 23, 3]
        self.first_layer = nn.Sequential(
            nn.Conv2d(3, 64, kernel_size=7, stride=2, padding=3),
            nn.BatchNorm2d(64),
            nn.ReLU(True),
            # Workaround: widen the stem to 256 channels so that conv2_x can use
            # identity shortcuts. The paper uses a projection shortcut in conv2_1 instead.
            nn.Conv2d(64, 256, kernel_size=1),
            nn.BatchNorm2d(256),
            nn.ReLU(True),
            nn.MaxPool2d(kernel_size=3, stride=2, padding=1),
        )
        # Every block is a separate instance, so no weights are shared within a stage.
        self.conv2x = nn.Sequential(
            *[ResModule(256, 64, 64, 256) for _ in range(net[2])]
        )
        # conv3_x to conv5_x: one downsampling block, then the remaining identity blocks.
        self.conv3x = nn.Sequential(
            ResModuleOptB(256, 128, 128, 512),
            *[ResModule(512, 128, 128, 512) for _ in range(net[3] - 1)]
        )
        self.conv4x = nn.Sequential(
            ResModuleOptB(512, 256, 256, 1024),
            *[ResModule(1024, 256, 256, 1024) for _ in range(net[4] - 1)]
        )
        self.conv5x = nn.Sequential(
            ResModuleOptB(1024, 512, 512, 2048),
            *[ResModule(2048, 512, 512, 2048) for _ in range(net[5] - 1)]
        )
        self.avgpool = nn.AvgPool2d(kernel_size=7, stride=1)
        self.fc = nn.Linear(2048, 1000)

    def forward(self, x):
        out = self.first_layer(x)
        out = self.conv2x(out)
        out = self.conv3x(out)
        out = self.conv4x(out)
        out = self.conv5x(out)
        out = self.avgpool(out)
        out = out.view(out.size(0), -1)
        out = self.fc(out)
        return out

class ResNet152(nn.Module):

    def __init__(self):
        super(ResNet152, self).__init__()
        # Number of blocks in conv2_x to conv5_x, stored at indices 2 to 5.
        net = [0, 0, 3, 8, 36, 3]
        self.first_layer = nn.Sequential(
            nn.Conv2d(3, 64, kernel_size=7, stride=2, padding=3),
            nn.BatchNorm2d(64),
            nn.ReLU(True),
            # Workaround: widen the stem to 256 channels so that conv2_x can use
            # identity shortcuts. The paper uses a projection shortcut in conv2_1 instead.
            nn.Conv2d(64, 256, kernel_size=1),
            nn.BatchNorm2d(256),
            nn.ReLU(True),
            nn.MaxPool2d(kernel_size=3, stride=2, padding=1),
        )
        # Every block is a separate instance, so no weights are shared within a stage.
        self.conv2x = nn.Sequential(
            *[ResModule(256, 64, 64, 256) for _ in range(net[2])]
        )
        # conv3_x to conv5_x: one downsampling block, then the remaining identity blocks.
        self.conv3x = nn.Sequential(
            ResModuleOptB(256, 128, 128, 512),
            *[ResModule(512, 128, 128, 512) for _ in range(net[3] - 1)]
        )
        self.conv4x = nn.Sequential(
            ResModuleOptB(512, 256, 256, 1024),
            *[ResModule(1024, 256, 256, 1024) for _ in range(net[4] - 1)]
        )
        self.conv5x = nn.Sequential(
            ResModuleOptB(1024, 512, 512, 2048),
            *[ResModule(2048, 512, 512, 2048) for _ in range(net[5] - 1)]
        )
        self.avgpool = nn.AvgPool2d(kernel_size=7, stride=1)
        self.fc = nn.Linear(2048, 1000)

    def forward(self, x):
        out = self.first_layer(x)
        out = self.conv2x(out)
        out = self.conv3x(out)
        out = self.conv4x(out)
        out = self.conv5x(out)
        out = self.avgpool(out)
        out = out.view(out.size(0), -1)
        out = self.fc(out)
        return out
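The per-stage block counts that appear in the `net` lists above can be cross-checked against the network names. By the usual convention the depth counts the stem convolution, every convolution inside the residual branches, and the final fully connected layer; projection shortcuts are not counted, and neither is the extra 1x1 stem convolution used in this post. The helper name `resnet_depth` is hypothetical.

```python
def resnet_depth(blocks_per_stage, convs_per_block):
    """Weighted layers: stem convolution + all block convolutions + final fc."""
    return 1 + convs_per_block * sum(blocks_per_stage) + 1


# (blocks in conv2_x..conv5_x, convolutions per block)
configs = {
    "ResNet-34": ([3, 4, 6, 3], 2),     # basic block: two 3x3 convolutions
    "ResNet-50": ([3, 4, 6, 3], 3),     # bottleneck: 1x1, 3x3, 1x1
    "ResNet-101": ([3, 4, 23, 3], 3),
    "ResNet-152": ([3, 8, 36, 3], 3),
}

depths = {name: resnet_depth(blocks, convs) for name, (blocks, convs) in configs.items()}
print(depths)
```

Each computed depth matches the number in the model's name, which is a quick sanity check that the block counts were copied correctly.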